By: Jeremy Pedersen

So you're looking for a way to test something. Perhaps you need to trigger an event, or insert a record into a database, or make an API call?

Enter Function Compute. Though it's intended as a serverless computing tool (say, to build responsive web apps without running a server 24/7), it can be put to many other uses just as easily.

Need some "glue" to tie Alibaba Cloud services together? Function Compute can do it.

Need your CloudMonitor alerts forwarded to a Slack chat channel? Not a problem for Function Compute.

But what about using it as a testing tool? Function Compute functions can run almost any code you give them. Function Compute functions can be "chained" together to call each other. They can be triggered by HTTP requests, by events within Alibaba Cloud, and they can even be run on a fixed schedule, like a cron job.

This means you can use Function Compute to simulate a dummy user or application. That's right: Function Compute is more than just glue, more than just cheap serverless capacity....it's also a great way to run tests.

Some of my more popular blog posts have focused on setting up MySQL and importing data with DTS as well as importing data from MySQL into MaxCompute using DataWorks.

I have also written about how to generate realtime data displays with DataV.

In those previous posts, I was forced to rely on a static dataset, or else I created new rows by executing INSERT INTO statements by hand.

What if I wanted to automate (or at least semi-automate) that process?

This is where Function Compute comes in handy. In those previous blogs, my MySQL database held three tables:

usersproductsordersWhat if I created Function Compute code that could insert new data into those tables for me, whenever I wanted? That's exactly what we'll set out to do in today's blog post.

Let's dive in!

Before going any further, we need to set up a functioning MySQL database. As with most things on Alibaba Cloud, there are multiple ways we could do this:

All of these are viable options, but #2 is the simplest, so that's the approach I'll use.

Note: I'll be setting up my RDS MySQL database in the Singapore region, but that's not a requirement. Feel free to set up your database (and Function Compute code) in whatever Region you like.

I'll walk you through all the steps here in the blog, but if you're interested, there's an official Quick Start guide as well, which you can check out.

Note: I'm using an aliyun.com account rather than an alibabacloud.com account.

If you're using alibabacloud.com, you might notice some differences in the user interface, and your estimated costs will be in USD rather than RMB (Chinese Yuan), but that's about it....everything should work the same!

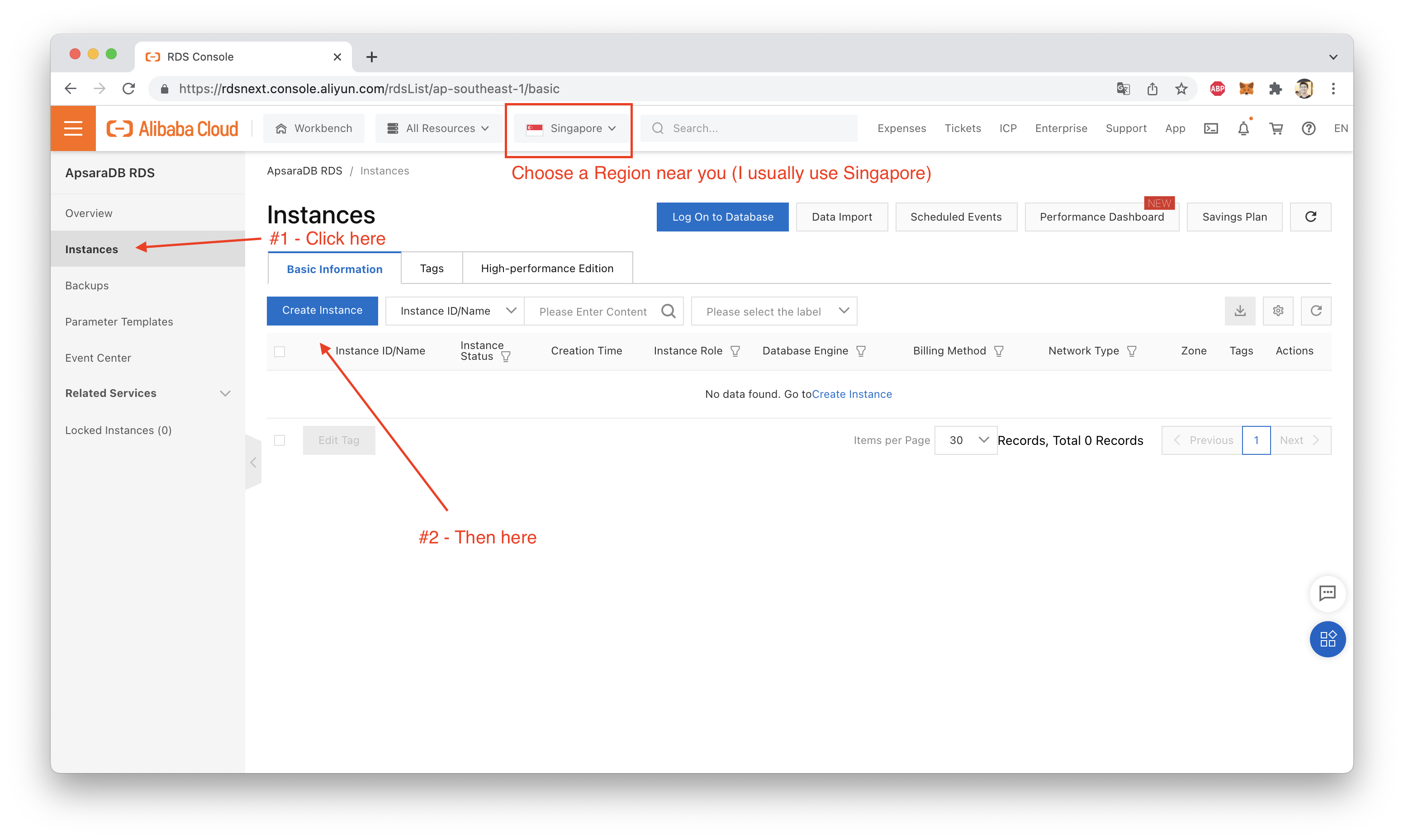

After you've logged into your Alibaba Cloud account, open up the web console and navigate over to the RDS "instances page", as shown here:

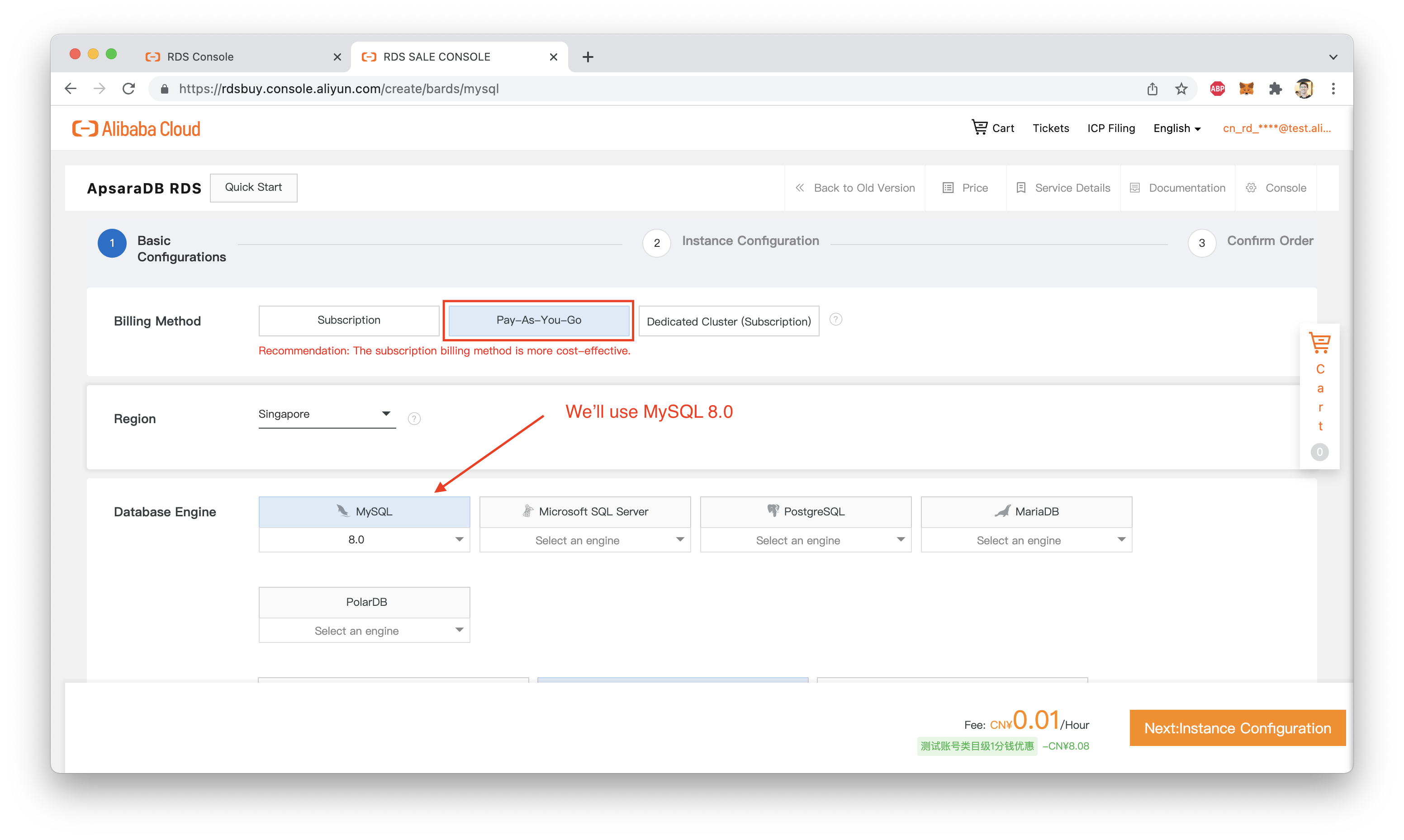

Make sure you are creating MySQL 8.0 instance, and choose Pay-As-You-Go as your Billing Method. You don't want to end up with a monthly subscription to RDS by accident!

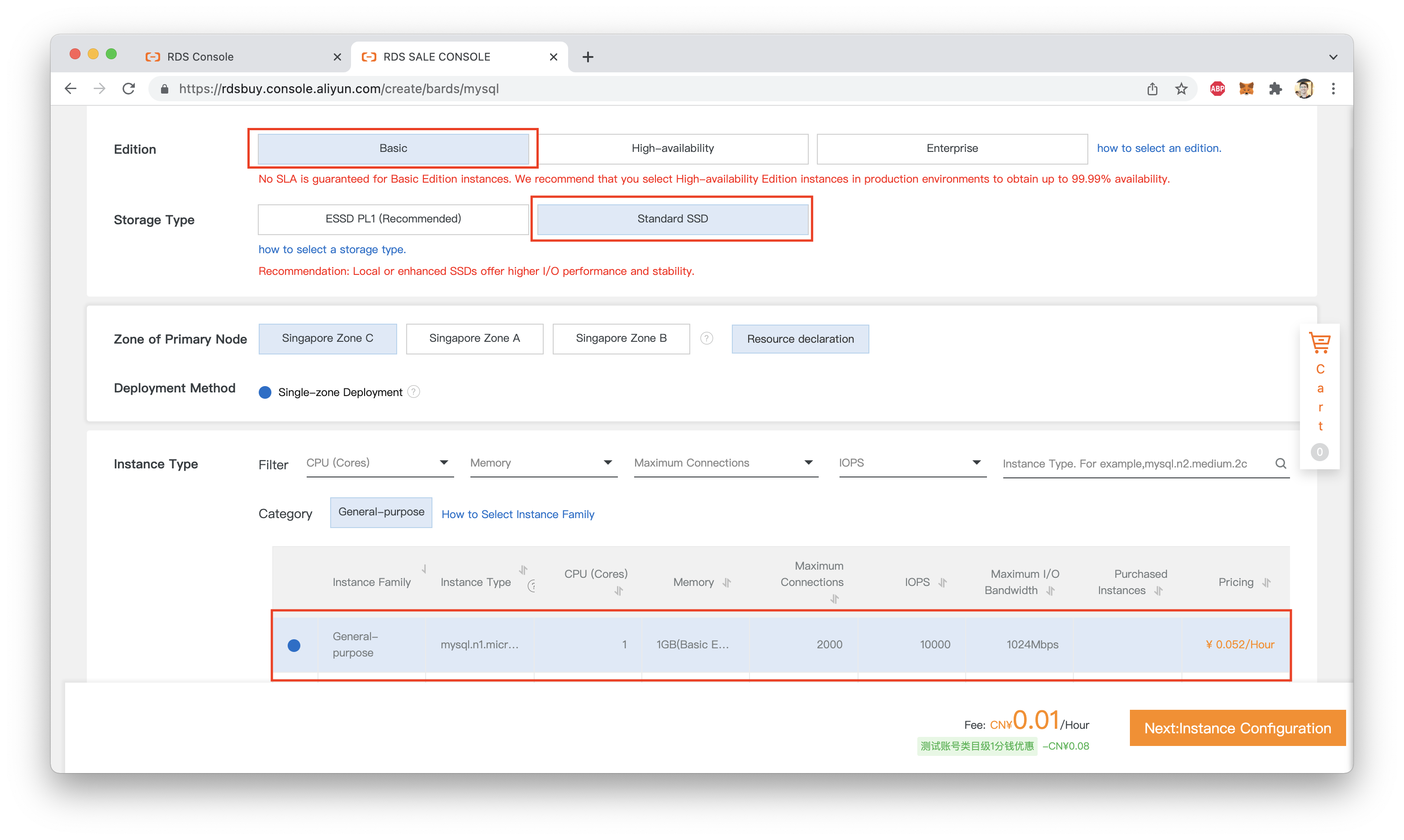

Choose the smallest, cheapest RDS instance type ("Basic", with a Standard SSD disk). That should be fine for our purposes:

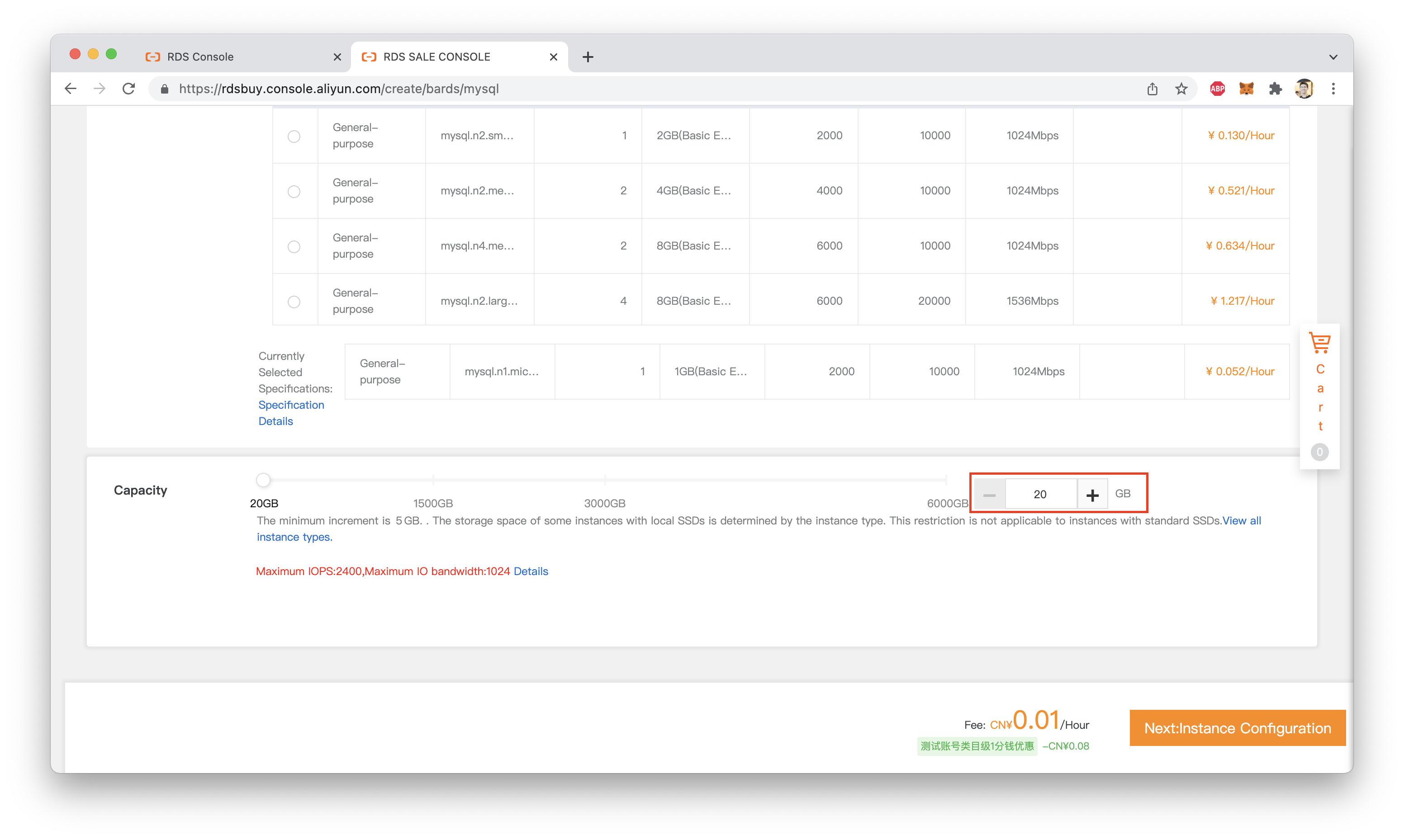

The smallest disk size (20 GB) will be more than enough:

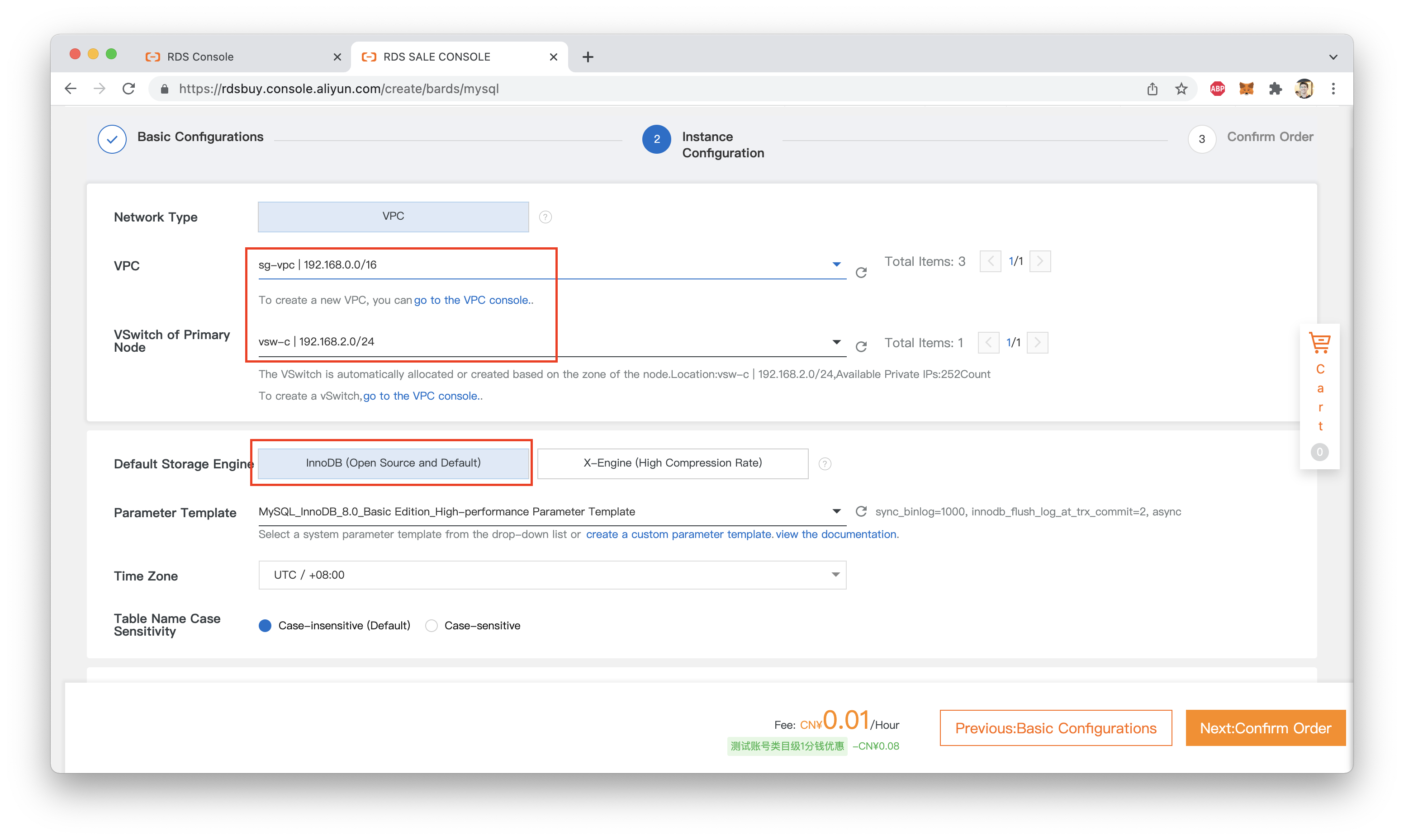

Click Next. Make sure you're using the default InnoDB database engine. The VPC group you assign the RDS instance to isn't terribly important, but make a note of which VPC it is. We'll need that information later when setting up Function Compute:

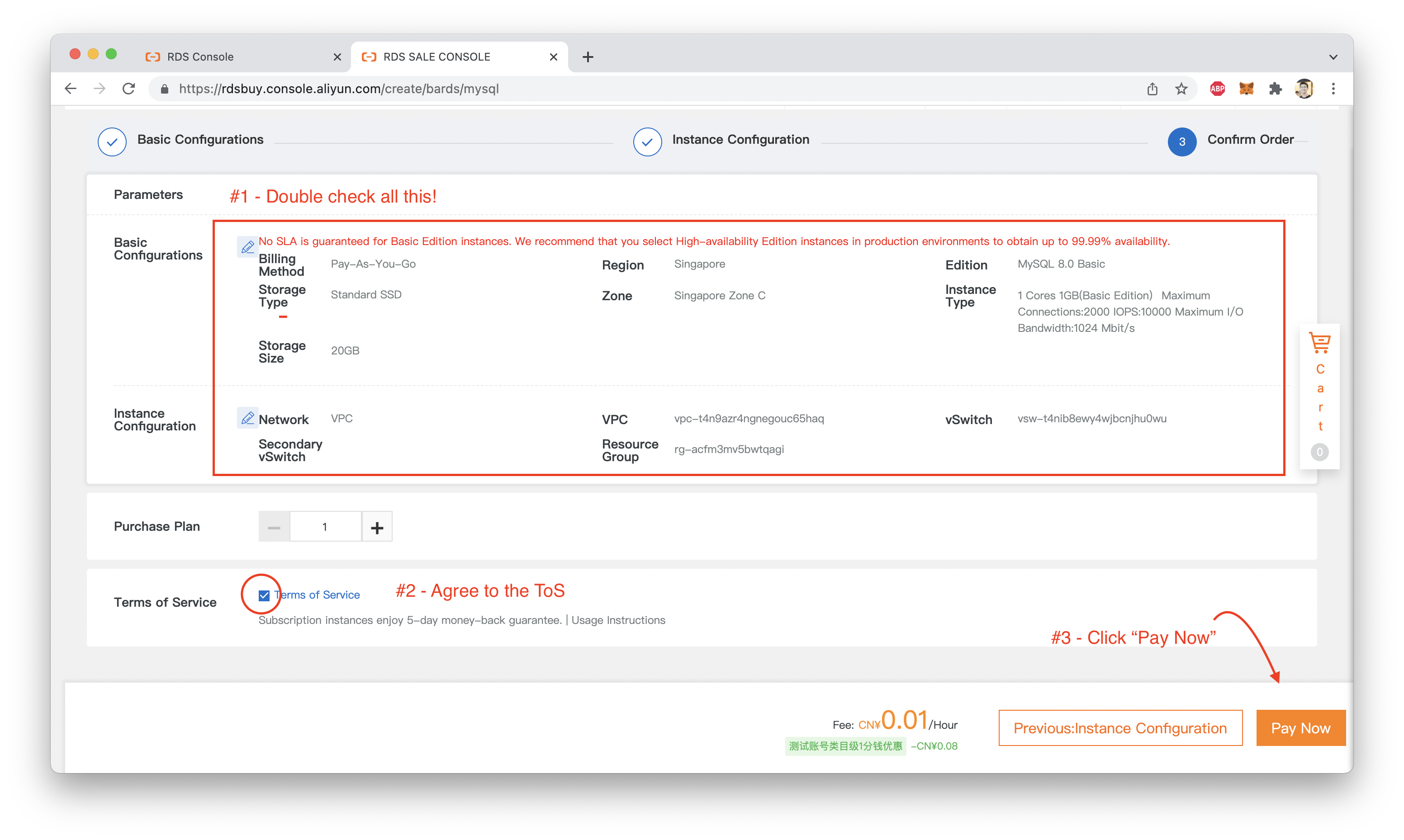

Click "Next", confirm that everything looks right, then click "Pay Now":

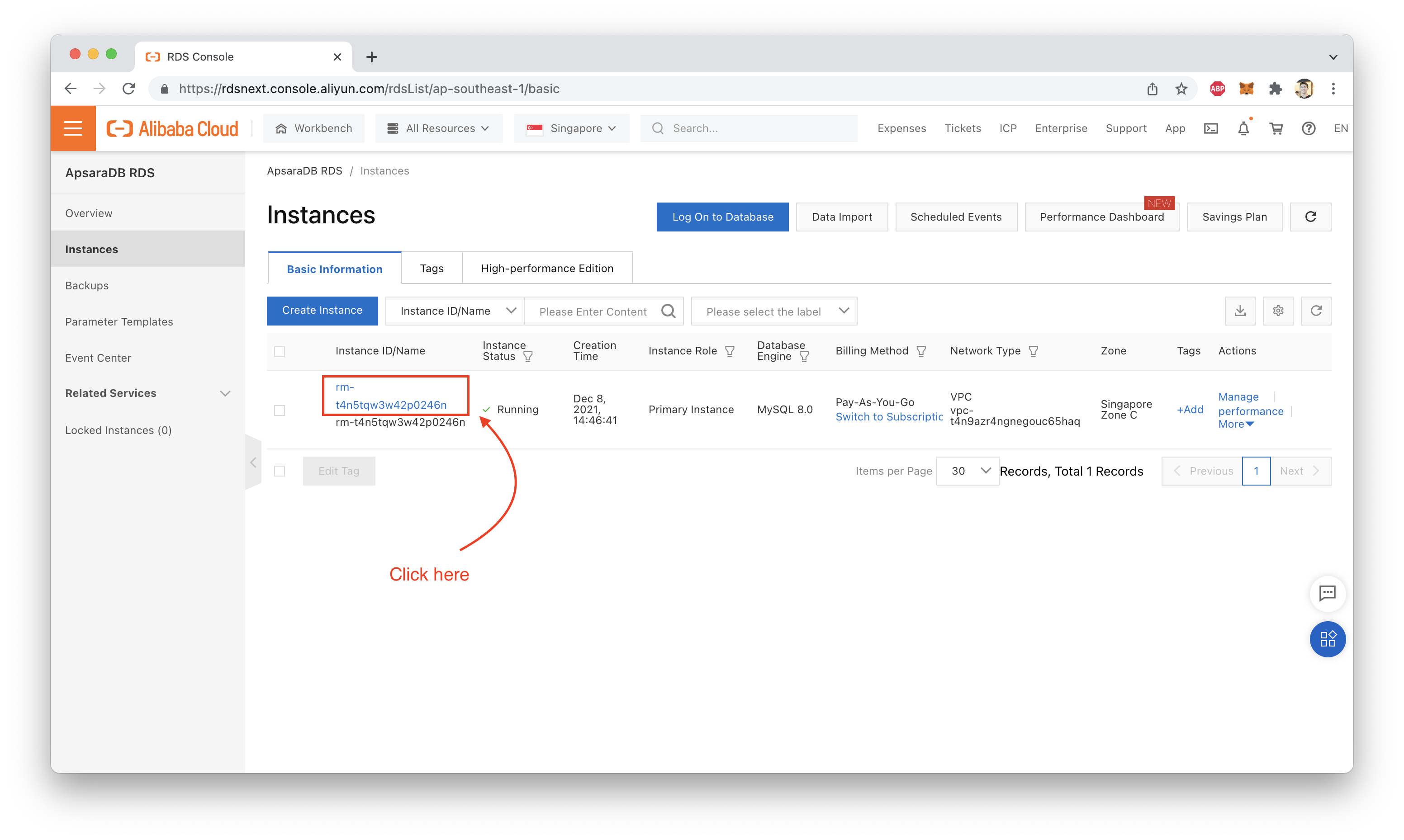

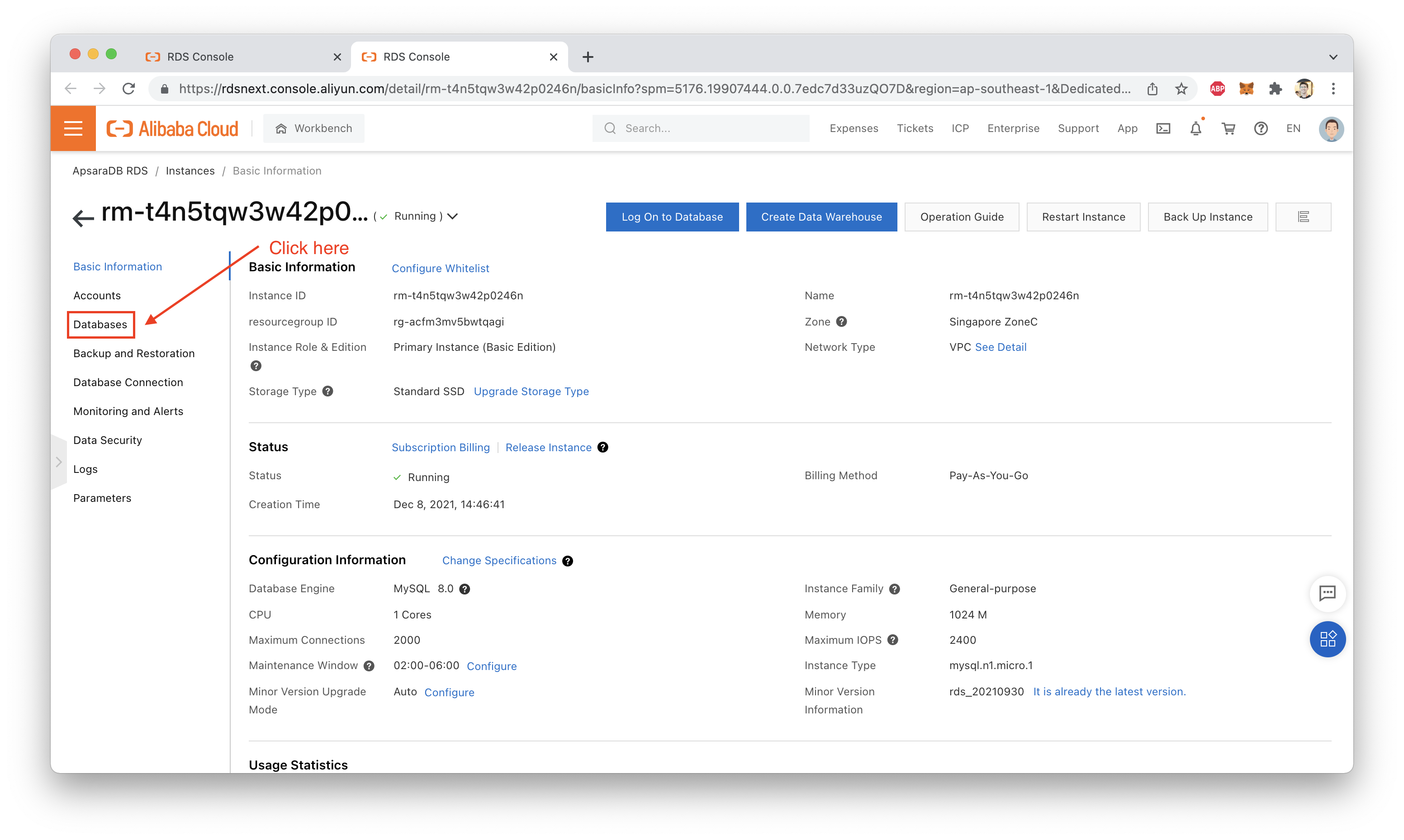

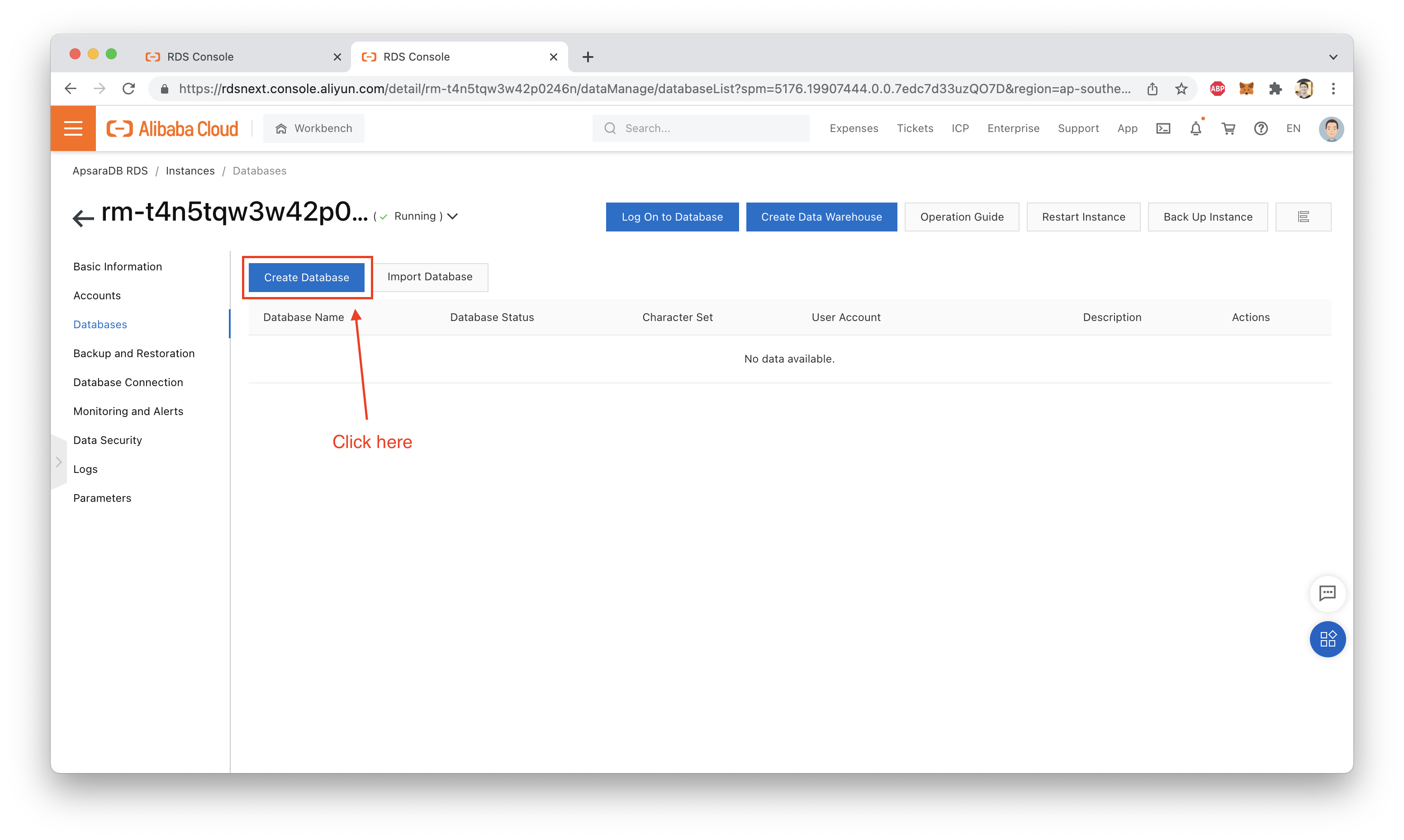

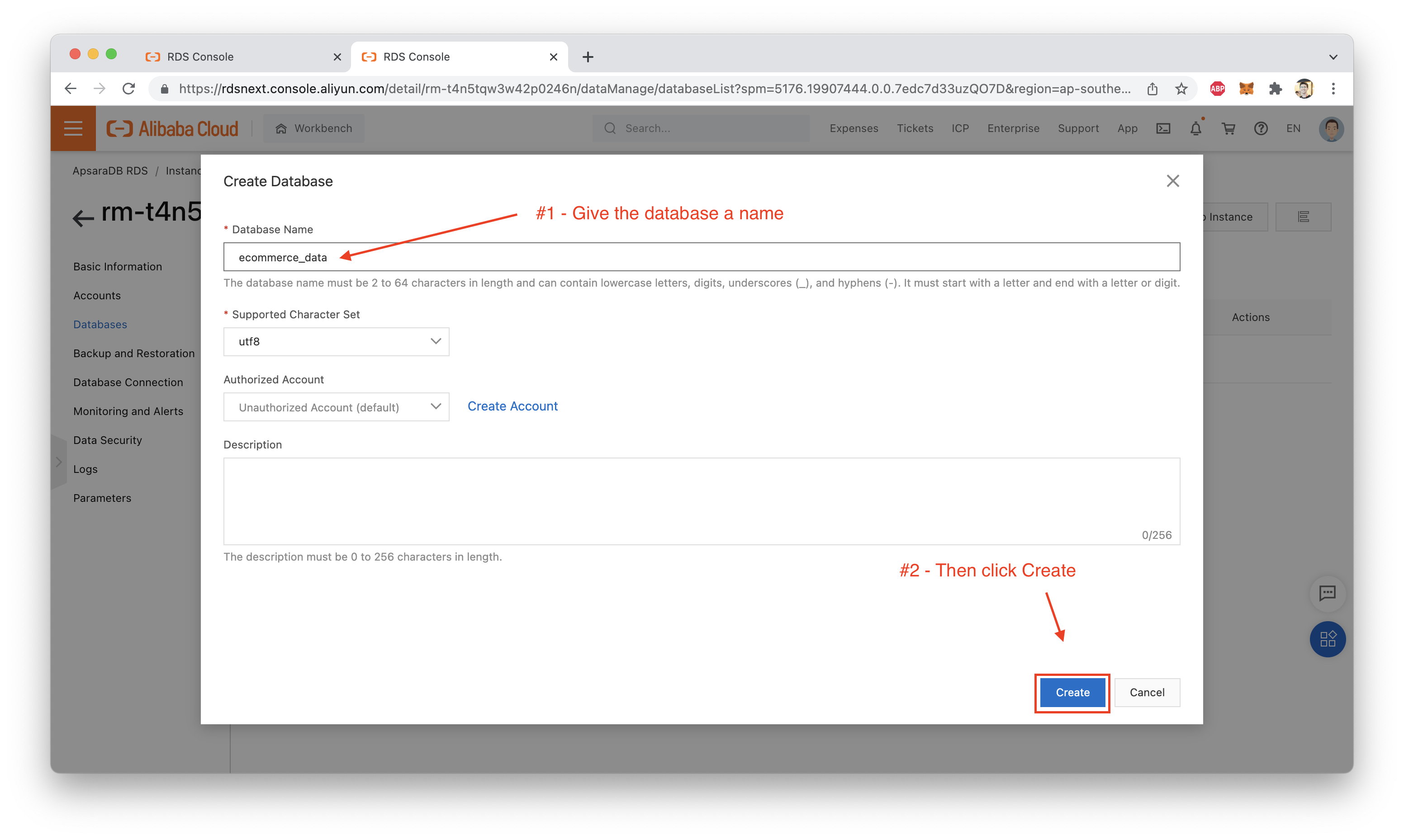

First, we need to create a new database. Since it will hold the users, products, and orders tables for a fake E-Commerce website, I'll call it ecommerce_data:

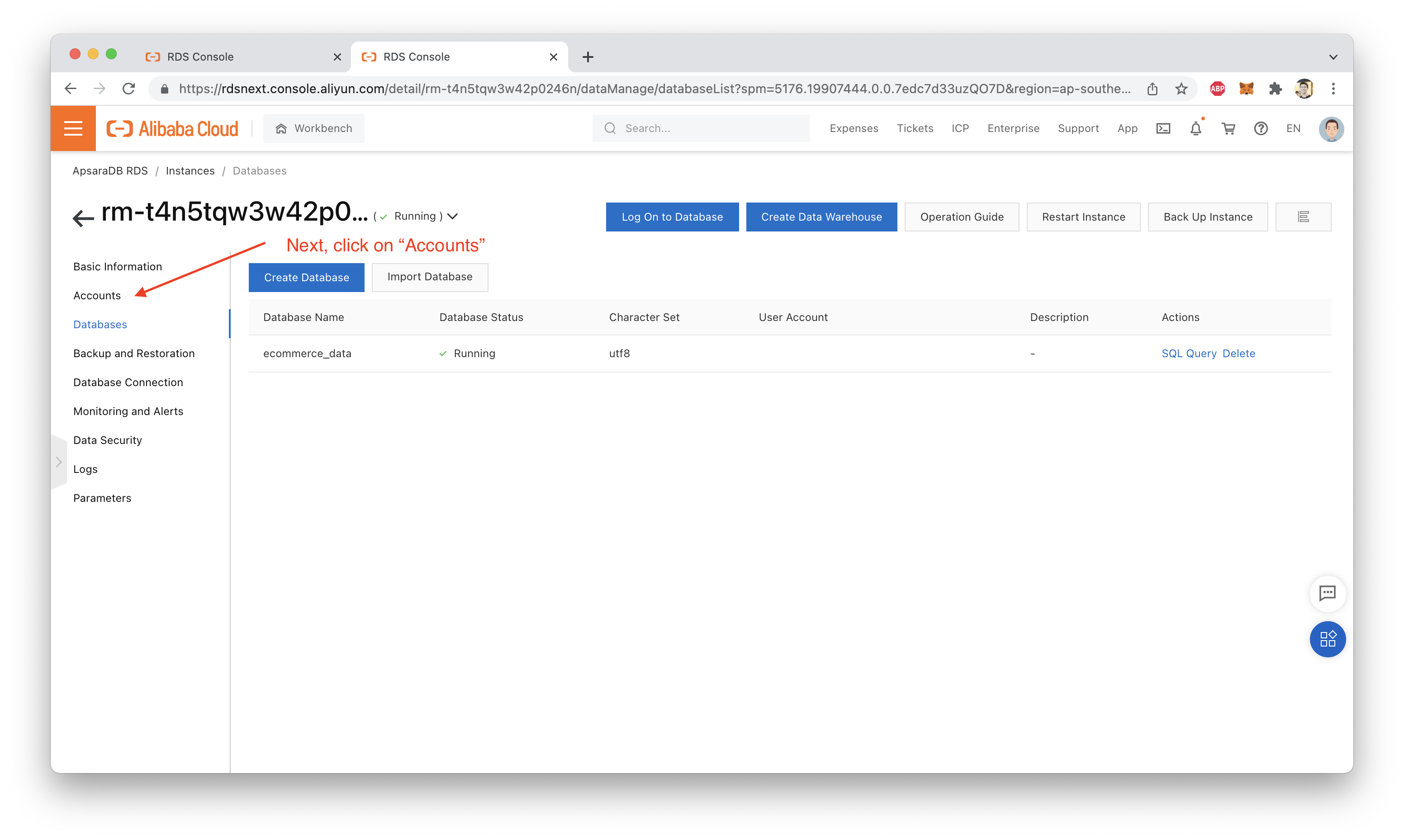

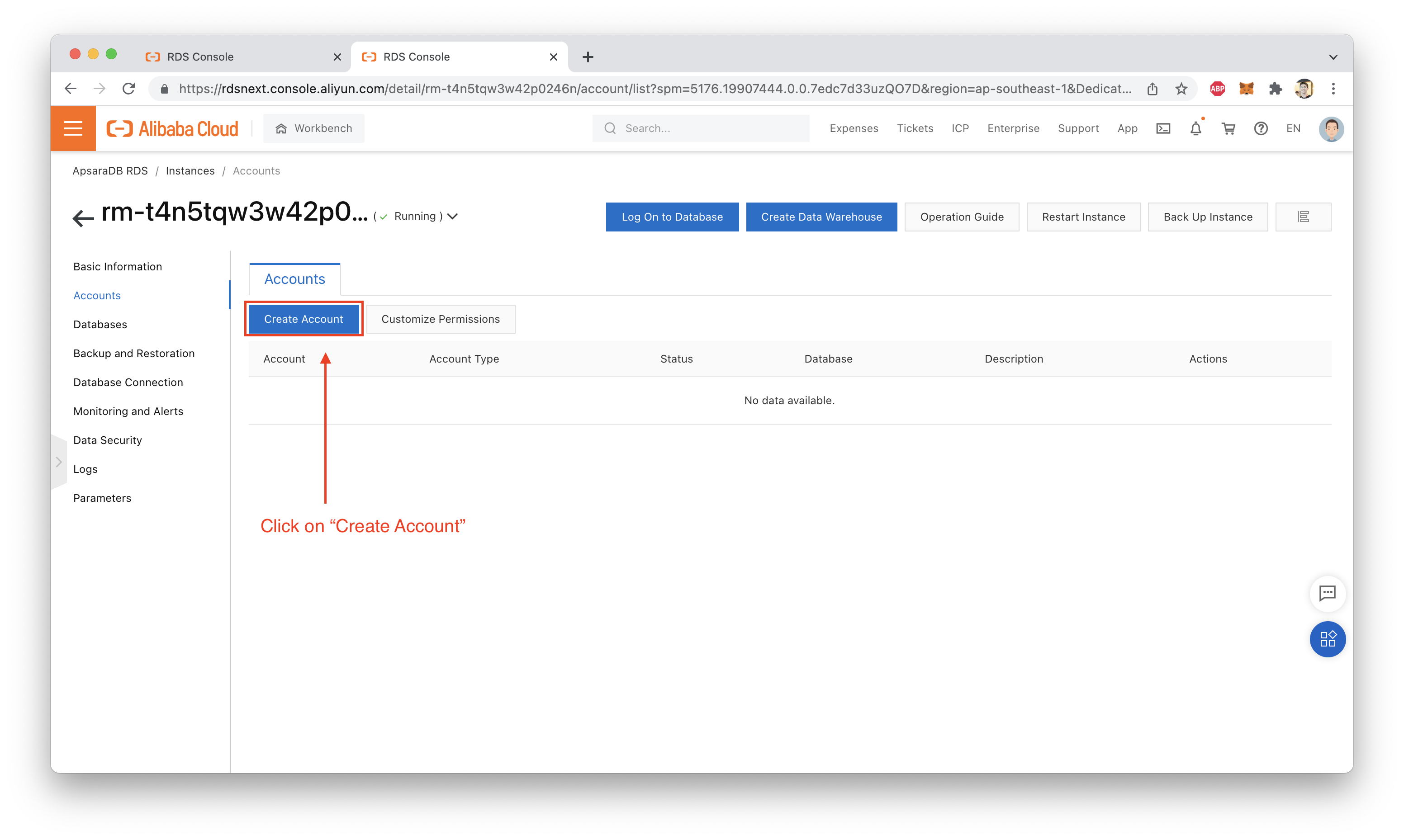

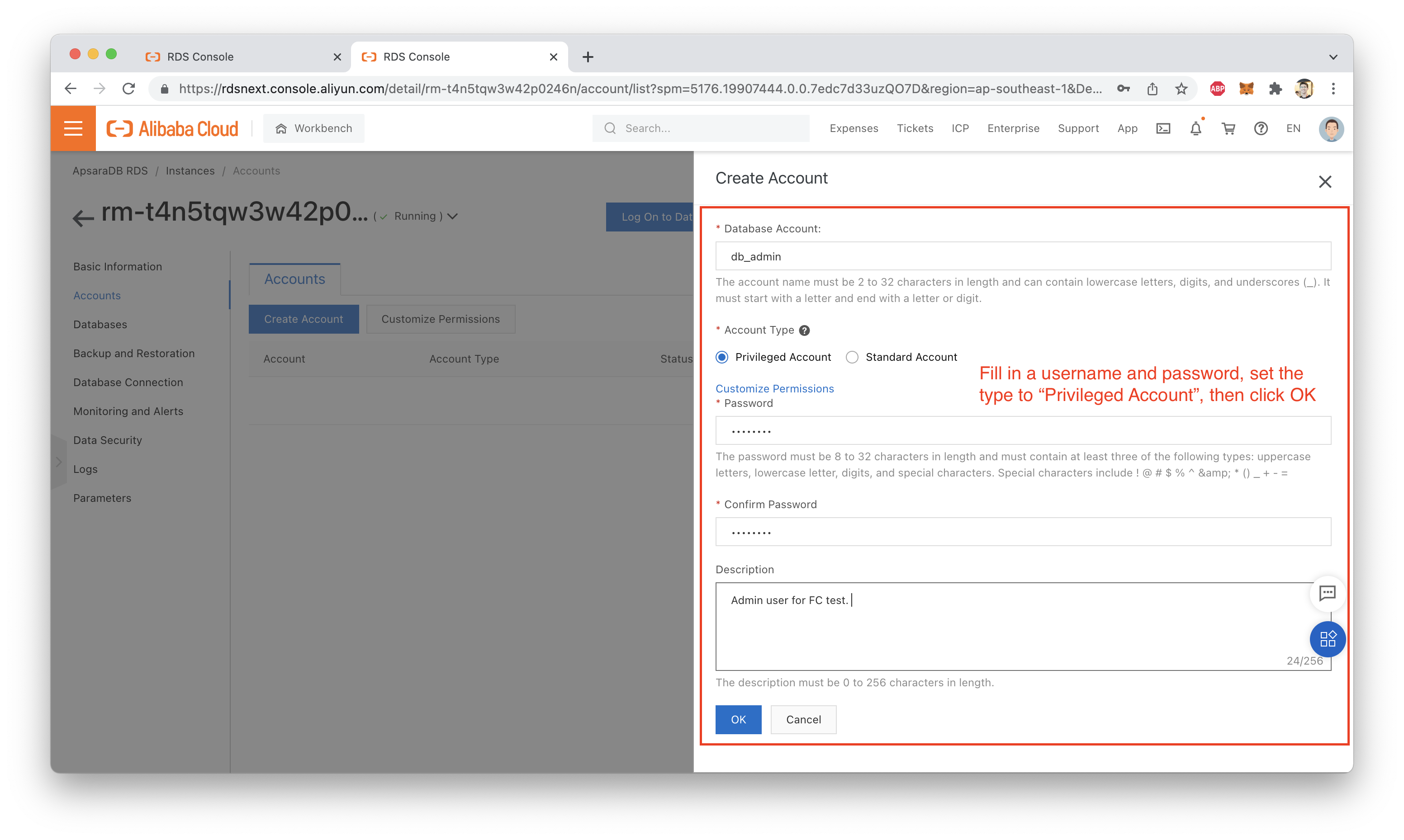

Next, we need to create a database account. We will create a privileged account, meaning it has full access to the RDS instance and any databases it contains.

Of course, in a real production environment, we would want to avoid doing this. In fact, we would probably want to have a dedicated account with limited permissions just for access from Function Compute to RDS, with separate developer and administrator accounts for other users and applications.

For the purposes of this demonstration, a single "admin" account is fine, however:

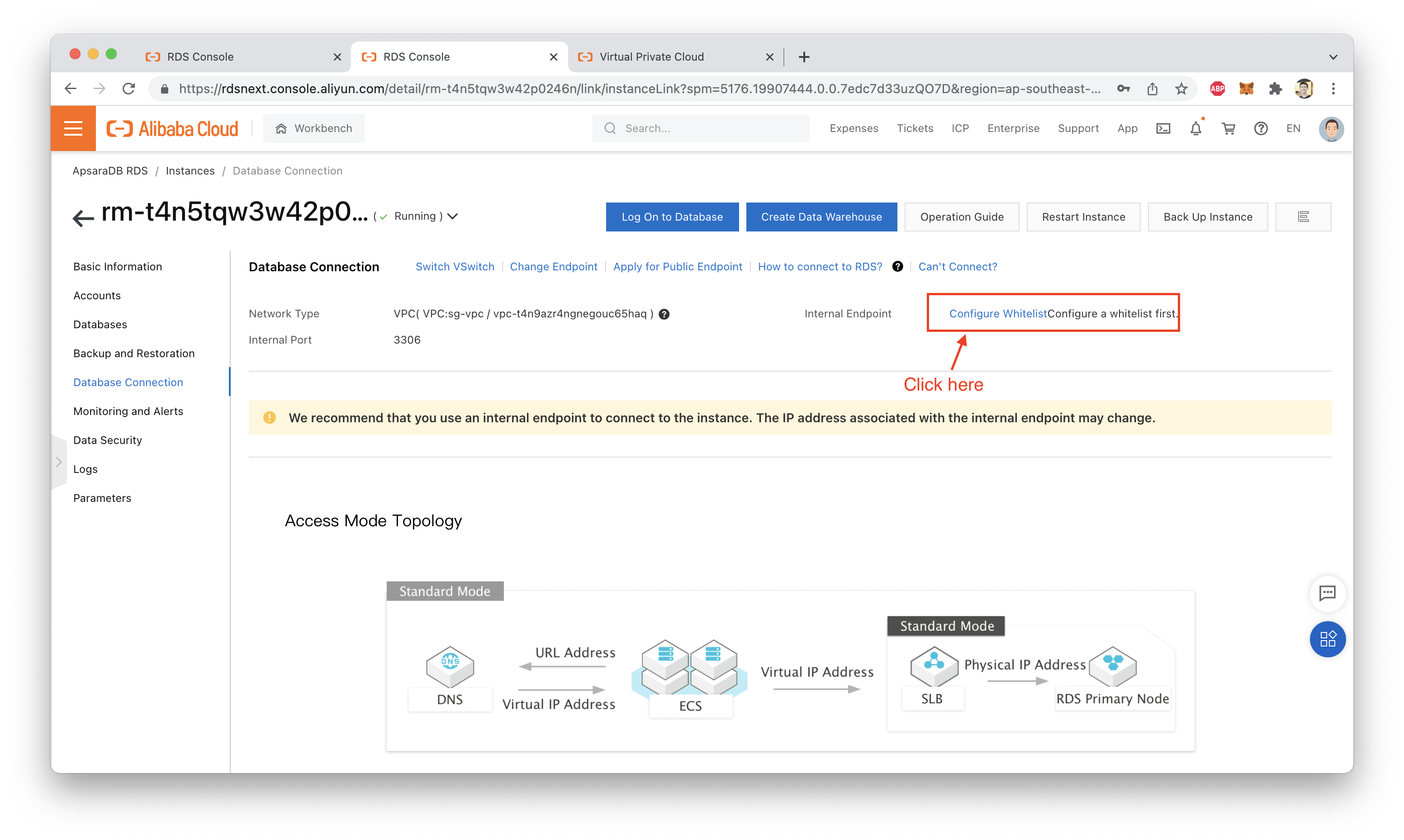

It's important to make sure our RDS database will allow our Function Compute functions to connect.

This means we need to modify the RDS database whitelist so that it allows access from IP addresses in the VPC CIDR block.

This means we need to know:

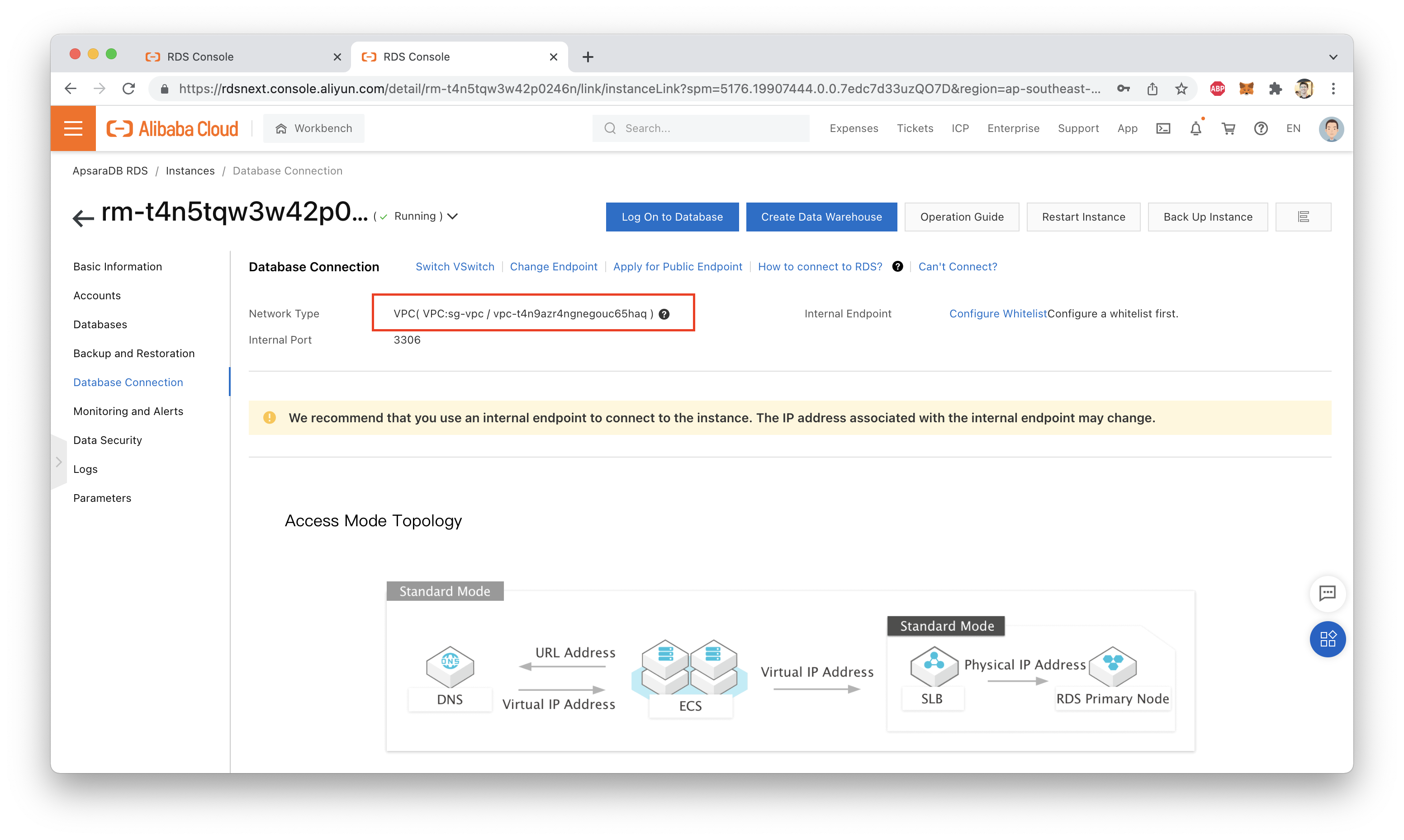

We can see which VPC our RDS instance is attached to by visiting the "Database Connection" tab in the RDS console:

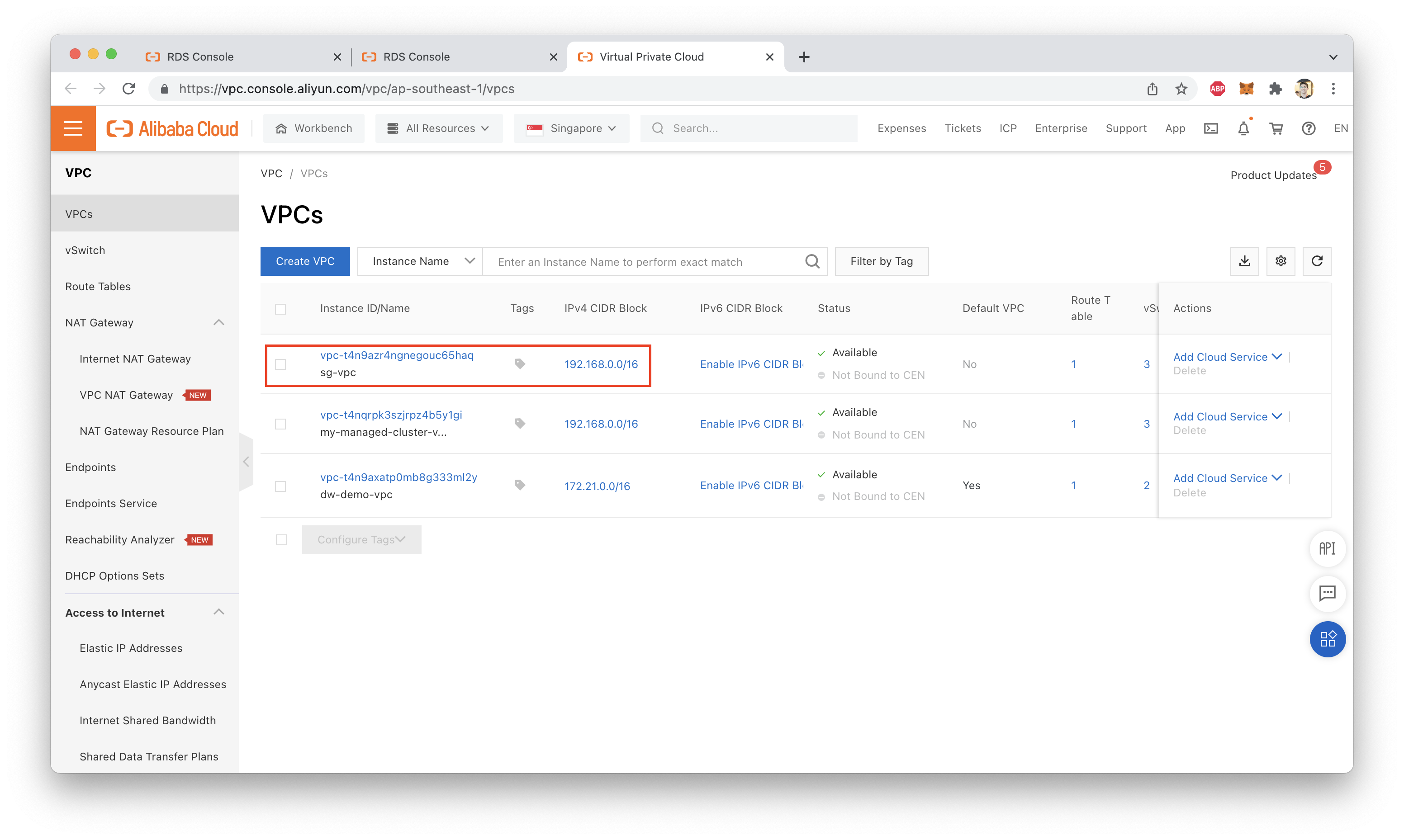

Armed with that information, we can find the CIDR address range of the VPC by visiting the VPC console:

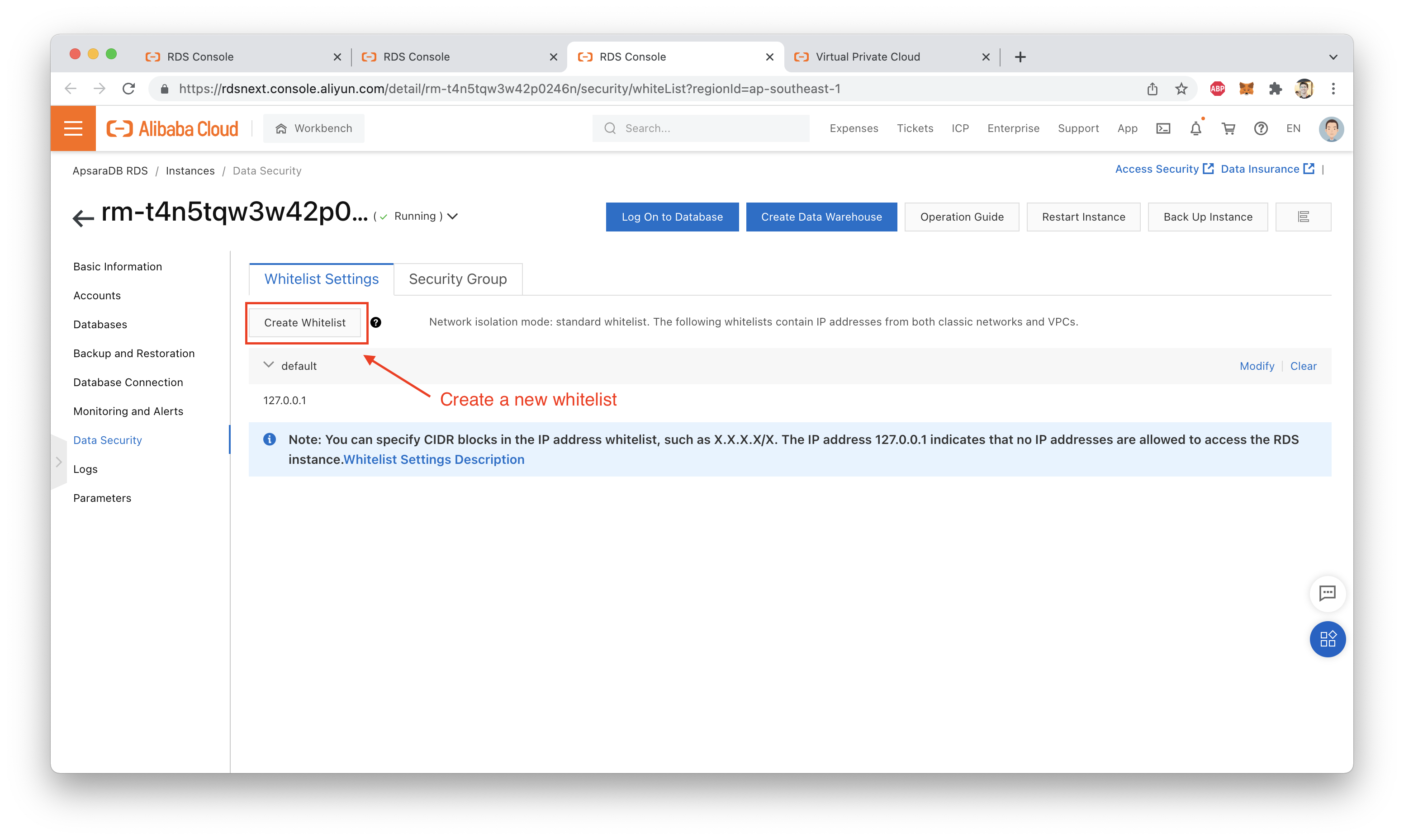

Now, we can return to the RDS console and finish setting up a new whitelist:

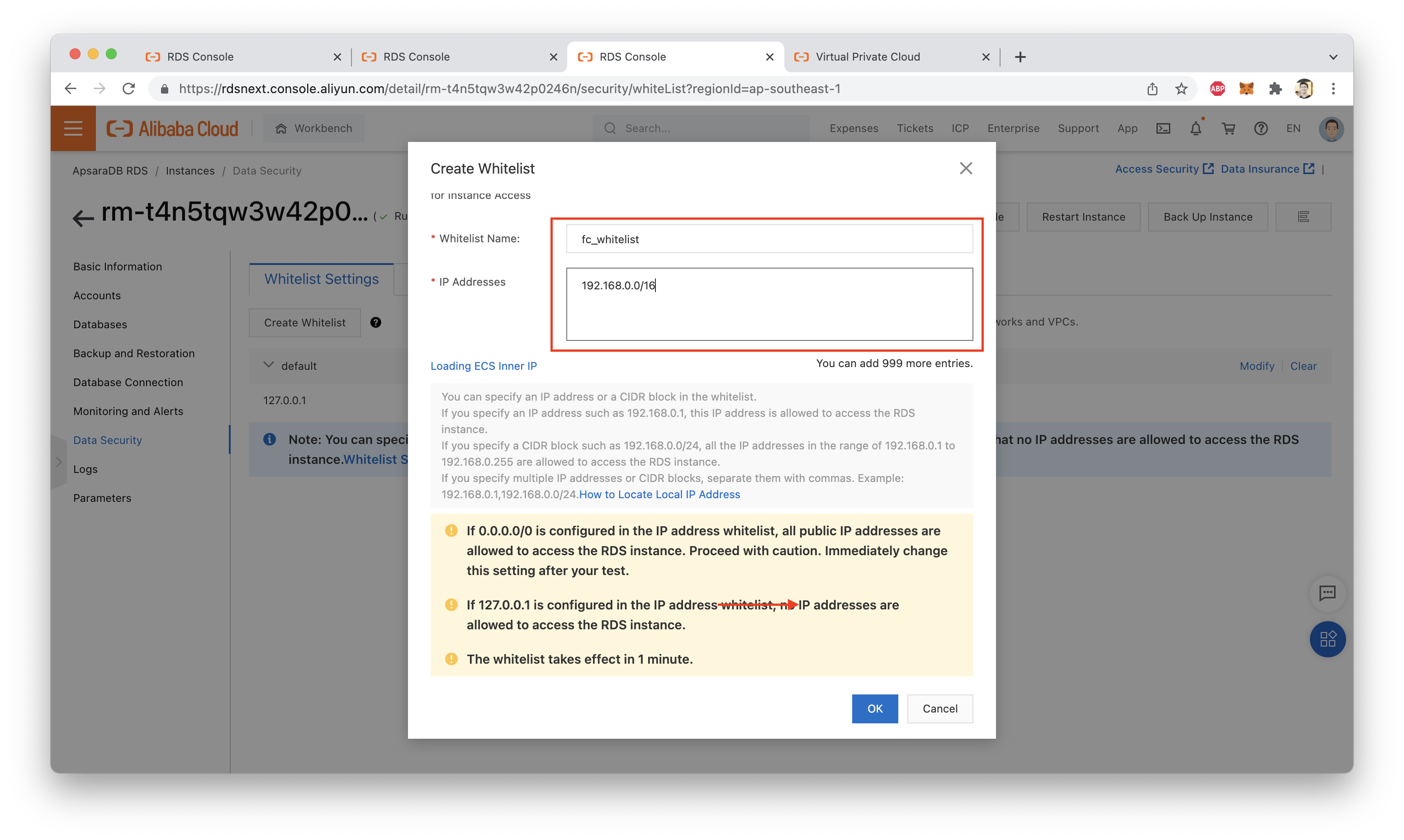

Finally, give the new whitelist a name and set the IP address range to the VPC's address range:

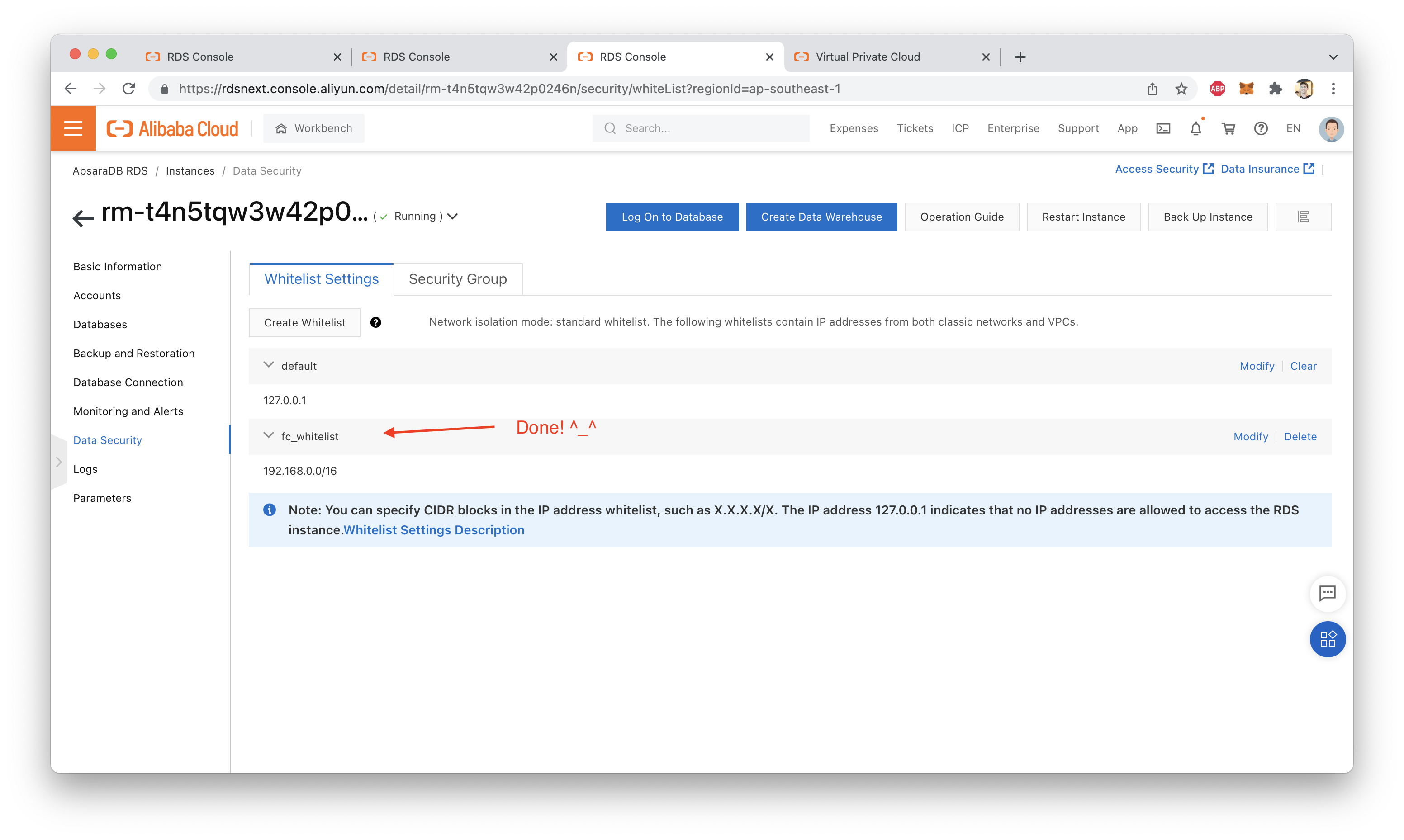

That's it! If everything worked, the new whitelist should be fully configured:

Before we can use Function Compute to write data into our database, we need to create some tables.

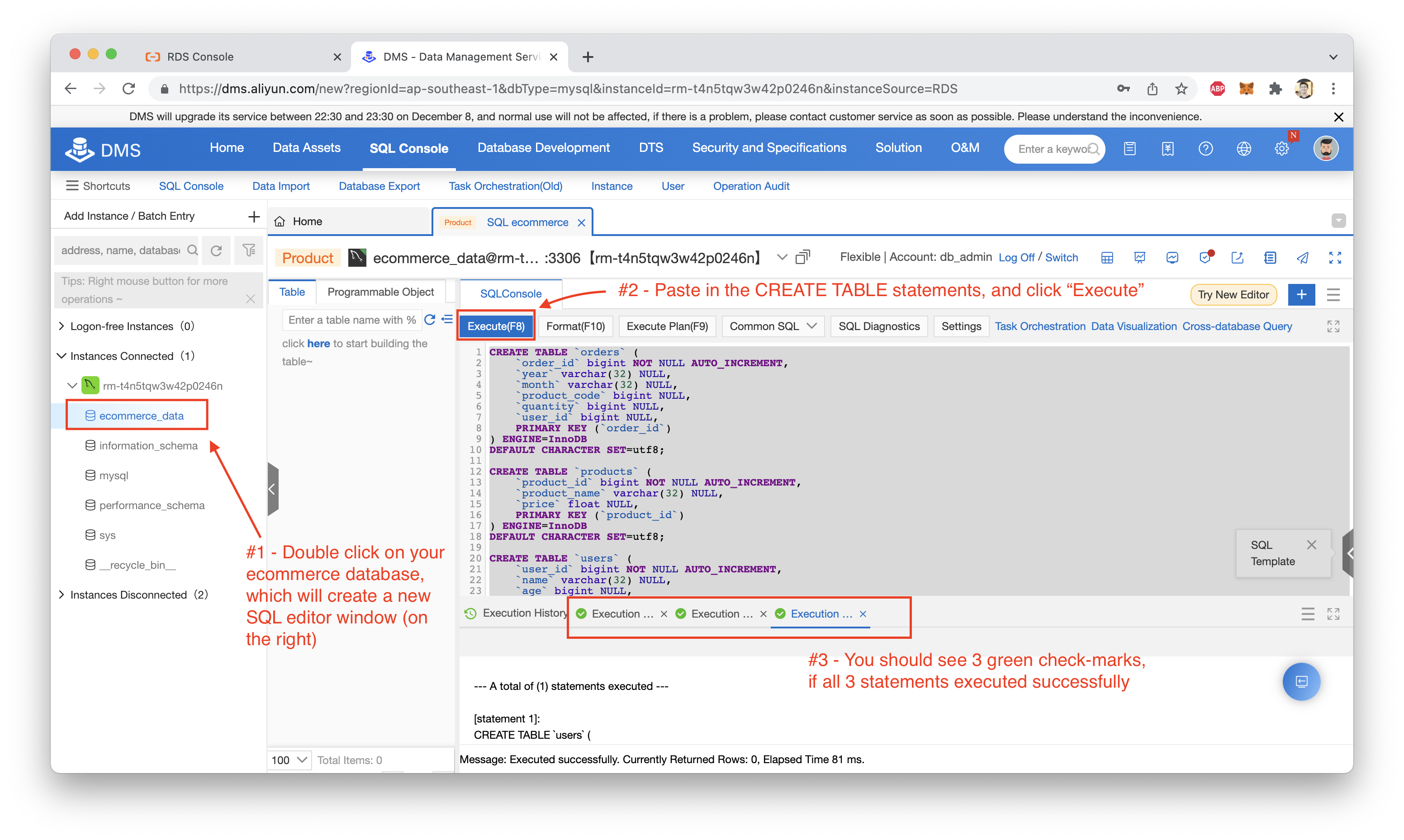

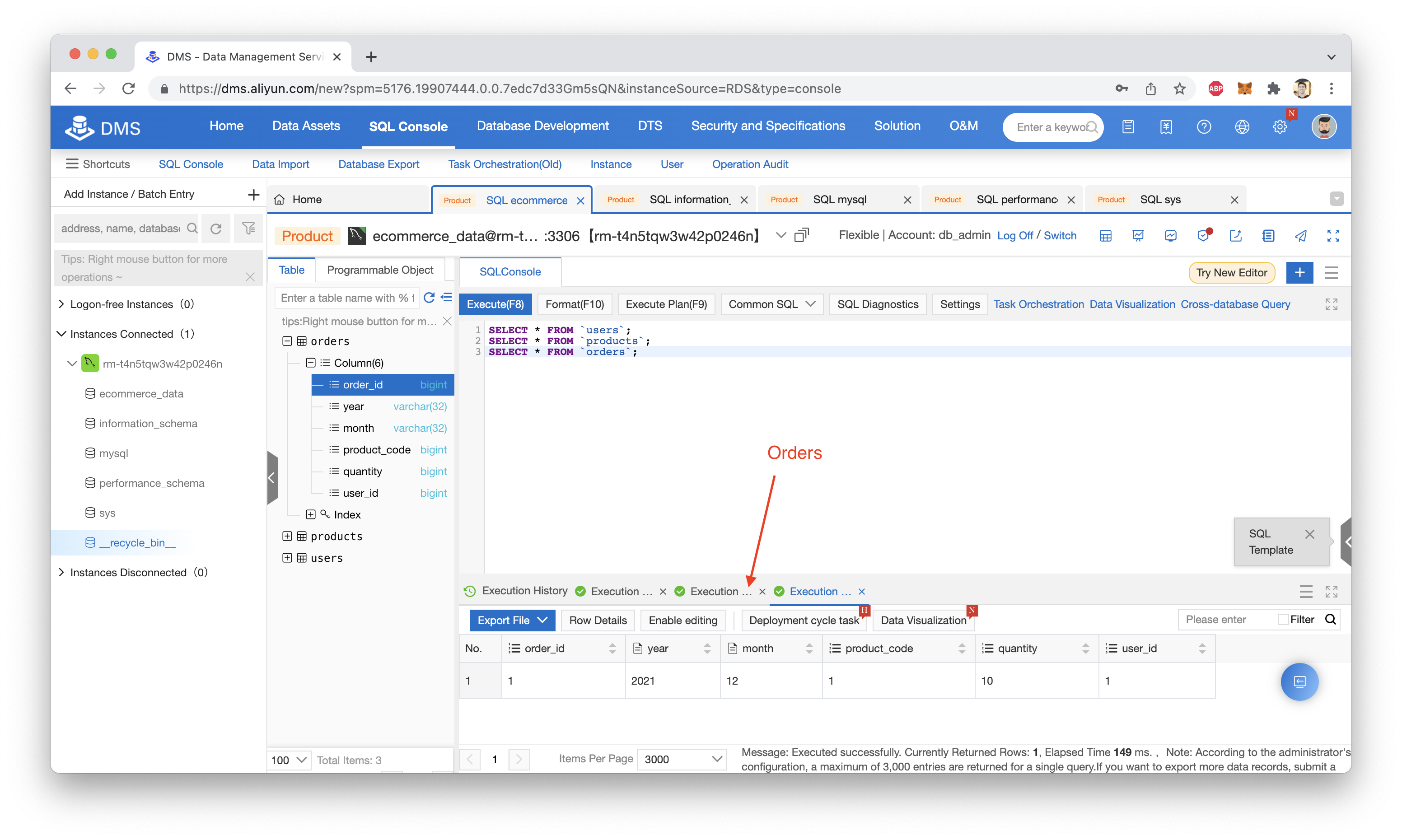

Let's use Alibaba Cloud's DMS to do this. DMS is an online SQL console and database management tool that makes it easy to manage multiple databases from a single browser window.

From within DMS, execute the following CREATE TABLE statements:

CREATE TABLE `orders` (

`order_id` bigint NOT NULL AUTO_INCREMENT,

`year` varchar(32) NULL,

`month` varchar(32) NULL,

`product_code` bigint NULL,

`quantity` bigint NULL,

`user_id` bigint NULL,

PRIMARY KEY (`order_id`)

) ENGINE=InnoDB

DEFAULT CHARACTER SET=utf8;

CREATE TABLE `products` (

`product_id` bigint NOT NULL AUTO_INCREMENT,

`product_name` varchar(32) NULL,

`price` float NULL,

PRIMARY KEY (`product_id`)

) ENGINE=InnoDB

DEFAULT CHARACTER SET=utf8;

CREATE TABLE `users` (

`user_id` bigint NOT NULL AUTO_INCREMENT,

`name` varchar(32) NULL,

`age` bigint NULL,

`sex` varchar(32) NULL,

`country` varchar(32) NULL,

`country_code` varchar(32) NULL,

PRIMARY KEY (`user_id`)

) ENGINE=InnoDB

DEFAULT CHARACTER SET=utf8;Done? Great! You should see something like this (note the 3 tabs at the bottom, all with a green check-mark next to them):

With all our database prep out of the way, we can move on to setting up Function Compute.

First, visit the Alibaba Cloud Academy GitHub Page. Specifically, download the function-compute repository, which you can find here.

Inside that repository, you'll find a folder called customer-data-generator. This folder contains the code for three Function Compute functions that can create new users, products, and orders. Here is a direct link to that code.

Building and deploying that code requires two things:

Docker is required to locally build and test your Function Compute functions (not necessary, but very handy). Serverless Devs is needed to build, test, and deploy your functions to Alibaba Cloud Function Compute.

You'll also need to perform a few setup steps with the Serverless Devs tool in order to make sure it can talk to your Alibaba Cloud account.

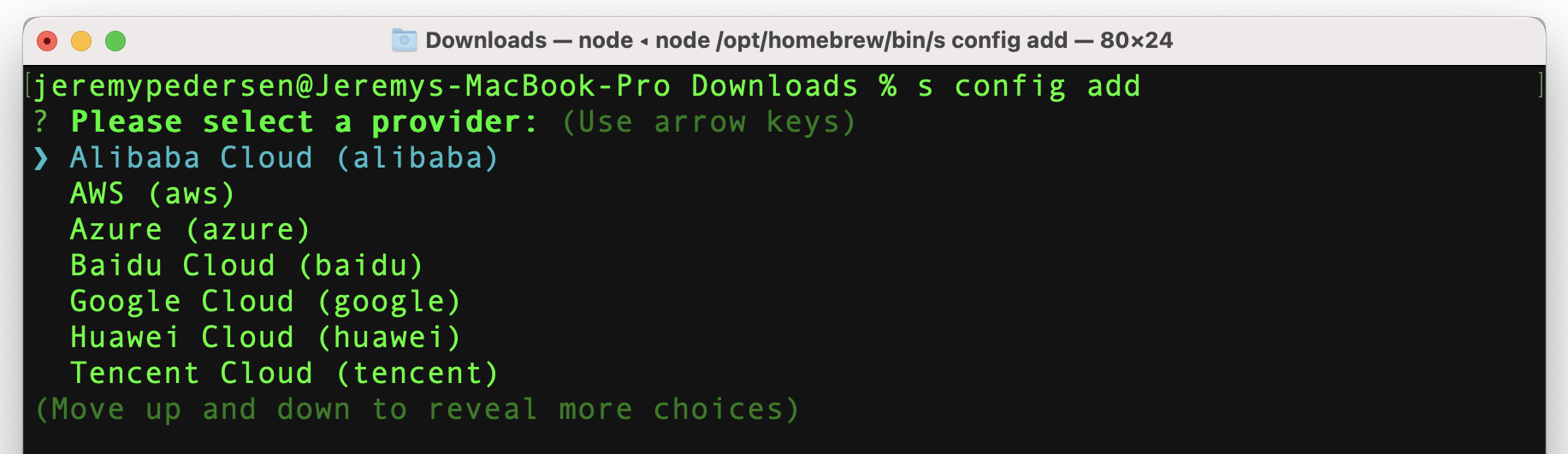

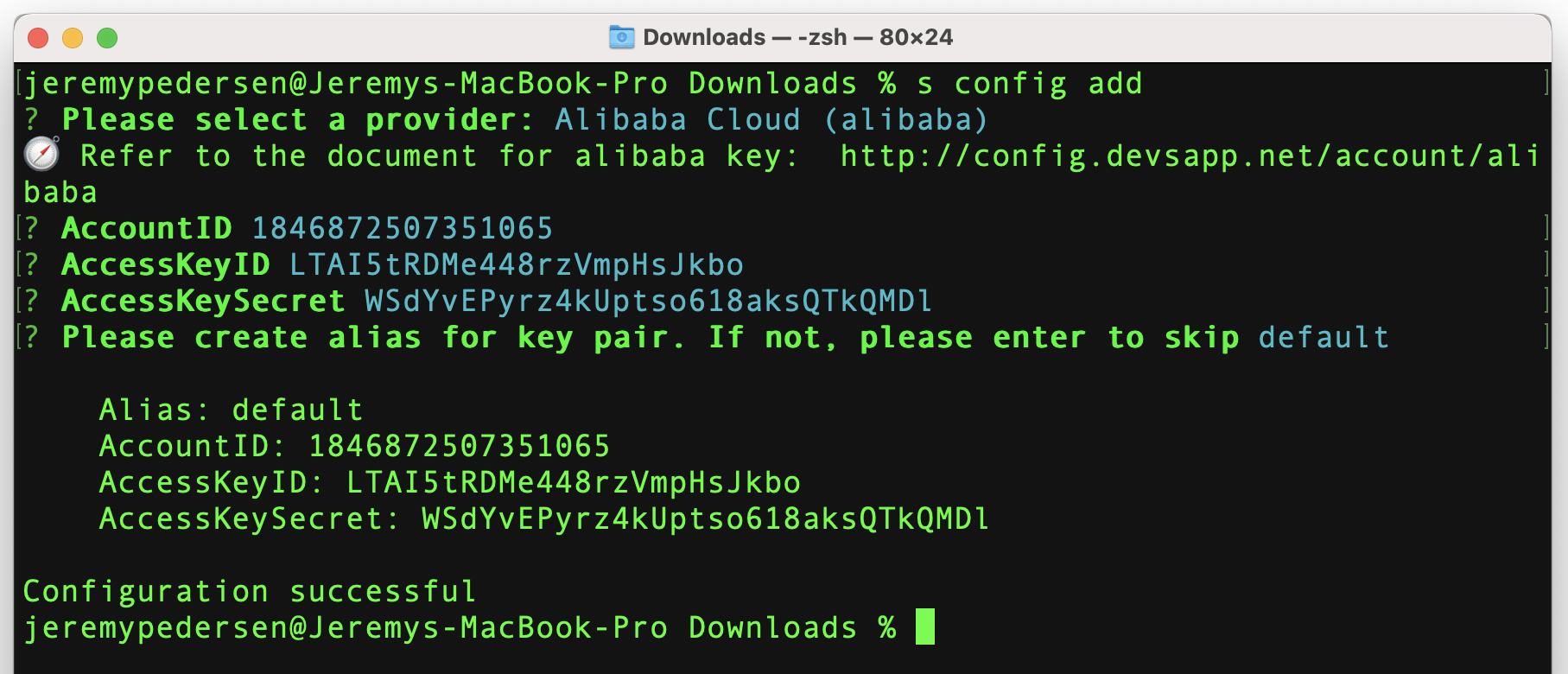

You'll then need to run a command to configure Serverless Devs. Note, the command to run Serverless Devs is just s. That's right..just the letter s!

Just run s config add to start the configuration process. Then step through the configuration options as shown here:

If those steps aren't clear, take a look at the configuration docs.

Inside customer-data-generator, you'll see another folder called ecomm_service. This folder holds the 3 functions that create users, orders, and products, respectively:

user_generator holds the user generation codeorder_generator holds the order generation codeproduct_generator holds the product generation codeAlso in the ecomm_service directory, you should see a file called s.yaml. This is the configuration file that tells the "Serverless Devs" tool how to create a new Function Compute Service, deploy our three Functions within that service, and set up three HTTP triggers for our three functions.

So what do we need to change?.

First, take a look at the index.py files contained in the three _generator directories. There should be some code similar to this, near the top:

config = {

'user': 'db_username',

'password': 'db_password',

'host': 'db_endpoint',

'database': 'db_name',

'raise_on_warnings': True

}You need to replace the user, password, host, and database values with ones that match the RDS database you created earlier.

Make sure you do this in all three index.py files. Of course, in a production environment we wouldn't hard code these values: we would either pass them in as Function Compute parameters or as environment variables, but in the interest of speed and simplicity, we hard-code them here.

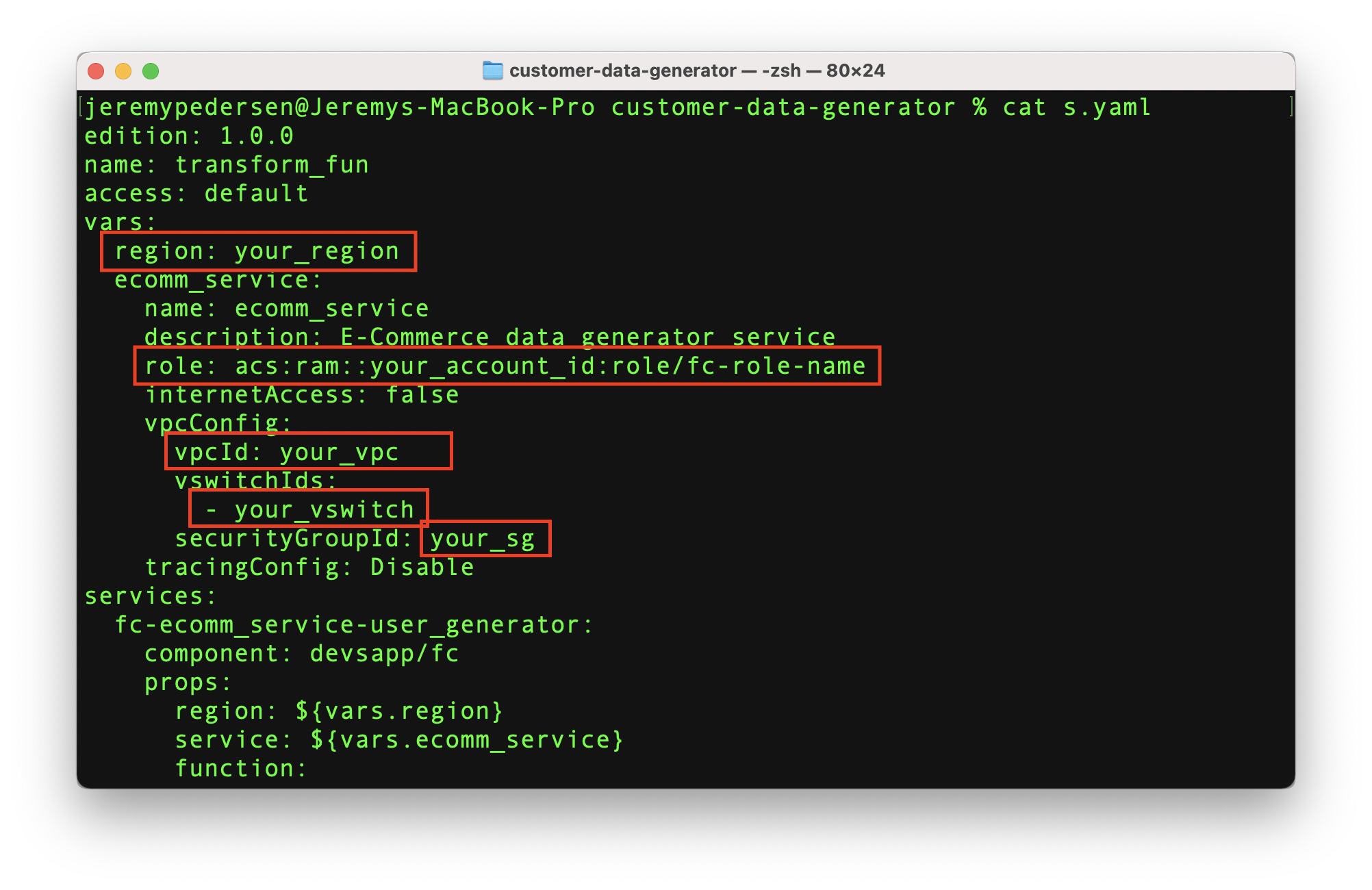

Inside customer-data-generator, there's also a file called s.yaml. Opening it up, you'll see some code that looks like this:

edition: 1.0.0

name: transform_fun

access: default

vars:

region: your_region

ecomm_service:

name: ecomm_service

description: E-Commerce data generator service

role: acs:ram::your_account_id:role/fc-role-name

internetAccess: false

vpcConfig:

vpcId: your_vpc

vswitchIds:

- your_vswitch

securityGroupId: your_sg

tracingConfig: Disable

services:

fc-ecomm_service-user_generator:

component: devsapp/fc

props:

region: ${vars.region}

service: ${vars.ecomm_service}

function:

name: user_generator

handler: index.handler

timeout: 60

memorySize: 128

runtime: python3

instanceType: e1

environmentVariables: {}

codeUri: ./ecomm_service/user_generator

triggers:

- name: http_trigger

type: http

config:

authType: anonymous

methods:

- GET

fc-ecomm_service-product_generator:

component: devsapp/fc

props:

region: ${vars.region}

service: ${vars.ecomm_service}

function:

name: product_generator

handler: index.handler

timeout: 60

memorySize: 128

runtime: python3

instanceType: e1

environmentVariables: {}

codeUri: ./ecomm_service/product_generator

triggers:

- name: http_trigger

type: http

config:

authType: anonymous

methods:

- GET

fc-ecomm_service-order_generator:

component: devsapp/fc

props:

region: ${vars.region}

service: ${vars.ecomm_service}

function:

name: order_generator

handler: index.handler

timeout: 60

memorySize: 128

runtime: python3

instanceType: e1

environmentVariables: {}

codeUri: ./ecomm_service/order_generator

triggers:

- name: http_trigger

type: http

config:

authType: anonymous

methods:

- GETPay attention to these values (below). They need to be modified to match your environment:

In my case, I need to change the value after region: to ap-southeast-1 (Singapore), which is where my RDS instance is deployed.

I also need to replace value in the vpcId: field with the ID of the VPC where my RDS instance is located (remember that from earlier?)

Further, I need to specify a value after securityGroupId: that corresponds to a security group attached to my VPC, and I need to specify at least one VSwitch ID from my VPC, here:

vswitchIds:

- your_vswitchNote that it doesn't need to be the ID of the VSwitch your RDS instance is attached to. Any VSwitch from the VPC group will do: by default, all VSwitches within a VPC can communicate with one another.

One of the trickier lines is this one:

role: acs:ram::your_account_id:role/fc-role-nameHere, you need to replace your_account_id with your Alibaba Cloud account ID (mouse over your avatar in the web console to find it), and you also need to replace fc-role-name with the name of a RAM Role that gives the Function Compute service the permissions it needs to set things up for you automatically.

If you have used the Function Compute web console before and have set up a Function Compute Service that allows functions to access a VPC, your account may already contain a RAM role called fc-default-role, which has all the correct permissions already.

If you haven't done that before, you'll need to manually create a new RAM role (see here).

If you are doing this through the web console, it should work like this:

AliyunECSNetworkInterfaceManagementAccess and attach it to the roleThat's it. Make sure to replace fc-role-name in s.yaml with the actual name of your role:

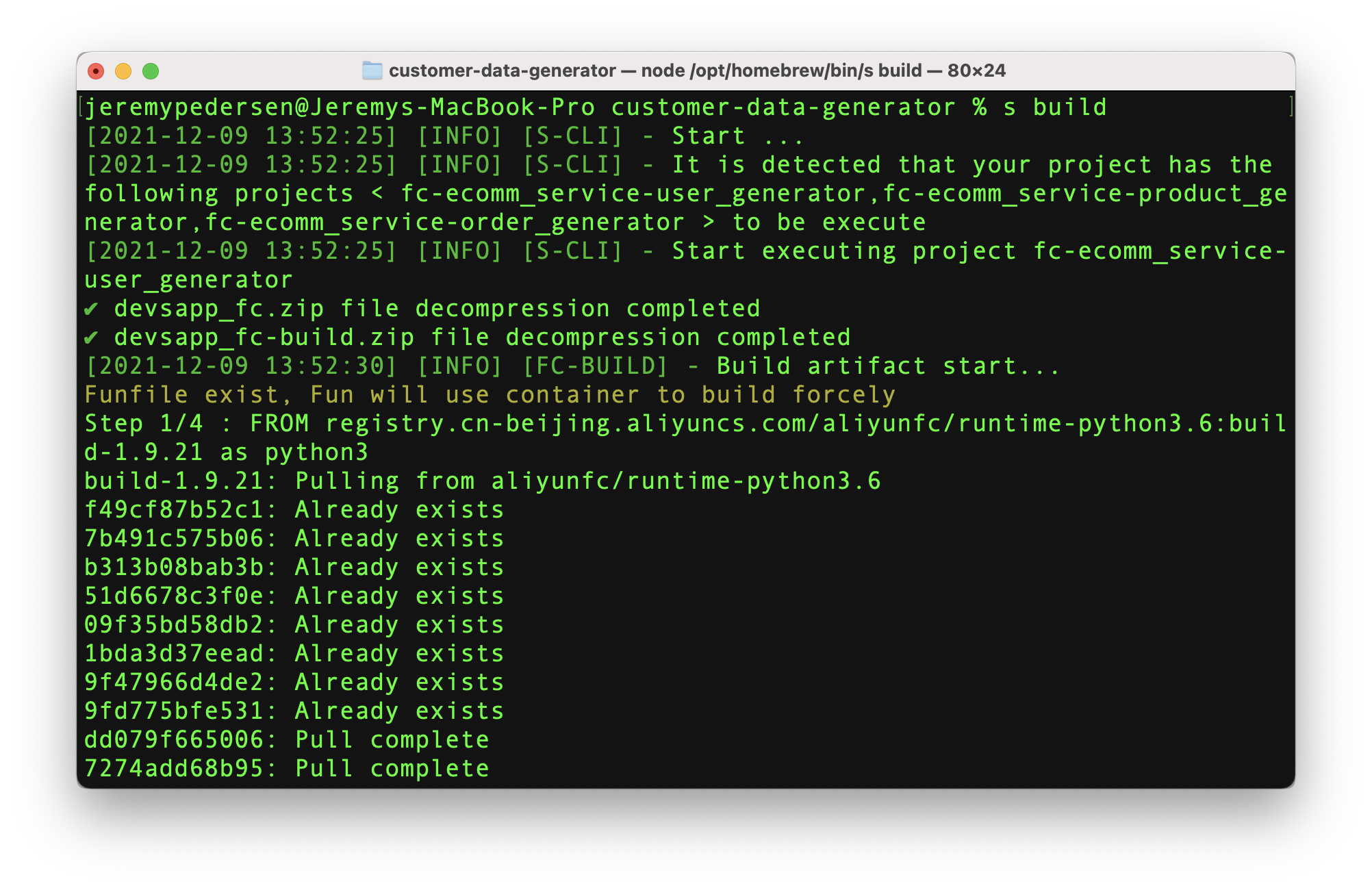

role: acs:ram::your_account_id:role/fc-role-nameGreat! Now it's time to build and deploy all our code. First, build the user, product, and order functions using:

s buildYou should execute this command from the customer-data-generator directory.

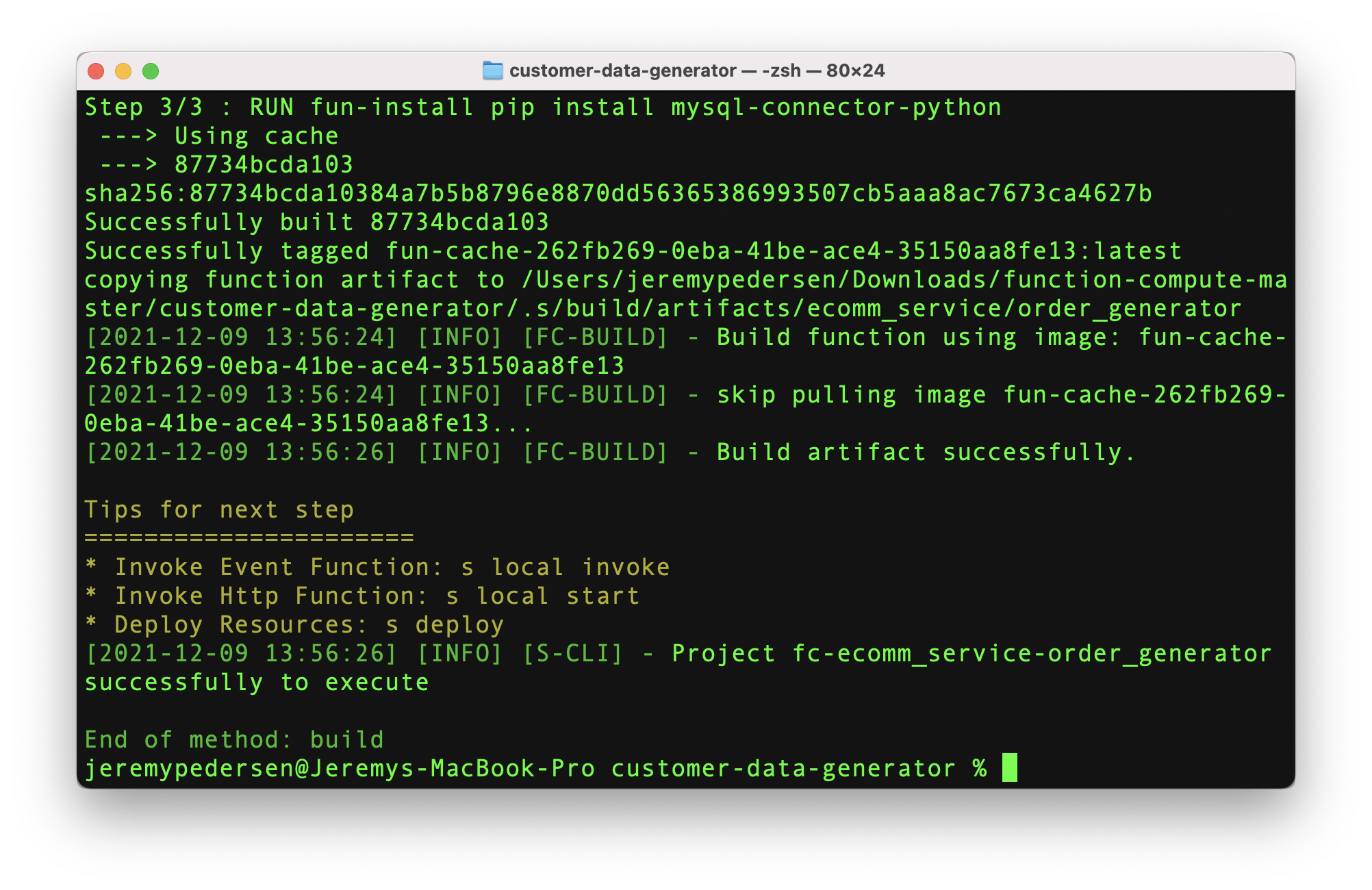

The process should look something like this:

If the build is successful, you'll see some "tips" appear at the end of the build, like so:

What we want to do next is to deploy our code, so we need to run:

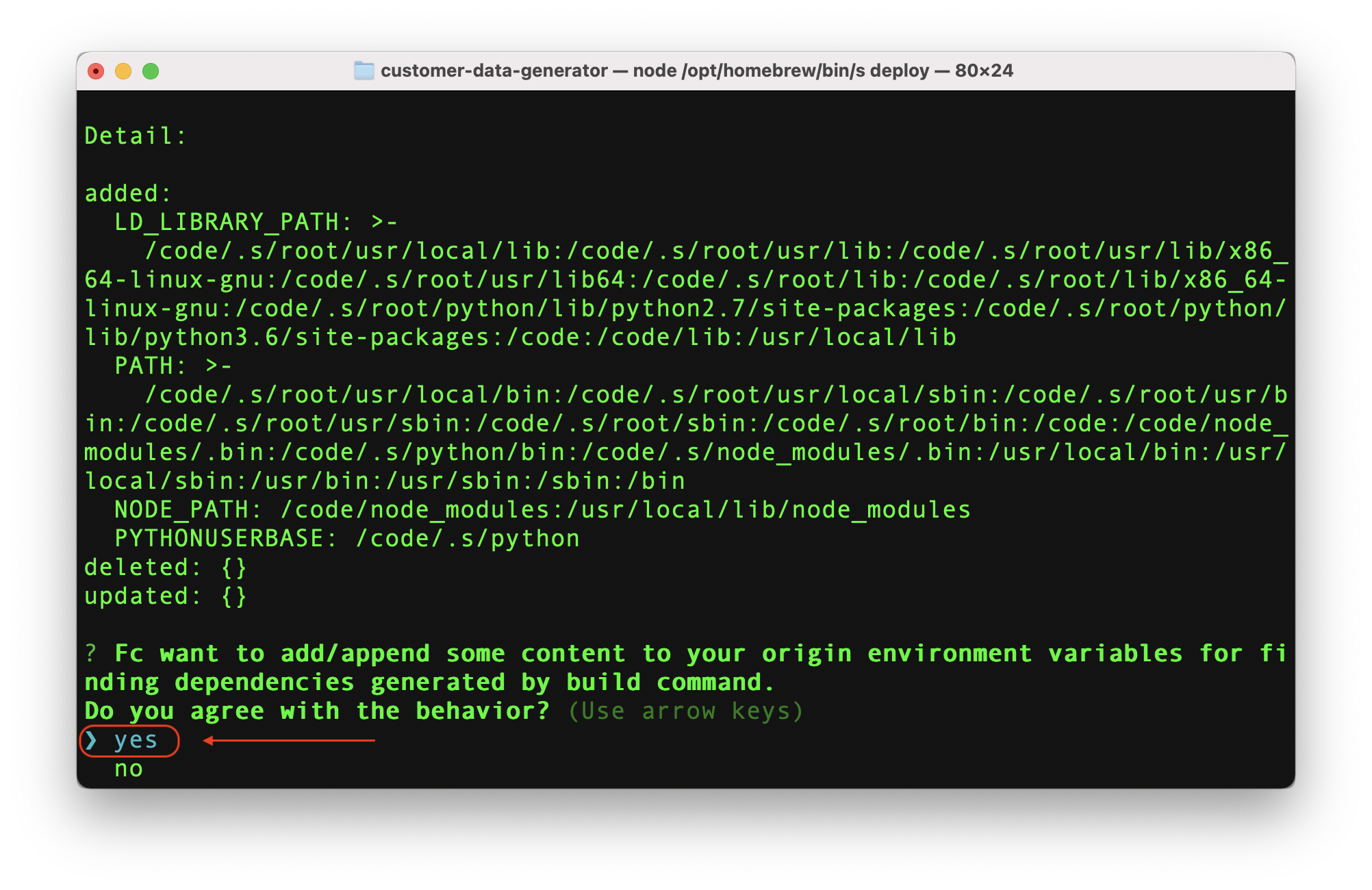

s deployDuring the deployment process, Serverless Devs might ask you whether or not to provide build-related environment variables to Function Compute. Choose "yes":

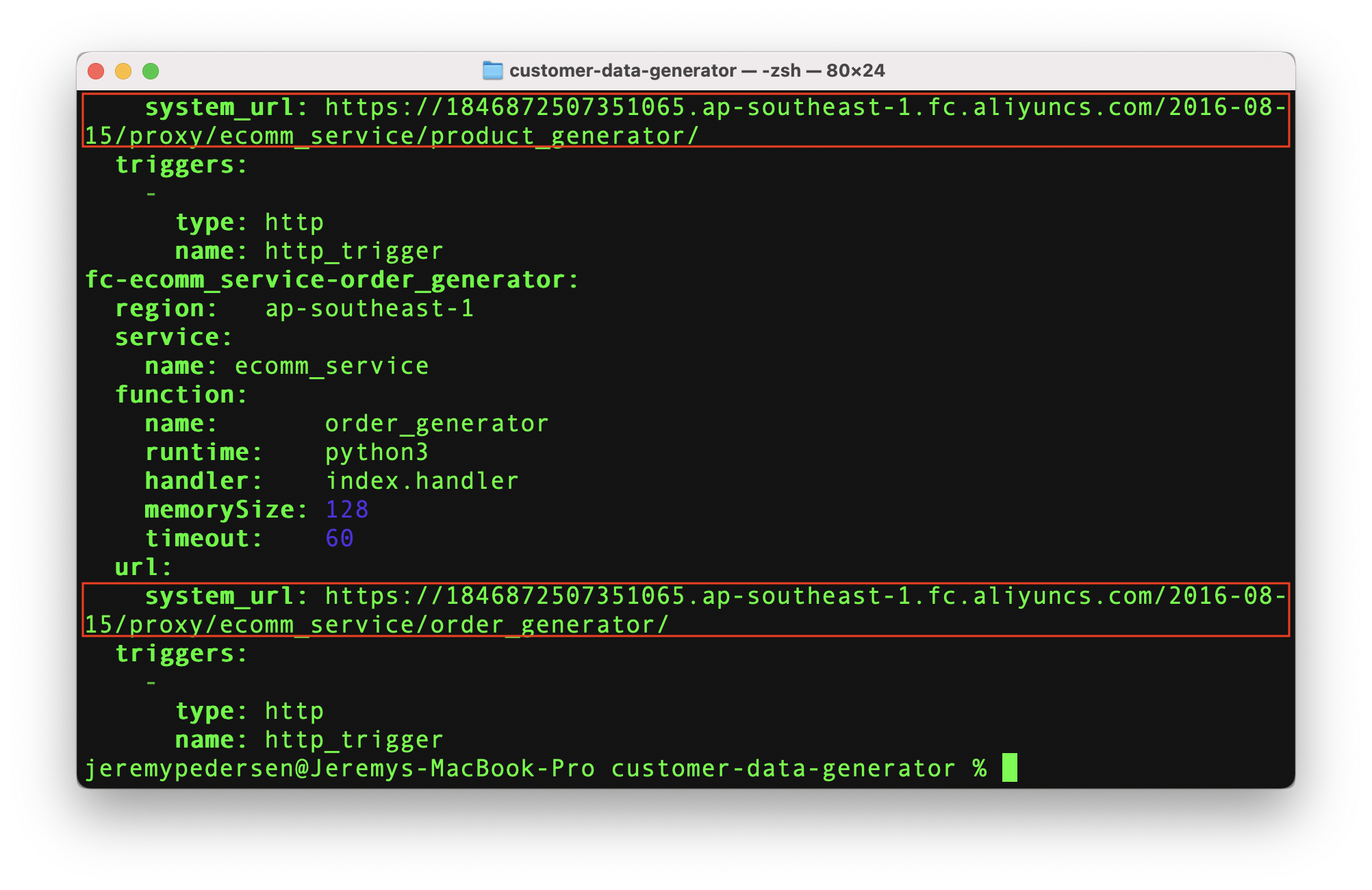

If the deploy process succeeds, the s command (Serverless Devs) will print out 3 URLs, one for each function. Make sure you make a note of these, because we'll need them later:

Great! With the deployment step done, let's try calling our functions to generate some user, product, and order data!

Note, in my case, the URLs for generating users, products, and orders are:

https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/user_generator/https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/product_generator/https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/order_generator/Yours will be different. Each Alibaba Cloud account has a unique account ID, and therefore a unique function compute endpoint URL for each region.

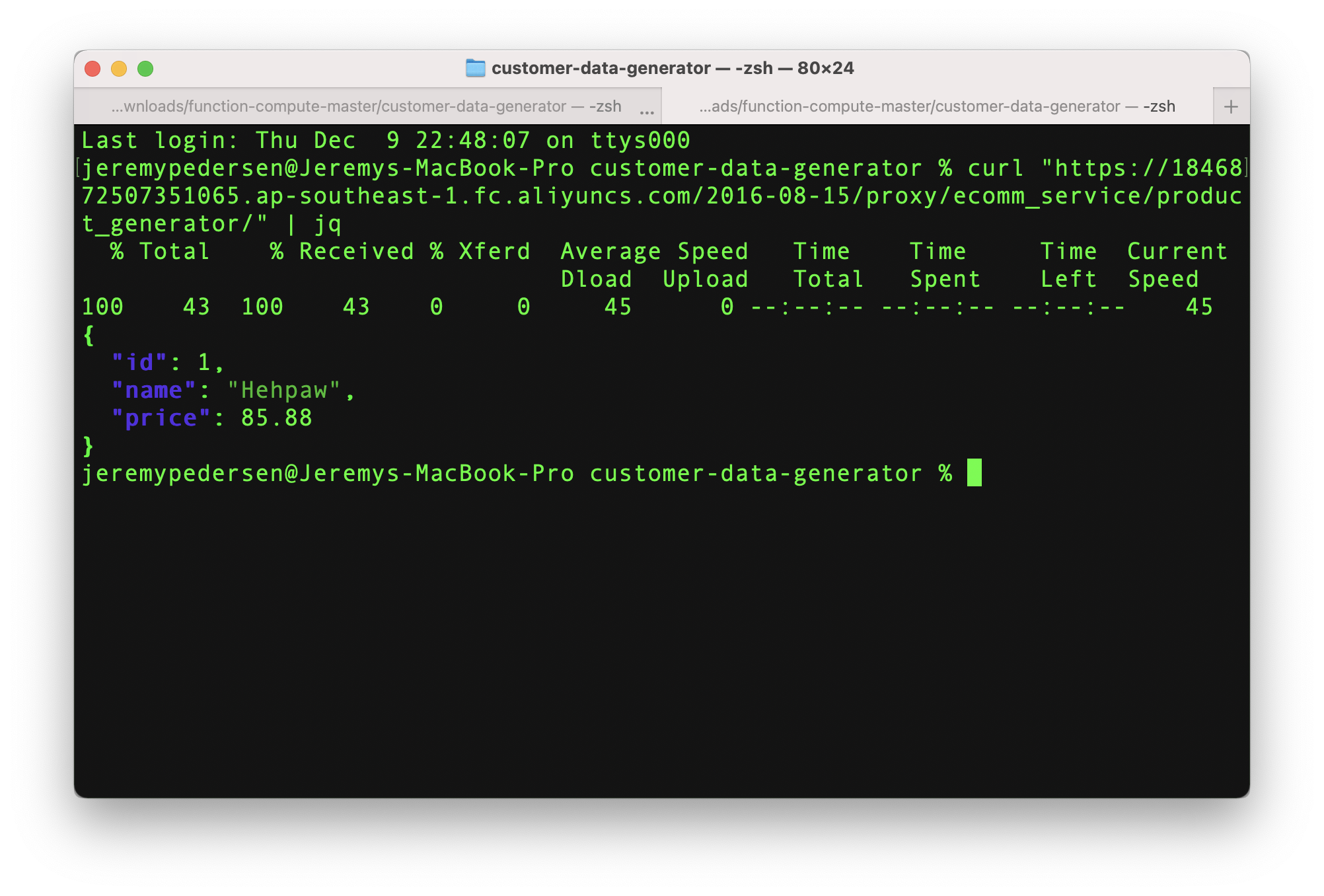

We can now begin testing out our functions! Any utility that can make an HTTP GET request should work, which means we can call our functions either by pasting URLs into a web browser, or by using common commands like curl or wget. Here I use curl.

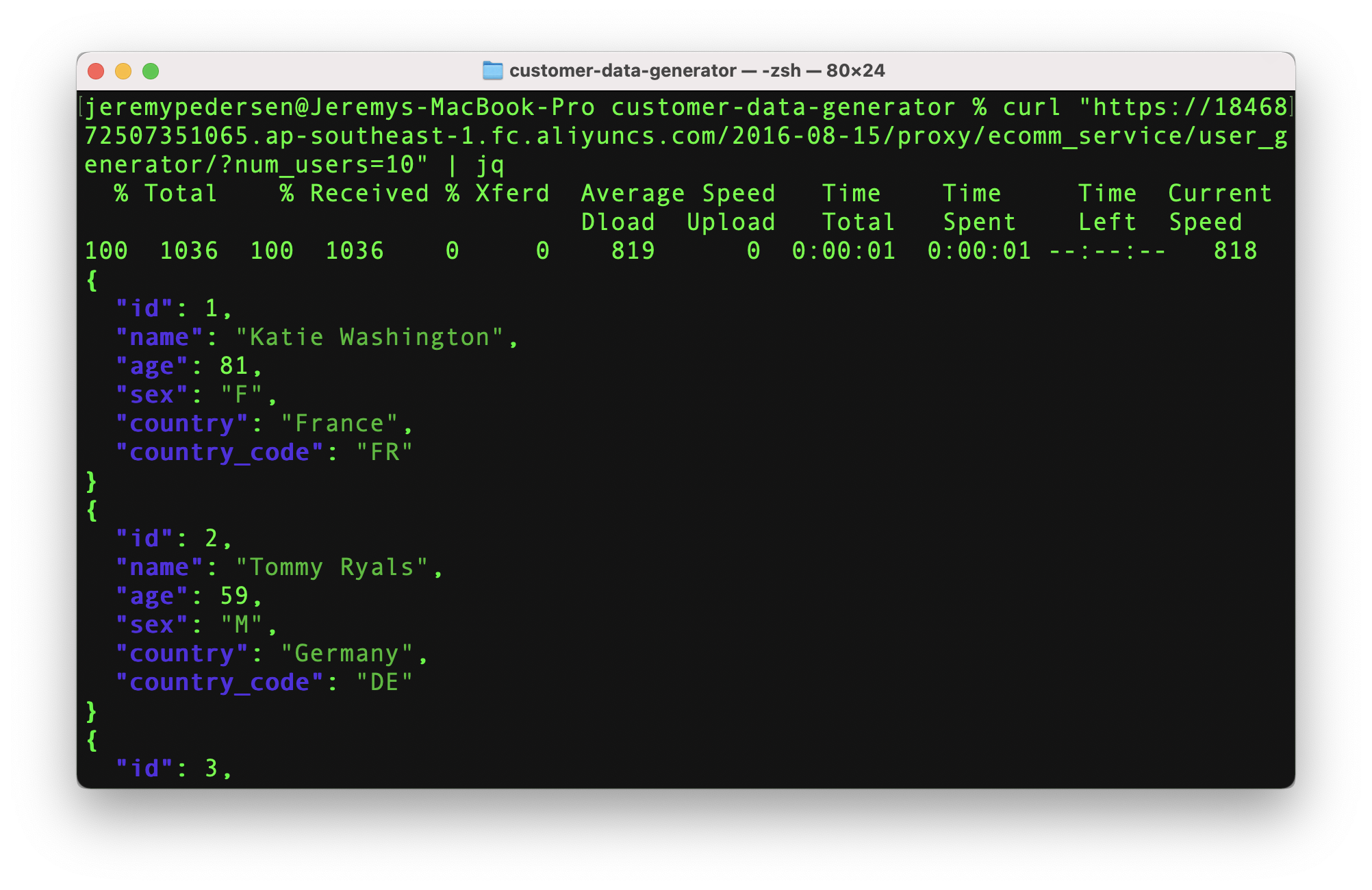

Let's start by making 10 users. Note that the request will return a JSON formatted response, so I use the command jq to pretty-print the response (this just makes it look nicer in the console).

To generate 10 new users with a random age, sex, name, and country, I issue the following command:

curl "https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/user_generator/?num_users=10" | jqAgain, | jq pipes the response curl into the jq command, which pretty-prints the resulting JSON output.

If this worked, you'll see output like this:

Great! Now let's create a new fake product:

curl "https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/product_generator/" | jqAwesome. We should see some output like this, which indicates a new product was created:

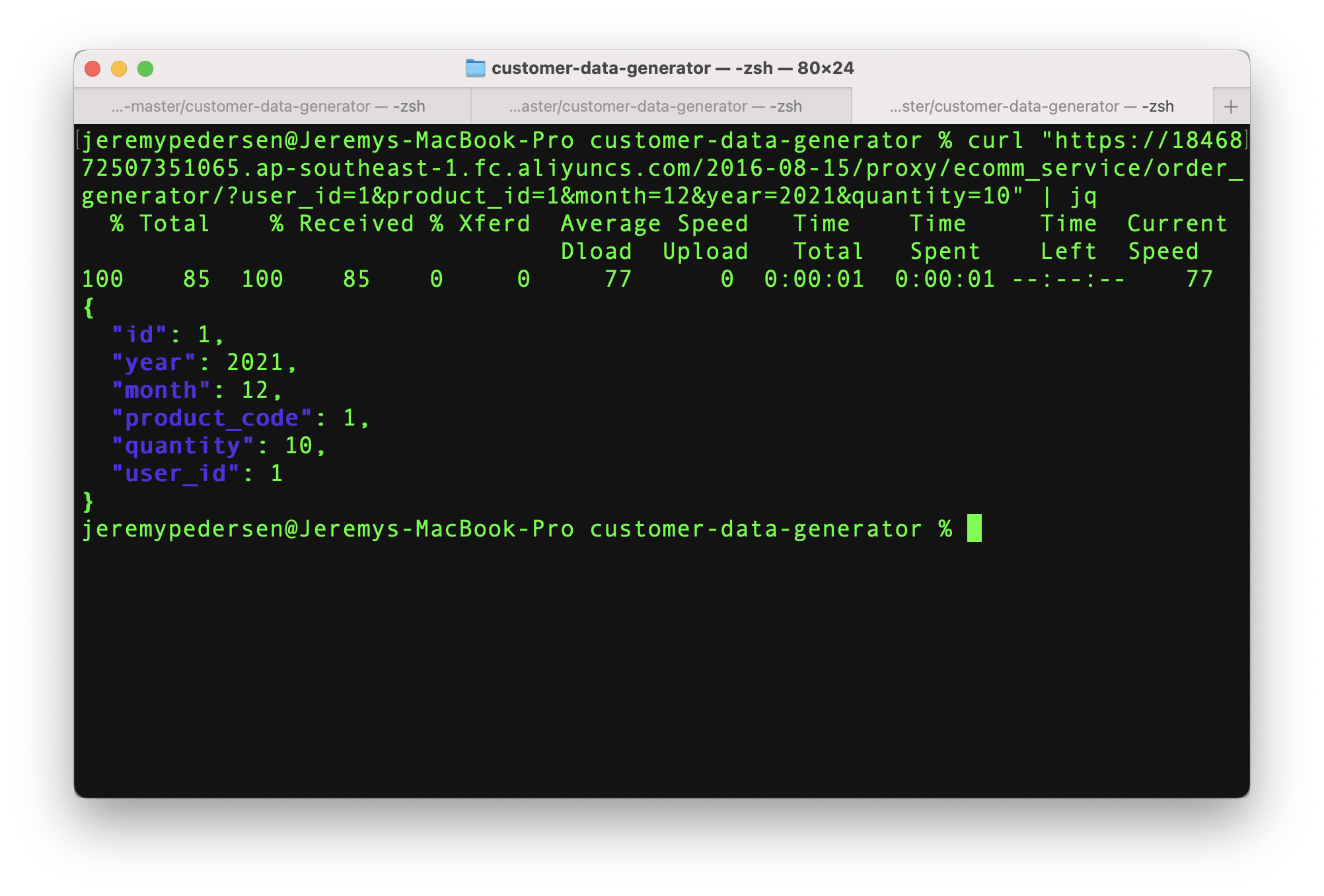

Now, we need to create an order. Note that this time we need to supply some URL parameters. A quantity, product ID, user ID, month, and year are all mandatory. The order of the parameters is not important, though. Use whichever ordering scheme you prefer:

curl "https://1846872507351065.ap-southeast-1.fc.aliyuncs.com/2016-08-15/proxy/ecomm_service/order_generator/?user_id=1&product_id=1&month=12&year=2021&quantity=10" | jqNote that we use a real user ID and real product ID, which we pulled from the previous calls to user_generator and product_generator. This is important! If we generate bogus orders, this will make our life a lot harder if we use this data later on to test out tools like QuickBI, since table joins and other SQL operations may not work like we expect.

If the order was submitted successfully, we'll see something like this:

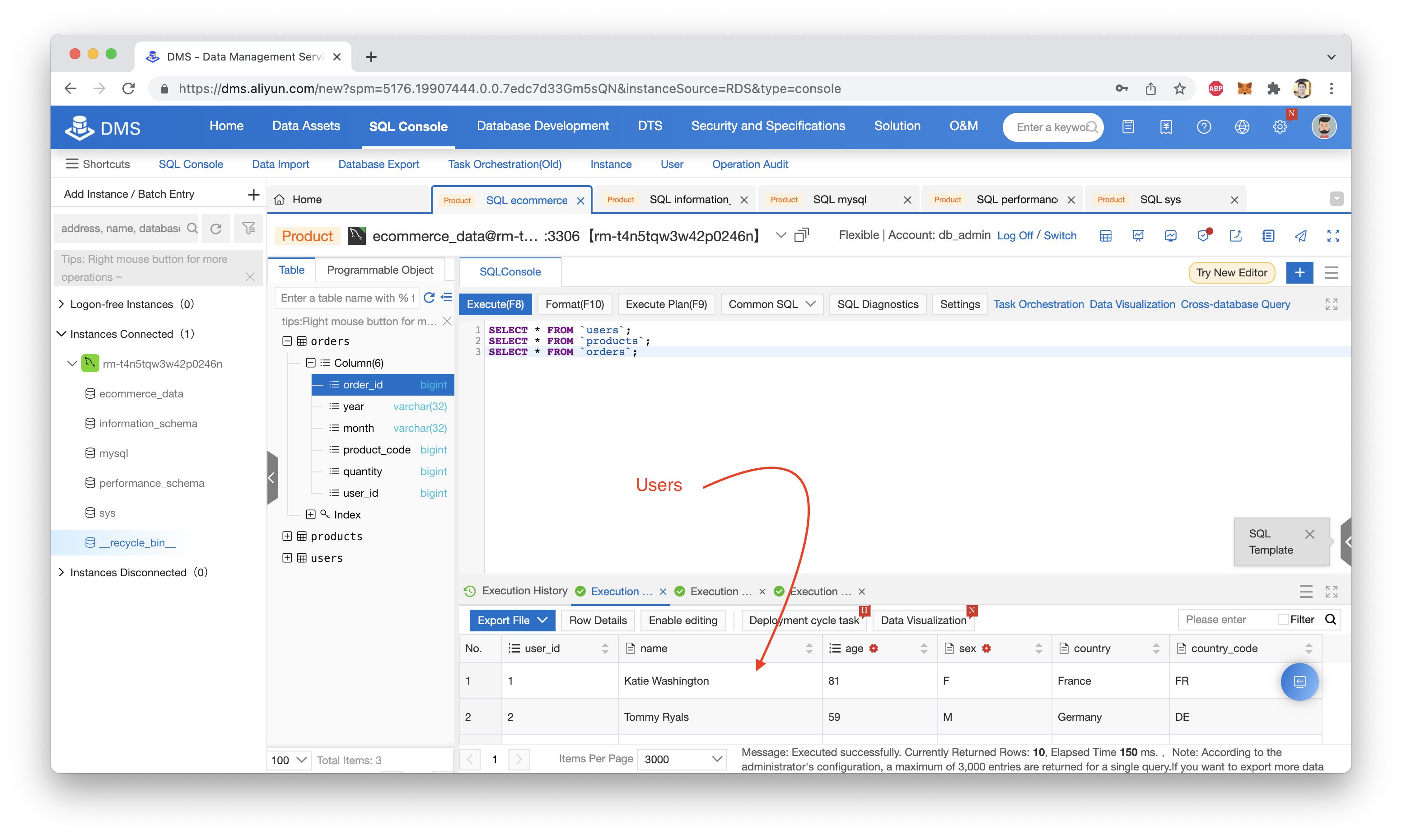

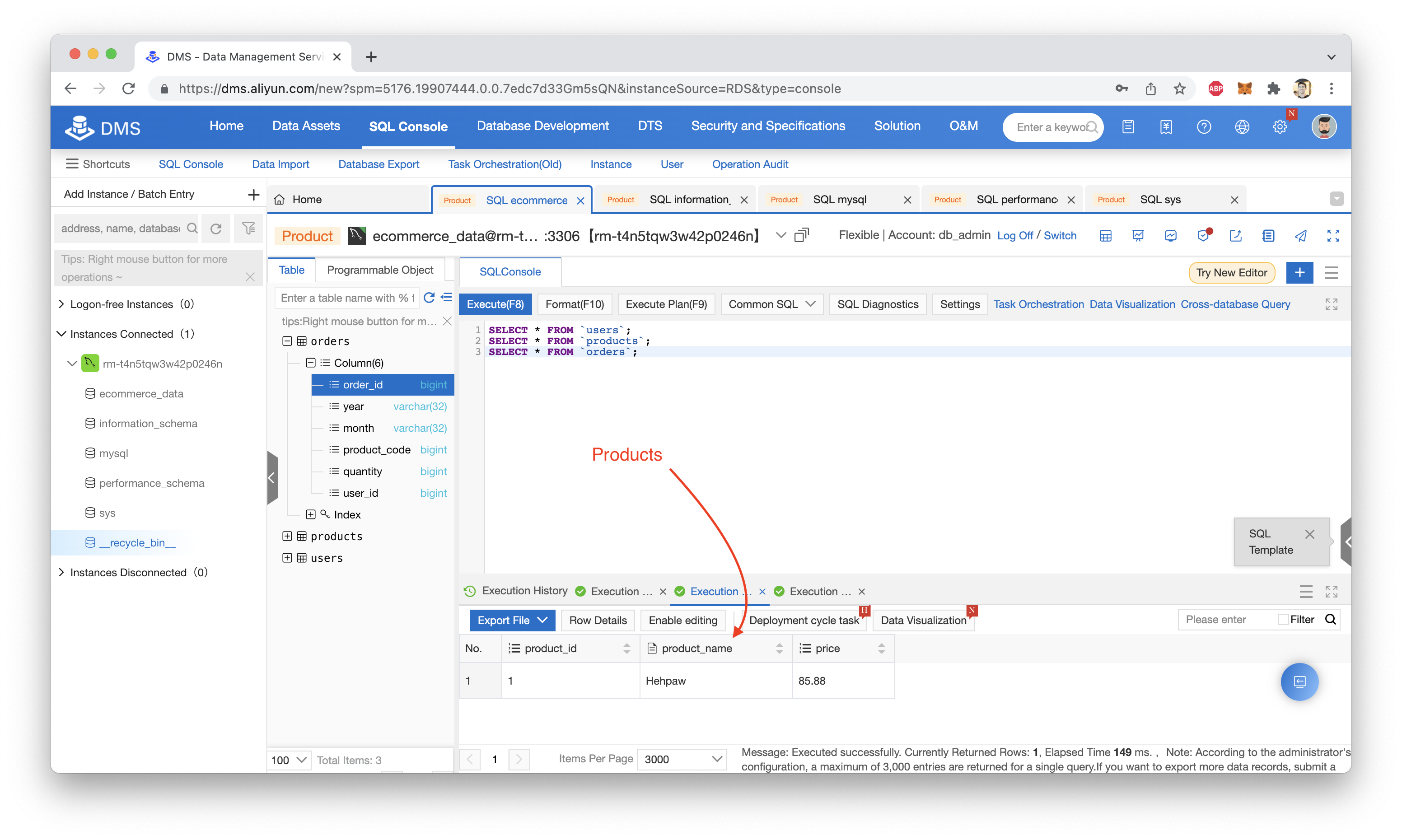

Now, we can log back into our RDS database and run a few SELECT queries to see if our records were inserted successfully. Specifically, we want to look at the users, orders and products tables:

SELECT * FROM `users`;

SELECT * FROM `products`;

SELECT * FROM `orders`;We should see the same data that was returned to us when we made our GET requests using curl:

Now you can create new users, orders, or products at will, using simple HTTP GET requests!

From here, you could write a Python script or even another Function Compute function that calls the user, product, and order generation functions on a regular basis, simulating regular additions of new data to your database.

Once you're done experimenting, be sure to delete your RDS database. Leaving your Function Compute functions in place won't cost you much (if anything), but if you do want to clean up, keep in mind that you must delete things in the following order:

That's it! See you next week.

Great! Reach out to me at jierui.pjr@alibabacloud.com and I'll do my best to answer in a future Friday Q&A blog.

You can also follow the Alibaba Cloud Academy LinkedIn Page. We'll re-post these blogs there each Friday.

JDP - August 27, 2021

JDP - December 23, 2021

JDP - September 15, 2021

JDP - July 9, 2021

JDP - December 30, 2021

JDP - May 20, 2022

Function Compute

Function Compute

Alibaba Cloud Function Compute is a fully-managed event-driven compute service. It allows you to focus on writing and uploading code without the need to manage infrastructure such as servers.

Learn More ApsaraDB RDS for MySQL

ApsaraDB RDS for MySQL

An on-demand database hosting service for MySQL with automated monitoring, backup and disaster recovery capabilities

Learn More Container Compute Service (ACS)

Container Compute Service (ACS)

A cloud computing service that provides container compute resources that comply with the container specifications of Kubernetes

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn MoreMore Posts by JDP

5658494509194225 October 11, 2023 at 6:08 am

Hello. I saw you mentioned about "chaining" of functions in Function Compute (FC). Could you please point me to documentation that talks about function chaining in Function Compute (FC)?