By Yang Lu and Wei Shi

As data center network bandwidth grows significantly and latency continues to fall, the traditional Ethernet-based TCP protocol stack faces new challenges. Traditional Ethernet network interface controllers and the TCP protocol stack can no longer meet requirements for network throughput, transmission latency, efficiency, and cost reduction. Meanwhile, cloud and hardware providers now offer high-performance DPU solutions. What is needed is a high-performance network protocol stack in which software and hardware collaborate: one that adapts to DPUs, makes full use of hardware performance, and supports large-scale cloud application scenarios. Such a stack should also be easy to develop for, deploy, and operate, and compatible with mainstream business architectures such as cloud-native.

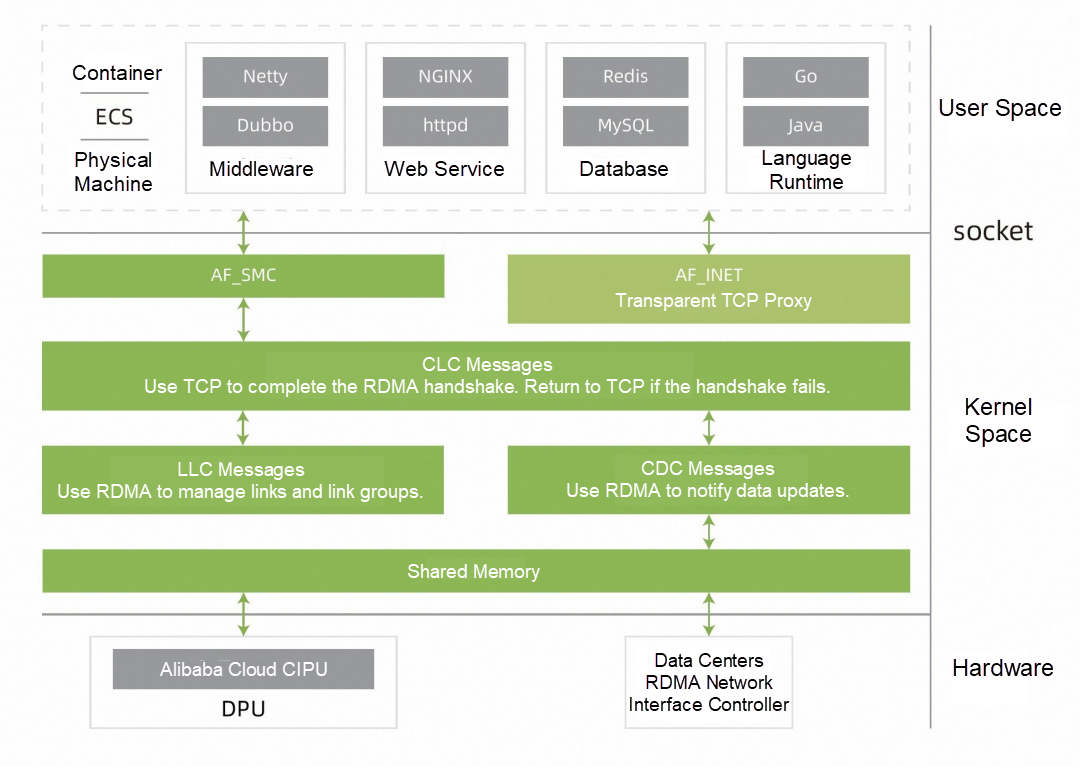

Shared Memory Communication (SMC) is a high-performance protocol stack built on hardware and software collaboration. It was contributed to the Linux community by IBM and is enhanced and maintained by OpenAnolis. SMC provides the following all-in-one solutions for different scenarios, hardware, and application models.

SMC is a general-purpose, high-performance network protocol stack natively supported by the Linux kernel. It supports the socket interface and fast fallback to TCP, so any TCP application can be switched to the SMC protocol stack transparently. Because the ratio of business logic to network overhead differs, the acceleration benefit varies across applications. The following are typical application scenarios and best business practices:

In general, the SMC protocol stack can improve the performance of TCP applications, reducing latency and increasing QPS, without any changes to application code. The size of the gain depends on the ratio of business logic to network overhead, so it varies from one application to another. In some scenarios, such as high-performance computing and big data, SMC can deliver significant performance improvements.
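Because SMC is exposed through the ordinary socket interface, a host can be checked for SMC support from user space. The following is a minimal sketch, assuming a Linux host. The address family number 43 (`AF_SMC`) and protocol number 0 are taken from the Linux kernel headers, and the helper name `smc_socket_supported` is hypothetical. It only tests whether the kernel accepts the creation of an SMC stream socket. It does not open a connection, and it does not exercise the fallback to TCP.

```python
import socket

# AF_SMC is 43 in the Linux kernel headers (linux/socket.h).
# Python's socket module may not export the name, so fall back to the number.
AF_SMC = getattr(socket, "AF_SMC", 43)
SMCPROTO_SMC = 0  # SMC with IPv4 addressing


def smc_socket_supported():
    """Return True if the kernel lets us create an SMC stream socket.

    Hypothetical helper for illustration: it only probes socket creation.
    """
    try:
        sock = socket.socket(AF_SMC, socket.SOCK_STREAM, SMCPROTO_SMC)
    except OSError:
        # Address family unknown, module not loaded, or not permitted.
        return False
    sock.close()
    return True


if __name__ == "__main__":
    if smc_socket_supported():
        print("This kernel can create AF_SMC sockets.")
    else:
        print("AF_SMC is not available; applications will use plain TCP.")
```

A probe like this is useful only as a deployment check. The transparent replacement described above means that existing TCP applications are not expected to open `AF_SMC` sockets themselves.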
