The Alibaba Cloud Operating System Team and the Alibaba Cloud Database Team, together with the Emerging Parallel Computing Center (EPCC) of Shanghai Jiao Tong University, co-published the paper Async-fork: Mitigating Query Latency Spikes Incurred by the Fork-based Snapshot Mechanism from the OS Level. The paper was accepted by Very Large Data Bases (VLDB). VLDB has been held since 1975 and is one of the top conferences in the database systems field (CCF-A), with an average acceptance rate of 18.6%.

In-memory key-value stores (IMKVSes) serve many online applications. They generally adopt the fork-based snapshot mechanism to support data backup. However, this method can result in query latency spikes because the engine is out-of-service for queries during the snapshot. In contrast to existing research that optimizes snapshot algorithms, we address the problem from the operating system (OS) level, while keeping the data persistence mechanism in IMKVSes unchanged. Specifically, we first study the impact of the fork operation on query latency. Based on the findings of this study, we propose Async-fork, which performs the fork operation asynchronously to reduce the out-of-service time of the engine. Async-fork is implemented in the Linux kernel and deployed into the online Redis database in public clouds. Our experiment results show that Async-fork can significantly reduce the tail latency of queries during the snapshot.

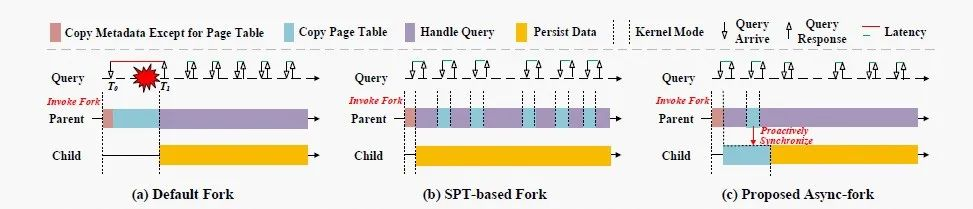

As all data resides in memory, data persistence is a key feature of IMKVSes for data backup. A common data persistence approach is to take a point-in-time snapshot of the in-memory data with the system call fork and dump the snapshot into the file system. Figure 1(a) gives an example of the fork-based snapshot method. In the beginning, the storage engine (the parent process) invokes fork to create a child process. As fork creates a new process by duplicating the parent process, the child process holds the same data as the parent. Thus, we can ask the child process to write the data into a file in the background while the parent process continues processing queries. Although the storage engine delegates the heavy IO task to the child process, the fork-based snapshot method can incur latency spikes. Specifically, queries arriving while a snapshot is being taken (from the start of fork to the end of persisting data) can have a long latency because the storage engine enters kernel mode and is out-of-service for queries. For example, on the 64GB instance size, the optimization reduces the 99%-ile latency from 911.95ms to 3.96ms and the maximum latency from 1204.78ms to 59.28ms.
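The fork-based snapshot workflow described above can be illustrated in user space. The following is a minimal sketch, not the storage engine's actual code: the function name `snapshot_with_fork`, the JSON dump format, and the file path are assumptions made for illustration, and the example requires a POSIX system where `os.fork` is available.

```python
import json
import os
import tempfile


def snapshot_with_fork(store, path):
    """Dump a point-in-time copy of `store` to `path` from a forked child."""
    pid = os.fork()
    if pid == 0:
        # Child: sees the data exactly as it was at the moment of fork().
        try:
            with open(path, "w") as f:
                json.dump(store, f)
        finally:
            os._exit(0)  # exit at once, without running the parent's cleanup code
    return pid  # parent: free to keep serving queries


if __name__ == "__main__":
    store = {"k1": "v1", "k2": "v2"}
    path = os.path.join(tempfile.mkdtemp(), "dump.json")
    child = snapshot_with_fork(store, path)
    store["k1"] = "changed-after-fork"  # the parent keeps processing writes
    os.waitpid(child, 0)
    with open(path) as f:
        print(json.load(f))  # the dump holds the values from before the fork
```

The write performed by the parent after the fork does not reach the dump, because the child works on its own copy-on-write view of memory. The latency problem discussed in this article comes from the `fork` call itself, which the sketch does not measure.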

Figure 1: The workflow of the parent and child process with (a) default fork, (b) shared page table (SPT)-based fork, and (c) the proposed Async-fork in the snapshot procedure.

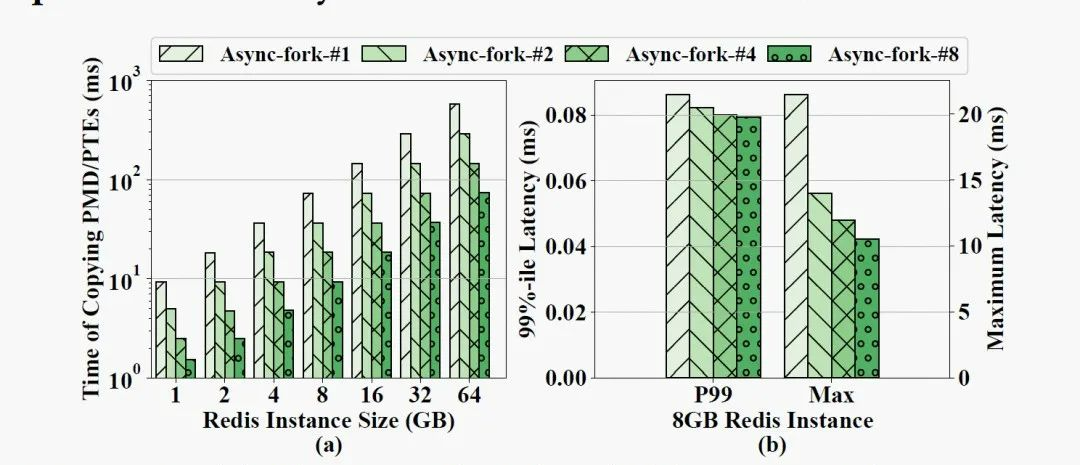

While invoking fork, the parent process runs in kernel mode and copies the metadata (e.g., VMAs, the page table, file descriptors, and signals) to the child process. Copying the page table dominates the execution time of fork: the copy operation accounts for over 97% of the execution time.
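A rough calculation shows why the page table copy grows with the instance size. The sketch below assumes 4KB pages, 512 entries per table, 8-byte entries (the x86-64 entry size), and a fully populated mapping with no huge pages; the function name `page_table_footprint` is hypothetical.

```python
PAGE_SIZE = 4 * 1024     # 4KB pages
ENTRIES_PER_TABLE = 512  # entries per page-table node
ENTRY_SIZE = 8           # bytes per entry on x86-64 (assumption stated above)


def page_table_footprint(mapped_bytes):
    """Return (entries, leaf_tables, leaf_bytes) for a densely mapped region."""
    entries = -(-mapped_bytes // PAGE_SIZE)          # ceiling division
    leaf_tables = -(-entries // ENTRIES_PER_TABLE)
    leaf_bytes = leaf_tables * ENTRIES_PER_TABLE * ENTRY_SIZE
    return entries, leaf_tables, leaf_bytes


if __name__ == "__main__":
    for gib in (1, 8, 64):
        entries, tables, size = page_table_footprint(gib * 1024**3)
        print(f"{gib} GiB: {entries} entries, {tables} leaf tables, "
              f"{size // 1024**2} MiB of leaf tables")
```

Under these assumptions, the lowest level of the page table alone grows linearly with the mapped memory, which is consistent with the copy dominating the fork time on large instances.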

Researchers have proposed a fork optimization method based on shared page tables. This method shares the page table between the parent and child processes in a copy-on-write (CoW) manner. As shown in Figure 1(b), the page table copy is not performed during the fork call. Instead, it is deferred until a modification occurs. Although the shared page table technique reduces the latency of the default fork operation, the overhead incurred by frequent interruptions is non-negligible, and the shared page table introduces a potential data leakage problem. Therefore, the shared page table approach is unsuitable for solving latency spikes.

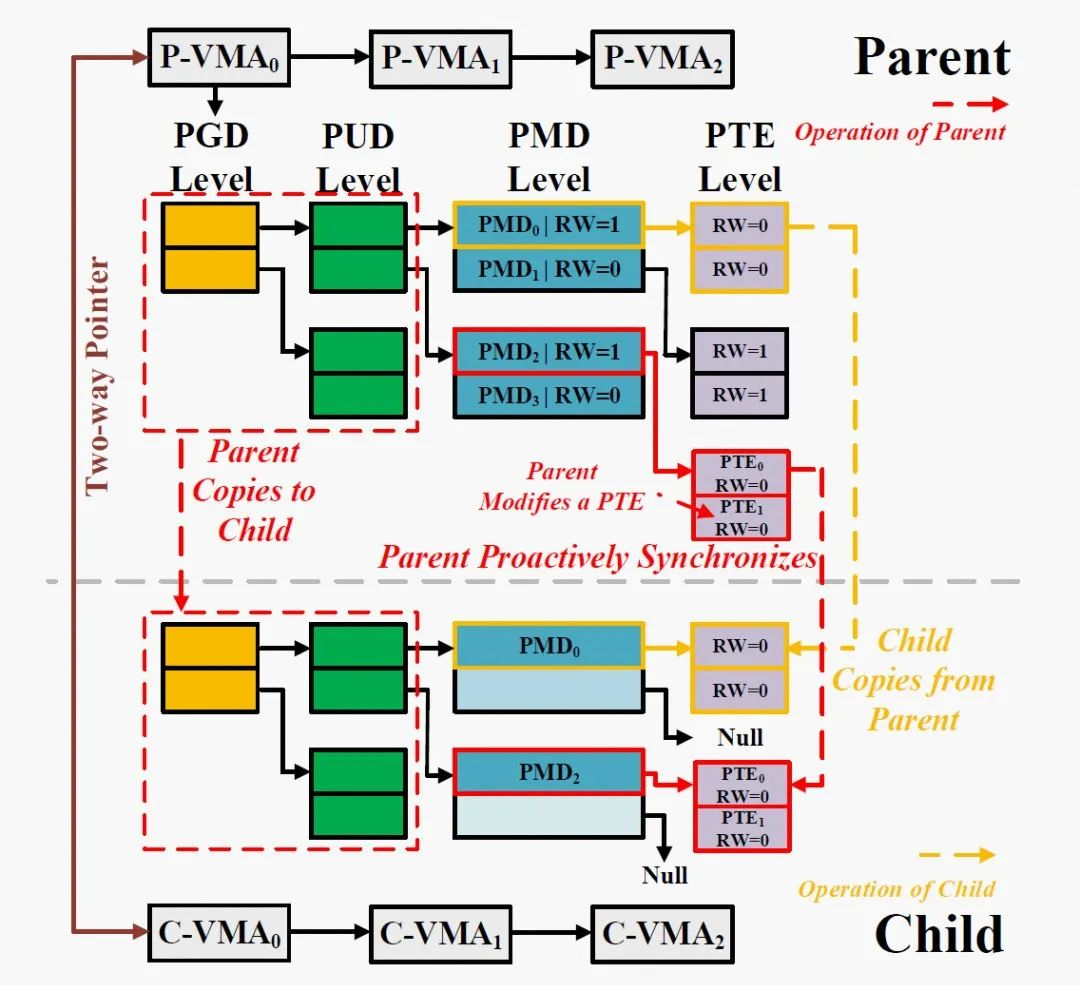

Motivated by these findings, we propose Async-fork to mitigate the latency spikes for snapshot queries by optimizing the fork operation. Figure 1(c) demonstrates the general idea. As copying the page table dominates the cost of the fork operation, Async-fork offloads this workload from the parent process to the child process to reduce the time that the parent process spends in kernel mode. This design also ensures that the parent and child processes each have an exclusive page table, which avoids the data leakage vulnerability caused by the shared page table.

However, this design is far from trivial to achieve, since the asynchronous operations of the two processes on the page table can result in an inconsistent snapshot, i.e., the parent process may modify the page table while the copy operation of the child process is in progress. To address the problem, we design the proactive synchronization technique. This technique enables the parent process to detect all modifications to the page table (including those triggered by either users or the OS). If the parent process detects that some page table entries will be modified and these entries have not been copied, it proactively copies them to the child process. Otherwise, these entries must have already been copied to the child process, and the parent process modifies them directly. In this way, the proactive synchronization technique keeps the snapshot consistent and reduces the number of interruptions to the parent process compared with SPT-based fork. Additionally, we parallelize the copy operation of the child process to further accelerate Async-fork.

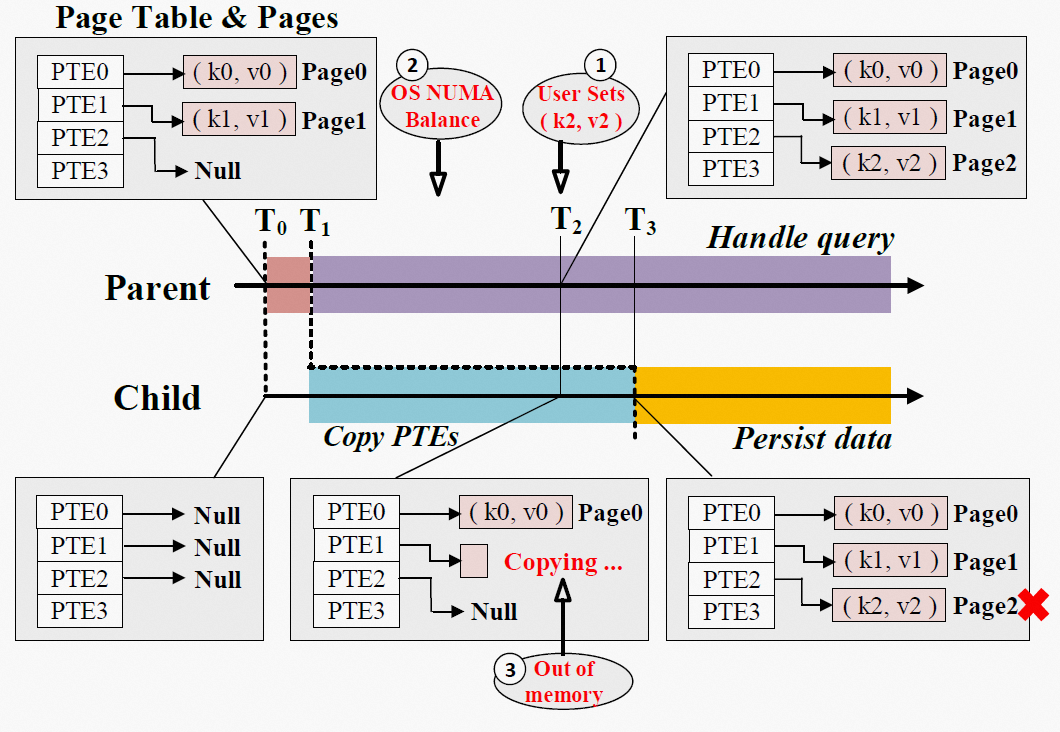

However, besides user operations, many inherent memory management operations in the operating system also cause PTE modifications. For instance, the OS periodically migrates pages among NUMA nodes, which causes the involved PTEs to be marked as inaccessible (② in Figure 2). We describe the method to detect the PTE modifications in Section 4.3.

Secondly, errors may occur during Async-fork. For instance, the child process may fail to copy an entry because it runs out of memory (③ in Figure 2). In this case, error handling is necessary, as we should restore the process to the state before it called Async-fork.

Figure 2: The Challenges in Async-Fork

We first briefly review two operating system concepts that are closely related to this work: virtual memory and fork. As our technique is implemented and deployed in Linux, we introduce these concepts in the context of Linux. Then, we discuss the use cases of fork in databases.

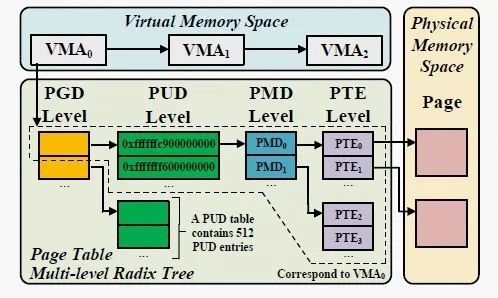

As an effective approach to managing hardware memory resources, virtual memory is widely used in modern operating systems. A process has its own virtual memory space, which is organized into a set of virtual memory areas (VMAs). Each VMA describes a contiguous area in the virtual memory space. The page table is the data structure used to map the virtual memory space to the physical memory. It consists of a collection of page table entries (PTEs), each of which maintains the virtual-to-physical address translation information and access permissions.

To reduce the memory cost, the page table is stored as a multi-level radix tree in which PTEs are located in leaf nodes (i.e., the PTE level in Figure 3). The part in the area marked with the dashed line corresponds to VMA0. The tree has at most five levels. From top to bottom, they are the page global directory (PGD) level, the P4D level, the page upper directory (PUD) level, the page middle directory (PMD) level and the PTE level. As P4D is generally disabled, we focus on the other four levels in this paper. Except for the PTE level, an entry stores the physical address of a page, and this page is used as the next-level node (table). With the page size set to 4KB, a table in each level contains 512 entries. Given a VMA, “VMA’s PTEs” refers to the PTEs corresponding to the VMA and “VMA’s PMDs” is the set of PMD entries that are parents of these PTEs in the tree.
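To make the four-level layout concrete, the sketch below splits a virtual address into one index per level plus an in-page offset. It assumes the common x86-64 configuration with 4KB pages and P4D disabled, so each level consumes 9 bits (512 entries) and the offset consumes 12 bits; the function name `split_virtual_address` is hypothetical.

```python
LEVELS = ("PGD", "PUD", "PMD", "PTE")  # four levels, P4D disabled
BITS_PER_LEVEL = 9                     # 2**9 = 512 entries per table
PAGE_SHIFT = 12                        # 2**12 = 4KB page


def split_virtual_address(vaddr):
    """Return the table index used at each level and the in-page offset."""
    mask = (1 << BITS_PER_LEVEL) - 1
    indexes = {}
    for depth, name in enumerate(LEVELS):
        shift = PAGE_SHIFT + BITS_PER_LEVEL * (len(LEVELS) - 1 - depth)
        indexes[name] = (vaddr >> shift) & mask
    indexes["offset"] = vaddr & ((1 << PAGE_SHIFT) - 1)
    return indexes


if __name__ == "__main__":
    print(split_virtual_address(0x7F12_3456_7ABC))
```

With this layout, one PTE table maps 512 × 4KB = 2MB of virtual memory, so one PMD entry covers a 2MB range.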

Figure 3: The Organization of the Page Table

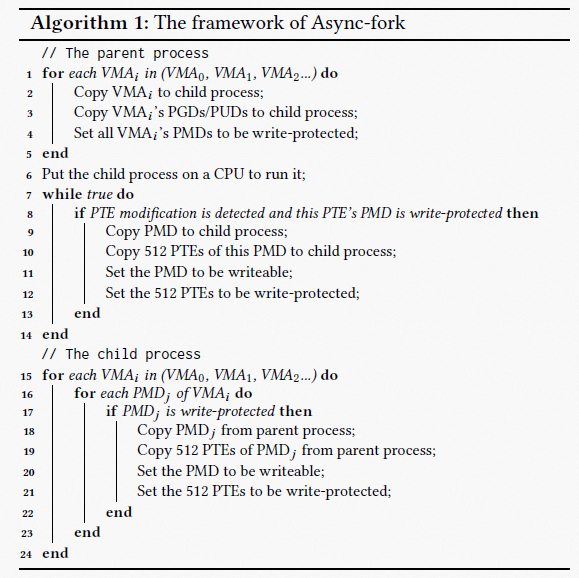

Algorithm 1 describes how the parent process and the child process work in Async-fork.

In the default fork, the parent process traverses all its VMAs and copies the corresponding parts of the page table to the child process. The page table is copied from top to bottom. In Async-fork, the parent process roughly follows the same procedure, but only copies PGD, P4D (if it exists) and PUD entries to the child process (Lines 1 to 3 in Algorithm 1). After that, the child process starts to run, and the parent process returns to user mode to handle queries (Lines 7 to 14). The child process then traverses the VMAs and copies the PMD entries and PTEs from the parent process (e.g., PMD0 and its PTEs).

When the child process is responsible for copying PMD entries and PTEs, it is possible that the PTEs are modified by the parent process before they are actually copied. Note that only the parent process is aware of the modifications.

In general, there are two ways to copy the to-be-modified PTEs and keep the snapshot consistent:

1) the parent process proactively copies the PTEs to the child process;

2) the parent process notifies the child process to copy the PTEs and waits until the copying is finished.

As both ways result in the same interruption in the parent process, we choose the former.

More specifically, when a PTE is modified during the snapshot, the parent process proactively copies to the child process not only this PTE but also all the other PTEs in the same PTE table (512 PTEs in total), as well as the parent PMD entry. For instance, when PTE1 in Figure 4 is modified, the parent process proactively copies PMD2, PTE0 and PTE1 to the child process.

Figure 4: An Example of Copying Page Table in Async-Fork. RW=1 Represents Writable, and RW=0 Represents Write-Protected.

Eliminating Unnecessary Synchronizations - Always letting the parent process copy the modified PTEs is unnecessary, as the to-be-modified PTEs may have already been copied by the child process. To avoid unnecessary synchronizations, we identify whether the PMD entries and PTEs have been copied by the child process. If a PMD entry and its 512 PTEs have not been copied to the child process, the PMD entry is set as write-protected. Note that this does not break the CoW strategy of fork, since a write to the corresponding page still triggers a page fault on x86. Once the PMD/PTEs have been copied to the child process (e.g., PMD0), the PMD entry is changed to be writable (the PTEs are changed to be write-protected to maintain the CoW strategy). Since both the parent and child processes lock the page of the PTE table with trylock_page() when they copy PMD entries and PTEs, they will not copy PTEs pointed to by the same PMD entry at the same time.
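The interplay between the child's copy, the PMD R/W flag, and proactive synchronization can be simulated in a few lines. This is a single-threaded user-space model under simplifying assumptions, not kernel code: the class names `PMDEntry` and `AsyncForkSim` are hypothetical, a short list stands in for a 512-entry PTE table, the `trylock_page()` locking is omitted, and CoW of the data pages themselves is not modeled.

```python
class PMDEntry:
    """One PMD entry together with the PTE table it points to."""

    def __init__(self, ptes):
        self.ptes = list(ptes)  # stands in for the 512 PTEs of one table
        self.writable = True    # R/W flag of the PMD entry


class AsyncForkSim:
    def __init__(self, parent_pmds):
        self.parent = parent_pmds
        self.child = [None] * len(parent_pmds)
        for pmd in self.parent:
            pmd.writable = False  # at fork time: mark as "not copied yet"

    def _copy(self, i):
        """Copy PMD i and its PTEs to the child unless already copied."""
        pmd = self.parent[i]
        if pmd.writable:          # writable flag means the copy already happened
            return False
        self.child[i] = list(pmd.ptes)
        pmd.writable = True
        return True

    def child_copy_next(self):
        """Child side: copy the next uncopied PMD, return its index or None."""
        for i in range(len(self.parent)):
            if self._copy(i):
                return i
        return None

    def parent_modify(self, i, j, value):
        """Parent side: synchronize proactively if needed, then modify PTE j."""
        synced = self._copy(i)
        self.parent[i].ptes[j] = value
        return synced


if __name__ == "__main__":
    sim = AsyncForkSim([PMDEntry([10, 11]), PMDEntry([20, 21])])
    sim.child_copy_next()                  # child copies PMD 0
    print(sim.parent_modify(0, 0, 99))     # already copied: no synchronization
    print(sim.parent_modify(1, 1, 77))     # not copied yet: parent copies first
    print(sim.child)                       # the child still sees the fork-time state
```

In this model the parent is interrupted only when it touches a PMD entry that the child has not reached yet, which mirrors the reasoning in the paragraph above.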

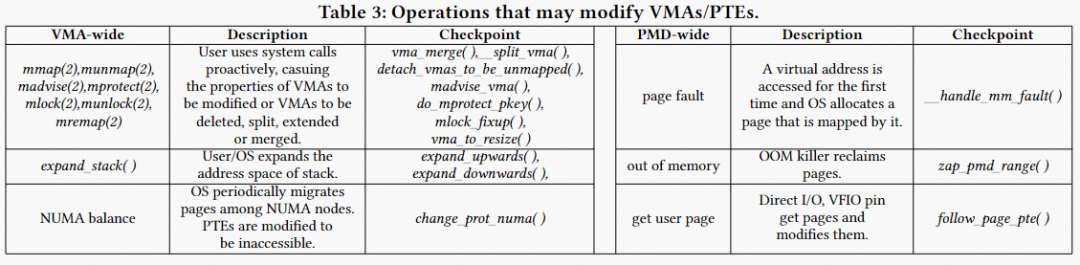

The operations that modify PTEs in the OS can be divided into two categories:

1) VMA-wide modification. Some operations act on specific VMAs, including creating, merging, and deleting VMAs. The modification of a VMA may also cause the VMA’s PTEs to be modified. For example, the user sends queries to delete many KV pairs. The IMKVS (parent process) then reclaims the corresponding virtual memory space with munmap.

2) PMD-wide modification. Other operations modify PTEs directly. For example, a page of the parent process can be reclaimed by the out-of-memory (OOM) killer. In this case, one PMD entry is involved. Table 1 summarizes the operations and lists the checkpoints of the operations.

Table 1: Operations That May Modify VMAs/PTEs

We implement the detection by hooking the checkpoints. Once a checkpoint is reached, the parent process checks whether the involved PMD entries and PTEs have been copied (by checking the R/W flag of the PMD entry). For a VMA-wide modification, all the PMD entries of this VMA are checked, while only one PMD entry is checked for a PMD-wide modification. The uncopied PMD entries and PTEs will be copied to the child process before modifying them. If a VMA is large, the parent process may take a relatively long time to check all PMD/PTE entries by looping over each of them. We therefore introduce a two-way pointer, which helps the parent process quickly determine whether all entries of a VMA have been copied to the child, to reduce the cost. Each VMA has a two-way pointer, which is initialized by the parent process during the invocation of the Async-fork function. The pointer in the VMA of the parent process (resp. the child process) points to the corresponding VMA of the child process (resp. the parent process).

In this way, the two-way pointer maintains a connection between the VMAs of the parent and the child. The connection will be closed after all PMDs/PTEs of the VMA are copied to the child. Specifically, if no VMA-wide modification happens during the copy of PMDs/PTEs of the VMA, then the child closes the connection by setting the pointers in the VMAs of both the parent and child to null after the copy operation. Otherwise, the parent will synchronize the modification (i.e., copying the uncopied PMDs/PTEs to the child), and close the connection by setting the pointers to null after the copy operation. As both parent and child processes can access the two-way pointers, the pointers are protected by locks to keep the state consistent. When a VMA-wide modification occurs, the parent process can quickly determine whether all PMDs/PTEs of a VMA have been copied to the child by checking the pointer’s value, instead of looping over all these PMDs. Besides, the pointer is also used in handling errors.
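The two-way pointer can be pictured as a pair of references guarded by a lock. The sketch below is a user-space illustration only: the class `VMA`, the helper names, and the use of one lock shared by both ends are assumptions, not the kernel data structures.

```python
import threading


class VMA:
    def __init__(self, name):
        self.name = name
        self.peer = None  # two-way pointer: the matching VMA in the other process
        self.lock = None  # lock shared by both ends of the connection


def connect(parent_vma, child_vma):
    """Set up the connection, as the parent does when Async-fork is invoked."""
    shared = threading.Lock()
    parent_vma.peer, child_vma.peer = child_vma, parent_vma
    parent_vma.lock = child_vma.lock = shared


def close_connection(vma):
    """Called by whichever side finishes copying the VMA's PMDs/PTEs."""
    with vma.lock:
        peer = vma.peer
        if peer is not None:
            peer.peer = None
            vma.peer = None


def fully_copied(parent_vma):
    """Constant-time check that replaces looping over all PMDs of the VMA."""
    with parent_vma.lock:
        return parent_vma.peer is None


if __name__ == "__main__":
    parent, child = VMA("parent-vma0"), VMA("child-vma0")
    connect(parent, child)
    print(fully_copied(parent))  # connection still open
    close_connection(child)      # the child finished copying this VMA
    print(fully_copied(parent))  # connection closed
```

On a VMA-wide modification, the parent only needs the `fully_copied` check to decide whether any synchronization is required for that VMA.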

Since copying the page table involves memory allocation, errors may occur during both the default fork and Async-fork. For instance, a process may fail to initialize a new PTE table because it is out of memory. In the default fork, such an error can only happen in the parent process, and there is a standard way to handle it. In Async-fork, however, the copying of the page table is offloaded to the child process, so the error may happen in the child process and a method is required to handle it. Specifically, we should restore the parent process to the state before it called Async-fork, to ensure that the parent process will not crash in the future.

As Async-fork may modify the R/W flags of the PMD entries of the parent process, we roll back these entries to be writable when errors occur in Async-fork. Errors may occur:

1) when the parent process copies PGD/PUD entries,

2) when the child process copies PMD/PTEs, and

3) during a proactive synchronization.

In the first case, the parent process rolls back all the write-protected PMD entries. In the second case, the child process rolls back all the remaining uncopied PMD entries. After that, we send a signal (SIGKILL) to the child process. The child process is killed when it returns to user mode and receives the signal. In the third case, the parent process only rolls back the PMD entries of the VMA containing the PMD entry that is being copied. The purpose is to avoid contending for the PMD entry lock with the child process. An error code is then stored into the two-way pointer of the VMA. Before (and after) copying the PMDs/PTEs of a VMA, the child process checks the pointer to see whether there are errors. If so, it stops copying PMD/PTE entries immediately and performs the rollback operations described in the second case.

Figure 5: The Implementation of Async-Fork

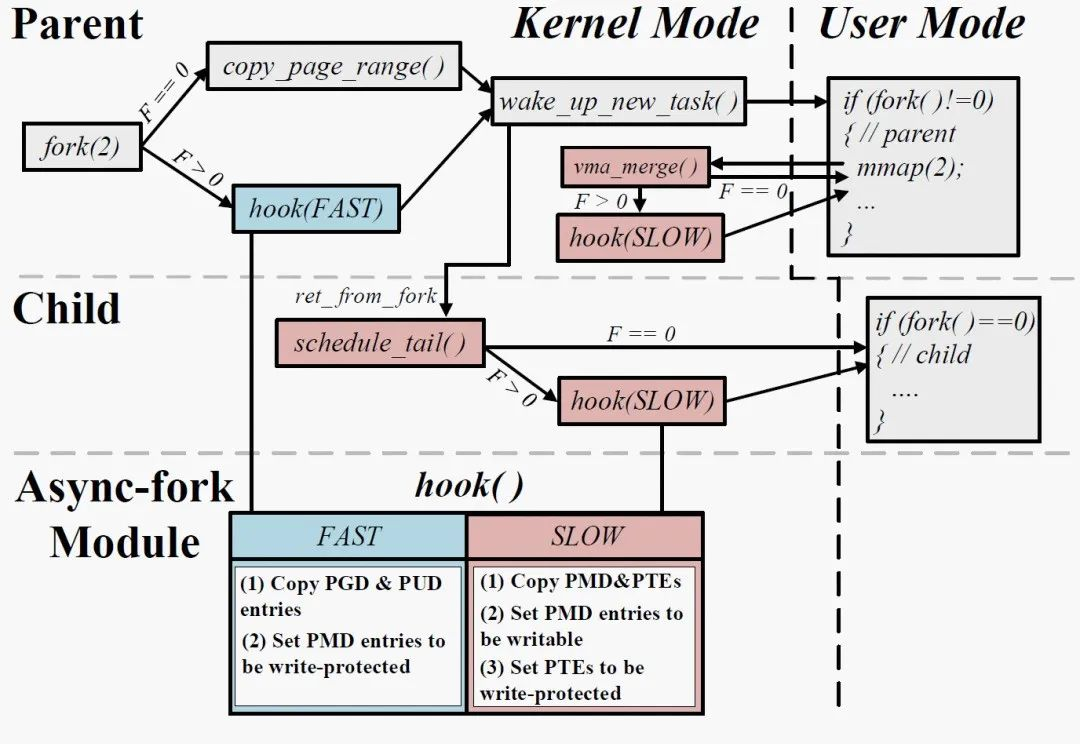

Figure 5 depicts the implementation of Async-fork in Linux. We insert hook functions into some functions in the fork call path and memory subsystem, and encapsulate the bodies of the hook functions in a kernel module. This modular implementation allows maintainers to insert and unload the module at run time to modify Async-fork. The hook function is an improved version of the copy_page_range function on the default fork call path. It controls the page table copy through an argument (Fast or Slow).

Flexibility: For an IMKVS workload that has a small memory footprint, the page table copy is already short, and Async-fork brings little benefit. For these workloads, we provide an interface in the memory cgroup to control whether Async-fork is enabled. Specifically, when users add a process to a memory cgroup, they can pass a parameter to enable or disable Async-fork at run time (i.e., the parameter F in Figure 5). As shown in the figure, if the parameter value is 0, the process uses the default fork. Otherwise, Async-fork is enabled. As such, users can choose which fork operation the process uses as necessary, and can use Async-fork without any modification to the source code of applications. The process uses the default fork if no parameter is passed in.

Memory overhead: The only memory overhead of Async-fork comes from the added pointer (8B) in each VMA of a process. In this case, the memory overhead in a process is the number of VMAs times 8B. This overhead is generally negligible.
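As a quick illustration of this estimate, the sketch below counts mappings in text formatted like `/proc/<pid>/maps` (roughly one line per VMA on Linux) and multiplies by the 8-byte pointer size. The helper names and the sample mapping lines are hypothetical.

```python
POINTER_SIZE = 8  # bytes added to each VMA, as stated in the article


def count_vmas(maps_text):
    """Count mappings in text formatted like /proc/<pid>/maps (one per line)."""
    return sum(1 for line in maps_text.splitlines() if line.strip())


def async_fork_overhead_bytes(num_vmas):
    """Extra memory used by the two-way pointers of one process."""
    return num_vmas * POINTER_SIZE


if __name__ == "__main__":
    # Made-up sample lines in the /proc/<pid>/maps format.
    sample = (
        "00400000-00452000 r-xp 00000000 08:02 173521 /usr/bin/example\n"
        "00651000-00652000 rw-p 00051000 08:02 173521 /usr/bin/example\n"
        "7ffd3c1f0000-7ffd3c211000 rw-p 00000000 00:00 0 [stack]\n"
    )
    vmas = count_vmas(sample)
    print(vmas, "VMAs ->", async_fork_overhead_bytes(vmas), "bytes")
```

Even a process with tens of thousands of VMAs would add only a few hundred kilobytes under this estimate.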

Support for ARM64: Async-fork can also be implemented on ARM64, and more generally on other architectures that support hierarchical attributes in the page table.

Performance Optimization: Conducting the fork operation frequently causes the parent process to enter kernel mode frequently. A straightforward way to reduce the cost of the proactive PTE synchronization is to let the child process first copy the PTEs that are likely to be modified, before the other PTEs. In addition, the child process can use multiple kernel threads to copy PMDs/PTEs. As VMAs are independent, the kernel threads can perform the copy fully in parallel and obtain near-linear speedup. Because multiple kernel threads consume CPU cycles, these threads periodically call cond_resched() to check whether they should be preempted and give up CPU resources, in order to reduce the interference with other normal processes.
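The per-VMA partitioning of the copy can be illustrated with a thread pool. This is a user-space analogy under stated assumptions: the function names are hypothetical, plain lists stand in for the PMDs/PTEs of a VMA, and Python threads only demonstrate how the work is divided, not the near-linear speedup that kernel threads obtain.

```python
from concurrent.futures import ThreadPoolExecutor


def copy_vma(vma_entries):
    """Copy the entries of one VMA; each VMA is independent of the others."""
    return list(vma_entries)


def parallel_copy(vmas, workers=8):
    """Copy every VMA with a pool of workers; results keep the VMA order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(copy_vma, vmas))


if __name__ == "__main__":
    vmas = [[1, 2, 3], [4, 5], [6]]
    print(parallel_copy(vmas, workers=2))
```

Because no worker touches another worker's VMA, the copies need no coordination among themselves, which is the property the paragraph above relies on.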

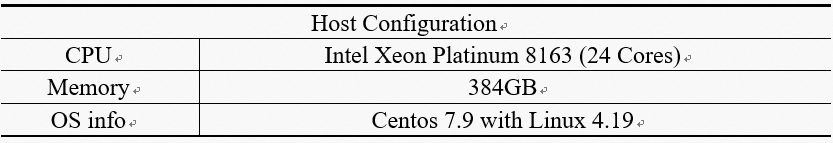

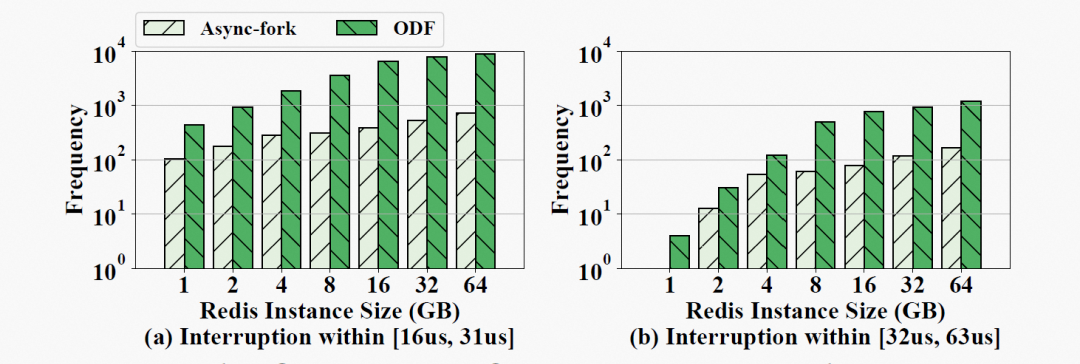

We evaluate Async-fork on a machine with two Intel Xeon Platinum 8163 processors, each with 24 physical cores (48 logical cores). We use Redis (version 5.0.10) and KeyDB (version 6.2.0), compiled with gcc 6.5.1, as the representative IMKVS servers, and use the Redis benchmark and the Memtier benchmark as the workload generators.

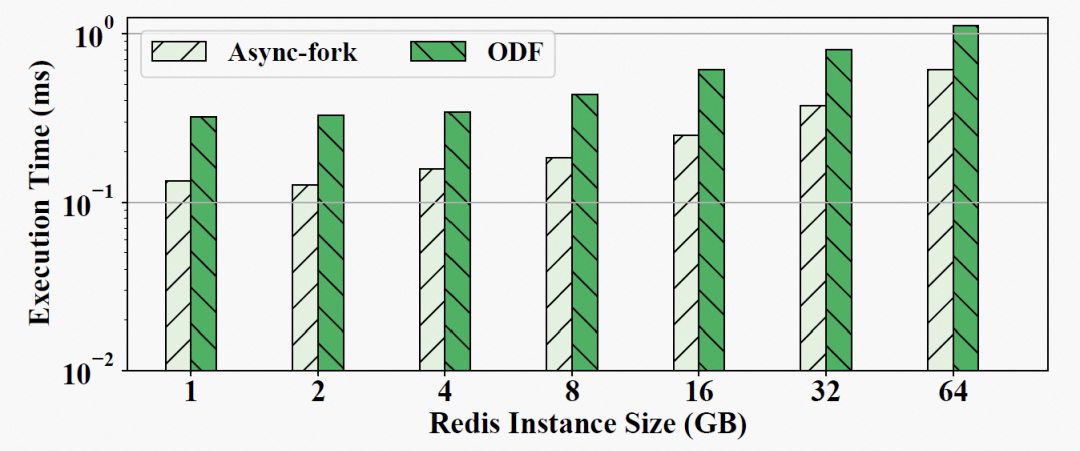

We compare Async-fork with On-Demand-Fork (denoted by ODF in short), the state-of-the-art shared page table-based fork. In ODF, each time a shared PTE is modified by a process, not only one PTE but 512 PTEs located on the same PTE table will be copied at the same time. We do not report the results of the default fork in this section since it results in 10X higher latency compared with both Async-fork and ODF in most cases.

Figure 6: The 99%-ile Latency of Snapshot Queries

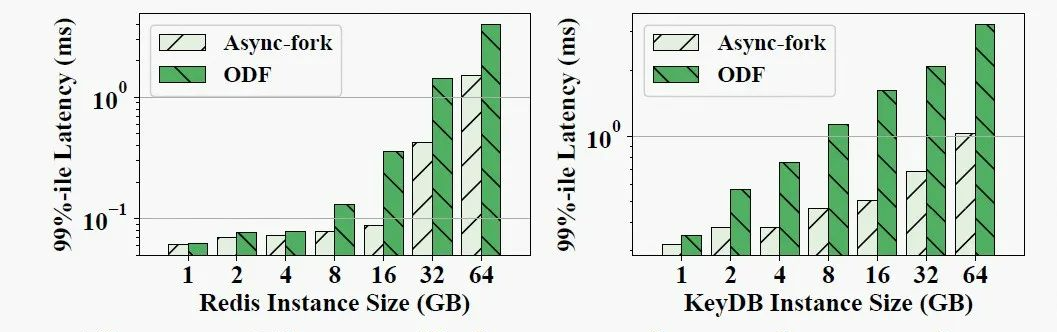

Figure 7: The Maximum Latency of Snapshot Queries

We use the Redis benchmark to generate a write-intensive workload. Figure 6 shows the 99%-ile latencies of snapshot queries in Redis and KeyDB, with ODF and Async-fork. As observed, Async-fork outperforms ODF in all cases, and the performance gap increases as the instance size grows. For instance, on a 64GB IMKVS instance, the 99%-ile latency of the snapshot queries is 3.96ms (Redis) and 3.24ms (KeyDB) with ODF, while it drops to 1.5ms (Redis, 61.9% reduction) and 1.03ms (KeyDB, 68.3% reduction) with Async-fork. As shown in Figure 7, Async-fork also greatly reduces the maximum latency compared with ODF, even when the instance size is small. For a 1GB IMKVS instance, the maximum latencies of the snapshot queries are 13.93ms (Redis) and 10.24ms (KeyDB) with ODF, while they decrease to 5.43ms (Redis, 60.97% reduction) and 5.64ms (KeyDB, 44.95% reduction) with Async-fork.

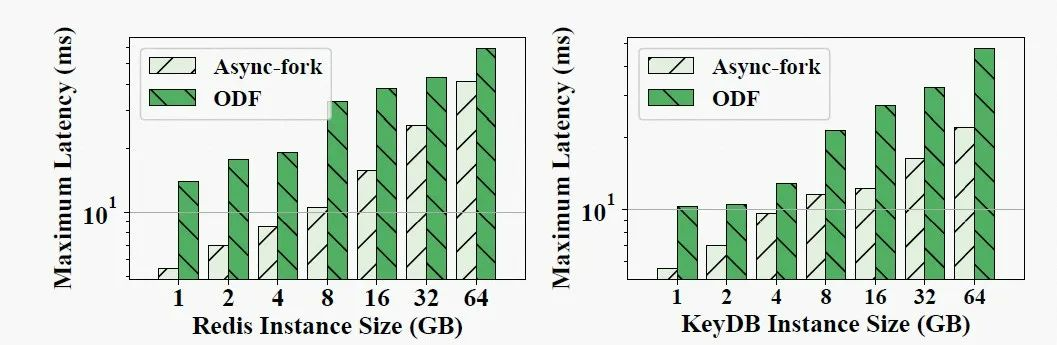

Figure 8: The Frequency of Interruptions in the Parent Process during the Snapshot Process

The interruption of Async-fork is caused by the proactive synchronization, whereas the interruption of ODF is caused by the CoW of the page table. We measure the interruptions of the parent process during the snapshot to understand why Async-fork outperforms ODF. The bcc output is a histogram in which each bucket is a range of time durations and the frequency is the number of invocations whose execution time falls into that bucket. The categories [16us, 31us] and [32us, 63us] are two default buckets of bcc. In our experiments, all invocations fall into these two buckets.
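The bucket boundaries above follow a power-of-two scheme. The sketch below is a simplified model of such a histogram, not bcc's implementation: the function names are hypothetical and the sample durations are made up for illustration.

```python
from collections import Counter


def log2_bucket(value_us):
    """Return the inclusive (low, high) power-of-two bucket for an integer."""
    if value_us < 2:
        return (0, 1)
    low = 1 << (value_us.bit_length() - 1)  # largest power of two <= value
    return (low, 2 * low - 1)


def histogram(durations_us):
    """Count how many durations fall into each power-of-two bucket."""
    return Counter(log2_bucket(d) for d in durations_us)


if __name__ == "__main__":
    samples = [16, 20, 31, 32, 63]  # made-up durations in microseconds
    for (low, high), count in sorted(histogram(samples).items()):
        print(f"[{low}us, {high}us]: {count}")
```

With this scheme, any interruption lasting between 16us and 63us lands in one of the two buckets reported in the article.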

Figure 8 shows the frequency of the interruptions within [16us, 31us] and [32us, 63us]. We can see that Async-fork significantly reduces the frequency of interruptions. For example, Async-fork reduces the frequency of interruptions from 7348 to 446 on the 16GB instance. Async-fork greatly reduces the interruptions because they can happen only while the child process is copying PMDs/PTEs. In ODF, however, interruptions can happen until the child process has persisted all data, and the data persistence operation requires tens of seconds (e.g., persisting 8GB of in-memory data takes about 40s). Under the same workload, the parent process is therefore more vulnerable to interruption when using ODF.

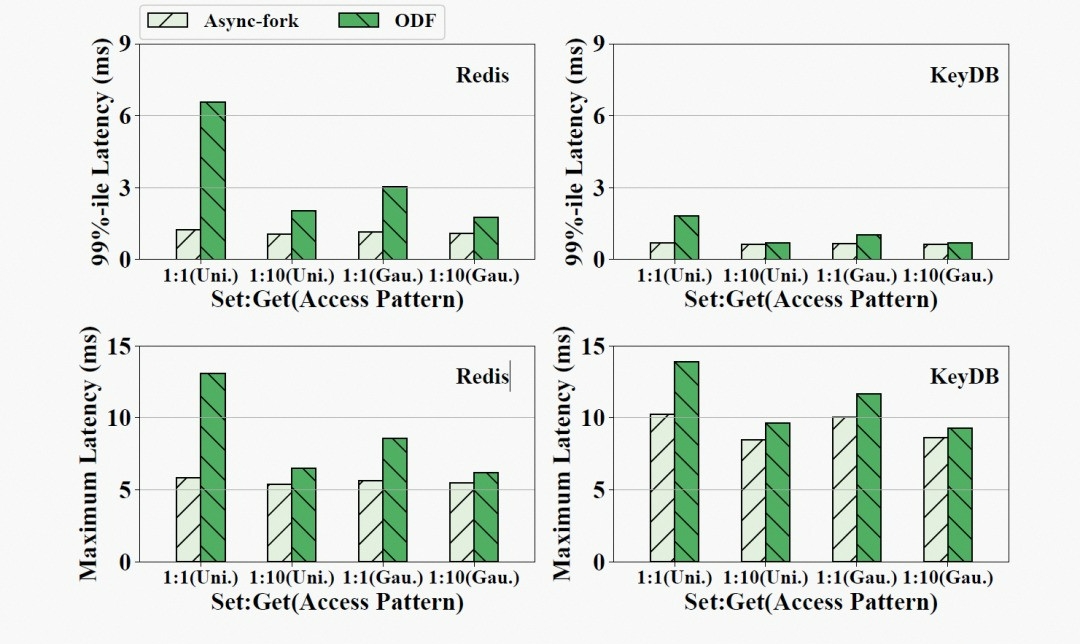

Figure 9: The 99%-ile Latency and Maximum Latency of Snapshot Queries under Different Workloads in an 8GB IMKVS

Figure 9 shows the results using four workloads with different read-write patterns generated with Memtier. In the figure, “1:1 (Uni.)” represents the workload with a 1:1 Set:Get ratio and the uniform random access pattern, while “1:10 (Gau.)” is the workload with a 1:10 Set:Get ratio and the Gaussian distribution access pattern. As observed from Figure 9, Async-fork still outperforms ODF. The benefit is smaller for the workload with more GET queries.

In general, Async-fork works better for write-intensive workloads. The larger the modified memory is, the better Async-fork performs.

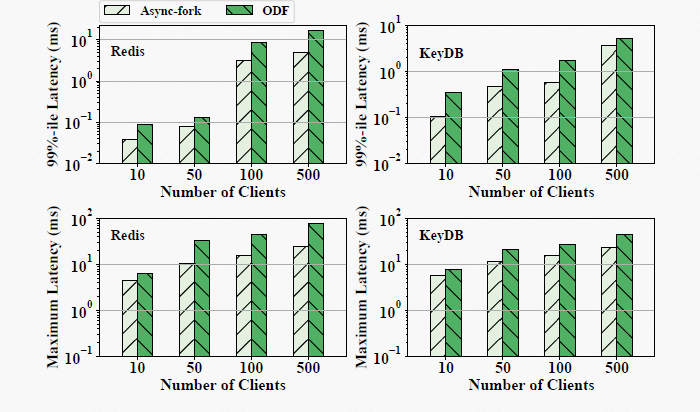

Figure 10: The 99%-ile Latency and the Maximum Latency of Snapshot Queries under Different Numbers of Clients in an 8GB IMKVS

Figure 10 shows the results of 99%-ile and maximum latency with 10, 50, 100 and 500 clients. As observed, Async-fork outperforms ODF, while the performance gap increases as the number of clients increases. This is because more requests arrive at the IMKVS at the same time when the number of clients increases. As a result, more PTEs may be modified at the same time, and the duration of one interruption to the parent process may become longer.

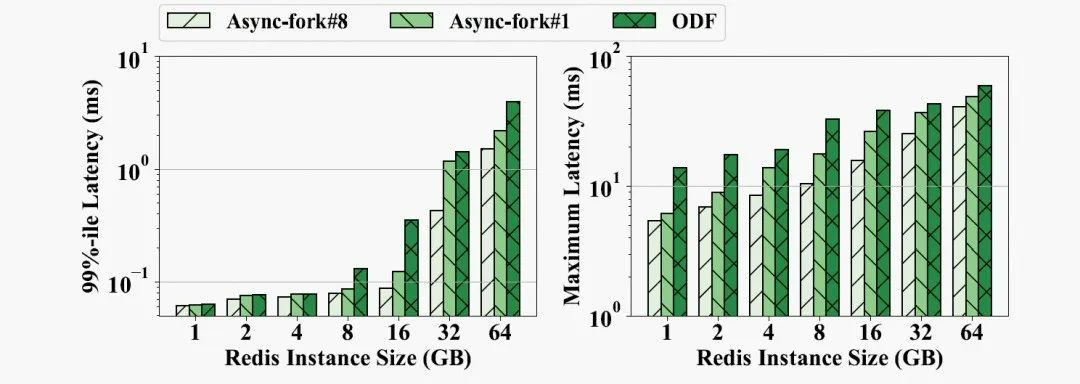

Figure 11: The 99%-ile and Maximum Latency of Snapshot Queries When Async-Fork Uses 1 or 8 Threads

Figure 12: (a) The Time That the Child Process Takes to Copy PMDs/PTEs in Async-Fork. (b) The 99%-ile and Maximum Latency in an 8GB Redis Instance

In Async-fork, multiple kernel threads may be used to copy PMD/PTEs in parallel in the child process. Figure 11 shows the 99%-ile and maximum latency of snapshot queries under different Redis instance sizes. Async-fork#𝑖 represents the results of using 𝑖 threads in total to copy the PMDs/PTEs. Observed from Figure 11, Async-fork#1 (the child process itself) still brings shorter latency than ODF. The maximum latency of the snapshot queries is decreased by 34.3% on average, compared with ODF.

We can also see that using more threads (Async-fork#8) further decreases the maximum latency. This is because the sooner the child process finishes copying PMDs/PTEs, the lower the probability that the parent process is interrupted to proactively synchronize PTEs. Figure 12(a) shows the time that the child process takes to copy PMDs/PTEs with different numbers of kernel threads, while Figure 12(b) shows the corresponding 99%-ile latency and maximum latency in an 8GB Redis instance. As we can see, launching more kernel threads effectively reduces the time of copying PMDs/PTEs in the child process. The shorter the time is, the lower the latency becomes.

Figure 13: The Execution Time of Async-Fork and ODF

The duration of the fork call in the parent process significantly affects the latency of snapshot queries. Figure 13 shows the time the parent process takes to return from Async-fork and ODF calls at different Redis instance sizes. In a 64GB instance, the parent process takes 0.61ms to return from Async-fork and 1.1ms to return from ODF (while the return from the default fork can take up to 600ms). It can be observed that Async-fork executes slightly faster than ODF. One possible reason is that ODF needs to introduce additional counters to implement CoW page tables, resulting in additional time costs for initialization.

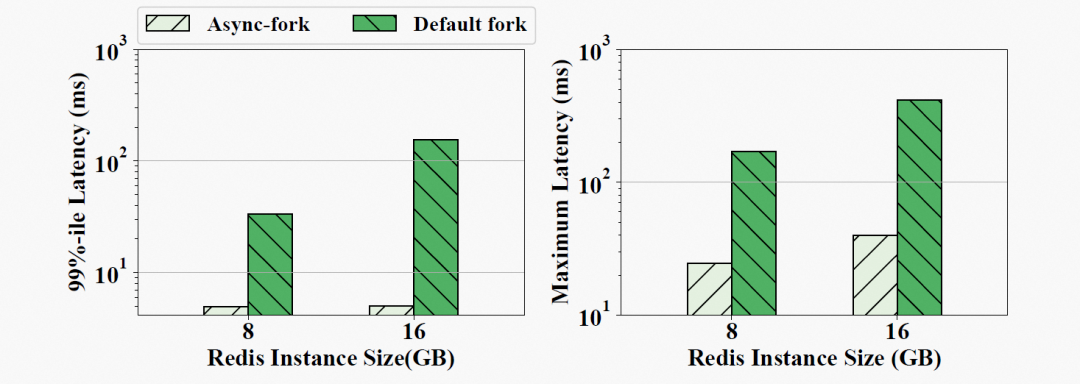

Figure 14: The 99%-ile Latency and Maximum Latency of Snapshot Queries with Async-Fork in Production

Async-fork is deployed in our Redis production environment. We rented a Redis server in the public cloud to evaluate Async-fork in production. The leased Redis server has 16GB of memory and 80GB of SSD. We also rented another ECS with 4 vCPUs and 16GB to run the Redis benchmark as a client.

Figure 14 shows the p99 latency and maximum latency of snapshot queries when using the default fork and Async-fork. For an 8GB instance, the p99 latency is reduced from 33.29ms to 4.92ms, and the maximum latency is reduced from 169.57ms to 24.63ms. For a 16GB instance, the former is reduced from 155.69ms to 5.02ms, while the latter is reduced from 415.19ms to 40.04ms.

In this article, we study the latency spikes incurred by the fork-based snapshot mechanism in IMKVSes and address the problem from the operating system level. In particular, we conduct an in-depth study to reveal the impact of the fork operation on the latency spikes. According to the study, we propose Async-fork. It optimizes the fork operation by offloading the workload of copying the page table from the parent process to the child process. To guarantee data consistency between the parent and the child, we design the proactive synchronization strategy. Async-fork is implemented in the Linux kernel and deployed in production environments. Extensive experiment results show that Async-fork can significantly reduce the tail latency of queries arriving during the snapshot period.
