Machine learning engineering is complex because it combines the usual problems of software development with the data-driven nature of machine learning. As a result, workflows grow longer, data versions get out of control, experiments are hard to trace, results are hard to reproduce, and iterating on a model is costly. To address these issues, many enterprises have built internal platforms to manage the machine learning lifecycle, such as Google's TensorFlow Extended platform, Facebook's FBLearner Flow platform, and Uber's Michelangelo platform. However, these platforms depend on the internal infrastructure of the enterprises that built them, so they cannot be completely open-sourced.

These platforms use the machine learning workflow as their framework. This framework enables data scientists to flexibly define their own machine learning pipelines and to reuse existing data processing and model training capabilities, which makes the machine learning lifecycle easier to manage.
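To make the idea of a workflow built from reusable steps more concrete, here is a minimal sketch in plain Python. It does not use the Kubeflow Pipelines SDK or any of the platforms named above. The `Pipeline` class, its `add_step` and `run` methods, and the three step functions are hypothetical names invented for this illustration. It only shows that a pipeline can be described as named steps plus their dependencies, and then executed in dependency order.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, List, Sequence


class Pipeline:
    """Toy workflow (hypothetical): named steps plus the steps each depends on."""

    def __init__(self) -> None:
        self.steps: Dict[str, Callable[..., object]] = {}
        self.deps: Dict[str, List[str]] = {}

    def add_step(self, name: str, func: Callable[..., object],
                 after: Sequence[str] = ()) -> None:
        # Register a step and the names of the steps whose outputs it consumes.
        self.steps[name] = func
        self.deps[name] = list(after)

    def run(self) -> Dict[str, object]:
        # Execute every step after its dependencies, passing their results in.
        results: Dict[str, object] = {}
        for name in TopologicalSorter(self.deps).static_order():
            inputs = [results[dep] for dep in self.deps[name]]
            results[name] = self.steps[name](*inputs)
        return results


# Reusable steps: ordinary functions that any pipeline could share.
def load_data() -> List[float]:
    return [1.0, 2.0, 3.0, 4.0]


def normalize(rows: List[float]) -> List[float]:
    peak = max(rows)
    return [value / peak for value in rows]


def train(rows: List[float]) -> float:
    # Stand-in for model training: the "model" is just the mean of the data.
    return sum(rows) / len(rows)


pipeline = Pipeline()
pipeline.add_step("load", load_data)
pipeline.add_step("normalize", normalize, after=["load"])
pipeline.add_step("train", train, after=["normalize"])

results = pipeline.run()
print(results["train"])
```

Because each step is an ordinary function, the same `normalize` or `train` step could be registered in a different pipeline without modification. That is the kind of reuse the workflow framework is meant to provide. A real platform additionally tracks data versions, experiment metadata, and execution history, none of which this sketch attempts.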

Google has extensive experience in building machine learning workflow platforms. Its TensorFlow Extended platform supports Google's core businesses, such as search, translation, and video playback. More importantly, Google has a deep understanding of engineering efficiency in the machine learning field. Google's Kubeflow team open-sourced Kubeflow Pipelines at the end of 2018. Kubeflow Pipelines follows the same design as Google's internal TensorFlow Extended machine learning platform. The only difference is that Kubeflow Pipelines runs on Kubernetes, while TensorFlow Extended runs on Borg.

The Kubeflow Pipelines platform consists of the following components:

You can use Kubeflow Pipelines to achieve the following goals:

This document has gone over what Kubeflow Pipelines is, covered some of the issues it resolves, and outlined the procedure for using Kustomize on Alibaba Cloud to quickly build Kubeflow Pipelines for machine learning.

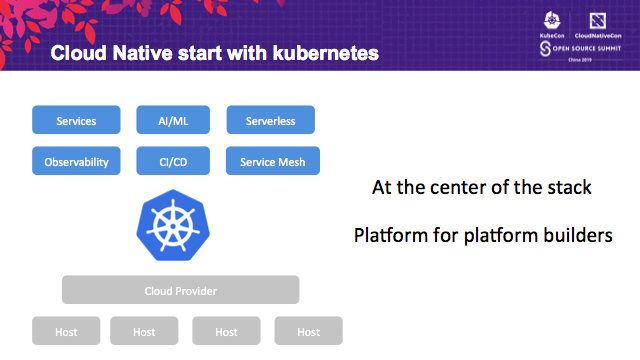


