Knative Serving is a scale-to-zero and request-driven compute runtime environment built on Kubernetes and Istio to support the deployment and serving of serverless applications and functions. Knative Serving aims to provide Kubernetes extensions for deploying and running serverless workloads.

This post describes how to quickly build Knative Serving and implement automatic scaling with Alibaba Cloud Container Service for Kubernetes.

Alibaba Cloud Container Service for Kubernetes version 1.11.5 is now available. You can use the console to quickly and easily create a Kubernetes cluster. To learn more, see Create a Kubernetes cluster.

Knative Serving runs on Istio. Currently, Alibaba Cloud Container Service for Kubernetes allows you to quickly install and configure Istio in just one click. If you're unsure how to do this, see Deploy Istio.

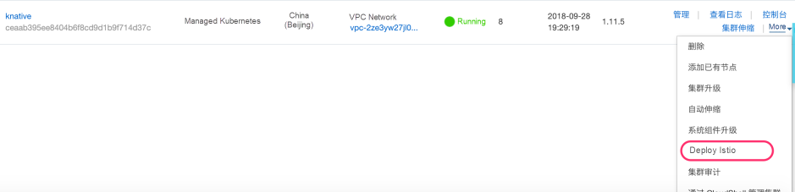

Log on to the Container Service - Kubernetes console. In the left-side navigation pane, choose Clusters > Clusters to go to the Clusters page. Select a cluster, and then choose More > Deploy Istio in the Actions column.

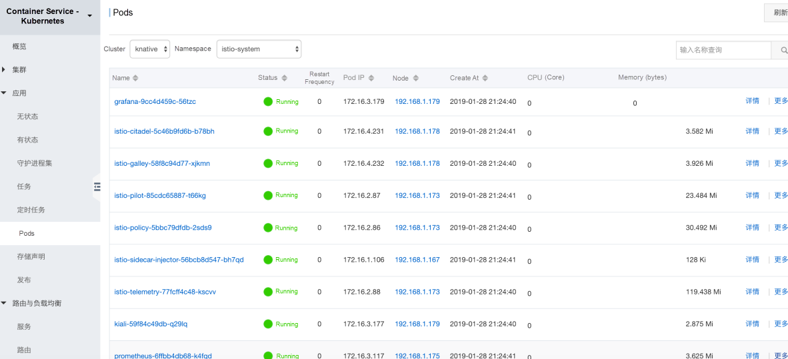

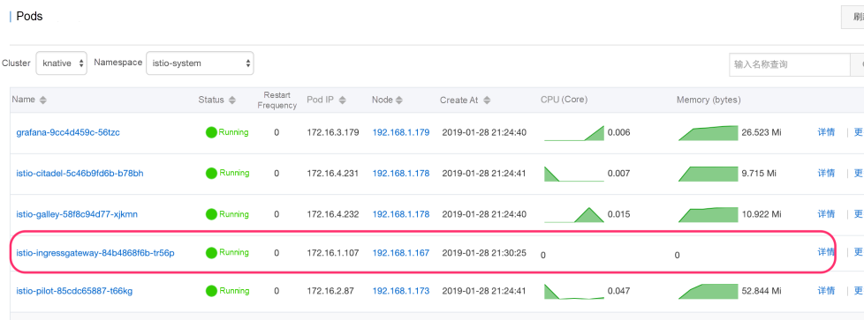

Set the parameters on the Deploy Istio page, and then click Deploy Istio. The Istio environment is usually deployed within a few seconds to a minute. To confirm the deployment, check the pod status in the console, which should look similar to the screen below.

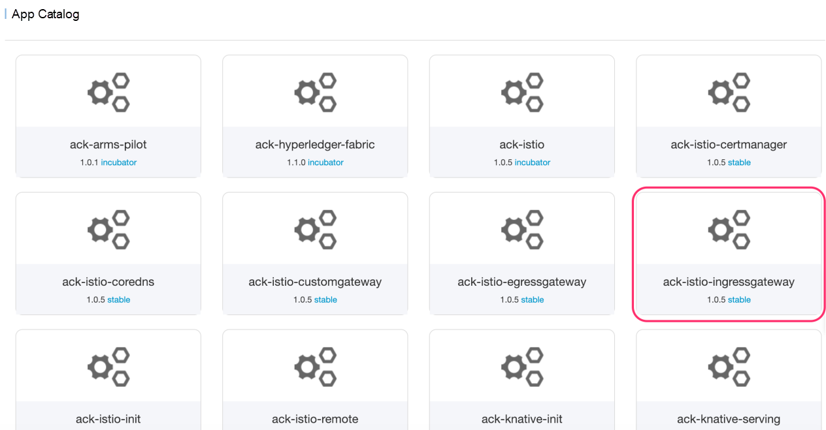
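If you prefer the command line to the console, the same readiness check can be sketched in shell. This is a minimal sketch, not part of the original walkthrough: the helper name `not_ready_pods` is hypothetical, and it assumes `kubectl` is already configured for the cluster and that the Istio pods run in the `istio-system` namespace used later in this post.

```shell
# Read `kubectl get pods` output on stdin and print the name of every pod
# that is not fully ready. Completed pods (finished jobs) are ignored.
# Hypothetical helper, shown for illustration only.
not_ready_pods() {
  awk 'NR > 1 && NF >= 3 && $3 != "Completed" {
    split($2, ready, "/")
    if ($3 != "Running" || ready[1] != ready[2]) print $1
  }'
}

# Usage against a live cluster (requires kubectl access):
#   kubectl get pods --namespace istio-system | not_ready_pods
```

Empty output means every listed pod is Running with all of its containers ready.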

Next, log on to the Container Service - Kubernetes console. In the left-side navigation pane, choose Marketplace > App Catalog. On the page that appears, find and click ack-istio-ingressgateway, which is outlined in red below.

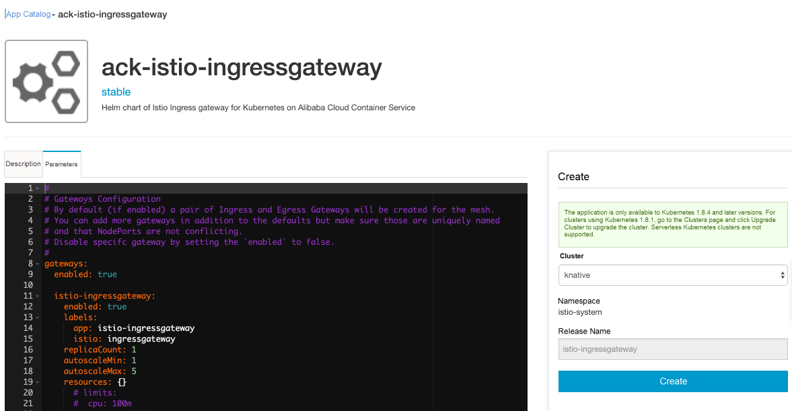

Click the Parameters tab. The default configuration of Istio Ingress Gateway is provided. Modify these parameters based on your specific requirements, and then click Create.

View the pod list in the istio-system namespace to check the running status. It should look something like what you see below.

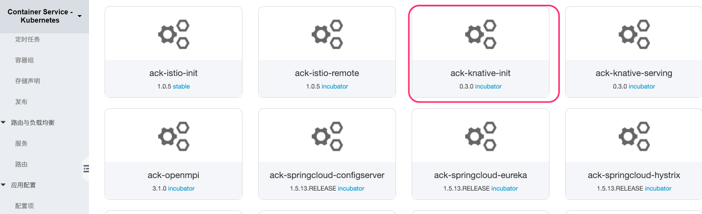

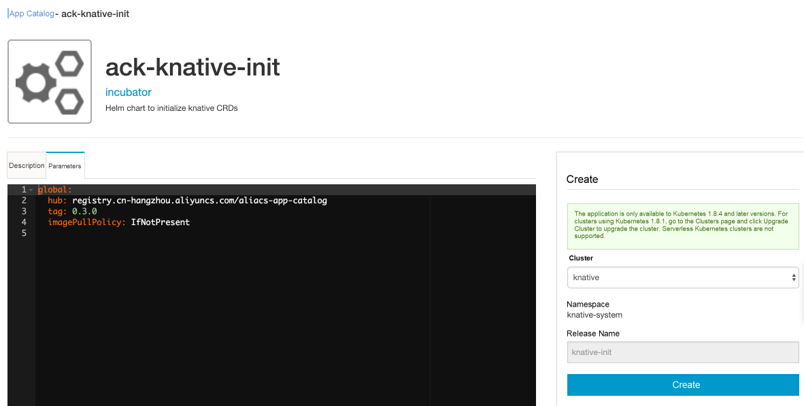

Log on to the Container Service - Kubernetes console. In the left-side navigation pane, choose Marketplace and then choose App Catalog. On the page that appears, find and click ack-knative-init.

Click Create to install the content required for Knative initialization, including Custom Resource Definitions (CRDs).

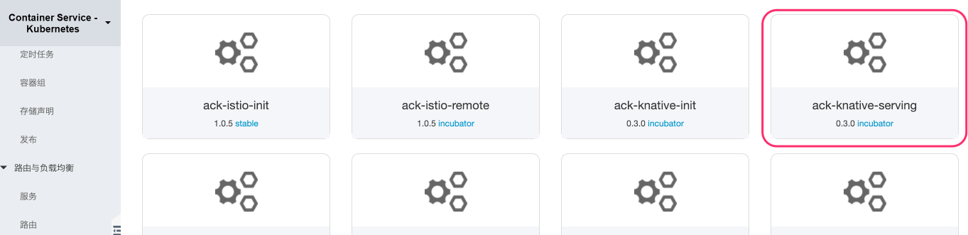

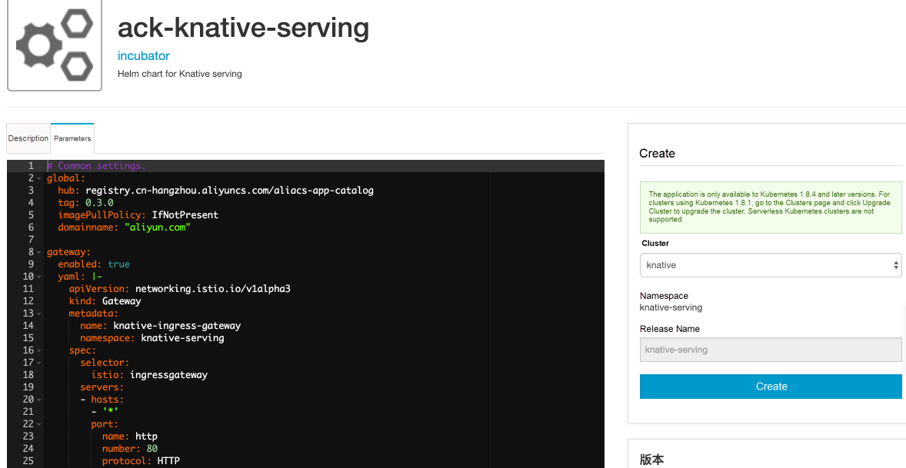

Log on to the Container Service - Kubernetes console. In the left-side navigation pane, choose Marketplace and then App Catalog. On the page that appears, find and click ack-knative-serving.

Next, click the Parameters tab. The default configuration of Istio Ingress Gateway is provided. Modify these parameters as needed, and then click Create.

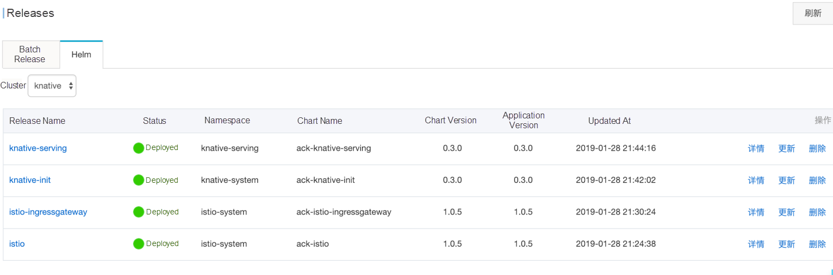

The four Helm charts required for Knative Serving are now installed. You can confirm this in the console.
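The installation can also be checked from the command line. The following is a minimal sketch under stated assumptions: the function name `verify_knative_install` is hypothetical, `kubectl` must be configured for the cluster, and the Knative Serving components are assumed to run in the upstream default namespace `knative-serving` (pass a different namespace as the first argument if your chart uses another one).

```shell
# List the Knative CRDs and the Knative Serving pods.
# Assumption: components live in the "knative-serving" namespace unless
# another namespace is passed as the first argument.
verify_knative_install() {
  namespace="${1:-knative-serving}"
  # Fail early if no Knative CRDs are registered in the cluster.
  kubectl get crd | grep 'knative.dev' || return 1
  kubectl get pods --namespace "$namespace"
}

# Usage against a live cluster:
#   verify_knative_install
```

A non-zero exit status from the function indicates that no CRD with `knative.dev` in its name was found, which suggests the ack-knative-init chart has not finished installing.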

For this first step, run the following command to deploy a Knative Service for a sample autoscale app:

    kubectl create -f autoscale.yaml

The content of the autoscale.yaml file is as follows:

    apiVersion: serving.knative.dev/v1alpha1
    kind: Service
    metadata:
      name: autoscale-go
      namespace: default
    spec:
      runLatest:
        configuration:
          revisionTemplate:
            metadata:
              annotations:
                # Target 10 in-flight-requests per pod.
                autoscaling.knative.dev/target: "10"
                autoscaling.knative.dev/class: kpa.autoscaling.knative.dev
            spec:
              container:
                image: registry.cn-beijing.aliyuncs.com/wangxining/autoscale-go:0.1

Next, look up the IP address of the Istio ingress gateway and export it as an environment variable.

    export IP_ADDRESS=`kubectl get svc istio-ingressgateway --namespace istio-system --output jsonpath="{.status.loadBalancer.ingress[*].ip}"`

Send a request to the autoscale app and check the resource consumption.

    curl --header "Host: autoscale-go.default.{domain.name}" "http://${IP_ADDRESS?}?sleep=100&prime=10000&bloat=5"

Note: Replace {domain.name} with your domain name suffix. In the default example, the suffix is aliyun.com.

    curl --header "Host: autoscale-go.default.aliyun.com" "http://${IP_ADDRESS?}?sleep=100&prime=10000&bloat=5"
    Allocated 5 Mb of memory.
    The largest prime less than 10000 is 9973.
    Slept for 100.16 milliseconds.

Run the following command to install the load generator:

    go get -u github.com/rakyll/hey

Maintain 50 concurrent requests and send traffic for 30 seconds.

    hey -z 30s -c 50 \
      -host "autoscale-go.default.aliyun.com" \
      "http://${IP_ADDRESS?}?sleep=100&prime=10000&bloat=5" \
      && kubectl get pods

Within the 30 seconds, you can see that the Knative Service automatically scales up as the number of requests increases.

    Summary:
      Total:        30.1126 secs
      Slowest:      2.8528 secs
      Fastest:      0.1066 secs
      Average:      0.1216 secs
      Requests/sec: 410.3270

      Total data:   1235134 bytes
      Size/request: 99 bytes

    Response time histogram:
      0.107 [1]     |
      0.381 [12305] |∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎∎
      0.656 [0]     |
      0.930 [0]     |
      1.205 [0]     |
      1.480 [0]     |
      1.754 [0]     |
      2.029 [0]     |
      2.304 [0]     |
      2.578 [27]    |
      2.853 [23]    |

    Latency distribution:
      10% in 0.1089 secs
      25% in 0.1096 secs
      50% in 0.1107 secs
      75% in 0.1122 secs
      90% in 0.1148 secs
      95% in 0.1178 secs
      99% in 0.1318 secs

    Details (average, fastest, slowest):
      DNS+dialup:   0.0001 secs, 0.1066 secs, 2.8528 secs
      DNS-lookup:   0.0000 secs, 0.0000 secs, 0.0000 secs
      req write:    0.0000 secs, 0.0000 secs, 0.0023 secs
      resp wait:    0.1214 secs, 0.1065 secs, 2.8356 secs
      resp read:    0.0001 secs, 0.0000 secs, 0.0012 secs

    Status code distribution:
      [200] 12356 responses

    NAME                                             READY   STATUS        RESTARTS   AGE
    autoscale-go-00001-deployment-5fb497488b-2r76v   2/2     Running       0          29s
    autoscale-go-00001-deployment-5fb497488b-6bshv   2/2     Running       0          2m
    autoscale-go-00001-deployment-5fb497488b-fb2vb   2/2     Running       0          29s
    autoscale-go-00001-deployment-5fb497488b-kbmmk   2/2     Running       0          29s
    autoscale-go-00001-deployment-5fb497488b-l4j9q   1/2     Terminating   0          4m
    autoscale-go-00001-deployment-5fb497488b-xfv8v   2/2     Running       0          29s

As shown in this post, you can quickly build Knative Serving and implement automatic scaling with Alibaba Cloud Container Service for Kubernetes. We invite you to use Alibaba Cloud Container Service to quickly build Knative Serving and easily integrate it into your project development.
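As a rough cross-check of the pod count seen in the load test, the number of pods can be estimated by dividing the concurrent requests by the per-pod target from the `autoscaling.knative.dev/target` annotation and rounding up. The sketch below is only an approximation: the function name `expected_pods` is hypothetical, and the real autoscaler averages observed concurrency over a time window, so the actual count can differ.

```shell
# Rough estimate of the pods needed for a given load:
# ceil(concurrent_requests / target_per_pod), using integer arithmetic.
# Hypothetical helper; the autoscaler's real decision also depends on
# its averaging window and other settings.
expected_pods() {
  concurrent=$1
  target=$2
  echo $(( (concurrent + target - 1) / target ))
}

# 50 concurrent requests from hey, target of 10 in-flight requests per pod.
expected_pods 50 10   # prints 5
```

The estimate of 5 matches the five Running pods in the `kubectl get pods` listing from the test.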

