By Yongjiao Hou

There is an increasing demand for real-time data to make business decisions, such as the demand to make an adjustment to the resource niche based on sales. What's more, some activities require real-time data to enhance interaction with users. If there are two solutions for data processing (real-time data processing and offline data processing), priority is given to the real-time data processing solution. If the real-time solution cannot be implemented, the offline solution will be adopted.

Real-time data warehouses have been accepted by many companies, and many Aboutyun community members are preparing to build real-time data warehouses.

Users of real-time data warehouses (such as operators, managers, or bosses) can see the detection data in real-time, which is very convenient.

Let’s use the take-out scenario as an example:

(1) The real-time data warehouse can help the take-out platform observe the effect of the current marketing activity. If the effect is not good, the platform can remedy it in time.

(2) The real-time data warehouse can help the take-out platform judge whether customers like the newly launched business and adjust it according to the real-time detection and feedback of the customers.

(3) The real-time data warehouse can help the take-out platform find and process abnormalities in orders, merchants, and distribution in real-time.

(4) The real-time data warehouse can recommend goods in real-time according to user preferences.

In conclusion, with real-time data warehouses, we can deal with the verification of business ideas, detection of business anomalies, and recommendations based on user preferences in real-time. Real-time data warehouses allow companies to run more efficiently. If offline data warehouses support the operation strategy decisions of companies, real-time data warehouses support both strategic and tactical decisions.

If we don't know anything about data warehouses or have only used offline data warehouses, there may be such a question:

Are offline data warehouses and real-time data warehouses independent or related? From the perspective of efficiency, companies will not separate them. Real-time data will eventually flow into data warehouses.

If you do not understand, let me explain further. Most real-time data warehouses use Flink streaming processing as technical architecture and Kafka as storage. As we know, Kafka is generally used for caching, and data in it is generally valid temporarily. So, data in real-time data warehouses can flow into offline data warehouses at a certain stage.

If you really want to build a real-time data warehouse, you may have the following questions:

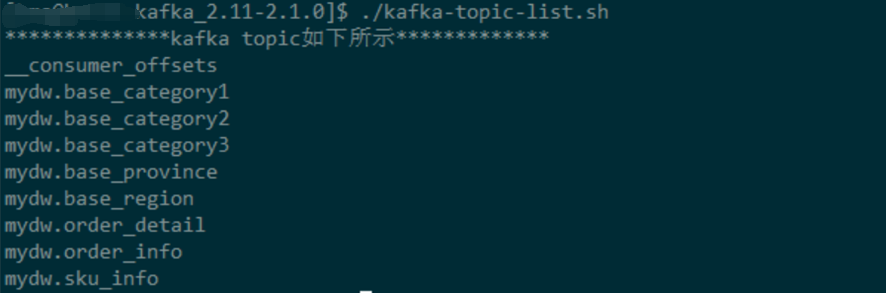

Kafka is layered by topics, and tables correspond to topics. The form is as shown in the following image:

You can implement Kafka as a real-time data warehouse using the image above.

We know the Topic is full of messages, but how can we integrate the messages into topics? Flink SQL is required. Flink SQL reads topics and performs various operations on data (such as joining).

Through the section of the article above, we have figured out how to cope with real-time data warehouse storage and perform operations on data, but how can we build data warehouses according to our business?

As long as we understand the real-time data warehouse, there are various ways and ideas to implement it. Generally speaking, the overall framework of a real-time data warehouse is divided into different levels according to the flow direction of data. The access layer collects data from various business systems through various data access tools (such as business data of buried point or merger and acquisition data from the business background) and puts them into Kafka. Data in the message queue is the raw data of both offline data warehouse and real-time computing. This ensures real-time and offline raw data are unified.

After getting the source data, we can process it with Flink and real-time computing engines in the computing layer and then store it in different storage media in the storage layer. Different storage mediums are used according to different application scenarios. There is also an interaction between Flink and Kafka in this architecture. Layer the data, retrieve some of the data through the computing engine from Kafka for processing, and then put it back into Kafka. The data put back here may be other layered data.

The processed data at the storage layer will be applied to different data applications through two services at the service layer (DWS or DM): unified query and metric management. The unified query is a service that calls API through the business side, and metric management aims to define and manage data metrics. Data applications may be our formal products or direct business systems.

If you don't know much about data warehouses, you may not understand. So, let me explain further. The difference between a real-time data warehouse and an offline data warehouse lies in time. A real-time data warehouse is more timely, whether in terms of collection or computing. What are the specific steps to implement the timely collection and computing of a real-time data warehouse? For example, in terms of collection, we can use Canal, Maxwell, and EPX for real-time collection. For better comparison, use Sqoop that adopts MapReduce at the bottom layer for offline collection. In terms of computing frameworks, we can use Hive for offline computing and (the currently mostly used) Flink for real-time computing.

When we build a data warehouse, we may face the following problems:

(1) Unclear Process

(2) Unclear Technical Architecture Selection

Let's look at several technical architectures to help us choose a more suitable architecture from the technical perspective.

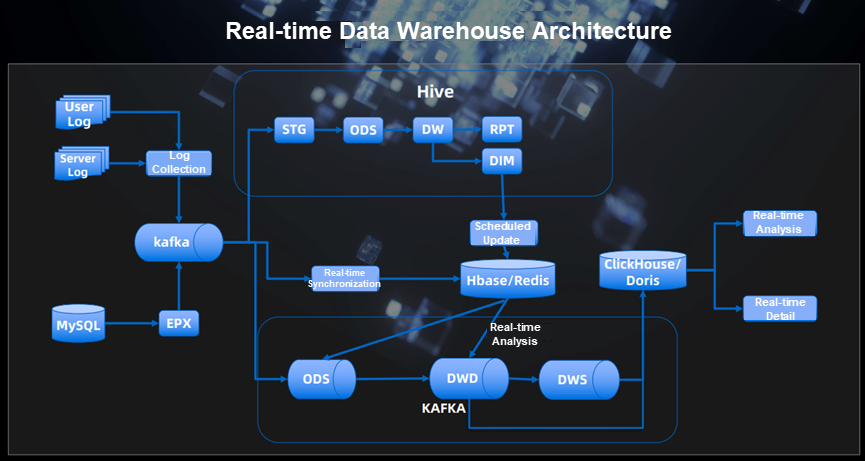

We can see that user logs and server logs enter Kafka through the log collection tool, and the processed data in Kafka flows into Hive and Kafka, respectively. Both Hive and Kafka are layered, which means Hive is an offline database and Kafka is a real-time data warehouse.

Hive: What needs to be explained here is that there is a corresponding table for each layer

STG: It stores data integrated from heterogeneous source systems.

ODS: It is the layer closest to the data in the data source. The data in the data source after ETL (extract, transform, load) is stored in this layer. In general, most of the data in this layer is classified according to the classification of the source business system.

DW: It is the data warehouse. In this layer, various data models are built based on the topic of the data obtained from the ODS.

RPT: It is oriented to the report layer, including aggregate table (which can be used when some query dimensions are small) and fact table used in report query.

DIM: The DIM layer defines conformed dimensions for enterprises based on the concept of dimensional modeling.

The data in the DW layer and DIM layer are regularly updated to Hbase/Redis.

Kafka real-time data warehouse:

DWD: Data Warehouse Detail

DWS: Data Warehouse Summary is the main content of a data platform. The data in the two layers is the data in the ODS layer after ETL. Most of the tables are widened to facilitate analysis and statistics.

We can see that data in DWD and DWS flows into ClickHouse/Doris, which is called OLAP.

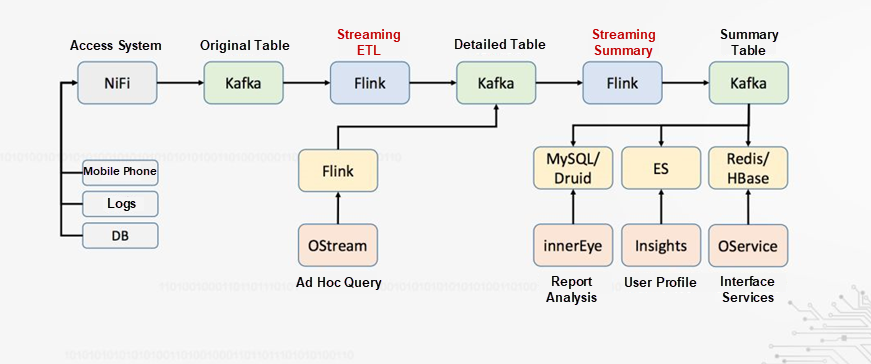

This is the real-time data warehouse of OPPO. The real-time data warehouse uses NiFi to collect logs and then logs enter Kafka. The original table here is the ODS layer. Then, after ETL by Flink, data flows into Kafka, the DWD layer. Ad hoc query (also known as OLAP) can be performed here. The summary of the Kafka DWD layer is the DM (ADS) layer of the data warehouse. The DM layer serves as a data source for report analysis, user profile analysis, and interface services.

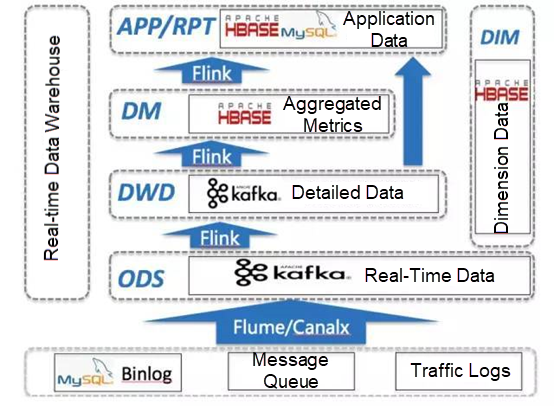

This technical architecture is very different from OPPO, but the content is mostly the same, except the DM layer is replaced by Hbase. It is worth noting that data in the DIM layer can be joined with data in other layers.

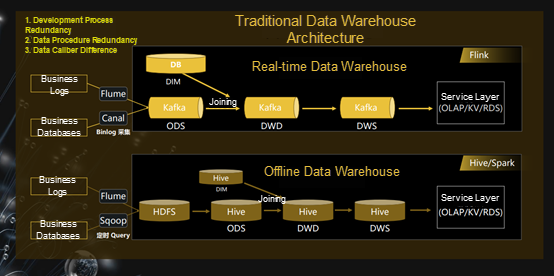

This architecture separates offline data warehouses and real-time data warehouses. The real-time data warehouse adopts Flink, while the offline data warehouse adopts Hive/Spark.

In Terms of Log Collection:

Storage:

Layer:

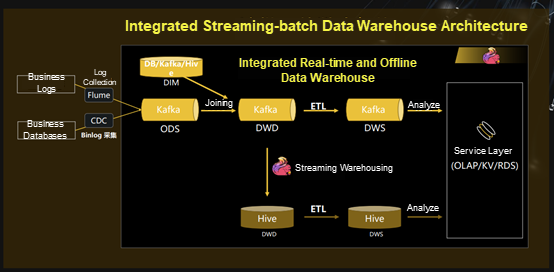

This is an integrated offline and real-time data warehouse. Many people in the creation of real-time data warehouses may ask, “If data is stored in Kafka, how can we deal with it if it is expired?

Each company stores the data (such as the data in the Kafka DWD layer) in its offline data warehouse, Hive, based on its need, and then further processes it.

In the whole process above, Flume and CDC are used to collect logs. After collection, log data is stored in the real-time data warehouse, Kafka. Data in the ODS layer and DIM layer forms a wide table, and then the wide table enters Kafka, forming the DWD layer. The data processed in the DWD layer flows into the offline data warehouse, Hive, and the DWS layer, Kafka, respectively. The subsequent process does not need to be described in detail. Finally, the DWS layer provides data for OLAP.

From the article above, we can see that whether it is a real-time data warehouse or an offline data warehouse, the database layering is similar. We need to decide the number of layers according to the actual need. Generally speaking, there are four to five layers in a data warehouse, except for Jingdong, whose data warehouse has nine layers. Layering has many functions. It is believed that layering plays a big role in reusing each layer, saving time, and improving efficiency.

If readers understand the content above, technical architecture selection will no longer be a problem. We need to consider scenarios and the team's knowledge reserve before choosing an architecture.

Disclaimer: This is a translated work of Yongjiao Hou. All rights reserved to the original author.

Serverless Data Warehouse Exploration for Agile Data Analysis

Apache Flink Community China - September 15, 2022

Apache Flink Community China - March 29, 2021

XianYu Tech - December 13, 2021

Hologres - June 30, 2021

Hologres - May 31, 2022

Hologres - July 16, 2021

Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn More Data Lake Storage Solution

Data Lake Storage Solution

Build a Data Lake with Alibaba Cloud Object Storage Service (OSS) with 99.9999999999% (12 9s) availability, 99.995% SLA, and high scalability

Learn More ApsaraDB for HBase

ApsaraDB for HBase

ApsaraDB for HBase is a NoSQL database engine that is highly optimized and 100% compatible with the community edition of HBase.

Learn MoreMore Posts by ApsaraDB