By Jin, Xiaojun (Xianyin), Senior Technical Expert at Alibaba Cloud.

Released by Hologres

The final turnover of Tmall's 2020 Double 11 was 498.2 billion Chinese Yuan (CNY). Behind this figure was the greatest human-machine collaboration in human history, which presented an unprecedented challenge in the digital world. Hologres, Alibaba Cloud's next-generation cloud-native data warehouse, provided important technical support during Double 11. Real-time data generated by consumers searching, browsing, adding favorites, or purchasing flows into Hologres, where it is stored and cross-checked against the accumulated historical offline data.

During the 2020 Double 11 Global Shopping Festival, Hologres withstood a real-time data peak of 596 million records per second, with a single table storing up to 2.5 PB. It provided multi-dimensional analysis and services for external systems based on trillions of data records and returned results within 80 milliseconds for 99.99% of queries. Hologres unified real-time and offline data and supported online application services.

Hologres was started in 2017. Over the past three-plus years, Hologres has achieved many breakthroughs:

Now that Hologres has been used successfully in Alibaba's Double 11 Tmall shopping festival, its underlying core technologies are being revealed to the public for the first time. This article gives an introduction to Hologres and explains how Hologres has been implemented in Alibaba's core application scenarios.

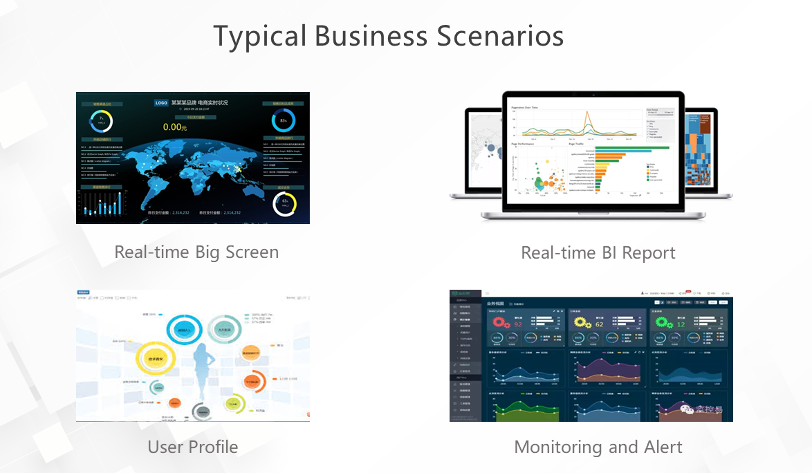

Currently, big data business scenarios generally include real-time big screens, real-time BI reports, user profiles, and monitoring and alerting, as shown in the following figure.

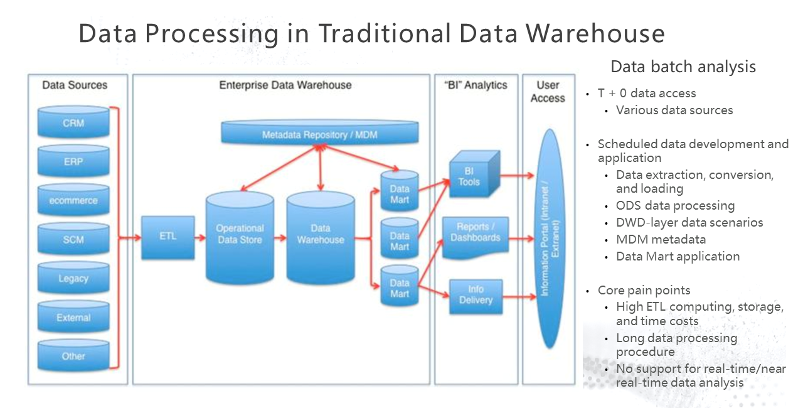

The industry has long met the needs of the big data business scenarios mentioned above by building data warehouses. Traditionally, an offline data warehouse is constructed, as shown in the following figure. First, all kinds of data are collected and processed by ETL. Then, the data is aggregated, filtered, and otherwise processed through layer-by-layer modeling. Finally, when needed, the data is presented through application-layer tools or turned into reports.

Although this method can be used to connect multiple data sources, it has some obvious pain points:

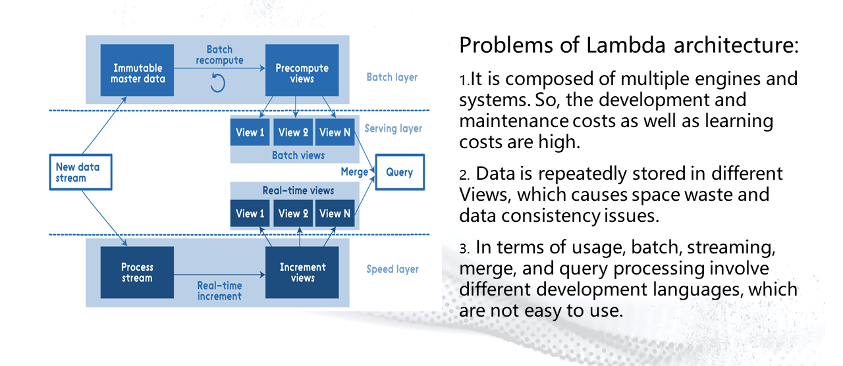

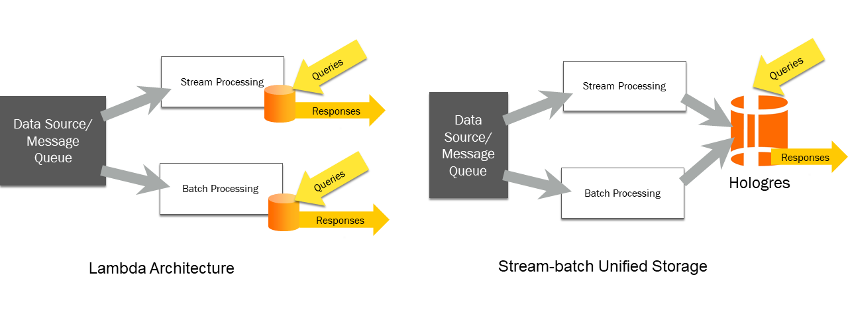

With the rise of real-time computing technology, the Lambda architecture emerged. The following figure shows how the Lambda architecture works. It adds a layer that processes real-time data on top of the traditional offline data warehouse. Then, the data from the offline data warehouse and the data generated by the real-time procedure are merged in the serving layer. This allows users to query both offline and real-time data.
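
The serving-layer merge can be illustrated with a minimal sketch. The function name `merge_views` and the sample order counts are hypothetical and only show the idea: the batch view covers data up to the last offline run, and the real-time view holds the increments since then.

```python
from collections import Counter


def merge_views(batch_view, realtime_view):
    """Serving-layer merge: add real-time increments on top of batch totals."""
    merged = Counter(batch_view)
    # Counter.update adds counts for existing keys instead of replacing them.
    merged.update(realtime_view)
    return dict(merged)


# Hypothetical data: order counts per item.
batch_view = {"item_a": 100, "item_b": 40}   # computed offline up to the last batch run
realtime_view = {"item_a": 3, "item_c": 1}   # accumulated by the real-time layer since then

print(merge_views(batch_view, realtime_view))
# {'item_a': 103, 'item_b': 40, 'item_c': 1}
```

Even in this toy form, the application has to know about two data sets and how to combine them, which hints at the merge complexity that grows with data volume.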

Since 2011, the Lambda architecture has been adopted by many Internet companies and has solved some problems. However, growing data volume and application complexity have gradually exposed some problems in the Lambda architecture:

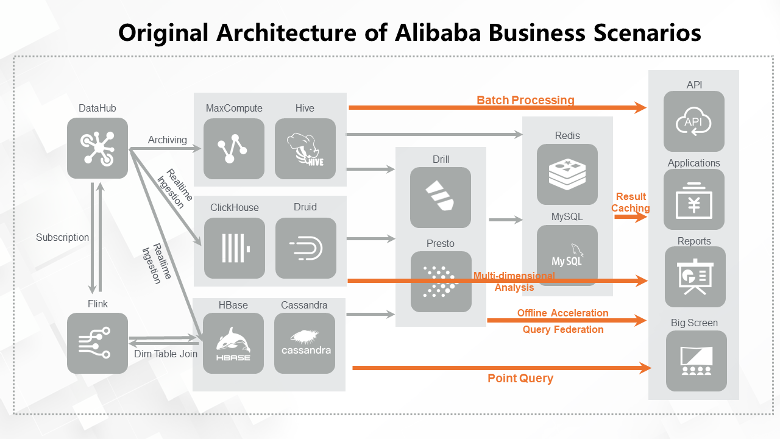

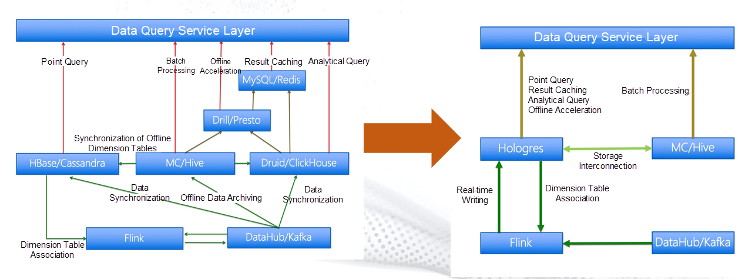

Alibaba has also encountered the problems mentioned above. The following figure shows the real-time data warehouse architecture developed by Alibaba from 2011 to 2016. The architecture was essentially a Lambda architecture. However, as business volume and data grew, the relationships between components became more complex and the cost increased dramatically. Therefore, a more elegant solution was urgently needed.

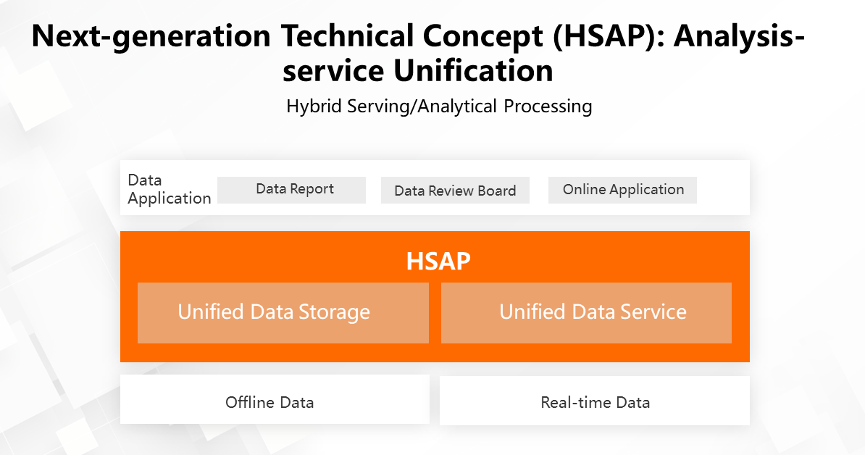

Against this background, and in response to the pain points of the traditional approaches, the concept of Hybrid Serving/Analytical Processing (HSAP) was proposed. HSAP supports complex analytical queries together with high write QPS within the same system.

What are the core requirements of HSAP implementation?

Based on HSAP, the corresponding products needed to be developed and implemented. Therefore, Hologres was created.

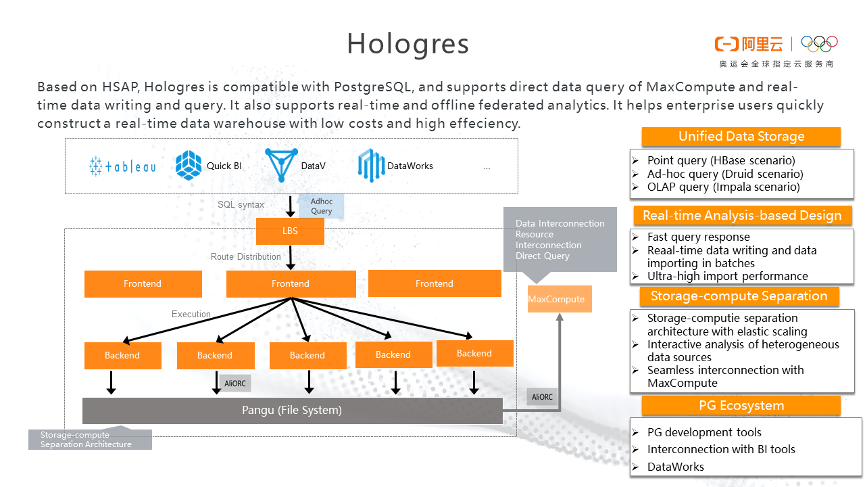

Hologres is the best HSAP system in the market at this time. It is compatible with the PostgreSQL ecosystem. It supports direct queries on MaxCompute data (offline data), real-time data ingestion, real-time queries, and federated analytics across real-time and offline data. It helps enterprises quickly build a real-time data warehouse featuring stream-batch unification at low cost and with high efficiency.

The term Hologres is a combination of Holographic and Postgres. Postgres means that Hologres is compatible with the PostgreSQL ecosystem, which is easy to understand. Holographic needs to be explained in more detail. Let's take a look at the following figure:

Holographic is translated as "全息" in Chinese, which is often seen in the term “3D holographic projection technology.”

In physics, the Holographic Principle holds that the description of a volume of space can be thought of as encoded on a lower-dimensional boundary of the region. The picture above is an imaginary black hole. The critical points at a certain distance from the black hole constitute the Event Horizon. Objects that are outside the Event Horizon can overcome the gravity of the black hole. In the picture, the shining circle represents the Event Horizon. According to the Holographic Principle, the information content of all objects that fall into the black hole may be completely contained on the surface of the Event Horizon.

Hologres stores all of the information in the data black hole and performs various types of computing operations on it.

The Hologres architecture is very straightforward. It is a storage-compute separation architecture. All data is stored in the same distributed file system. The system architecture diagram is shown in the following figure:

Hologres uses a storage-compute separation architecture, allowing users to scale in or out either storage or computing resources elastically based on their business needs.

In distributed storage, there are three common architectures:

Hologres adopts unified storage based on stream-batch unification. In a typical Lambda architecture, real-time data is written into real-time data storage through the real-time data procedure, and offline data is written into offline storage through the offline data procedure. Then, different queries are sent to different storage systems, and the results are merged. This incurs the overhead of multiple storage systems and requires complex merge operations at the application layer.

With Hologres, data can be collected and then processed through different procedures. The processing results can be directly written to Hologres, and data consistency is guaranteed. There is no need to distinguish between real-time tables and offline tables, which greatly reduces the complexity as well as the learning cost of IT professionals.

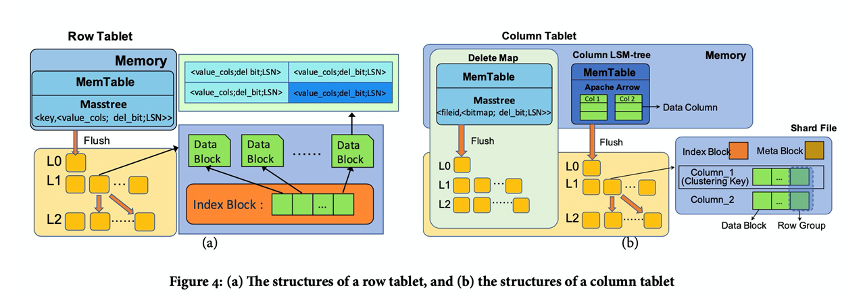

The underlying layer of Hologres supports two storage formats: row storage and column storage. Row storage is suitable for point queries by primary key (PK), and column storage is suitable for complex OLAP queries. Hologres handles the two storage formats slightly differently at the underlying layer, as shown in the following figure.
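
To make the trade-off concrete, here is a minimal sketch in plain Python. The table, its columns (`user_id`, `city`, `amount`), and the helper names are hypothetical, and the in-memory dictionaries only stand in for the two layouts; they are not the Hologres file formats.

```python
# Hypothetical three-row table with columns (user_id, city, amount).
rows = [
    (1, "Hangzhou", 30.0),
    (2, "Beijing", 12.5),
    (3, "Hangzhou", 7.5),
]

# Row-oriented layout: each record is kept together, keyed by primary key.
row_store = {row[0]: row for row in rows}

# Column-oriented layout: the values of each column are kept together.
column_store = {
    "user_id": [r[0] for r in rows],
    "city": [r[1] for r in rows],
    "amount": [r[2] for r in rows],
}


def point_query(pk):
    """Fetch one full record by primary key; touches a single stored row."""
    return row_store[pk]


def total_amount_by_city(city):
    """Aggregate over two columns only; the user_id column is never read."""
    return sum(
        amount
        for c, amount in zip(column_store["city"], column_store["amount"])
        if c == city
    )


print(point_query(2))                    # (2, 'Beijing', 12.5)
print(total_amount_by_city("Hangzhou"))  # 37.5
```

The point query reads exactly one record, while the aggregation scans only the columns it needs, which is why each layout suits a different query type.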

When data is ingested into Hologres, logs are written first and stored in the distributed file system to ensure the integrity of all of the service data. Because the logs are kept in the distributed file system, they can be recovered even if the server breaks down. After the logs are written, the data is written to the MemTable, namely the memory table. At this point, the system considers the write successful. The MemTable has a limited capacity. When it is full, the data in the MemTable is gradually flushed into files, which are stored in the distributed file system. This is where row storage and column storage differ: during flushing, row storage tables are flushed into row storage files and column storage tables are flushed into column storage files. After many flushes, a lot of small files accumulate, so a backend process compacts these small files into large files.
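
The write path described above (log, then MemTable, then flush, then compaction) can be sketched as a toy model. The class `TinyTable`, its capacity parameter, and the use of Python lists and dictionaries in place of the distributed file system are all assumptions made for illustration; the sketch ignores the row and column file formats and log-based recovery.

```python
class TinyTable:
    """Toy write path: log first, then MemTable, flush when full, compact later."""

    def __init__(self, memtable_capacity=2):
        self.log = []        # stands in for the log kept on the distributed file system
        self.memtable = {}   # in-memory table holding the newest writes
        self.files = []      # flushed immutable files, oldest first
        self.memtable_capacity = memtable_capacity

    def write(self, key, value):
        self.log.append((key, value))  # 1. append to the log for durability
        self.memtable[key] = value     # 2. update the MemTable; the write now counts as successful
        if len(self.memtable) >= self.memtable_capacity:
            self.flush()               # 3. MemTable is full, so flush it to a file

    def flush(self):
        if self.memtable:
            self.files.append(dict(sorted(self.memtable.items())))
            self.memtable = {}

    def compact(self):
        """Merge all small files into one large file; newer values win."""
        merged = {}
        for f in self.files:           # oldest first, so later files overwrite earlier ones
            merged.update(f)
        self.files = [dict(sorted(merged.items()))] if merged else []

    def read(self, key):
        if key in self.memtable:
            return self.memtable[key]
        for f in reversed(self.files):  # check the newest file first
            if key in f:
                return f[key]
        return None


table = TinyTable(memtable_capacity=2)
for k, v in [("a", 1), ("b", 2), ("a", 3), ("c", 4)]:
    table.write(k, v)

print(len(table.files), table.read("a"))  # 2 3
table.compact()
print(len(table.files), table.read("a"))  # 1 3
```

Reads return the same values before and after compaction; only the number of files changes, which is the purpose of the background compaction step.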

The Hologres execution engine is an all-round distributed query engine that focuses on optimizing high-concurrency and low-latency real-time queries. “All-round” means that all types of SQL queries can be expressed and executed efficiently by the Hologres execution engine. Some other distributed query engines focus on optimizing common single real-time table queries but do not perform well in complex queries against all tables. Others support complex queries but have poor performance in real-time scenarios. Hologres aims to ensure high performance in all scenarios.

The Hologres execution engine can process various types of queries with high performance based on the following characteristics:

Hologres aims to work out of the box. Users can perform all daily business analysis through SQL statements without additional modeling and processing. Based on new hardware technologies, Hologres designs and implements its own computing and storage engines. The optimizer is responsible for running users' SQL statements efficiently on these computing engines.

The Hologres optimizer is a cost-based optimizer (CBO). It can generate complex federated query execution plans and make use of the capabilities of multiple computing engines. In addition, many optimization methods have been developed through long-term work on different business scenarios inside and outside Alibaba. As a result, the Hologres computing engine can deliver high performance across different business scenarios.
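
As a generic illustration of cost-based optimization, the sketch below enumerates candidate join orders and keeps the one with the lowest estimated cost. The table sizes, the fixed join selectivity, and the cost model (the sum of estimated intermediate result sizes) are all assumptions chosen for the example; they do not describe the cost model that Hologres actually uses.

```python
from itertools import permutations

# Hypothetical table cardinalities (row counts).
table_rows = {"orders": 1_000_000, "users": 50_000, "regions": 30}


def plan_cost(join_order, selectivity=0.001):
    """Toy cost model: sum of estimated intermediate result sizes of a left-deep join."""
    rows = table_rows[join_order[0]]
    cost = 0.0
    for name in join_order[1:]:
        # Estimated output size of joining the current intermediate result with the next table.
        rows = rows * table_rows[name] * selectivity
        cost += rows
    return cost


def choose_plan(tables):
    """Enumerate every join order and return the one with the lowest estimated cost."""
    return min(permutations(tables), key=plan_cost)


best = choose_plan(["orders", "users", "regions"])
print(best, plan_cost(best))
```

In this toy model, the plans that join the large `orders` table last come out cheapest because the intermediate results stay small. A real optimizer uses table statistics and a much richer plan space, but the principle of comparing estimated costs is the same.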

Blackhole, the core component of Hologres, is a storage and computing engine developed by Alibaba. It adopts an asynchronous programming model. Underneath, there are two frameworks: HoloOS and HoloFlow.

HoloOS (HOS) is a flexible and efficient asynchronous framework that is extracted from the bottom layer of Blackhole. In addition to high performance, HOS also implements load balancing and solves the long tail problem during query execution. A variety of sharing and isolation mechanisms are also realized to achieve efficient utilization of resources.

HOS has also been extended to the distributed environment in the form of HoloFlow, a distributed task scheduling framework. This brings the flexibility of stand-alone scheduling to the distributed environment.

As the access layer of Hologres, Frontend is compatible with the PostgreSQL protocol and is responsible for accepting and processing user requests and for metadata management. However, PostgreSQL is a stand-alone system and is less capable of processing user requests with high concurrency. Hologres faces complex business scenarios and hundreds of millions of user requests. Therefore, Frontend adopts a distributed architecture. It synchronizes information among multiple frontends in real time through multi-version management and metadata synchronization. It supports full linear scaling and ultra-high QPS through load balancing on the LBS layer.

Based on Frontend, Hologres also provides extended execution engines.

Hologres is a real-time data warehouse featuring stream-batch unification. It supports real-time and offline data writing from multiple heterogeneous data sources, such as MySQL and DataHub. It also supports tens of millions of writes and queries per second. What's more, data can be queried immediately after it is written. These capabilities are exposed through the Hologres JDBC interfaces.

Hologres is fully compatible with PostgreSQL at the interface level, including the syntax, semantics, and protocol of PostgreSQL. The PostgreSQL JDBC Driver can be used to connect to Hologres and to read and write data. Data tools on the market, such as BI tools and ETL tools, widely support the PostgreSQL JDBC Driver. This means that Hologres was born with strong tool compatibility and a powerful ecosystem. Thus, Hologres closes the loop of the big data ecosystem from data processing to visualized data analysis.

Hologres is the best implementation practice of HSAP. In addition to analytical query processing, it has powerful online service capabilities, such as Key-Value (KV) point queries and vector search. In the KV point query scenario, Hologres stably supports million-level QPS throughput with extremely low latency through SQL interfaces. In the vector search scenario, users can also use SQL statements to import vector data, create vector indexes, and perform queries. No additional conversion is needed for queries, and the performance is better than other products. Some non-analytical queries can also be applied in serving scenarios through reasonable table structures and the powerful indexing capabilities of Hologres.
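
To show what a vector search query computes, here is a minimal brute-force sketch in plain Python. It is not how Hologres implements vector search, which is done through SQL and vector indexes; the function names, the sample vectors, and the choice of cosine similarity are assumptions for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, vectors, k=2):
    """Return the names of the k stored vectors most similar to the query (brute force)."""
    scored = sorted(
        vectors.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in scored[:k]]


# Hypothetical two-dimensional item embeddings.
vectors = {"shoe": [1.0, 0.0], "boot": [0.9, 0.1], "phone": [0.0, 1.0]}

print(top_k([1.0, 0.05], vectors))  # ['shoe', 'boot']
```

A brute-force scan compares the query with every stored vector. A vector index exists to avoid that full scan at large data volumes.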

Based on Hologres, architectures have also been upgraded in multiple business scenarios. The business architecture is simplified, as shown in the following figure:

As the cloud-native next-generation real-time data warehouse, Hologres worked together with Flink to implement "stream-batch unification" for the first time in core data business scenarios during the 2020 Double 11 Global Shopping Festival. The solution passed the tests of stability and performance and made the full business procedure real-time. The millisecond-level data processing capability provides merchants and consumers with a more intelligent consumption experience.

With the development of business and technologies, Hologres will continue to improve its core technological competitiveness, realize the goal of service-analysis unification, and bring more services and value to users.

More articles about the core technologies above will be released. Please stay tuned for more information.

Xiaojun Jin (Xianyin) is a Senior Technical Expert with Alibaba Cloud. He has ten years of working experience in the big data field and is currently engaged in the design and R&D of Hologres.
