At the Data Plane Development Kit (DPDK) Summit held in Beijing last month, Liang Jun, a Network Technology Expert at Alibaba Cloud, shared the architecture and design concepts of the high-performance Server Load Balancer (SLB) and its use in high-traffic Internet scenarios such as the Singles' Day (Double 11) Shopping Festival and the mobile Taobao red envelope activity at the Spring Festival Gala. This article summarizes that talk.

SLB is a service that distributes traffic among multiple servers. By distributing traffic, SLB expands an application system's service capacity, and by eliminating single points of failure, it improves the system's availability. SLB is therefore widely deployed and has become one of Alibaba's main traffic entry points. The next-generation Alibaba SLB is built on DPDK, and its high performance and high availability support Alibaba's rapid business growth. For example, SLB withstood massive, sharply fluctuating traffic during the November 11 Shopping Festival in 2017. The presentation describes Alibaba's next-generation SLB from two perspectives: the implementation architecture of the DPDK-based high-performance SLB, and its concurrent session synchronization mechanism, which allows SLB to provide uninterrupted service in scenarios such as disaster recovery and upgrades.

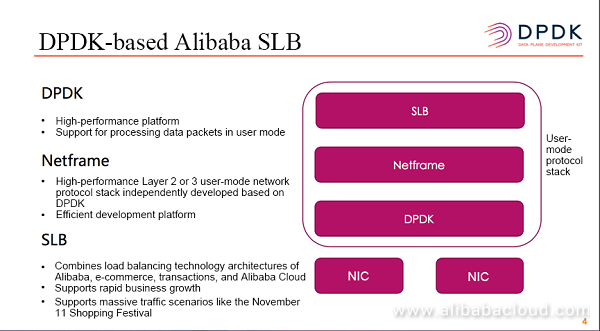

Most of the load balancers that Alibaba currently runs have been replaced with the DPDK-based SLB, which Alibaba developed in-house. The bottom layer of this version is DPDK. SLB uses the high-performance techniques that the DPDK platform provides, such as user-mode packet receiving and sending, hugepages, and NUMA awareness. The layer above is the Netframe platform, a high-performance user-mode Layer 2 and Layer 3 network protocol stack that Alibaba built on DPDK. Netframe implements Layer 2 MAC address forwarding, Layer 3 route lookup and forwarding, and dynamic routing protocols. It also provides a rich set of libraries and exposes them to upper-layer applications through hooks similar to Netfilter hooks. Most of Alibaba's current SLB is developed on the Netframe platform. With this platform, SLB can receive and send data packets directly through hooks and focus only on the data streams of its own services, which makes network application development fast and efficient. This SLB version is widely used in Alibaba business scenarios such as e-commerce transactions and payment. The SLB products offered to customers on Alibaba Cloud's public cloud are also based on this version.
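The hook model can be pictured with a small sketch. This is not Netframe's API, which the talk does not show. The hook point name (`PRE_ROUTING`), the verdicts, the `HookRegistry` class, and the example VIP are all hypothetical, loosely modeled on the Netfilter style of registering callbacks that return a verdict.

```python
from collections import defaultdict
from enum import Enum


class Verdict(Enum):
    ACCEPT = "accept"   # let the packet continue through the stack
    DROP = "drop"       # discard the packet
    STOLEN = "stolen"   # the application took ownership of the packet


class HookRegistry:
    """Hypothetical hook table: applications register callbacks per hook point."""

    def __init__(self):
        self._hooks = defaultdict(list)

    def register(self, hook_point, priority, callback):
        self._hooks[hook_point].append((priority, callback))
        # Lower priority values run first, as in Netfilter.
        self._hooks[hook_point].sort(key=lambda item: item[0])

    def run(self, hook_point, packet):
        for _, callback in self._hooks[hook_point]:
            verdict = callback(packet)
            if verdict is not Verdict.ACCEPT:
                return verdict  # stop at the first non-ACCEPT verdict
        return Verdict.ACCEPT


VIPS = {"203.0.113.10"}  # example VIP from a documentation address range


def slb_hook(packet):
    """The load balancer only handles traffic addressed to its VIPs."""
    if packet["dst"] in VIPS:
        packet["handled_by"] = "slb"
        return Verdict.STOLEN
    return Verdict.ACCEPT


registry = HookRegistry()
registry.register("PRE_ROUTING", priority=0, callback=slb_hook)

vip_packet = {"dst": "203.0.113.10"}
other_packet = {"dst": "198.51.100.7"}
print(registry.run("PRE_ROUTING", vip_packet))
print(registry.run("PRE_ROUTING", other_packet))
```

In this sketch the application sees only the packets it registered for, while everything else continues through the stack untouched.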

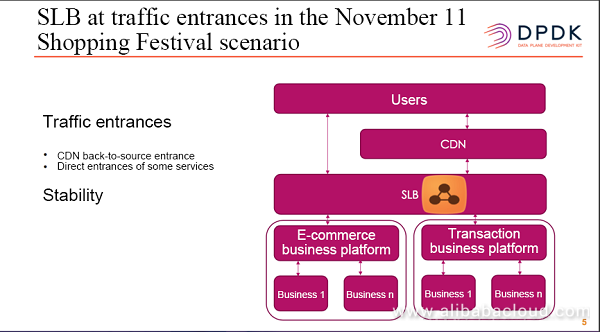

During the November 11 Shopping Festival, traffic at the public network entrances falls into two categories. The first is traffic generated when users access Alibaba sites. This traffic first flows through CDNs and then returns to the origin, that is, from the CDNs to the applications that provide the services. On the way back to the origin, the traffic passes through SLB, which provides load balancing. This category generally accounts for the majority of the traffic. The second category is traffic that SLB serves directly, which flows straight from SLB to backend applications and accounts for only a small proportion. In both categories, SLB sits at the traffic entrance and therefore plays a key role, so SLB must be highly stable.

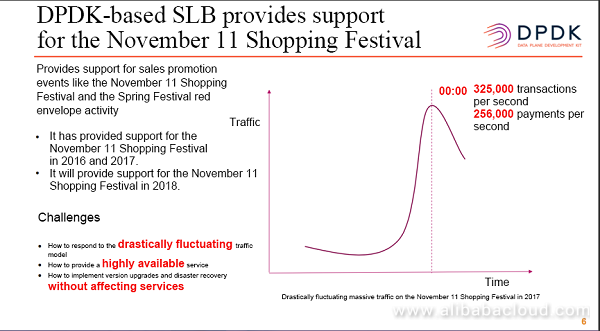

DPDK-based SLB has supported the November 11 Shopping Festival and the Spring Festival red envelope activity for two consecutive years. The preceding figure shows traffic during the November 11 Shopping Festival in 2017. Traffic spiked sharply at midnight because many flash sales began after 00:00 and flash-sale goods are limited in number. As shown in the figure, transactions during the 2017 November 11 Shopping Festival peaked at 325,000 per second, and payments peaked at 256,000 per second. This sharply fluctuating traffic pattern posed great challenges to SLB.

Built on the DPDK platform, the high-performance SLB takes advantage of DPDK's optimizations to create a lightweight and efficient data forwarding plane. The forwarding plane encounters no interrupts, preemption, system calls, or locks. CPU cache misses are reduced by aligning core data structures to cache lines. TLB misses are reduced by using hugepages. The latency of cross-NUMA memory access is reduced by making key data structures NUMA-aware. The principle of separating the control plane from the forwarding plane is also applied to the application's processing logic. The forwarding plane stays efficient and lightweight because it handles as few non-packet events as possible, while tasks such as complex management operations, scheduling, and statistics are done on the control plane.
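A quick calculation shows why hugepages reduce TLB misses. The sketch below counts how many pages, and therefore how many distinct address translations, are needed to cover a memory region. The 1 GiB table size is a hypothetical figure chosen for illustration, and the page sizes are the common x86-64 ones (4 KiB, 2 MiB, 1 GiB); neither comes from the talk.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def pages_needed(region_bytes, page_bytes):
    """Number of pages (distinct TLB translations) covering a region."""
    return -(-region_bytes // page_bytes)  # ceiling division


table_bytes = 1 * GIB  # hypothetical session-table size, for illustration only

small = pages_needed(table_bytes, 4 * KIB)
huge_2m = pages_needed(table_bytes, 2 * MIB)
huge_1g = pages_needed(table_bytes, 1 * GIB)

print(f"4 KiB pages:     {small}")    # 262144
print(f"2 MiB hugepages: {huge_2m}")  # 512
print(f"1 GiB hugepages: {huge_1g}")  # 1
```

A TLB holds a limited number of entries, so covering the same table with hundreds of translations instead of hundreds of thousands makes a miss on a random session lookup far less likely.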

In addition to the advantages of the DPDK platform and the separation of the control plane from the forwarding plane, the high-performance SLB implements a forwarding architecture that combines software and hardware. Data packets arriving at SLB from clients go through Receive Side Scaling (RSS), which distributes traffic evenly across the CPUs. Alibaba SLB uses the FNAT forwarding mode, and each CPU has its own independent local address. The source address of each data packet sent from SLB to a backend real server (RS) is therefore replaced with the local address of the CPU that handles it. As a result, when packets return from the RS and pass through Flow Director (FDIR) on the NIC, they reach the same CPU that handled the stream from the client. The mechanism and the forwarding architecture together ensure that a connection's traffic goes "to and from the same CPU". On top of this architecture, the service processing logic is instantiated on every CPU and the data structures are per-CPU, so CPUs can process data packets in parallel, which considerably improves performance. This concurrent processing architecture also makes full use of current multi-CPU servers, and performance continues to scale as CPUs are added.
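The "to and from the same CPU" property can be sketched in a few lines. This is a simulation under stated assumptions, not the SLB implementation: `zlib.crc32` stands in for the NIC's RSS hash (real NICs typically use a Toeplitz hash), the FDIR rule is modeled as a lookup on the destination address, the per-CPU local addresses are invented, and the client source port is kept unchanged for simplicity.

```python
import zlib

NUM_CPUS = 4
# Hypothetical per-CPU local addresses used as the FNAT source address.
LOCAL_ADDR = {cpu: f"10.0.0.{cpu + 1}" for cpu in range(NUM_CPUS)}
ADDR_TO_CPU = {addr: cpu for cpu, addr in LOCAL_ADDR.items()}


def rss_cpu(src_ip, src_port, dst_ip, dst_port):
    """Stand-in for NIC RSS: hash the flow tuple to pick a receive CPU."""
    key = f"{src_ip}:{src_port}->{dst_ip}:{dst_port}".encode()
    return zlib.crc32(key) % NUM_CPUS


def fdir_cpu(dst_ip):
    """Stand-in for an FDIR rule: steer by destination (local) address."""
    return ADDR_TO_CPU[dst_ip]


def forward_to_rs(client_pkt, rs_ip, rs_port):
    """FNAT on the receiving CPU: rewrite both source and destination."""
    cpu = rss_cpu(*client_pkt)
    _, src_port, _, _ = client_pkt
    out_pkt = (LOCAL_ADDR[cpu], src_port, rs_ip, rs_port)
    return cpu, out_pkt


client_pkt = ("198.51.100.23", 40112, "203.0.113.10", 443)
cpu_in, to_rs = forward_to_rs(client_pkt, "192.168.1.20", 8080)

# The RS replies to the packet's source, which is the CPU's local address.
reply = (to_rs[2], to_rs[3], to_rs[0], to_rs[1])
cpu_back = fdir_cpu(reply[2])

print(cpu_in == cpu_back)
```

Because the return path is steered by an address that only one CPU owns, the per-CPU session table never has to be shared or locked.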

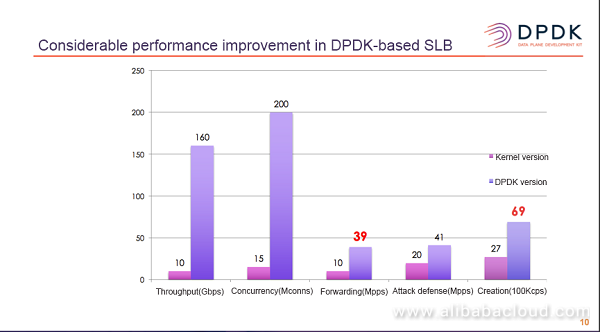

SLB combines the advantages of the DPDK platform, the design principle of separating the control plane from the forwarding plane, and a forwarding architecture based on concurrent CPU processing. Together these greatly improve SLB performance. With small 64-byte packets, the forwarding rate increased from 10 Mpps for the kernel version to 39 Mpps, and the connection setup rate increased from 2.7 Mcps for the kernel version to 6.9 Mcps. This considerable performance improvement allowed the DPDK-based SLB to handle the drastic traffic growth during the November 11 Shopping Festivals in 2016 and 2017.
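To put the figures above in proportion, the short calculation below derives the speedup ratios and the approximate wire bandwidth that 39 Mpps of small packets represents. It assumes that "64-byte packets" means minimum-size Ethernet frames, each of which occupies an extra 20 bytes on the wire (8-byte preamble and start delimiter plus a 12-byte inter-frame gap); the talk does not state the framing.

```python
kernel = {"forwarding_mpps": 10.0, "new_conn_mcps": 2.7}
dpdk = {"forwarding_mpps": 39.0, "new_conn_mcps": 6.9}

speedup = {name: dpdk[name] / kernel[name] for name in kernel}
for name, ratio in speedup.items():
    print(f"{name}: {ratio:.2f}x")

# Assumption: 64-byte Ethernet frames plus 20 bytes of preamble and gap.
FRAME_BYTES = 64
WIRE_OVERHEAD_BYTES = 20
bits_per_packet = (FRAME_BYTES + WIRE_OVERHEAD_BYTES) * 8

wire_gbps = dpdk["forwarding_mpps"] * bits_per_packet / 1000
print(f"39 Mpps at 64 bytes is about {wire_gbps:.1f} Gbit/s on the wire")
```

Under that assumption, forwarding improved by a factor of 3.9 and connection setup by roughly 2.6.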

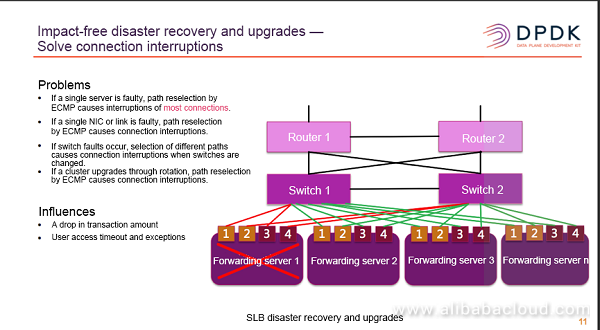

The preceding diagram shows SLB disaster recovery. SLB uses a horizontally scalable cluster deployment. The same VIP route is advertised by multiple servers, which forms ECMP routes on the switch side and enables multi-dimensional disaster recovery. However, without connection synchronization, CPU faults, memory faults, hard drive faults, and similar faults make the whole forwarding server unavailable. After the network converges, ECMP reselects paths, so most connections reach a different forwarding server than before and the existing connections are interrupted. ECMP also reselects paths, and therefore interrupts existing connections, when a single NIC fails, when a link between an NIC and a switch fails, when a switch fails, or during a rolling upgrade of SLB. Interrupting existing connections, especially long-lived ones, directly reduces transaction volume. Users may also see access timeouts or errors, which degrades the user experience.
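The effect of ECMP path reselection can be illustrated with a small simulation. It assumes the switch picks a next hop by hashing the flow and taking the result modulo the number of paths, which is a common simple scheme but not necessarily what the switches in this deployment do (some support resilient hashing). `zlib.crc32` stands in for the switch hash, and the server names and flows are invented.

```python
import zlib


def ecmp_pick(flow, servers):
    """Stand-in for switch ECMP: hash the flow, modulo the path count."""
    return servers[zlib.crc32(flow.encode()) % len(servers)]


servers_before = ["fwd-1", "fwd-2", "fwd-3", "fwd-4"]
servers_after = ["fwd-1", "fwd-2", "fwd-3"]  # fwd-4 failed or is being upgraded

flows = [
    f"198.51.100.{i % 250}:{10000 + i}->203.0.113.10:443" for i in range(20000)
]

moved = sum(
    1
    for flow in flows
    if ecmp_pick(flow, servers_before) != ecmp_pick(flow, servers_after)
)
moved_from_healthy = sum(
    1
    for flow in flows
    if ecmp_pick(flow, servers_before) != "fwd-4"
    and ecmp_pick(flow, servers_before) != ecmp_pick(flow, servers_after)
)

print(f"flows that changed server: {moved / len(flows):.0%}")
print(f"moved although their server was healthy: {moved_from_healthy / len(flows):.0%}")
```

With a uniform hash and modulo selection, going from four paths to three is expected to move about three quarters of all flows, including many whose original server never failed. Those are the connections that break unless the new server already holds their session state.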

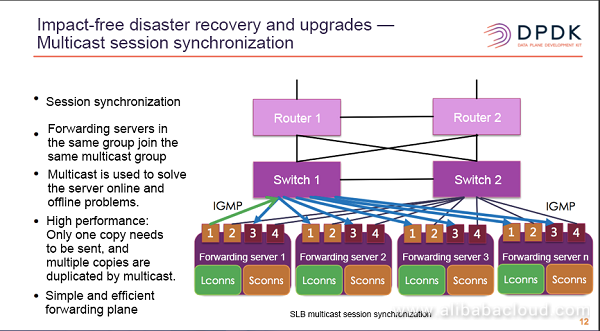

SLB uses a session synchronization mechanism to solve the problem of long-lived connections being interrupted during upgrades and disaster recovery. Servers in the same group (for example, the four forwarding servers shown in the preceding figure) join the same multicast group. When an SLB application starts, it joins the corresponding multicast group. When a server goes offline or restarts, its membership times out and it is automatically removed from the multicast group. Multicast therefore simplifies the handling of servers coming online and going offline in the session synchronization mechanism. Multicast also means that a server sends only one copy of each synchronization packet instead of one copy per peer. The switches replicate the packets in hardware, which offloads the copying from SLB and keeps its forwarding plane simple, efficient, and high-performance.
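The group behavior described above can be modeled in memory. The sketch below is a simplified stand-in for switch-maintained multicast membership, not real multicast networking: the `MulticastGroup` class, the 30-second timeout, and the server names are hypothetical.

```python
class MulticastGroup:
    """In-memory stand-in for a switch-maintained multicast group."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}  # member -> time of last join or refresh

    def join(self, member, now):
        self.last_seen[member] = now  # joining and refreshing look the same

    def expire(self, now):
        """Drop members that have not refreshed within the timeout."""
        stale = [m for m, t in self.last_seen.items() if now - t > self.timeout_s]
        for member in stale:
            del self.last_seen[member]
        return stale

    def send(self, sender, payload):
        """The sender emits one copy; the 'switch' replicates it to the others."""
        deliveries = {m: payload for m in self.last_seen if m != sender}
        return 1, deliveries  # (copies sent by the sender, copies delivered)


group = MulticastGroup(timeout_s=30)
for server in ("fwd-1", "fwd-2", "fwd-3", "fwd-4"):
    group.join(server, now=0)

copies_sent, delivered = group.send("fwd-1", b"session-update")
print(copies_sent, sorted(delivered))  # 1 ['fwd-2', 'fwd-3', 'fwd-4']

# fwd-4 goes offline and stops refreshing; the others refresh at t=20.
for server in ("fwd-1", "fwd-2", "fwd-3"):
    group.join(server, now=20)
print(group.expire(now=40))  # ['fwd-4']
```

The sender never tracks who its peers are. Membership and replication both live outside the forwarding plane, which is what keeps the synchronization path cheap.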

Session synchronization distinguishes two types of sessions: local sessions (Lconns) and synchronized sessions (Sconns). Only local sessions are synchronized to the other servers, and the servers that receive a synchronization request update the timeout and other information of the corresponding Sconns. When a disaster recovery switchover occurs, an Sconn on a forwarding server is automatically converted to an Lconn once it has forwarded data packets.
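The Lconn and Sconn life cycle can be sketched as a small state model. This is an illustration of the rules stated above, not the actual SLB code: the `Forwarder` and `Session` classes, the 90-second timeout, and the choice never to downgrade an existing Lconn on receiving a synchronization message are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    kind: str          # "L" for a local session (Lconn), "S" for an Sconn
    expires_at: float


@dataclass
class Forwarder:
    name: str
    sessions: dict = field(default_factory=dict)  # flow -> Session

    def on_client_packet(self, flow, now, ttl=90.0):
        """Forward a packet; a hit on an Sconn promotes it to an Lconn."""
        sess = self.sessions.get(flow)
        if sess is None:
            sess = self.sessions[flow] = Session("L", now + ttl)
        elif sess.kind == "S":
            sess.kind = "L"  # this server now owns the connection
        sess.expires_at = now + ttl
        return sess

    def sync_messages(self):
        """Only local sessions are advertised to peers."""
        return [
            (flow, s.expires_at) for flow, s in self.sessions.items() if s.kind == "L"
        ]

    def on_sync(self, flow, expires_at):
        """Create or refresh an Sconn (assumption: never downgrade an Lconn)."""
        sess = self.sessions.get(flow)
        if sess is None:
            self.sessions[flow] = Session("S", expires_at)
        elif sess.kind == "S":
            sess.expires_at = expires_at


a, b = Forwarder("fwd-1"), Forwarder("fwd-2")
flow = ("198.51.100.23", 40112, "203.0.113.10", 443)

a.on_client_packet(flow, now=0.0)
for synced_flow, expires_at in a.sync_messages():
    b.on_sync(synced_flow, expires_at)
print(b.sessions[flow].kind)  # S

# fwd-1 fails; ECMP now delivers the flow to fwd-2, which already knows it.
b.on_client_packet(flow, now=10.0)
print(b.sessions[flow].kind)  # L
```

Advertising only Lconns keeps a session from being echoed back and forth between peers, and promoting an Sconn on its first forwarded packet lets the new owner take over advertising it.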
