By Cui Xingcan

This article describes stream processing with Apache Flink from three aspects: parallel processing and programming paradigms, an overview of the DataStream API with a simple application, and state and time in Flink.

Parallel computing, or the "divide-and-conquer" method, is an effective solution for computing-intensive or data-intensive tasks that require a significant amount of computation. The key to this method is to divide an existing task properly, or to allocate computing resources properly.

Let's take an example. At school, teachers sometimes ask students to help mark examination papers. Suppose there are three questions, A, B, and C. The work can then be divided in two different ways:

Dividing the work according to the questions in the paper is known as computing parallelism, while dividing the papers themselves is known as data parallelism.
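The two kinds of division can be sketched in plain Python. This is only an illustration under stated assumptions: the paper data, the `mark` function, and the use of a thread pool are all made up for the example and are not taken from the article or from Flink.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical data: each paper maps a question name to the points earned.
papers = [
    {"A": 3, "B": 5, "C": 2},
    {"A": 4, "B": 1, "C": 5},
    {"A": 2, "B": 2, "C": 2},
    {"A": 5, "B": 4, "C": 3},
]
QUESTIONS = ["A", "B", "C"]


def mark(paper, question):
    """Stand-in for the real marking work on one question of one paper."""
    return paper[question]


def by_question(papers):
    """Computing parallelism: each worker handles one question on every paper."""
    def worker(question):
        return [mark(p, question) for p in papers]

    with ThreadPoolExecutor(max_workers=len(QUESTIONS)) as pool:
        columns = list(pool.map(worker, QUESTIONS))
    # columns[i][j] is the score for question i on paper j.
    return [sum(scores) for scores in zip(*columns)]


def by_paper(papers, workers=2):
    """Data parallelism: the pile of papers is split among the workers,
    and each worker marks every question on the papers it receives."""
    def worker(paper):
        return sum(mark(paper, q) for q in QUESTIONS)

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(worker, papers))


totals_by_question = by_question(papers)
totals_by_paper = by_paper(papers)
print(totals_by_question, totals_by_paper)
```

Both divisions produce the same per-paper totals; they differ only in how the work is cut up.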

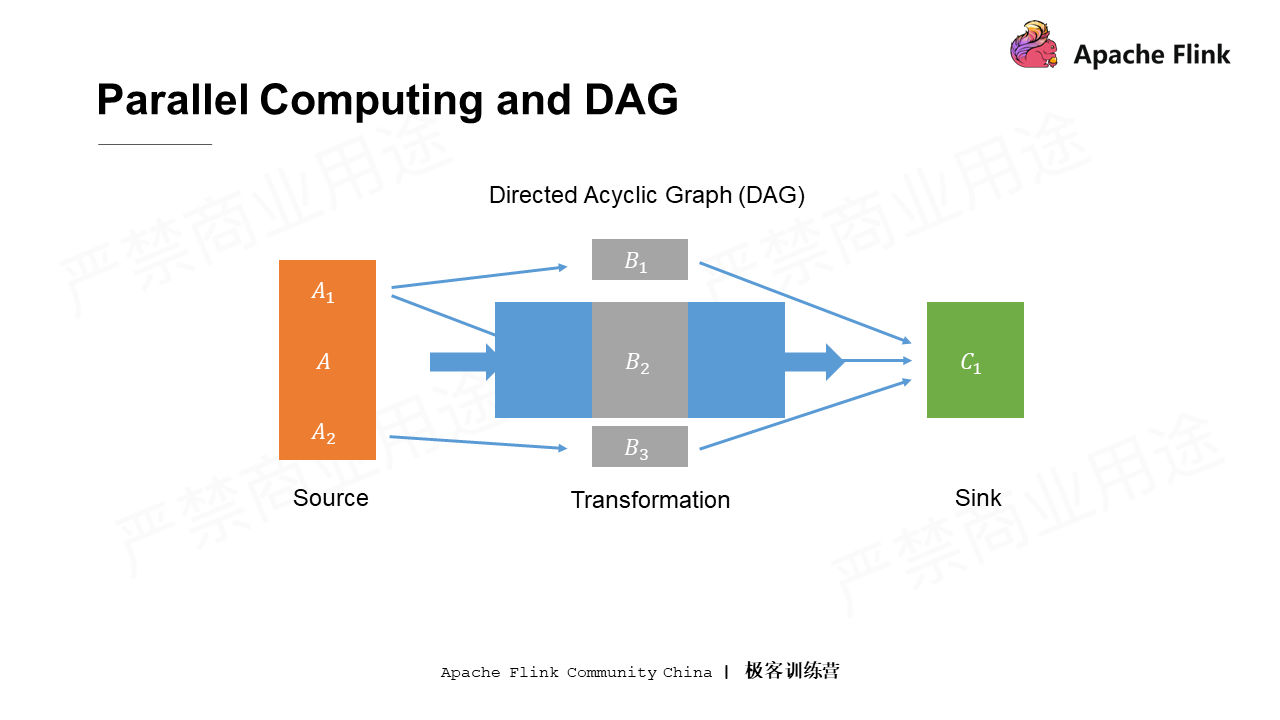

Acyclic graph for parallelism

In the figure, suppose that the students who review question A also take on an additional task, such as carrying the papers from the teacher's office to the place where they are reviewed. The students responsible for question C also have an additional task, such as counting and recording the total scores after all the students finish reviewing. Accordingly, all nodes in the figure fall into three categories. The first type is responsible for acquiring data (fetching the papers). The second type consists of data processing nodes, which do not need to deal with external systems most of the time. The last type is responsible for writing the result of the entire computation to an external system (counting and recording the total scores). These three types are called the source node, the transformation node, and the sink node, respectively. In this directed acyclic graph (DAG), nodes represent computations, and the lines between nodes represent the dependencies between computations.

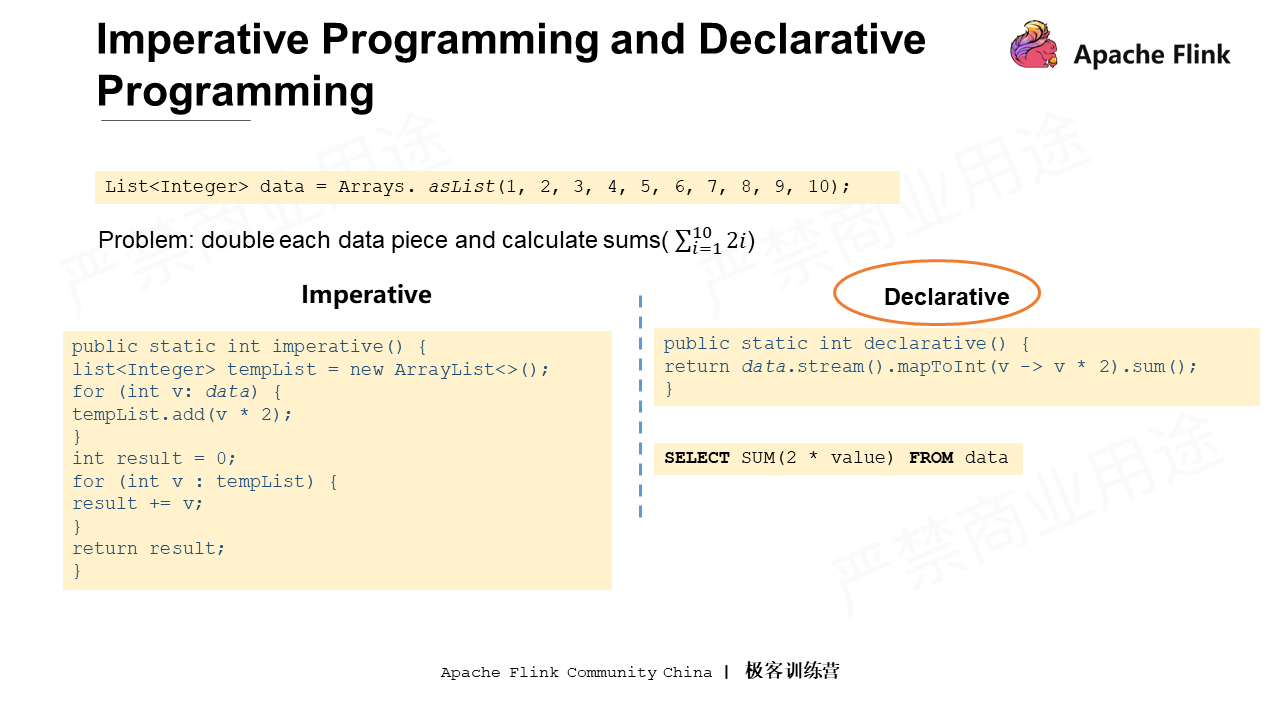

Assume that there is a dataset containing the numbers from 1 to 10. How can each number be multiplied by 2 and a cumulative sum be performed (as shown in the preceding figure)? There are many ways to do this, but from a programming perspective they fall into two styles: imperative programming and declarative programming.

Imperative programming is a step-by-step process that tells the machine how to generate data structures, how to store temporary intermediate results with these data structures, and how to convert these intermediate results into final results.

Declarative programming, on the other hand, tells the machine what tasks to complete, instead of delivering as many details as imperative programming does. For example, convert the source dataset to a Stream, then convert it to an Int-type Stream. In this process, multiply each number by 2 and lastly call the sum method to obtain the sum of all numbers.
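A minimal sketch of the two styles for this exact task follows. The text above describes the declarative version in terms of a Java-style Stream; here Python's built-in `map` and `sum` play the same role, which is a substitution made for this example.

```python
numbers = range(1, 11)  # the dataset 1..10

# Imperative: spell out the loop, the intermediate value, and the accumulator.
total_imperative = 0
for n in numbers:
    doubled = n * 2
    total_imperative += doubled

# Declarative: state what is wanted (double every number, then sum them).
total_declarative = sum(map(lambda n: n * 2, numbers))

print(total_imperative, total_declarative)  # prints "110 110"
```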

Code written in a declarative style is more concise, and conciseness is just what a computing engine is looking for. Therefore, all Flink APIs for writing jobs tend to be declarative.

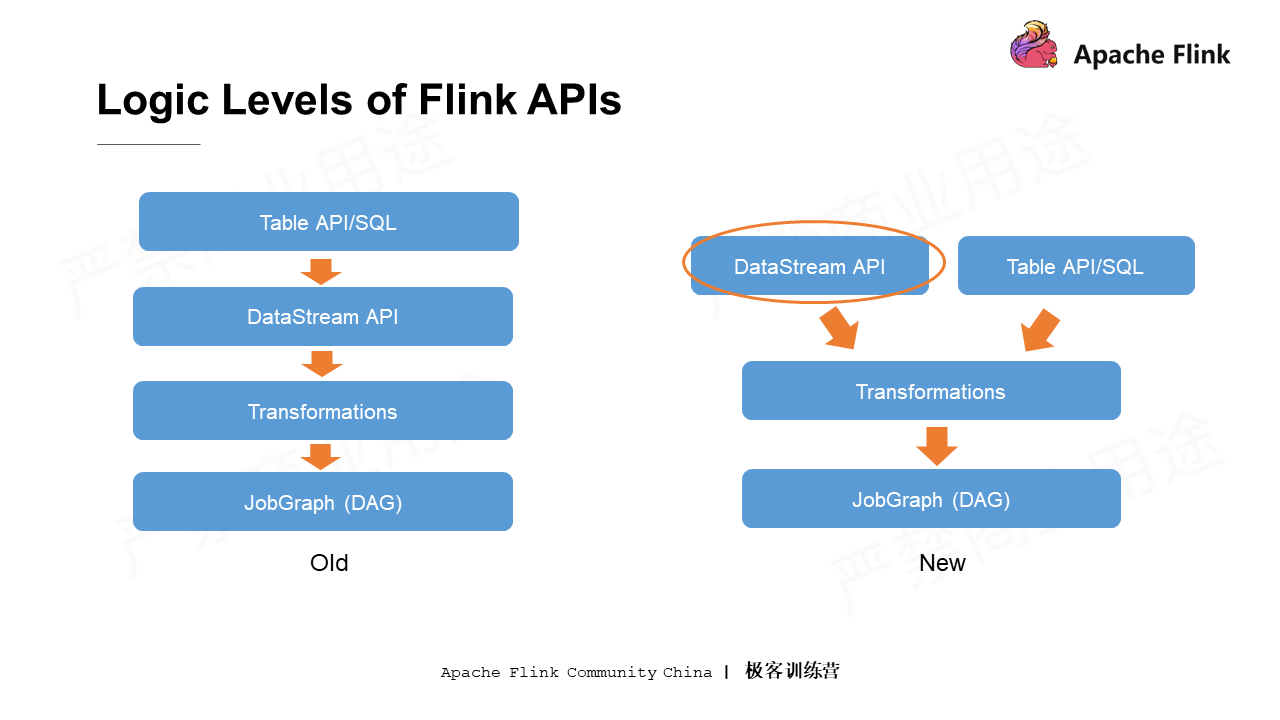

Before going into the details of the DataStream API, let's take a look at the logical layer of the Flink API.

The left column above shows the four-layer API logic in earlier versions of Flink. At the top layer, logic can be written using the higher-level, more declarative Table API and SQL. Everything written in SQL and the Table API is translated and optimized into a program implemented through the DataStream API. One layer down, a DataStream API program is represented as a series of transformations, which are finally translated into a JobGraph (the DAG described above).

However, later Flink versions bring some changes, mainly at the Table API and SQL layer. Programs at this layer are no longer translated into a DataStream API program, but go directly to the underlying transformation layer. In other words, the Table API layer and the DataStream API layer now sit side by side instead of one above the other. This shorter translation path also benefits query optimization.

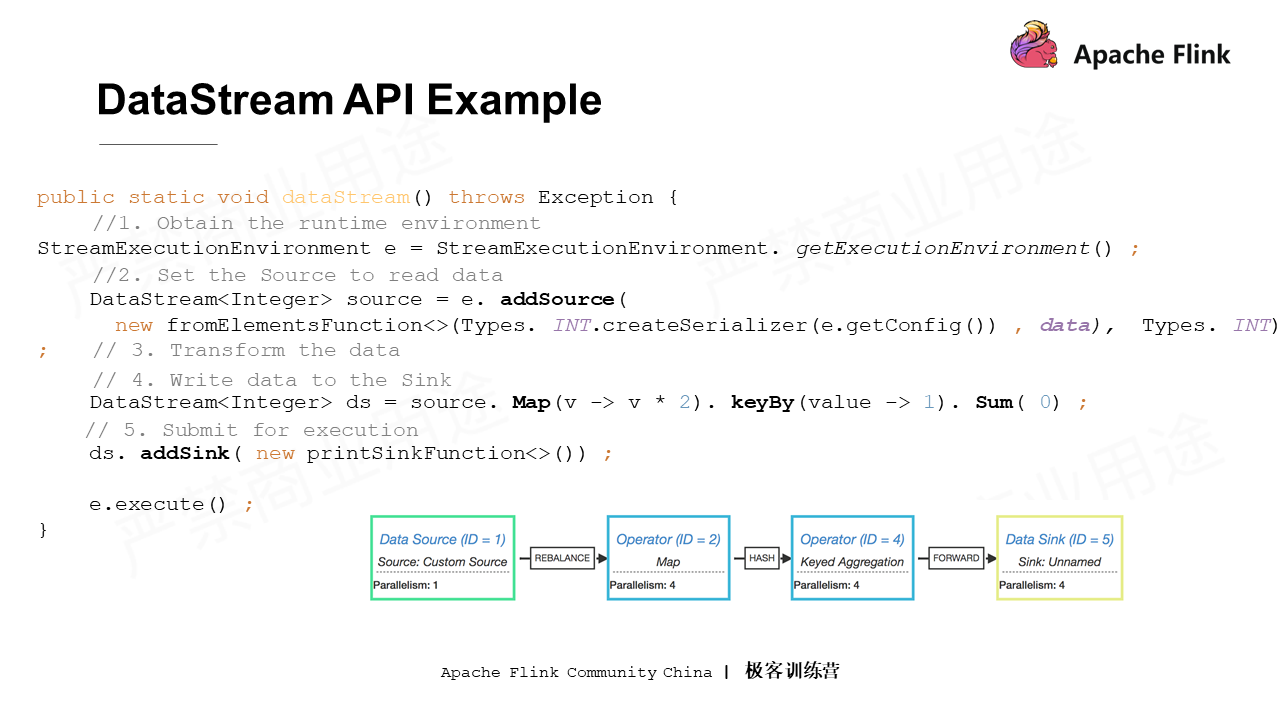

Next, let's take a simple DataStream API program as an example for an overview of the DataStream API. The task is again the cumulative sum described above.

The basic code expressed in Flink is shown in the above figure. It seems a little more complicated than the standalone example. Let's look at it step by step.

As shown in the figure, each number is multiplied by 2, and the data must then be grouped using keyBy before it can be summed. The constant key used here means that all data is collected into a single group and then accumulated on the first field to produce the final result. The result cannot simply be output as in a standalone program. Instead, a Sink node must be added to the logic to write all the data to the target location. After all the preceding work, the execute method of the Environment is called to submit the whole logic to a remote or local cluster for execution.

Compared with a standalone program, the first few steps of a Flink DataStream API program do not trigger any data computing; they are more a process of drawing a DAG. Once the DAG of the entire logic is complete, the whole graph is submitted to the cluster for execution by the execute method.
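The "draw first, run later" behavior can be modeled in a few lines of plain Python. The `Environment` and `Stream` classes below are hypothetical stand-ins written for this sketch, not the Flink API, and unlike a real streaming job they process a finite list and emit only the final sum.

```python
class Stream:
    """A node in a tiny, hypothetical dataflow graph (not the Flink API)."""

    def __init__(self, env, op, fn=None, parent=None):
        self.env, self.op, self.fn, self.parent = env, op, fn, parent

    def map(self, fn):
        # Only records the step and returns a new node; nothing runs yet.
        return Stream(self.env, "map", fn, self)

    def reduce(self, fn):
        return Stream(self.env, "reduce", fn, self)

    def add_sink(self, sink):
        self.env.sinks.append((self, sink))


class Environment:
    def __init__(self):
        self.sinks = []

    def from_collection(self, data):
        return Stream(self, "source", lambda: list(data))

    def execute(self):
        # Walk each sink's chain back to its source, then run it forwards.
        for stream, sink in self.sinks:
            chain, node = [], stream
            while node is not None:
                chain.append(node)
                node = node.parent
            chain.reverse()
            data = chain[0].fn()
            for node in chain[1:]:
                if node.op == "map":
                    data = [node.fn(x) for x in data]
                elif node.op == "reduce":  # assumes non-empty input
                    acc = data[0]
                    for x in data[1:]:
                        acc = node.fn(acc, x)
                    data = [acc]
            for x in data:
                sink(x)


calls, results = [], []


def double(x):
    calls.append(x)  # lets us observe when the function actually runs
    return x * 2


env = Environment()
env.from_collection(range(1, 11)).map(double).reduce(lambda a, b: a + b).add_sink(results.append)
calls_before_execute = len(calls)  # still 0: the graph has only been drawn
env.execute()
print(calls_before_execute, results)  # prints "0 [110]"
```

The `double` function is not called until `execute` runs, which mirrors the point that building the program only describes the graph.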

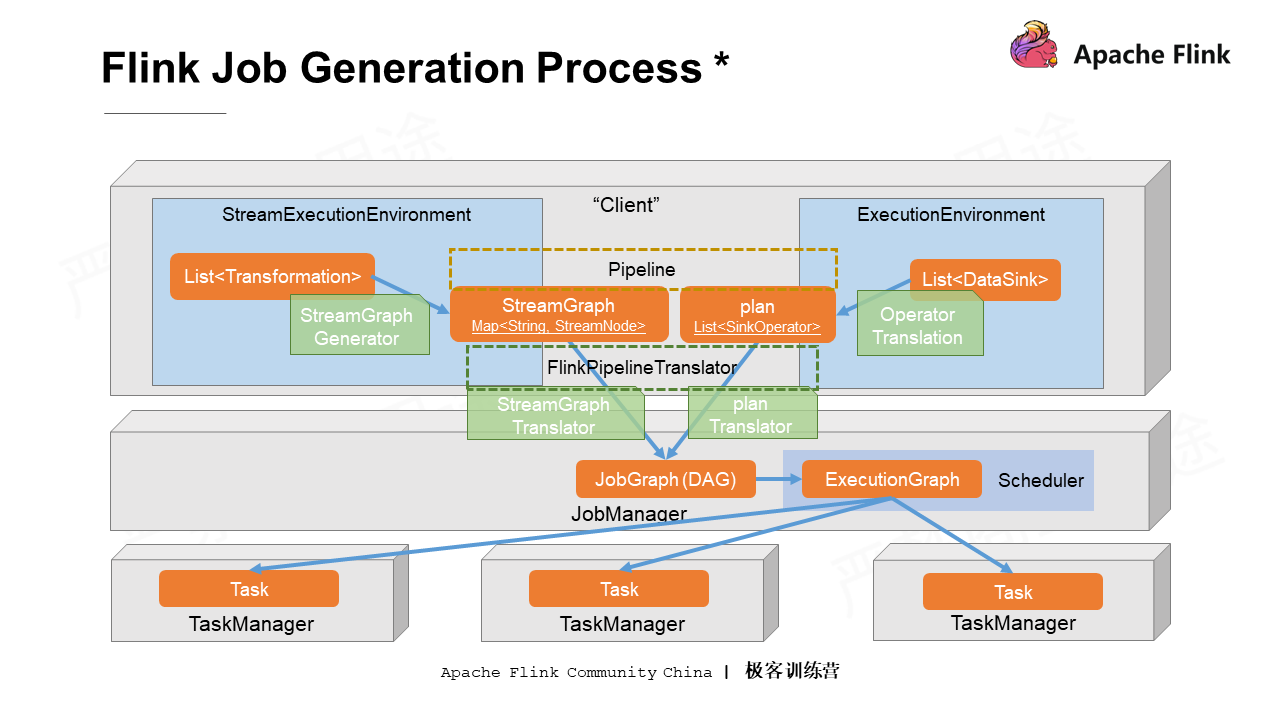

So far, the Flink DataStream API has been related to the DAG. In fact, the actual generation of a Flink job is much more complicated than described above: the program is converted and optimized step by step. The following figure shows how Flink jobs are generated.

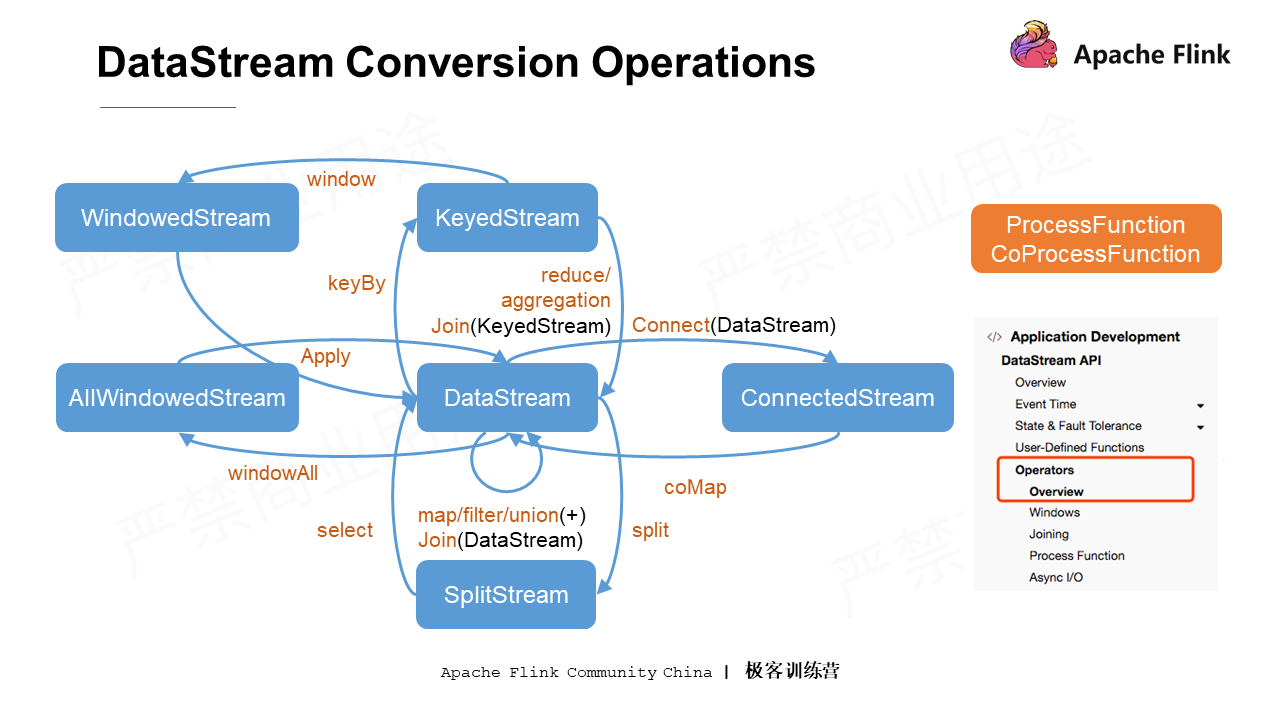

As shown in the sample code above, each DataStream object produces a new conversion when the corresponding method is called. Correspondingly, a new operator is generated at the bottom layer and added to the DAG of the existing logic. This is equivalent to adding a line that points from the last node of the existing DAG to the new one. Each of these API calls returns a new object, on which further conversion methods can be called. In this way, the DAG is drawn step by step as a chain.

The above explanation involves some ideas from higher-order functions. Each time a transformation is called on a DataStream, a function must be passed to it as a parameter. In other words, the transformation determines what operation to perform on the data, while the function passed to the operator determines how the transformation is carried out.

In the preceding figure, in addition to the APIs listed on the left, there are also two important functions in Flink DataStream API known as the ProcessFunction and the CoProcessFunction. These two functions are provided to users as the underlying processing logic. Theoretically, all the conversions in blue on the left in the preceding figure can be completed by using the underlying ProcessFunction and CoProcessFunction.

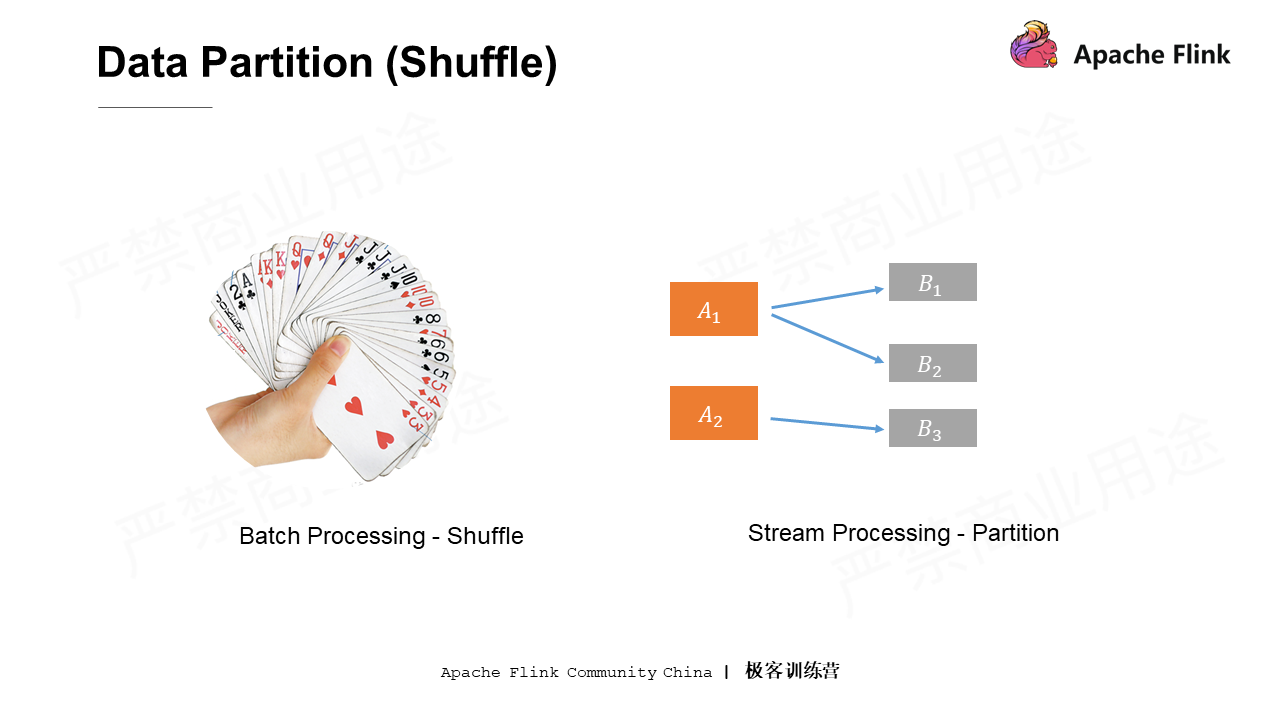

Data partitioning corresponds to the Shuffle operation in traditional batch processing. If the data is thought of as a hand of playing cards, the Shuffle operation in traditional batch processing is like sorting the cards. Normally, cards with the same number are put together, so that the cards to be played can be taken out at once. Shuffle is a traditional batch processing method. Since all the data in stream processing is dynamic, the grouping or partitioning of data is also done online.

As shown on the right of the preceding figure, the upstream consists of two processing instances of operator A (A1 and A2), and the downstream consists of three processing instances of operator B (B1, B2, and B3). The stream processing counterpart of the Shuffle operation is called data partitioning or data routing. It determines which processing instance of operator B a result is sent to after the data is processed by A.

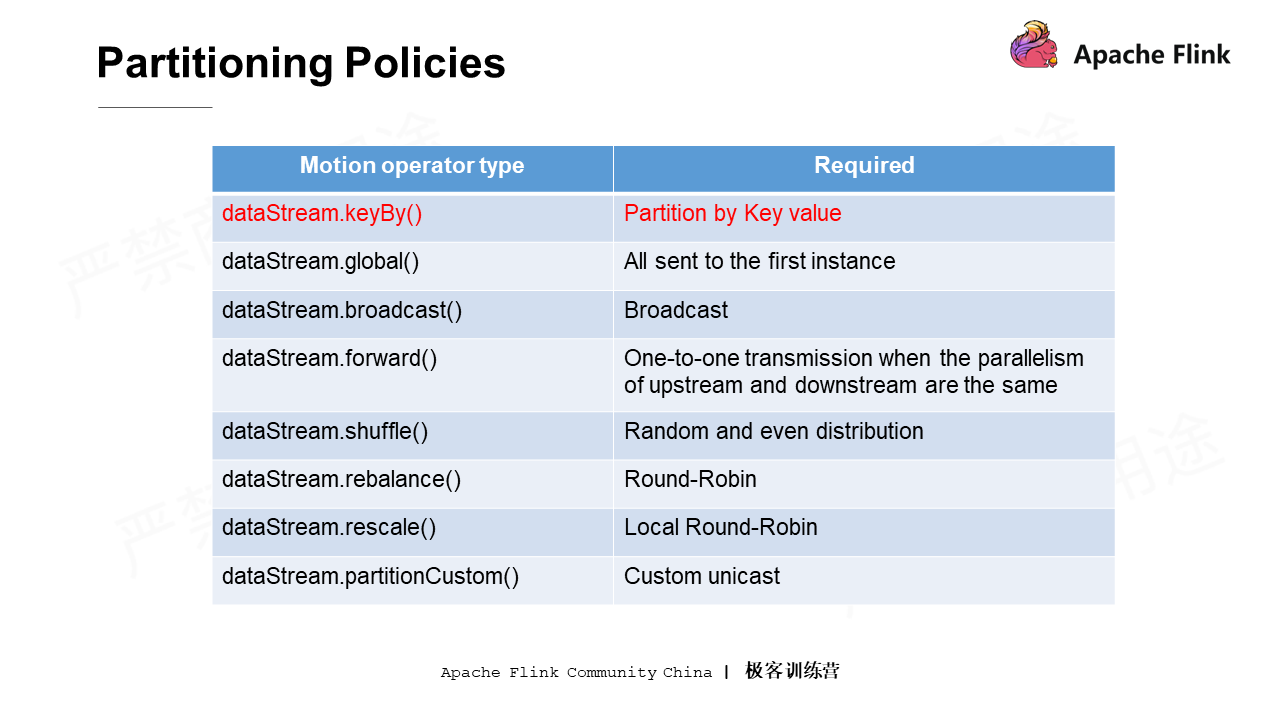

The following figure shows the partitioning policies provided by Flink. Note that a DataStream can partition all of its data by a key value after the keyBy method is called. Strictly speaking, however, keyBy is not an underlying physical partitioning strategy but a conversion operation, because from the API perspective keyBy converts the DataStream into the KeyedStream type, which supports different operations.

Among all these partitioning policies, Rescale may be slightly difficult to understand. Rescale takes the locality of upstream and downstream data into account. It is similar to the traditional Rebalance method, that is, Round-Robin distribution in turns. The difference is that Rescale attempts to avoid transmitting data across the network.

If none of the preceding partitioning policies is applicable, partitionCustom can be called to define a custom partitioning. Note that it is only a custom unicast: each record can be sent to exactly one downstream instance. There is no way to copy a record and send it to multiple downstream instances.
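To illustrate what a partitioning policy decides, here is a plain-Python sketch of three routing rules: key-based, round-robin, and a custom one. Everything in it is an assumption made for illustration: the function names, the sample records, and in particular the CRC32 hash, which is only a stand-in and not Flink's actual key routing scheme. Rescale's locality awareness is not modeled.

```python
import zlib
from itertools import count


def key_partition(key, num_downstream):
    """keyBy-style routing: the same key always goes to the same instance."""
    # crc32 is used because Python's built-in hash() of str varies per process.
    return zlib.crc32(str(key).encode("utf-8")) % num_downstream


def make_round_robin(num_downstream):
    """Rebalance-style routing: take the downstream instances in turns."""
    counter = count()
    return lambda _record: next(counter) % num_downstream


def custom_partition(record, num_downstream):
    """A made-up custom rule (route by record length). It is still unicast:
    exactly one downstream index is returned per record."""
    return len(record) % num_downstream


def route(records, partitioner, num_downstream):
    """Send each record to one of the downstream instances B1..Bn."""
    instances = [[] for _ in range(num_downstream)]
    for record in records:
        instances[partitioner(record)].append(record)
    return instances


records = ["apple", "pear", "apple", "fig", "pear", "apple"]
N = 3  # three downstream instances, as with B1, B2, B3

keyed = route(records, lambda r: key_partition(r, N), N)
balanced = route(records, make_round_robin(N), N)
custom = route(records, lambda r: custom_partition(r, N), N)

print(keyed)
print(balanced)
print(custom)
```

With key-based routing, all copies of the same record value land in one instance; with round-robin, the load is even but equal values are scattered.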

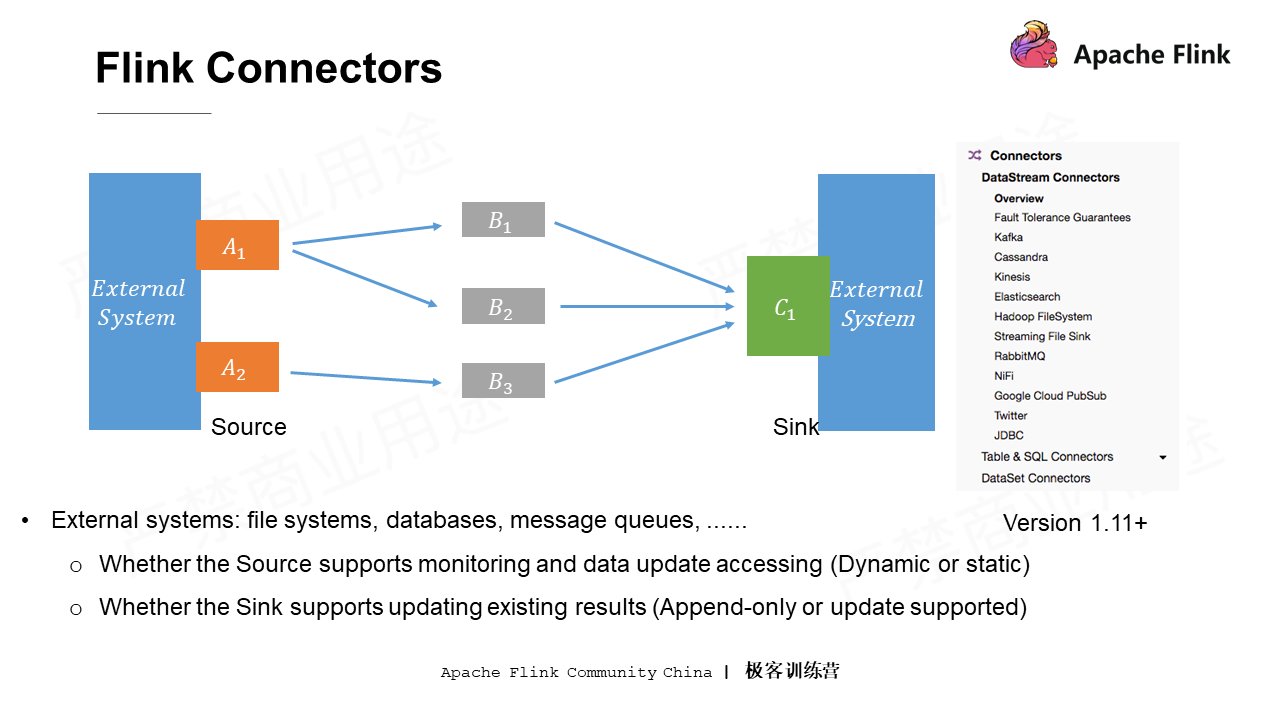

As shown in the parallel computing and DAG figure at the beginning, there are two key nodes: node A, the Source node, which connects to external systems and reads data from them into the Flink processing cluster; and node C, the Sink node, which summarizes the processing results and writes them to an external system. The external system can be either a file system or a database.

The computational logic in Flink still works with no data output. That is, it still makes sense even when the final data is not written to an external system. Flink has a concept of State, so the results of intermediate computations can be exposed to external systems through the State, and a dedicated Sink is not strictly required. However, every Flink application has a Source. That is, data must be read in from somewhere before any subsequent processing.

Here is something to be noted about the Source and Sink:

The above two notes determine whether the connector is for static data or dynamic data.

Note that the preceding figure applies to versions later than Flink 1.11, in which the Flink connectors were substantially refactored. In addition, connectors at the Table API and SQL layer take on more tasks than connectors at the DataStream layer. These tasks include the pushdown of some predicates or projection operations, which can help improve the overall performance of data processing.

A deep understanding of the DataStream API requires mastering how Flink defines state and time.

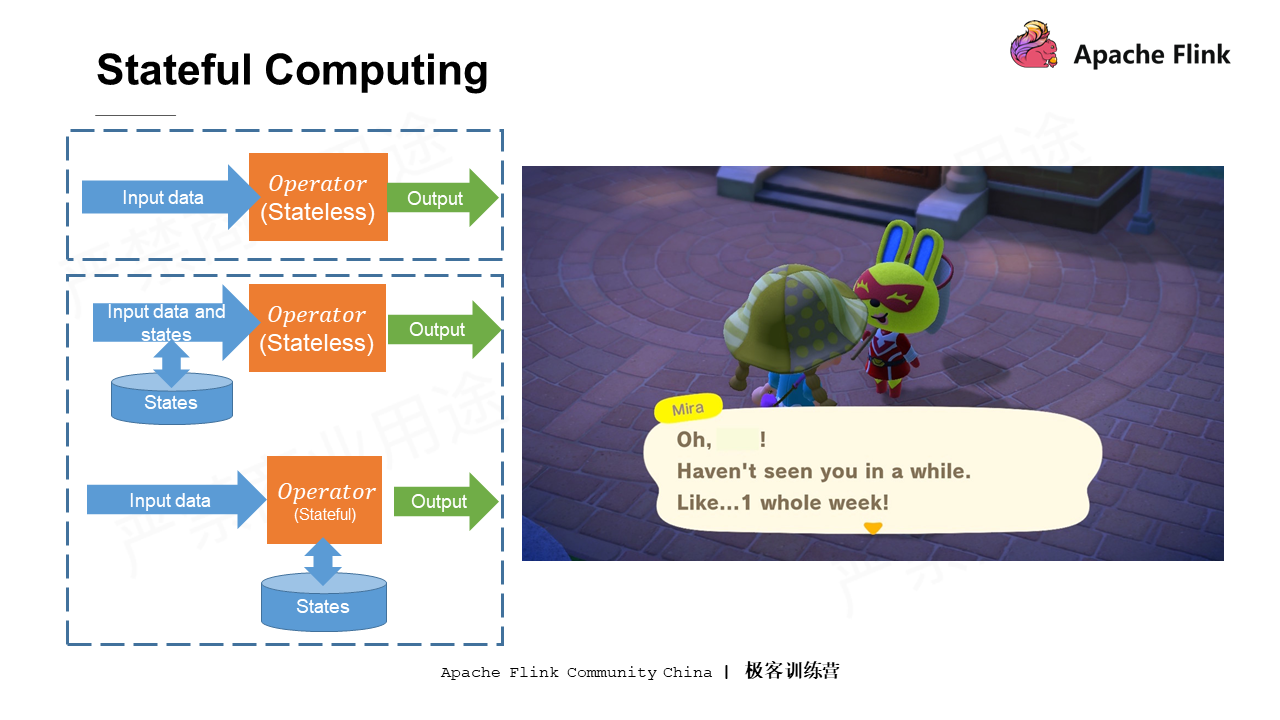

All computations can be roughly divided into stateless computation and stateful computation. Stateless computation is the easier of the two. Assume that there is an addition operator. Every time a group of data comes in, it adds all the values and outputs the result, much like a pure function. The result of a pure function depends only on its input data and is not affected by previous operations or external state.

This section focuses on stateful computation in Flink. Take a game about picking up branches as an example. The game is good at recording a lot of state on its own. If a player talks to an NPC after being offline for a few days, the NPC will say that the player has not been online for a long time. In other words, the game system records the time of the previous login as state, which affects the dialogue between the player and the NPC.

To implement such stateful computation, the previous states need to be recorded and injected into a new computation. There are two specific implementation methods:

The computing engine should be more and more intelligent like the game mentioned above. It is supposed to learn the potential patterns in the data automatically, and then optimize the computational logic adaptively to maintain high processing performance.

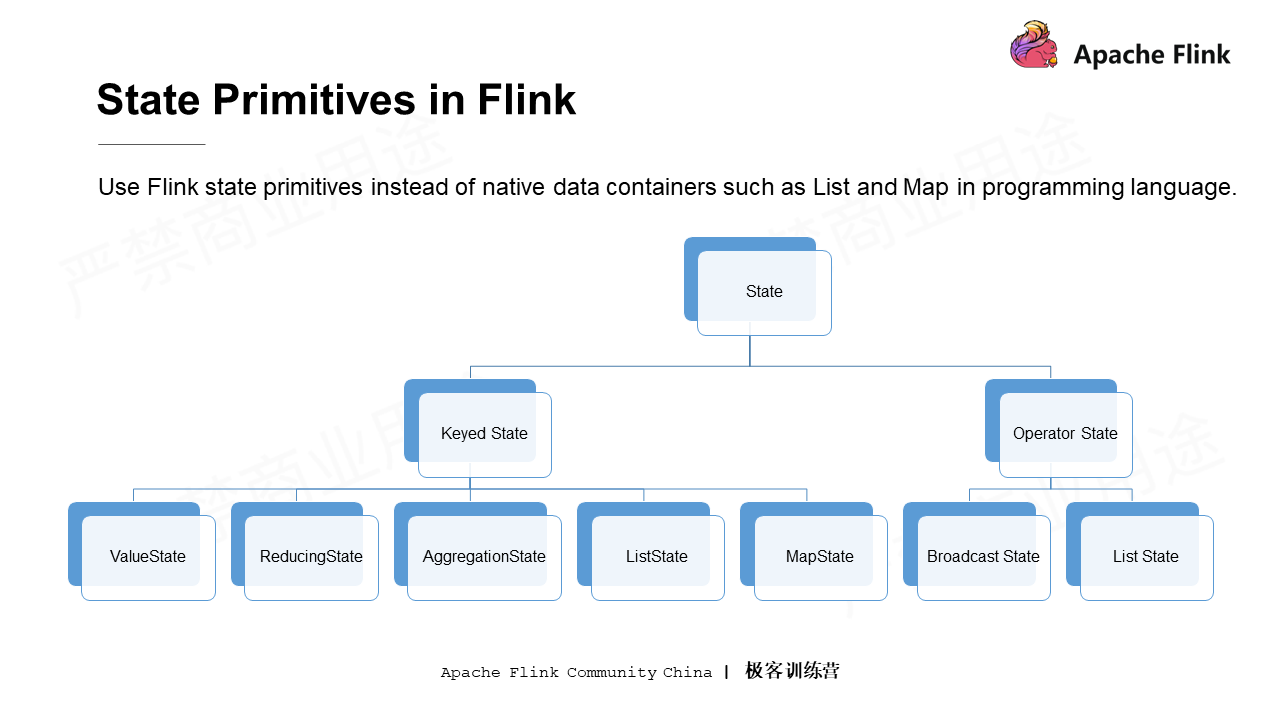

Flink's state primitives determine how Flink state is used in code. The basic idea is to replace the data containers provided by native languages such as Java or Scala with the state primitives in Flink.

As a system with good support for states, Flink provides a variety of optional state primitives internally. All state primitives can be roughly divided into Keyed State and Operator State. Operator State is not introduced here due to its rare use. Instead, the Keyed State will be the focus next.

Keyed State is partitioned state. Its advantage is that the existing state can be divided into blocks according to the partitions defined by the logic. The computations and the state within a block are bound together, while the computations and the state reads and writes for different key values are isolated from each other. Therefore, for each key value, only its own computing logic and state need to be managed, not those of other key values.
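The isolation between keys can be sketched with an ordinary dictionary. This is a plain-Python model of the idea only; the `KeyedRunningSum` class and the sample events are hypothetical and say nothing about how Flink stores state internally.

```python
from collections import defaultdict


class KeyedRunningSum:
    """Keeps one independent running sum per key (a stand-in for keyed state)."""

    def __init__(self):
        # key -> state; processing one key never touches another key's entry.
        self._state = defaultdict(int)

    def process(self, key, value):
        self._state[key] += value
        return key, self._state[key]


op = KeyedRunningSum()
events = [("a", 1), ("b", 10), ("a", 2), ("b", 20), ("a", 3)]
outputs = [op.process(k, v) for k, v in events]
print(outputs)
# [('a', 1), ('b', 10), ('a', 3), ('b', 30), ('a', 6)]
```

Each output depends only on earlier events with the same key, which is why the logic for one key can be reasoned about without considering the others.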

Keyed State can be further divided into the following five types:

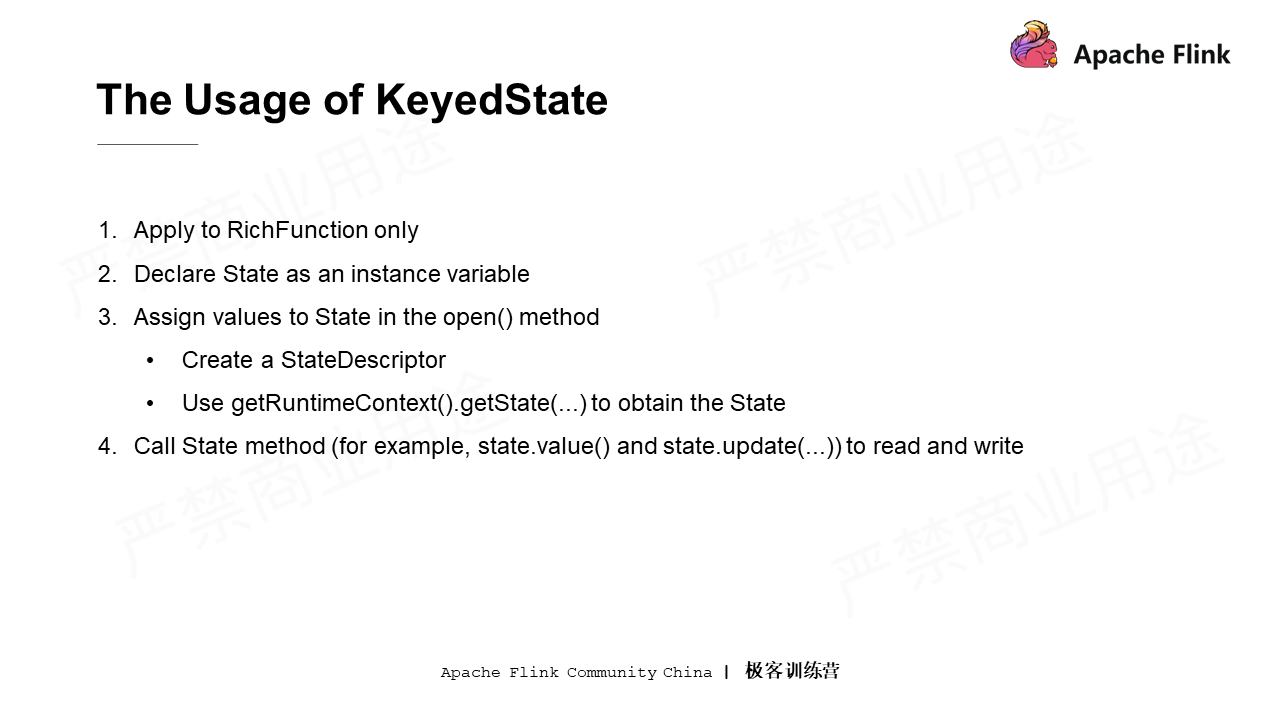

Keyed State can only be used in a RichFunction. The biggest difference between a RichFunction and an ordinary Function is that a RichFunction has its own lifecycle. Using Keyed State involves the following four steps:

Reminder: If this is the first time that the streaming application runs, the obtained State is empty. If the application is restarted midway, the State is restored based on the configuration and the previously saved data.

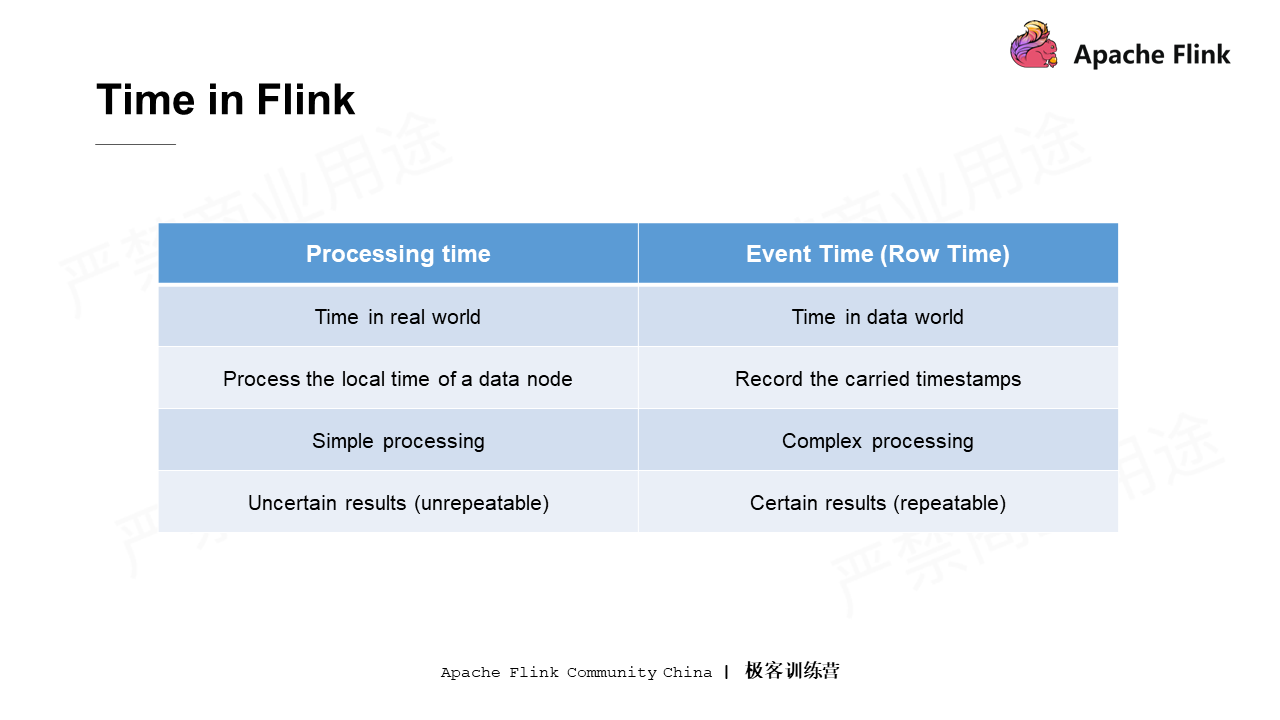

Time is also very important in Flink. Time and State complement each other. Generally, the Flink engine provides two types of time: Processing Time and Event Time. Processing Time is the real (wall-clock) time, while Event Time is the time contained in the data. Fields such as timestamps are often included when data is generated, because in many cases the data needs to be processed according to the timestamps it carries.

Processing Time is relatively simple to use, since it does not have to deal with problems such as out-of-order data, whereas Event Time is more complex. Because Processing Time is read directly from the system clock, the result of each run may differ, given the nondeterminism of multiple threads or distributed systems. In contrast, the Event Time timestamp is written into each data record. Therefore, even if a record is reprocessed multiple times, the timestamp it carries does not change. As long as the processing logic does not change, the final result stays the same.
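The reproducibility of Event Time can be shown with a small plain-Python sketch. Counting records per fixed-size time bucket is used here only as an example of time-dependent logic; the function name, the bucket size, and the sample records are assumptions made for this illustration.

```python
from collections import Counter


def count_per_event_time_window(records, size):
    """Count records per time bucket, using the timestamp inside each record."""
    counts = Counter()
    for timestamp, _payload in records:
        window_start = timestamp - timestamp % size
        counts[window_start] += 1
    return dict(counts)


# The same seven records, once in generation order and once as they might
# arrive after network delays (the arrival order is made up).
in_order = [(1, "a"), (2, "b"), (3, "c"), (4, "d"), (5, "e"), (6, "f"), (7, "g")]
shuffled = [(2, "b"), (1, "a"), (4, "d"), (3, "c"), (7, "g"), (5, "e"), (6, "f")]

result_in_order = count_per_event_time_window(in_order, size=3)
result_shuffled = count_per_event_time_window(shuffled, size=3)
print(result_in_order == result_shuffled)  # prints "True"
```

Because the bucket is derived from the timestamp in the record rather than from the clock at arrival, replaying the data in a different order yields the same counts.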

The differences between Processing Time and Event Time are as follows.

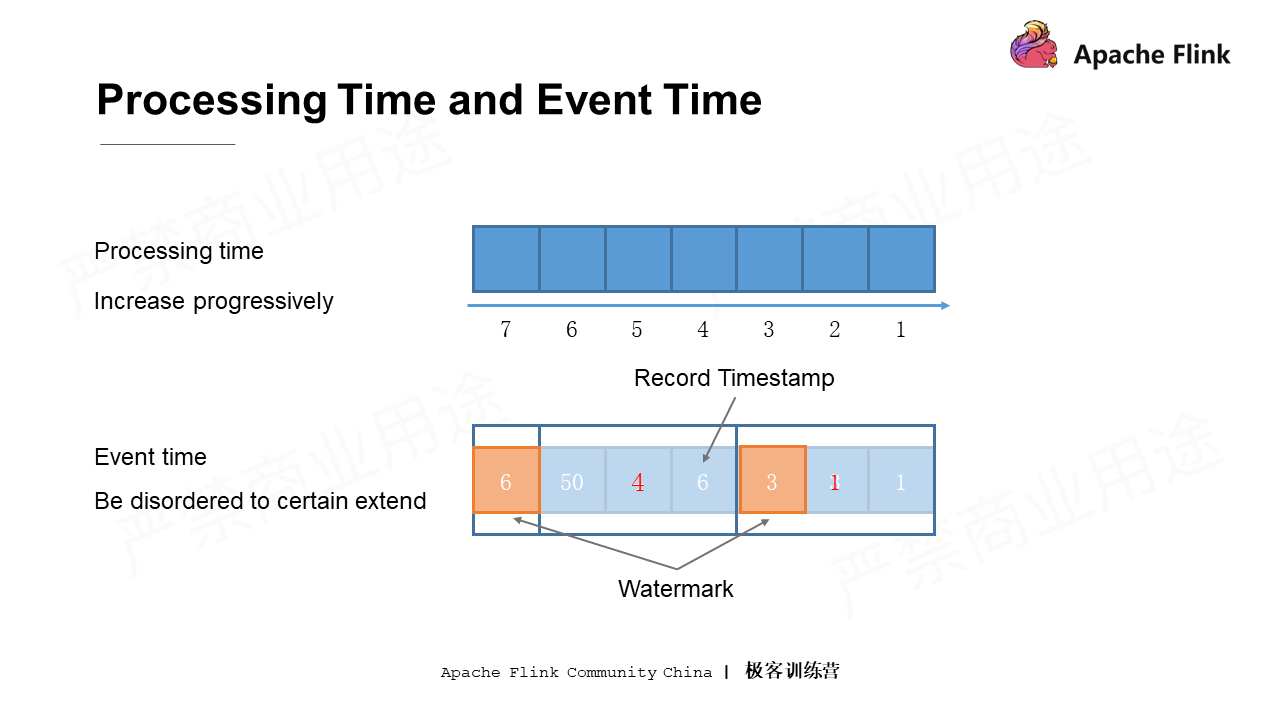

Take the data sorted by timestamps from 1 to 7 in the preceding figure as an example. The time of each machine increases monotonically. In this case, the time obtained by Processing Time is perfectly sorted by time in an ascending order. For Event Time, due to delay or distribution, the data is received in a different order than that in which data is actually generated. Therefore, data may be out of order. In this case, the timestamp contained in the data should be fully used to divide the data into coarse-grained partitions. For example, data can be divided into three groups: the minimum time for the first group is 1, 4 for the second group, and 7 for the third group. After this process, the data is arranged in ascending order between groups.

How can the disorder be reduced so that the data is basically in order when it enters the system?

One solution is to insert metadata called a Watermark into the data stream. In the preceding figure, once the first three records have been received and no more data with a timestamp less than or equal to 3 is expected, Watermark 3 is inserted into the stream. When the system sees Watermark 3, it knows that no more data with a timestamp less than or equal to 3 will arrive. It can then execute its own processing logic safely.
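The effect of a Watermark on out-of-order data can be sketched as a small buffer that only releases records once a Watermark has passed them. This is a plain-Python model, not Flink code: the `Watermark` class, the `reorder` function, and the arrival order of the sample stream are all made up for the example.

```python
import heapq


class Watermark:
    """Marker meaning: no record with a timestamp <= `timestamp` will follow."""

    def __init__(self, timestamp):
        self.timestamp = timestamp


def reorder(stream):
    """Yield records in timestamp order, releasing them only when a
    Watermark says that no earlier record can still arrive."""
    buffer = []  # min-heap ordered by timestamp
    for item in stream:
        if isinstance(item, Watermark):
            while buffer and buffer[0] <= item.timestamp:
                yield heapq.heappop(buffer)
        else:
            heapq.heappush(buffer, item)


# Records are bare timestamps here, arriving slightly out of order.
stream = [1, 3, 2, Watermark(3), 6, 5, 4, Watermark(6), 7, Watermark(7)]
ordered = list(reorder(stream))
print(ordered)  # [1, 2, 3, 4, 5, 6, 7]
```

In this sketch, records that are never followed by a covering Watermark stay in the buffer, which shows why the stream must keep producing Watermarks for results to be emitted.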

In summary, the data in Processing time is in a strict ascending order, while Event time may be disordered to some extent, which needs to be resolved by using Watermark.

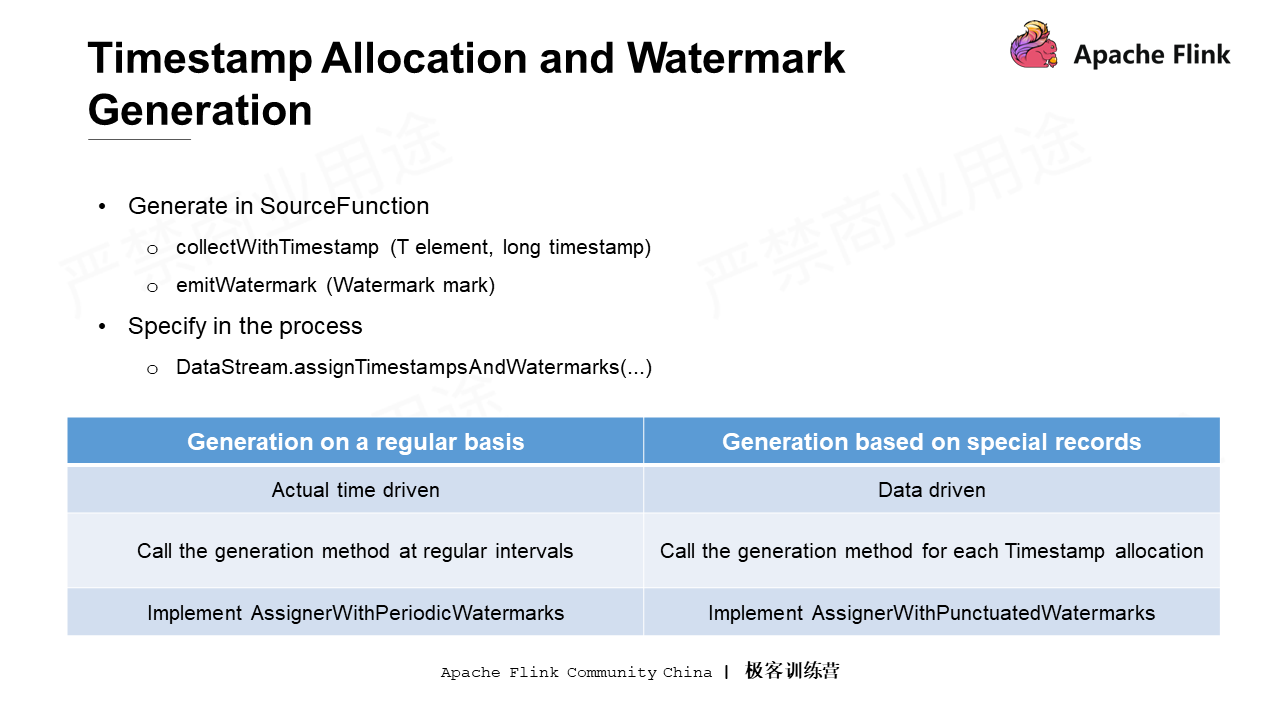

From the perspective of APIs, there are two ways to allocate a Timestamp or generate a Watermark:

The first one: Inside a SourceFunction, call the provided collectWithTimestamp method to emit data together with its timestamp, or call the emitWatermark method to generate a Watermark and insert it into the data stream.

The second one: Outside the SourceFunction, call the DataStream.assignTimestampsAndWatermarks method and pass in one of two types of Watermark generators:

The first type is generated regularly. It is equivalent to configuring a value in the environment to decide, for example, how often (referring to the actual time) the system automatically calls a Watermark generation policy.

The second type is generated based on special records. For some special data, timestamps and Watermarks can be assigned through the AssignerWithPunctuatedWatermarks interface.

Reminder: Flink has some common built-in assigners, namely Watermark Assigners. One example uses a fixed delay: the timestamp of the data minus the fixed delay is taken as the Watermark. The interfaces for Timestamp assignment and Watermark generation may change in later versions. Note that the two types of generators above have been unified in newer versions of Flink.
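A fixed-delay generator of the regularly generated kind can be sketched as follows. This is a plain-Python model with hypothetical names (`BoundedDelayWatermarks`, `on_event`, `current_watermark`), not a Flink interface. Tracking the largest timestamp seen so far, so that the Watermark never moves backwards, is an assumption of this sketch, and "every third record" merely stands in for a real-time interval.

```python
class BoundedDelayWatermarks:
    """Watermark = largest timestamp seen so far minus a fixed delay."""

    def __init__(self, max_delay):
        self.max_delay = max_delay
        self.max_timestamp = None

    def on_event(self, timestamp):
        # Remember the largest timestamp; late records do not lower it.
        if self.max_timestamp is None or timestamp > self.max_timestamp:
            self.max_timestamp = timestamp

    def current_watermark(self):
        # Would be called periodically by the (hypothetical) runtime.
        if self.max_timestamp is None:
            return None
        return self.max_timestamp - self.max_delay


gen = BoundedDelayWatermarks(max_delay=2)
emitted = []
for i, ts in enumerate([1, 3, 2, 6, 5, 4, 7], start=1):
    gen.on_event(ts)
    if i % 3 == 0:  # stand-in for "every so often" in real time
        emitted.append(gen.current_watermark())
print(emitted)  # [1, 4]
```

A larger delay tolerates more disorder but makes results wait longer; a smaller delay does the opposite.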

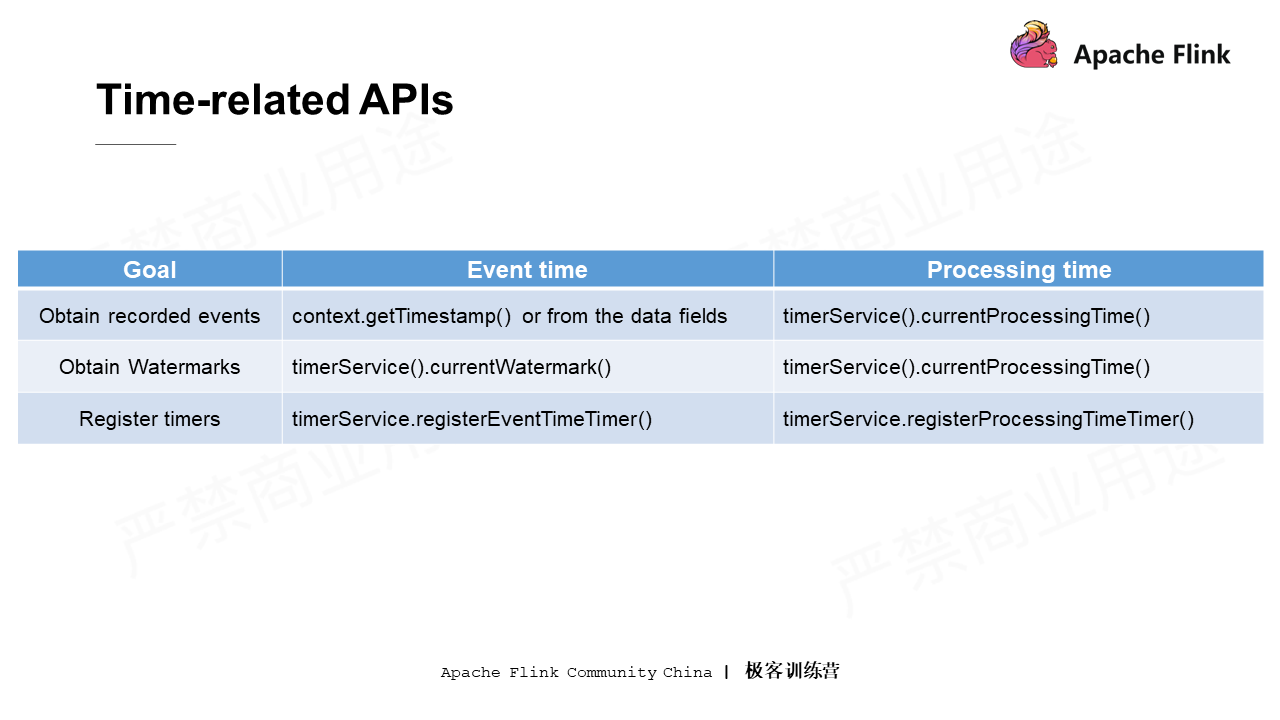

Flink provides time-based APIs for writing application logic. The following figure shows the APIs corresponding to Event Time and Processing Time.

With the application logic, the following three things could be done through interfaces:

That is all for the introduction to Stream Processing with Apache Flink. The next article will focus on the Flink Runtime Architecture.

