Stable Diffusion is an open source text-to-image diffusion model that is capable of generating images based on text prompts. You can use EAS to deploy a Stable Diffusion model as an AI-powered web application, and use the deployed application to perform model inference and generate images.

This article describes how to use an image to deploy the Stable Diffusion model as a web application in EAS, perform model inference in the web UI, and generate images from text prompts.

If you encounter any issues during model deployment and inference, see the FAQ section.

Before you begin, make sure that the following prerequisites are met:

• EAS is activated and the default workspace is created. For more information, see Activate PAI and create the default workspace.

• If you use a RAM user to deploy the model, make sure that the RAM user is granted the management permissions on EAS. For more information, see Grant the permissions that are required to use EAS.

The following section describes how to deploy the Stable Diffusion model as an AI-powered web application.

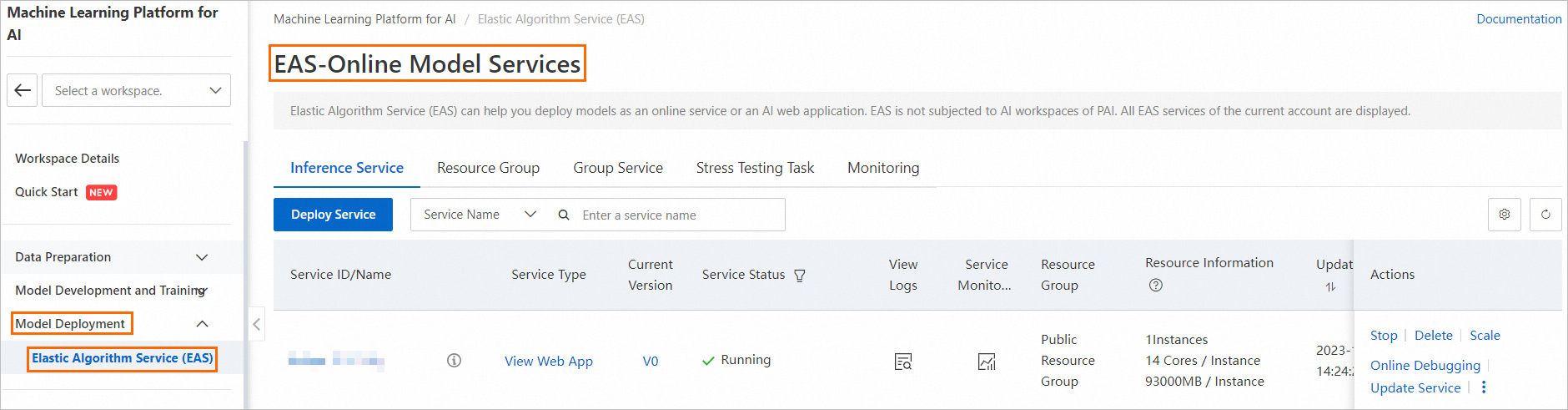

1. Go to the EAS-Online Model Services page.

a) Log on to the Platform for AI (PAI) console.

b) In the left-side navigation pane, click Workspaces. On the Workspaces page, click the name of the workspace to which the model service that you want to manage belongs.

c) In the left-side navigation pane, choose Model Deployment > Elastic Algorithm Service (EAS) to go to the EAS-Online Model Services page.

2. On the EAS-Online Model Services page, click Deploy Service.

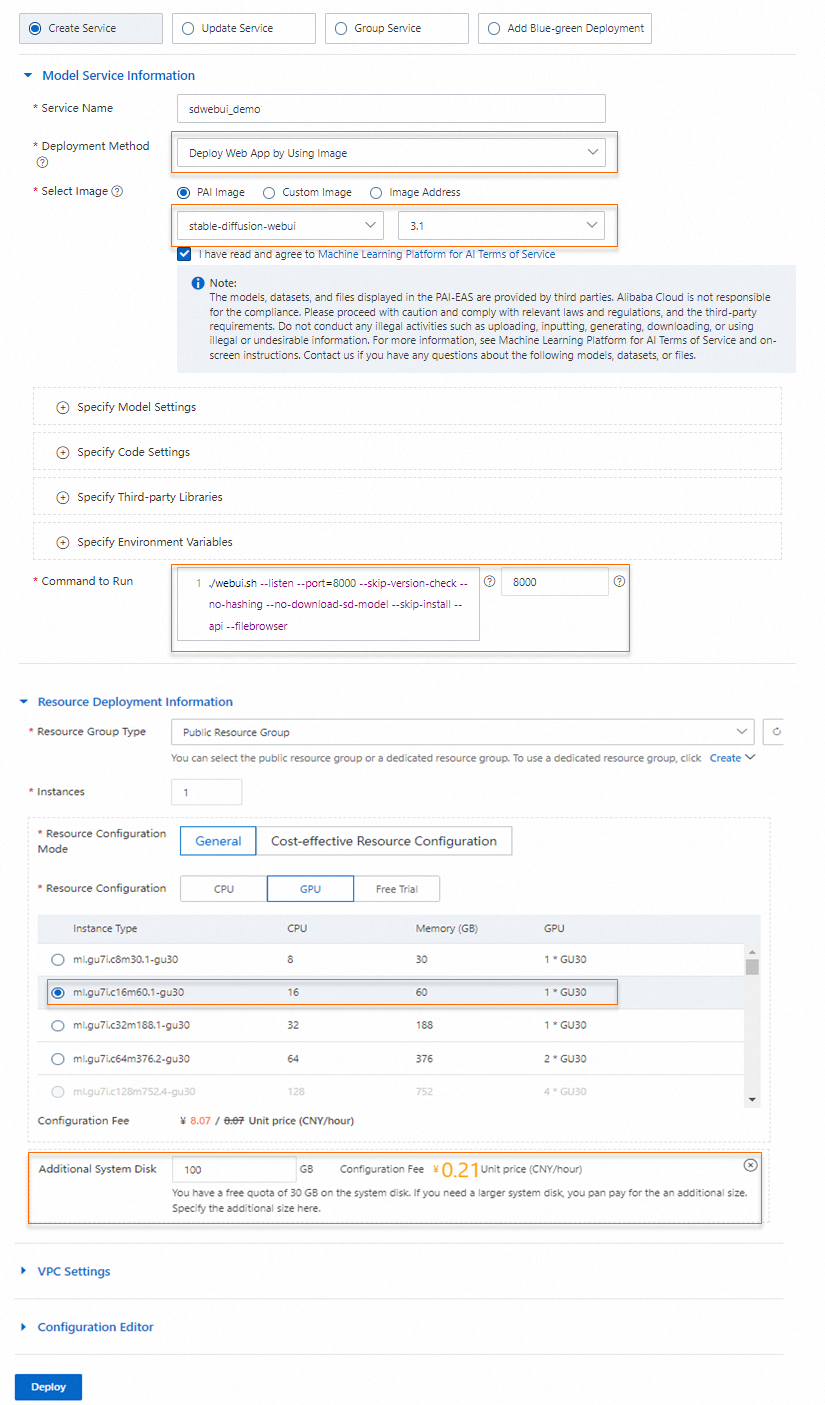

3. On the Deploy Service page, configure the following parameters.

| Parameter | Description |

| Service Name | The name of the service. In this topic, sdwebui_demo is used as an example. |

| Deployment Method | Select Deploy Web App by Using Image |

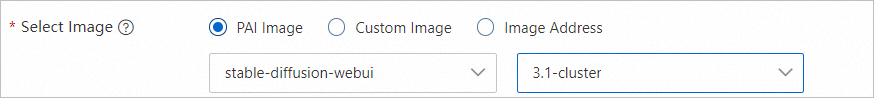

| Select Image | Select PAI Image. Then, select stable-diffusion-webui from the image drop-down list and 3.1 from the image version drop-down list. Note: You can select the latest version of the image when you deploy the model service. |

| Resource Group Type | Select Public Resource Group. |

| Resource Configuration Mode | Select General. |

| Resource Configuration | Select an Instance Type on the GPU tab. In terms of cost-effectiveness, we recommend that you use the ml.gu7i.c16m60.1-gu30 instance type. |

After the image is configured, the system automatically configures parameters such as Command to Run and Additional System Disk. You can use the default settings.

4. Click Deploy. The deployment takes several seconds to complete.

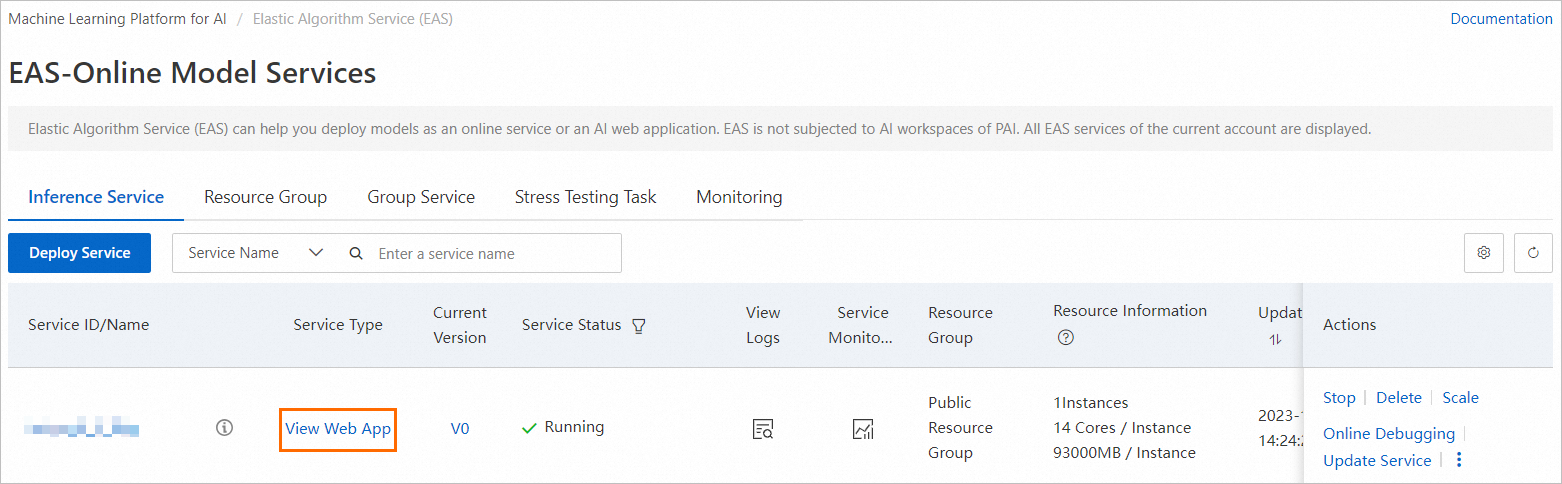

When the Service Status changes to Running, the service is deployed.

1. Find the service that you want to manage and click View Web App in the Service Type column.

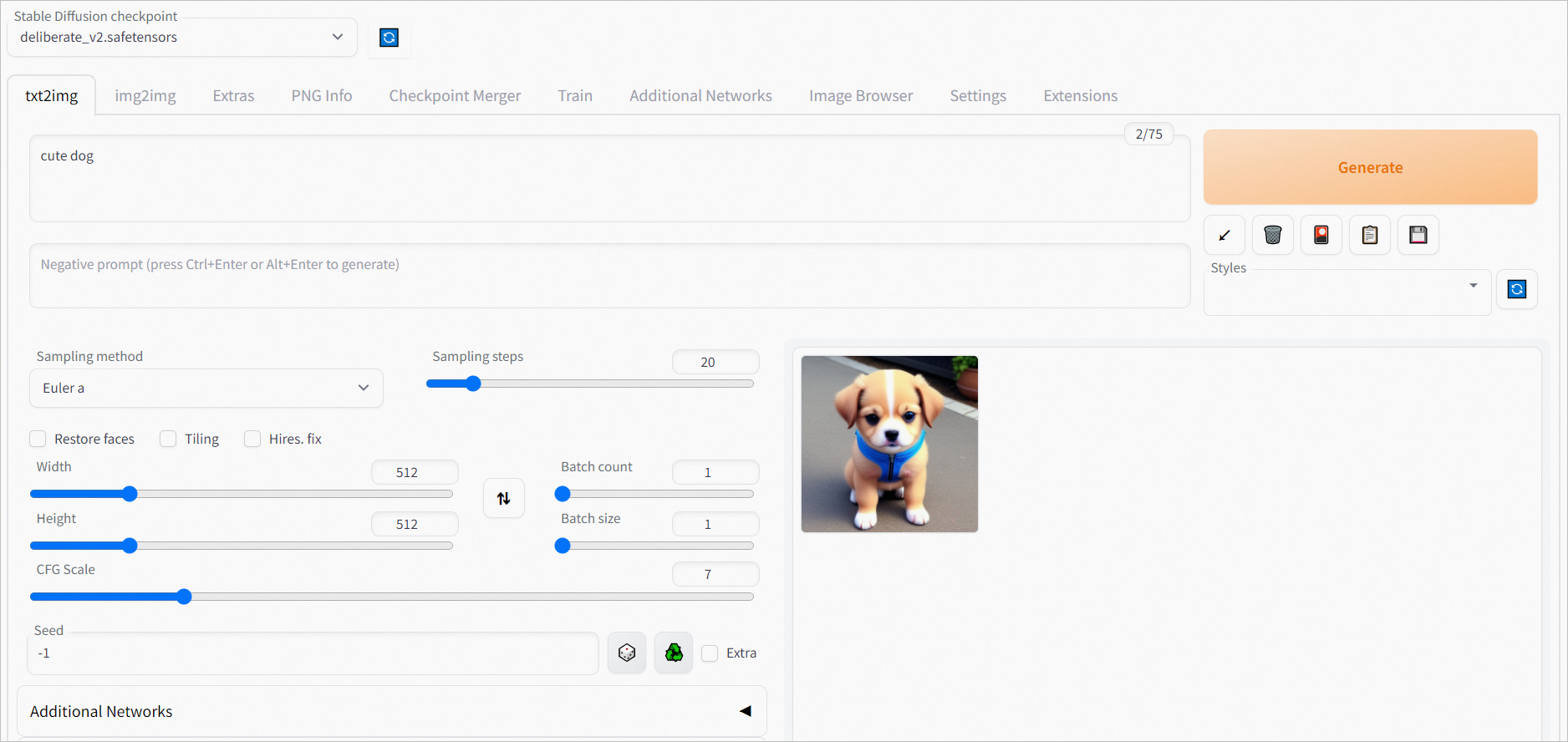

2. Perform model inference on the web UI page.

In the Prompt section of the txt2img tab, enter your input and click Generate.

This example uses cute dog as a sample input in the Prompt section. The following figure shows the inference results.

You can enable Blade or xFormers to accelerate the model service.

The following section describes the benefits of using Blade and xFormers.

• Blade is an acceleration tool provided by PAI. It can speed up the model service by up to 3.06 times. The actual acceleration depends on the image size and the number of iteration steps. Enable Blade for higher performance and lower latency.

• xFormers is an open-source acceleration library that Stable Diffusion web UI supports. It can be used to accelerate a wide range of models.
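To make the "up to 3.06 times" figure concrete, the following Python sketch converts a speedup factor into an expected per-image latency. The baseline latency of 6.12 seconds is a made-up number for illustration, not a measurement, and 3.06 is the best case quoted for Blade, so real results would typically fall somewhere between the baseline and the best case.

```python
def accelerated_latency(baseline_seconds: float, speedup: float) -> float:
    """Latency after applying a speedup factor (a speedup of 1.0 means no change)."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_seconds / speedup


# Hypothetical baseline: 6.12 s per image without acceleration.
baseline = 6.12
# 3.06 is the upper bound quoted for Blade; actual gains may be lower.
best_case = accelerated_latency(baseline, 3.06)
print(f"best case: {best_case:.2f} s per image")
```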

To enable Blade or xFormers, perform the following steps.

• Blade

a) Click Update Service in the Actions column of the service.

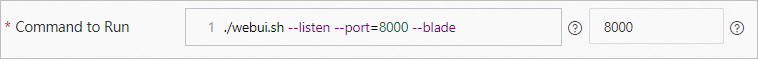

b) On the Deploy Service page, enter the ./webui.sh --listen --port=8000 --blade command in the Command to Run field.

c) Click Deploy.

• xFormers

a) Click Update Service in the Actions column of the service.

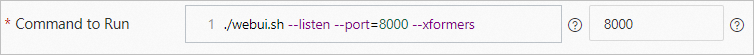

b) On the Deploy Service page, enter the ./webui.sh --listen --port=8000 --xformers command in the Command to Run field.

c) Click Deploy.

If you want to use your own model or OSS bucket, mount your files as described in the following steps. Typical scenarios include using a model that you downloaded from the open source community, using a model that you trained yourself, such as a Low-Rank Adaptation (LoRA) or Stable Diffusion model, in the SD web UI, saving output data to a directory in your own Object Storage Service (OSS) bucket, and configuring third-party library files or installing plug-ins.

1. Log on to the OSS console. Create a bucket and an empty directory.

Example: oss://bucket-test/data-oss/, where bucket-test is the name of the OSS bucket and data-oss is the empty directory in the bucket. For more information about how to create a bucket, see Create buckets. For more information about how to create an empty directory, see Manage directories.

2. Click Update Service in the Actions column of the service.

3. In the Model Service Information section, set the following parameters.

| Parameter | Description |

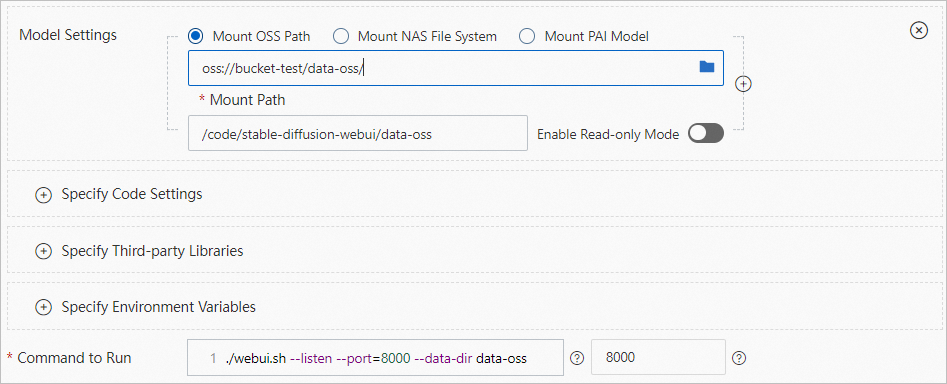

| Model Settings | Click Specify Model Settings to configure the model. - In the Model Settings section, select Mount OSS Path. In the Mount Path field, specify the OSS bucket path that you created in Step 1. Example: oss://bucket-test/data-oss/.- Mount Path: Mount the OSS file directory to the /code/stable-diffusion-webui path of the image. Example: /code/stable-diffusion-webui/data-oss.- Enable Read-only Mode: turn off the read-only mode. |

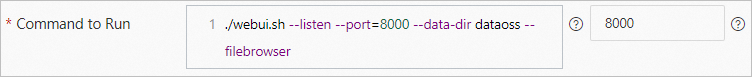

| Command to Run | Add the --data-dir mount directory in the Command to Run field, where mount directory must be the same as the last-level directory of the Mount Path in the Model Settings section. Example: ./webui.sh --listen --port=8000 --data-dir data-oss. |

4. Click Deploy to update the model service.

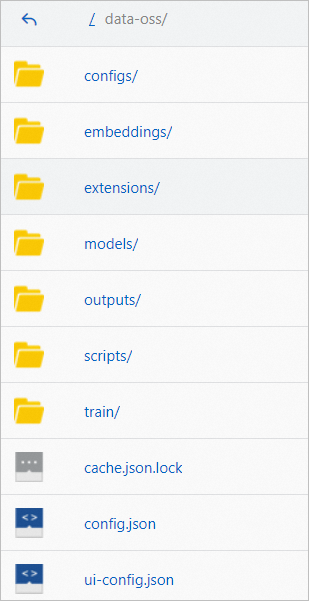

In the empty OSS directory that you specified, PAI automatically creates the directory structure shown in the figure and copies the required data to it. We recommend that you upload data to the specified directory only after the service is started.

5. After the OSS directory structure is created, upload the downloaded or trained model to the corresponding directory under models. Then choose Restart Service from the menu in the Actions column. The configuration takes effect after the service is restarted.

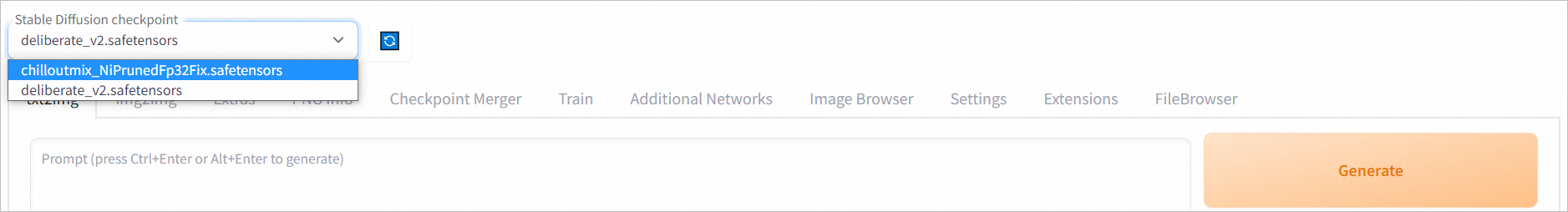

After the configuration takes effect, click View Web App in the Service Type column of the service to open the web UI. On the web UI page, select the model from the Stable Diffusion Model (ckpt) drop-down list. You can then use the selected model to perform model inference.
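The mount settings above have to agree with each other: the OSS path names the bucket and directory, the Mount Path places that directory under /code/stable-diffusion-webui, and --data-dir repeats the last-level directory of the Mount Path. The following Python sketch checks that relationship for the example values used in this article. The helper names are illustrative and are not part of any PAI or EAS SDK, and the check that the mount path sits directly under the web UI directory is an assumption based on the example.

```python
import posixpath
from urllib.parse import urlparse

# Directory of the web UI inside the image, as named in this article.
WEBUI_ROOT = "/code/stable-diffusion-webui"


def split_oss_path(oss_path):
    """Split 'oss://bucket/dir/' into (bucket, directory)."""
    parsed = urlparse(oss_path)
    if parsed.scheme != "oss" or not parsed.netloc:
        raise ValueError(f"not an OSS path: {oss_path!r}")
    # Strip surrounding slashes so '/data-oss/' becomes 'data-oss'.
    return parsed.netloc, parsed.path.strip("/")


def data_dir_for(mount_path):
    """Return the --data-dir value: the last-level directory of the mount path."""
    normalized = posixpath.normpath(mount_path)
    # Assumption: the mount path sits directly under WEBUI_ROOT, as in the example.
    if posixpath.dirname(normalized) != WEBUI_ROOT:
        raise ValueError(f"{mount_path!r} is not directly under {WEBUI_ROOT}")
    return posixpath.basename(normalized)


bucket, directory = split_oss_path("oss://bucket-test/data-oss/")
data_dir = data_dir_for("/code/stable-diffusion-webui/data-oss")
command = f"./webui.sh --listen --port=8000 --data-dir {data_dir}"
print(bucket, directory, command, sep="\n")
```

Deriving the --data-dir value from the Mount Path, rather than typing it twice, avoids the mismatch that the Command to Run description warns about.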

You can try to reopen the SD web UI page or restart the EAS service. To restart the EAS service, choose Restart Service in the Actions column of the service.

The preceding deployment method supports only a single user. If multiple users operate on the SD web UI page at the same time, incompatibility issues may occur. To let multiple users work in the same web application, deploy the cluster edition of SD web UI in EAS by selecting the stable-diffusion-webui:3.1-cluster image when you deploy the service. For multi-user scenarios, we recommend that you use multiple service instances to ensure the speed and stability of AI image generation.

The cluster edition has the following benefits:

• Multiple users can independently access the web application through the same URL.

• Multiple users can share one GPU. At the same time, the service scales based on the actual usage. This improves the cluster utilization and achieves cost effectiveness.

• The application can integrate with the enterprise account system to distinguish the image generation models, image results, and plug-ins of each user.

1. Configure an OSS mount path for the model service. For more information, see How do I use my own model and output directory?.

2. On the web UI page that appears, click Settings.

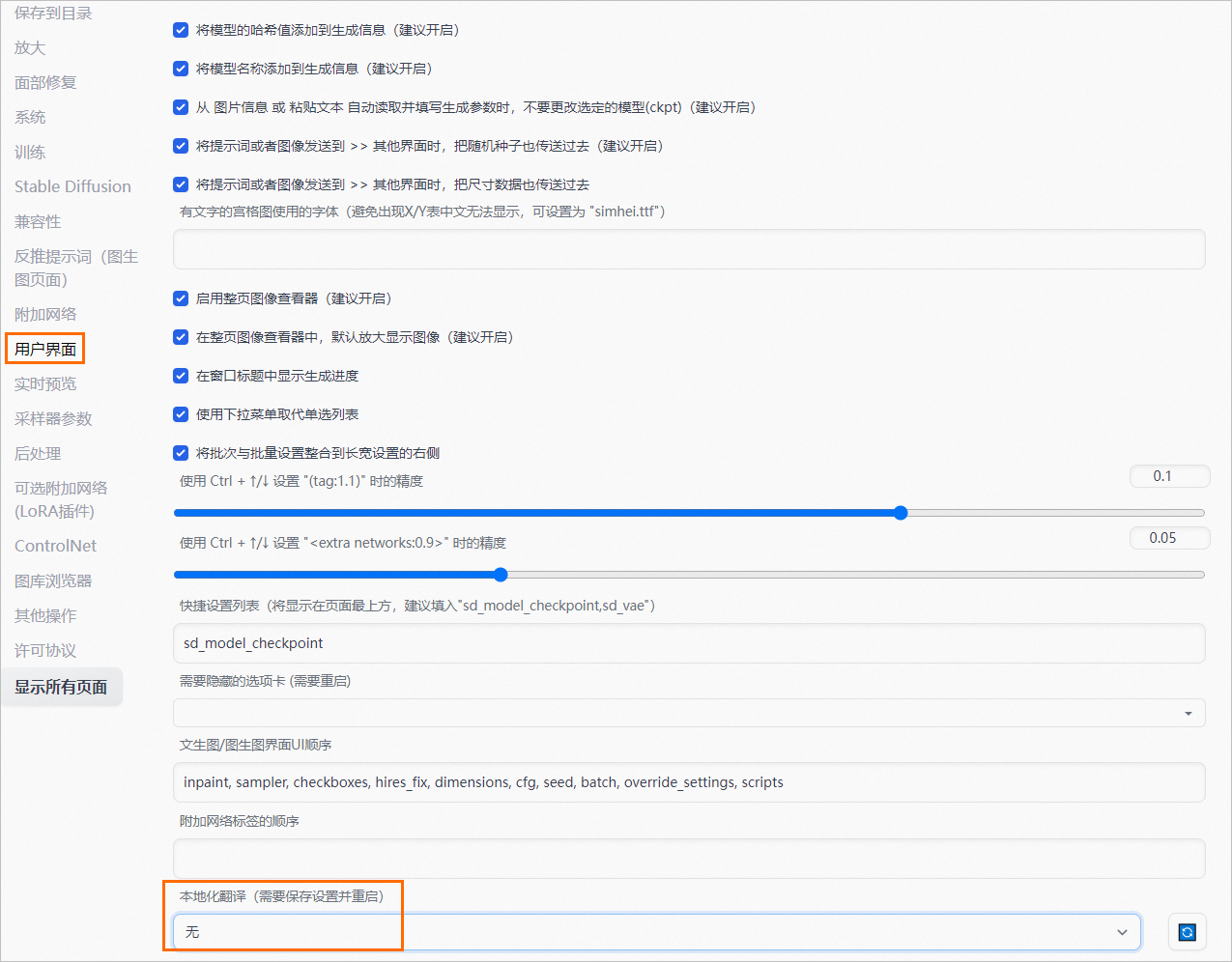

3. In the left-side navigation pane, click 用户界面 (User Interface). In the lower part of the 本地化翻译 (Localization) page, change the value from zh_CN to 无 (None).

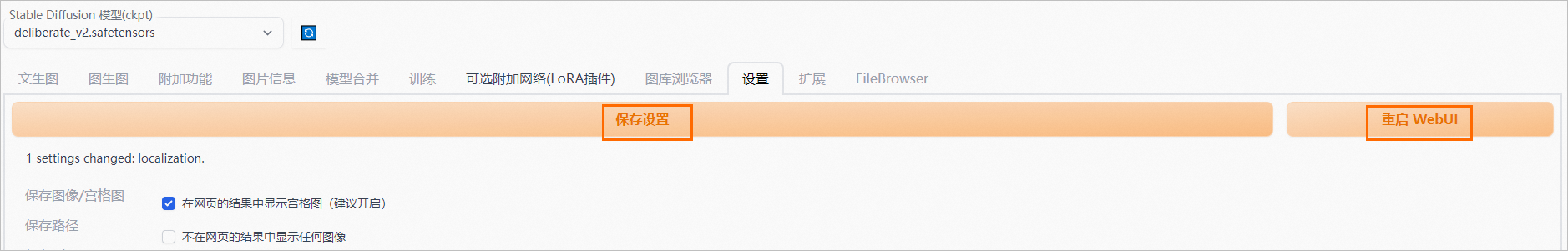

4. In the upper part of the web UI page, click 保存设置 (Save Settings). After the settings are saved, click 重启 WebUI (Restart WebUI).

When the Model Status changes from Waiting to Running, refresh the web UI page. The page is displayed in English.

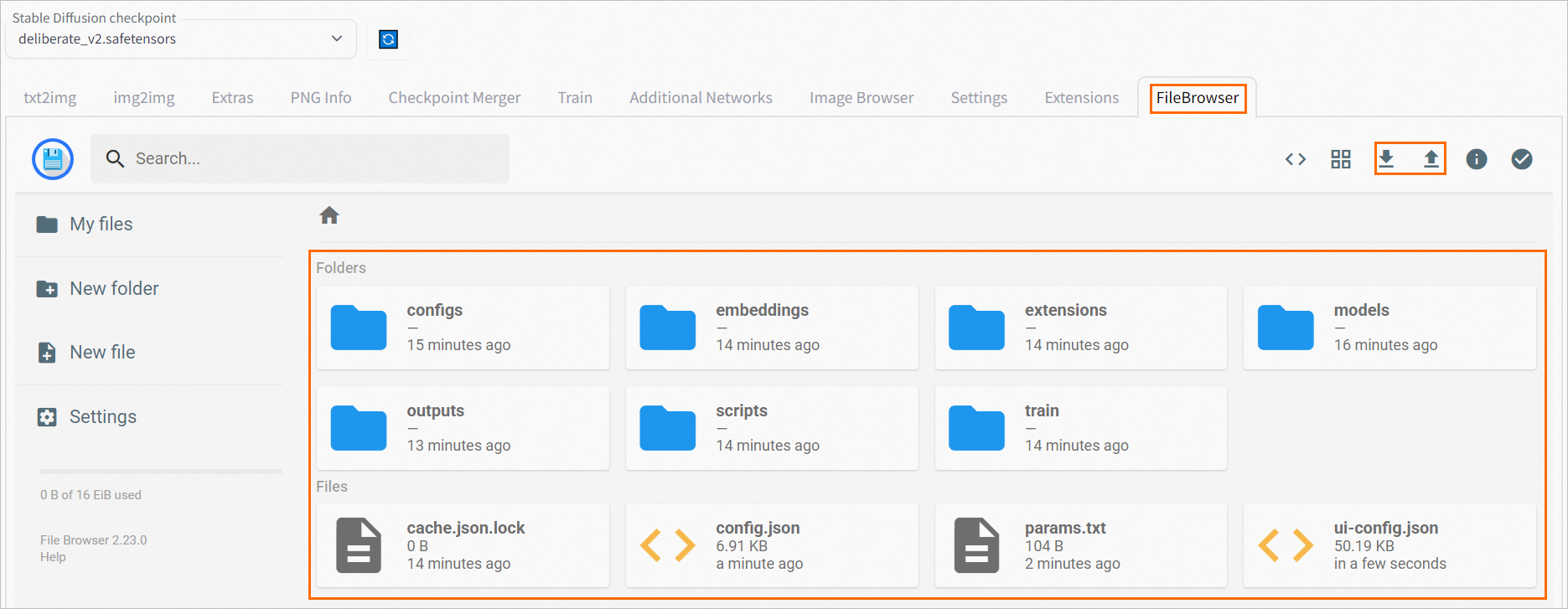

If you want to manage your file systems in a more convenient manner, such as opening the file systems directly on the web UI page, perform the following steps.

1. Make sure that you have mounted an OSS path. For more information, see How do I use my own model and output directory?. When you create or update a service, enter the ./webui.sh --listen --port=8000 --blade --data-dir data-oss --filebrowser command in the Command to Run field.

2. After the service is deployed, click View Web App in the Service Type column.

3. On the web UI page, click the FileBrowser tab. The file system page is displayed. You can upload local files to the file system, or download files to your local computer.
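The Command to Run values used throughout this article differ only in their trailing flags. The following Python sketch assembles them from the options described above (Blade or xFormers, --data-dir, and --filebrowser). The function and parameter names are illustrative, and treating Blade and xFormers as an either-or choice is an assumption based on the way the article presents them.

```python
from typing import Optional

# Base command used in every example in this article.
BASE_COMMAND = ["./webui.sh", "--listen", "--port=8000"]


def build_command(accelerator: Optional[str] = None,
                  data_dir: Optional[str] = None,
                  filebrowser: bool = False) -> str:
    """Assemble a Command to Run string from the flags shown in this article."""
    # Assumption: at most one accelerator is enabled at a time.
    if accelerator not in (None, "blade", "xformers"):
        raise ValueError(f"unknown accelerator: {accelerator!r}")
    parts = list(BASE_COMMAND)
    if accelerator:
        parts.append(f"--{accelerator}")
    if data_dir:
        # Last-level directory of the Mount Path, for example data-oss.
        parts += ["--data-dir", data_dir]
    if filebrowser:
        parts.append("--filebrowser")
    return " ".join(parts)


print(build_command("xformers"))
print(build_command("blade", data_dir="data-oss", filebrowser=True))
```

Note that every flag starts with two ASCII hyphens; a pasted en dash in place of the hyphens would not be recognized as a flag prefix.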
