By Alibaba Cloud Heterogeneous Computing team

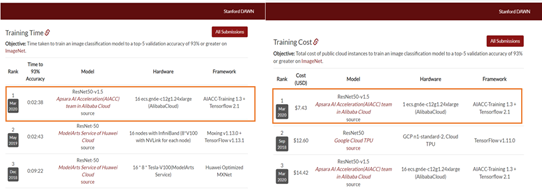

Recently, the latest results of the DAWNBench ImageNet competition released by Stanford University showed that Alibaba surpassed Google and Facebook to take the top place in four rankings.

It took Alibaba just 158 seconds to train ResNet-50 on 128 V100 GPUs, reaching a top 5 accuracy of 93%.

Alibaba reached a top 5 accuracy of not less than 93% when classifying the 10,000 images in a validation set, with an inference performance more than 5 times faster than its closest competitor.

In the field of heterogeneous computing, Alibaba Cloud provides world-class capabilities in integrating artificial intelligence (AI) software and hardware to maximize the performance and minimize the costs of training and inference.

How did Alibaba achieve this? The Alibaba Cloud heterogeneous computing team shared the technical secrets that allowed Alibaba to place first in four competitions.

Stanford University's DAWNBench is a benchmark suite for end-to-end deep learning training and inference performance. It was announced at the 2017 Neural Information Processing Systems (NIPS) Conference and has gained wide support from the industry.

World-renowned participants in DAWNBench competitions include Google, Facebook, and VMware. DAWNBench has become one of the most influential and authoritative rankings in the field of AI.

Performance and cost are two important metrics in AI computing. The latest results from DAWNBench demonstrate Alibaba Cloud's world-class performance optimization capabilities in training and inference based on our integration of software and hardware.

According to the Alibaba Cloud heterogeneous computing AI acceleration team, such superior performance comes from Alibaba Cloud's proprietary Apsara AI Acceleration (AIACC) engine, proprietary AI chip Hanguang 800 (also known as AliNPU), and heterogeneous computing cloud services.

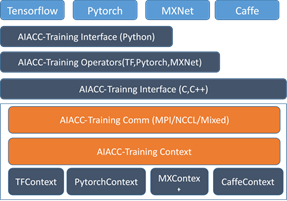

AIACC, an AI acceleration engine independently developed by Alibaba Cloud, is the first in the industry to uniformly accelerate mainstream AI computing frameworks such as TensorFlow, PyTorch, MXNet, Caffe, and Kaldi. AIACC includes the training acceleration engine AIACC-Training and the inference acceleration engine AIACC-Inference.

AIACC-Training optimizes distributed network performance to make full use of the available communication bandwidth. AIACC-Inference applies targeted, in-depth optimizations for Alibaba Cloud's heterogeneous computing cloud services, including services based on graphics processing units (GPUs) and neural processing units (NPUs), to fully use the computing capabilities of heterogeneous acceleration devices.

NVIDIA GPUs are a good example. TensorRT is currently regarded as the fastest inference engine in the industry, yet AIACC-Inference delivers 1.5 to 2.5 times the performance of TensorRT.

As the first AI chip independently developed by Alibaba, the Hanguang 800 is the most powerful AI inference chip in the world and primarily used in cloud vision processing. It surpasses all other existing AI chips in terms of performance and energy efficiency.

In the industry-standard ResNet-50 test, Hanguang 800 reached an inference performance of 78,563 IPS, which is 4 times that of the leading AI chip in the industry. Hanguang 800 also achieved an energy efficiency ratio of 500 IPS/W, 3.3 times that of the second-place chip. AIACC-Inference fully leverages the ultra-high computing capability of Hanguang 800, making it a model of how Alibaba Cloud can achieve unprecedented performance optimization through hardware and software integration.

Alibaba Cloud provides heterogeneous computing cloud services that integrate heterogeneous computing devices such as GPUs, field-programmable gate arrays (FPGAs), and NPUs. This gives customers access to heterogeneous computing as a cloud service.

The rise of AI has popularized heterogeneous computing as a means to accelerate the performance of AI computing. Alibaba Cloud provides heterogeneous computing services based on a wide array of cloud-based acceleration instances to accelerate AI computing in an inclusive, elastic, and simple manner.

The most representative scenario in the image recognition field is ResNet-50 training in ImageNet.

In the latest rankings, AIACC-Training beat other competitors in ResNet-50 training, demonstrating the highest performance and cost efficiency. This proved AIACC's superiority in distributed training capabilities compared to other training acceleration engines and its ability to help users improve training performance while reducing computing costs.

The new world record in training performance is 2 minutes and 38 seconds, which was the time required to reach a top 5 accuracy of 93% in ResNet-50 training. Training was performed in a cluster that included 128 V100 GPUs (provided by 16 cloud service instances designed for heterogeneous computing: ecs.gn6e-c12g1.24xlarge) and used a 32 Gbit/s Virtual Private Cloud (VPC) as the communication network.

When the previous world record was set, the training cluster also included 128 V100 GPUs but used 100 Gbit/s InfiniBand as the communication network, which provides about 3 times the bandwidth of the 32 Gbit/s VPC used to set the new world record. Heterogeneous computing cloud services typically use a 32 Gbit/s VPC as their network configuration, so Alibaba chose a 32 Gbit/s VPC to better reflect the scenarios of end users.

The Alibaba Cloud heterogeneous computing team faced a major challenge due to the large gap in physical network bandwidth between the 32 Gbit/s VPC and 100 Gbit/s InfiniBand. To overcome this, we made in-depth optimizations in two areas:

First, we optimized the model. We adjusted hyperparameters and improved optimizers to reduce the iterations required to reach 93% accuracy. We also tried to improve single-instance performance as much as possible.

Second, we optimized distributed performance. We used AIACC-Training (formerly Ali-Perseus-Training) as a distributed communication library to fully exploit the potential of the 32 Gbit/s VPC.

Together, these two optimizations allowed us to overcome a seemingly insurmountable performance barrier and helped Alibaba set a new world record with relatively low network bandwidth.

Considering the complexity of distributed training deployment, the Alibaba team used the previously developed instant build tool FastGPU to improve efficiency and allow external users to reproduce our results. FastGPU can be used to create clusters and schedule distributed training by using scripts, which can be executed in one click. This significantly improves optimization efficiency.

In the future, we will publish the AIACC-based benchmark code to allow external users to reproduce our results with a single click.

The distributed training field has grown rapidly in recent years and provides many solutions to choose from. For TensorFlow, the options include the parameter server (PS) model, Ring Allreduce-style distributed communication, and the third-party Horovod library.

Horovod is still the optimal open-source solution for distributed ResNet-50 training. Alibaba uses Horovod as a baseline for comparison.

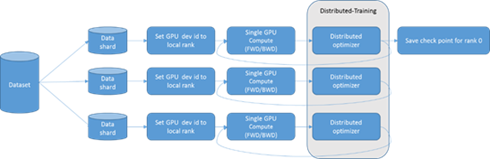

The following figure shows the logical structure of distributed training.

The minimum compute node is a single GPU. A data shard is allocated from the general dataset to every compute node as its training data. Each compute node performs forward and backward computations and obtains batch-generated gradients after backward computation.

The cluster must complete gradient communication before parameters are updated. The Horovod API inserts the multi-node communication step into the optimizer implementation, so that it runs before the gradient update.
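
The data-parallel loop described above can be sketched in plain Python. This is a minimal single-process simulation, not Horovod or AIACC-Training code: the toy linear model, the `allreduce_mean` stand-in for the collective, and all parameter values are assumptions made for illustration.

```python
from typing import List, Tuple

Shard = List[Tuple[float, float]]

def local_gradient(w: float, shard: Shard) -> float:
    """Gradient of the mean squared error for y ~ w * x on one worker's shard."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)

def allreduce_mean(values: List[float]) -> float:
    """Stand-in for the collective: every worker ends up with the same mean."""
    return sum(values) / len(values)

def train(shards: List[Shard], w: float = 0.0, lr: float = 0.01, steps: int = 50) -> float:
    for _ in range(steps):
        grads = [local_gradient(w, s) for s in shards]  # forward/backward on each worker
        g = allreduce_mean(grads)                       # gradient communication
        w -= lr * g                                     # identical update on every worker
    return w

# Toy dataset with true weight 3.0, split into one shard per simulated worker.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
shards = [data[i::4] for i in range(4)]
w = train(shards)
```

Because every worker applies the same averaged gradient, all replicas stay in sync without exchanging the parameters themselves.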

AIACC-Training is a proprietary communication engine developed by Alibaba Cloud for distributed deep learning training. It supports TensorFlow, PyTorch, MXNet, and Caffe. At the infrastructure as a service (IaaS) layer, AIACC-Training provides acceleration libraries that can be integrated with, and are compatible with, open-source libraries.

AIACC-Training has been extensively deployed in the production environments of multiple AI and Internet companies to significantly improve the cost efficiency of heterogeneous computing products. AIACC-Training provides differentiated computing services at the software layer. Its architecture is shown in the following figure.

AIACC-Training played a critical role as the distributed backend during DAWNBench deep learning training. The following sections provide a detailed analysis of the distributed optimizations behind AIACC-Training.

The key to distributed performance is the optimization of communication efficiency. For ResNet-50, about 170 gradients must be communicated, which generates about 50 MB of traffic.

The time each gradient is generated depends on its position in the computation graph. For the gradients that depend on each other, their dependencies determine the chronological order in which they are computed.

Operators that do not depend on each other can run in a different order in each iteration, so the order in which their gradients become ready is not fixed. To enable multi-node communication, the nodes must first negotiate the order of gradient synchronization.

In Horovod, Node 0 serves as the central node. It conducts point-to-point (P2P) communication with the other nodes to determine which gradients are ready on all nodes, and then decides how the ready gradients are communicated. Finally, Node 0 sends the communication policy to every other node in P2P mode to guide multi-node communication.

When 128 nodes exist, this P2P negotiation policy turns Node 0 into a local hot spot because it communicates with other nodes 256 times. AIACC-Training replaces this central node-based negotiation with decentralized negotiation. The 128 nodes are distributed on 16 instances, and this topology is easily identified due to our optimizations. This avoids the creation of a hot spot with 256 communications on any single GPU.

Our optimizations enable multi-gradient negotiation because more than one gradient is ready in most cases. This reduces the communication traffic generated by negotiation by about one order of magnitude.
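
The negotiation step can be illustrated with a small sketch. It is not the Horovod or AIACC-Training protocol: the function names are hypothetical, the message count assumes one report and one reply per non-coordinator node, and the batch size of 10 gradients per round is an assumption chosen only to show an order-of-magnitude reduction.

```python
import math

def ready_everywhere(ready_sets):
    """Gradients that every node has finished computing."""
    common = set(ready_sets[0])
    for s in ready_sets[1:]:
        common &= set(s)
    return common

def centralized_messages(num_nodes):
    """Coordinator receives one report from, and sends one reply to, each other node."""
    return 2 * (num_nodes - 1)

def negotiation_rounds(num_gradients, gradients_per_round):
    """Rounds needed when several ready gradients are negotiated together."""
    return math.ceil(num_gradients / gradients_per_round)

ready = [{"conv1", "bn1", "fc"}, {"conv1", "fc"}, {"fc", "conv1", "layer4"}]
common = ready_everywhere(ready)          # {"conv1", "fc"}
msgs = centralized_messages(128)          # 254, on the order of the 256 cited above
single = negotiation_rounds(170, 1)       # 170 rounds, one gradient at a time
batched = negotiation_rounds(170, 10)     # 17 rounds with 10 gradients per round
```

Under these assumptions, the coordinator handles roughly 2 messages per peer in every round, which is why both removing the central node and batching gradients per round reduce negotiation cost.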

After gradient negotiation, all nodes know the gradients that can be used for communication at the current time. Next, we need to determine whether to immediately conduct communication for all collected gradients or select a better combination method for gradient communication.

We know that single-gradient communication is inefficient, so we need to fuse gradients for communication with a larger granularity.

AIACC-Training introduces a fine-grained gradient fusion policy. In the communication process, AIACC-Training dynamically analyzes the communication status to select a balanced fusion policy and avoid significant disparities.

This ensures communication at an even granularity and reduces the likelihood of significant fluctuations. The optimal value of gradient fusion varies depending on different network models. Therefore, we implement automatic optimization to identify the optimal fusion granularity by dynamically adjusting parameter values.
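
As a rough illustration of gradient fusion, the sketch below greedily packs consecutive gradients into buffers up to a size threshold. The greedy rule, the function name, and the example sizes are assumptions for illustration; the balanced, dynamically tuned policy of AIACC-Training is not described in enough detail here to reproduce.

```python
def fuse_gradients(sizes_bytes, threshold_bytes):
    """Greedily pack consecutive gradients into buffers of at most threshold_bytes.

    A single gradient larger than the threshold gets a buffer of its own.
    Returns a list of buckets, each a list of gradient indices.
    """
    buckets, current, current_size = [], [], 0
    for idx, size in enumerate(sizes_bytes):
        if current and current_size + size > threshold_bytes:
            buckets.append(current)          # flush the buffer before it overflows
            current, current_size = [], 0
        current.append(idx)
        current_size += size
    if current:
        buckets.append(current)
    return buckets

# Hypothetical gradient sizes in bytes, in the order they become ready.
sizes = [4_000, 1_000, 9_000_000, 2_000, 500_000, 3_000_000, 8_000]
buckets = fuse_gradients(sizes, threshold_bytes=4_000_000)
# buckets == [[0, 1], [2], [3, 4, 5, 6]]
```

The threshold plays the role of the fusion granularity: a small value sends many tiny messages, while a large value delays communication until a lot of gradients have accumulated.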

The NVIDIA Collective Communications Library (NCCL) is still used as the underlying communication library for inter-GPU data communication. The NCCL uses a programming model that only supports single-stream communication, which is inefficient. The single-stream forwarding capability is only about 10 Gbit/s.

AIACC-Training supports multi-stream communication at the upper communication engine layer. More than one stream is allocated to gradient communication, and each stream transmits one slice split from the fused gradients. The granularity of a subsequent slice does not depend on the current slice.

Communication efficiency is not affected even though the streams run asynchronously at different rates. Therefore, optimal network bandwidth utilization can be maintained during scale-out.
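
The idea of splitting a fused buffer across several streams can be sketched as follows. Threads stand in for communication streams, and `send_on_stream` is a hypothetical placeholder for a per-stream collective call; this is not NCCL or AIACC-Training code.

```python
from concurrent.futures import ThreadPoolExecutor

def split_buffer(buffer, num_streams):
    """Split a fused buffer into num_streams contiguous slices of near-equal size."""
    base, extra = divmod(len(buffer), num_streams)
    slices, start = [], 0
    for i in range(num_streams):
        end = start + base + (1 if i < extra else 0)
        slices.append(buffer[start:end])
        start = end
    return slices

def send_on_stream(chunk):
    """Placeholder for one stream's communication call; here it only echoes the data."""
    return list(chunk)

def multi_stream_send(buffer, num_streams):
    chunks = split_buffer(buffer, num_streams)
    with ThreadPoolExecutor(max_workers=num_streams) as pool:
        # Streams may finish at different times; map() still returns results in order.
        results = list(pool.map(send_on_stream, chunks))
    return [x for part in results for x in part]

fused = list(range(10))
received = multi_stream_send(fused, num_streams=4)
```

Each slice is independent, so a slow stream delays only its own slice rather than the whole fused buffer.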

Similar to fusion granularity, the number of split streams is strongly influenced by the training model and the current actual network bandwidth. This makes it impossible to determine the optimal settings offline.

We designed an automatic tuning mechanism that covers both the fusion granularity and the number of communication streams. The optimal settings for these two parameters are determined together at run time through automatic tuning.
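
A simple way to picture such tuning is a search over candidate settings driven by a measured step time. The sketch below uses an exhaustive grid search and a toy cost model; both are assumptions. Only the 50 MB of traffic, the roughly 10 Gbit/s per stream, and the 32 Gbit/s link come from this article, while the overhead constants are invented for illustration and are not measured data.

```python
import itertools

TOTAL_MB = 50.0          # gradient traffic per step (from the article)
PER_STREAM_GBPS = 10.0   # approximate single-stream throughput (from the article)
LINK_GBPS = 32.0         # VPC bandwidth (from the article)

def toy_step_time_ms(fusion_mb, num_streams):
    """Hypothetical cost model: every constant below is made up for illustration."""
    per_message_ms = 0.5 * (TOTAL_MB / fusion_mb)   # fixed cost per fused message
    wait_ms = 0.2 * fusion_mb                       # larger buffers delay the first send
    bandwidth_gbps = min(num_streams * PER_STREAM_GBPS, LINK_GBPS)
    transfer_ms = TOTAL_MB * 8.0 / bandwidth_gbps   # Mbit divided by Gbit/s gives ms
    stream_ms = 0.3 * num_streams                   # bookkeeping cost per stream
    return per_message_ms + wait_ms + transfer_ms + stream_ms

def autotune(measure, fusion_candidates, stream_candidates):
    """Return the (fusion size, stream count) pair with the lowest measured time."""
    return min(itertools.product(fusion_candidates, stream_candidates),
               key=lambda cfg: measure(*cfg))

best = autotune(toy_step_time_ms, [1, 2, 4, 8, 16, 32], [1, 2, 4, 8])
```

In a real system, `measure` would time actual training steps instead of evaluating a formula, which is why the search has to happen online rather than offline.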

Algorithms are optimized in terms of data, models, hyperparameters, and optimizers.

In terms of data, we use multi-resolution images for progressive training. Using low-resolution images significantly accelerates forward and backward computations, and the progressive schedule minimizes the accuracy loss caused by using different image sizes in training and inference.
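
One way to express progressive training is a schedule that maps each epoch to an input resolution. The sketch below interpolates linearly and rounds to a multiple of 32; the start and end resolutions, the rounding step, and the linear shape are all assumptions, since the actual schedule is not given here.

```python
def resolution_for_epoch(epoch, total_epochs, start_res=128, end_res=224, multiple=32):
    """Linearly grow the input resolution over training, rounded to `multiple` pixels.

    All default values are hypothetical and chosen only for illustration.
    """
    frac = epoch / max(total_epochs - 1, 1)
    raw = start_res + frac * (end_res - start_res)
    return int(round(raw / multiple)) * multiple

schedule = [resolution_for_epoch(e, 28) for e in range(28)]
```

Early epochs process far fewer pixels per image, while the final epochs match the resolution used at inference time.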

In terms of the model, we incorporated the advantages of recent network variants and slightly tuned BatchNorm based on the latest research.

In terms of hyperparameters, we explored many optimization methods. For example, we use linear decay instead of the more common step decay or cosine decay for learning rate decay. We also found that the number of warmup steps is important.
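
A learning rate schedule with warmup followed by linear decay can be written in a few lines. This is a generic sketch: the linear warmup shape, the warmup length of 100 steps, and the peak value are assumptions, and only the total of 1,159 iterations is taken from this article.

```python
def learning_rate(step, total_steps, warmup_steps, peak_lr):
    """Linear warmup to peak_lr, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / (total_steps - warmup_steps)

TOTAL_STEPS = 1159   # iteration count reported in this article
WARMUP_STEPS = 100   # hypothetical
PEAK_LR = 2.0        # hypothetical

lrs = [learning_rate(s, TOTAL_STEPS, WARMUP_STEPS, PEAK_LR) for s in range(TOTAL_STEPS + 1)]
```

Compared with step decay, the rate changes smoothly at every iteration, which matters when the whole run lasts only about a thousand steps.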

In terms of optimizers, we redesigned the optimizer solution to incorporate the generalization advantages of stochastic gradient descent (SGD) and the rapid convergence of an adaptive optimizer. The improved optimizer accelerates training and increases accuracy.

Through the preceding optimizations, we completed training with a top 5 accuracy of 93% in 28 epochs with 1,159 iterations. The previous training required 90 epochs to reach the same accuracy.
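
The figures above imply a very large global batch size. The back-of-the-envelope calculation below assumes that 1,159 is the total number of optimizer steps, that the standard ILSVRC-2012 training set of 1,281,167 images is used in full every epoch, and that the batch size is constant; none of these assumptions is stated in this article, so the results are rough estimates only.

```python
IMAGENET_TRAIN_IMAGES = 1_281_167  # ILSVRC-2012 training set size (assumed dataset)
EPOCHS = 28
ITERATIONS = 1_159
GPUS = 128
SECONDS = 158

images_seen = IMAGENET_TRAIN_IMAGES * EPOCHS
global_batch = images_seen / ITERATIONS          # average images per iteration
per_gpu_batch = global_batch / GPUS              # average images per GPU per iteration
seconds_per_iteration = SECONDS / ITERATIONS     # upper bound: includes all overhead
epoch_reduction = 90 / EPOCHS                    # versus the 90-epoch baseline

print(f"global batch ~ {global_batch:.0f}, per GPU ~ {per_gpu_batch:.0f}")
print(f"~ {seconds_per_iteration * 1000:.0f} ms per iteration, "
      f"{epoch_reduction:.1f}x fewer epochs")
```

Under these assumptions, each iteration has on the order of a hundred milliseconds for computation and gradient communication combined, which shows why communication efficiency dominates at this scale.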

By combining the preceding performance optimizations, we were able to reach a top 5 accuracy of 93% in only 158 seconds when training on 128 V100 GPUs, which set a new world record.

We also set a new record on inference performance, with a speed that was 5 times faster than that of the nearest competitor.

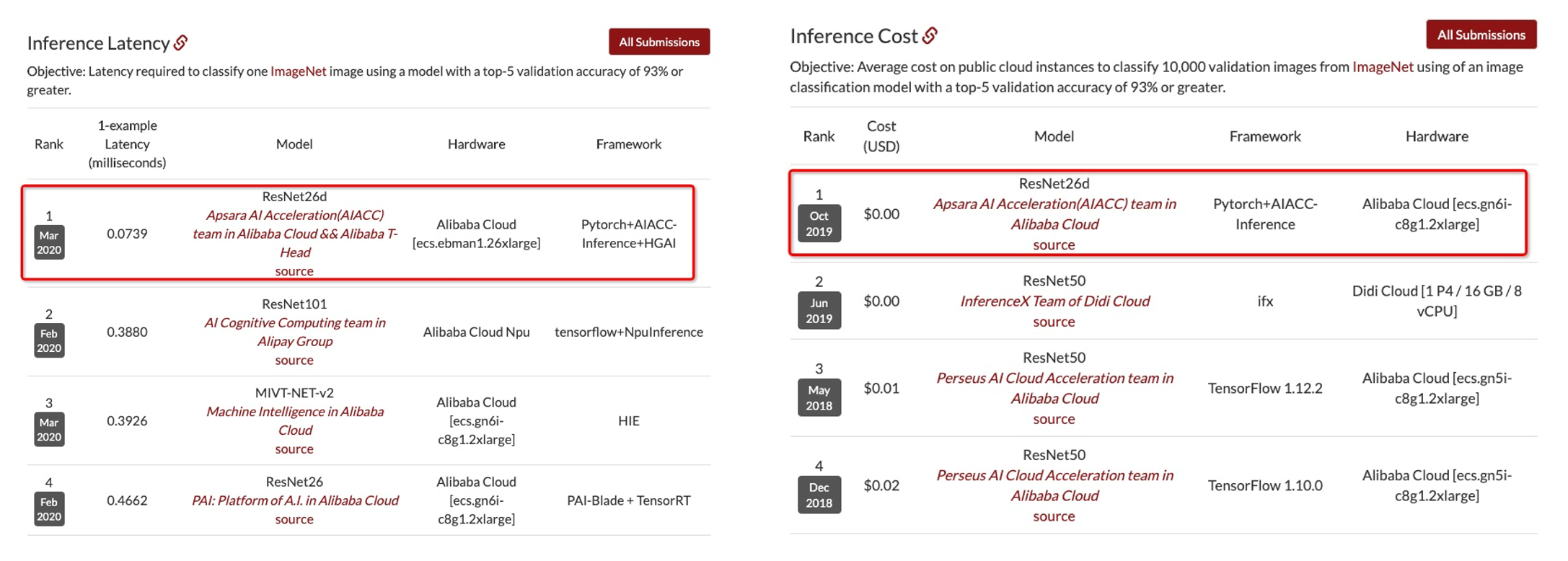

In the inference contest, DAWNBench requires participants to classify the 10,000 images in an ImageNet validation set. The classification model must reach a top 5 accuracy of at least 93%.

The average per-image time and cost for inference are calculated, with the batch size set to 1. For the previous performance record, the average inference time was less than 1 ms, which far exceeded the speed of human visual response.

In the latest rankings, Alibaba used AliNPU cloud service instances (ecs.ebman1.26xlarge) designed for heterogeneous computing to reach the highest inference performance, which was more than 5 times faster than that of the nearest competitor.

Alibaba also used GPU cloud service instances (ecs.gn6i-c8g1.2xlarge) designed for heterogeneous computing to set the record for the lowest inference cost, which has still not been surpassed. As a result, Alibaba earned first place in both the performance and cost rankings.

As we serve our customers and strive to win every DAWNBench contest, we are constantly refining our inference optimization technologies for heterogeneous computing. We developed the model acceleration engine AIACC-Inference based on customer requirements to help customers optimize models under mainstream AI frameworks, such as TensorFlow, PyTorch, MXNet, and Kaldi.

To optimize a model, AIACC-Inference analyzes the computation graph of the model and fuses the compute nodes in it. This reduces the number of compute nodes and improves the efficiency of computation graph execution.
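
A common textbook example of node fusion is folding a batch normalization node into the preceding convolution, so that two graph nodes become one. The sketch below shows this for a single scalar channel; it illustrates the general technique only and is not taken from AIACC-Inference, whose fusion rules are not described here.

```python
import math

def conv_then_bn(x, weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Unfused reference: a (scalar) convolution followed by batch normalization."""
    y = weight * x + bias
    return gamma * (y - mean) / math.sqrt(var + eps) + beta

def fold_batchnorm(weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Return fused (weight, bias) such that fused_w * x + fused_b equals conv_then_bn."""
    scale = gamma / math.sqrt(var + eps)
    return weight * scale, (bias - mean) * scale + beta

# Hypothetical per-channel parameters.
params = dict(weight=0.8, bias=0.1, gamma=1.5, beta=-0.2, mean=0.05, var=0.3)
fused_w, fused_b = fold_batchnorm(**params)

x = 2.0
unfused_out = conv_then_bn(x, **params)
fused_out = fused_w * x + fused_b
```

The fused node produces the same output with one multiply-add instead of a multiply-add plus a normalization, and the intermediate tensor never has to be written to memory.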

AIACC-Inference lets users choose among FP32, FP16, and Int8 precision when optimizing a model. FP16 and Int8 models can use the Tensor Core hardware of the NVIDIA Volta and Turing architectures, which further improves the performance of model inference on V100 and T4 GPUs.

Currently, AIACC-Inference supports common image classification and object detection models, as well as natural language processing (NLP) models such as BERT and generative adversarial networks (GANs) such as StyleGAN.

We deeply optimized the 1x1, 3x3, and 7x7 convolution kernels and added a new operator fusion mechanism to AIACC-Inference, allowing it to reach 1.5 to 2.5 times the performance of TensorRT, which is regarded as the fastest inference engine in the industry.

In the last version we submitted, we replaced the base model with the simpler ResNet26d model, which attracted a great deal of attention in the industry.

To further improve model accuracy and simplify the model, we adjusted the hyperparameters and introduced more data augmentation methods. By combining AugMix and JSD loss with RandAugment, we improved the accuracy of the ResNet26d model to 93.3%, an increase of more than 0.13%.
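
For readers unfamiliar with the JSD loss used with AugMix, it is a Jensen-Shannon consistency term between the model's predictions on a clean image and on two augmented views of it. The sketch below follows that general definition; the example probability vectors are made up, and the loss weighting used in the submission is not known.

```python
import math

def kl(p, q):
    """Kullback-Leibler divergence KL(p || q) for discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def jsd_consistency(p_clean, p_aug1, p_aug2):
    """Jensen-Shannon divergence among three predicted distributions."""
    mixture = [(a + b + c) / 3 for a, b, c in zip(p_clean, p_aug1, p_aug2)]
    return (kl(p_clean, mixture) + kl(p_aug1, mixture) + kl(p_aug2, mixture)) / 3

# Hypothetical softmax outputs for one image and two augmented views.
p_clean = [0.7, 0.2, 0.1]
p_aug1 = [0.6, 0.3, 0.1]
p_aug2 = [0.5, 0.2, 0.3]

loss_different = jsd_consistency(p_clean, p_aug1, p_aug2)
loss_identical = jsd_consistency(p_clean, p_clean, p_clean)
```

The term is zero when all three predictions agree and grows as they diverge, which pushes the model toward predictions that are stable under augmentation.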

We optimized the inference engine based on the architectural features of AliNPU. AliNPU uploads and downloads data stored in the uint8 format.

This requires us to insert Quant and Dequant operations to convert the data before it enters the engine and after it leaves. However, these operations run on central processing units (CPUs), cannot be accelerated by AliNPU, and take up a large share of the inference time. By moving these operations into preprocessing and postprocessing, we reduced the inference latency to 0.117 ms.
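
The Quant and Dequant operations can be pictured as an affine mapping between floating-point values and uint8 codes. The sketch below uses the usual scale and zero-point formulation; the specific scale, zero point, and input values are assumptions, and the exact scheme used with AliNPU is not described here.

```python
def quantize(values, scale, zero_point):
    """Map floats to uint8 codes: q = clip(round(v / scale) + zero_point, 0, 255)."""
    return [min(255, max(0, round(v / scale) + zero_point)) for v in values]

def dequantize(codes, scale, zero_point):
    """Map uint8 codes back to floats: v = (q - zero_point) * scale."""
    return [(q - zero_point) * scale for q in codes]

SCALE = 2.0 / 255      # hypothetical: covers roughly [-1, 1]
ZERO_POINT = 128       # hypothetical

inputs = [-1.0, -0.25, 0.0, 0.3, 0.99]
codes = quantize(inputs, SCALE, ZERO_POINT)
restored = dequantize(codes, SCALE, ZERO_POINT)
```

Both functions are simple element-wise loops, so they fit naturally into image preprocessing and result postprocessing instead of sitting inside the timed inference path.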

Given the small size of the inference model and the 4 Gbit/s empirical GPU bandwidth, it takes 0.03 ms to upload the 147 KB of data for one input image to AliNPU. Therefore, we introduced a preload mechanism into the framework to pre-fetch data to AliNPU, which further reduces the average inference latency to 0.0739 ms.
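
A preload mechanism of this kind can be sketched as a producer thread that stages the next inputs while the current one is being processed. The `upload` and `infer` functions below are hypothetical placeholders, and the queue depth of 2 is an assumption; this is not the AliNPU runtime API.

```python
import queue
import threading

def upload(image):
    """Placeholder for the host-to-device copy."""
    return ("on_device", image)

def infer(device_input):
    """Placeholder for running the model on the accelerator."""
    return device_input[1] * 2

def run_with_preload(images, depth=2):
    staged = queue.Queue(maxsize=depth)

    def producer():
        for img in images:
            staged.put(upload(img))  # blocks once `depth` inputs are already staged
        staged.put(None)             # sentinel: no more inputs

    worker = threading.Thread(target=producer, daemon=True)
    worker.start()

    outputs = []
    while True:
        item = staged.get()
        if item is None:
            break
        outputs.append(infer(item))  # upload of the next input overlaps with this call
    worker.join()
    return outputs

outputs = run_with_preload([1, 2, 3])
```

Because the upload of image N+1 overlaps with inference on image N, the transfer time drops out of the per-image latency as long as the upload is faster than the inference itself.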
