By Motu and Hanchao

The rise of mobile and internet technologies has sparked a new trend in e-commerce: image search. When you see someone wearing a beautiful skirt or nice sneakers on TV, you can simply take a photo and upload it to your e-commerce app, such as Taobao. Taobao then searches for visually similar products and recommends items for you to purchase. Search by image can also help you locate a target photo among a large number of portrait photos, or run an internet search to get more information related to an image.

Image search may seem far-fetched at first, but it is surprisingly simple once you are familiar with the underlying concepts and technology. In fact, with deep learning algorithms and efficient vector search, you can build an image search system in SQL without mastering deep learning frameworks such as TensorFlow and PyTorch or learning vision libraries such as OpenCV. This article introduces how you can quickly build your own image search system using Alibaba Cloud AnalyticDB. It describes the principles of search by image, a demo of search by image built on AnalyticDB, the code implementation, and the AnalyticDB product. It also summarizes this application and shares the source code of the demo system.

Search by image, also called reverse image search, is a content-based image retrieval technology. With the image as the query object, the image search system will return the records that are most relevant to the target image from a large number of image records. For example, images that contain the same product as or similar products to the main item in the source image are returned through the search by image.

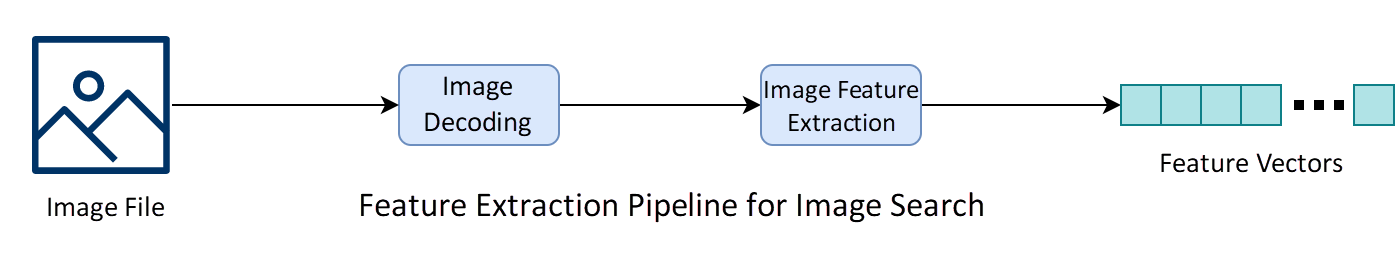

The figure above shows the flow of search by image. The image search application has two core modules: the feature extraction module and the vector search module. The feature extraction module extracts visual features from an image to obtain a high-dimensional feature vector. In this high-dimensional feature space, the more similar two images are, the closer their feature vectors are. The vector search module searches massive sets of image feature vectors for the top k records that are closest to the features of the source image and returns them.
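To make the contract of the vector search module concrete, here is a minimal sketch in plain Python. It assumes the feature vectors have already been extracted, and it uses tiny hand-written 3-dimensional vectors and made-up file names purely for illustration. It scans every record exhaustively, which is what a vector index is meant to avoid at scale.

```python
import heapq
import math


def l2_distance(a, b):
    """Euclidean (L2) distance between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k(query, records, k):
    """Return the k (distance, name) pairs closest to `query`.

    `records` maps an image name to its feature vector.
    """
    scored = ((l2_distance(query, vec), name) for name, vec in records.items())
    return heapq.nsmallest(k, scored)


# Toy 3-dimensional features; real feature vectors have far more dimensions.
features = {
    "bird_1.jpg": [0.9, 0.1, 0.0],
    "bird_2.jpg": [0.8, 0.2, 0.1],
    "car_1.jpg": [0.0, 0.1, 0.9],
}
nearest = top_k([1.0, 0.0, 0.0], features, k=2)
print([name for _, name in nearest])
```

The two bird images come back first because their vectors lie closest to the query vector, which is exactly the property the feature extraction module is trained to provide.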

Mainstream feature extraction algorithms use deep learning models, such as VGG[1], ResNet[2], MobileNet[3], and SqueezeNet[4], as backbone networks and differ in how they generate features from them. The simplest way is to directly use the output of the layer before the classification layer of a VGG (Visual Geometry Group) model as the image features. In most cases, this approach yields a low recall rate in image search scenarios. The second way is to pool the features of a convolutional layer of the model with methods such as R-MAC[5] and GeM[6] and then reduce their dimensionality. The third way is to train the model on the target dataset with a dedicated loss function. For example, feature extraction models for image search are usually fine-tuned on item datasets through transfer learning so that they accurately extract the visual features of different items.
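As an illustration of the pooling-based approach, the following sketch implements generalized-mean (GeM) pooling[6] in plain Python. It assumes the convolutional feature map is given as a list of channels, each a flat list of non-negative activations. The exponent `p = 3.0` and the final L2 normalization are common choices, not settings taken from AnalyticDB.

```python
def gem_pool(feature_map, p=3.0, eps=1e-6):
    """Generalized-mean (GeM) pooling over each channel of a feature map.

    `feature_map` is a list of channels; each channel is a flat list of
    non-negative activations (e.g. post-ReLU). Returns one value per channel.
    """
    pooled = []
    for channel in feature_map:
        # Clamp to eps so that the power is well defined for zero activations.
        powered = [max(x, eps) ** p for x in channel]
        pooled.append((sum(powered) / len(powered)) ** (1.0 / p))
    return pooled


def l2_normalize(vector):
    """Scale a vector to unit length so that distances are comparable."""
    norm = sum(x * x for x in vector) ** 0.5
    return [x / norm for x in vector] if norm > 0 else list(vector)


# Two channels with four spatial positions each.
fmap = [[0.0, 1.0, 2.0, 3.0], [1.0, 1.0, 1.0, 1.0]]
avg_like = gem_pool(fmap, p=1.0)    # p = 1 reduces to average pooling
max_like = gem_pool(fmap, p=50.0)   # a large p approaches max pooling
descriptor = l2_normalize(gem_pool(fmap, p=3.0))
```

The single exponent `p` interpolates between average pooling and max pooling, which lets the descriptor emphasize strong local activations without discarding the rest of the feature map.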

The general image search model provided by AnalyticDB uses the feature extraction model developed by Alibaba Cloud. The AnalyticDB model is trained by using massive images and uses advanced feature post-processing methods. In contrast to the feature extraction model of the commonly used VGG classification model, AnalyticDB with multi-scale features can better balance the local features and high-level features of images and provide better generalization ability in a variety of image scenarios.

AnalyticDB provides the image recognition model developed by Alibaba Cloud. Based on enormous data training, the model has been widely used in security and new retail scenarios across multiple cities.

Vector search, also known as nearest neighbor (NN) search, is mainly responsible for quickly finding the k records nearest to the source vector. The nearest records can be found exactly by calculating the distance between the source vector and every vector in the database and then sorting by distance. However, this method is too time-consuming to meet the requirements of large-scale data scenarios. In practice, we usually use approximate nearest neighbor (ANN) search, which exploits the characteristics of the vector data to quickly return records that are likely to be the nearest neighbors of the query target, at the expense of some retrieval accuracy. Common ANN methods include locality-sensitive hashing (LSH)[7], product quantization[8], and graph-based search[9].
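The trade-off between speed and accuracy can be seen in a toy LSH index. The sketch below uses random hyperplanes, one well-known LSH family for angular similarity. It is an illustration only and says nothing about how the AnalyticDB index is implemented. The class name, the number of bits, and the seed are arbitrary choices.

```python
import math
import random
from collections import defaultdict


class HyperplaneLSH:
    """Toy LSH index using random hyperplanes (signs of random projections)."""

    def __init__(self, dim, num_bits=8, seed=0):
        rng = random.Random(seed)
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                       for _ in range(num_bits)]
        self.buckets = defaultdict(list)

    def _signature(self, vector):
        # One bit per hyperplane: which side of the plane the vector lies on.
        return tuple(
            sum(w * x for w, x in zip(plane, vector)) >= 0.0
            for plane in self.planes
        )

    def add(self, name, vector):
        self.buckets[self._signature(vector)].append((name, vector))

    def query(self, vector, k):
        # Only the vectors in the matching bucket are compared exactly,
        # so true neighbors that fall in other buckets are missed.
        candidates = self.buckets.get(self._signature(vector), [])
        scored = sorted((math.dist(vector, v), name) for name, v in candidates)
        return scored[:k]


index = HyperplaneLSH(dim=3, num_bits=4, seed=42)
index.add("bird_1.jpg", [0.9, 0.1, 0.0])
index.add("car_1.jpg", [0.0, 0.1, 0.9])
hits = index.query([0.9, 0.1, 0.0], k=1)
```

A query touches a single bucket instead of the whole dataset, which is where the speedup comes from. Practical systems typically use several hash tables or probe neighboring buckets to recover some of the lost recall.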

OpenAnalytic, an unstructured data analysis tool on AnalyticDB, provides a wealth of artificial intelligence (AI) algorithm operators for image, video, and text analysis, such as gender recognition, age recognition, commodity attribute recognition, image target detection, voiceprint recognition, and text feature extraction. You can freely combine these AI operators to orchestrate algorithm pipelines based on your actual needs. For example, the following figure shows the feature extraction pipelines used in this article. After you create a pipeline through the pipeline_create user-defined function (UDF), you can run it in a distributed manner on an AnalyticDB cluster to obtain unstructured data analysis results.

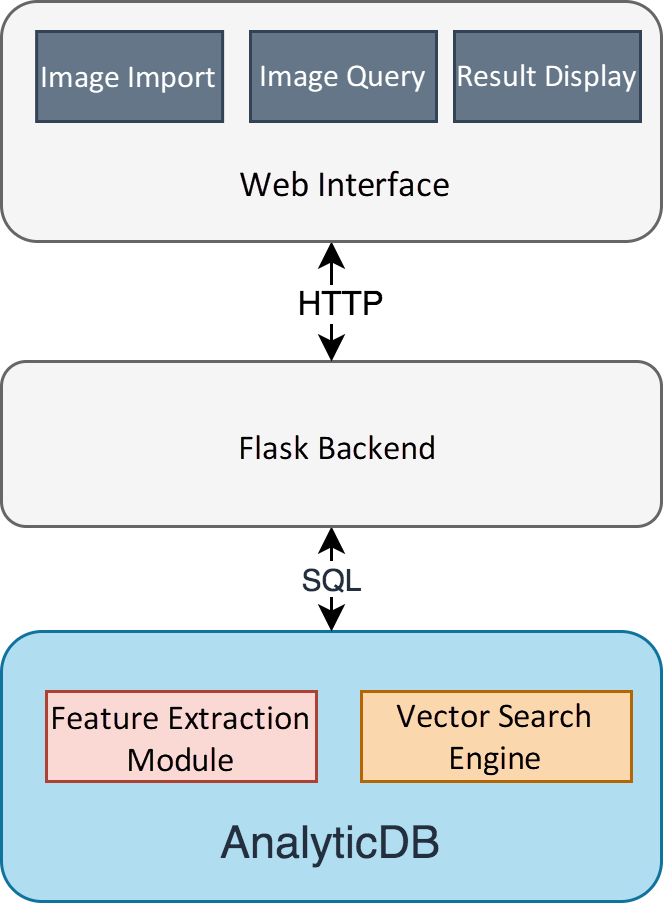

We built two demo systems by using AnalyticDB: the general image search system and the retrieval system. The source code of the demo systems is open source. To start a demo system, you only need to download the source code and activate AnalyticDB. The address of the source code is given at the end of this article. Only 1 RMB is required to experience AnalyticDB. The following figure shows the architecture of the demo, which is very simple. The application itself does not need to run any deep learning inference: AnalyticDB recognizes and stores the images and lets you query the data.

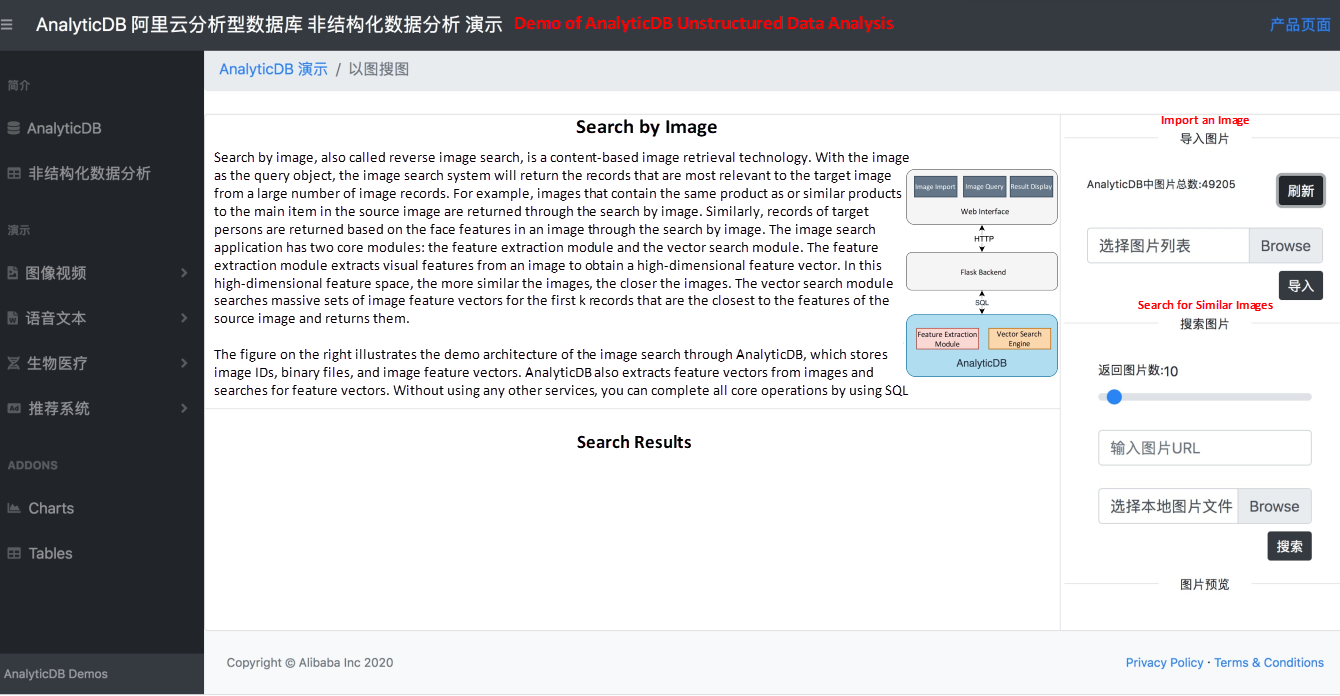

The following figure shows the image search system. You can import multiple local images to AnalyticDB to create the target album. You can search for images by selecting local images or entering the URLs of online images. In addition, you can specify how many of the best-matching images to return.

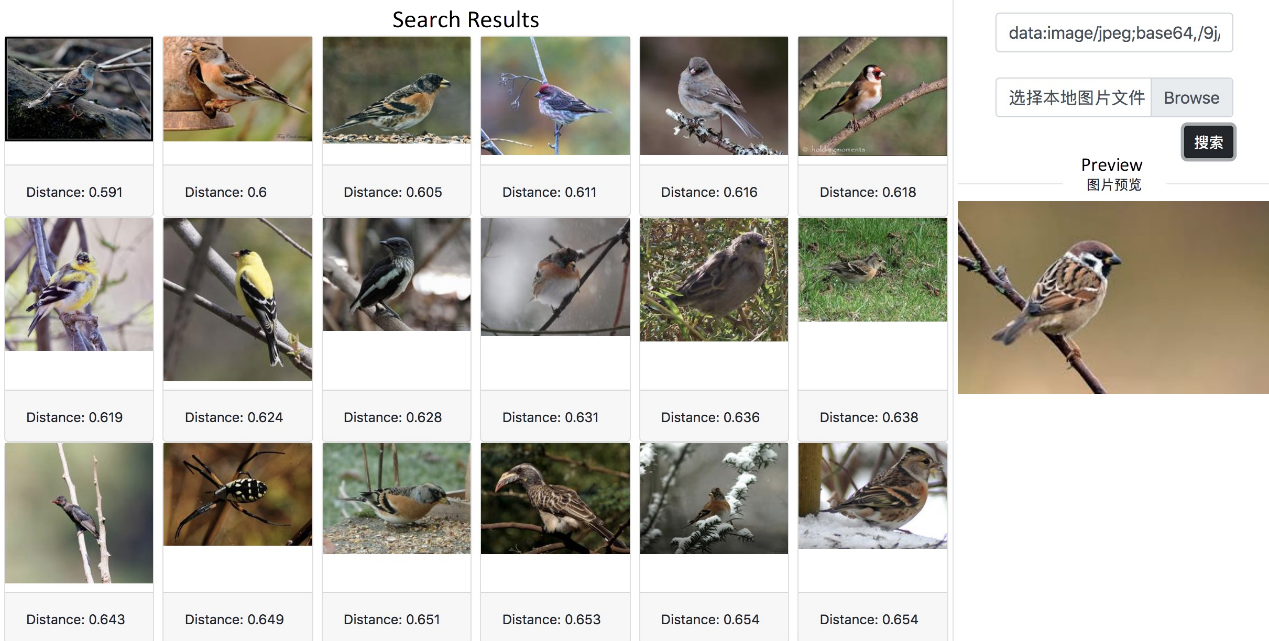

For demonstration, we imported nearly 50,000 images to AnalyticDB in advance. As shown in the following figure, when you search for images (preview on the right) based on a picture of a bird, all the returned images are photos of similar birds. The distance from the features of the source image appears at the bottom of each photo. The smaller the distance, the more similar the image.

Next, let's see how to use AnalyticDB to build the image search and recognition systems mentioned in the previous section.

Create the unstructured analysis plug-in OpenAnalytic and the vector search plug-in fastann for AnalyticDB.

    CREATE EXTENSION IF NOT EXISTS open_analytic;
    CREATE EXTENSION IF NOT EXISTS fastann;

We can use the following SQL statements to create a table that stores image names, binary files, and image feature vectors. You can also save image files to Object Storage Service (OSS) on Alibaba Cloud. However, we will not describe this in detail here.

    CREATE TABLE image_search_table (
        image_name TEXT NOT NULL,   -- Image file name
        image_data BYTEA NOT NULL,  -- Image binary file
        feature REAL[] NOT NULL,    -- Image feature vector
        PRIMARY KEY (image_name)
    );

Create an ANN index on the feature vector column to speed up queries.

    CREATE INDEX image_search_feature_index
    ON image_search_table USING ann (feature) WITH (dim = 1024);

Run the feature extraction pipeline, created earlier through pipeline_create, by using the following SQL statement. The input to this UDF is the pipeline name and the image byte array. The output is a JSON string that contains the image feature vector.

    SELECT open_analytic.pipeline_run_dist_random('general_feature_extractor',
                                                  <image_byte_array>);

After you obtain the image features, you can import the image data into the image_search_table created above.

    INSERT INTO image_search_table VALUES (<image_name>,
        <image_byte_array>, <image_feature>);

Search for the 10 entries that are nearest to the query image vector by using the following SQL statement.

    SELECT image_name, image_data, l2_distance(feature, <feature_vector>)
    FROM image_search_table
    ORDER BY feature <-> <feature_vector>
    LIMIT 10;

AnalyticDB is a high-concurrency, low-latency, real-time data warehouse on Alibaba Cloud that supports petabytes of data. It also supports instant multi-dimensional analysis and service exploration for trillions of data entries within milliseconds. AnalyticDB for MySQL is fully compatible with the MySQL protocol and the SQL:2003 standard. AnalyticDB for PostgreSQL supports SQL:2003 and is highly compatible with the Oracle syntax ecosystem.

Vector search and unstructured data analysis are advanced features of AnalyticDB. Both products support vector search, similarity query, and recommendation systems for images, human bodies, and vehicles. In actual application scenarios, AnalyticDB can query billions of vector data entries and respond within milliseconds. AnalyticDB has been widely used in major projects across multiple cities.

In typical application systems that involve vector search, developers often store vector data in a vector search engine, such as Faiss, and store structured data in a relational database. This means you have to go back and forth between the two systems during queries. Moreover, this solution requires additional development effort and does not provide optimal performance. AnalyticDB supports the retrieval of both structured data and unstructured data (vectors), so you can use a single SQL interface to quickly implement image search and hybrid image + structured data search. In hybrid search scenarios, the AnalyticDB optimizer selects the execution plan based on data distribution and query conditions to achieve the best performance while maintaining recall. AnalyticDB-V adopts many innovative technologies, which are introduced in detail in our paper titled "AnalyticDB-V: A Hybrid Analytical Engine Towards Query Fusion for Structured and Unstructured Data". The paper has been accepted by the International Conference on Very Large Data Bases (VLDB), one of the three top database conferences.
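As a rough illustration of what a single SQL interface means for application code, the sketch below builds a parameterized top-k query against image_search_table and optionally adds structured filters. It is not taken from the demo source. The filter columns `category` and `brand` are hypothetical and do not exist in the table defined earlier. The `::real[]` cast and the pyformat placeholders (`%(name)s`, as accepted by DB-API drivers such as psycopg2) are assumptions that may need adjusting for your driver and AnalyticDB version.

```python
def to_pg_array(vector):
    """Format a list of floats as a PostgreSQL array literal, e.g. '{0.1,0.2}'."""
    return "{" + ",".join(repr(float(x)) for x in vector) + "}"


def build_search_query(feature, k=10, filters=None):
    """Build a parameterized top-k query against image_search_table.

    `filters` maps a column name to a required value. Column names are
    checked against an allow-list because identifiers cannot be passed
    as query parameters.
    """
    allowed = {"category", "brand"}  # hypothetical structured columns
    params = {"feature": to_pg_array(feature), "k": int(k)}
    where = []
    for column, value in sorted((filters or {}).items()):
        if column not in allowed:
            raise ValueError(f"unsupported filter column: {column}")
        where.append(f"{column} = %({column})s")
        params[column] = value
    sql = (
        "SELECT image_name, l2_distance(feature, %(feature)s::real[]) AS distance\n"
        "FROM image_search_table\n"
        + ("WHERE " + " AND ".join(where) + "\n" if where else "")
        + "ORDER BY feature <-> %(feature)s::real[]\n"
        "LIMIT %(k)s;"
    )
    return sql, params


sql, params = build_search_query([0.1, 0.2], k=5, filters={"category": "shoes"})
print(sql)
```

With a DB-API connection, the pair would typically be passed to `cursor.execute(sql, params)`. The vector condition and the structured condition travel in one statement, so the database can plan them together instead of the application merging results from two systems.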

Hybrid structured and unstructured information (image) retrieval is widely used in practical applications. AnalyticDB also provides advanced image and text analysis algorithms, which can extract the features and labels of unstructured data. You can then analyze images and texts by using SQL.

This article described how to build an image search system and an image recognition system by using AnalyticDB. You can download the source code of the demo system at https://github.com/aliyun/alibabacloud-AnalyticDB-python-demo-AI. AnalyticDB also supports a variety of other AI algorithms, such as target detection, commodity recognition, voiceprint recognition, and gene recognition.

[1] Simonyan, Karen, and Andrew Zisserman. "Very deep convolutional networks for large-scale image recognition." arXiv preprint arXiv:1409.1556 (2014).

[2] He, Kaiming, et al. "Deep residual learning for image recognition." Proceedings of the IEEE conference on computer vision and pattern recognition. 2016.

[3] Howard, Andrew G., et al. "Mobilenets: Efficient convolutional neural networks for mobile vision applications." arXiv preprint arXiv:1704.04861 (2017).

[4] Iandola, Forrest N., et al. "SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5MB model size." arXiv preprint arXiv:1602.07360 (2016).

[5] Gordo, Albert, et al. "Deep image retrieval: Learning global representations for image search." European conference on computer vision. Springer, Cham, 2016.

[6] Radenović, Filip, Giorgos Tolias, and Ondřej Chum. "Fine-tuning CNN image retrieval with no human annotation." IEEE transactions on pattern analysis and machine intelligence 41.7 (2018): 1655-1668.

[7] Indyk, Piotr, and Rajeev Motwani. "Approximate nearest neighbors: towards removing the curse of dimensionality." Proceedings of the thirtieth annual ACM symposium on Theory of computing. 1998.

[8] Jegou, Herve, Matthijs Douze, and Cordelia Schmid. "Product quantization for nearest neighbor search." IEEE transactions on pattern analysis and machine intelligence 33.1 (2010): 117-128.

[9] Malkov, Yury A., and Dmitry A. Yashunin. "Efficient and robust approximate nearest neighbor search using hierarchical navigable small world graphs." IEEE transactions on pattern analysis and machine intelligence (2018).
