By Ding Ye, an expert on Alibaba Cloud intelligent products.

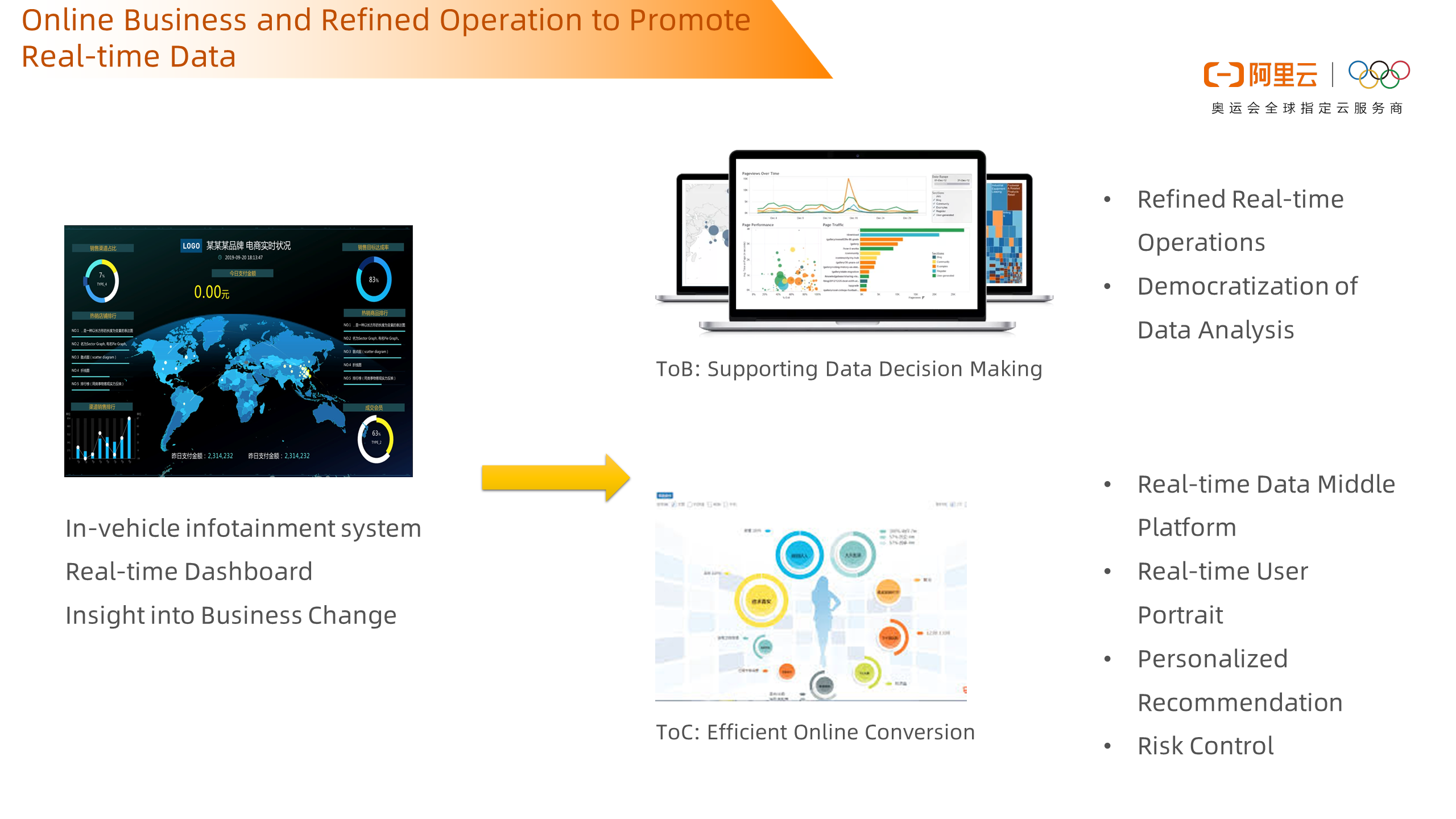

With the development of new internet-based technologies, there has been an increasingly strong demand for online and refined operations for businesses. In-vehicle infotainment system and real-time dashboard have played an important role in this trend. For the ToB business, these tools are necessary to support data decision-making, empowering the business with data analysis and real-time refined operations capabilities. For the ToC business, these tools help improve the efficiency of online conversion, which includes creating a real-time data middle platform, real-time user portraits, personalized recommendations, and real-time risk control requirements.

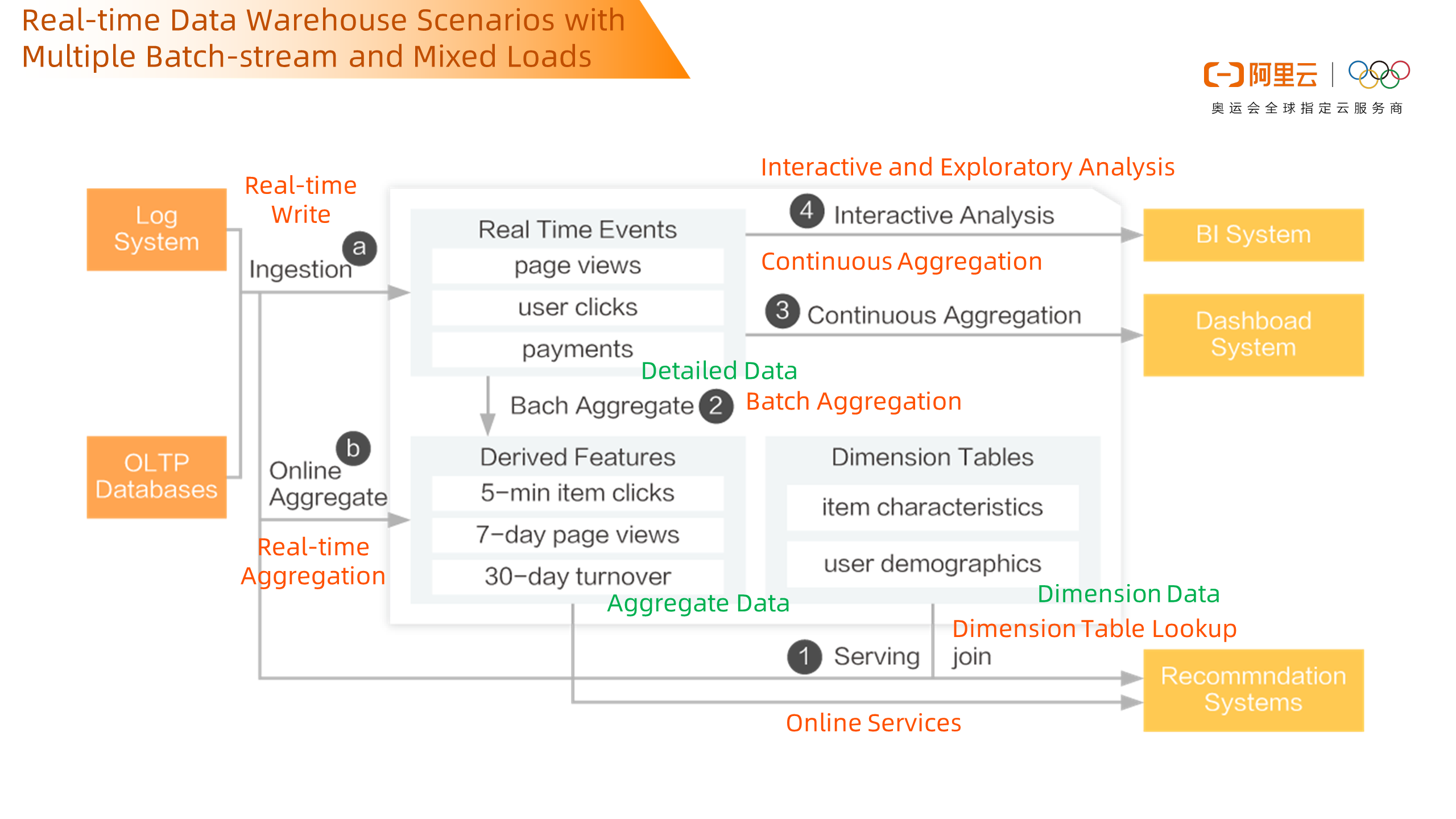

This is a common business requirement architecture. Data from the log system and the trading system are written to data warehouses in real-time. For written data, two traces are passed through, and one trace generates detailed data. The query is ad-hoc online by the front-end BI system. At the same time, it can be continuously displayed by the dashboard in real-time. Also, these detailed data will be aggregated in real-time to form aggregated data, such as page traffic details, and user click details will be aggregated into 5-minute commodity browsing records, 7-day browsing records, 30-day circulation records, etc. These data provide online services to the recommendation system, and this process will also be associated with dimension table data, such as user characteristics, commodity characteristics, etc. Aggregation is performed after association to serve the online system.

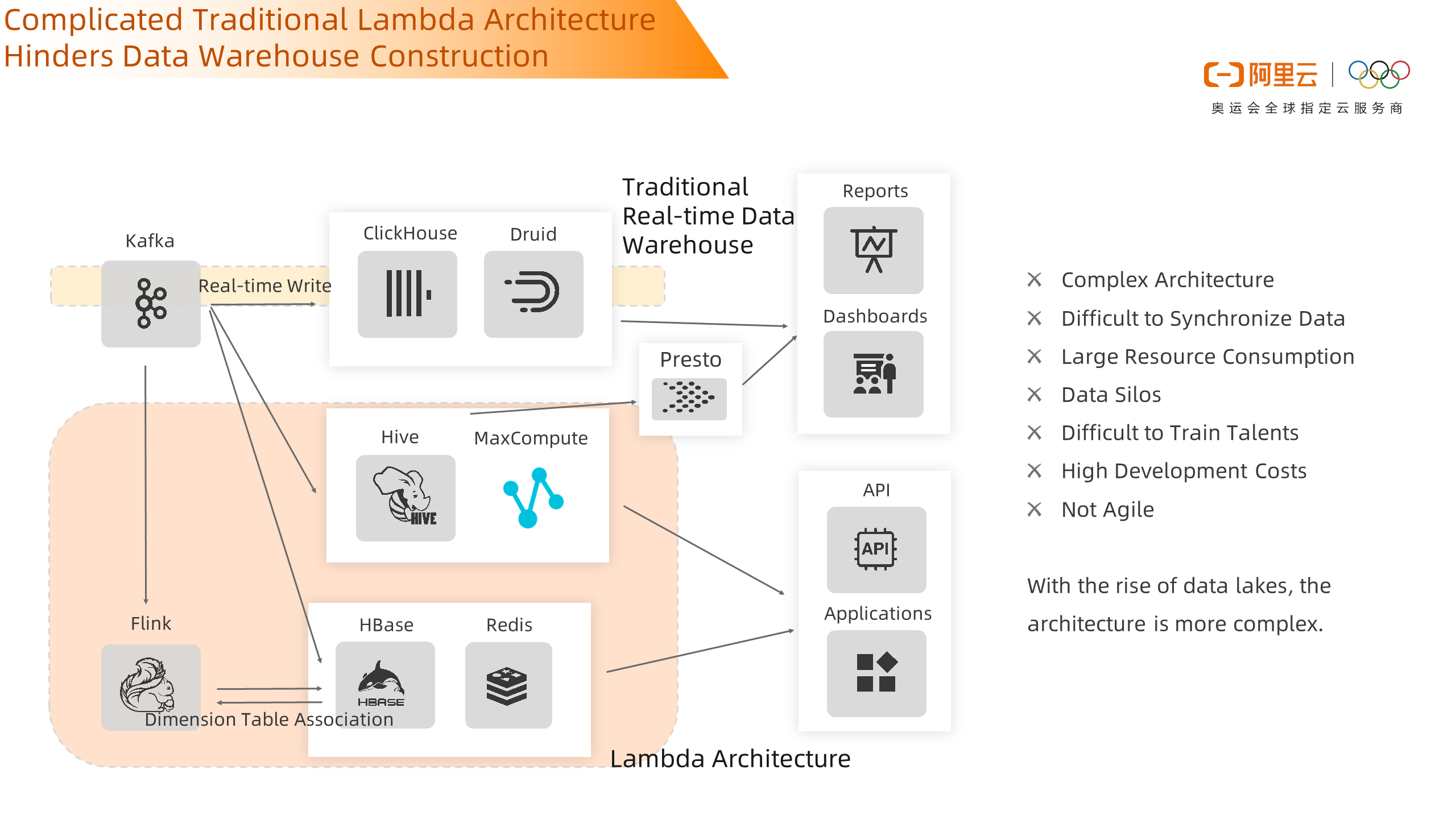

To meet the needs of businesses, Lambda architecture is generally used to build data warehouses. Customers write OLAP systems such as Clickhouse or Druid in real-time. At the same time, for online services, systems such as Hbase and Redis are used to support them. Finally, offline services are archived in offline data warehouses such as Hive and MaxCompute. Sometimes businesses need to integrate online analysis. Presto will be used to accelerate the query of these offline and online data, and then as a unified export, is provided to reports and dashboards for use. As mentioned before, there may be some requirements for real-time aggregation and dimension tables. These dimension tables are often stored in HBase. After real-time aggregating with transaction data, they become the number of views of SKU in 5 days or page traffic data in 7 days and others we mentioned. Then write them back into HBase or Redis, and face services such as API or mobile phone App in real-time. Naturally, we will find that there will be many lines in the whole trace, which will cause some problems, such as complex architecture, difficulty in synchronizing data, large resource consumption, data silos, and other problems. It is easy to find that in this architecture, data is moved many times, resulting in long processing traces and inconsistent data. As components increase, the development difficulty, architecture complexity, and O&M difficulty increase.

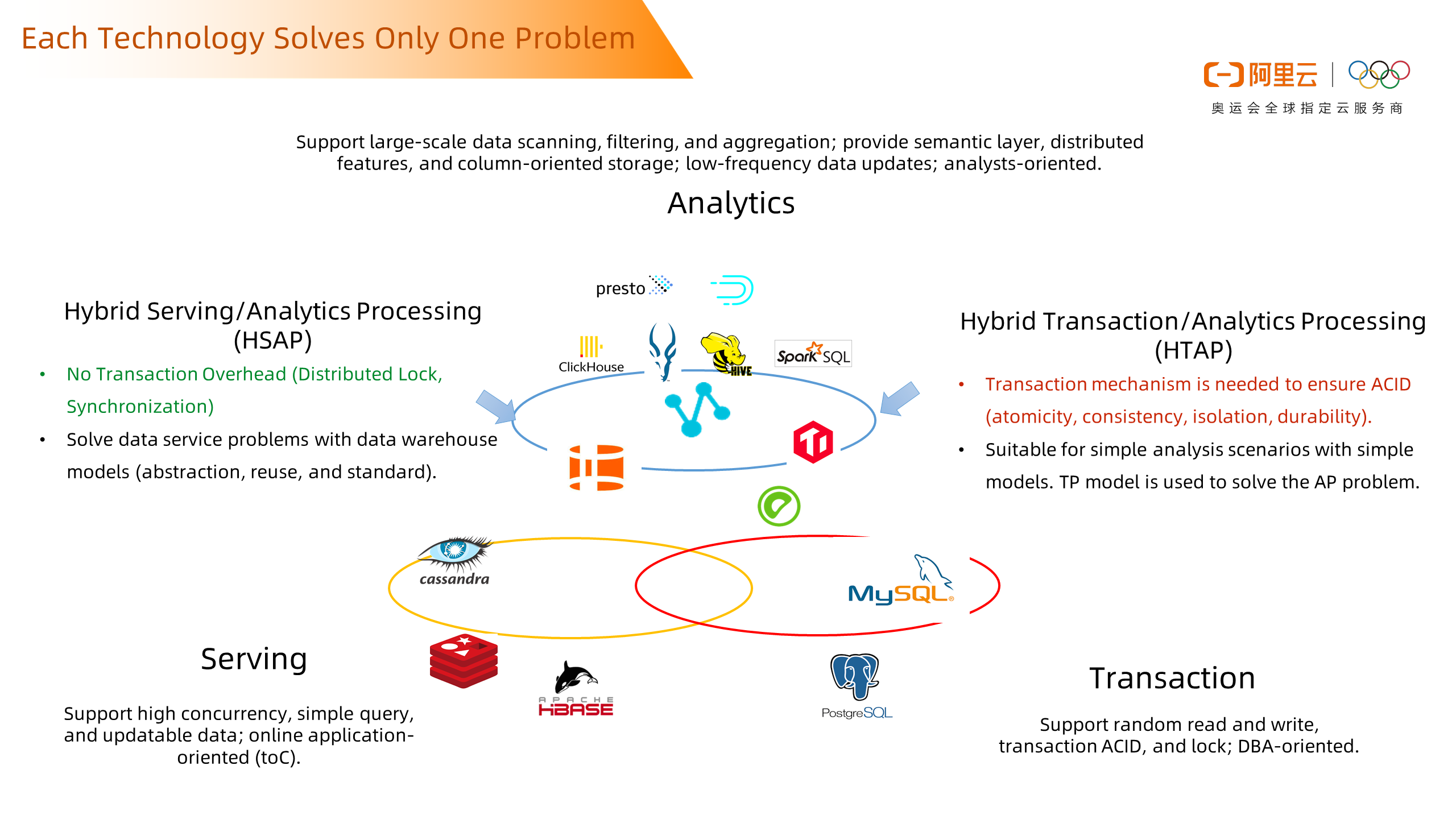

Under this framework, let's look at what problems each technology solves. We can roughly divide these technologies into three categories. We can think about the business requirements of the entire scenario, such as being suitable for aggregate computing, high throughput, and high availability.

The first type is the transaction database, the general transaction database is stored according to the row. For the transaction type of data, this has a good update ability. But for tens of millions of statistical queries and above, the consumption is large, so we do not use the transaction type database for analysis.

The second type is the OLAP system. This type of technology will make many optimizations for analysis scenarios, such as column storage technology, distributed technology, index technology, etc. This type of technology query is fast, but often insufficient in the update.

The third type is also common in big data analysis scenarios. We define them as serving systems. They need to provide online services and have high throughput and ultra-fast queries with flexibility sacrificed, such as document databases or KV query databases. The query and update efficiency for Key/Value is high.

The existing architecture is to split different businesses into different systems for storage according to the characteristics of the business. Data is exchanged in each system. Each data exchange brings the cost of data relocation, the possibility of data inconsistency, and the complexity of data development. Therefore, we innovate in many fields. The first type is to innovate in TP and AP fields. In the mixed load scenario, technology is used to solve loads of TP and AP and a system support both transactions and analysis. This state is ideal. We also hope that this system can land although it is still too ideal. Due to supporting transactions, there will be more lock overhead, then there will be higher cost and more load on the entire concurrent query and update. There can be some innovations from the left side. We found that the most obvious thing on the left side is that it does not support transactions. If you don't need so many transactions, then you don't need such locks. Then, it is more likely to support higher query performance and provide stronger write and update capabilities. Perhaps the technology on the left can cover the analysis and service integration scenarios we mentioned above.

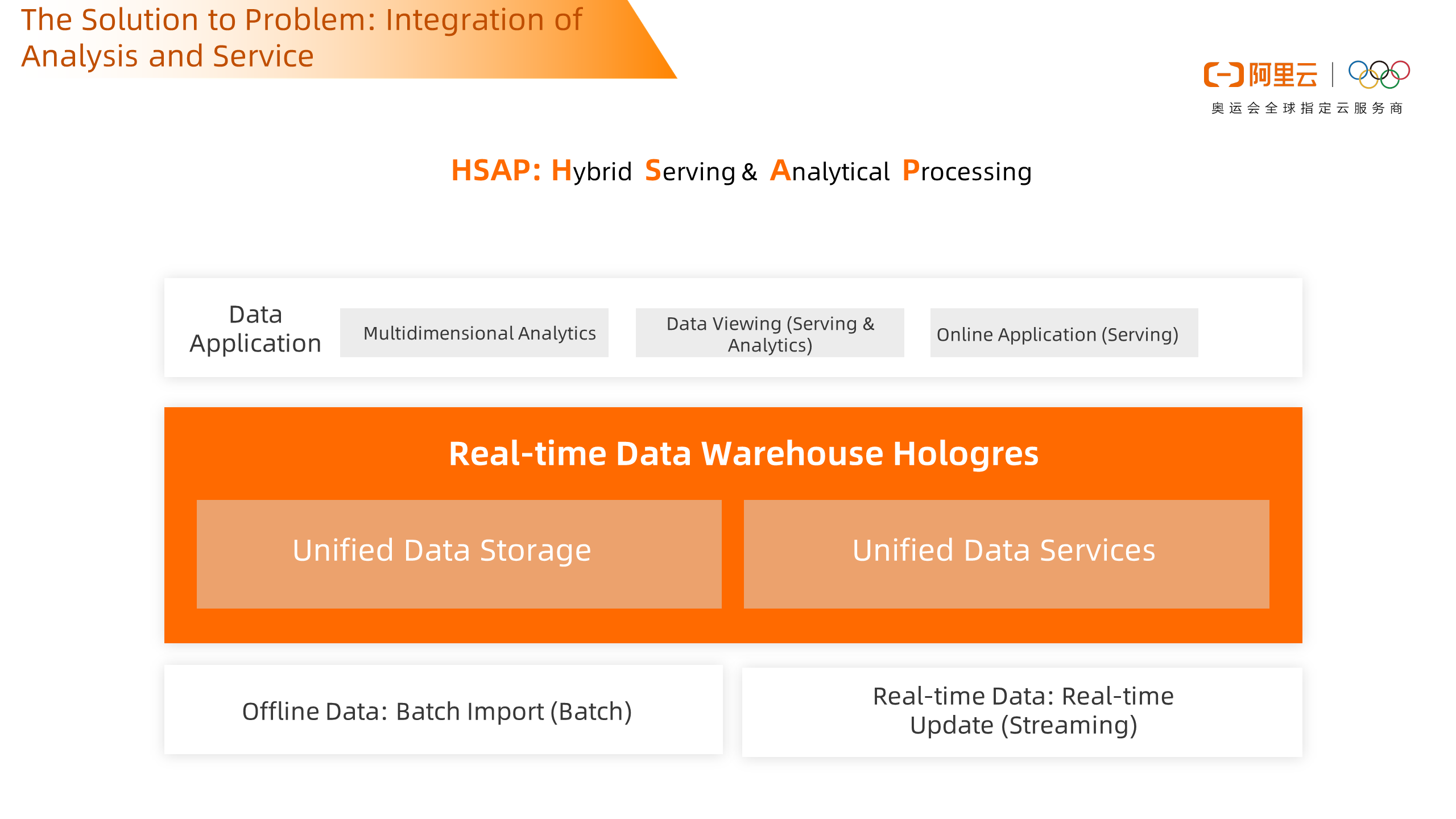

Hologres is a product that integrates analytics and services as described on the left. A system supports multiple scenarios. It supports OLAP analysis, point check, and online services. It also supports offline data import and real-time data updates. This is in the true sense to achieve the integration of analysis and services.

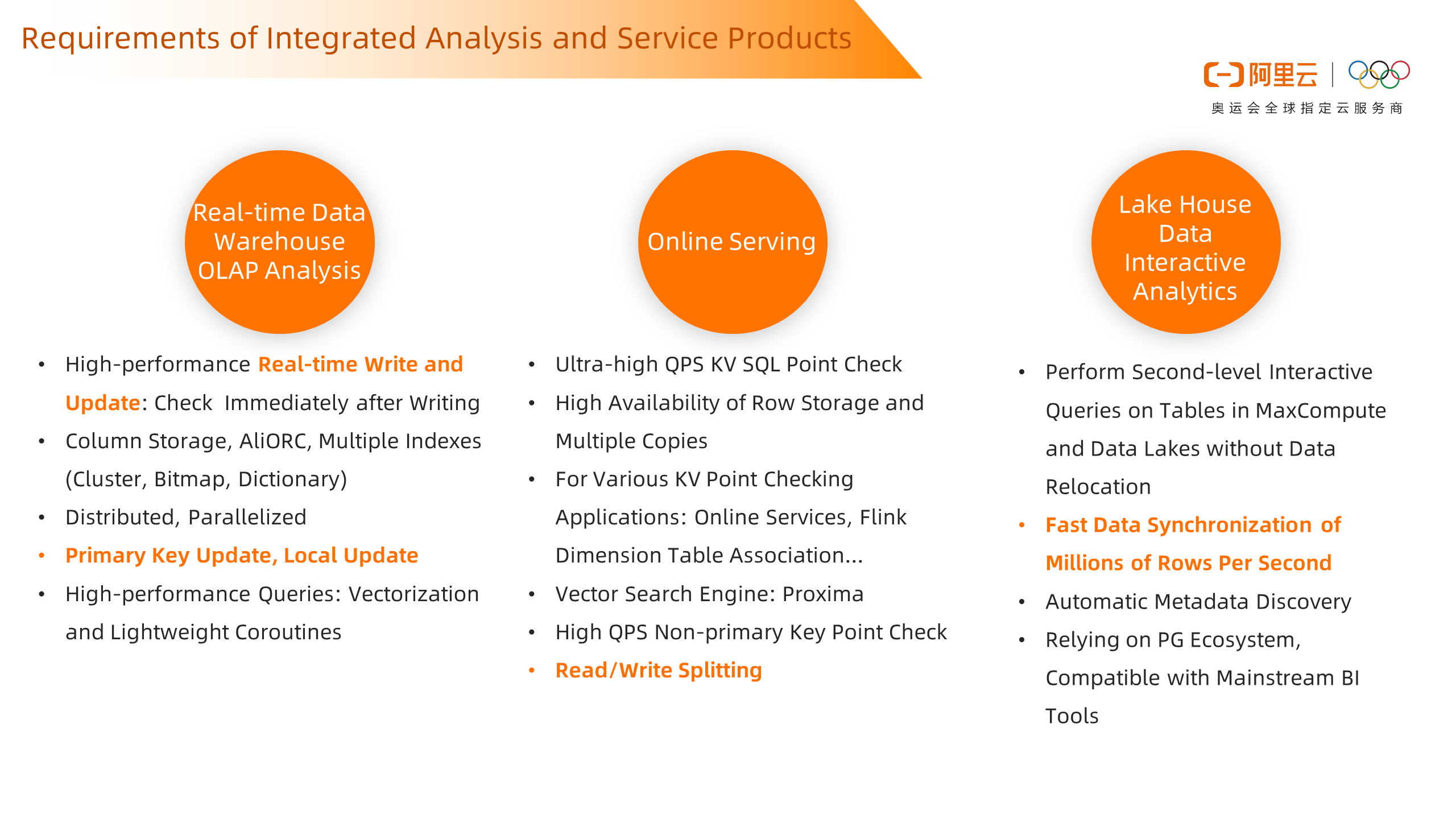

The capabilities of the product are related to requirements. In OLAP analysis scenarios, we provide high-performance real-time write and update capabilities, which can be checked upon writing. We use column storage, compression, indexing, and other technologies to support high-performance queries and analysis. It also supports full update and local update scenarios based on primary keys. This capability is especially important in real-time scenarios where data usually lies in OLTP transaction systems. Data in transactional databases usually have primary keys. The setting of primary keys can also avoid repeated writing of dirty data. Therefore, the update capability of primary keys is becoming more and more important in real-time scenarios. At the same time, in online service scenarios, we support row storage, which can provide tens of millions of QPS key /value point query capabilities, support the high availability of multiple copies of row storage data, and ensure the high availability of services. Service scenarios are sensitive and require stronger resource isolation to ensure service stability. Therefore, we now provide a read /write splitting architecture to avoid the impact of high-throughput writes on reads. Finally, in the data lake analysis scenario, we can accelerate the offline data of MaxCompute and analyze the data in MaxCompute without data relocation. In addition, we can support the rapid synchronization of millions of rows of data per second to reduce the data latency in scenarios such as offline re-brushing.

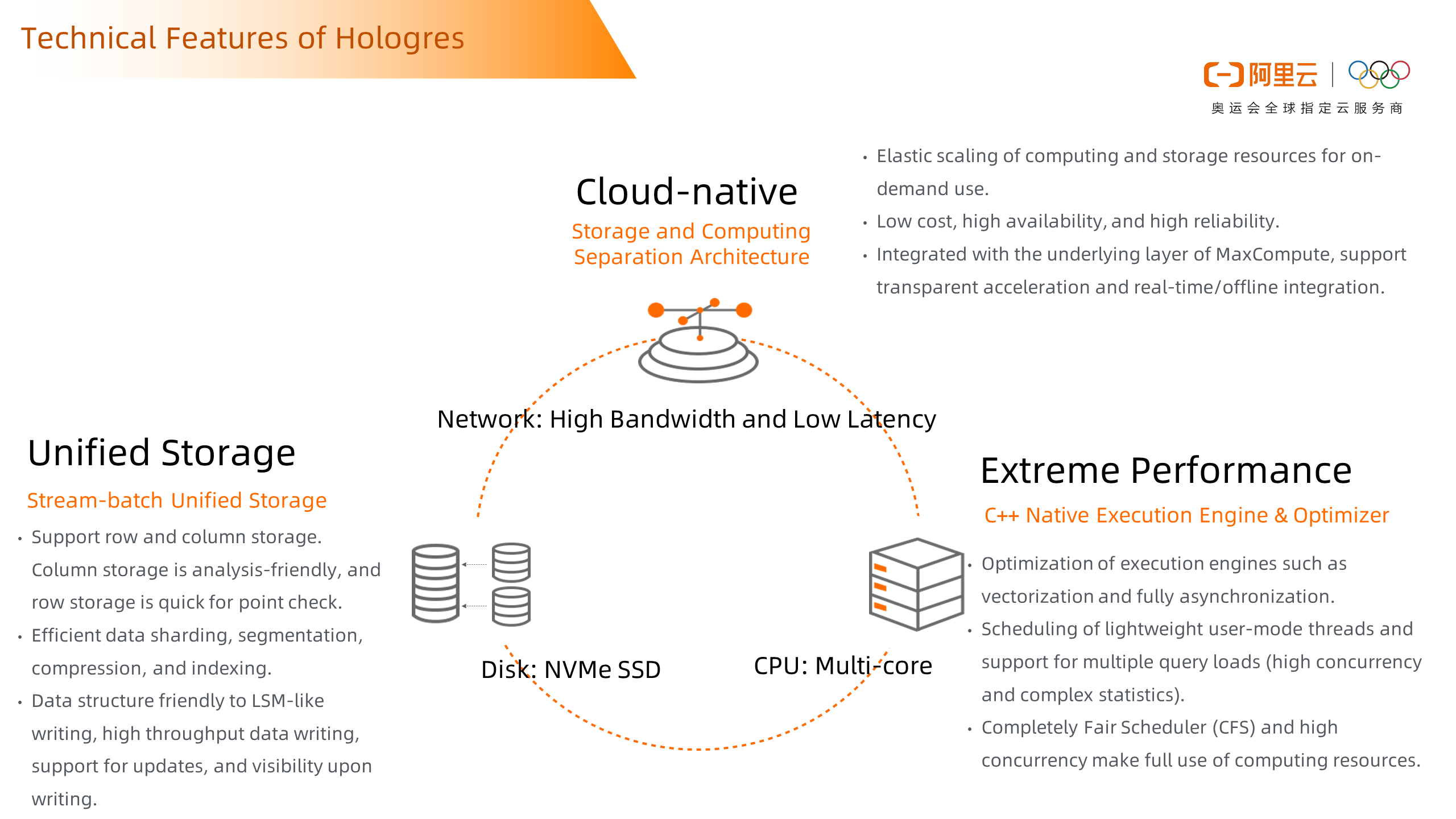

Why can Hologres do this? It is not so mysterious. It is due to the development of IT technology and the increasing network bandwidth. The architecture of storage and computing separation uses the distributed file system – Pangu – proprietary to Alibaba Cloud. And the entire system can be made lighter, with multiple copies and high availability. In the event of an accident, you can quickly load data back from Pangu to restore services. The next step is storage. For data update scenarios, many systems in the past were designed according to scanning scenarios, so it is not suitable for the rapid update. Hologres underlying storage uses SSD storage media, which has stronger random reading and writing capabilities. The traditional system design for scanning scenarios can be put aside and row and column storage can be used to deal with different scenarios. The third is the multi-core of CPU. With more and more CPU cores, improving CPU utilization and exerting parallel computing capabilities can effectively improve performance. Hologres is developed using C++ and uses a fully asynchronous execution engine to maximize the performance of multi-core.

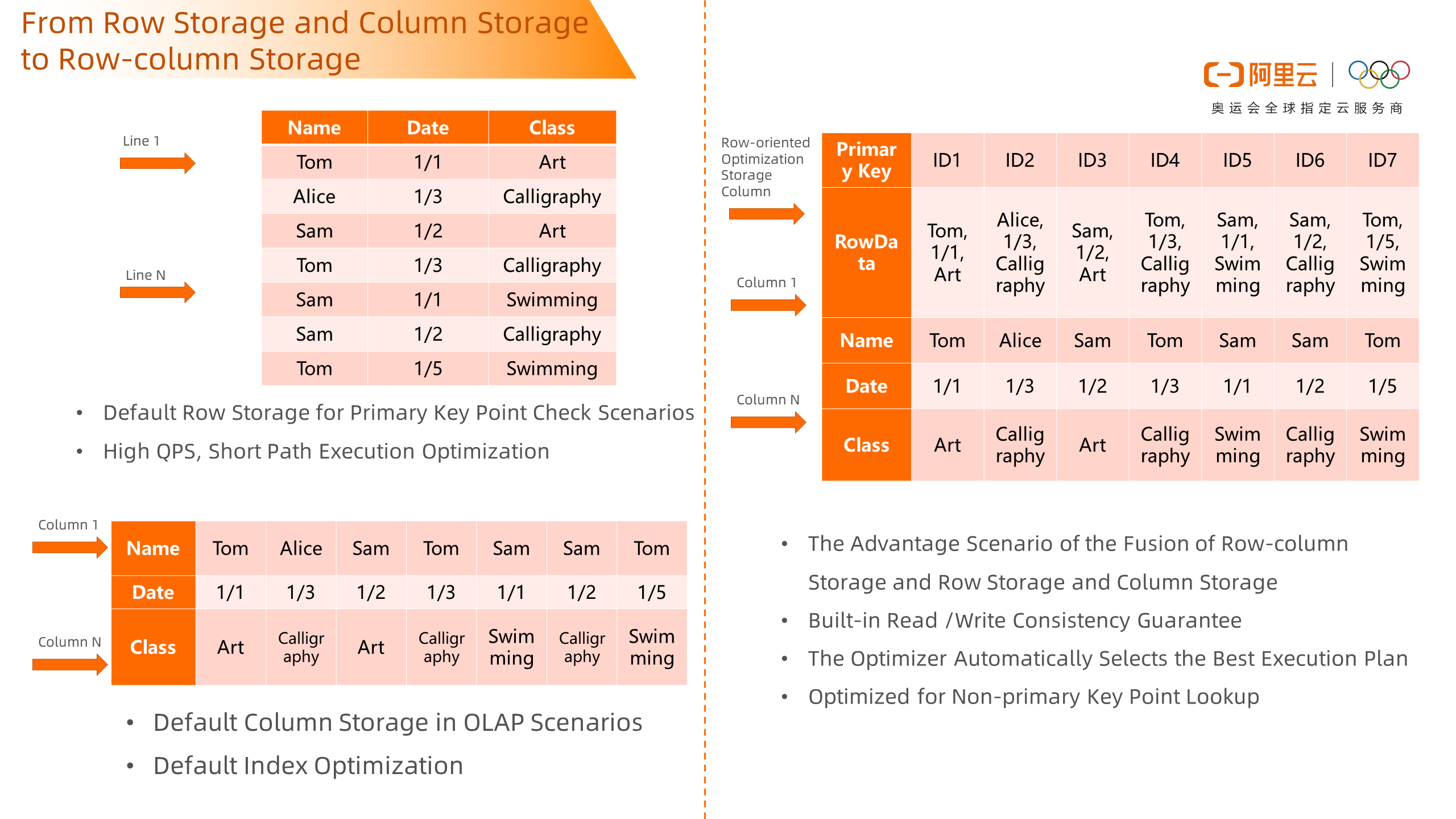

In previous versions, we supported row storage and data is stored by row. This is more suitable for key /value point query scenarios and supports query scenarios with high QPS. It also supports column storage, which stores data by column and is more suitable for OLAP scenarios. However, real scenarios are more complex. After a table is generated, it is difficult to support only one scenario. Therefore, we have introduced a row-column storage table. A table stores both row and column storage tables at the backend. Hologres ensures read and write consistency. The optimizer uses the most suitable storage to answer queries for suitable scenarios based on the characteristics of queries. This takes into account the advantages of both row and column storage scenarios.

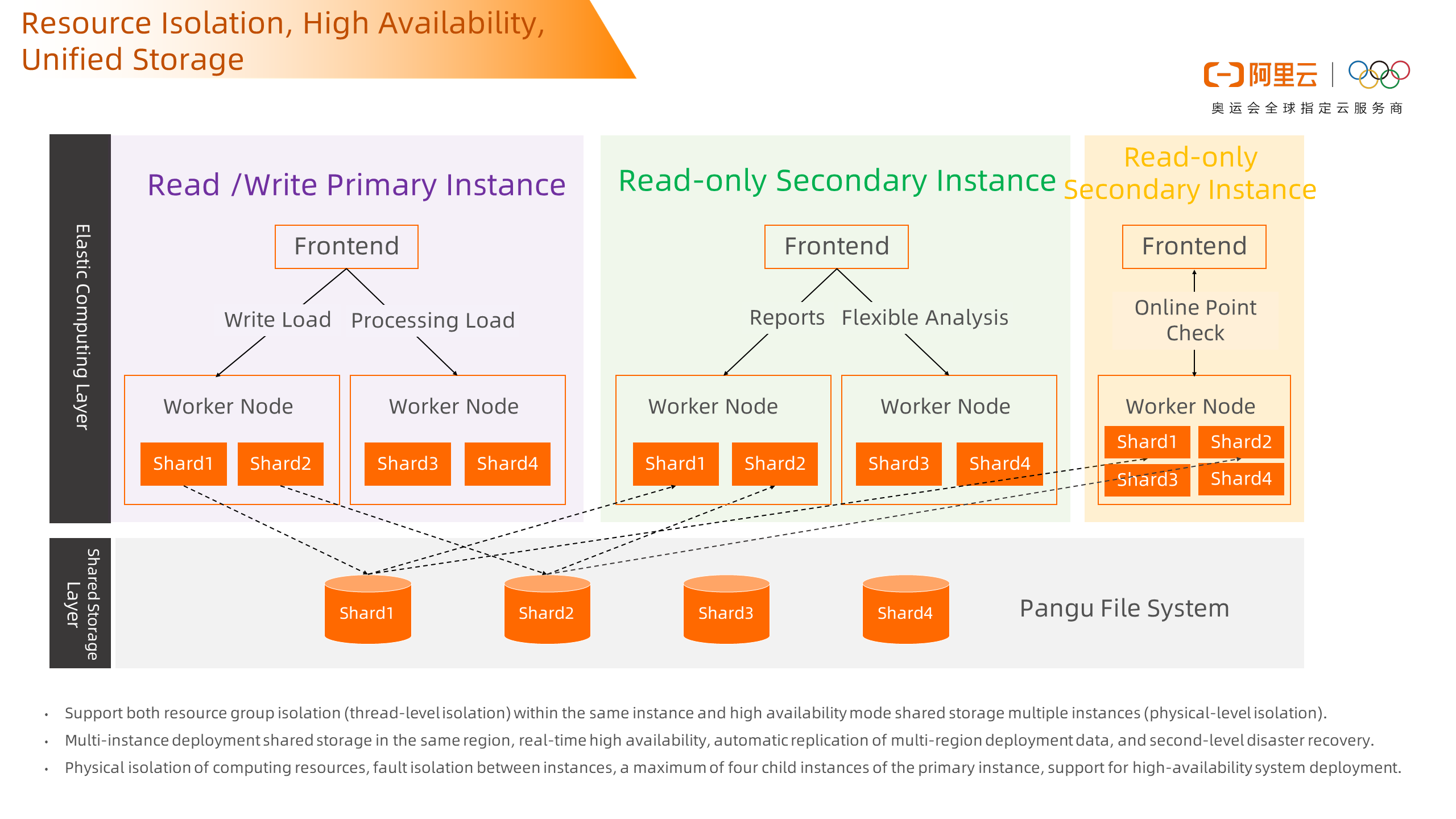

To improve availability and provide stronger resource isolation, we now support not only thread-level resource group isolation within the same instance, but also support shared storage high availability mode, where multiple instances share a share of storage. For primary instances that are read and written, high-performance write capabilities are provided for processing loads. Multiple read-only slave instances are configured at the same time to meet the requirements of different loads. For example, one read-only slave instance provides online OLAP analysis, and one read-only slave instance supports point-based analysis. They do not affect each other to achieve high availability and resource isolation.

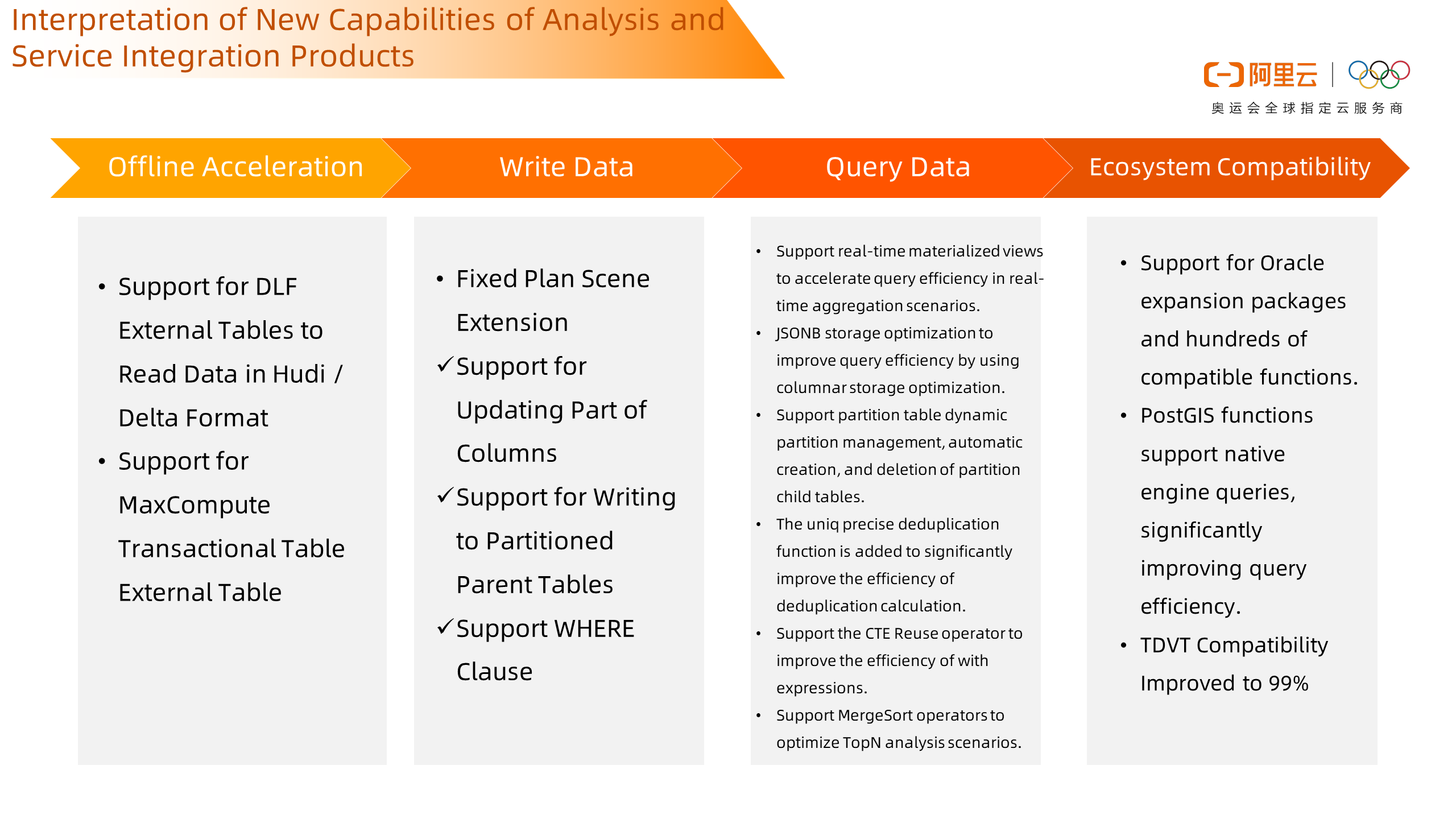

This is a preview of Hologres' future capabilities. In the upcoming 1.3 release, Hologres will provide more capabilities for offline acceleration in data lakes. It supports reading data in Hudi and Delta formats from OSS and supports the offline acceleration of transactional tables from MaxCompute. Data writing further extend the scenarios supported by Fixed Plan to update some columns and write partitions to parent tables. It supports real-time materialized views to accelerate real-time aggregate query scenarios. It also supports column store optimization of JSONB, which improves storage and query efficiency by adopting column storage. Meanwhile, it supports the automatic creation and deletion of partition sub-tables for many partition table scenarios that users use daily. This facilitates easy management and partition table. There are also many optimizations for queries. Finally, in terms of ecological compatibility, it supports the Oracle extension package, which is compatible with hundreds of compatible functions. At the same time, PostGIS supports pushing down to the native Hologres engine to improve query efficiency. As a big data product, it is usually used to connect BI systems. In the latest version, we have passed the official test set of Tableau by more than 99%.

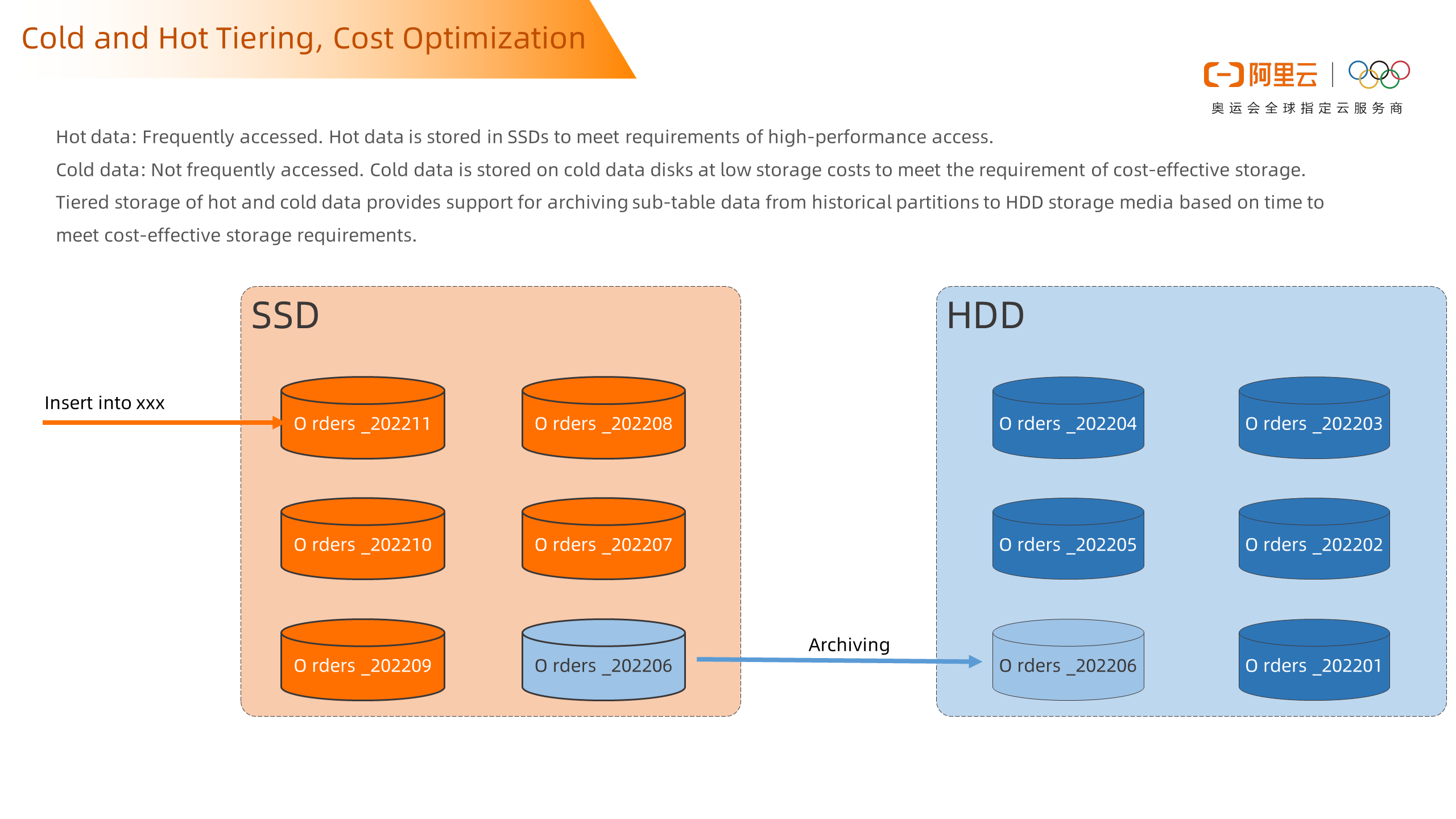

For several important functions, we will give some expansion here. In 1.3, to further help customers optimize costs, hot and cold tiered storage is provided. In the service, for the data in the partition table, the service usually accesses the data of the recent partition at a high frequency. Such data that needs high-frequency access is used in the storage medium of SSD to meet the requirements of high-performance access. Over time, hot data will gradually become cold data with lower access frequency. And the system can transfer the system to the storage medium of HDD according to the policy set by the user to optimize the storage cost.

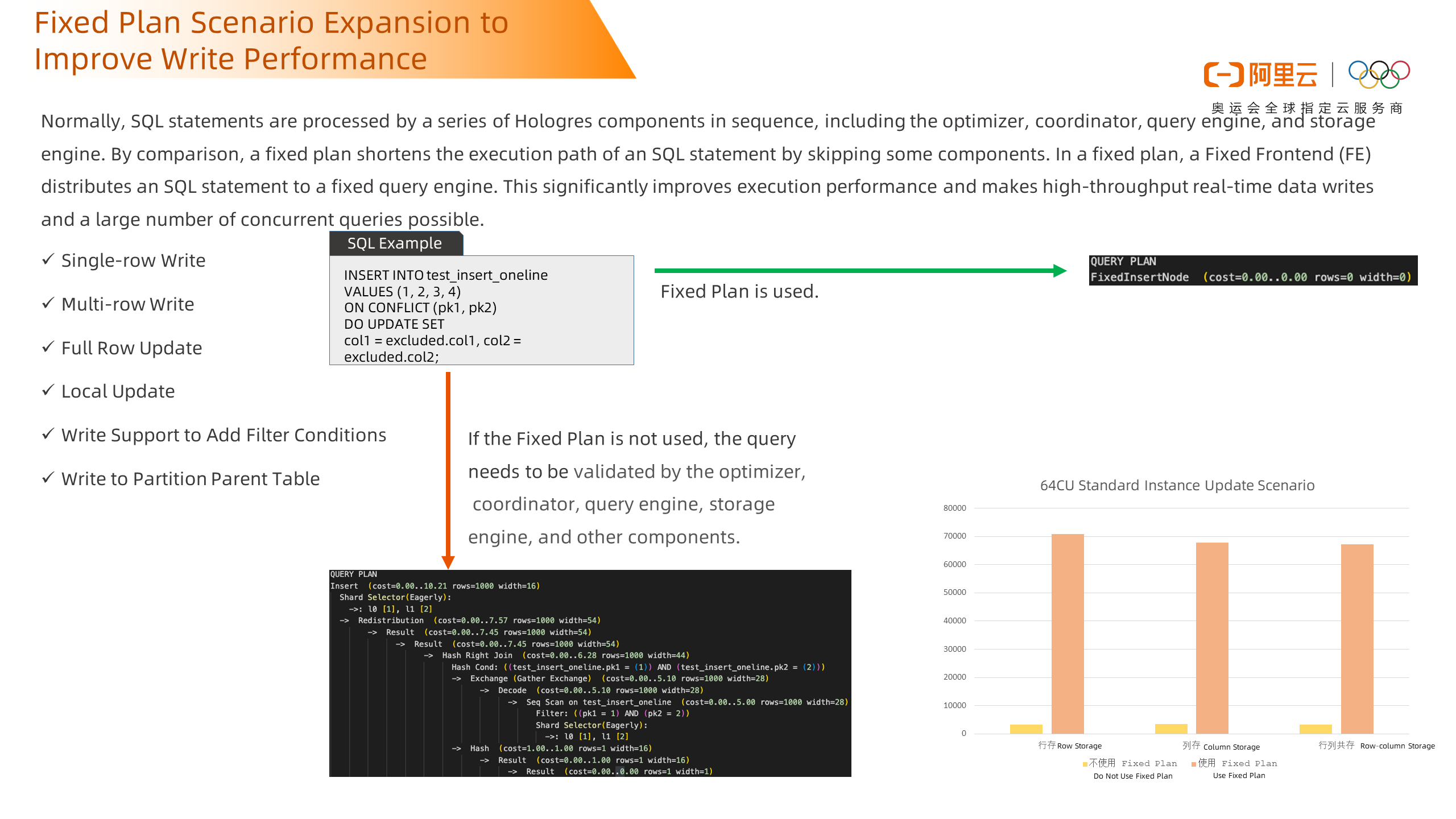

Fixed Plan is a unique execution engine optimization method in Hologres. Traditional SQL execution requires multiple components such as the optimizer, coordinator, query engine, and storage engine. For example, if such an SQL statement does not use FixedPlan, the execution plan is as follows. The entire process requires multiple components such as the optimizer, coordinator, query engine, and storage engine. Fixed Plan selects Short-Cut to optimize SQL statements, bypassing the overhead of optimizers, coordinators, and some query engines. Fixed FrontEnd is used to directly connect to Fixed Query Engine, which can double the SQL execution efficiency. It is a key optimization method to support high-throughput real-time write and high-concurrency queries. Therefore, if Fixed Plan is used, the corresponding execution plan is shown in the figure. The following is a comparison. For data update scenarios, it can be seen that RPS is improved by more than 20 times after Fixed Plan is used, regardless of row storage, column storage, or row and column storage. The orange part in the following figure is the RPS after using Fixed Plan and the yellow part is the RPS not using Fixed Plan.

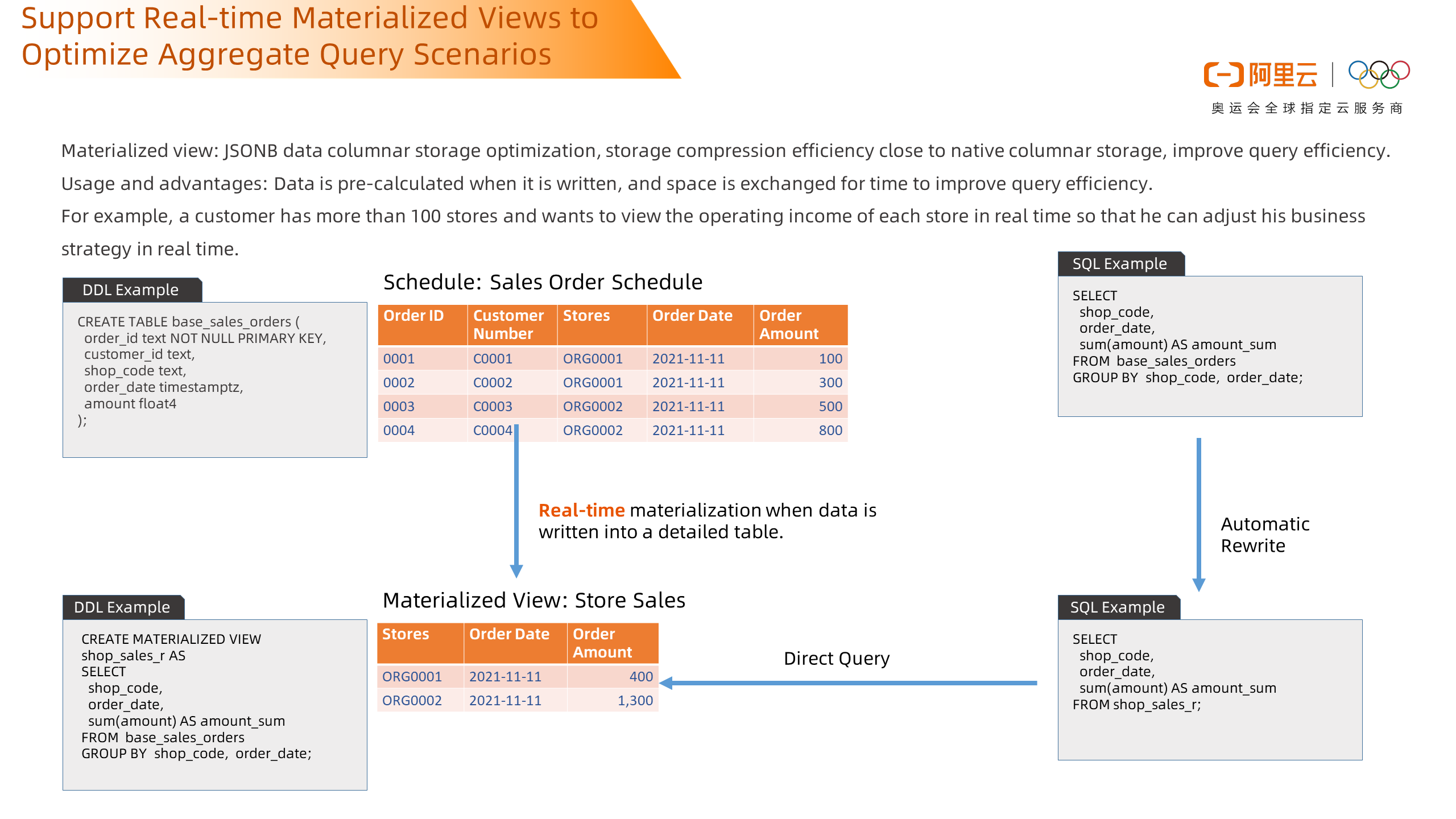

Real-time materialized views are supported in the new version. Materialized views are a common concept. In general databases, materialized views need to be refreshed regularly, causing certain data lag. The materialized view of Hologres does not need to be manually refreshed. Data is pre-calculated when it is written to the materialized view. For example, in a simple business scenario, a customer has more than 100 stores, and he wants to view the operating income of each store to adjust the business strategy in real-time. The details of the customer are shown as follows. The details of the order are stored, including the order number, customer number, store ID, order date, and order amount. After a materialized view is created and data is written to a detailed table, Hologres materializes the view in real-time. When a customer writes SQL, the system can automatically rewrite the SQL to enable SQL to query data of materialized views to improve query performance.

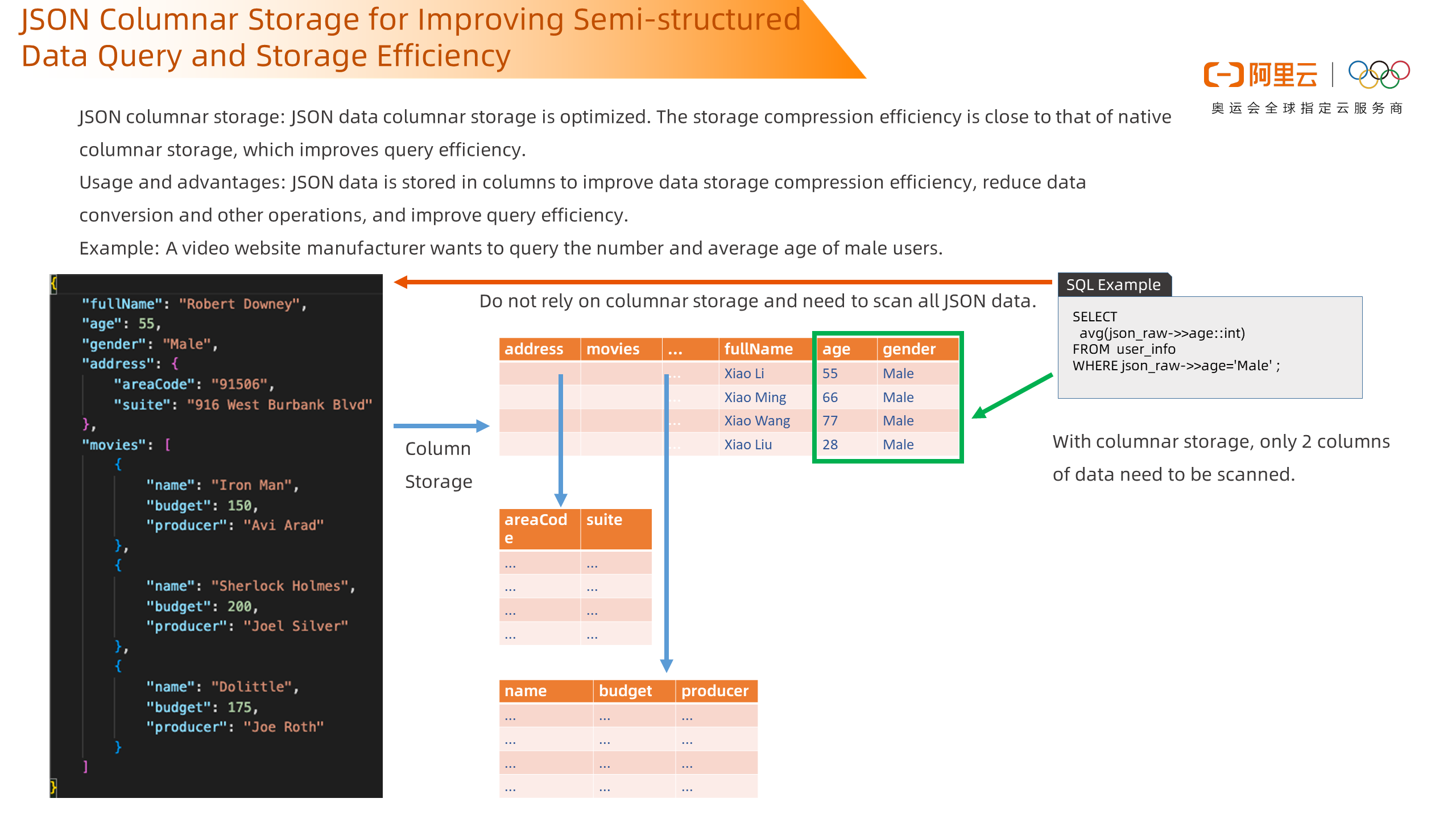

The last big feature is JSON columnar storage, which refers to the use of columnar storage to store JSON data. As the compression efficiency of columnar storage is high, it can effectively improve the storage efficiency of data and save storage space. For example, in a common scenario, a video website manufacturer wants to query the number and average age of male users. The data is stored in the following JSON type. The corresponding SQL is shown in the following figure. When you need to query results, you need to scan all JSON data, read all the data, and then summarize them to obtain the final result. If column-based storage is enabled, Hologres stores the data in Pangu based on the column-based storage mode, as shown in the following figure. If you need to query the number and average age of male users, you need to scan two columns of data, which can significantly improve the query efficiency.

Here is an actual optimization case: The upgrade process of the real-time data warehouse architecture of a head logistics company. Logistics companies have a strong demand for real-time decision-making and analysis, as well as traffic peaks that are promoted by regular marketing. The system load fluctuates greatly. At the same time, they need to support many 2c scenarios, which require high service responsiveness. Before the architecture upgrade, the enterprise mostly adopted some traditional RDS architectures to support real-time query and monitoring of online businesses, including refreshing the logistics status of each package.

However, such an architecture is insufficient for real-time performance. The data update efficiency of orders is low, and the updated trace is long, which is unable to meet the needs of real-time monitoring and will reduce the distribution efficiency of logistics. At the same time, complex correlation calculations are often required between multiple metrics. The query efficiency is relatively slow and fails to meet the requirements of real-time business decision-making.

Another weakness of the architecture is insufficient stability. When multiple services are queried with high concurrency, the overall latency increases, which affects the stability of the service. During Double 11, the traffic that needs to be undertaken will be several times higher than the daily traffic. The original system is unable to withstand the sudden traffic increase, which will require a lot of additional manual O&M.

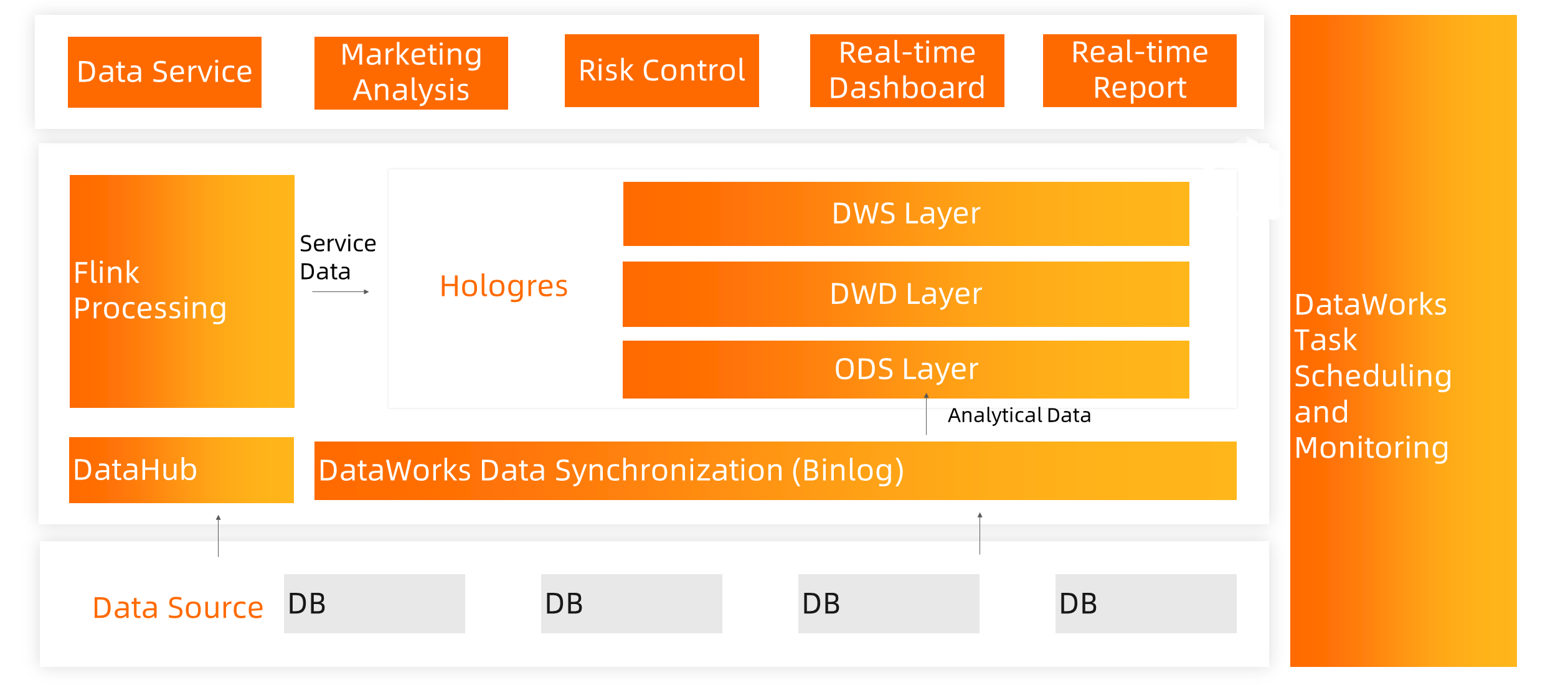

Therefore, we upgraded the real-time data warehouse architecture for users and replaced the original data warehouse architecture with Flink and Hologres. For high-frequency access to service data, Flink is used to consuming data from DataHub and directly store the computing results in Hologres. For some complex-queried analytical data, DataWorks is used to read upstream RDS binlogs and build hierarchical ODSDWDDWS and other data in Hologres. After that, the final aggregate data is connected to upper-layer applications to realize high-concurrency and fast queries.

This solution uses a hybrid model that integrates analysis and services. It not only uses Flink stream computing capabilities to pre-process businesses but also makes full use of Hologres's powerful complex multi-dimensional query capabilities. It replaces traditional database software such as OLAP and RDS systems, simplifying the data architecture.

After the upgrade, the stability of the system has been greatly improved. Whether it is real-time data writing or reading, it presents strong stability. During the whole period of Double 11, zero failure rate was achieved, real-time business requirements were met, real-time dashboards such as the real-time collection of parts and operation transfer in the library were supported, and strong real-time data support was provided for operations.

The overall effectiveness has also been significantly improved, bringing a good logistics experience to users and improving the company's service level.

The peak traffic of Double 11 is thousands of times higher than the daily traffic. The elasticity capability of cloud-native Hologres is used to realize the dynamic expansion of resources, meet different requirements for resources, and reduce the cost of O&M.

New Capabilities of Alibaba Cloud Native Real-Time Offline Integrated Data Warehouse

137 posts | 21 followers

FollowApsaraDB - January 9, 2023

Alibaba EMR - July 9, 2021

ApsaraDB - November 17, 2020

Alibaba Cloud MaxCompute - January 21, 2022

Alibaba Cloud MaxCompute - January 22, 2021

Alibaba EMR - June 8, 2021

137 posts | 21 followers

Follow Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Hologres

Hologres

A real-time data warehouse for serving and analytics which is compatible with PostgreSQL.

Learn More MaxCompute

MaxCompute

Conduct large-scale data warehousing with MaxCompute

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn MoreMore Posts by Alibaba Cloud MaxCompute