Simple Log Service lets you consume data in real time by defining server-side processing rules with SPL. This topic describes the concept, benefits, use cases, billing rules, and supported consumers of rule-based consumption.

How it works

Rule-based consumption uses server-side SPL to preprocess and clean semi-structured log data. These operations include row filtering, column pruning, regex-based extraction, and JSON field extraction. This process delivers clean, structured data to your client. For more information about the SPL syntax, see SPL syntax.
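To make the four preprocessing operations concrete, the following minimal Python sketch reproduces them locally on two sample records. It is an illustration only: it does not use SPL or any Simple Log Service API. The field names (`method`, `status`, `debug`, `content`, `extra`) and the log layout are assumptions made for this example. With rule-based consumption, the equivalent work runs on the server, so the client receives only the cleaned rows.

```python
import json
import re

# Hypothetical sample logs; the field names are illustrative only.
raw_logs = [
    {"method": "POST", "status": "200", "debug": "x",
     "content": "user=alice latency=35ms", "extra": '{"region": "cn-hangzhou"}'},
    {"method": "GET", "status": "200", "debug": "y",
     "content": "user=bob latency=12ms", "extra": '{"region": "cn-beijing"}'},
]

# Regex with named groups, used for regex-based extraction.
CONTENT_PATTERN = re.compile(r"user=(?P<user>\w+) latency=(?P<latency_ms>\d+)ms")


def preprocess(logs):
    """Apply row filtering, column pruning, regex and JSON extraction."""
    result = []
    for log in logs:
        # Row filtering: keep only POST requests.
        if log["method"] != "POST":
            continue
        # Column pruning: keep only the fields the consumer needs.
        row = {"method": log["method"], "status": log["status"]}
        # Regex-based extraction from the free-text field.
        match = CONTENT_PATTERN.search(log["content"])
        if match:
            row.update(match.groupdict())
        # JSON field extraction from the embedded JSON string.
        row["region"] = json.loads(log["extra"]).get("region")
        result.append(row)
    return result


cleaned = preprocess(raw_logs)
print(cleaned)
```

Only the POST record survives, and it carries the structured `user`, `latency_ms`, and `region` fields instead of the raw `content`, `extra`, and `debug` columns.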

Both rule-based consumption and query and analysis are used to read data. For more information about the differences between them, see Differences between log consumption and log query.

Use cases

Rule-based consumption is ideal for stream computing and real-time computing scenarios that require data preprocessing. For example, you may need to perform row filtering, column pruning, or extract data by using regular expressions or JSON paths before you consume log data. This feature provides low-latency data consumption, typically within seconds. You can also configure a custom data retention period.

Benefits

- Reduce traffic costs when you consume data over the Internet.

  Server-side processing prevents the transmission of large volumes of unnecessary data, which reduces network traffic costs.

- Save local CPU resources and accelerate processing.

  For example, you can offload complex data calculations to Simple Log Service instead of running them on your local machine. This frees up local resources and accelerates your overall workflow.

Billing

- If your Logstore uses the pay-by-ingested-data billing mode, rule-based consumption is free of charge. However, you are charged for Internet read traffic if you pull data from a public endpoint of Simple Log Service. Traffic is calculated based on the compressed data size. For more information, see Billable items of the pay-by-ingested-data mode.

- If your Logstore uses the pay-by-feature billing mode, you are charged for server-side computation. You may also be charged for Internet traffic if you use a public endpoint of Simple Log Service. For more information, see Billable items of the pay-by-feature mode.

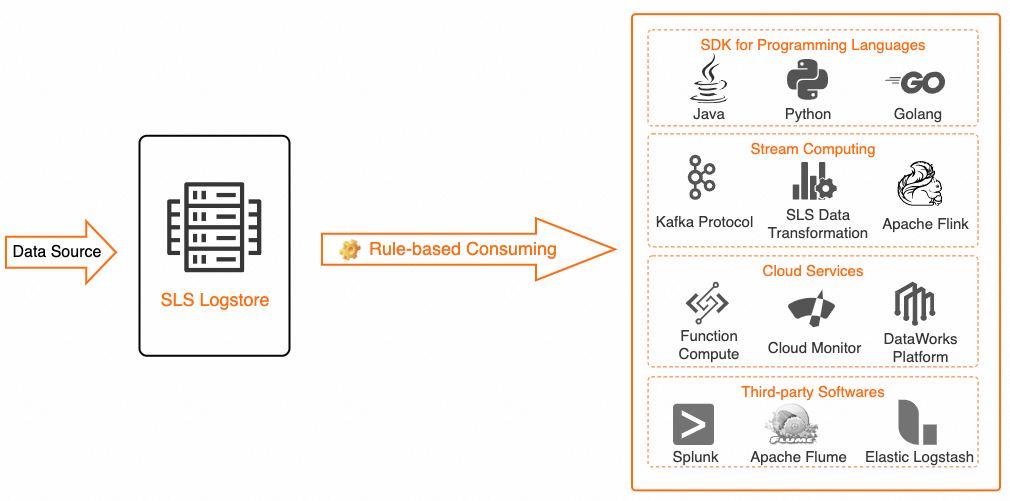

Consumers

The following table describes the consumers that are supported by rule-based consumption.

| Type | Consumer | Description |
| --- | --- | --- |
| Multi-language applications | Multi-language applications | Applications built in languages like Java, Python, and Go can consume data from Simple Log Service through a rule-based consumer group. For more information, see Consume data by using an API and Consume logs by using a consumer group. Best practice: Use an SDK to consume logs based on a consumption processor (SPL) |
| Cloud service | Realtime Compute for Apache Flink | You can use Realtime Compute for Apache Flink to consume data from Simple Log Service in real time. For more information, see Simple Log Service. |
| Stream computing | Kafka | To request this integration, submit a ticket. |

Usage notes

- Rule-based consumption requires complex server-side computation. Depending on the complexity of your SPL query and data characteristics, you may notice a slight increase in server-side read latency, such as 10 ms to 100 ms for 5 MB of data. However, the overall end-to-end latency (from data pull to the completion of local computation) is typically reduced.

- If SPL syntax errors occur or required source fields are missing, rule-based consumption may return incomplete data or fail. For more information, see Error handling.

- The maximum length of an SPL statement is 4 KB.

- The read limit for a shard is the same for both rule-based and regular consumption. For rule-based consumption, read traffic is calculated based on the raw data size before SPL processing. For more information about the limits, see Data reads and writes.
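The last note is easy to misread, so here is a minimal Python sketch of the accounting. The function name and the returned keys are hypothetical and chosen for this illustration. The only rule taken from this topic is that the shard read limit is measured against the raw data size, while the client receives only the processed data.

```python
def read_traffic_accounting(raw_compressed_mb, returned_compressed_mb):
    """Split one read into what counts toward the shard read limit
    and what is actually sent to the client (hypothetical helper)."""
    if raw_compressed_mb <= 0:
        raise ValueError("raw_compressed_mb must be positive")
    return {
        # Throttling is based on the raw size before SPL processing.
        "counted_against_read_limit_mb": raw_compressed_mb,
        # Only the SPL output travels over the network.
        "sent_to_client_mb": returned_compressed_mb,
        # Share of the raw volume that never leaves the server.
        "network_savings_ratio": round(1 - returned_compressed_mb / raw_compressed_mb, 4),
    }


# 100 MB of raw data filtered down to 20 MB by an SPL rule.
summary = read_traffic_accounting(100, 20)
print(summary)
```

In this example the full 100 MB counts toward the shard read limit even though only 20 MB is transferred, so filtering lowers network traffic but does not raise the amount of raw data a shard can serve.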

FAQ

- How do I resolve the `ShardReadQuotaExceed` error during rule-based consumption?

  To resolve this error:

  - Configure your consumer client to wait and retry when this error occurs.

  - Manually split the shard. This reduces the read speed per shard for new data.

- How is traffic throttled for rule-based consumption?

  The throttling policy for rule-based consumption is the same as for regular consumption. For more information, see Data reads and writes. The traffic for rule-based consumption is calculated based on the raw data size before SPL processing.

  For example, assume the compressed raw data size is 100 MB. After you filter the data by using the SPL statement `* | where method = 'POST'`, the compressed data returned to the client is 20 MB. However, the read traffic is calculated based on the original 100 MB.

- After you enable rule-based consumption, why is the outflow traffic low in the Traffic/min chart on the Project Monitoring page?

  The outflow traffic in the Traffic/min chart represents the data size after SPL processing, not the raw data size. If your SPL statement includes operations that reduce the data volume, such as row filtering or column pruning, the outflow value may be lower than the inflow.
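The wait-and-retry advice for the ShardReadQuotaExceed error can be sketched as a small backoff wrapper in Python. This is a minimal sketch under stated assumptions, not the SDK's own retry mechanism. `ShardReadQuotaExceedError` is a hypothetical stand-in for whatever exception your client library raises with that error code, and `pull` is any callable that performs one read. The delay values are arbitrary starting points.

```python
import random
import time


class ShardReadQuotaExceedError(Exception):
    """Hypothetical stand-in for the client error carrying ShardReadQuotaExceed."""


def pull_with_retry(pull, max_attempts=5, base_delay=0.2, max_delay=5.0,
                    sleep=time.sleep):
    """Call pull(); on a quota error, wait with jittered exponential backoff."""
    for attempt in range(max_attempts):
        try:
            return pull()
        except ShardReadQuotaExceedError:
            if attempt == max_attempts - 1:
                raise  # Give up after the last allowed attempt.
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter keeps several consumers from retrying in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))


# Demo with a fake pull function that is throttled twice, then succeeds.
calls = {"count": 0}


def fake_pull():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ShardReadQuotaExceedError("ShardReadQuotaExceed")
    return ["log-1", "log-2"]


waits = []  # Record the delays instead of actually sleeping.
logs = pull_with_retry(fake_pull, sleep=waits.append)
print(logs, len(waits))
```

If the error persists after several retries, the read demand on the shard probably exceeds its limit, and splitting the shard is the more durable fix.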