Traditional incremental log collection is not suited to the one-time collection of existing static files that use cases such as historical file collection, data migration, or batch data processing require. The Host Text One-time Collection feature lets you deploy collection configurations in bulk to a machine group through the console or an API. The resulting task collects the content of the specified static files once and then terminates automatically.

Scope

- LoongCollector version 3.3 or later.
- Supports host collection on Linux and Windows, but not container collection.

Collection configuration workflow

- Preparation: Create a Project and a Logstore. A Project is a resource management unit used to isolate logs from different services, and a Logstore stores logs.
- Configure a machine group (install LoongCollector): Install LoongCollector based on your server type and add it to a machine group. Use the machine group to manage collection nodes, distribute configurations, and monitor server health.
- Create and configure a one-time file collection rule:
  - Global and input configuration: Define the collection configuration name and specify the source and scope of log collection.
  - Log processing and structuring: Configure processing settings based on your log format.
    - Multiline logs: This feature handles log entries that span multiple lines, such as Java stack traces or Python tracebacks. Use a start-of-line regex to identify the start of each log entry, merging subsequent lines into a single record.
    - Structured parsing: Configure a parser plugin (such as regex, delimiter, or NGINX mode) to parse raw strings into structured key-value pairs. This allows you to query and analyze each field independently.
  - Log filtering: Configure collection blocklists and content filtering rules to filter for relevant log content, reducing redundant data transmission and storage.
  - Log categorization: Configure log topics to flexibly categorize logs from different services, servers, or source paths.
- Query and analysis configuration: Full-text indexing is enabled by default and supports keyword searches. We recommend enabling a field index on structured fields to improve search efficiency and allow for precise queries and analysis.
- Verify collection results: After you complete the configuration, verify that logs are collected successfully. If you encounter issues such as logs not being collected, heartbeat failures, or parsing errors, see the FAQ.

Prerequisites

Before you can collect logs, create a Project and a Logstore. If you already have them, skip this step and proceed to Step 1: Configure a machine group (install LoongCollector).

Create Project

Create Logstore

Step 1: Configure a machine group (install LoongCollector)

After you complete the prerequisites, install LoongCollector on the servers and add them to a machine group.

These installation steps apply only if the log source is an ECS instance in the same account and region as the Log Service Project.

If your ECS instance and Project are not in the same account or region, or if your log source is an on-premises server, see Install and configure LoongCollector.

Procedure

- On the Logstores page, click the icon next to the target Logstore name to expand it.
- Click . On the One-time Logtail Configuration tab, click Add Logtail Configuration.
- In the Quick Data Import dialog box, click Import Now on the One-time File Collection - Host card.
- On the Machine Group Configuration page, configure the following parameters:
  - Use Case: Host Scenario
  - Installation Environment: ECS
  - Configure Machine Group: The next action depends on whether LoongCollector is installed and a machine group exists.
    - If LoongCollector is installed and added to a machine group, select the machine group from the Source Machine Groups list and add it to the Applied Machine Groups list. A new machine group is not required.
    - If LoongCollector is not installed, click Create Machine Group. These steps guide you through automatically installing LoongCollector and creating a machine group.
      - The system automatically lists ECS instances that are in the same region as your Project. Select one or more instances from which you want to collect logs.
      - Click Install and Create as Machine Group. The system automatically installs LoongCollector on the selected ECS instances.
      - Enter a Name for the machine group and click OK.

      Note: If the installation fails or remains in a pending state, verify that the ECS instance is in the same region as the Project.
    - To add a server with LoongCollector already installed to an existing machine group, see Add a server to a machine group in the FAQ.
- Check heartbeat status: Click Next. The Machine Group Heartbeat Status section appears. Verify that the Heartbeat status is OK, which indicates a successful connection. Then, click Next to continue to the Logtail configuration.

  If the status is FAIL, the initial heartbeat may take a moment to establish. Wait about two minutes, then refresh the status. If the status remains FAIL, see Machine group heartbeat is FAIL in the FAQ for troubleshooting.

Step 2: Configure one-time file collection

After you complete the LoongCollector installation and machine group configuration, go to the Logtail configuration page to define log collection and processing rules.

1. Global and input configuration

Define the collection configuration's name and specify the log collection source and scope.

Global configuration:

- Configuration name: Enter a custom name for the collection configuration. The name must be unique within the Project and cannot be modified after creation. The naming rules are as follows:
  - Can contain only lowercase letters, digits, hyphens (-), and underscores (_).
  - Must start and end with a lowercase letter or a digit.
- Execution timeout: The default is 600 seconds (10 minutes), and the valid range is 600 to 604,800 seconds (10 minutes to 7 days). If a task exceeds this timeout, the system stops it and does not collect any remaining data.

  Important: When you update a configuration, its effective period is reset. To avoid duplicate tasks or unexpected data reporting, ensure that the machine group scope is correct and that the previous task execution did not exceed the specified execution timeout.
- Force task rerun on update: This option is turned off by default.
  - Off:
    - When you update any collection parameters other than the execution timeout or the input configuration, the system resumes the current collection progress instead of restarting the task. This ensures collection continuity.
    - If you modify the execution timeout or the input configuration, the system reruns the collection task.
  - On: When you update the collection configuration, the collection task reruns from the beginning. This ensures all data is processed and reported using the new settings. Note: Previously collected data is not deleted. To clear previously collected data, see Log Service soft delete.
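The naming rules and the timeout range above can be checked before a configuration is submitted. The following is a minimal sketch, not part of LoongCollector or the Log Service API: the function name `validate_global_config` is hypothetical, and the pattern encodes only the rules stated above (no length limit is assumed because the text does not state one).

```python
import re

# Rules from the text: lowercase letters, digits, hyphens, and underscores only;
# the first and last characters must be a lowercase letter or a digit.
NAME_PATTERN = re.compile(r"[a-z0-9](?:[a-z0-9_-]*[a-z0-9])?")
MIN_TIMEOUT_S = 600        # 10 minutes
MAX_TIMEOUT_S = 604_800    # 7 days


def validate_global_config(name, timeout_s=600):
    """Return a list of human-readable problems (empty if the values look valid)."""
    errors = []
    if not NAME_PATTERN.fullmatch(name):
        errors.append(f"invalid configuration name: {name!r}")
    if not MIN_TIMEOUT_S <= timeout_s <= MAX_TIMEOUT_S:
        errors.append(f"execution timeout out of range: {timeout_s}")
    return errors


if __name__ == "__main__":
    print(validate_global_config("nginx-history_2024", 3600))
    print(validate_global_config("-Bad-Name", 60))
```

Running the check locally only catches obvious mistakes early; the console or API remains the authority on what is accepted.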

Input configuration:

- Type: One-time file collection (available in LoongCollector 3.3 and later).
- File path: The path from which logs are collected.

  Note: LoongCollector determines the list of files and their sizes for collection when it retrieves the configuration. It does not collect new files or content appended after this point.
  - Linux: The path must start with a forward slash (`/`). For example, `/data/mylogs/**/*.log` collects all files with the .log extension in all subdirectories of `/data/mylogs`.
  - Windows: The path must start with a drive letter. For example, `C:\Program Files\Intel\**\*.Log`.
- Maximum directory monitoring depth: The maximum directory depth that the `**` wildcard in the file path can match. The default is 0, which means it monitors only the current directory.
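The note above says that the file list and file sizes are fixed when the configuration is retrieved. The sketch below illustrates that idea only; it is not LoongCollector's implementation. The helper names `snapshot_files` and `read_snapshot` are hypothetical, Python's `**` semantics may differ from LoongCollector's, and the maximum directory depth setting is not modeled.

```python
import glob
import os


def snapshot_files(pattern):
    """Record every matching file and its size at snapshot time."""
    return {
        path: os.path.getsize(path)
        for path in glob.glob(pattern, recursive=True)
        if os.path.isfile(path)
    }


def read_snapshot(snapshot):
    """Yield (path, data), reading only the bytes that existed at snapshot time."""
    for path, size in snapshot.items():
        with open(path, "rb") as handle:
            yield path, handle.read(size)


if __name__ == "__main__":
    # Hypothetical path, taken from the Linux example above.
    snap = snapshot_files("/data/mylogs/**/*.log")
    for path, data in read_snapshot(snap):
        print(path, len(data))
```

Files created after the snapshot are absent from the dictionary, and bytes appended later fall beyond the recorded size, so neither is read.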

2. Log processing and parsing

Configure log processing rules to convert raw, unstructured logs into structured, searchable data, which improves query and analysis efficiency. We recommend that you first Add Log Sample.

In the processor configuration section of the Logtail configuration page, click Add Log Sample and enter your log content. The system uses this sample to identify the log format and helps generate regular expressions and parsing rules, simplifying the configuration process.

Scenario 1: Processing multi-line logs

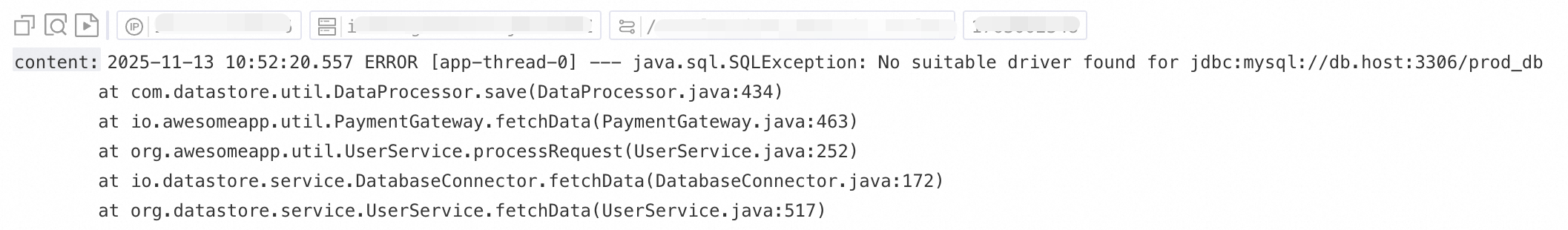

Logs such as Java exception stack traces and JSON objects often span multiple lines. In the default collection mode, these logs are split into multiple incomplete records, which causes a loss of context. To prevent this, you can enable multiline mode. By configuring a line-beginning regular expression, you can merge consecutive lines that belong to the same log entry into a single, complete log.

Example:

|

Unprocessed raw log |

In the default mode, the collector treats each line as a separate log, which breaks up the stack trace and causes context loss. |

With multiline mode enabled, a line-beginning regular expression identifies complete log entries, preserving the full semantic structure. |

|

|

|

|

Procedure: In the processor configuration section of the Logtail configuration page, enable Multiline Mode.

- Type: Select Custom or Multiline JSON.
  - Custom: Use this option for logs with a non-fixed format. You must configure a line-beginning regular expression to identify the start of each log entry.
    - Line-beginning regular expression: You can generate this expression automatically or enter it manually. The regular expression must match a complete line of data. For example, the expression for the sample log is `\[\d+-\d+-\w+:\d+:\d+,\d+]\s\[\w+]\s.*`.
      - To automatically generate the expression, click Auto-generate Regex, select the desired log content in the Log Sample text box, and then click Generate Regex.
      - To manually enter the expression, click Manually Enter Regex, provide your expression, and then click Validate.
  - Multiline JSON: When your raw logs are in standard JSON format, Log Service automatically handles newlines within a single JSON log entry.
- Action on split failure:
  - Discard: If a block of text does not match the line-beginning rule, it is discarded.
  - Keep as Single Lines: Keeps any text that does not match the rule as individual single-line logs.

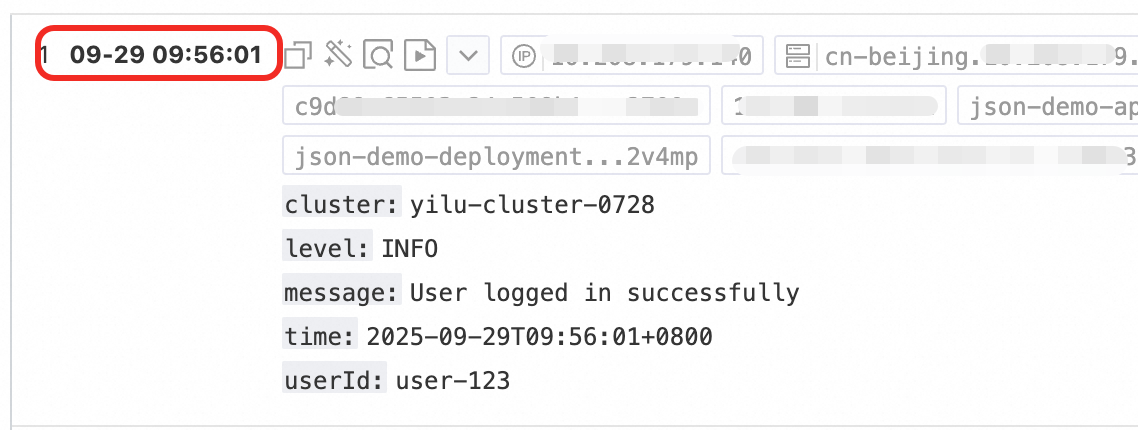
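To make the merging behavior concrete, here is a minimal sketch of line-beginning-based merging. It uses the example expression from this section with Python's `re` module; the function name `merge_multiline`, the sample log lines, and the handling of leading unmatched lines are illustrative assumptions, not the collector's actual code.

```python
import re

# Example line-beginning expression from this section. It must match a whole line.
LINE_START = re.compile(r"\[\d+-\d+-\w+:\d+:\d+,\d+]\s\[\w+]\s.*")


def merge_multiline(lines, keep_unmatched=True):
    """Merge continuation lines into the preceding entry.

    Lines seen before the first matching line are either kept as single-line
    logs (keep_unmatched=True) or discarded, mirroring the two
    "Action on split failure" options.
    """
    entries = []
    current = None
    for line in lines:
        if LINE_START.fullmatch(line):
            if current is not None:
                entries.append("\n".join(current))
            current = [line]
        elif current is not None:
            current.append(line)
        elif keep_unmatched:
            entries.append(line)
    if current is not None:
        entries.append("\n".join(current))
    return entries


if __name__ == "__main__":
    sample = [
        "[2023-10-01T10:30:01,000] [INFO] java.lang.Exception: exception happened",
        "    at TestPrintStackTrace.f(TestPrintStackTrace.java:3)",
        "    at TestPrintStackTrace.g(TestPrintStackTrace.java:7)",
        "[2023-10-01T10:30:02,000] [WARN] next entry",
    ]
    for entry in merge_multiline(sample):
        print(repr(entry))
```

Testing the expression against a few real lines this way can help confirm that it matches the whole first line of each entry before you paste it into the console.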

Scenario 2: Processing structured logs

Querying and analyzing unstructured or semi-structured text, such as NGINX access logs or application output, can be inefficient. Log Service provides data processors to automatically convert raw logs of different formats into structured data. This provides a solid foundation for subsequent analysis, monitoring, and alerting.

Example:

|

Unprocessed raw log |

Log after structured parsing |

|

|

Procedure: In the processor configuration section of the Logtail configuration page:

- Add a processor: Click Add Processing Plugin and configure a regex, delimiter, or JSON processor based on your log format. This example uses NGINX logs, so it selects the NGINX mode parsing plugin.
- NGINX log configuration: Copy the complete `log_format` definition from your NGINX server's configuration file (nginx.conf) and paste it into the text box. Example:

      log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                      '$request_time $request_length '
                      '$status $body_bytes_sent "$http_referer" '
                      '"$http_user_agent"';

  Important: The format definition here must exactly match the format used to generate the logs on your server. Otherwise, parsing will fail.
- Common parameters: The following parameters are common across multiple data processors and serve the same purpose.
  - Source field: Specifies the source field to be parsed. The default is `content`, which refers to the entire collected log entry.
  - Keep source field on parse failure: Recommended. If the processor fails to parse a log (for example, due to a format mismatch), this option retains the original log content in the specified source field.
  - Keep source field on parse success: If selected, this option retains the original log content after successful parsing.
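The effect of structured parsing and of the two "keep source field" options can be sketched with a hand-written regular expression that mirrors the `log_format` example above. This only illustrates the idea and is not how the NGINX plugin is implemented; the function name `parse_nginx` and the sample line are assumptions.

```python
import re

# One named group per variable in the example log_format, in the same order.
NGINX_LINE = re.compile(
    r'(?P<remote_addr>\S+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<request_time>\S+) (?P<request_length>\S+) '
    r'(?P<status>\d{3}) (?P<body_bytes_sent>\S+) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)"'
)


def parse_nginx(line, keep_source_on_failure=True, keep_source_on_success=False):
    """Parse one access-log line into fields; the raw line lives in 'content'."""
    match = NGINX_LINE.fullmatch(line)
    if match is None:
        # Format mismatch: optionally keep the raw line so that nothing is lost.
        return {"content": line} if keep_source_on_failure else {}
    fields = match.groupdict()
    if keep_source_on_success:
        fields["content"] = line
    return fields


if __name__ == "__main__":
    sample = ('192.168.1.1 - - [11/Dec/2023:10:00:00 +0800] '
              '"GET /index.html HTTP/1.1" 0.005 512 200 1024 "-" "curl/7.68.0"')
    print(parse_nginx(sample))
    print(parse_nginx("not an access log line"))
```

Keeping the source field on failure is what makes a format mismatch visible later: the unparsed line still reaches the Logstore in `content` instead of disappearing.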

3. Log filtering

Indiscriminately collecting large volumes of low-value or irrelevant logs, such as DEBUG or INFO level entries, can waste storage resources, increase costs, hinder query performance, and pose data leakage risks. To address these issues, you can use fine-grained filtering to ensure efficient and secure log collection.

Filter by content

Filter logs based on field content, for example, by collecting only logs where the level is WARNING or ERROR.

Example:

|

Unprocessed raw logs |

Collect only |

|

|

Procedure: In the processor configuration section of the Logtail configuration page:

Click Add Processing Plugin and select .

-

Field name: The log field to filter by.

-

Field value: The regular expression used for filtering. The expression must match the entire field value; partial keyword matching is not supported.
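The full-match requirement is easy to get wrong, so here is a small sketch of the filtering rule using Python's `re.fullmatch`. The function name `filter_logs` and the sample records are hypothetical.

```python
import re


def filter_logs(logs, field, pattern):
    """Keep only logs whose field value is matched in full by the pattern."""
    regex = re.compile(pattern)
    return [
        log for log in logs
        if field in log and regex.fullmatch(log[field])
    ]


if __name__ == "__main__":
    logs = [
        {"level": "DEBUG", "msg": "cache warm-up"},
        {"level": "WARNING", "msg": "disk at 85%"},
        {"level": "ERROR", "msg": "connection refused"},
    ]
    # Matches whole values only: "WARN" alone would not match "WARNING".
    print(filter_logs(logs, "level", "WARNING|ERROR"))
```

With this rule, a pattern such as `WARN` keeps nothing, while `WARN.*` or `WARNING|ERROR` keeps the intended entries.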

Filter with a blacklist

Use a collection blacklist to exclude specified directories or files and prevent the upload of irrelevant or sensitive logs.

Procedure: In the Logtail configuration page, go to the section, enable collection blacklist, and click Add.

Supports exact matching and wildcard matching for directories and filenames. The only supported wildcards are the asterisk (*) and the question mark (?).

- File path blacklist: The file paths to ignore. Examples:
  - `/home/admin/private*.log`: Ignores all files in the `/home/admin/` directory that start with `private` and end with `.log`.
  - `/home/admin/private*/*_inner.log`: Ignores all files that end with `_inner.log` in directories that start with `private` under the `/home/admin/` directory.
- File blacklist: The filenames to ignore during collection. Example:
  - `app_inner.log`: Ignores all files named `app_inner.log`.
- Directory blacklist: Directory paths cannot end with a forward slash (`/`). Examples:
  - `/home/admin/dir1/`: The directory blacklist will have no effect.
  - `/home/admin/dir*`: Ignores all files in subdirectories under the `/home/admin/` directory that start with `dir`.
  - `/home/admin/*/dir`: Ignores all files in second-level subdirectories named `dir` under the `/home/admin/` directory. For example, files in the `/home/admin/a/dir` directory are ignored, but files in the `/home/admin/a/b/dir` directory are collected.
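The wildcard examples above can be tried out with a short sketch. It assumes that `*` and `?` do not match a forward slash, which is inferred from the `/home/admin/*/dir` example rather than stated outright; the helper names are hypothetical and this is not the collector's matching code.

```python
import re


def wildcard_to_regex(pattern):
    """Translate a blacklist pattern; only * and ? are treated as wildcards."""
    parts = []
    for char in pattern:
        if char == "*":
            parts.append("[^/]*")   # assumption: * stays within one path segment
        elif char == "?":
            parts.append("[^/]")
        else:
            parts.append(re.escape(char))
    return re.compile("".join(parts))


def is_blacklisted(path, patterns):
    """Return True if the path is matched in full by any blacklist pattern."""
    return any(wildcard_to_regex(p).fullmatch(path) for p in patterns)


if __name__ == "__main__":
    print(is_blacklisted("/home/admin/private1.log", ["/home/admin/private*.log"]))
    print(is_blacklisted("/home/admin/a/dir", ["/home/admin/*/dir"]))
    print(is_blacklisted("/home/admin/a/b/dir", ["/home/admin/*/dir"]))
```

For a directory blacklist, the value passed in would be the directory that contains a file; for a file path blacklist, it would be the full file path.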

4. Log categorization

When logs from multiple applications or instances share the same format but have different paths (for example, /apps/app-A/run.log and /apps/app-B/run.log), it can be difficult to distinguish their sources after collection. By configuring a log topic, you can logically differentiate logs from various applications, services, or paths, enabling efficient categorization and precise querying within a unified storage destination.

Procedure: In the section, select how to generate the topic. You can use one of the following three methods:

- Machine group topic: When you apply a collection configuration to multiple machine groups, LoongCollector automatically uses the name of the server's machine group as the value for the `__topic__` field. This is useful for scenarios where you want to categorize logs by host.
- Custom: Use the format `customized://<your-topic-name>`, for example, `customized://app-login`. This method is suitable for static topics with fixed business identifiers.
- File path-based extraction: Extracts key information from the full path of a log file to dynamically tag the log source. This is useful when multiple users or applications share the same log filename but reside in different paths. For example, when multiple users or services write logs to different top-level directories but use the same subdirectory and filename, you cannot distinguish the source by filename alone.

      /data/logs
      ├── userA
      │   └── serviceA
      │       └── service.log
      ├── userB
      │   └── serviceA
      │       └── service.log
      └── userC
          └── serviceA
              └── service.log

  In this case, you can configure file path-based extraction and use a regular expression to extract key information from the full path. Log Service then uploads the matched result to the Logstore as the log topic.

File path-based extraction rules: Based on regular expression capture groups

When you configure the regular expression, the system automatically determines the output field format based on the number and names of the capture groups. In a regular expression for a file path, you must escape forward slashes (`/`). The rules are as follows:

| Capture group type | Use case | Generated field | Regex example | Sample matched path | Sample generated field |
| --- | --- | --- | --- | --- | --- |
| Single capture group (one `(.*?)`) | When you need only one dimension to distinguish sources (for example, username or environment). | Generates the `__topic__` field. | `\/logs\/(.*?)\/app\.log` | `/logs/userA/app.log` | `__topic__:userA` |
| Multiple unnamed capture groups (multiple `(.*?)`) | When you need multiple dimensions to distinguish sources but do not require semantic labels. | Generates tag fields where the key is formatted as `__topic_{i}__`, where `{i}` is the capture group index. | `\/logs\/(.*?)\/(.*?)\/app\.log` | `/logs/userA/svcA/app.log` | `__tag__:__topic_1__:userA`; `__tag__:__topic_2__:svcA` |
| Multiple named capture groups (using `(?P<name>.*?)`) | When you need multiple dimensions to distinguish sources and want clear, meaningful field names for easy querying and analysis. | Generates tag fields where the key is the specified capture group name. | `\/logs\/(?P<user>.*?)\/(?P<service>.*?)\/app\.log` | `/logs/userA/svcA/app.log` | `__tag__:user:userA`; `__tag__:service:svcA` |
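The three capture-group rules can be reproduced in a few lines, which is handy for checking an expression before saving the configuration. This is a sketch under assumptions: the function name `topic_fields` is hypothetical, the expression is required to match the whole path, and Python's `re` stands in for the engine that Log Service uses.

```python
import re


def topic_fields(pattern, path):
    """Derive topic and tag fields from a file path, following the rules above."""
    regex = re.compile(pattern)
    match = regex.fullmatch(path)   # assumption: the whole path must match
    if match is None:
        return {}
    if regex.groupindex:
        # Named capture groups: the group name becomes the tag key.
        return {f"__tag__:{name}": match.group(name) for name in regex.groupindex}
    if regex.groups == 1:
        # A single capture group becomes the topic itself.
        return {"__topic__": match.group(1)}
    # Several unnamed groups: keys are numbered from 1.
    return {
        f"__tag__:__topic_{index}__": value
        for index, value in enumerate(match.groups(), start=1)
    }


if __name__ == "__main__":
    print(topic_fields(r"\/logs\/(.*?)\/app\.log", "/logs/userA/app.log"))
    print(topic_fields(r"\/logs\/(.*?)\/(.*?)\/app\.log", "/logs/userA/svcA/app.log"))
    print(topic_fields(r"\/logs\/(?P<user>.*?)\/(?P<service>.*?)\/app\.log",
                       "/logs/userA/svcA/app.log"))
```

Named groups are usually the better choice when more than one dimension is extracted, because the resulting tag keys describe themselves in queries.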

Step 3: Query and analysis

After completing log processing and plugin configuration, click Next to go to the query and analysis configuration page.

-

The full-text index is enabled by default and supports keyword searches on raw log content.

-

To query precisely by field, click Automatic Index Generation after the preview data loads. Log Service then generates a field index based on the first entry in the preview data.

After completing the configuration, click Next to finish the collection process.

Step 4: Verify collection results

After the configuration is applied, click Query / Analysis on the Query and Analysis page of the target Logstore to view the collected log data.

FAQ

Lifecycle of a one-time collection configuration

After a one-time collection configuration is created, it follows the lifecycle below:

- Configuration distribution window: LoongCollector can pull the configuration within 5 minutes of its creation. After 5 minutes, new LoongCollector instances cannot obtain this configuration.
- Automatic configuration deletion: The configuration is automatically deleted 7 days after creation.
- Task execution: The collection task must be completed within the execution timeout. If the task exceeds this time, the system forcibly stops it.
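The first two lifecycle deadlines follow directly from the creation time. The sketch below computes them; the names are hypothetical, and treating the 5-minute boundary as inclusive is an assumption.

```python
from datetime import datetime, timedelta, timezone

DISTRIBUTION_WINDOW = timedelta(minutes=5)   # configuration can still be pulled
RETENTION = timedelta(days=7)                # configuration is deleted afterwards


def lifecycle(created_at):
    """Return the two deadlines that follow from the creation time."""
    return {
        "distribution_closes": created_at + DISTRIBUTION_WINDOW,
        "config_deleted": created_at + RETENTION,
    }


def can_receive_config(created_at, joined_at):
    """True if a server that joins at joined_at can still obtain the configuration."""
    return joined_at <= created_at + DISTRIBUTION_WINDOW


if __name__ == "__main__":
    created = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(lifecycle(created))
    print(can_receive_config(created, created + timedelta(minutes=3)))
    print(can_receive_config(created, created + timedelta(minutes=9)))
```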

One-time vs. legacy file collection

The legacy historical file collection method is no longer recommended. Use the new one-time file collection feature for importing historical data. Compared to the legacy method, which required manually creating configuration files, the new feature significantly improves configuration efficiency, reliability, and observability. The following table provides a detailed comparison:

| Item | Legacy method | One-time collection |
| --- | --- | --- |
| Configuration method | Create a | Create a configuration in the console or by API and deploy it to a machine group in batches. |
| File matching | Manually enter file paths and filenames. | Provides a streamlined configuration similar to |
| Progress monitoring | No status reporting or local logs. | Uses a checkpoint to track the collection progress, with granularity down to the current offset of each file. |
| Reliability | Low. It runs as a separate process with no resource controls or checkpoint mechanism. | High. It uses standard pipeline-level resource management, supports flow control to avoid impacting other collection tasks, and enables resumable transfer. |
| Flexibility | Low. You must use an existing collection configuration. | High. You can customize the collection configuration and even modify it during the task. |

Machine group heartbeat is FAIL

- Check the user ID. If your server is not an ECS instance, or if your ECS instance and Project belong to different Alibaba Cloud accounts, check whether the correct user ID file exists in the specified directory. If it does not exist, create it manually by using one of the following methods.
  - Linux: Run the `cd /etc/ilogtail/users/ && touch <uid>` command to create a user ID file.
  - Windows: Go to the `C:\LogtailData\users\` directory and create an empty file named `<uid>`.
- Check the machine group identifier. If you used a user-defined identifier when creating the machine group, check whether a file named `user_defined_id` exists in the specified directory. If it exists, verify that its content matches the user-defined identifier configured for the machine group.
  - Linux:

        # Configure the user-defined identifier. If the directory does not exist, create it manually.
        echo "user-defined-1" > /etc/ilogtail/user_defined_id

  - Windows: Create a file named `user_defined_id` in the `C:\LogtailData` directory and write the user-defined identifier to it. If the directory does not exist, create it manually.
- If both the user ID and machine group identifier are correct, see Troubleshoot LoongCollector (Logtail) machine group issues for further investigation.
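The two file checks above can be scripted so that they are easy to repeat on several servers. The following sketch inspects the Linux paths named in this section; the function name `check_identity` is hypothetical, and the script only reads files, it does not create or change anything.

```python
from pathlib import Path


def check_identity(uid, identifier=None, base_dir="/etc/ilogtail"):
    """Return a list of problems found with the user ID file and user_defined_id."""
    base = Path(base_dir)
    problems = []

    uid_file = base / "users" / uid
    if not uid_file.is_file():
        problems.append(f"missing user ID file: {uid_file}")

    if identifier is not None:
        id_file = base / "user_defined_id"
        if not id_file.is_file():
            problems.append(f"missing identifier file: {id_file}")
        else:
            # A server may carry several identifiers, one per line.
            configured = [line.strip() for line in id_file.read_text().splitlines()]
            if identifier not in configured:
                problems.append(f"identifier {identifier!r} not found in {id_file}")
    return problems


if __name__ == "__main__":
    # Placeholder values: replace with your own account ID and identifier.
    for problem in check_identity("<uid>", "user-defined-1"):
        print(problem)
```

An empty result means both files look consistent, in which case the heartbeat failure has another cause.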

Add a server to a machine group

To add a new server to an existing machine group, such as a newly deployed ECS instance or a self-managed server, follow these steps to associate it with the group and apply its collection configuration.

If a server is added to a machine group more than 5 minutes after the one-time collection configuration is created, it will not receive the configuration. You can view the countdown timer at the top of the collection configuration page for the exact remaining time.

Prerequisites

-

An existing machine group.

-

LoongCollector is installed on the new server.

Procedure

- View the machine group identifier of the target machine group.
  - In the target Project, go to the Machine Groups page from the left-side navigation pane.
  - On the Machine Groups page, click the name of the target machine group.
  - On the machine group configuration page, view the machine group identifier.
- Perform one of the following actions based on the identifier type.

  Note: A single machine group cannot contain both Linux and Windows servers. Do not configure the same user-defined identifier on both Linux and Windows servers. You can configure multiple user-defined identifiers on a single server by separating them with a line break.
  - Type 1: The machine group identifier is an IP address.
    - On the server, run the following command to open the `app_info.json` file and view the value of the `ip` parameter.

          cat /usr/local/ilogtail/app_info.json

    - On the configuration page of the target machine group, click Modify and enter the IP address of the server. If you have multiple IP addresses, separate them with line breaks.
    - After you complete the configuration, click Save and check the heartbeat status. If the status is OK, the system automatically applies the machine group's collection configuration to the server.

      If the heartbeat status is FAIL, see Machine group heartbeat is FAIL for further troubleshooting.
  - Type 2: The machine group identifier is a user-defined identifier.

    Based on the operating system, write the user-defined identifier string that matches the target machine group to the specified file. If the directory does not exist, create it manually. The file path and name are fixed and cannot be customized.
    - Linux: Write the user-defined identifier to the `/etc/ilogtail/user_defined_id` file.
    - Windows: Write the user-defined identifier to the `C:\LogtailData\user_defined_id` file.

Appendix: Native processing plugins

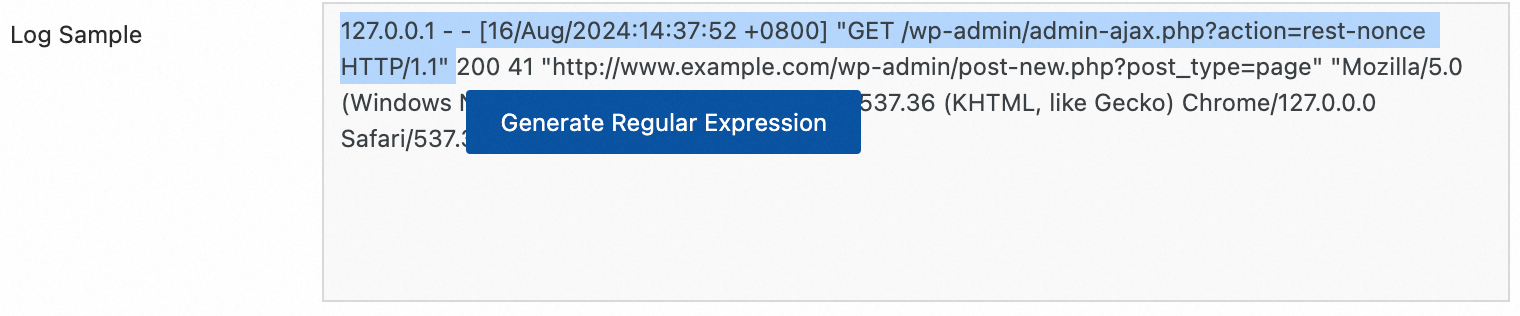

Regular expression parsing

Extract log fields using a regular expression and parse the log into key-value pairs. Each field can be independently queried and analyzed.

Example:

Raw log without any processing | Using the regular expression parsing plugin |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Regular Expression: The expression used to match logs. Generate it automatically or enter it manually:

Automatic generation:

Click Generate.

In the Log Sample, select the log content to extract.

Click Generate Regular Expression.

Manual entry: Click Manually Enter Regular Expression and enter an expression based on the log format.

After configuration, click Validate to test whether the regular expression can correctly parse the log content.

Extracted Field: The field name (Key) that corresponds to the extracted log content (Value).

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.

Delimiter parsing

Structure log content using a separator to parse it into multiple key-value pairs. Both single-character and multi-character separators are supported.

Example:

Raw log without any processing | Fields split by the specified character |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Delimiter: Specifies the character used to split log content.

Example: For a CSV file, select Custom and enter a comma (,).

Quote: If a field value contains the separator, you must enclose the field value in quotes to prevent incorrect splitting.

Extracted Field: Specify the field name (Key) for each column in the order that they appear. The rules are as follows:

Field names can contain only letters, digits, and underscores (_).

Must start with a letter or an underscore (_).

Maximum length: 128 bytes.

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.
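The delimiter, quote, and field-name rules in this subsection can be illustrated with Python's standard `csv` module. This is a sketch with assumptions: the helper names are hypothetical, "letters" is read as ASCII letters, and `csv` accepts only single-character delimiters, so the multi-character separators that the plugin supports are not covered.

```python
import csv
import re

FIELD_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def valid_field_name(name):
    """Letters, digits, underscores; starts with a letter or underscore; <= 128 bytes."""
    return bool(FIELD_NAME.fullmatch(name)) and len(name.encode("utf-8")) <= 128


def parse_delimited(line, keys, delimiter=",", quote='"'):
    """Split one log line and pair the columns with field names, in order."""
    invalid = [key for key in keys if not valid_field_name(key)]
    if invalid:
        raise ValueError(f"invalid field names: {invalid}")
    values = next(csv.reader([line], delimiter=delimiter, quotechar=quote))
    return dict(zip(keys, values))


if __name__ == "__main__":
    line = '05/May/2022:13:30:28,10.10.0.1,"POST /put,v2 HTTP/1.1",200'
    print(parse_delimited(line, ["time", "ip", "request", "status"]))
```

The quoted third column shows why the Quote setting matters: without it, the comma inside the request would be treated as a column boundary.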

JSON parsing

Structure an Object-type JSON log by parsing it into key-value pairs.

Example:

Raw log without any processing | Automatic extraction of standard JSON key-value pairs |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: The field that contains the raw log to be parsed. The default value is content.

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.

Nested JSON parsing

Parse a nested JSON log into key-value pairs by specifying the expansion depth.

Example:

Raw log without any processing | Expansion depth: 0, using expansion depth as a prefix | Expansion depth: 1, using expansion depth as a prefix |

| | |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: Specifies the name of the source field to expand, such as `content`.

JSON Expansion Depth: The expansion depth of the JSON object. 0 (the default) indicates full expansion, 1 indicates expansion of the current level only, and so on.

Character to Concatenate Expanded Keys: The separator for field names when a JSON object is expanded. The default value is an underscore (_).

Name Prefix of Expanded Keys: The prefix for field names after JSON expansion.

Expand Array: Expands an array into key-value pairs with indexes.

Example: `{"k":["a","b"]}` is expanded to `{"k[0]":"a","k[1]":"b"}`.

To rename the expanded fields (for example, from prefix_s_key_k1 to new_field_name), add a rename fields plugin afterward to complete the mapping.

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.
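How the depth, connector, prefix, and array options interact is easier to see in code. The sketch below is one plausible reading of the parameters above, not the plugin's implementation: the function name `expand_json` is hypothetical, values that are not expanded are assumed to be kept as JSON text, and the prefix is assumed to be prepended as-is.

```python
import json


def expand_json(obj, depth=0, sep="_", prefix="", expand_array=False):
    """Flatten a JSON object; depth=0 means expand fully."""
    result = {}

    def walk(value, key, level):
        at_limit = depth != 0 and level >= depth
        if isinstance(value, dict) and not at_limit:
            for child_key, child in value.items():
                walk(child, f"{key}{sep}{child_key}" if key else child_key, level + 1)
        elif isinstance(value, list) and expand_array and not at_limit:
            for index, item in enumerate(value):
                walk(item, f"{key}[{index}]", level + 1)
        else:
            # Leaf, or a nested value beyond the depth limit: keep it as text.
            result[prefix + key] = value if isinstance(value, str) else json.dumps(value)

    walk(obj, "", 0)
    return result


if __name__ == "__main__":
    record = {"s_key": {"k1": {"k2": "v"}}}
    print(expand_json(record))                      # full expansion
    print(expand_json(record, depth=1))             # current level only
    print(expand_json({"k": ["a", "b"]}, expand_array=True))
```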

JSON array parsing

Use the json_extract function to extract JSON objects from a JSON array.

Example:

Raw log without any processing | Extract JSON array structure |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, switch the Processing Mode to SPL, configure the SPL Statement, and use the json_extract function to extract JSON objects from the JSON array.

Example: Extract elements from the JSON array in the log field content and store the results in new fields json1 and json2.

    * | extend json1 = json_extract(content, '$[0]'), json2 = json_extract(content, '$[1]')

Apache log parsing

Structure the log content into multiple key-value pairs based on the definition in the Apache log configuration file.

Example:

Raw log without any processing | Apache Common Log Format |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

The Log Format is combined.

The APACHE LogFormat Configuration field is automatically populated based on the Log Format.

Important: Verify that the auto-filled content exactly matches the LogFormat defined in your server's Apache configuration file (usually located at /etc/apache2/apache2.conf).

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.

IIS log parsing

Structure the log content into multiple key-value pairs based on the IIS log format definition.

Comparison example:

Raw log | Adaptation for Microsoft IIS server-specific format |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Log Format: Select the log format for your IIS server.

IIS: The log file format for Microsoft Internet Information Services.

NCSA: Common Log Format.

W3C: The W3C Extended Log File Format.

IIS Configuration Fields: When you select IIS or NCSA, SLS uses the default IIS configuration fields. When you select W3C, you must set the fields to the value of the `logExtFileFlags` parameter in your IIS configuration file. For example:

    logExtFileFlags="Date, Time, ClientIP, UserName, SiteName, ComputerName, ServerIP, Method, UriStem, UriQuery, HttpStatus, Win32Status, BytesSent, BytesRecv, TimeTaken, ServerPort, UserAgent, Cookie, Referer, ProtocolVersion, Host, HttpSubStatus"

For other parameters, see the common parameters described in Scenario 2: Processing structured logs.

Data masking

Mask sensitive data in logs.

Example:

Raw log without any processing | Masking result |

| |

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: The field that contains the log content before parsing.

Data Masking Method:

const: Replaces sensitive content with a constant string.

md5: Replaces sensitive content with its MD5 hash.

Replacement String: If Data Masking Method is set to const, enter a string to replace the sensitive content.

Content Expression that Precedes Replaced Content: The expression that matches the text immediately before the sensitive content. The expression must be written in RE2 syntax.

Content Expression to Match Replaced Content: The regular expression used to match sensitive content. The expression must be written in RE2 syntax.
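The interplay of the two expressions and the masking method can be sketched as follows. This illustrates the idea only: the function name `mask` and the sample log are assumptions, and Python's `re` module is used in place of RE2, so only syntax common to both engines should be tried here.

```python
import hashlib
import re


def mask(text, prefix_expr, content_expr, method="const", replacement="********"):
    """Replace the content that follows prefix_expr with a constant or its MD5 hash."""
    pattern = re.compile(f"(?P<pre>{prefix_expr})(?P<val>{content_expr})")

    def substitute(match):
        value = match.group("val")
        if method == "const":
            masked = replacement
        else:  # "md5"
            masked = hashlib.md5(value.encode("utf-8")).hexdigest()
        # The prefix is kept; only the sensitive part is replaced.
        return match.group("pre") + masked

    return pattern.sub(substitute, text)


if __name__ == "__main__":
    log = '{"account":"1812213231432969","password":"04a23f38"}'
    print(mask(log, r'"password":"', r'[^"]+'))
    print(mask(log, r'"password":"', r'[^"]+', method="md5"))
```

The preceding-content expression anchors the match, so the content expression can stay simple (here, everything up to the next double quote).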

Time parsing

Parse the time field in the log and set the parsing result as the log's __time__ field.

Example:

Raw log without any processing | Time parsing |

|

|

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: The field that contains the log content before parsing.

Time Format: Set the time format that corresponds to the timestamps in the log.

Time Zone: Select the time zone for the log time field. By default, this is the time zone of the environment where the LoongCollector (Logtail) process is running.
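Time parsing amounts to turning a timestamp string into the epoch seconds stored in `__time__`. The sketch below assumes strftime-style format directives such as `%Y-%m-%d %H:%M:%S` and a fixed UTC offset for the time zone; the function name `parse_log_time` is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def parse_log_time(value, time_format, utc_offset_hours=0):
    """Parse a timestamp string and return Unix epoch seconds."""
    naive = datetime.strptime(value, time_format)
    # Interpret the parsed wall-clock time in the configured time zone.
    aware = naive.replace(tzinfo=timezone(timedelta(hours=utc_offset_hours)))
    return int(aware.timestamp())


if __name__ == "__main__":
    epoch = parse_log_time("2023-10-01 10:30:01", "%Y-%m-%d %H:%M:%S", utc_offset_hours=8)
    print(epoch)
```

If the time zone is set incorrectly, the parsed time shifts by the offset difference, which typically shows up as logs appearing hours away from where they are expected in queries.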