Filter and transform website access logs to isolate error data and remove sensitive information.

Prerequisites

Before you begin, ensure that you have:

Created a project named web-project. For more information, see Manage projects.

Created a source Logstore named website_log in the web-project project. For more information, see Manage Logstores.

Ingested website access logs into the source Logstore (website_log). For more information, see Data Ingestion Overview.

Created a destination Logstore named website_fail in the web-project project.

Granted the RAM user permissions to perform data transformation operations, if applicable. For more information, see Grant a RAM user permissions to perform data transformation operations.

Configured indexes for the source and destination Logstores. For more information, see Create indexes.

Data transformation jobs do not depend on indexes. However, if you do not configure indexes, you cannot perform query and analysis operations.

Background information

A website stores all access logs in a Logstore named website_log. To improve user experience, you must analyze access errors. This example filters logs with a 4xx status code, removes personal user information, and writes the results to a new Logstore named website_fail for business analysts. The following is a sample log:

body_bytes_sent: 1061

http_user_agent: Mozilla/5.0 (Windows; U; Windows NT 5.1; ru-RU) AppleWebKit/533.18.1 (KHTML, like Gecko) Version/5.0.2 Safari/533.18.5

remote_addr: 192.0.2.2

remote_user: vd_yw

request_method: GET

request_uri: /request/path-1/file-5

status: 400

time_local: 10/Jun/2021:19:10:59

error: Invalid time range

Step 1: Create a data transformation job

Log on to the Simple Log Service console.

Go to the data transformation page.

In the Projects section, click the project you want.

On the tab, click the logstore you want.

On the query and analysis page, click Data Transformation.

In the upper-right corner of the page, select a time range.

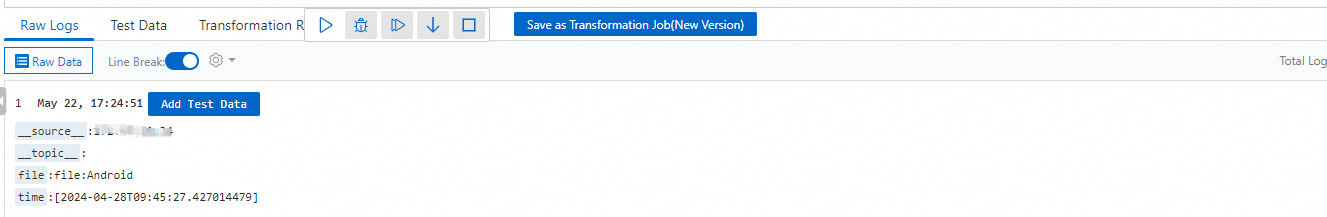

After selecting a time range, verify that logs appear on the Raw Logs tab.

In the editor, enter the following Structured Process Language (SPL) rule.

* | extend status=cast(status as BIGINT) | where status>=400 AND status<500 | project-away remote_addr, remote_user

Debug the SPL rule.

Select test data from the Raw Data tab or manually enter test data.

Click ▷ to run the test.

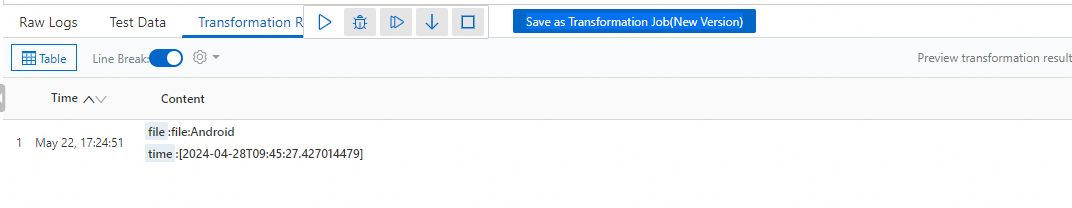

View the preview results.
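To sanity-check what the preview should contain, the three stages of the rule (cast, filter, drop fields) can be mimicked locally. The following is a minimal Python sketch, not the Simple Log Service engine: the function name `transform` and the `low`/`high` bounds are hypothetical, the 4xx range is taken from the goal stated in Background information, and dropping logs whose status is missing or non-numeric is an assumption about how the filter treats them.

```python
# Local stand-in for the SPL rule; illustrative only, not the service's implementation.
SENSITIVE_FIELDS = ("remote_addr", "remote_user")


def transform(log, low=400, high=500):
    """Return the transformed log, or None if the log is filtered out."""
    try:
        # extend stage: cast the status string to an integer
        status = int(log["status"])
    except (KeyError, TypeError, ValueError):
        # Assumption: a missing or non-numeric status never passes the filter.
        return None
    # where stage: keep only statuses in the half-open range [low, high)
    if not (low <= status < high):
        return None
    # project-away stage: drop the fields that identify the user
    result = {k: v for k, v in log.items() if k not in SENSITIVE_FIELDS}
    result["status"] = status
    return result


# The sample log from Background information (user agent omitted for brevity).
sample = {
    "body_bytes_sent": "1061",
    "remote_addr": "192.0.2.2",
    "remote_user": "vd_yw",
    "request_method": "GET",
    "request_uri": "/request/path-1/file-5",
    "status": "400",
    "time_local": "10/Jun/2021:19:10:59",
    "error": "Invalid time range",
}

preview = transform(sample)
print(preview)
```

If the preview in the console still shows remote_addr or remote_user, or includes logs outside the 4xx range, recheck the rule before saving the job.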

Create a data transformation job.

Click Save as Transformation Job (New Version).

In the Create Data Transformation Job (New Version) panel, configure the following parameters and click OK.

Parameter

Description

Task Name

The name of the data transformation job.

Display Name

The display name of the job.

Job Description

The description of the job.

Authorization Method

Use one of the following methods to grant the job permissions to read data from the source logstore.

Default Role: The job assumes the AliyunLogETLRole system role to read data from the source logstore. Click Authorize the system role AliyunLogETLRole and complete the authorization as prompted. For more information, see Access data using a default role.

Important: If you use a RAM user, an Alibaba Cloud account must complete the authorization first.

If your Alibaba Cloud account is already authorized, you can skip this step.

Custom Role: The job assumes a custom role to read data from the source logstore. You must first grant the custom role permissions to read data from the source logstore, and then enter the ARN of the role in the Role ARN field. For more information, see Access data using a custom role.

AccessKey: For security reasons, you can no longer use an AccessKey pair (AK/SK) to create jobs.

Storage Destination

Destination Name

The name of the storage destination. A storage destination includes configurations such as the Project and logstore.

Destination Region

The region where the destination Project is located.

Destination Project

The destination Project that stores transformation results. The Project specified in your SPL statement overrides this setting. For more information, see Dynamic destination Project/logstore output.

Important: The Project that you dynamically specify in the SPL statement must match the region and authorization that you configure here.

Target Store

The destination logstore that stores transformation results. The logstore specified in your SPL statement overrides this setting. For more information, see Dynamic destination Project/logstore output.

Important: The logstore that you dynamically specify in the SPL statement must match the region, authorization, and Project that you configure here. The destination logstore cannot be the same as the source logstore.

Warning: Do not configure the destination store as the current source store (same-source configuration). Otherwise, logs may be written in a loop, which incurs additional storage and traffic costs. You are responsible for the resource consumption and costs incurred.

Authorization Method

Use one of the following methods to grant the job permissions to write data to the destination logstore.

Default Role: The job assumes the AliyunLogETLRole system role to write results to the destination logstore. Click Authorize the system role AliyunLogETLRole and complete the authorization as prompted. For more information, see Access data using a default role.

Important: If you use a RAM user, an Alibaba Cloud account must complete the authorization first.

If your Alibaba Cloud account is already authorized, you can skip this step.

Custom Role: The job assumes a custom role to write results to the destination logstore. You must first grant the custom role permissions to write data to the destination logstore, and then enter the ARN of the role in the Role ARN field. For more information, see Access data using a custom role.

AccessKey: For security reasons, you can no longer use an AccessKey pair (AK/SK) to create jobs.

Write to Result Set

The dataset to write to the destination logstore. For more information about datasets in data transformation (new version), see Dataset description. You can configure multiple datasets for a single destination, and multiple destinations can use the same dataset.

Processing scope

Time Range (Data Receiving Time)

Specifies the time range for the data transformation job. The following options are available:

All: The job processes data from the first log entry until you manually stop it.

From Specific Time: Specifies a start time for the job. The job processes data from the specified start time until you manually stop it.

Specific Time Range: Specifies a start and end time for the job. The job automatically stops at the specified end time.

Advanced Options

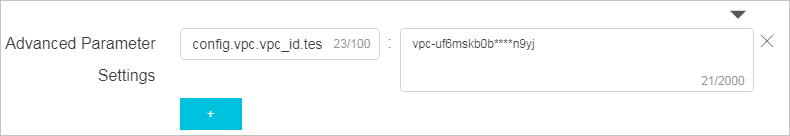

Advanced Parameter Settings

If your transformation statement requires sensitive information, such as a database password, you can store it as a key-value pair and then reference it in your statement by using the res_local("key") function. Click + to add multiple key-value pairs. For example, config.vpc.vpc_id.test1:vpc-uf6mskb0b****n9yj specifies the ID of the VPC where the RDS instance resides.
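Two of the rules in the parameter table are easy to get wrong: the destination must not be the same as the source, and the Time Range setting has three distinct modes. The following Python sketch shows one way to check a planned configuration before entering it in the console. Everything here is hypothetical and for illustration only: `JobConfig`, `time_range_mode`, and `validate` are not part of any Simple Log Service SDK, and the requirement that the end time be later than the start time is an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class JobConfig:
    """Hypothetical container for the settings described in the table."""
    source_project: str
    source_logstore: str
    dest_project: str
    dest_logstore: str
    start_time: Optional[int] = None  # Unix seconds; None means "from the first log"
    end_time: Optional[int] = None    # Unix seconds; None means "until stopped manually"


def time_range_mode(cfg: JobConfig) -> Optional[str]:
    """Map the start/end pair to one of the three Time Range options."""
    if cfg.start_time is None and cfg.end_time is None:
        return "All"
    if cfg.start_time is not None and cfg.end_time is None:
        return "From Specific Time"
    if cfg.start_time is not None and cfg.end_time is not None:
        return "Specific Time Range"
    return None  # an end time without a start time matches none of the options


def validate(cfg: JobConfig) -> List[str]:
    """Return a list of problems; an empty list means no problem was found."""
    problems = []
    # Same-source configuration can cause logs to be written in a loop.
    if (cfg.source_project, cfg.source_logstore) == (cfg.dest_project, cfg.dest_logstore):
        problems.append("destination Logstore is the same as the source Logstore")
    mode = time_range_mode(cfg)
    if mode is None:
        problems.append("an end time requires a start time")
    elif mode == "Specific Time Range" and cfg.end_time <= cfg.start_time:
        # Assumption: the end time must be later than the start time.
        problems.append("end time must be later than start time")
    return problems


good = JobConfig("web-project", "website_log", "web-project", "website_fail")
looped = JobConfig("web-project", "website_log", "web-project", "website_log")

print(time_range_mode(good), validate(good))
print(validate(looped))
```

The check compares the Project and Logstore names together because the same Logstore name can exist in different Projects.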

Go to the destination Logstore (website_fail) to perform query and analysis operations. For more information, see Quick guide to query and analysis.

Step 2: Observe the data transformation job

In the left-side navigation pane, choose .

In the list of data transformation jobs, find and click the data transformation job that you want to manage.

On the Data Transformation Overview (New Version) page, view the details and status of the data transformation job. You can also modify, start, stop, or delete the job. For more information, see Manage data transformation jobs (new version). To observe the job's running status and metrics, see Observe and monitor data transformation jobs (new version).