When multiple hosts compete for a shared resource, you need distributed locks to prevent data corruption and logical failures. This topic explains how distributed locks work, how to implement them on ApsaraDB for Redis and Tair (Enterprise Edition), and how to maintain lock consistency after a failover. The Tair-specific CAD and CAS commands eliminate the Lua scripts required by standard Redis, reducing implementation complexity and improving throughput.

When to use distributed locks

Different concurrency scenarios require different coordination mechanisms:

| Scenario | Mechanism |
|---|---|
| Multiple threads in the same process | Mutex or read/write lock |
| Multiple processes on the same host | Semaphore, pipe, or shared memory |
| Multiple hosts in a distributed system | Distributed lock |

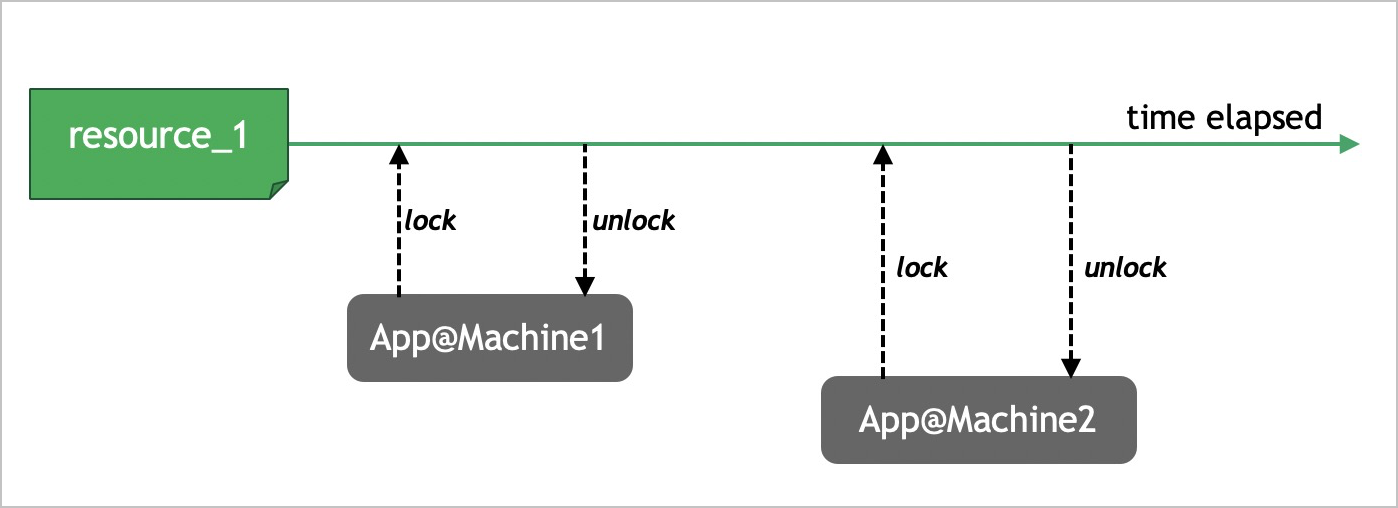

Distributed locks are globally scoped mutual exclusion locks. Use them to coordinate access to shared resources across hosts and prevent logical failures caused by resource contention.

Properties of a well-implemented distributed lock

A distributed lock must satisfy three properties. Each implementation approach in this topic is evaluated against these properties:

Mutually exclusive: At any given moment, only one client holds the lock.

Deadlock-free: Locks use a lease-based mechanism. If a client encounters an exception after acquiring a lock, the lock expires automatically, preventing indefinite resource blocking.

Consistent: After a high availability (HA) switchover, the lock state must remain intact. Switchovers can be triggered by external errors (hardware failures, network exceptions) or internal errors (slow queries, system defects). When a switchover occurs, a replica node is promoted to the new master node.

Implement distributed locks on Redis

The methods in this section apply to both ApsaraDB for Redis and Redis Open-Source Edition.

Acquire a lock

Run the SET command with the NX and EX options to acquire a lock atomically:

SET resource_1 random_value NX EX 5

| Parameter | Description |
|---|---|
| resource_1 | The lock key. If this key exists, the resource is locked and inaccessible to other clients. |
| random_value | A random string unique across all clients. Used to verify ownership before releasing the lock. |
| EX | The lock's validity period in seconds. Use PX for millisecond precision. |
| NX | Cancels the SET operation if the key already exists. |

In this example, the lock expires after 5 seconds if not released explicitly. The expiry prevents deadlocks: if the client crashes, the system reclaims the lock automatically.
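The acquire semantics described above can be sketched with a small in-memory model. This is only an illustration of how NX and EX interact, not a Redis client: the `LeaseStore` class, its `setNxEx` method, and the explicit `nowMillis` clock parameter are hypothetical names introduced for this sketch.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.UUID;

// In-memory model of SET <key> <value> NX EX <seconds>. Illustration only, not a Redis client.
class LeaseStore {
    private final Map<String, String> values = new HashMap<>();
    private final Map<String, Long> expiresAtMillis = new HashMap<>();

    // Returns true if the key was set (lock acquired), false if a live key already exists.
    boolean setNxEx(String key, String value, long ttlSeconds, long nowMillis) {
        Long expiry = expiresAtMillis.get(key);
        boolean live = expiry != null && expiry > nowMillis;
        if (live) {
            return false; // NX: the key exists, so the SET is refused
        }
        values.put(key, value);
        expiresAtMillis.put(key, nowMillis + ttlSeconds * 1000); // EX: lease end
        return true;
    }

    public static void main(String[] args) {
        LeaseStore store = new LeaseStore();
        // A random value that is unique per client, as the parameter table requires.
        String tokenA = UUID.randomUUID().toString();
        String tokenB = UUID.randomUUID().toString();

        System.out.println(store.setNxEx("resource_1", tokenA, 5, 0));    // true: lock is free
        System.out.println(store.setNxEx("resource_1", tokenB, 5, 1000)); // false: lease still live
        System.out.println(store.setNxEx("resource_1", tokenB, 5, 6000)); // true: lease has expired
    }
}
```

The third call succeeds without any explicit release, which is the deadlock-free property in action: a crashed holder blocks other clients for at most one lease period.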

Release a lock

Running DEL resource_1 directly is unsafe. Consider this failure scenario:

At t1, application 1 acquires resource_1 with a 3-second lease.

Application 1 stalls for more than 3 seconds. The key expires at t2, and the lock is released automatically.

At t3, application 2 acquires the lock.

Application 1 resumes at t4 and runs DEL resource_1, accidentally releasing a lock it no longer owns.
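The timeline above can be replayed against a simulated clock. The following is a minimal sketch: `LockTimeline` and its methods are hypothetical names, time is counted in whole seconds, and the class models a single lock key rather than talking to Redis. It contrasts a bare delete with a delete that first compares the stored token.

```java
// Single-key lock model on a simulated clock (seconds). Illustration only.
class LockTimeline {
    private String owner;   // token of the current holder, null when the lock is free
    private long expiresAt; // end of the current lease

    private void expireIfDue(long now) {
        if (owner != null && now >= expiresAt) {
            owner = null; // lease ran out, the key disappears
        }
    }

    boolean acquire(String token, long leaseSeconds, long now) {
        expireIfDue(now);
        if (owner != null) {
            return false;
        }
        owner = token;
        expiresAt = now + leaseSeconds;
        return true;
    }

    // Models a bare DEL: removes the key no matter who holds it.
    void unsafeRelease(long now) {
        expireIfDue(now);
        owner = null;
    }

    // Models the ownership check: delete only if the stored token matches.
    boolean checkedRelease(String token, long now) {
        expireIfDue(now);
        if (token.equals(owner)) {
            owner = null;
            return true;
        }
        return false;
    }

    String holder(long now) {
        expireIfDue(now);
        return owner;
    }

    public static void main(String[] args) {
        LockTimeline unsafe = new LockTimeline();
        unsafe.acquire("app1-token", 3, 0);   // t1: application 1 acquires, 3-second lease
        unsafe.acquire("app2-token", 3, 4);   // t3: lease expired at t2, application 2 acquires
        unsafe.unsafeRelease(5);              // t4: application 1 runs a bare delete
        System.out.println(unsafe.holder(5)); // null: application 2 lost its lock

        LockTimeline safe = new LockTimeline();
        safe.acquire("app1-token", 3, 0);
        safe.acquire("app2-token", 3, 4);
        System.out.println(safe.checkedRelease("app1-token", 5)); // false: token mismatch
        System.out.println(safe.holder(5));   // app2-token: lock is intact
    }
}
```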

A lock must only be released by the client that set it. Use the following Lua script to check ownership and delete atomically:

if redis.call("get", KEYS[1]) == ARGV[1] then
    return redis.call("del", KEYS[1])
else
    return 0
end

Renew a lock

If the client cannot complete its work within the lease period, it must renew the lock. Only the client that set the lock can renew it:

if redis.call("get", KEYS[1]) == ARGV[1] then
    return redis.call("expire", KEYS[1], ARGV[2])
else
    return 0
end

Implement distributed locks on Tair

Supported instances: Tair DRAM-based instances and persistent memory-optimized instances.

On these instances, the TairString data type provides the CAD and CAS commands. These commands replace the Lua scripts above with single atomic operations, reducing implementation complexity and improving throughput.

Acquire a lock

Lock acquisition is identical to the Redis approach:

SET resource_1 random_value NX EX 5

Release a lock

The CAD (Compare-And-Delete) command checks ownership and deletes the key in one atomic operation, replacing the Lua script entirely:

CAD resource_1 my_random_value

This is equivalent to: if GET(resource_1) == my_random_value, run DEL(resource_1).

Renew a lock

The CAS (Compare-And-Swap) command extends the lock's expiry in one atomic operation, replacing the Lua script entirely:

CAS resource_1 my_random_value my_random_value EX 10

The CAS command does not check whether the new value equals the original value. Pass the same random value for both the old and new value fields to preserve the lock identity while extending the lease.
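A client that holds a lock across a long task usually renews it on a timer rather than by hand. The following is a minimal sketch of such a timer, not part of Tair or Jedis: `LeaseRenewer` is a hypothetical helper, and the `renew` supplier stands in for whatever issues the CAS call and reports whether it succeeded. The sketch assumes the period is chosen well below the lease length.

```java
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.LockSupport;
import java.util.function.BooleanSupplier;

// Hypothetical helper: calls `renew` periodically and stops at the first failed renewal.
class LeaseRenewer implements AutoCloseable {
    private final ScheduledExecutorService scheduler =
            Executors.newSingleThreadScheduledExecutor();
    private final AtomicBoolean lockLost = new AtomicBoolean(false);

    LeaseRenewer(BooleanSupplier renew, long periodMillis) {
        scheduler.scheduleAtFixedRate(() -> {
            if (!renew.getAsBoolean()) {
                lockLost.set(true);
                // An exception thrown from a periodic task suppresses all later runs.
                throw new IllegalStateException("renewal failed, lock is no longer held");
            }
        }, periodMillis, periodMillis, TimeUnit.MILLISECONDS);
    }

    // The worker should check this flag and abandon its task once the lock is lost.
    boolean isLockLost() {
        return lockLost.get();
    }

    @Override
    public void close() {
        scheduler.shutdownNow();
    }

    public static void main(String[] args) {
        AtomicInteger calls = new AtomicInteger();
        // Fake renewal that succeeds twice and then fails, as if the key had expired.
        try (LeaseRenewer renewer = new LeaseRenewer(() -> calls.incrementAndGet() < 3, 20)) {
            while (!renewer.isLockLost()) {
                LockSupport.parkNanos(5_000_000L); // simulate work in 5 ms slices
            }
            System.out.println("renew attempts: " + calls.get());
        }
    }
}
```

In a real client, the supplier would wrap a call such as the `renewDistributedLock` method from the Jedis samples, and the worker would stop touching the shared resource as soon as `isLockLost()` returns true.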

Jedis sample code

The following Java examples show a complete lock lifecycle using Jedis. All three operations — acquire, release, and renew — use the same resourceKey and randomValue.

Define the CAS and CAD commands

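// Package names below assume Jedis 3.x; other major versions place these classes elsewhere.
import redis.clients.jedis.commands.ProtocolCommand;
import redis.clients.jedis.util.SafeEncoder;

// Registers the Tair-specific CAD and CAS commands so that Jedis can send them.
enum TairCommand implements ProtocolCommand {
    CAD("CAD"), CAS("CAS");

    private final byte[] raw;

    TairCommand(String alt) {
        raw = SafeEncoder.encode(alt);
    }

    @Override
    public byte[] getRaw() {
        return raw;
    }
}

Acquire a lock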

// Runs SET resourceKey randomValue NX EX expireTime; returns true if the lock was acquired.
public boolean acquireDistributedLock(Jedis jedis, String resourceKey, String randomValue, int expireTime) {
    SetParams setParams = new SetParams();
    setParams.nx().ex(expireTime);
    String result = jedis.set(resourceKey, randomValue, setParams);
    return "OK".equals(result);
}

Release a lock

// Runs CAD resourceKey randomValue; returns true only if this client still owned the lock.
public boolean releaseDistributedLock(Jedis jedis, String resourceKey, String randomValue) {
    jedis.getClient().sendCommand(TairCommand.CAD, resourceKey, randomValue);
    Long ret = jedis.getClient().getIntegerReply();
    return 1 == ret;
}

Renew a lock

// Runs CAS resourceKey randomValue randomValue EX expireTime to extend the lease.
public boolean renewDistributedLock(Jedis jedis, String resourceKey, String randomValue, int expireTime) {
    jedis.getClient().sendCommand(TairCommand.CAS, resourceKey, randomValue, randomValue, "EX", String.valueOf(expireTime));
    Long ret = jedis.getClient().getIntegerReply();
    return 1 == ret;
}

Ensure lock consistency after a failover

Master-to-replica replication is asynchronous. If a master node fails after writing a lock but before the write is replicated, the promoted replica has no record of that lock. A second client can then acquire the lock while the first still believes it holds it. This violates the consistency property and, as a result, mutual exclusion.

Three approaches address this risk:

| Approach | Cost | Limitations | Best for |
|---|---|---|---|
| Redlock algorithm | High (requires multiple instances) | Cannot be used with cluster or standard master-replica instances; slower lock acquisition | Highest fault tolerance requirements |
| WAIT command | Low | Does not guarantee consistency if a switchover occurs before WAIT returns | Standard deployments where cost matters |
| Tair semi-synchronous replication | Built-in | Degrades to async if a replica fails | High-concurrency workloads on Tair instances |

Use the Redlock algorithm

The Redlock algorithm, proposed by the founders of the open source Redis project, reduces lock-loss probability by distributing locks across N independent Redis instances. A single master-replica instance may lose a lock at probability k% during a switchover. With Redlock, assuming the instances fail independently of one another, the probability of all N instances losing their locks simultaneously drops to (k%)^N.

The algorithm treats the lock as acquired when it succeeds on M out of N nodes, where 1 < M ≤ N. Not all N nodes need to grant the lock.
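The arithmetic and the M-of-N rule can be illustrated with a short sketch. `RedlockSketch` and its methods are hypothetical names, the probability formula assumes independent instance failures, and the majority quorum is one common choice for M rather than something the text above prescribes. The sketch covers only the counting step, not the network calls to the N instances.

```java
// Illustrates the (k%)^N estimate and the M-of-N acquisition rule. Not a Redlock client.
class RedlockSketch {
    // Probability that all n instances lose the lock, given a per-instance
    // loss probability k expressed as a fraction (1% is 0.01).
    static double allLostProbability(double k, int n) {
        return Math.pow(k, n);
    }

    // Majority quorum: an assumed choice of M that satisfies 1 < M <= N for N >= 2.
    static int majority(int n) {
        return n / 2 + 1;
    }

    // The lock counts as acquired when at least m instances accepted the SET ... NX.
    static boolean acquired(boolean[] perInstanceResults, int m) {
        int granted = 0;
        for (boolean result : perInstanceResults) {
            if (result) {
                granted++;
            }
        }
        return granted >= m;
    }

    public static void main(String[] args) {
        int n = 5;
        int m = majority(n); // 3 of 5
        System.out.println("quorum M = " + m);
        // With k = 1% and N = 5 the estimate is on the order of 1e-10.
        System.out.println("P(all lost) = " + allLostProbability(0.01, n));
        // Three of five instances granted the lock, which meets the quorum.
        System.out.println(acquired(new boolean[] {true, true, false, true, false}, m));
    }
}
```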

Redlock trade-offs:

Lock acquisition and release are slower because multiple instances are involved.

It requires multiple independent ApsaraDB for Redis or self-managed Redis instances, increasing infrastructure costs.

It is incompatible with cluster instances and standard master-replica instances.

Use the WAIT command

The WAIT command blocks the current client until all previous write commands are replicated to a specified number of replica nodes, or until the timeout (in milliseconds) expires. The WAIT command is far more cost-effective than the Redlock algorithm.

After acquiring a lock, run WAIT to confirm replication:

SET resource_1 random_value NX EX 5

WAIT 1 5000

In this example, the timeout period is 5,000 milliseconds. WAIT returns the number of replica nodes that acknowledged the write. If WAIT returns 1, data is synchronized between the master node and a replica node, and consistency is ensured. However, if an HA switchover is triggered before WAIT returns a successful response, data may be lost. In that case, the return value only indicates that synchronization may have failed. If WAIT returns an error or fewer acknowledgements than requested, re-acquire the lock or verify its state before proceeding.

Usage notes:

WAIT only blocks the client that sends it; other clients are unaffected.

Do not run WAIT before releasing a lock. Distributed locks are mutually exclusive by design, so a slightly delayed release does not cause logical failures.
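The acquire-then-confirm flow can be sketched as follows. The Redis calls are abstracted behind standard functional interfaces so that the logic runs without a server: `setNx`, `waitReplicas`, and `release` are hypothetical stand-ins for `SET ... NX EX`, `WAIT`, and an ownership-checked delete. Releasing the lock when replication is not confirmed is one possible policy, chosen here for simplicity; verifying the lock state instead is equally valid.

```java
import java.util.function.BooleanSupplier;
import java.util.function.LongSupplier;

// Treats a lock as held only after the write is confirmed on enough replicas.
class WaitConfirmedLock {
    static boolean acquire(BooleanSupplier setNx,
                           LongSupplier waitReplicas,
                           Runnable release,
                           int requiredReplicas) {
        if (!setNx.getAsBoolean()) {
            return false; // another client holds the lock
        }
        long acknowledged = waitReplicas.getAsLong(); // replicas that confirmed the write
        if (acknowledged >= requiredReplicas) {
            return true; // the lock survives a switchover to a confirmed replica
        }
        // Replication was not confirmed in time: give the lock up instead of trusting it.
        release.run();
        return false;
    }

    public static void main(String[] args) {
        // Fake backends: SET succeeds, WAIT reports one acknowledging replica.
        boolean confirmed = acquire(() -> true, () -> 1L, () -> { }, 1);
        System.out.println(confirmed); // true

        // Fake backends: SET succeeds, WAIT times out with zero acknowledgements.
        boolean unconfirmed = acquire(() -> true, () -> 0L,
                () -> System.out.println("released unconfirmed lock"), 1);
        System.out.println(unconfirmed); // false
    }
}
```

Note that the release path does not call WAIT, in line with the usage note above.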

Use Tair

Tair DRAM-based instances address lock consistency at the infrastructure level:

3x performance: Tair DRAM-based instances provide three times the performance of open source Redis, sustaining high-concurrency lock workloads without service interruption.

Semi-synchronous replication: A success response is returned only after the write is committed to the master node and replicated to at least one replica node. This prevents lock loss after a switchover.

Semi-synchronous replication degrades to asynchronous replication if a replica node fails or a network exception occurs during synchronization.

The CAD and CAS commands further reduce implementation complexity compared to Lua scripts and eliminate the need for multiple independent instances that Redlock requires.