Tair persistent memory-optimized instances use persistent memory (PMEM) to deliver large-capacity, Redis-compatible in-memory databases with built-in data persistence. Each write is persisted at the command level, without relying on traditional disk storage. This reduces costs by up to 30% compared to Redis Open-Source Edition while keeping throughput and latency close to it (approximately 90% of its performance).

Background

Alibaba Cloud began investing in persistent memory research and implementation in 2018. During Double 11 that year, persistent memory was deployed in the core cluster of e-commerce products and significantly reduced costs, making this the first product in China to officially deploy persistent memory in a production environment.

As cloud environments matured and persistent memory technologies improved, Alibaba Cloud developed a new engine for data persistence. Combined with ECS bare metal instances, this engine powers Tair persistent memory-optimized instances, which replace the volatile memory that Redis traditionally uses with PMEM to significantly reduce the risk of data loss.

How it works

Standard Redis relies on append-only file (AOF) rewrites for persistence. Each rewrite triggers a fork() operation, which in large-memory configurations can cause high latency, network jitter, and slow data loading. The result is a forced trade-off between performance and durability.

Persistent memory-optimized instances replace volatile DRAM with PMEM on ECS bare metal instances. PMEM delivers memory-level access latency and throughput without relying on traditional disk storage. A response is returned only after each write is persisted on the master node, giving you command-level durability.
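The difference between acknowledging a write before and after it is persisted can be illustrated with a toy simulation. This is a minimal sketch, not Tair or Redis code: the classes `BufferedFlushStore` and `PersistOnWriteStore` and the helper `lost_after_crash` are hypothetical names that model only the ordering of "persist" and "acknowledge".

```python
class BufferedFlushStore:
    """Toy model (assumption): acknowledge first, flush to durable storage later."""

    def __init__(self):
        self.memory = {}    # volatile state, lost on crash
        self.durable = {}   # state that survives a crash
        self.pending = []   # acknowledged writes that are not yet durable

    def set(self, key, value):
        self.memory[key] = value
        self.pending.append((key, value))
        return "OK"         # acknowledged before the write is durable

    def get(self, key):
        return self.memory.get(key)

    def flush(self):
        # Periodic flush: move acknowledged writes into durable storage.
        for key, value in self.pending:
            self.durable[key] = value
        self.pending.clear()

    def crash_and_recover(self):
        # Unflushed writes vanish; memory is rebuilt from durable state.
        self.pending.clear()
        self.memory = dict(self.durable)


class PersistOnWriteStore:
    """Toy model (assumption): persist each write, then acknowledge."""

    def __init__(self):
        self.durable = {}

    def set(self, key, value):
        self.durable[key] = value   # persisted first
        return "OK"                 # acknowledged only afterwards

    def get(self, key):
        return self.durable.get(key)

    def crash_and_recover(self):
        pass                        # no volatile state to lose


def lost_after_crash(store, writes):
    """Return keys that were acknowledged but are missing after a crash."""
    acked = [key for key, value in writes if store.set(key, value) == "OK"]
    store.crash_and_recover()
    return [key for key in acked if store.get(key) is None]
```

In this model, a crash between flushes loses every acknowledged write in the buffered store, while the persist-on-write store loses none. Real systems differ in many details (replication, flush policy, hardware behavior), so treat this only as an illustration of the ordering described above.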

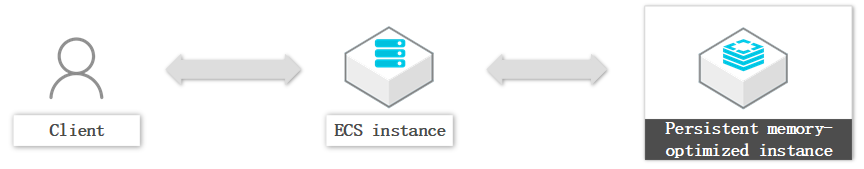

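This architecture also simplifies your data layer. The traditional three-tier stack of application, cache, and persistent storage collapses into two tiers: the application and the persistent memory-optimized instance, which serves as both cache and persistent store.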

Benefits

| Benefit | Description |
|---|---|
| Up to 30% lower cost | PMEM capacity costs significantly less than DRAM, reducing infrastructure spend without sacrificing Redis compatibility. Performance is approximately 90% of Redis Open-Source Edition. |
| Command-level persistence | Every write is persisted before a response is returned. No data loss window from periodic snapshots. |
| No fork-related latency | AOF rewrites no longer trigger fork() operations, eliminating the high latency, network jitter, and slow data loading caused by fork in large-memory configurations. |
| Extended data modules | Supports exString with CAS and CAD commands, the exHash data type, and TairCpc. |
| Redis compatibility | Compatible with most data structures and interfaces of Redis 6.0 or earlier. Includes high availability, auto scaling, logging, intelligent diagnostics, and flexible backup and restoration. |
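As an illustration of the CAS and CAD commands listed in the table, the sketch below wraps them in two helper functions. It assumes a client object that exposes `execute_command(name, *args)`, as redis-py clients do. The argument order (`CAS key oldvalue newvalue`, `CAD key value`) and the return codes (1 on success, 0 on value mismatch, -1 if the key does not exist) are assumptions to verify against the Tair command reference. `FakeClient` is a hypothetical in-memory stand-in so the example runs without a server.

```python
def compare_and_set(client, key, expected, new_value):
    """Set key to new_value only if it currently holds expected."""
    # Assumed syntax: CAS key oldvalue newvalue -> 1 on success.
    return client.execute_command("CAS", key, expected, new_value) == 1


def compare_and_delete(client, key, expected):
    """Delete key only if it currently holds expected."""
    # Assumed syntax: CAD key value -> 1 on success.
    return client.execute_command("CAD", key, expected) == 1


class FakeClient:
    """Hypothetical in-memory stand-in that mimics the assumed semantics."""

    def __init__(self):
        self.data = {}

    def execute_command(self, name, *args):
        if name == "SET":
            key, value = args
            self.data[key] = value
            return True
        if name == "CAS":
            key, old, new = args
            if key not in self.data:
                return -1           # key does not exist
            if self.data[key] != old:
                return 0            # current value does not match
            self.data[key] = new
            return 1
        if name == "CAD":
            key, expected = args
            if key not in self.data:
                return -1
            if self.data[key] != expected:
                return 0
            del self.data[key]
            return 1
        raise ValueError(f"unsupported command: {name}")


if __name__ == "__main__":
    client = FakeClient()
    client.execute_command("SET", "lock:order:42", "token-a")
    # Only the holder of the matching token can release the lock.
    print(compare_and_delete(client, "lock:order:42", "token-b"))
    print(compare_and_delete(client, "lock:order:42", "token-a"))
```

A typical use of compare-and-delete is releasing a lock only when the caller still owns it, which otherwise requires a Lua script in Redis Open-Source Edition. Against a real instance, the same helpers would be called with a connected client in place of `FakeClient`.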

Use cases

Large-scale intermediate data computation

Redis Open-Source Edition handles the performance requirements but at high cost. Other databases such as HBase reduce cost but cannot meet the throughput and latency requirements. Persistent memory-optimized instances hit both targets: near-Redis performance at significantly lower cost, with full data persistence.

This use case is a good fit when your working set is large and access patterns are broad, which are the conditions where PMEM's capacity advantage over DRAM is most valuable.

Gaming services requiring high data reliability

Gaming backends typically combine Redis for low-latency access with MySQL for durability. Persistent memory-optimized instances consolidate both roles: command-level persistence removes the data loss window of Redis, while the streamlined architecture reduces operational complexity and cost compared to a Redis + MySQL combination.