Tair (Redis OSS-compatible) provides three levels of disaster recovery to match different availability requirements — from single-zone high availability (HA) to cross-region active geo-redundancy.

| Solution | Protection scope | Failover behavior | Best for |
|---|---|---|---|
| Single-zone HA | Machine-level failures within one zone | Automatic failover between nodes in the same zone | Standard workloads with baseline availability requirements |
| Zone-disaster recovery | Zone-level failures (power outage, network disruption) | Automatic failover to a replica in a different zone | Workloads in a single region that require zone-level resilience |
| Cross-region disaster recovery | Region-level failures; cross-region latency | Real-time replication across regions managed through synchronization channels | Global deployments, geo-disaster recovery, and active geo-redundancy |

Single-zone HA

All Tair instances run in a single-zone HA architecture by default. The HA system continuously monitors master and replica nodes and triggers automatic failovers to prevent single points of failure (SPOFs).

Three deployment architectures support single-zone HA:

Standard master-replica architecture

A standard master-replica instance runs one master node and one replica node. When the HA system detects a master node failure, it switches workloads to the replica node, which becomes the new master. After the original master recovers, it resumes as the replica.
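The role swap described above can be sketched as a small state change. This is an illustrative model only, not Tair's HA implementation: the `Node` class, the `fail_over` function, and the node names are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Node:
    name: str
    role: str            # "master" or "replica"
    healthy: bool = True


def fail_over(master: Node, replica: Node) -> Tuple[Node, Node]:
    """Return (master, replica) after an optional role swap.

    Hypothetical rule: swap only when the master is unhealthy and the
    replica is healthy enough to take over.
    """
    if master.healthy or not replica.healthy:
        return master, replica
    master.role, replica.role = "replica", "master"
    return replica, master


node_a = Node("node-a", "master")
node_b = Node("node-b", "replica")

node_a.healthy = False                      # simulated master failure
master, replica = fail_over(node_a, node_b)

node_a.healthy = True                       # the original master recovers...
# ...and keeps the replica role; no second swap happens.
master, replica = fail_over(master, replica)
```

After the second call, `node-b` is still the master and `node-a` serves as the replica, matching the behavior described for a recovered master.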

Multi-replica cluster architecture

Data is distributed across shards. Each shard has one master node and multiple replica nodes deployed on different machines. When the master fails, the HA system promotes a replica within the same shard to master.

Read/write splitting architecture

The HA system monitors all nodes. Failover behavior depends on which node fails:

Master node failure: The HA system promotes a replica to master and updates routing and weight information.

Read replica failure: The HA system creates a replacement read replica to handle read requests.

Proxy nodes also monitor each read replica in real time and reduce or stop routing traffic to it in these situations:

Abnormal state: Proxy nodes reduce traffic to the replica. If the replica fails to reconnect after a set number of attempts, proxy nodes stop routing traffic to it until it recovers.

Full data synchronization in progress: Proxy nodes pause routing until synchronization completes.
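The proxy behavior for read replicas can be pictured as a weight function over replica state. The sketch below is a simplified model under stated assumptions: the state names, the weight values, and the `MAX_RECONNECT_ATTEMPTS` threshold are hypothetical and are not documented Tair settings.

```python
from dataclasses import dataclass

# Hypothetical values chosen for illustration only.
NORMAL_WEIGHT = 100
REDUCED_WEIGHT = 10
MAX_RECONNECT_ATTEMPTS = 3


@dataclass
class ReadReplica:
    name: str
    state: str = "normal"        # "normal", "abnormal", or "full_sync"
    failed_reconnects: int = 0


def routing_weight(replica: ReadReplica) -> int:
    """Return the share of read traffic a proxy would send to this replica."""
    if replica.state == "full_sync":
        return 0                 # paused until synchronization completes
    if replica.state == "abnormal":
        if replica.failed_reconnects >= MAX_RECONNECT_ATTEMPTS:
            return 0             # stop routing until the replica recovers
        return REDUCED_WEIGHT    # reduce traffic while reconnecting
    return NORMAL_WEIGHT


healthy = ReadReplica("ro-1")
flaky = ReadReplica("ro-2", state="abnormal", failed_reconnects=1)
down = ReadReplica("ro-3", state="abnormal", failed_reconnects=3)
syncing = ReadReplica("ro-4", state="full_sync")
weights = [routing_weight(r) for r in (healthy, flaky, down, syncing)]
```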

Zone-disaster recovery (multi-zone)

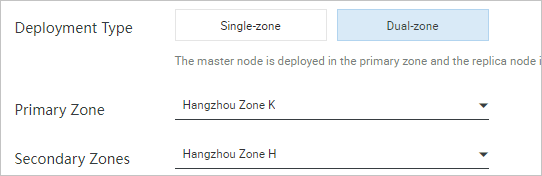

Zone-disaster recovery deploys master and replica nodes in two different zones within the same region. If the zone hosting the master node becomes unavailable due to a power failure or network disruption, the HA system automatically promotes the replica in the other zone to master.

To enable zone-disaster recovery, select the multi-zone deployment mode when creating an instance. For setup details, see Step 1: Create an instance.

How replication works

The replica node is provisioned with the same specifications as the master node and synchronizes data over a dedicated channel.

Tair uses global operation identifiers (OpIDs) — similar to MySQL's global transaction identifiers (GTIDs) — to track synchronization offsets. Lock-free background threads use OpIDs to resume synchronization at the correct position. AOF binary logs (binlogs) are asynchronously replicated from the master to the replica, and you can throttle replication throughput to protect instance performance.
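To illustrate how an offset such as an OpID lets replication resume at the correct position, here is a minimal sketch. It assumes a log ordered by increasing OpID and models throttling as a cap on entries per batch. The names `entries_to_send`, `Replica`, and `max_batch` are hypothetical and do not reflect Tair internals.

```python
from typing import List, Tuple

LogEntry = Tuple[int, str]   # (op_id, command), ordered by increasing op_id


def entries_to_send(log: List[LogEntry], last_applied_op_id: int,
                    max_batch: int) -> List[LogEntry]:
    """Select the next batch after the replica's last applied OpID.

    max_batch stands in for a throughput throttle (hypothetical).
    """
    pending = [entry for entry in log if entry[0] > last_applied_op_id]
    return pending[:max_batch]


class Replica:
    def __init__(self) -> None:
        self.last_applied_op_id = 0
        self.applied: List[str] = []

    def apply(self, batch: List[LogEntry]) -> None:
        for op_id, command in batch:
            if op_id <= self.last_applied_op_id:
                continue                     # already applied; skip duplicates
            self.applied.append(command)
            self.last_applied_op_id = op_id


master_log = [(1, "SET a 1"), (2, "SET b 2"), (3, "INCR a"), (4, "DEL b")]
replica = Replica()

replica.apply(entries_to_send(master_log, replica.last_applied_op_id, max_batch=2))
# The connection drops here. On reconnect, the replica reports its last
# applied OpID and synchronization resumes from the next entry.
replica.apply(entries_to_send(master_log, replica.last_applied_op_id, max_batch=2))
```

Because the replica tracks the last OpID it applied, a reconnect never replays earlier entries and never skips later ones.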

On failover, the system calls an API operation on the configuration server to update proxy routing information and reroute traffic to the new master.
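The routing update on failover can be modeled as a lookup table that proxies consult for each write. The sketch below uses an in-memory stand-in; `ConfigServer`, `update_route`, `Proxy`, the shard ID, and the addresses are all hypothetical and do not represent the actual Tair API.

```python
from typing import Dict


class ConfigServer:
    """Hypothetical in-memory stand-in for the configuration server."""

    def __init__(self) -> None:
        self._routes: Dict[str, str] = {}    # shard id -> master address

    def update_route(self, shard: str, master_addr: str) -> None:
        self._routes[shard] = master_addr

    def lookup(self, shard: str) -> str:
        return self._routes[shard]


class Proxy:
    def __init__(self, config: ConfigServer) -> None:
        self.config = config

    def route_write(self, shard: str) -> str:
        # Writes always go to whichever node is currently registered as master.
        return self.config.lookup(shard)


config = ConfigServer()
config.update_route("shard-0", "10.0.1.10:6379")    # master in the first zone
proxy = Proxy(config)
before = proxy.route_write("shard-0")

# Failover: the replica in the other zone is promoted and registered.
config.update_route("shard-0", "10.0.2.10:6379")
after = proxy.route_write("shard-0")
```

Clients keep connecting to the proxy throughout; only the registered master address changes.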

Cross-region disaster recovery

Global Distributed Cache for Tair reduces latency from cross-region data access and provides the foundation for geo-disaster recovery and active geo-redundancy.

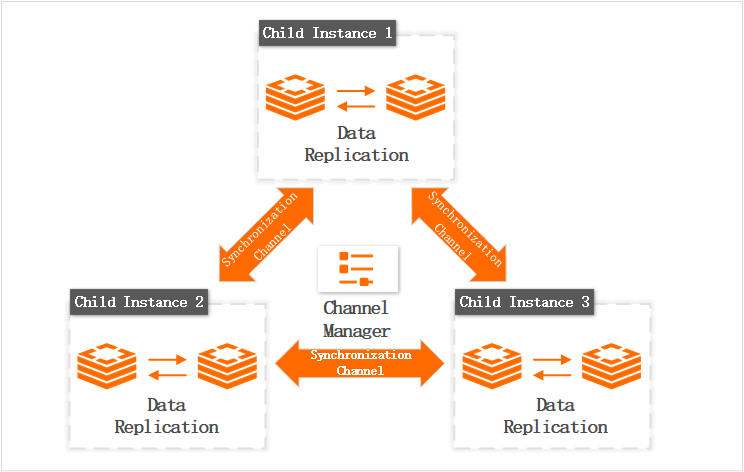

A distributed instance consists of multiple child instances, each in a different region. Child instances synchronize data in real time through synchronization channels. A channel manager monitors child instance health and handles exceptions, such as master-replica switchovers within a child instance.

Key capabilities:

No application-layer redundancy required: Create child instances directly or specify which instances to synchronize. This keeps your application logic focused on business functionality rather than replication management.

Geo-replication: Supports geo-disaster recovery and active geo-redundancy out of the box.

Common use cases: Global deployments in multimedia, gaming, and e-commerce — including geo-disaster recovery, active geo-redundancy, nearby application access, and load balancing.

For architecture details and setup, see Global Distributed Cache.

How failures are handled

Failures are classified as node-level or zone-level. The response depends on how your instance is deployed.

Node failures

| Deployment | What happens on master failure |
|---|---|
| Single-zone, multiple replicas | The system promotes the replica with the lowest replication latency to master and updates the routing relationship. |
| Multi-zone | The system promotes a replica in another zone to master and updates the routing relationship. Cross-zone access between the instance and dependent services may temporarily increase. |

In a multi-zone cluster, if replicas exist in both the primary and secondary zones, workloads are preferentially switched to a replica in the primary zone to avoid cross-zone access.
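The selection rules above can be combined into one ordering: prefer healthy replicas in the primary zone, then prefer the lowest replication latency. This is a sketch under assumptions. Using latency as the tie-breaker inside a zone is an assumption for illustration, and the `Replica` fields and `pick_new_master` function are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Replica:
    name: str
    zone: str
    lag_ms: int          # replication latency behind the master
    healthy: bool = True


def pick_new_master(replicas: List[Replica], primary_zone: str) -> Optional[Replica]:
    """Pick a promotion target, or None if no healthy replica exists."""
    candidates = [r for r in replicas if r.healthy]
    if not candidates:
        return None
    # False sorts before True, so primary-zone replicas come first;
    # lag_ms then breaks ties within a zone.
    return min(candidates, key=lambda r: (r.zone != primary_zone, r.lag_ms))


replicas = [
    Replica("r1", zone="zone-b", lag_ms=5),
    Replica("r2", zone="zone-a", lag_ms=20),
    Replica("r3", zone="zone-a", lag_ms=8),
]
same_zone_choice = pick_new_master(replicas, primary_zone="zone-a")

# If every replica in the primary zone is unavailable, the choice falls
# back to the secondary zone, which introduces cross-zone access.
replicas[1].healthy = False
replicas[2].healthy = False
cross_zone_choice = pick_new_master(replicas, primary_zone="zone-a")
```

In this example the first choice is `r3`, even though `r1` has lower latency, because `r3` keeps traffic in the primary zone. The fallback choice is `r1`.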

Zone-level failures

Zone-level failures such as power outages or fires take an entire data center offline.

| Deployment | Impact | Recovery |
|---|---|---|
| Single zone | The instance becomes unavailable. | Wait for the zone to recover, or create a new instance in a different zone from historical backup data. |
| Multi-zone | An automatic failover is triggered to the replica in the healthy zone. | No manual intervention required. |

To minimize downtime, deploy across multiple zones and create multiple replica nodes in each zone. Balance this against failure probability, business data criticality, and cost.

For Global Distributed Cache, a single child instance failure does not affect the availability of other child instances. Deploy child instances across multiple zones to prevent data write failures caused by a single child instance going down.

What's next

Prevent cross-zone switchover by specifying a custom number of nodes