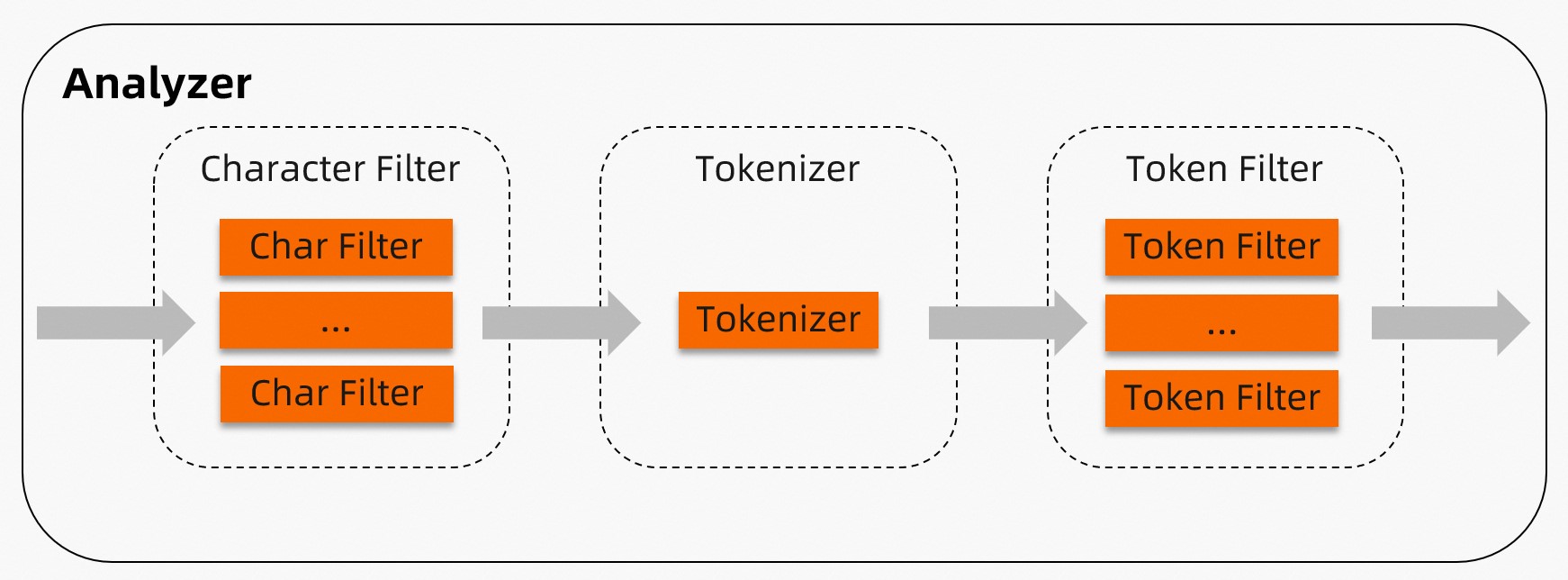

Analyzers parse and tokenize text fields so that TairSearch can build an index and answer full-text queries. Each analyzer runs three stages in order: character filters, a tokenizer, and token filters. TairSearch ships with nine built-in analyzers. For specialized requirements, you can build a custom analyzer from individual components.

| Stage | Purpose | Count per analyzer |

|---|---|---|

| Character filter | Preprocesses the raw text before tokenization (for example, replace :) with _happy_) |

Zero or more, run in order |

| Tokenizer | Splits the preprocessed text into tokens | Exactly one |

| Token filter | Post-processes each token (for example, lowercase, stop-word removal, stemming) | Zero or more, run in order |

Choose an analyzer

| Analyzer | Best for | Tokenizes by | Lowercases | Filters stop words |

|---|---|---|---|---|

| Standard | Most languages | Unicode word boundaries | Yes | Yes |

| Stop | Most languages (stop-word focus) | Non-letter characters | Yes | Yes |

| Jieba | Chinese text | Trained dictionary | English tokens only | Yes |

| IK | Chinese text (Elasticsearch-compatible) | Trained dictionary (two modes) | Yes (default) | Optional |

| Pattern | Custom delimiter logic | Regex pattern | Optional | Optional |

| Whitespace | Pre-tokenized or structured text | Whitespace characters | No | No |

| Simple | Western text, case-insensitive | Non-letter characters | Yes | No |

| Keyword | Exact-match fields | No splitting (whole field = one token) | No | No |

| Language | Specific natural languages | Language-specific rules | Yes | Yes |

How it works

An analyzer processes a document through three sequential stages.

Stage 1 — Character filter: Zero or more character filters preprocess the raw document text. Filters run in the order they are listed. For example, a mapping character filter can replace "(:" with "happy" before tokenization begins.

Stage 2 — Tokenizer: Exactly one tokenizer splits the (possibly filtered) text into tokens. For example, the whitespace tokenizer splits "I am very happy" into ["I", "am", "very", "happy"].

Stage 3 — Token filter: Zero or more token filters post-process the tokens from the tokenizer. Filters run in the order they are listed. For example, the stop token filter removes common words such as "the" and "is".

Built-in analyzers

Standard

The standard analyzer is the default choice for most languages. It splits text on Unicode word boundaries (per Unicode Standard Annex #29), lowercases all tokens, and removes common stop words.

Components: standard tokenizer → lowercase token filter → stop token filter

No character filters are included.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

stopwords |

Array of stop words to filter. Replaces the default list entirely. | See below |

max_token_length |

Maximum character length per token. Tokens longer than this are split at the limit. | 255 |

Default stop words:

["a", "an", "and", "are", "as", "at", "be", "but", "by", "for", "if", "in",

"into", "is", "it", "no", "not", "of", "on", "or", "such", "that", "the",

"their", "then", "there", "these", "they", "this", "to", "was", "will", "with"]Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "standard"

}

}

}

}// Custom stop words and token length

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"type": "standard",

"max_token_length": 10,

"stopwords": ["memory", "disk", "is", "a"]

}

}

}

}

}Stop

The stop analyzer splits text at any non-letter character, lowercases all tokens, and removes stop words.

Components: lowercase tokenizer → stop token filter

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

stopwords |

Array of stop words to filter. Replaces the default list entirely. | Same as standard |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "stop"

}

}

}

}// Custom stop words

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"type": "stop",

"stopwords": ["memory", "disk", "is", "a"]

}

}

}

}

}Jieba

The jieba analyzer is recommended for Chinese text. It splits text using jieba dictionary-based segmentation, lowercases English tokens, and removes stop words.

Components: jieba tokenizer → lowercase token filter → stop token filter

-

The jieba analyzer loads a 20 MB built-in dictionary into memory. Only one copy is loaded globally. The first use of jieba may cause a brief latency spike while the dictionary loads.

-

Words in a custom dictionary cannot contain spaces or any of the following characters:

\t,\n,,,。

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

userwords |

Array of strings added to the default dictionary. See the Jieba default dictionarydefault jieba dictionary. | Empty |

use_hmm |

Use a hidden Markov model (HMM) to handle out-of-vocabulary words. | true |

stopwords |

Array of stop words to filter. Replaces the default list entirely. See the Jieba default stop wordsdefault jieba stop words. | Built-in list |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "jieba"

}

}

}

}// Custom dictionary, stop words, and HMM

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"type": "jieba",

"stopwords": ["memory", "disk", "is", "a"],

"userwords": ["Redis", "open-source", "flexible"],

"use_hmm": true

}

}

}

}

}IK

The IK analyzer splits Chinese text and is compatible with the IK analyzer plug-in for Alibaba Cloud Elasticsearch. It supports two modes:

-

`ik_max_word`: identifies all possible tokens.

-

`ik_smart`: filters the results of the

ik_max_wordmode to identify the most possible tokens.

Components: IK tokenizer (no token filters by default)

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

stopwords |

Array of stop words to filter. Replaces the default list entirely. | Same as standard |

userwords |

Array of strings added to the default IK dictionary. See the default IK dictionary. | Empty |

quantifiers |

Array of quantifiers added to the default IK quantifier dictionary. See the default quantifier dictionary. | Empty |

enable_lowercase |

Convert uppercase letters to lowercase before tokenization. | true |

If your custom dictionary contains uppercase letters, set enable_lowercase to false. Lowercase conversion happens before splitting, so uppercase entries in the dictionary would never match.

Configuration examples:

// Default configuration: both IK modes

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "ik_smart"

},

"f1": {

"type": "text",

"analyzer": "ik_max_word"

}

}

}

}// Custom stop words, dictionary, and quantifiers

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_ik_smart_analyzer"

},

"f1": {

"type": "text",

"analyzer": "my_ik_max_word_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_ik_smart_analyzer": {

"type": "ik_smart",

"stopwords": ["memory", "disk", "is", "a"],

"userwords": ["Redis", "open-source", "flexible"],

"quantifiers": ["ns"],

"enable_lowercase": false

},

"my_ik_max_word_analyzer": {

"type": "ik_max_word",

"stopwords": ["memory", "disk", "is", "a"],

"userwords": ["Redis", "open-source", "flexible"],

"quantifiers": ["ns"],

"enable_lowercase": false

}

}

}

}

}Pattern

The pattern analyzer splits text using a regular expression. By default, the matched text is treated as a delimiter (tokens are the text between matches). It also lowercases tokens and filters stop words.

Components: pattern tokenizer → lowercase token filter → stop token filter

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

pattern |

Regular expression. Text matching the pattern is used as a delimiter. See RE2 syntax. | \W+ |

stopwords |

Array of stop words. Replaces the default list entirely. | Same as standard |

lowercase |

Convert tokens to lowercase. | true |

flags |

Set to CASE_INSENSITIVE to make the regex case-insensitive. |

Empty (case-sensitive) |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "pattern"

}

}

}

}// Custom pattern with case-insensitive matching

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"type": "pattern",

"pattern": "\\'([^\\']+)\\'",

"stopwords": ["aaa", "@"],

"lowercase": false,

"flags": "CASE_INSENSITIVE"

}

}

}

}

}Whitespace

The whitespace analyzer splits text at whitespace characters. It does not lowercase tokens or remove stop words.

Components: whitespace tokenizer

Optional parameters: None

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "whitespace"

}

}

}

}Simple

The simple analyzer splits text at any non-letter character and lowercases all tokens. It does not filter stop words.

Components: lowercase tokenizer

Optional parameters: None

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "simple"

}

}

}

}Keyword

The keyword analyzer treats the entire field value as a single token without any splitting. Use it for fields that require exact-match queries, such as IDs, status codes, or tags.

Components: keyword tokenizer

Optional parameters: None

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "keyword"

}

}

}

}Language

The language analyzer supports language-specific tokenization and stop-word removal for a fixed set of languages: arabic, cjk, chinese, brazilian, czech, german, greek, persian, french, dutch, and russian.

Optional parameters:

| Parameter | Description | Default | Supported languages |

|---|---|---|---|

stopwords |

Array of stop words. Replaces the default list. See Appendix 4 for defaults. | Language-specific | All except chinese |

stem_exclusion |

Array of words whose stems are not extracted. For example, adding "apples" prevents it from being reduced to "apple". |

Empty | brazilian, german, french, dutch |

The stop words of the chinese analyzer cannot be modified.

Configuration examples:

// Default configuration (Arabic)

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "arabic"

}

}

}

}// Custom stop words and stem exclusion (German)

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"type": "german",

"stopwords": ["ein"],

"stem_exclusion": ["speicher"]

}

}

}

}

}Custom analyzers

Build a custom analyzer when no built-in analyzer fits your needs. Define the analyzer in settings and reference it by name in mappings.

Parameters:

| Parameter | Required | Description | Valid values |

|---|---|---|---|

type |

Yes | Identifies this as a custom analyzer. | custom |

tokenizer |

Yes | The tokenizer to use. Only one is allowed. | whitespace, lowercase, standard, classic, letter, keyword, jieba, pattern, ik_max_word, ik_smart |

char_filter |

No | Array of character filters to apply before tokenization. | mapping (see Appendix 1) |

filter |

No | Array of token filters to apply after tokenization. | classic, elision, lowercase, snowball, stop, asciifolding, length, arabic_normalization, persian_normalization (see Appendix 3) |

Example: custom analyzer with emoticon replacement and stop-word removal

// Character filters replace emoticons and expand "&" before tokenization.

// The whitespace tokenizer splits on spaces.

// Token filters lowercase and remove stop words.

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["lowercase", "stop"],

"char_filter": ["emoticons", "conjunctions"]

}

},

"char_filter": {

"emoticons": {

"type": "mapping",

"mappings": [":) => _happy_", ":( => _sad_"]

},

"conjunctions": {

"type": "mapping",

"mappings": ["&=>and"]

}

}

}

}

}Appendix 1: Supported character filters

Mapping character filter

Replaces specified strings using key-value pairs. When the input contains a key, it is replaced with the corresponding value. Multiple mapping character filters can be used in a single analyzer.

Parameters:

| Parameter | Required | Description |

|---|---|---|

mappings |

Yes | Array of replacement rules. Each rule must use the format "key => value". For example: "& => and". |

Configuration example:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "standard",

"char_filter": ["emoticons"]

}

},

"char_filter": {

"emoticons": {

"type": "mapping",

"mappings": [":) => _happy_", ":( => _sad_"]

}

}

}

}

}Appendix 2: Supported tokenizers

whitespace

Splits text at whitespace characters. Tokens that exceed max_token_length are split at the limit.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

max_token_length |

Maximum character length per token. Tokens longer than this are split at the limit. | 255 |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace"

}

}

}

}

}// Custom max token length

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "token1"

}

},

"tokenizer": {

"token1": {

"type": "whitespace",

"max_token_length": 2

}

}

}

}

}standard

Splits text using the Unicode Text Segmentation algorithm (Unicode Standard Annex #29). Suitable for most languages.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

max_token_length |

Maximum character length per token. Tokens longer than this are split at the limit. | 255 |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "standard"

}

}

}

}

}// Custom max token length

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "token1"

}

},

"tokenizer": {

"token1": {

"type": "standard",

"max_token_length": 2

}

}

}

}

}classic

Splits text using English grammar rules and handles specific patterns specially:

-

Splits at punctuation and removes it. Periods (

.) surrounded by non-whitespace are kept — for example,red.appleis not split, butred. appleproducesredandapple. -

Splits at hyphens, unless the token contains digits (interpreted as a product number and kept intact).

-

Recognizes email addresses and hostnames as single tokens.

Tokens that exceed max_token_length are skipped, not split.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

max_token_length |

Maximum character length per token. Tokens longer than this are skipped. | 255 |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "classic"

}

}

}

}

}// Custom max token length

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "token1"

}

},

"tokenizer": {

"token1": {

"type": "classic",

"max_token_length": 2

}

}

}

}

}letter

Splits text at any non-letter character. Works well for European languages.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "letter"

}

}

}

}

}lowercase

Splits text at any non-letter character and converts all tokens to lowercase. Equivalent to combining the letter tokenizer with the lowercase token filter, but faster because it traverses the document only once.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "lowercase"

}

}

}

}

}keyword

Treats the entire input as a single token without splitting. Typically paired with a token filter such as lowercase.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "keyword"

}

}

}

}

}jieba

Splits Chinese text using a trained dictionary. Recommended for Chinese-language fields.

Words in a custom dictionary cannot contain spaces or any of the following characters: \t, \n, ,, 。

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

userwords |

Array of strings added to the default dictionary. See the default jieba dictionary. | Empty |

use_hmm |

Use a hidden Markov model (HMM) to handle out-of-vocabulary words. | true |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "jieba"

}

}

}

}

}// Custom dictionary

{

"mappings": {

"properties": {

"f1": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "token1"

}

},

"tokenizer": {

"token1": {

"type": "jieba",

"userwords": ["Redis", "open-source", "flexible"],

"use_hmm": true

}

}

}

}

}pattern

Splits text using a regular expression. The matched text is treated as a delimiter by default. Use the group parameter to treat matched text as tokens instead.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

pattern |

Regular expression. See RE2 syntax. | \W+ |

group |

Controls how the regex result is used. -1 uses matched text as delimiters. 0 uses the full match as a token. 1 or higher uses the corresponding capture group as a token. |

-1 |

flags |

Set to CASE_INSENSITIVE to make the regex case-insensitive. |

Empty (case-sensitive) |

Example of `group` behavior:

Regex: "a(b+)c", input: "abbbcdefabc"

-

group: 0→ tokens:[ abbbc, abc ](full matches) -

group: 1→ tokens:[ bbb, b ](first capture group)

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "pattern"

}

}

}

}

}// Custom pattern with capture group

{

"mappings": {

"properties": {

"f1": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "pattern_tokenizer"

}

},

"tokenizer": {

"pattern_tokenizer": {

"type": "pattern",

"pattern": "AB(A(\\w+)C)",

"flags": "CASE_INSENSITIVE",

"group": 2

}

}

}

}

}IK

Splits Chinese text. Supports two modes:

-

`ik_max_word`: identifies all possible tokens (maximum granularity).

-

`ik_smart`: identifies the most likely tokens (coarser granularity).

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

stopwords |

Array of stop words. Replaces the default list entirely. | Same as standard |

userwords |

Array of strings added to the default IK dictionary. See the default IK dictionary. | Empty |

quantifiers |

Array of quantifiers added to the default quantifier dictionary. See the default quantifier dictionary. | Empty |

enable_lowercase |

Convert uppercase letters to lowercase before tokenization. | true |

If your custom dictionary contains uppercase letters, set enable_lowercase to false. Lowercase conversion happens before splitting.

Configuration examples:

// Default configuration: both IK modes

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_ik_smart_analyzer"

},

"f1": {

"type": "text",

"analyzer": "my_custom_ik_max_word_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_ik_smart_analyzer": {

"type": "custom",

"tokenizer": "ik_smart"

},

"my_custom_ik_max_word_analyzer": {

"type": "custom",

"tokenizer": "ik_max_word"

}

}

}

}

}// Custom dictionary, stop words, and quantifiers

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_ik_smart_analyzer"

},

"f1": {

"type": "text",

"analyzer": "my_custom_ik_max_word_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_ik_smart_analyzer": {

"type": "custom",

"tokenizer": "my_ik_smart_tokenizer"

},

"my_custom_ik_max_word_analyzer": {

"type": "custom",

"tokenizer": "my_ik_max_word_tokenizer"

}

},

"tokenizer": {

"my_ik_smart_tokenizer": {

"type": "ik_smart",

"userwords": ["The tokenizer for the Chinese language", "The custom stop words"],

"stopwords": ["about", "test"],

"quantifiers": ["ns"],

"enable_lowercase": false

},

"my_ik_max_word_tokenizer": {

"type": "ik_max_word",

"userwords": ["The tokenizer for the Chinese language", "The custom stop words"],

"stopwords": ["about", "test"],

"quantifiers": ["ns"],

"enable_lowercase": false

}

}

}

}

}Appendix 3: Supported token filters

classic

Removes possessive 's from the end of tokens and strips periods from acronyms. For example, Fig. becomes Fig.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "classic",

"filter": ["classic"]

}

}

}

}

}elision

Removes specified elisions from the beginning of tokens. Primarily used for French text (for example, l'avion → avion).

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

articles |

Array of elisions to remove. Replaces the default list entirely. | ["l", "m", "t", "qu", "n", "s", "j"] |

articles_case |

Whether elision matching is case-sensitive. | false |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["elision"]

}

}

}

}

}// Custom elisions with case-sensitive matching

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["elision_filter"]

}

},

"filter": {

"elision_filter": {

"type": "elision",

"articles": ["l", "m", "t", "qu", "n", "s", "j"],

"articles_case": true

}

}

}

}

}lowercase

Converts all tokens to lowercase.

Optional parameters:

| Parameter | Description | Valid values |

|---|---|---|

language |

Apply language-specific lowercasing rules. If not set, standard English rules apply. | greek, russian |

Configuration examples:

// Default configuration (English)

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["lowercase"]

}

}

}

}

}// Language-specific lowercasing (Greek and Russian)

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_greek_analyzer"

},

"f1": {

"type": "text",

"analyzer": "my_custom_russian_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_greek_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["greek_lowercase"]

},

"my_custom_russian_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["russian_lowercase"]

}

},

"filter": {

"greek_lowercase": {

"type": "lowercase",

"language": "greek"

},

"russian_lowercase": {

"type": "lowercase",

"language": "russian"

}

}

}

}

}snowball

Extracts the stem from each token. For example, cats becomes cat and running becomes run.

Optional parameters:

| Parameter | Description | Default | Valid values |

|---|---|---|---|

language |

The language whose stemming rules to apply. | english |

english, german, french, dutch |

Configuration examples:

// Default configuration (English)

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["snowball"]

}

}

}

}

}// English stemming with standard tokenizer

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "standard",

"filter": ["my_filter"]

}

},

"filter": {

"my_filter": {

"type": "snowball",

"language": "english"

}

}

}

}

}stop

Removes stop words from the token stream.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

stopwords |

Array of stop words. Replaces the default list entirely. | Same as standard |

ignoreCase |

Whether stop-word matching is case-insensitive. | false |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["stop"]

}

}

}

}

}// Custom stop words with case-insensitive matching

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "standard",

"filter": ["stop_filter"]

}

},

"filter": {

"stop_filter": {

"type": "stop",

"stopwords": ["the"],

"ignore_case": true

}

}

}

}

}asciifolding

Converts alphabetic, numeric, and symbolic characters outside the Basic Latin Unicode block to their ASCII equivalents. For example, é becomes e and ü becomes u. Use this filter to normalize accented characters in European text.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "standard",

"filter": ["asciifolding"]

}

}

}

}

}length

Removes tokens that are shorter or longer than specified character lengths.

Optional parameters:

| Parameter | Description | Default |

|---|---|---|

min |

Minimum number of characters a token must have to be kept. | 0 |

max |

Maximum number of characters a token can have to be kept. | 2147483647 (2^31 - 1) |

Configuration examples:

// Default configuration

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["length"]

}

}

}

}

}// Keep only tokens between 2 and 5 characters

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_custom_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_custom_analyzer": {

"type": "custom",

"tokenizer": "whitespace",

"filter": ["length_filter"]

}

},

"filter": {

"length_filter": {

"type": "length",

"max": 5,

"min": 2

}

}

}

}

}Normalization

Normalizes language-specific characters. Use arabic_normalization for Arabic text and persian_normalization for Persian text. Pair this filter with the standard tokenizer for best results.

Configuration:

{

"mappings": {

"properties": {

"f0": {

"type": "text",

"analyzer": "my_arabic_analyzer"

},

"f1": {

"type": "text",

"analyzer": "my_persian_analyzer"

}

}

},

"settings": {

"analysis": {

"analyzer": {

"my_arabic_analyzer": {

"type": "custom",

"tokenizer": "arabic",

"filter": ["arabic_normalization"]

},

"my_persian_analyzer": {

"type": "custom",

"tokenizer": "arabic",

"filter": ["persian_normalization"]

}

}

}

}

}Appendix 4: Default stop words for language analyzers

arabic

["من","ومن","منها","منه","في","وفي","فيها","فيه","و","ف","ثم","او","أو","ب","بها","به","ا","أ","اى","اي","أي","أى","لا","ولا","الا","ألا","إلا","لكن","ما","وما","كما","فما","عن","مع","اذا","إذا","ان","أن","إن","انها","أنها","إنها","انه","أنه","إنه","بان","بأن","فان","فأن","وان","وأن","وإن","التى","التي","الذى","الذي","الذين","الى","الي","إلى","إلي","على","عليها","عليه","اما","أما","إما","ايضا","أيضا","كل","وكل","لم","ولم","لن","ولن","هى","هي","هو","وهى","وهي","وهو","فهى","فهي","فهو","انت","أنت","لك","لها","له","هذه","هذا","تلك","ذلك","هناك","كانت","كان","يكون","تكون","وكانت","وكان","غير","بعض","قد","نحو","بين","بينما","منذ","ضمن","حيث","الان","الآن","خلال","بعد","قبل","حتى","عند","عندما","لدى","جميع"]cjk

["with","will","to","this","there","then","the","t","that","such","s","on","not","no","it","www","was","is","","into","their","or","in","if","for","by","but","they","be","these","at","are","as","and","of","a"]brazilian

["uns","umas","uma","teu","tambem","tal","suas","sobre","sob","seu","sendo","seja","sem","se","quem","tua","que","qualquer","porque","por","perante","pelos","pelo","outros","outro","outras","outra","os","o","nesse","nas","na","mesmos","mesmas","mesma","um","neste","menos","quais","mediante","proprio","logo","isto","isso","ha","estes","este","propios","estas","esta","todas","esses","essas","toda","entre","nos","entao","em","eles","qual","elas","tuas","ela","tudo","do","mesmo","diversas","todos","diversa","seus","dispoem","ou","dispoe","teus","deste","quer","desta","diversos","desde","quanto","depois","demais","quando","essa","deles","todo","pois","dele","dela","dos","de","da","nem","cujos","das","cujo","durante","cujas","portanto","cuja","contudo","ele","contra","como","com","pelas","assim","as","aqueles","mais","esse","aquele","mas","apos","aos","aonde","sua","e","ao","antes","nao","ambos","ambas","alem","ainda","a"]czech

["a","s","k","o","i","u","v","z","dnes","cz","tímto","budeš","budem","byli","jseš","muj","svým","ta","tomto","tohle","tuto","tyto","jej","zda","proc","máte","tato","kam","tohoto","kdo","kterí","mi","nám","tom","tomuto","mít","nic","proto","kterou","byla","toho","protože","asi","ho","naši","napište","re","což","tím","takže","svých","její","svými","jste","aj","tu","tedy","teto","bylo","kde","ke","pravé","ji","nad","nejsou","ci","pod","téma","mezi","pres","ty","pak","vám","ani","když","však","neg","jsem","tento","clánku","clánky","aby","jsme","pred","pta","jejich","byl","ješte","až","bez","také","pouze","první","vaše","která","nás","nový","tipy","pokud","muže","strana","jeho","své","jiné","zprávy","nové","není","vás","jen","podle","zde","už","být","více","bude","již","než","který","by","které","co","nebo","ten","tak","má","pri","od","po","jsou","jak","další","ale","si","se","ve","to","jako","za","zpet","ze","do","pro","je","na","atd","atp","jakmile","pricemž","já","on","ona","ono","oni","ony","my","vy","jí","ji","me","mne","jemu","tomu","tem","temu","nemu","nemuž","jehož","jíž","jelikož","jež","jakož","nacež"]german

["wegen","mir","mich","dich","dir","ihre","wird","sein","auf","durch","ihres","ist","aus","von","im","war","mit","ohne","oder","kein","wie","was","es","sie","mein","er","du","daß","dass","die","als","ihr","wir","der","für","das","einen","wer","einem","am","und","eines","eine","in","einer"]greek

["ο","η","το","οι","τα","του","τησ","των","τον","την","και","κι","κ","ειμαι","εισαι","ειναι","ειμαστε","ειστε","στο","στον","στη","στην","μα","αλλα","απο","για","προσ","με","σε","ωσ","παρα","αντι","κατα","μετα","θα","να","δε","δεν","μη","μην","επι","ενω","εαν","αν","τοτε","που","πωσ","ποιοσ","ποια","ποιο","ποιοι","ποιεσ","ποιων","ποιουσ","αυτοσ","αυτη","αυτο","αυτοι","αυτων","αυτουσ","αυτεσ","αυτα","εκεινοσ","εκεινη","εκεινο","εκεινοι","εκεινεσ","εκεινα","εκεινων","εκεινουσ","οπωσ","ομωσ","ισωσ","οσο","οτι"]persian

["انان","نداشته","سراسر","خياه","ايشان","وي","تاكنون","بيشتري","دوم","پس","ناشي","وگو","يا","داشتند","سپس","هنگام","هرگز","پنج","نشان","امسال","ديگر","گروهي","شدند","چطور","ده","و","دو","نخستين","ولي","چرا","چه","وسط","ه","كدام","قابل","يك","رفت","هفت","همچنين","در","هزار","بله","بلي","شايد","اما","شناسي","گرفته","دهد","داشته","دانست","داشتن","خواهيم","ميليارد","وقتيكه","امد","خواهد","جز","اورده","شده","بلكه","خدمات","شدن","برخي","نبود","بسياري","جلوگيري","حق","كردند","نوعي","بعري","نكرده","نظير","نبايد","بوده","بودن","داد","اورد","هست","جايي","شود","دنبال","داده","بايد","سابق","هيچ","همان","انجا","كمتر","كجاست","گردد","كسي","تر","مردم","تان","دادن","بودند","سري","جدا","ندارند","مگر","يكديگر","دارد","دهند","بنابراين","هنگامي","سمت","جا","انچه","خود","دادند","زياد","دارند","اثر","بدون","بهترين","بيشتر","البته","به","براساس","بيرون","كرد","بعضي","گرفت","توي","اي","ميليون","او","جريان","تول","بر","مانند","برابر","باشيم","مدتي","گويند","اكنون","تا","تنها","جديد","چند","بي","نشده","كردن","كردم","گويد","كرده","كنيم","نمي","نزد","روي","قصد","فقط","بالاي","ديگران","اين","ديروز","توسط","سوم","ايم","دانند","سوي","استفاده","شما","كنار","داريم","ساخته","طور","امده","رفته","نخست","بيست","نزديك","طي","كنيد","از","انها","تمامي","داشت","يكي","طريق","اش","چيست","روب","نمايد","گفت","چندين","چيزي","تواند","ام","ايا","با","ان","ايد","ترين","اينكه","ديگري","راه","هايي","بروز","همچنان","پاعين","كس","حدود","مختلف","مقابل","چيز","گيرد","ندارد","ضد","همچون","سازي","شان","مورد","باره","مرسي","خويش","برخوردار","چون","خارج","شش","هنوز","تحت","ضمن","هستيم","گفته","فكر","بسيار","پيش","براي","روزهاي","انكه","نخواهد","بالا","كل","وقتي","كي","چنين","كه","گيري","نيست","است","كجا","كند","نيز","يابد","بندي","حتي","توانند","عقب","خواست","كنند","بين","تمام","همه","ما","باشند","مثل","شد","اري","باشد","اره","طبق","بعد","اگر","صورت","غير","جاي","بيش","ريزي","اند","زيرا","چگونه","بار","لطفا","مي","درباره","من","ديده","همين","گذاري","برداري","علت","گذاشته","هم","فوق","نه","ها","شوند","اباد","همواره","هر","اول","خواهند","چهار","نام","امروز","مان","هاي","قبل","كنم","سعي","تازه","را","هستند","زير","جلوي","عنوان","بود"]french

["ô","être","vu","vous","votre","un","tu","toute","tout","tous","toi","tiens","tes","suivant","soit","soi","sinon","siennes","si","se","sauf","s","quoi","vers","qui","quels","ton","quelle","quoique","quand","près","pourquoi","plus","à","pendant","partant","outre","on","nous","notre","nos","tienne","ses","non","qu","ni","ne","mêmes","même","moyennant","mon","moins","va","sur","moi","miens","proche","miennes","mienne","tien","mien","n","malgré","quelles","plein","mais","là","revoilà","lui","leurs","","toutes","le","où","la","l","jusque","jusqu","ils","hélas","ou","hormis","laquelle","il","eu","nôtre","etc","est","environ","une","entre","en","son","elles","elle","dès","durant","duquel","été","du","voici","par","dont","donc","voilà","hors","doit","plusieurs","diverses","diverse","divers","devra","devers","tiennes","dessus","etre","dessous","desquels","desquelles","ès","et","désormais","des","te","pas","derrière","depuis","delà","hui","dehors","sans","dedans","debout","vôtre","de","dans","nôtres","mes","d","y","vos","je","concernant","comme","comment","combien","lorsque","ci","ta","nບnmoins","lequel","chez","contre","ceux","cette","j","cet","seront","que","ces","leur","certains","certaines","puisque","certaine","certain","passé","cependant","celui","lesquelles","celles","quel","celle","devant","cela","revoici","eux","ceci","sienne","merci","ce","c","siens","les","avoir","sous","avec","pour","parmi","avant","car","avait","sont","me","auxquels","sien","sa","excepté","auxquelles","aux","ma","autres","autre","aussi","auquel","aujourd","au","attendu","selon","après","ont","ainsi","ai","afin","vôtres","lesquels","a"]dutch

["andere","uw","niets","wil","na","tegen","ons","wordt","werd","hier","eens","onder","alles","zelf","hun","dus","kan","ben","meer","iets","me","veel","omdat","zal","nog","altijd","ja","want","u","zonder","deze","hebben","wie","zij","heeft","hoe","nu","heb","naar","worden","haar","daar","der","je","doch","moet","tot","uit","bij","geweest","kon","ge","zich","wezen","ze","al","zo","dit","waren","men","mijn","kunnen","wat","zou","dan","hem","om","maar","ook","er","had","voor","of","als","reeds","door","met","over","aan","mij","was","is","geen","zijn","niet","iemand","het","hij","een","toen","in","toch","die","dat","te","doen","ik","van","op","en","de"]russian

["а","без","более","бы","был","была","были","было","быть","в","вам","вас","весь","во","вот","все","всего","всех","вы","где","да","даже","для","до","его","ее","ей","ею","если","есть","еще","же","за","здесь","и","из","или","им","их","к","как","ко","когда","кто","ли","либо","мне","может","мы","на","надо","наш","не","него","нее","нет","ни","них","но","ну","о","об","однако","он","она","они","оно","от","очень","по","под","при","с","со","так","также","такой","там","те","тем","то","того","тоже","той","только","том","ты","у","уже","хотя","чего","чей","чем","что","чтобы","чье","чья","эта","эти","это","я"]