PolarDB for MySQL Multi-master Cluster (Limitless) Edition extends the cluster from a single-writer model to a multiple-writer model. Each read/write (RW) node handles concurrent writes for its assigned databases and data objects. You can reassign databases or objects between nodes in seconds to rebalance write load.

Key concepts

| Concept | Description |

|---|---|

| RW node | A read/write compute node. Each database or data object is assigned to exactly one RW node for writes. |

| Database isolation level | The default mode. All objects in a database write through a single RW node. |

| Data object isolation level | An optional mode where individual objects in the same database (tables, views, triggers, functions, stored procedures, and events) can be assigned to different RW nodes. |

| Endpoint | The RW node assigned to a database or data object. Switching an endpoint moves the write assignment to a different node. |

Prerequisites

Before you begin, make sure you have:

A Multi-master Cluster (Limitless) Edition cluster. For more information, see Custom purchase and Purchase a subscription cluster

A privileged account. For more information, see Create a privileged account

A connection to the cluster. For more information, see Connect to a database cluster

PolarDB for MySQL 8.0

Typical workflow

The typical workflow for a new cluster is:

Create databases and assign each to an RW node.

*(Optional)* Switch a database endpoint to rebalance write load across nodes.

*(Optional)* Switch a database to data object isolation level for per-object node assignment.

Limitations

Cross-node queries

Each database or data object can be written from only one node. You cannot read or write data on a node that does not have the corresponding database or data object assigned to it. If a query involves databases or data objects on multiple RW nodes, the system returns an error. Move all involved databases or data objects to a single RW node before running such a query.

Endpoint types

Only cluster endpoints are supported. Primary endpoints are not supported.

Dependent objects

When objects reference each other, both must be on the same RW node:

A view and the tables it references must share the same node. If VIEW1 depends on table t1 but their endpoints differ, accessing VIEW1 returns an error.

Functions, stored procedures, and events fail if any objects they reference are on a different node.

A trigger and its associated table must be on the same node. If they differ, Data Manipulation Language (DML) operations on the table fail.

Foreign keys

If a foreign key constraint exists between two tables, changing the endpoint of one table automatically changes the endpoint of the other.

Create a database on a specific RW node

CREATE DATABASE name [POLARDB_WRITE_NODE master_id];Example: Create db1 on RW1.

CREATE DATABASE db1 POLARDB_WRITE_NODE 1;To create db1 on RW2 instead, replace 1 with 2.

POLARDB_WRITE_NODE, the cluster assigns the database to the node specified by the loose_innodb_mm_default_master_id parameter. When the parameter value is 0, the cluster picks a node at random—databases spread across nodes without a predictable placement, which works well for balanced load but not for scenarios where you need precise control over data distribution. To view or change this parameter, go to Configuration And Management > Parameter Settings in the PolarDB console and view and modify cluster or node parameters.Delete a database

DROP DATABASE name;You do not need to specify POLARDB_WRITE_NODE when deleting a database.

Example: Delete db1.

DROP DATABASE db1;Switch a database endpoint

Move a database's write assignment to a different RW node:

ALTER DATABASE name POLARDB_WRITE_NODE master_id;Example: Switch db1 to RW2.

ALTER DATABASE db1 POLARDB_WRITE_NODE 2;Switch from database isolation level to data object isolation level

By default, all objects in a database share the same RW node. To assign individual objects to different nodes, switch the database to data object isolation level:

ALTER DATABASE name TO TABLE_LOCK POLARDB_WRITE_NODE master_id;Example: Switch db1 to data object isolation level and set the initial endpoint to RW2.

ALTER DATABASE db1 TO TABLE_LOCK POLARDB_WRITE_NODE 2;Switch from data object isolation level to database isolation level

To consolidate all objects in a database back to a single node:

ALTER DATABASE name TO DB_LOCK POLARDB_WRITE_NODE master_id;Example: Switch db1 back to database isolation level and set the endpoint to RW1.

ALTER DATABASE db1 TO DB_LOCK POLARDB_WRITE_NODE 1;Switch a data object endpoint

After switching to data object isolation level, assign individual objects to specific nodes. Supported object types are TABLE, VIEW, TRIGGER, FUNCTION, PROCEDURE, and EVENT.

ALTER obj_type name POLARDB_WRITE_NODE master_id;Examples:

Switch table t1 in db1 to RW3:

ALTER TABLE db1.t1 POLARDB_WRITE_NODE 3;Switch view t2 in the current database to RW2:

ALTER VIEW t2 POLARDB_WRITE_NODE 2;Switch functions f1 and f2 in db2 to RW1:

ALTER FUNCTION db2.f1, db2.f2 POLARDB_WRITE_NODE 1;Route an SQL statement to a specific RW node

This applies only to non-data queries, such as queries against information_schema or status variables. For data queries like SELECT * FROM table1, the database proxy automatically routes to the correct node—no manual routing needed.

To lock a session to a specific RW node:

ALTER SESSION POLARDB_WRITE_NODE master_id;To release the lock:

RESET SESSION POLARDB_WRITE_NODE;Example: Query the value of innodb_buffer_pool_size on RW1.

ALTER SESSION POLARDB_WRITE_NODE 1; -- Route to RW1

SHOW VARIABLES LIKE 'innodb_buffer_pool_size'; -- Query on RW1Query the database distribution

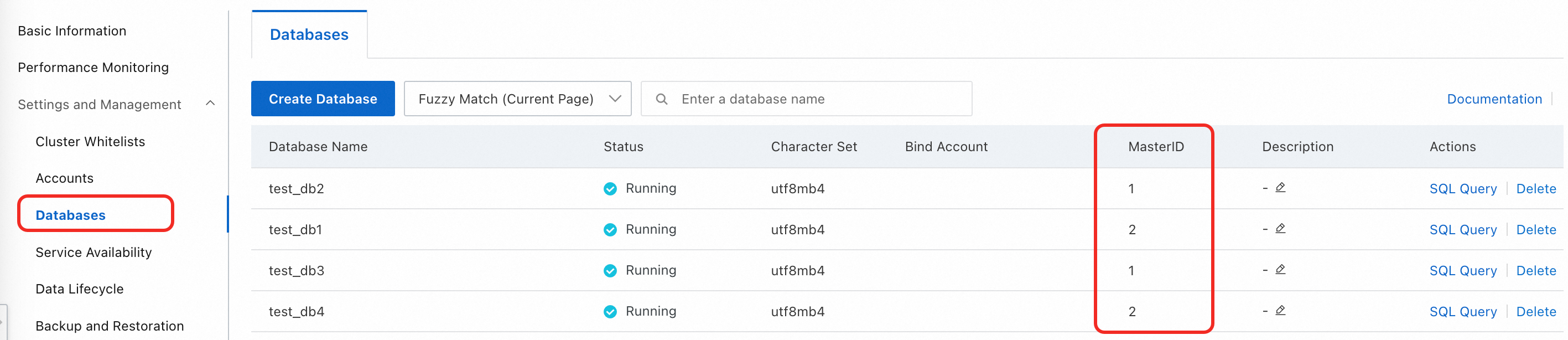

View distribution in the console

Go to Configuration And Management > Database Management in the PolarDB console to see all databases and their node assignments.

Query distribution on a specific node

ALTER SESSION POLARDB_WRITE_NODE master_id;

SELECT * FROM INFORMATION_SCHEMA.INNODB_MASTER_GLOBAL_LOCK_INFO WHERE LOCK_MODE = 'SLS_X';Example: Query the distribution on RW1.

ALTER SESSION POLARDB_WRITE_NODE 1;

SELECT * FROM INFORMATION_SCHEMA.INNODB_MASTER_GLOBAL_LOCK_INFO WHERE LOCK_MODE = 'SLS_X';Sample output:

+------------+---------------------+----------+--------------+-----------+----------+-------------+-------------+---------------------+-----------------+

| table_name | table_id | space_id | s_lock_count | lock_mode | object | current_lsn | hold_thread | hold_start_time | hold_total_time |

+------------+---------------------+----------+--------------+-----------+----------+-------------+-------------+---------------------+-----------------+

| test3/f1 | 9149389368458135753 | 0 | 0 | SLS_X | function | 28076635 | 17 | 2024-07-10 21:35:20 | 214 |

| test3/e1 | 9149389368458332874 | 0 | 0 | SLS_X | event | 28077248 | 17 | 2024-07-10 21:35:30 | 204 |

| test3/v1 | 9149389368457234649 | 0 | 0 | SLS_X | view | 28075972 | 17 | 2024-07-10 21:35:08 | 226 |

| sbtest | 2107518311328629409 | 0 | 0 | SLS_X | db | 28034927 | 4294967295 | 2024-07-07 23:04:41 | 254053 |

| test | 7190879906290573778 | 0 | 0 | SLS_X | db | 28034927 | 4294967295 | 2024-07-10 11:20:57 | 37077 |

| test2 | 3381728963524265351 | 0 | 0 | SLS_X | db | 28034927 | 4294967295 | 2024-07-10 11:13:09 | 37545 |

+------------+---------------------+----------+--------------+-----------+----------+-------------+-------------+---------------------+-----------------+

6 rows in set (0.00 sec)Each row represents a database or data object. In this example, sbtest, test, and test2 use database isolation level, while test3/f1 (function), test3/e1 (event), and test3/v1 (view) use data object isolation level. An entry named mysql/global_ddl_lock with object type Table may also appear—this is internal metadata and can be ignored.

Query distribution across all nodes

SELECT * FROM INFORMATION_SCHEMA.INNODB_CC_GLOBAL_LOCK_INFO WHERE LOCK_MODE = 'SLS_X';Sample output:

+-----------+------------+---------------------+-----------+-----------+-------------+---------------------+-----------------+

| master_id | table_name | table_id | lock_mode | object | current_lsn | hold_start_time | hold_total_time |

+-----------+------------+---------------------+-----------+-----------+-------------+---------------------+-----------------+

| 1 | test3/v1 | 9149389368457234649 | SLS_X | view | 28075972 | 2024-07-10 21:35:08 | 754 |

| 2 | test5/t1 | 9149389447232697561 | SLS_X | table | 7256175 | 2024-07-10 21:46:36 | 66 |

| 1 | test2 | 3381728963524265351 | SLS_X | db | 28034927 | 2024-07-10 11:13:09 | 38073 |

| 2 | test4 | 3381728963524272009 | SLS_X | db | 7255352 | 2024-07-10 21:46:27 | 75 |

| 1 | test3/f1 | 9149389368458135753 | SLS_X | function | 28076635 | 2024-07-10 21:35:20 | 742 |

| 1 | test3/e1 | 9149389368458332874 | SLS_X | event | 28077248 | 2024-07-10 21:35:30 | 732 |

| 1 | test | 7190879906290573778 | SLS_X | db | 28034927 | 2024-07-10 11:20:57 | 37605 |

| 2 | test5/p1 | 9149389447233473757 | SLS_X | procedure | 7257051 | 2024-07-10 21:46:45 | 57 |

| 1 | sbtest | 2107518311328629409 | SLS_X | db | 28034927 | 2024-07-07 23:04:41 | 254581 |

+-----------+------------+---------------------+-----------+-----------+-------------+---------------------+-----------------+

9 rows in set (0.00 sec)The master_id column shows which RW node each database or data object is assigned to. An entry named mysql/global_ddl_lock with object type Table may also appear—this is internal metadata and can be ignored.

Set up binary logging

Multi-master Cluster (Limitless) Edition is fully compatible with MySQL binary logging. It merges operation logs from all RW nodes into globally unified, logically ordered binary logs.

To enable binary logging, configure the

loose_polar_log_binparameter.To set the binary log retention period, configure

binlog_expire_logs_seconds.

For setup instructions, see Enable binary logging.