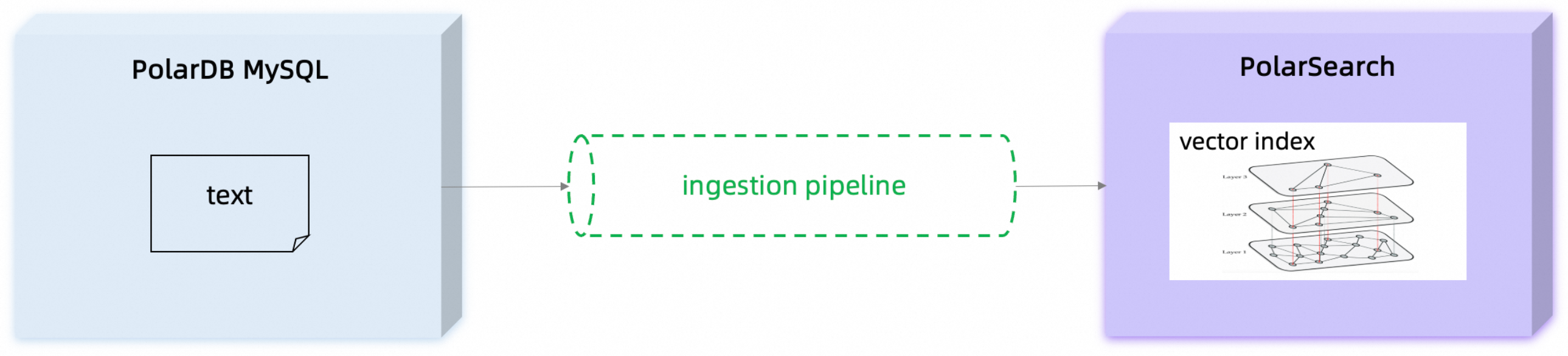

Building AI applications — semantic search, AI chat, recommendation systems — typically requires converting business data into vector embeddings and storing them in a vector index. Doing this manually means writing and maintaining custom extract, transform, load (ETL) pipelines that extract data, call an embedding model, and keep the index in sync with your database.

The AutoETL feature of PolarDB for MySQL eliminates this overhead. Combined with a PolarSearch ingestion pipeline, AutoETL automatically extracts data changes from your MySQL tables, calls an external embedding model to generate vectors, and writes both the raw text and the resulting embeddings to a PolarSearch index in real time.

This tutorial walks you through the full setup in four steps:

-

Set environment variables

-

Allow the embedding model endpoint in PolarSearch

-

Deploy an embedding model in PolarSearch

-

Create an ingestion pipeline, a PolarSearch index, and an AutoETL sync link

How it works

Data flows through three systems: PolarDB for MySQL, an embedding model service, and PolarSearch.

-

Data source: Original text data is stored in a PolarDB for MySQL table.

-

Trigger synchronization: Call the

dbms_etl.sync_by_mapstored procedure in PolarDB for MySQL to create and start an AutoETL synchronization task. -

Data writing: AutoETL captures data changes (inserts, updates, and deletes) and streams them to the target PolarSearch index in real time.

-

Pipeline processing: Before each record is written to the index, the predefined ingestion pipeline intercepts it.

-

Call the model: The

text_embeddingprocessor in the pipeline reads the specified text field and calls the external embedding model service to convert the text into a vector. -

Vector storage: The model's response — a float array — is written to the vector field of the index alongside the raw data, completing the vector index entry.

Prerequisites

Before you begin, ensure that you have:

-

PolarDB for MySQL cluster version:

-

MySQL 8.0.1: revision version 8.0.1.1.52 or later

-

MySQL 8.0.2: revision version 8.0.2.2.33 or later

-

-

Network: The embedding model service must be deployed in the same virtual private cloud (VPC) as your PolarDB for MySQL cluster. PolarSearch nodes act as clients that call the model service.

To access Large Language Models (LLMs) in Alibaba Cloud Model Studio from PolarSearch, you must first establish network connectivity between the PolarSearch cluster VPC and the Alibaba Cloud Model Studio VPC. Submit a ticket if you need this set up. If you use a self-hosted embedding model, make sure it is accessible from within the same VPC. If you encounter issues accessing a self-hosted model, submit a ticket for assistance.

Step 1: Set environment variables

Before running any commands, export the following variables. All subsequent curl examples use them directly, so you only need to set your credentials once.

| Variable | Description | Example value |

|---|---|---|

POLARSEARCH_HOST_PORT |

PolarSearch connection address and port | pc-xxx.polardbsearch.rds.aliyuncs.com:3001 |

USER_PASSWORD |

PolarSearch administrator account | polarsearch_user:your_password |

YOUR_API_KEY |

API key for Alibaba Cloud Model Studio | sk-xxxxxxxxxxxxxxxxxxxxxxxx |

If your self-hosted embedding model does not require credentials, skip YOUR_API_KEY.

Run the following in your terminal. Replace the example values with your actual values.

# PolarSearch endpoint

export POLARSEARCH_HOST_PORT="pc-xxx.polardbsearch.rds.aliyuncs.com:3001"

# PolarSearch administrator credentials

export USER_PASSWORD="polarsearch_user:your_password"

# Alibaba Cloud Model Studio API key

export YOUR_API_KEY="sk-xxxxxxxxxxxxxxxxxxxxxxxx"Step 2: Allow the embedding model endpoint

PolarSearch blocks outbound connections by default. Add the embedding model service URL to the trusted endpoints list so PolarSearch can call it.

Command line

curl -XPUT "http://${POLARSEARCH_HOST_PORT}/_cluster/settings" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"persistent": {

"plugins.ml_commons.trusted_connector_endpoints_regex": [

"^https://dashscope.aliyuncs.com/compatible-mode.*$"

]

}

}'Dashboard

PUT _cluster/settings

{

"persistent": {

"plugins.ml_commons.trusted_connector_endpoints_regex": [

"^https://dashscope.aliyuncs.com/compatible-mode.*$"

]

}

}Step 3: Deploy an embedding model

PolarSearch uses its ML Commons plugin to register and host connectors to external models. Complete the following sub-steps to connect to an embedding model, using Alibaba Cloud Model Studio's text-embedding-v4 model as an example.

Step 3a: Create a model group (optional)

A model group organizes related models. This step is optional but makes model management easier when you have multiple models.

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/_plugins/_ml/model_groups/_register" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"name": "test_model_group",

"description": "Model group for text embedding"

}'Dashboard

POST _plugins/_ml/model_groups/_register

{

"name": "test_model_group",

"description": "Model group for text embedding"

}Note the model_group_id in the response — you'll use it when registering a model.

{"model_group_id": "kVKdApwxxx", "status": "CREATED"}Step 3b: Create a connector

A connector defines how PolarSearch calls the external model API — the endpoint, authentication, and request/response format. The following example creates a connector to Alibaba Cloud Model Studio.

If your self-hosted model does not require credentials, omit the credential and headers.Authorization fields.

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/_plugins/_ml/connectors/_create" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"name": "qwen embedding connector",

"description": "The connector to qwen embedding model",

"version": 1,

"protocol": "http",

"parameters": {

"model": "text-embedding-v4",

"endpoint": "dashscope.aliyuncs.com/compatible-mode"

},

"credential": {

"api_key": "${YOUR_API_KEY}"

},

"actions": [

{

"action_type": "predict",

"method": "POST",

"headers": {

"Authorization": "Bearer ${credential.api_key}",

"content-type": "application/json"

},

"url": "https://${parameters.endpoint}/v1/embeddings",

"request_body": "{ \"model\": \"${parameters.model}\", \"input\": ${parameters.input} }",

"pre_process_function": "connector.pre_process.openai.embedding",

"post_process_function": "connector.post_process.openai.embedding"

}

]

}'Dashboard

Replace <YOUR_API_KEY> with your actual API key for Alibaba Cloud Model Studio.

POST _plugins/_ml/connectors/_create

{

"name": "qwen embedding connector",

"description": "The connector to qwen embedding model",

"version": 1,

"protocol": "http",

"parameters": {

"model": "text-embedding-v4",

"endpoint": "dashscope.aliyuncs.com/compatible-mode"

},

"credential": {

"api_key": "<YOUR_API_KEY>"

},

"actions": [

{

"action_type": "predict",

"method": "POST",

"headers": {

"Authorization": "Bearer ${credential.api_key}",

"content-type": "application/json"

},

"url": "https://${parameters.endpoint}/v1/embeddings",

"request_body": "{ \"model\": \"${parameters.model}\", \"input\": ${parameters.input} }",

"pre_process_function": "connector.pre_process.openai.embedding",

"post_process_function": "connector.post_process.openai.embedding"

}

]

}A successful response returns a connector_id. Record it for the next step.

{"connector_id": "GUCpu5sBy-xxx"}Step 3c: Register the model

Register the connector as a model so PolarSearch can reference it in pipelines and queries.

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/_plugins/_ml/models/_register" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"name": "qwen embedding model",

"function_name": "remote",

"description": "Embedding model",

"connector_id": "GUCpu5sBy-xxx"

}'Dashboard

POST _plugins/_ml/models/_register

{

"name": "qwen embedding model",

"function_name": "remote",

"description": "Embedding model",

"connector_id": "GUCpu5sBy-xxx"

}A successful response returns a model_id. Record it — you need it in the remaining steps.

{"task_id": "xxx_nIW", "status": "CREATED", "model_id": "J0C8u5sBy-xxx"}Step 3d: Deploy the model

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/_plugins/_ml/models/<model_id>/_deploy" \

--user "${USER_PASSWORD}"Dashboard

POST _plugins/_ml/models/<model_id>/_deployDeployment is complete when status shows COMPLETED.

{"task_id": "xxx", "task_type": "DEPLOY_MODEL", "status": "COMPLETED"}Step 3e: Test the model

Send a test request to confirm the model is reachable and returns embeddings.

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/_plugins/_ml/models/<model_id>/_predict" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"parameters": {

"input": ["hello world"]

}

}'Dashboard

POST /_plugins/_ml/_predict/text_embedding/<model_id>/_predict

{

"parameters": {

"input": ["hello world"]

}

}A successful response contains a float array with shape [1024], confirming the model returns 1024-dimensional embeddings.

{

"inference_results": [

{

"output": [

{

"name": "sentence_embedding",

"data_type": "FLOAT32",

"shape": [1024],

"data": [

0.019832463935017586,

-0.017113497480750084,

...

]

}

],

"status_code": 200

}

]

}Step 4: Set up the sync pipeline

Step 4a: Create an ingestion pipeline

The ingestion pipeline runs before data is written to a PolarSearch index. It intercepts each document, calls the embedding model on a specified text field, and writes the result to a vector field — all transparently.

Create a pipeline named text_to_vec_pipeline with a text_embedding processor:

-

model_id: the ID of the model you deployed above. -

field_map: maps the input text field (my_text) to the output vector field (my_vector).

Command line

curl -XPUT "http://${POLARSEARCH_HOST_PORT}/_ingest/pipeline/text_to_vec_pipeline" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"description": "A text embedding pipeline",

"processors": [

{

"text_embedding": {

"model_id": "<deployed embedding model ID>",

"field_map": {

"my_text": "my_vector"

}

}

}

]

}'Dashboard

PUT _ingest/pipeline/text_to_vec_pipeline

{

"description": "A text embedding pipeline",

"processors": [

{

"text_embedding": {

"model_id": "<deployed embedding model ID>",

"field_map": {

"my_text": "my_vector"

}

}

}

]

}Step 4b: Prepare test data

Log in to your PolarDB for MySQL cluster and create a test database, table, and sample rows.

CREATE DATABASE IF NOT EXISTS db;

CREATE TABLE IF NOT EXISTS db.test_table (

id INT PRIMARY KEY,

t1 INT,

t2 TEXT

);

INSERT INTO db.test_table(id, t1, t2) VALUES

(1, 11, 'aaa'),

(2, 22, 'bbb'),

(3, 33, 'ccc');Step 4c: Create a PolarSearch index

Create an index that stores both the raw text and the generated vector. Two settings are critical:

-

default_pipeline: set totext_to_vec_pipelineso every write to this index automatically triggers vectorization. -

my_vector: aknn_vectorfield with dimension1024, matching the model's output. The index uses FAISS with the HNSW algorithm for approximate nearest-neighbor search.

Command line

curl -XPUT "http://${POLARSEARCH_HOST_PORT}/test_index" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"settings": {

"index": {

"knn": true,

"default_pipeline": "text_to_vec_pipeline"

}

},

"mappings": {

"properties": {

"id": {

"type": "integer"

},

"my_text": {

"type": "text"

},

"my_vector": {

"type": "knn_vector",

"dimension": 1024,

"method": {

"engine": "faiss",

"name": "hnsw"

}

}

}

}

}'Dashboard

PUT /test_index

{

"settings": {

"index": {

"knn": true,

"default_pipeline": "text_to_vec_pipeline"

}

},

"mappings": {

"properties": {

"id": {

"type": "integer"

},

"my_text": {

"type": "text"

},

"my_vector": {

"type": "knn_vector",

"dimension": 1024,

"method": {

"engine": "faiss",

"name": "hnsw"

}

}

}

}

}Step 4d: Start an AutoETL sync link

Back in your PolarDB for MySQL cluster, call dbms_etl.sync_by_map to create and start the synchronization task.

call dbms_etl.sync_by_map(

"search",

"test_index.my_text(db.test_table.t2),test_index.id(db.test_table.id)",

"test_index.id=db.test_table.id",

"",

""

);This command maps db.test_table to test_index:

-

The

t2column (text content) is written tomy_textin PolarSearch. -

The

idcolumn is written toidin PolarSearch.

Because test_index has text_to_vec_pipeline set as its default pipeline, every write automatically triggers the text_embedding processor. The resulting vector is stored in my_vector.

From this point on, any insert, update, or delete in db.test_table is automatically reflected in test_index, with vectors generated on the fly.

Verify data synchronization

Query test_index to confirm that records have been written and that my_vector contains embedding data.

Command line

curl -XPOST "http://${POLARSEARCH_HOST_PORT}/test_index/_search" \

--user "${USER_PASSWORD}" \

-H 'Content-Type: application/json' \

-d '{

"query": {

"match": {

"my_text": "aaa"

}

}

}'Dashboard

POST /test_index/_search

{

"query": {

"match": {

"my_text": "aaa"

}

}

}A successful response includes the matched document with a populated my_vector field:

"hits": [

{

"_index": "test_index",

"_id": "1",

"_score": 0.44583148,

"_source": {

"my_text": "aaa",

"id": 1,

"my_vector": [

-0.013453668,

0.009771001,

-0.00977745,

...

]

}

}

]If my_vector is populated, the end-to-end pipeline is working: PolarDB for MySQL → AutoETL → PolarSearch ingestion pipeline → embedding model → vector index.

Limitations

Keep the following constraints in mind when using AutoETL with PolarSearch:

-

Version requirements: AutoETL sync to PolarSearch requires MySQL 8.0.1 revision 8.0.1.1.52 or later, or MySQL 8.0.2 revision 8.0.2.2.33 or later.

-

Network: The embedding model service must reside in the same VPC as your PolarDB for MySQL cluster. Cross-VPC access (for example, to Alibaba Cloud Model Studio) requires additional network connectivity — submit a ticket to set this up.