Train Transformer models to generate summaries and headlines from source documents.

Limitations

Runs only on DLC compute resources.

Model architecture

The model uses a standard Transformer encoder-decoder architecture. During training, it takes the source text as input and learns to generate the corresponding summary.

Prerequisites

Connect a Sentence Split component upstream to split text into one sentence per line.
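To illustrate the one-sentence-per-line layout this step produces, here is a minimal Python sketch. It is not the Sentence Split component itself; the punctuation rules and the function names `split_sentences` and `to_lines` are assumptions made for this example.

```python
import re
from typing import List

# Assumed rule for this sketch: split after Chinese or English sentence-ending
# punctuation. An English period only ends a sentence when whitespace follows,
# so decimals such as "3.5" stay intact.
_SENTENCE_END = re.compile(r"(?<=[。！？!?])\s*|(?<=\.)\s+")


def split_sentences(text: str) -> List[str]:
    """Split text into sentences, keeping the ending punctuation."""
    parts = _SENTENCE_END.split(text)
    return [p.strip() for p in parts if p.strip()]


def to_lines(text: str) -> str:
    """Return the text with one sentence per line."""
    return "\n".join(split_sentences(text))
```

A rule this simple mishandles abbreviations and quoted speech, so treat it only as a picture of the expected layout.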

Parameters

Configure component parameters in Designer.

- Input ports

  | Port (left to right) | Data type | Upstream component | Required |
  | --- | --- | --- | --- |
  | Training data | OSS | | Yes |
  | Validation data | OSS | | Yes |

- Component parameters

  | Tab | Parameter | Description |
  | --- | --- | --- |
  | Field Settings | Input data format | Column definitions for the input file. Default: target:str:1,source:str:1. |
  | Field Settings | Source column | Name of the column that contains the source text. Default: source. |
  | Field Settings | Summary Column Selection | Name of the column that contains the summary text. Default: target. |
  | Field Settings | Model save path | OSS directory where the trained model is saved. |
  | Parameter Settings | Pre-trained model | Pre-trained model to use for training. Default: alibaba-pai/mt5-title-generation-zh. |
  | Parameter Settings | Batch size | Number of samples processed per training batch. INT type. Default: 16. On multi-GPU servers, this is the batch size per GPU. |
  | Parameter Settings | Maximum text length | Maximum sequence length. INT type. Range: 1-512. Default: 512. |
  | Parameter Settings | Number of epochs | Number of training epochs. INT type. Default: 3. |
  | Parameter Settings | Learning rate | Learning rate. FLOAT type. Default: 3e-5. |
  | Parameter Settings | Steps to Save a Model File | Number of training steps between successive model evaluations and saves. Default: 150. |
  | Parameter Settings | Language | Language of the training text. Valid values: zh (Chinese), en (English). |
  | Parameter Settings | Copy text from source | Whether to copy text from the source to the output. Valid values: false (default, does not copy), true (copies text). |
  | Parameter Settings | Minimum decoder length | Minimum output length. INT type. Default: 12. |
  | Parameter Settings | Maximum decoder length | Maximum output length. INT type. Default: 32. |
  | Parameter Settings | Minimum non-repeated n-gram | Minimum length of n-grams that cannot repeat in the output. INT type. Default: 2. For example, a value of 1 prevents any single word from repeating, as in "day day". |
  | Parameter Settings | Beam search size | Size of the search space for candidate outputs. INT type. Default: 5. Larger values slow down prediction. |
  | Parameter Settings | Number of returned candidates | Number of top-scoring candidates to return. INT type. Default: 5. |
  | Execution Tuning | GPU machine type | GPU instance type used for training. Default: gn5-c8g1.2xlarge. |

- Output port

  | Output port | Data type | Downstream component | Required |
  | --- | --- | --- | --- |
  | Output model | OSS path specified by the Model save path parameter on the Field Settings tab. The model is saved in SavedModel format. | | No |
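The Minimum non-repeated n-gram parameter can be read as a constraint on the generated token sequence. The sketch below assumes it behaves like the no-repeat n-gram rule commonly used in beam-search decoders: no n-gram of the configured length may appear twice in one output. The function name `has_repeated_ngram` and the whitespace tokenization are hypothetical. The component's actual implementation is not described here.

```python
from typing import Sequence


def has_repeated_ngram(tokens: Sequence[str], n: int) -> bool:
    """Return True if any n-gram of length n occurs more than once in tokens."""
    if n <= 0:
        raise ValueError("n must be a positive integer")
    seen = set()
    for i in range(len(tokens) - n + 1):
        gram = tuple(tokens[i:i + n])
        if gram in seen:
            return True
        seen.add(gram)
    return False
```

Under this reading, a value of 1 rejects "day day" because the single word repeats. The default value of 2 still allows "day after day" but rejects "the day after the day", where the pair "the day" occurs twice.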

Usage example

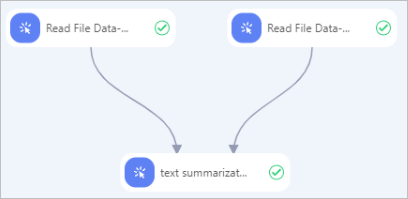

This workflow demonstrates how to use the Text Summarization Training component. To configure and run the workflow:

- Prepare a training dataset (cn_train.txt) and a validation dataset (cn_dev.txt), then upload them to OSS. The datasets in this example are TXT files with tab-separated fields.

  CSV files are also supported. You can use Tunnel commands in the MaxCompute client to upload datasets. For more information, see Connect using the client (odpscmd) and Tunnel commands.

- Use the Read OSS Data-1 and Read OSS Data-2 components to read the training and validation datasets. Set OSS Data Path to the location of each dataset.

- Connect the training and validation datasets to the Text Summarization Training-1 component, then configure its parameters as described in Parameters.

- Run the workflow. After the workflow completes, view the output model, which is saved to the OSS path specified by the Model save path parameter.
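As a rough illustration of the dataset preparation step, the following Python sketch writes summary and source pairs as tab-separated lines in the default column order (target first, then source). The helper names `to_tsv_line` and `write_dataset`, the whitespace cleanup, and the absence of a header row are assumptions for this example, not documented behavior of the component.

```python
from typing import Iterable, Tuple


def to_tsv_line(target: str, source: str) -> str:
    """Join one (summary, source text) pair with a tab, target first."""
    # Collapse tabs and newlines inside each field so that every record
    # stays on a single line with exactly one tab separator.
    clean_target = " ".join(target.split())
    clean_source = " ".join(source.split())
    return f"{clean_target}\t{clean_source}"


def write_dataset(path: str, pairs: Iterable[Tuple[str, str]]) -> int:
    """Write pairs to a UTF-8 text file, one record per line.

    Returns the number of records written.
    """
    count = 0
    with open(path, "w", encoding="utf-8") as f:
        for target, source in pairs:
            f.write(to_tsv_line(target, source) + "\n")
            count += 1
    return count
```

For example, calling `write_dataset("cn_train.txt", pairs)` with a list of (summary, source) tuples would produce a local file that can then be uploaded to OSS.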