The decoupled frontend/backend version of CosyVoice 2 offers higher performance than the WebUI version. The backend instance carries 80% of the compute workload and, with lossless acceleration technology, can serve the traffic of up to eight frontend instances. This architecture increases throughput and reduces latency by 25%. This topic describes how to deploy the decoupled frontend/backend version of CosyVoice 2 on Alibaba Cloud PAI-EAS.

Function overview

This solution deploys the high-concurrency version of CosyVoice 2 and offers the following benefits:

- Enterprise-level deployment: It uses a decoupled structure that separates Hift/Flow from Qwen2LM, so you can deploy the time-consuming Qwen2LM independently.

- High throughput: A high-performance inference engine increases throughput. A single Qwen2LM instance can support multiple Hift/Flow instances, which saves GPU usage and reduces costs.

- Low latency: Compared to the open-source implementation, the end-to-end latency for a single request is reduced by 25%.

If you only want to test the features, you can directly deploy the CosyVoice 2.0 WebUI version. For more information, see Quickly deploy a WebUI service.

Limitations

The WebUI is limited to a single frontend instance. To access the service from multiple frontend instances simultaneously, you must use an API call.

Deploy the service

Method 1: Scenario-based deployment (Recommended)

- On the Inference Service tab, click Deploy Service. In the Scenario-based Model Deployment section, click Deploy CosyVoice for AI Speech Generation.

- In the Basic Information section, configure the Service Name. Set Version selection to High performance. Then, configure the parameters for the backend and frontend instances.

Frontend instance

Parameter

Description

Environment Information

Deployment Version

Select an image based on the resource type. This topic uses cosyvoice-frontend:0.2.0-pytorch2.3.1-gpu-py310-cu128-ubuntu22.04.

Note: Image versions are updated frequently. The versions on the console page prevail. If multiple versions are available, select the latest one.

Storage Mount

To deploy multiple frontend instances, you must mount external storage. This is used to store uploaded audio files or fine-tuned model files. The following example uses a Standard NAS:

- Select a file system: Select an existing file system. If none is available, click Create NAS File System to create one.

- Mount Target: Select a mount target. If none is available, click Create a mount point to create one.

- File System Path: Enter a subpath in the file system, such as /.

- Mount Path: Specify the mount path within the service instance, such as /mnt/data/.

Note: If stability requirements are low, such as in a staging environment, you can skip mounting external storage to save costs.

Command to Run, Port

After you select an image, the system automatically configures the running command and port number. You do not need to modify them.

Note that --data_dir in the running command specifies the mount folder. It must be the same as the mount path in the storage mounting settings.

Resource Information

Resource Type

This solution uses Public Resources.

Number of Replicas

Configure based on the call method:

- WebUI call: Can only be set to 1.

- API call: A single backend instance supports up to eight frontend instances. If the number of backend instances is 1, the number of frontend instances can be from 1 to 8.

Deployment

You must select a GPU-accelerated instance type for the resource specification. The minimum GPU memory requirement is 16 GB. Examples include ecs.gn8is.4xlarge and ml.gu8is.c16m128.1-gu60.

Configure a system disk

A size of 100 GiB is recommended because the image file is large. This prevents service deployment failure due to insufficient storage. If you do not configure this, the EAS backend assigns 100 GiB of storage space for the CosyVoice2.0 scenario by default.

VPC configuration

If you configured a NAS file system, the system automatically configures the VPC. You only need to select a security group.

Backend instance

Parameter

Description

Environment Information

Deployment Version

Select an image based on the resource type. This solution uses cosyvoice-backend:0.2.0-pytorch2.3.1-gpu-py310-cu128-ubuntu22.04.

Note: Image versions are updated frequently. The versions on the console page prevail. If multiple versions are available, select the latest one.

Command to Run, Port

After you select an image, the system automatically configures the running command and port number. You do not need to modify them.

Resource Information

Resource Type

This solution uses Public Resources.

Number of Replicas

Configure as needed. One backend instance can handle up to eight frontend instances. This solution uses 1.

Deployment

You must select a GPU-accelerated instance type for the resource specification. The minimum GPU memory requirement is 16 GB. Examples include ecs.gn8is.4xlarge and ml.gu8is.c16m128.1-gu60.

Configure a system disk

A size of 100 GiB is recommended because the image file is large. This prevents service deployment failure due to insufficient storage. If you do not configure this, the EAS backend assigns 100 GiB of storage space for the CosyVoice2.0 scenario by default.

VPC configuration

Select a VPC, a vSwitch, and a security group. The frontend and backend instances must be in the same VPC. If no VPC is available, see Create and manage a VPC and Manage security groups.

- After you configure the parameters, click Deploy. The service is deployed when its Service Status changes to Running.
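The replica rules in the tables above (one backend instance for up to eight frontend instances, and a single frontend instance for WebUI access) can be checked before you deploy. The following is a minimal sketch, not part of PAI-EAS: the function names are hypothetical, and scaling the limit linearly with the number of backend instances is an assumption that this topic does not state explicitly.

```python
import math

# From this topic: one backend instance serves at most eight frontend instances.
MAX_FRONTENDS_PER_BACKEND = 8


def min_backend_replicas(frontend_replicas: int) -> int:
    """Return the smallest backend replica count for the given frontend count.

    Assumption: the eight-to-one limit scales linearly with backend replicas.
    """
    if frontend_replicas < 1:
        raise ValueError("at least one frontend instance is required")
    return math.ceil(frontend_replicas / MAX_FRONTENDS_PER_BACKEND)


def frontend_replicas_allowed(frontend_replicas: int,
                              backend_replicas: int,
                              webui: bool = False) -> bool:
    """Check a replica plan against the rules described in this topic."""
    if webui:
        # WebUI access is limited to a single frontend instance.
        return frontend_replicas == 1
    limit = backend_replicas * MAX_FRONTENDS_PER_BACKEND
    return 1 <= frontend_replicas <= limit
```

For example, `frontend_replicas_allowed(8, 1)` accepts the largest API-call configuration that this topic describes for a single backend instance, and `frontend_replicas_allowed(2, 1, webui=True)` rejects a WebUI deployment with two frontend instances.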

Method 2: Custom deployment

For a custom deployment, you must deploy the backend instance first, and then deploy the frontend instance.

Step 1: Deploy the CosyVoice-Backend instance

- Log on to the PAI console. Select a region at the top of the page. Then, select the desired workspace and click Elastic Algorithm Service (EAS).

- Click Deploy Service. In the Custom Model Deployment section, click Custom Deployment.

- On the Custom Deployment page, configure the following key parameters. After you configure the parameters, click Deploy.

  - Deployment Method: Select Image-based Deployment.

  - Image Configuration: In the Alibaba Cloud Image list, select .

    Note: Image versions are updated frequently. The versions available on the console are the most current. If multiple versions are listed, select the latest one.

  - Resource Type: This solution uses Public Resources.

  - Number of Replicas: Configure this parameter as needed. One backend instance can handle up to eight frontend instances. For this solution, set the value to 1.

  - Command to Run, Port Number: After you select an image, the system automatically populates the running command and port number. You do not need to change these settings.

  - Deployment: You must select a GPU-accelerated instance type. The minimum GPU memory requirement is 16 GB. Examples include ecs.gn8is.4xlarge and ml.gu8is.c16m128.1-gu60.

  - Configure a system disk: We recommend a size of 100 GiB because the image file is large. This prevents service deployment failures caused by insufficient storage. If you do not configure this parameter, the EAS backend assigns 100 GiB of storage space by default for the CosyVoice 2.0 scenario.

  - VPC configuration: Select a VPC, a vSwitch, and a security group. Ensure that the frontend instance you create later is in the same VPC. For more information, see Create and manage a VPC and Manage security groups.

- After the deployment is complete, which takes about 3 minutes, retrieve the VPC endpoint and token. These are required to connect the frontend instance.

  - Click the name of the target service. In the Basic Information section, click View Endpoint Information.

  - In the Invocation Information panel, retrieve the endpoint and token.

  Note: When connecting the frontend instance to the backend instance, do not use the public endpoint. This method is slow and incurs extra charges.
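The frontend instance later needs this VPC endpoint with /v1 appended (the --llm_base_url parameter in Step 2). The sketch below derives that value and rejects an endpoint that does not look like a VPC endpoint. The helper name is hypothetical, and the ".vpc." hostname check is a heuristic inferred from the example endpoint in this topic, not a documented rule.

```python
from urllib.parse import urlparse


def llm_base_url_from_vpc_endpoint(vpc_endpoint: str) -> str:
    """Build the value for --llm_base_url from the backend VPC endpoint."""
    host = urlparse(vpc_endpoint).hostname or ""
    # Heuristic (assumption): the example VPC endpoint in this topic
    # contains ".vpc." in its hostname, and a public endpoint would not.
    if ".vpc." not in host:
        raise ValueError(
            "expected a VPC endpoint; the public endpoint is slow and "
            "incurs extra charges"
        )
    # Append /v1 exactly once, regardless of a trailing slash.
    return vpc_endpoint.rstrip("/") + "/v1"
```

Copy the endpoint from the Invocation Information panel and pass it to the helper. The returned string is the value to use for --llm_base_url.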


Step 2: Deploy the CosyVoice-Frontend instance

- Log on to the PAI console. Select a region at the top of the page. Then, select the desired workspace and click Elastic Algorithm Service (EAS).

- Click Deploy Service. In the Custom Model Deployment section, click Custom Deployment.

- On the Custom Deployment page, configure the following key parameters. After you configure the parameters, click Deploy.

  - Deployment Method: Select Image-based Deployment and enable Enable Web App.

  - Image Configuration: In the Alibaba Cloud Image list, select .

    Note: Image versions are updated frequently. The versions available on the console are the most current. If multiple versions are listed, select the latest one.

  - Storage Mount: To deploy multiple frontend instances, you must mount external storage to store uploaded audio files or fine-tuned model files. This example uses a Standard NAS:

    - File System: Select an existing file system. If none is available, click Create NAS File System to create one.

    - Mount Target: Select a mount target. If none is available, click Create a mount point to create one.

    - File System Path: Enter a subpath in the file system, such as /.

    - Mount Path: Specify the mount path within the service instance, such as /mnt/data/.

    Note: For environments with low stability requirements, such as a staging environment, you can skip mounting external storage to save costs.


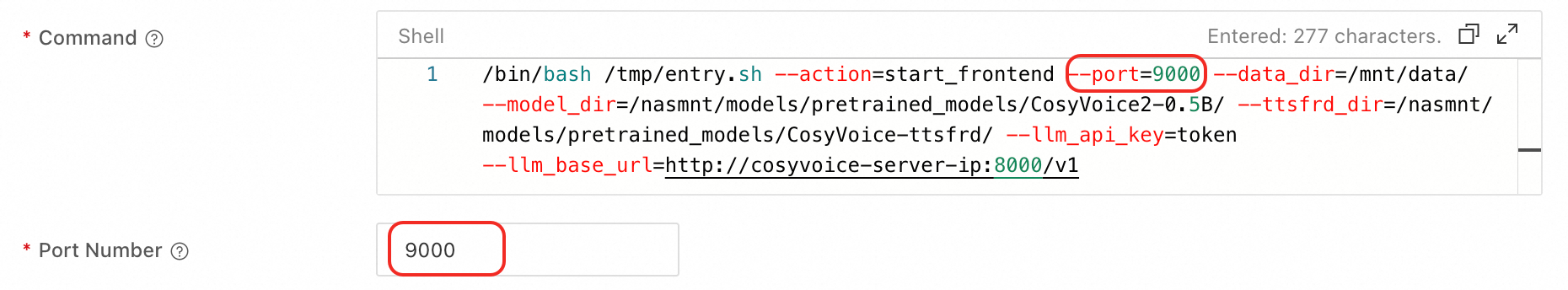

  - Command to Run: After you select an image, the system automatically populates the running command field with the following command:

    /bin/bash /tmp/entry.sh --action=start_frontend --port=9000 --data_dir=/mnt/data/ --model_dir=/nasmnt/models/pretrained_models/CosyVoice2-0.5B/ --ttsfrd_dir=/nasmnt/models/pretrained_models/CosyVoice-ttsfrd/ --llm_api_key=token --llm_base_url=http://cosyvoice-server-ip:8000/v1

    The parameters are as follows:

    - --workers: Specifies the number of workers for the frontend service. If this parameter is not specified, the system automatically allocates workers based on the selected resource specification. To access the WebUI page from a browser, you must set --workers to 1.

    - --port: The service port number. This must match the port number configured for the service.

    - --data_dir: The mount folder. This must match the mount path specified in the Storage Mounting settings.

    - --model_dir: The model loading path. If you use external storage for fine-tuned model files, adjust this path according to your mount path configuration.

    - --llm_api_key: Set this to the token of the CosyVoice-Backend service. For example, Yjk4YjdlNjM1YW*****GIxZDRmZmNhMjRjZmQwMz*****.

    - --llm_base_url: Set this to the VPC endpoint of the CosyVoice-Backend service and append /v1 to the end. For example, http://11577032709*****.vpc.cn-shanghai.pai-eas.aliyuncs.com/api/predict/cosyvoice_backend1/v1.

  - Resource Type: This solution uses Public Resources.

  - Deployment: You must select a GPU-accelerated instance type. The minimum GPU memory requirement is 16 GB. Examples include ecs.gn8is.4xlarge and ml.gu8is.c16m128.1-gu60.

  - Number of Replicas: Configure this parameter based on the call method:

    - For WebUI calls: Set this value to 1.

    - For API calls: A single backend instance supports up to eight frontend instances. If you have one backend instance, you can set the number of frontend instances to a value from 1 to 8.

  - Configure a system disk: We recommend a size of 100 GiB because the image file is large. This prevents service deployment failures caused by insufficient storage. If you do not configure this parameter, the EAS backend assigns 100 GiB of storage space by default for the CosyVoice 2.0 scenario.

  - VPC configuration: If you configured a NAS file system, the system automatically configures the VPC. You only need to select a security group. Ensure that the security group is in the same VPC as the backend instance.
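Because several frontend settings must agree with one another (--data_dir with the Mount Path, --port with the service port, --llm_base_url with the backend VPC endpoint plus /v1), it can help to assemble the running command from a single set of values. The following is a minimal sketch under that assumption. The function name is hypothetical, the default paths are copied from the command shown above, and the --workers=N form is assumed to follow the same --flag=value style as the other parameters.

```python
import shlex
from typing import Optional


def build_frontend_command(
    mount_path: str,
    port: int,
    backend_token: str,
    backend_vpc_endpoint: str,
    workers: Optional[int] = None,
    model_dir: str = "/nasmnt/models/pretrained_models/CosyVoice2-0.5B/",
    ttsfrd_dir: str = "/nasmnt/models/pretrained_models/CosyVoice-ttsfrd/",
) -> str:
    """Assemble the frontend running command from one set of values."""
    args = [
        "/bin/bash", "/tmp/entry.sh", "--action=start_frontend",
        f"--port={port}",
        # --data_dir must equal the Mount Path of the storage mount.
        f"--data_dir={mount_path}",
        f"--model_dir={model_dir}",
        f"--ttsfrd_dir={ttsfrd_dir}",
        f"--llm_api_key={backend_token}",
        # The backend VPC endpoint with /v1 appended exactly once.
        f"--llm_base_url={backend_vpc_endpoint.rstrip('/')}/v1",
    ]
    if workers is not None:
        # Set workers=1 when the WebUI must be reachable from a browser.
        args.append(f"--workers={workers}")
    # Quote each argument so unusual characters survive the shell.
    return " ".join(shlex.quote(arg) for arg in args)
```

Paste the resulting string into the Command to Run field. Passing the same `mount_path` value that you entered as the Mount Path keeps the two settings from drifting apart.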


Generate audio with the inference service

A scenario-based deployment aggregates the two services from a custom deployment and displays only one service on the Inference Service tab. Note the following:

- Custom deployment: Access the WebUI and make API calls through the Frontend service.

- Scenario-based deployment: The WebUI is not supported. Make API calls using the invocation information for the aggregated service.

Use the API

Make API calls using the invocation information for the Frontend service (for custom deployments) or the aggregated service (for scenario-based deployments). For more information, see API operations.
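As a rough illustration of how the invocation information is used, the sketch below builds (but does not send) an HTTP request from an endpoint and a token. It relies on assumptions that this topic does not confirm: that the token is passed in the Authorization header, that the request body is JSON, and that the operation path and payload come from the API operations reference. The function name, the `api_path` argument, and the payload are all placeholders.

```python
import json
from urllib.request import Request


def build_eas_request(endpoint: str, token: str,
                      api_path: str, payload: dict) -> Request:
    """Prepare a POST request for the Frontend or aggregated service.

    api_path and payload are placeholders; take the real values from
    the API operations reference.
    """
    url = endpoint.rstrip("/") + "/" + api_path.lstrip("/")
    body = json.dumps(payload).encode("utf-8")
    return Request(
        url,
        data=body,
        method="POST",
        headers={
            # Assumption: the service token goes in the Authorization header.
            "Authorization": token,
            "Content-Type": "application/json",
        },
    )
```

You would pass the returned object to `urllib.request.urlopen` once the path and payload match a documented operation.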

FAQ

Q: Why do I receive a 404 {"detail":"Not Found"} error when I call a CosyVoice API operation?

A 404 error usually indicates that the request URI is incorrect. If you deploy separate Frontend and Backend services, API calls must be sent to the Frontend service. This error occurs if you call the endpoint of the Backend service. Check the URI of your request to ensure it points to the Frontend service.
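One quick way to run this check is to compare the service name embedded in the request URL with the name of your Frontend service. The sketch below assumes the /api/predict/<service_name> path layout seen in the example endpoint in this topic. The function names and the service names in the usage note are hypothetical.

```python
from typing import Optional
from urllib.parse import urlparse


def service_name_from_endpoint(url: str) -> Optional[str]:
    """Extract <service_name> from a /api/predict/<service_name>/... URL."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    # Assumed layout, taken from the example endpoint in this topic.
    if len(parts) >= 3 and parts[0] == "api" and parts[1] == "predict":
        return parts[2]
    return None


def targets_frontend(url: str, frontend_service_name: str) -> bool:
    """Return True if the request URL points at the Frontend service."""
    return service_name_from_endpoint(url) == frontend_service_name
```

If the Frontend service is named cosyvoice_frontend1, a request URL whose path starts with /api/predict/cosyvoice_backend1 makes `targets_frontend` return False, which matches the 404 scenario described above.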