PyODPS is the Python SDK for MaxCompute. In DataWorks, you can create PyODPS nodes to write and run Python code against MaxCompute — with the MaxCompute entry point pre-configured, so no authentication setup is needed.

This topic covers the DataWorks-specific behaviors of PyODPS nodes: environment limits, key capabilities, and code examples.

Prerequisites

Before you begin, ensure that you have:

A DataWorks workspace with MaxCompute enabled

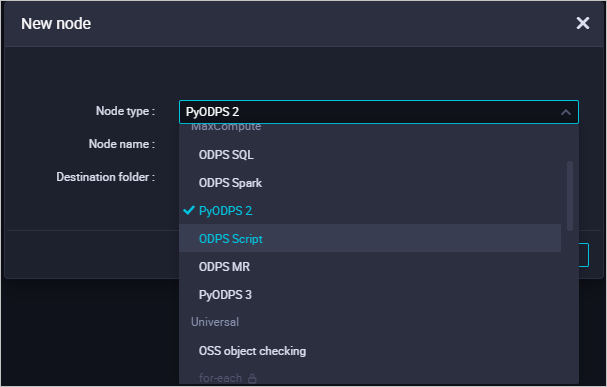

A PyODPS node created in DataStudio — either PyODPS 2 (Python 2) or PyODPS 3 (Python 3)

For instructions on creating a node, see Develop a PyODPS 2 task and Develop a PyODPS 3 task.

Limitations

Memory

If memory usage exceeds the node limit, the node reports Got killed and terminates. To avoid this, push data processing tasks to MaxCompute for distributed execution instead of downloading data to the DataWorks environment and processing it locally. For a comparison of the two approaches, see the "Precautions" section in Overview.

Data records

By default, options.tunnel.use_instance_tunnel is False in DataWorks PyODPS nodes. This means instance.open_reader uses the Result interface, which returns up to 10,000 data records and has limited support for complex data types. To read all records or handle complex types such as Arrays, enable InstanceTunnel. See Read SQL execution results for details.

Packages

Most common data science packages come pre-installed (see Pre-installed packages). The following restrictions apply:

atexit is not supported. Use try-finally instead.

matplotlib is not available, which affects the DataFrame plot function.

DataFrame UDFs run in a Python sandbox and can only use pure Python libraries and NumPy. Other third-party libraries such as pandas are not supported inside UDFs. For non-UDF operations, NumPy and pandas are available.

Third-party packages that contain binary code are not supported.
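Because atexit hooks are unavailable, cleanup has to be tied to the code path itself. The following is a minimal sketch of the try-finally pattern. The names run_with_cleanup, process, and release are hypothetical stand-ins for a node's real work and cleanup steps. They are not PyODPS functions.

```python
def run_with_cleanup(work, cleanup):
    """Run work() and always call cleanup(), even if work() raises."""
    try:
        return work()
    finally:
        cleanup()


# Hypothetical stand-ins for the node's real work and cleanup steps.
events = []


def process():
    events.append('processed')
    return 42


def release():
    events.append('released')


result = run_with_cleanup(process, release)
print(result, events)
```

The finally clause runs on both the success path and the error path, which is the guarantee an atexit hook would otherwise provide for the node's main routine.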

MaxCompute entry point

Each PyODPS node exposes odps and o as global variables — both refer to the MaxCompute entry point. DataWorks configures these automatically, so no authentication setup is needed in your code.

# Check whether the pyodps_iris table exists.
print(o.exist_table('pyodps_iris'))

The entry object o can access MaxCompute only. It cannot access other Alibaba Cloud services, and additional authentication cannot be obtained using methods such as o.from_global.

Execute SQL statements

Use execute_sql() or run_sql() to run DDL (data definition language) and DML (data manipulation language) statements.

o.execute_sql('select * from pyodps_iris')

For non-DDL/DML statements, use the appropriate method:

run_security_query: for GRANT and REVOKE statements

run_xflow or execute_xflow: for API operations

For full SQL documentation, see SQL.

Read SQL execution results

Because InstanceTunnel is not enabled by default in DataWorks, instance.open_reader is limited to 10,000 records and may not support complex data types. If your project does not have data protection enabled and you need to read all records or use complex types such as Arrays, enable InstanceTunnel.

Option 1: Enable globally

Applies to all subsequent open_reader calls in the node.

options.tunnel.use_instance_tunnel = True
options.tunnel.limit_instance_tunnel = False  # Remove the 10,000-record limit.

with instance.open_reader() as reader:
    # InstanceTunnel is active. Use reader.count to get the total number of records.
    pass

Option 2: Enable per reader

Applies only to the current open_reader call.

with instance.open_reader(tunnel=True, limit=False) as reader:
    # InstanceTunnel is active for this reader. All records are accessible.
    pass

For more information, see Obtain the execution results of SQL statements.
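Once the 10,000-record limit is lifted, a result set can be large, and the node memory limit described under Limitations still applies. One way to keep memory bounded is to consume the reader in fixed-size batches instead of collecting every record at once. The sketch below assumes only that the reader can be iterated with a for loop. The helper name iter_batches is hypothetical, and a range object stands in for the reader so the snippet runs anywhere.

```python
from itertools import islice


def iter_batches(records, batch_size):
    """Yield lists of at most batch_size items from any iterable."""
    iterator = iter(records)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


# Stand-in data. In a node, pass the reader returned by open_reader instead.
sample = range(7)
batches = list(iter_batches(sample, 3))
print(batches)
```

Each batch can be processed and discarded before the next one is read, so only batch_size records are held in memory at a time.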

DataFrame

To run DataFrame operations in DataWorks, explicitly call an immediate-execution method such as execute or persist. Without such a call, the operation is not triggered.

from odps.df import DataFrame

iris = DataFrame(o.get_table('pyodps_iris'))

# Use execute() to trigger the operation and iterate over results.
for record in iris[iris.sepalwidth < 3].execute():
    print(record)

By default, options.verbose is enabled in DataWorks, so execution details such as Logview URLs are printed automatically.

For more information, see DataFrame (not recommended).

Scheduling parameters

DataWorks injects scheduling parameters into PyODPS nodes differently from SQL nodes:

SQL nodes: ${param_name} is substituted directly into the SQL string.

PyODPS nodes: A global dictionary args is populated before the code runs. Read parameter values using args['param_name'], not ${param_name}.

This design avoids unintended string substitutions in Python code.

Example: On the Scheduling configuration tab of a PyODPS node, set ds=${yyyymmdd} in the Parameters field under Basic properties. Then read the value in code:

# Print the value of the ds scheduling parameter, for example ds=20161116.
print('ds=' + args['ds'])

To query data from the partition that ds points to:

o.get_table('table_name').get_partition('ds=' + args['ds'])

For more information, see Configure and use scheduling parameters.
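When a node reads several scheduling parameters, it can help to build the partition spec in one place and fail early if a parameter was not configured. The following sketch assumes that args behaves like an ordinary dictionary of strings. The helper partition_spec and the second parameter hh are hypothetical and shown only for illustration.

```python
def partition_spec(args, *keys):
    """Build a partition spec such as 'ds=20161116' from scheduling parameters."""
    missing = [key for key in keys if key not in args]
    if missing:
        raise KeyError('missing scheduling parameters: ' + ', '.join(missing))
    return ','.join('{}={}'.format(key, args[key]) for key in keys)


# Stand-in for the args dictionary that DataWorks injects into the node.
sample_args = {'ds': '20161116', 'hh': '08'}

print(partition_spec(sample_args, 'ds'))
print(partition_spec(sample_args, 'ds', 'hh'))
```

In a node, the returned string could be passed to get_partition in place of the hand-built 'ds=' + args['ds'] expression.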

Runtime hints

Use the hints parameter to pass runtime settings to execute_sql. The value must be a dict.

o.execute_sql('select * from pyodps_iris', hints={'odps.sql.mapper.split.size': 16})

To apply the same settings to all SQL executions in the node, configure options.sql.settings globally:

from odps import options

options.sql.settings = {'odps.sql.mapper.split.size': 16}
o.execute_sql('select * from pyodps_iris')  # The hints are applied automatically.

Third-party packages

Pre-installed packages

The following packages are pre-installed in DataWorks nodes:

| Package | Python 2 node | Python 3 node |
|---|---|---|
| requests | 2.11.1 | 2.26.0 |
| numpy | 1.16.6 | 1.18.1 |
| pandas | 0.24.2 | 1.0.5 |
| scipy | 0.19.0 | 1.3.0 |
| scikit_learn | 0.18.1 | 0.22.1 |
| pyarrow | 0.16.0 | 2.0.0 |
| lz4 | 2.1.4 | 3.1.10 |
| zstandard | 0.14.1 | 0.17.0 |
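To confirm which versions a particular node actually provides, you can query the packages at run time. The sketch below uses only the standard library. The helper installed_version is hypothetical, and it assumes each package exposes a __version__ attribute, which is a common convention but not guaranteed.

```python
import importlib


def installed_version(module_name):
    """Return the version string, 'unknown' if none is exposed, or None if the import fails."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return None
    return getattr(module, '__version__', 'unknown')


# Import names of the pre-installed packages. scikit_learn is imported as sklearn.
names = ['requests', 'numpy', 'pandas', 'scipy', 'sklearn', 'pyarrow', 'lz4', 'zstandard']
for name in names:
    print('{}: {}'.format(name, installed_version(name)))
```

A result of None for a package means it could not be imported in the current environment, which is a quick signal that it needs to be bundled as a custom package.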

Install a custom package

If the package you need is not pre-installed, use pyodps-pack to bundle it and load_resource_package to load it in the node.

Bundle the package. The following example bundles the ipaddress package:

pyodps-pack -o ipaddress-bundle.tar.gz ipaddress

For Python 2 nodes, add --dwpy27:

pyodps-pack --dwpy27 -o ipaddress-bundle.tar.gz ipaddress

To reduce bundle size, exclude packages that are already pre-installed in DataWorks:

pyodps-pack -o bundle.tar.gz --exclude numpy --exclude pandas <your-package>

The total size of downloaded packages cannot exceed 100 MB.

Upload and submit the .tar.gz file as a MaxCompute resource.

In the PyODPS node, load and import the package:

load_resource_package("ipaddress-bundle.tar.gz")
import ipaddress

For more information, see Generate a third-party package for PyODPS and Reference a third-party package in a PyODPS node.

Access MaxCompute with a different account

By default, the entry object o uses the credentials provided by DataWorks for the current workspace. To access a MaxCompute project using a different Alibaba Cloud account, use the as_account method to create a separate entry object.

as_account requires PyODPS 0.11.3 or later.

Procedure

Grant the new account the required permissions on the project. See Appendix: Grant permissions to another account.

In the PyODPS node, create an entry object for the new account:

import os

# Store credentials in environment variables rather than hardcoding them.
new_odps = o.as_account(
    os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),
    os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET')
)

Verify that the account switch succeeded by checking the current user:

print(new_odps.get_project().current_user)

If the output matches the AccessKey ID of the new account, the switch was successful.

Example

This example creates a table, queries it using a different account, and prints the results.

Create the pyodps_iris table and import sample data. For instructions, see Create tables and upload data.

CREATE TABLE IF NOT EXISTS pyodps_iris (
    sepallength DOUBLE COMMENT 'sepal length (cm)',
    sepalwidth DOUBLE COMMENT 'sepal width (cm)',
    petallength DOUBLE COMMENT 'petal length (cm)',
    petalwidth DOUBLE COMMENT 'petal width (cm)',
    name STRING COMMENT 'type'
);

Grant the new account permissions on the project and table. See Appendix: Grant permissions to another account.

Create a PyODPS 3 node and run the following code. For instructions, see Develop a PyODPS 3 task.

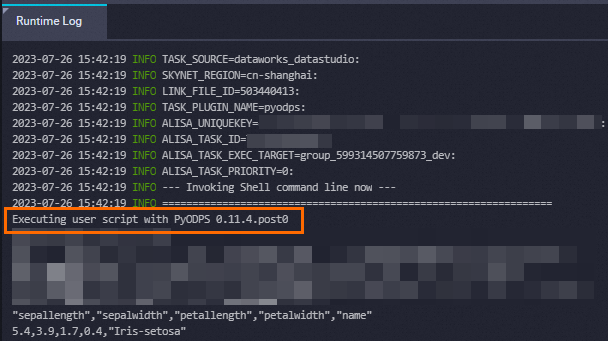

from odps import ODPS
import os

# The values below are placeholders for illustration. In practice, set these
# environment variables outside the code rather than hardcoding credentials.
os.environ['ALIBABA_CLOUD_ACCESS_KEY_ID'] = '<your-access-key-id>'
os.environ['ALIBABA_CLOUD_ACCESS_KEY_SECRET'] = '<your-access-key-secret>'

od = o.as_account(
    os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),
    os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET')
)

# Query rows where sepallength > 5.
with od.execute_sql('SELECT * FROM pyodps_iris WHERE sepallength > 5').open_reader() as reader:
    print(reader.raw)
    for record in reader:
        print(record["sepallength"], record["sepalwidth"], record["petallength"], record["petalwidth"], record["name"])

# Verify the current user.
print(od.get_project().current_user)

Run the node. The output looks similar to the following:

Executing user script with PyODPS 0.11.4.post0
"sepallength","sepalwidth","petallength","petalwidth","name"
5.4,3.9,1.7,0.4,"Iris-setosa"
...
<User 139xxxxxxxxxxxxx>

Diagnostics

If node execution hangs with no output, add the following comment to the top of your code. DataWorks prints the stack trace of all threads every 30 seconds.

# -*- dump_traceback: true -*-

This feature requires PyODPS 3 nodes running versions later than 0.11.4.1.

Check the PyODPS version

Run the following code in a PyODPS node to print the installed version:

import odps

print(odps.__version__)
# Example output: 0.11.2.3

The version is also shown in the node's runtime log.

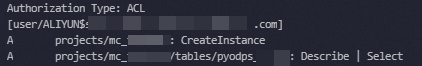
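Several features in this topic depend on a minimum PyODPS version: as_account requires 0.11.3 or later, and dump_traceback requires a version later than 0.11.4.1. The sketch below shows one way to compare a version string against such a threshold using only the standard library. The helpers version_tuple and meets_minimum are hypothetical, and the parsing ignores suffixes such as post0, which is a simplification rather than a full version-comparison scheme.

```python
import re


def version_tuple(version, width=4):
    """Convert '0.11.4.post0' to (0, 11, 4, 0) using the leading numeric components."""
    parts = []
    for piece in version.split('.'):
        match = re.match(r'\d+', piece)
        if match is None:
            break  # Stop at the first non-numeric component, such as 'post0'.
        parts.append(int(match.group()))
    parts = parts[:width]
    return tuple(parts + [0] * (width - len(parts)))


def meets_minimum(version, minimum):
    """Return True if version is at least minimum."""
    return version_tuple(version) >= version_tuple(minimum)


print(meets_minimum('0.11.4.post0', '0.11.3'))  # True
print(meets_minimum('0.11.2.3', '0.11.3'))      # False
```

In a node, the first argument would be odps.__version__ instead of a literal string.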

Appendix: Grant permissions to another account

To let a different Alibaba Cloud account access projects and tables in the current workspace, create an ODPS SQL node and run the following commands. For instructions on creating the node, see Create an ODPS SQL node. For more information about permissions, see Users and permissions.

-- Add the account to the project.

ADD USER ALIYUN$<account_name>;

-- Grant CreateInstance permission on the project.

GRANT CreateInstance ON PROJECT <project_name> TO USER ALIYUN$<account_name>;

-- Grant Describe and Select permissions on the table.

GRANT Describe, Select ON TABLE <table_name> TO USER ALIYUN$<account_name>;

-- Verify the permissions.

SHOW GRANTS FOR ALIYUN$<account_name>;
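If you grant access to several accounts or tables, filling in the placeholders by hand is error-prone. The sketch below only assembles the four statements shown above as strings. The helper grant_statements and the sample account, project, and table values are hypothetical, and the snippet does not execute anything against MaxCompute.

```python
def grant_statements(account_name, project_name, table_name):
    """Return the statements from this appendix with the placeholders filled in."""
    user = 'ALIYUN$' + account_name
    return [
        'ADD USER {};'.format(user),
        'GRANT CreateInstance ON PROJECT {} TO USER {};'.format(project_name, user),
        'GRANT Describe, Select ON TABLE {} TO USER {};'.format(table_name, user),
        'SHOW GRANTS FOR {};'.format(user),
    ]


# Hypothetical sample values for illustration only.
statements = grant_statements('someone@example.com', 'my_project', 'pyodps_iris')
for statement in statements:
    print(statement)
```

The printed statements can then be pasted into the ODPS SQL node described above.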

Appendix: Sample data

The examples in this topic use the pyodps_iris table. To create the table and import the iris dataset, follow Step 1 in Use a PyODPS node to query data based on specific criteria.

What's next

Overview of basic operations — complete PyODPS API reference

Overview of DataFrame — DataFrame operations in depth

Use a PyODPS node to segment Chinese text based on Jieba — end-to-end example

View operating history — view runtime logs and stop running tasks