PyODPS DataFrame lets you query and transform MaxCompute tables using a pandas-like API—no raw SQL required. This tutorial walks you through the full workflow: loading data, inspecting schemas, filtering and aggregating, joining tables, and applying user-defined functions (UDFs).

By the end of this tutorial, you will be able to:

-

Create a DataFrame object from a MaxCompute table

-

Inspect schemas and preview rows

-

Select, exclude, and compute columns

-

Group, count, and sort data

-

Join multiple DataFrames and persist the result as a new table

-

Apply row-wise UDFs with the

apply()method

This tutorial covers two environments:

-

IPython — interactive exploration using the MovieLens 100K dataset

-

DataWorks PyODPS node — batch processing using the Iris dataset

Prerequisites

Before you begin, ensure that you have:

-

An Alibaba Cloud account with MaxCompute enabled

-

A MaxCompute project with read and write access

-

Your AccessKey ID and AccessKey secret stored as environment variables:

-

ALIBABA_CLOUD_ACCESS_KEY_ID -

ALIBABA_CLOUD_ACCESS_KEY_SECRET

-

-

PyODPS installed (

pip install pyodps) -

(For Part 1) IPython installed (

pip install IPython) -

(For Part 2) A DataWorks workspace with PyODPS 3 node support

Part 1: Explore data in IPython

This part uses the MovieLens 100K dataset to demonstrate interactive DataFrame operations. The dataset includes three files:

-

u.user— user profiles (user ID, age, gender, occupation, zip code) -

u.item— movie metadata -

u.data— user ratings

Step 1: Set up tables

Create the three MaxCompute tables that will hold the dataset.

Users table

CREATE TABLE IF NOT EXISTS pyodps_ml_100k_users

(

user_id BIGINT COMMENT 'User ID',

age BIGINT COMMENT 'Age',

sex STRING COMMENT 'Gender',

occupation STRING COMMENT 'Occupation',

zip_code STRING COMMENT 'Zip code'

);Movies table

CREATE TABLE IF NOT EXISTS pyodps_ml_100k_movies

(

movie_id BIGINT COMMENT 'Movie ID',

title STRING COMMENT 'Movie title',

release_date STRING COMMENT 'Release date',

video_release_date STRING COMMENT 'Video release date',

IMDb_URL STRING COMMENT 'IMDb URL',

unknown TINYINT COMMENT 'Unknown',

Action TINYINT COMMENT 'Action',

Adventure TINYINT COMMENT 'Adventure',

Animation TINYINT COMMENT 'Animation',

Children TINYINT COMMENT 'Children',

Comedy TINYINT COMMENT 'Comedy',

Crime TINYINT COMMENT 'Crime',

Documentary TINYINT COMMENT 'Documentary',

Drama TINYINT COMMENT 'Drama',

Fantasy TINYINT COMMENT 'Fantasy',

FilmNoir TINYINT COMMENT 'Film noir',

Horror TINYINT COMMENT 'Horror',

Musical TINYINT COMMENT 'Music',

Mystery TINYINT COMMENT 'Mystery',

Romance TINYINT COMMENT 'Romance',

SciFi TINYINT COMMENT 'Science fiction',

Thriller TINYINT COMMENT 'Thriller',

War TINYINT COMMENT 'War',

Western TINYINT COMMENT 'West'

);Ratings table

CREATE TABLE IF NOT EXISTS pyodps_ml_100k_ratings

(

user_id BIGINT COMMENT 'User ID',

movie_id BIGINT COMMENT 'Movie ID',

rating BIGINT COMMENT 'Score',

timestamp BIGINT COMMENT 'Timestamp'

);Step 2: Upload data

Use the Tunnel upload command to load each file into its table. Replace path_to_file with the directory where you saved the dataset files.

Tunnel upload -fd | path_to_file/u.user pyodps_ml_100k_users;

Tunnel upload -fd | path_to_file/u.item pyodps_ml_100k_movies;

Tunnel upload -fd | path_to_file/u.data pyodps_ml_100k_ratings;For the full list of Tunnel commands, see Tunnel commands.

Step 3: Connect to MaxCompute

Start IPython and create a MaxCompute connection object. All DataFrame operations in this part use this object.

import os

from odps import ODPS

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='your-default-project',

endpoint='your-end-point',

)Replace your-default-project and your-end-point with your MaxCompute project name and endpoint.

Step 4: Create a DataFrame

Wrap a MaxCompute table as a DataFrame object.

from odps.df import DataFrame

users = DataFrame(o.get_table('pyodps_ml_100k_users'))Step 5: Inspect the schema

Use the dtypes property to see all columns and their data types.

print(users.dtypes)Output:

odps.Schema {

user_id int64

age int64

sex string

occupation string

zip_code string

}Step 6: Preview rows

Use head(N) to fetch the first N rows. This is the fastest way to verify that your data loaded correctly.

print(users.head(10))Output:

user_id age sex occupation zip_code

0 1 24 M technician 85711

1 2 53 F other 94043

2 3 23 M writer 32067

3 4 24 M technician 43537

4 5 33 F other 15213

5 6 42 M executive 98101

6 7 57 M administrator 91344

7 8 36 M administrator 05201

8 9 29 M student 01002

9 10 53 M lawyer 90703Step 7: Work with columns

Select specific columns

Pass a list of column names to retrieve only those columns.

print(users[['user_id', 'age']].head(5))Output:

user_id age

0 1 24

1 2 53

2 3 23

3 4 24

4 5 33Exclude columns

Use exclude() to drop columns you do not need.

print(users.exclude('zip_code', 'age').head(5))Output:

user_id sex occupation

0 1 M technician

1 2 F other

2 3 M writer

3 4 M technician

4 5 F otherAdd a computed column

Combine exclude() with select() to drop columns and add computed ones in a single step. The example below drops zip_code and sex, then adds a boolean sex_bool column derived from sex.

print(users.select(users.exclude('zip_code', 'sex'), sex_bool=users.sex == 'M').head(5))Output:

user_id age occupation sex_bool

0 1 24 technician True

1 2 53 other False

2 3 23 writer True

3 4 24 technician True

4 5 33 other FalseStep 8: Aggregate and sort

Count by group

Use groupby() followed by agg() to count records within each group.

print(users.groupby(users.sex).agg(count=users.count()))Output:

sex count

0 F 273

1 M 670Find the top 10 occupations

Chain groupby(), agg(), sort(), and head() to rank groups by count.

df = users.groupby('occupation').agg(count=users['occupation'].count())

df1 = df.sort(df['count'], ascending=False)

print(df1.head(10))Output:

occupation count

0 student 196

1 other 105

2 educator 95

3 administrator 79

4 engineer 67

5 programmer 66

6 librarian 51

7 writer 45

8 executive 32

9 scientist 31Alternatively, use value_counts() for a shorter syntax. The number of records returned is controlled by the options.df.odps.sort.limit parameter. For details, see Configurations.

df = users.occupation.value_counts()[:10]

print(df.head(10))Output:

occupation count

0 student 196

1 other 105

2 educator 95

3 administrator 79

4 engineer 67

5 programmer 66

6 librarian 51

7 writer 45

8 executive 32

9 scientist 31Step 9: Join tables and persist the result

Load the movies and ratings DataFrames, join all three tables, and save the result as a new MaxCompute table using persist().

movies = DataFrame(o.get_table('pyodps_ml_100k_movies'))

ratings = DataFrame(o.get_table('pyodps_ml_100k_ratings'))

o.delete_table('pyodps_ml_100k_lens', if_exists=True)

lens = movies.join(ratings).join(users).persist('pyodps_ml_100k_lens')

print(lens.dtypes)Output:

odps.Schema {

movie_id int64

title string

release_date string

ideo_release_date string

imdb_url string

unknown int64

action int64

adventure int64

animation int64

children int64

comedy int64

crime int64

documentary int64

drama int64

fantasy int64

filmnoir int64

horror int64

musical int64

mystery int64

romance int64

scifi int64

thriller int64

war int64

western int64

user_id int64

rating int64

timestamp int64

age int64

sex string

occupation string

zip_code string

}The joined table pyodps_ml_100k_lens contains 31 columns combining all fields from the three source tables.

Part 2: Process data in a DataWorks PyODPS node

This part uses the Iris dataset to demonstrate running DataFrame code in a DataWorks PyODPS 3 node, including row-wise UDFs. For background on PyODPS nodes, see Develop a PyODPS 3 task.

Step 1: Create the Iris table

Use the DataWorks table management feature to create the target table.

-

In the Business Flow section, click the desired workflow. Right-click MaxCompute and select Create Table. In the Create Table dialog box, select a path from the Path drop-down list, enter a name in the Name field, and click Create.

-

On the table editing page, click the

icon in the upper-left corner.

icon in the upper-left corner. -

Run the following statement to create the table:

CREATE TABLE pyodps_iris ( sepallength double COMMENT 'sepal length (cm)', sepalwidth double COMMENT 'sepal width (cm)', petallength double COMMENT 'petal length (cm)', petalwidth double COMMENT 'petal width (cm)', name string COMMENT 'name' );

Step 2: Upload the Iris dataset

-

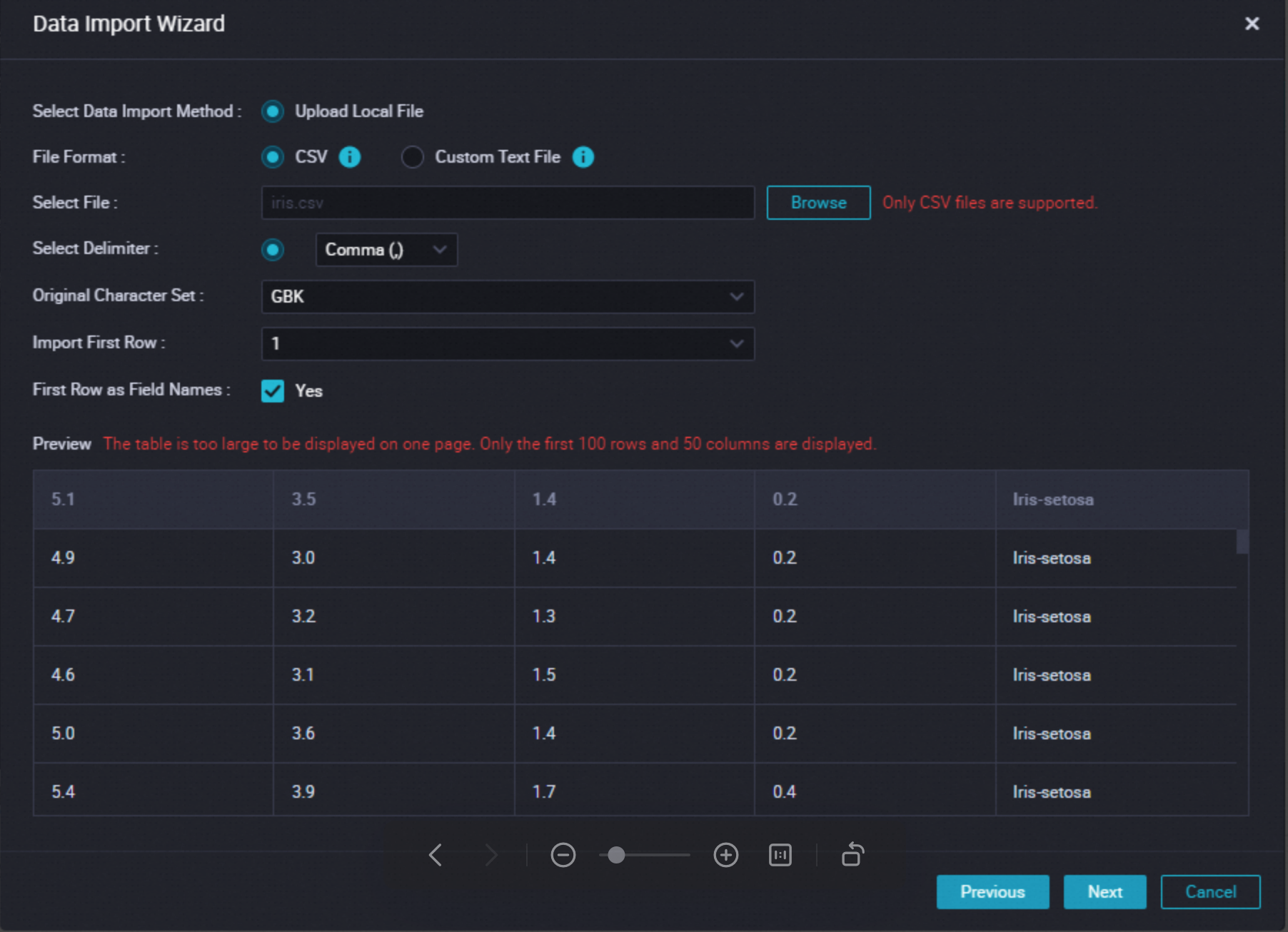

Right-click the table name and select Import Data. In the Data Import Wizard dialog box, click Next, then click Browse to upload the Iris CSV file you downloaded.

-

Select Match by location and click Import Data.

Step 3: Create a PyODPS node

In the Business Flow section, click the desired workflow, right-click MaxCompute, and then choose Create Node > PyODPS 3 to create a node for your code.

Step 4: Write and run the code

Paste the following code into the node editor and click the ![]() icon to run it. Results appear in the Runtime Log pane.

icon to run it. Results appear in the Runtime Log pane.

The code demonstrates three DataFrame operations on the Iris table: previewing rows, selecting a single column, and applying row-wise UDFs.

from odps import ODPS

from odps.df import DataFrame, output

import os

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='your-default-project',

endpoint='your-end-point',

)

# Create a DataFrame from the Iris table

iris = DataFrame(o.get_table('pyodps_iris'))

# Preview the first 10 rows

print(iris.head(10))

# Select a single column

print(iris.sepallength.head(5))

# Apply a lambda UDF to compute the sum of two columns

print(iris.apply(lambda row: row.sepallength + row.sepalwidth, axis=1, reduce=True, types='float').rename('sepaladd').head(3))

# Apply a named UDF that returns multiple output rows per input row

@output(['iris_add', 'iris_sub'], ['float', 'float'])

def handle(row):

# Each yield call produces one output row

yield row.sepallength - row.sepalwidth, row.sepallength + row.sepalwidth

yield row.petallength - row.petalwidth, row.petallength + row.petalwidth

print(iris.apply(handle, axis=1).head(5))Expected output:

# iris.head(10)

sepallength sepalwidth petallength petalwidth name

0 4.9 3.0 1.4 0.2 Iris-setosa

1 4.7 3.2 1.3 0.2 Iris-setosa

2 4.6 3.1 1.5 0.2 Iris-setosa

3 5.0 3.6 1.4 0.2 Iris-setosa

4 5.4 3.9 1.7 0.4 Iris-setosa

5 4.6 3.4 1.4 0.3 Iris-setosa

6 5.0 3.4 1.5 0.2 Iris-setosa

7 4.4 2.9 1.4 0.2 Iris-setosa

8 4.9 3.1 1.5 0.1 Iris-setosa

9 5.4 3.7 1.5 0.2 Iris-setosa

# iris.sepallength.head(5)

sepallength

0 4.9

1 4.7

2 4.6

3 5.0

4 5.4

# iris.apply(lambda ...).rename('sepaladd').head(3)

sepaladd

0 7.9

1 7.9

2 7.7

# iris.apply(handle, axis=1).head(5)

iris_add iris_sub

0 1.9 7.9

1 1.2 1.6

2 1.5 7.9

3 1.1 1.5

4 1.5 7.7How the UDF works:

-

axis=1tellsapply()to pass each row as the function argument. -

The

@outputdecorator specifies the output column names and types. -

yieldreturns multiple rows from a single input row. Eachyieldstatement inhandle()produces one output row—with twoyieldstatements per call, each input row produces two output rows.

What's next

-

Explore the full DataFrame API: filtering with boolean expressions, window functions, and more advanced aggregations

-

Learn how to write and register permanent UDFs in MaxCompute

-

See how to schedule PyODPS nodes as recurring DataWorks workflows