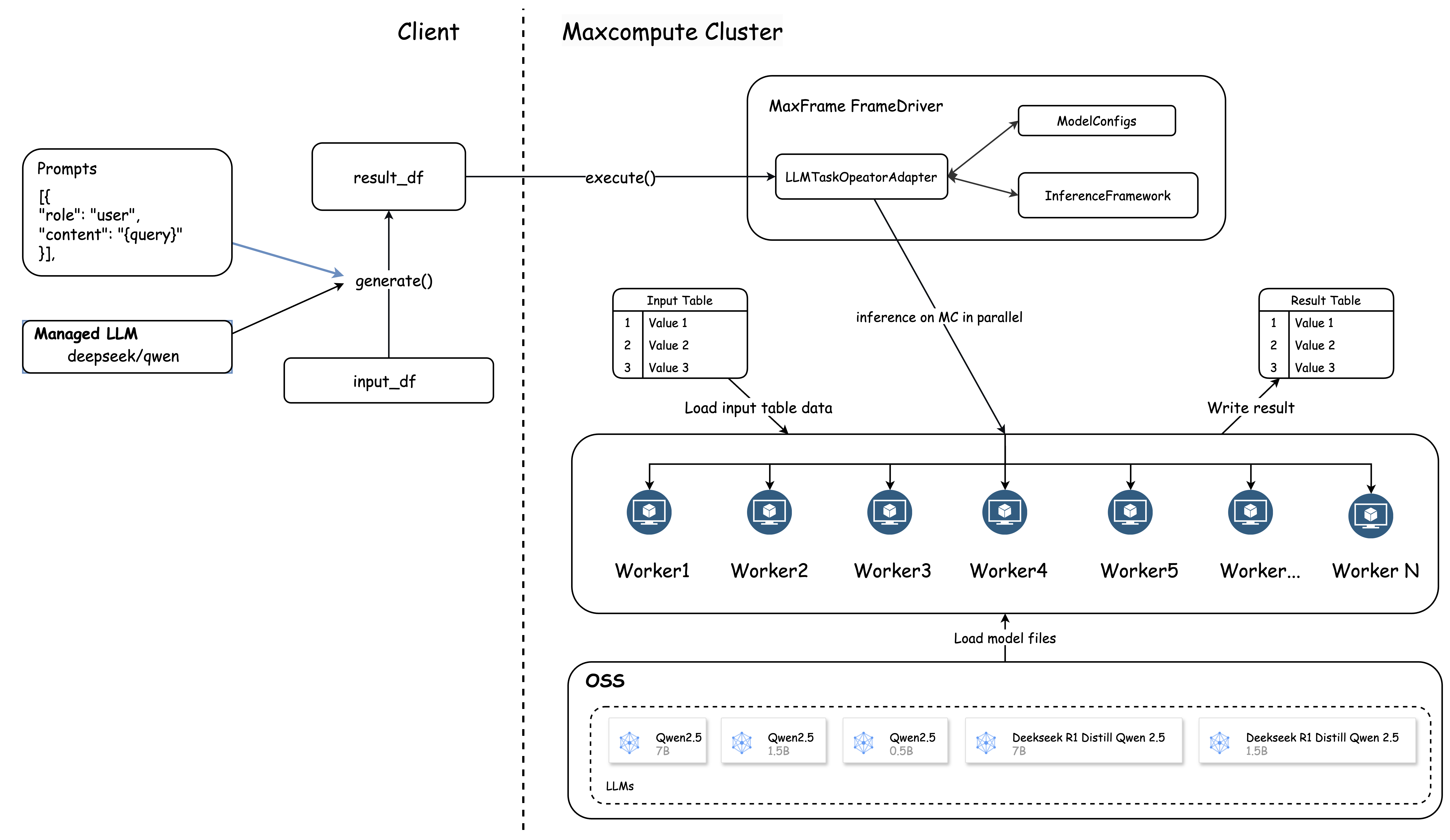

MaxFrame AI Function lets you run large language model (LLM) offline inference at scale directly within MaxCompute. It integrates data processing and AI inference on a single platform: read data from MaxCompute tables, run batch inference using pre-hosted models, and write results back — all using pandas-style Python APIs, with no model deployment required.

How it works

Each inference job follows the same pipeline:

1. Submit a DataFrame and a prompt template through the generate interface.

2. MaxFrame chunks the table data and sets concurrency based on data volume.

3. A worker group starts, and each worker renders the prompt using your input rows.

4. Inference results and a success status are written back to MaxCompute.
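The pipeline above can be pictured with a small plain-Python sketch. It is only an illustration of the chunk, render, infer, and record-status flow; `chunk_rows`, `run_pipeline`, and `fake_model` are hypothetical names, and MaxFrame's real scheduling and concurrency logic is internal and not shown here.

```python
from typing import Callable, Dict, List


def chunk_rows(rows: List[Dict[str, str]], chunk_size: int) -> List[List[Dict[str, str]]]:
    """Split the input rows into fixed-size chunks, one chunk per worker task."""
    return [rows[i:i + chunk_size] for i in range(0, len(rows), chunk_size)]


def run_pipeline(
    rows: List[Dict[str, str]],
    template: str,
    model: Callable[[str], str],
    chunk_size: int = 2,
) -> List[Dict[str, object]]:
    """Render a prompt per row, call the model, and record a success flag."""
    results: List[Dict[str, object]] = []
    for chunk in chunk_rows(rows, chunk_size):
        for row in chunk:
            try:
                prompt = template.format(**row)
                results.append({"response": model(prompt), "success": True})
            except Exception as exc:  # a failed row must not stop the batch
                results.append({"response": str(exc), "success": False})
    return results


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a hosted model; it only echoes the prompt."""
    return f"echo: {prompt}"


rows = [{"query": "a"}, {"query": "b"}, {"query": "c"}]
output = run_pipeline(rows, "Answer: {query}", fake_model)
print(output)
```

Recording a per-row status instead of raising keeps one malformed row from failing the whole batch, which matches the success field described in step 4.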

Prerequisites

Before you begin, make sure you have:

MaxFrame SDK V2.3.0 or later. Check your version:

# Windows

pip list | findstr maxframe

# Linux

pip list | grep maxframe

To upgrade:

pip install --upgrade maxframe

The latest MaxFrame client installed

Python 3.11
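If you prefer to check the SDK version from Python rather than from the shell, something like the following sketch could work. It uses only the standard library (`importlib.metadata`); the helper names `parse_version`, `meets_minimum`, and `installed_version` are made up for this example, and the comparison only looks at the first three numeric components of the version string.

```python
import re
from importlib import metadata
from typing import Optional, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Return the first three numeric components, e.g. '2.3.0rc1' -> (2, 3, 0)."""
    return tuple(int(part) for part in re.findall(r"\d+", version)[:3])


def meets_minimum(installed: str, minimum: str = "2.3.0") -> bool:
    """Compare versions numerically, so that 2.10.0 counts as newer than 2.3.0."""
    return parse_version(installed) >= parse_version(minimum)


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


found = installed_version("maxframe")
if found is None:
    print("maxframe is not installed")
else:
    print(found, "OK" if meets_minimum(found) else "upgrade required")
```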

Supported regions

China (Hangzhou), China (Shanghai), China (Beijing), China (Ulanqab), China (Shenzhen), China (Chengdu), China (Hong Kong), China (Hangzhou) Finance Cloud, China (Shenzhen) Finance Cloud, Singapore, and Indonesia (Jakarta).

Supported models

MaxFrame provides out-of-the-box support for Qwen 3, Qwen 2.5, and DeepSeek-R1-Distill-Qwen model series. All models are hosted offline within MaxCompute — no model downloads, distribution setup, or API concurrency limits to manage.

Start with a smaller model. If the output quality does not meet your needs, switch to a larger one. Larger models consume more compute resources and take longer to process.

Model catalog (continuously updated)

| Model series | Model name | Resource type |
|---|---|---|
| Qwen 3 series | Qwen3-0.6B, Qwen3-1.7B, Qwen3-4B, Qwen3-8B, Qwen3-14B | CU (Compute Unit) |
| Qwen 3 series | Qwen3-4B-Instruct-2507-FP8, Qwen3-30B-A3B-Instruct-2507-FP8 | GU (GPU Unit) |
| Qwen Embedding | Qwen3-Embedding-8B | CU (Compute Unit) |
| Qwen Embedding | Qwen3-Embedding-0.6B | GU (GPU Unit) |
| Qwen 2.5 text series | Qwen2.5-0.5B-instruct, Qwen2.5-1.5B-instruct, Qwen2.5-3B-instruct, Qwen2.5-7B-instruct | CU (Compute Unit) |
| DeepSeek-R1-Distill-Qwen | DeepSeek-R1-Distill-Qwen-1.5B, DeepSeek-R1-Distill-Qwen-7B, DeepSeek-R1-Distill-Qwen-14B | CU (Compute Unit) |
| DeepSeek-R1-0528-Qwen3 | DeepSeek-R1-0528-Qwen3-8B | CU (Compute Unit) |

Qwen 3 series: Inference-optimized. Supports multilingual translation, complex text generation, and code generation. Best for scenarios that require high-precision output.

Qwen Embedding models: Designed for vectorization tasks. Converts text to vectors efficiently. Best for semantic search and similarity matching.

DeepSeek-R1-Distill-Qwen series: Lightweight, compressed using knowledge distillation. Best for fast inference in resource-constrained environments.

Interfaces

MaxFrame AI Function provides two interfaces: generate for full control over inference logic, and task-specific shortcuts (translate, extract) for common scenarios without writing a prompt.

generate — general-purpose interface

Use generate when you need custom prompt templates and parameter control.

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

llm = ManagedTextLLM(name="<model_name>")

messages = [

{"role": "system", "content": "system_messages"},

{"role": "user", "content": "user_messages"},

]

result_df = llm.generate(<df>, prompt_template=messages)

print(result_df.execute())

Parameters:

| Parameter | Required | Description |
|---|---|---|
| model_name | Yes | Name of the model to use, passed as name to ManagedTextLLM |
| df | Yes | Input data as a DataFrame |
| prompt_template | Yes | List of messages in OpenAI Chat format. Use {column_name} placeholders (Python format-string style) in the content to reference DataFrame columns |
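To make the placeholder mechanism concrete, the sketch below fills a message list for a single row using Python's `str.format`. This is an assumption about how rendering behaves, not MaxFrame's actual implementation; `render_messages` is a hypothetical helper that runs locally without a session.

```python
from typing import Dict, List

Message = Dict[str, str]


def render_messages(template: List[Message], row: Dict[str, object]) -> List[Message]:
    """Fill {column_name} placeholders in each message with values from one row."""
    return [
        {"role": msg["role"], "content": msg["content"].format(**row)}
        for msg in template
    ]


messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Please answer the following question: {query}"},
]
row = {"query": "What is the boiling point of water?"}
rendered = render_messages(messages, row)
print(rendered[1]["content"])
```

Under `str.format`, a literal brace in the prompt has to be doubled (`{{` and `}}`). Whether MaxFrame applies the same escaping rule is not stated in this document, so test prompts that contain JSON examples on a small sample first.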

Task-specific interfaces — GU resources only

Task-specific interfaces cover common scenarios without prompt engineering: pass your data and the interface handles the rest. Currently supported: translate and extract.

Task-specific interfaces require GU (GPU Unit) compute resources.
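Because of the GU requirement, it can help to check a model name against the catalog before calling translate or extract. The sketch below hard-codes the text-model rows of the catalog table from this document; `MODEL_RESOURCES`, `resource_type`, and `supports_task_interfaces` are hypothetical local helpers, not part of the MaxFrame SDK, and the case-insensitive lookup is a convenience of this sketch only.

```python
from typing import Dict

# Resource types copied from the text-model rows of the catalog table above.
MODEL_RESOURCES: Dict[str, str] = {
    "Qwen3-0.6B": "CU",
    "Qwen3-1.7B": "CU",
    "Qwen3-4B": "CU",
    "Qwen3-8B": "CU",
    "Qwen3-14B": "CU",
    "Qwen3-4B-Instruct-2507-FP8": "GU",
    "Qwen3-30B-A3B-Instruct-2507-FP8": "GU",
    "Qwen2.5-0.5B-instruct": "CU",
    "Qwen2.5-1.5B-instruct": "CU",
    "Qwen2.5-3B-instruct": "CU",
    "Qwen2.5-7B-instruct": "CU",
    "DeepSeek-R1-Distill-Qwen-1.5B": "CU",
    "DeepSeek-R1-Distill-Qwen-7B": "CU",
    "DeepSeek-R1-Distill-Qwen-14B": "CU",
    "DeepSeek-R1-0528-Qwen3-8B": "CU",
}

# Lower-cased index so that "qwen3-14b" and "Qwen3-14B" resolve to the same entry.
_LOOKUP = {name.lower(): res for name, res in MODEL_RESOURCES.items()}


def resource_type(model_name: str) -> str:
    """Return 'CU' or 'GU' for a model in the local catalog copy."""
    try:
        return _LOOKUP[model_name.lower()]
    except KeyError:
        raise ValueError(f"{model_name!r} is not in the local catalog copy") from None


def supports_task_interfaces(model_name: str) -> bool:
    """Task-specific interfaces (translate, extract) need a GU-backed model."""
    return resource_type(model_name) == "GU"


print(supports_task_interfaces("Qwen3-4B-Instruct-2507-FP8"))
```

The catalog is described as continuously updated, so a hard-coded copy like this will drift; treat it as a pre-flight check, not a source of truth.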

`translate` example:

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

llm = ManagedTextLLM(name="<model_name>")

translated_df = llm.translate(

df["english_column"],

source_language="english",

target_language="Chinese",

examples=[("Hello", "你好"), ("Goodbye", "再见")],

)

translated_df.execute()

`extract` example:

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

from pydantic import BaseModel

from typing import List, Optional

llm = ManagedTextLLM(name="<model_name>")

class Record(BaseModel):

    field_a: str

    field_b: Optional[str] = None

result_df = llm.extract(

df["text_column"],

description="Extract structured data from the record. Return strict JSON matching the schema.",

schema=Record

)

result_df.execute()

Quick start

This example runs in local mode and answers five questions using qwen3-14b. It covers the complete workflow: create a session, build a DataFrame, define a prompt template, and run inference.

If you're new to MaxFrame, see Get started with MaxFrame first.

import os

from maxframe import new_session

from odps import ODPS

import pandas as pd

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

# Connect to MaxCompute

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='<maxcompute_project_name>',

endpoint='https://service.cn-hangzhou.maxcompute.aliyun.com/api',

)

# 1. Create a MaxFrame session

session = new_session(odps_entry=o)

# Display full column content in output

pd.set_option("display.max_colwidth", None)

pd.set_option("display.max_columns", None)

# 2. Build a DataFrame with five questions

query_list = [

"What is the average distance between Earth and the sun?",

"In what year did the American Revolutionary War begin?",

"What is the boiling point of water?",

"How can I quickly relieve a headache?",

"Who is the protagonist of the Harry Potter series?",

]

df = md.DataFrame({"query": query_list})

# 3. Initialize the model

llm = ManagedTextLLM(name="qwen3-14b")

# 4. Define a prompt template

# Use {column_name} in f-string format to reference DataFrame columns

messages = [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Please answer the following question: {query}"},

]

# 5. Run inference — one answer per row

result_df = llm.generate(df, prompt_template=messages)

# 6. Execute: MaxCompute shards and processes the DataFrame in parallel

print(result_df.execute())

Each row in the output contains a response_json field (the model's full Chat Completions response) and a success field indicating whether inference succeeded for that row.
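Once results are pulled back to the client, the answer text usually has to be dug out of the Chat Completions payload. The following is a minimal local sketch, assuming the response column holds a JSON string shaped like a standard Chat Completions response; `extract_answer` and the sample payload are hypothetical and written by hand for illustration.

```python
import json
from typing import Optional


def extract_answer(response_text: str, success: bool) -> Optional[str]:
    """Return the assistant message text, or None for failed or empty rows."""
    if not success:
        return None
    payload = json.loads(response_text)
    choices = payload.get("choices") or []
    if not choices:
        return None
    return choices[0].get("message", {}).get("content")


# Hand-written sample in Chat Completions shape, not real model output.
sample = json.dumps(
    {
        "object": "chat.completion",
        "choices": [
            {
                "index": 0,
                "message": {"role": "assistant", "content": "example answer"},
                "finish_reason": "stop",
            }
        ],
    }
)

print(extract_answer(sample, True))
```

Checking the success flag before parsing avoids JSON errors on rows where inference failed and the response column may hold an error message instead.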

Use cases

Translate contracts at scale

Scenario: A multinational corporation needs to translate 100,000 English contracts into Chinese and annotate key clauses. This example covers:

Create a MaxCompute source table with contract data

Define a translation prompt with a few-shot example

Run batch inference and write results back to MaxCompute

Step 1: Create the source table

CREATE TABLE IF NOT EXISTS raw_contracts (

id BIGINT,

en STRING

);

-- Insert sample data

INSERT INTO raw_contracts VALUES

(1, 'This Agreement is made and entered into as of the Effective Date by and between Party A and Party B.'),

(2, 'The Contractor shall perform the Services in accordance with the terms and conditions set forth herein.'),

(3, 'All payments shall be made in US Dollars within thirty (30) days of receipt of invoice.'),

(4, 'Either party may terminate this Agreement upon thirty (30) days written notice to the other party.'),

(5, 'Confidential Information shall not be disclosed to any third party without prior written consent.');

Step 2: Run inference

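# Assumes a MaxFrame session has already been created (see Quick start)

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM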

# 1. Initialize the Qwen3-1.7B model

llm = ManagedTextLLM(name="Qwen3-1.7B")

# 2. Load the source data

df = md.read_odps_table("raw_contracts")

# 3. Define the translation prompt with a few-shot example

messages = [

{

"role": "system",

"content": "You are a document translation expert who can fluently translate English text into Chinese.",

},

{

"role": "user",

"content": "Translate the following English text into Chinese. Output only the translated text.\n\nExample:\nInput: Hi\nOutput: 你好\n\nText to translate:\n\n{en}",

},

]

# 4. Run translation using the generate interface, referencing the `en` column

result_df = llm.generate(

df,

prompt_template=messages,

params={

"temperature": 0.7,

"top_p": 0.8,

},

).execute()

# 5. Write results back to MaxCompute

result_df.to_odps_table("raw_contracts_result").execute()

Extract structured data from medical records

Scenario: Medical consultation records contain unstructured text. This example covers:

Load records from a MaxCompute table

Define a structured schema using Pydantic

Run extraction using the extract interface

Write structured results back to MaxCompute

Step 1: Create the source table

CREATE TABLE IF NOT EXISTS traditional_chinese_medicine (

index BIGINT,

text STRING

);

-- Insert sample data

INSERT INTO traditional_chinese_medicine VALUES

(1, 'Patient Zhang, male, 45 years old. Chief complaint: recurrent cough for 2 weeks. Current symptoms: cough with abundant white and sticky phlegm, chest tightness, shortness of breath, poor appetite, and loose stools. Tongue: white and greasy coating. Pulse: slippery. Diagnosis: Phlegm-dampness obstructing the lungs. Treatment principle: Dry dampness, resolve phlegm, regulate qi, and stop coughing. Prescription: Modified Erchen Tang.'),

(2, 'Patient Li, female, 32 years old. Visited for "insomnia and excessive dreaming for 1 month." Accompanied by heart palpitations, forgetfulness, mental fatigue, and a sallow complexion. Tongue: pale with a thin white coating. Pulse: thin and weak. Diagnosis: Heart and spleen deficiency syndrome. Prescription: Modified Guipi Tang, one dose daily, decocted in water.'),

(3, 'Patient Wang, 68 years old. Chief complaint: lower back and knee soreness, frequent nocturia for half a year. Accompanied by aversion to cold, cold limbs, tinnitus like cicadas, and listlessness. Tongue: pale, swollen with teeth marks, and a white, slippery coating. Pulse: deep and thin. TCM diagnosis: Kidney yang deficiency syndrome. Treatment: Warm and supplement kidney yang. Prescription: Modified Jinkui Shenqi Wan.'),

(4, 'Patient Zhao, 5 years old. Fever for 3 days, highest temperature 39.5°C, slight aversion to wind and cold, nasal congestion with turbid discharge, red and swollen painful throat. Tongue: red tip with a thin yellow coating. Pulse: floating and rapid. Diagnosis: Wind-heat invading the lungs syndrome. Treatment principle: Dispel wind, clear heat, diffuse the lungs, and stop coughing. Prescription: Modified Yinqiao San.'),

(5, 'Patient Liu, male, 50 years old. Recurrent epigastric bloating and pain for 3 years, worsened for 1 week. Symptoms: epigastric fullness, frequent belching, symptoms worsen with emotional fluctuations, and irregular bowel movements. Tongue: red with a thin yellow coating. Pulse: wiry. Diagnosis: Liver-stomach disharmony syndrome. Treatment principle: Soothe the liver, harmonize the stomach, regulate qi, and relieve pain. Prescription: Modified Chaihu Shugan San combined with Zuojin Wan.');

Step 2: Run extraction

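# Assumes a MaxFrame session has already been created (see Quick start)

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM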

from pydantic import BaseModel

from typing import List, Optional

# 1. Initialize the model (GU resource required for task interfaces)

llm = ManagedTextLLM(name="Qwen3-4B-Instruct-2507-FP8")

# 2. Load the data and distribute across four partitions for parallel processing

df = md.read_odps_table("traditional_chinese_medicine", index_col="index")

df = df.mf.rebalance(num_partitions=4)

# 3. Define the target schema

class MedicalRecord(BaseModel):

    """Structured schema for a traditional Chinese medicine consultation record."""

    patient_name: Optional[str] = None  # Patient's name (e.g., "Zhang")

    age: Optional[int] = None  # Age

    gender: Optional[str] = None  # Gender ("Male"/"Female")

    chief_complaint: str  # Chief complaint

    symptoms: List[str]  # List of symptoms

    tongue: str  # Tongue diagnosis

    pulse: str  # Pulse diagnosis

    diagnosis: str  # TCM syndrome diagnosis

    treatment_principle: str  # Treatment principle

    prescription: str  # Prescription name

# 4. Extract structured data using the preset extract interface

result_df = llm.extract(

df["text"],

description="Extract structured data from the consultation record. Return strict JSON matching the schema.",

schema=MedicalRecord

)

result_df.execute()

# 5. Write results to MaxCompute

result_df.to_odps_table("result").execute()

Resource management and performance

Choose between CU and GU

MaxFrame supports two compute resource types:

| Resource | Type | Best for |
|---|---|---|
| CU (Compute Unit) | CPU | Small models (under 8B parameters), small-scale inference tasks |
| GU (GPU Unit) | GPU | Large models (8B parameters and above), higher throughput, task-specific interfaces |

For models 8B and larger, GU resources deliver significantly better performance than CU. Task-specific interfaces (translate, extract) require GU resources.

Configure the resource type on your session:

from maxframe.config import options

# Use CU compute resources

options.session.quota_name = "mf_cpu_quota"

# Use GU compute resources

options.session.gu_quota_name = "mf_gpu_quota"

Scale with parallel inference

For large-scale inference jobs, use rebalance to pre-distribute data across worker nodes before running inference. Each worker independently loads the model, avoiding cold start delays from sequential loading.

# Distribute data across 4 partitions before inference

parallel_partitions = 4

df = df.mf.rebalance(num_partitions=parallel_partitions)

After inference completes, results are written to MaxCompute by partition and are available for downstream data analytics.
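As a mental model for what rebalancing into `num_partitions` means, the sketch below splits a list of rows into near-equal contiguous parts in plain Python. It only illustrates the idea of even distribution; `split_evenly` is a hypothetical helper, and how MaxFrame actually assigns rows to partitions is not described in this document.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_evenly(rows: Sequence[T], num_partitions: int) -> List[List[T]]:
    """Split rows into num_partitions contiguous parts whose sizes differ by at most one."""
    if num_partitions < 1:
        raise ValueError("num_partitions must be at least 1")
    base, extra = divmod(len(rows), num_partitions)
    parts: List[List[T]] = []
    start = 0
    for i in range(num_partitions):
        # The first `extra` partitions take one additional row each.
        size = base + (1 if i < extra else 0)
        parts.append(list(rows[start:start + size]))
        start += size
    return parts


partitions = split_evenly(list(range(10)), 4)
print([len(p) for p in partitions])
```

With evenly sized partitions, no single worker becomes the straggler that determines total job time.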

Risk control classification

DeepSeek-R1-Distill-Qwen series models are reasoning models. They can handle text classification, sentiment analysis, text quality assessment, and similar tasks, and they can output a detailed chain of thought alongside the answer. These capabilities are an advantage for text classification, especially in risk control scenarios that involve complex semantics and contextual associations.

In the AI Function, you can use the DeepSeek-R1-0528-Qwen3-8B model to classify text for risk control.

Sample data

Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ

Customer Name: Mr. Wang

ID Card Number: 110000111111111111

Contact: 111000000000

Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing

**Asset Overview**

- China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00)

- Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00)

**Recent Large Transactions**

1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai.

2. 2088-15-35 Received payment of ¥4,000,000.00 from "Shenzhen XX Electronic Technology Co., Ltd." (Bank: Bank of China Shenzhen Branch).

**Approval Opinion**

Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension: 88888.

Sample code

import os

from maxframe import new_session

from odps import ODPS

import pandas as pd

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='MaxCompute_Project_Name',

endpoint='https://service.cn-hangzhou.maxcompute.aliyun.com/api',

)

session = new_session(odps_entry=o)

# Do not truncate column text to display the full content.

pd.set_option("display.max_colwidth", None)

# Display all columns to prevent middle columns from being omitted.

pd.set_option("display.max_columns", None)

query_list = [

"Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from 'Shenzhen XX Electronic Technology Co., Ltd.' (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888."

]

df = md.DataFrame({"query": query_list})

llm = ManagedTextLLM(name="DeepSeek-R1-0528-Qwen3-8B")

# DeepSeek models should not be provided with system prompts.

messages = [

{

"role": "user",

"content": """Act as a text risk control assessor. Evaluate the safety of the following online text and provide reasons. You must meet the following requirements:

1. **Violation types to identify** (from highest to lowest priority):

- ID card information

- Account information

- Phone number information

- Transaction amount information

- Name information

- Address information

- Number information

2. **Output format**:

Use the following pure JSON format. Ensure the output does not contain ``` or any other Markdown formatting symbols.

"reason": "Briefly explain the basis for the rating (within 100 words).",

"category": "Main violation type (or 'Safe' if none)."

The following is the text to evaluate:

{query}

""",

},

]

result_df = llm.generate(

df,

prompt_template=messages,

)

print(result_df.execute())

Compared to non-reasoning models, DeepSeek-R1-0528-Qwen3-8B performs extra reasoning steps during inference. Analysis tasks therefore take longer to run but produce higher-quality results. The following result is returned:

index | response | success |

0 | {"id": "chatcmpl-1744091383", "object": "chat.completion", "created": 1744091383, "model": "deepseek-r1-distill-qwen-14b", "choices": [{"index": 0, "message": {"role": "assistant", "content": "```json\n{\n \"reason\": \"The text contains sensitive information, including an ID card number, China Merchants Bank account, Ping An Securities account, phone number, address, and transaction amounts. There are multiple violations.\",\n \"category\": \"ID card information\"\n}\n```", "reasoning_content": "Okay, I need to evaluate the safety of this online text. First, I must carefully read the text to find possible violations.\n\nThe text starts with a customer credit rating investigation report, number RJ22222222-ZZ. This is a number, which falls under the 'Number information' violation type and needs to be recorded.\n\nNext, the customer's name is Mr. Wang, which is 'Name information'. Then there is an ID card number: 110000111111111111. This is clearly ID card information, a high-priority violation type.\n\nThe contact number is 111000000000, which is a phone number and falls under 'Phone number information'. The permanent address is a specific address in Chaoyang District, Beijing, which is 'Address information'.\n\nIn the Asset Overview section, the China Merchants Bank account is 6200 0000 0000 0000 with a balance of 2 million. The Ping An Securities account is A100000000 with a position market value of 1 million. This involves 'Transaction amount information'.\n\nIn the Recent Large Transactions section, there are two transactions: a transfer of 1.5 million and a receipt of a 4 million payment. These are large amounts, falling under 'Transaction amount information'.\n\nThe Approval Opinion section mentions a customer credit rating of AAA and a recommended credit limit. The handler's information includes employee ID ZX000000000, contact zhangkaixin@example.com, and extension 88888. 
The employee ID and contact information are 'Account information'.\n\nOverall, the text contains ID card information, account information, phone number information, transaction amount information, name information, and address information. Among these, ID card information has the highest priority, so the main violation type is ID card information."}, "finish_reason": "stop"}], "usage": {"prompt_tokens": 0, "completion_tokens": 0, "total_tokens": 0}} | True |

In reasoning models, the reasoning process can provide an important basis for manual review. MaxFrame also supports extracting the chain-of-thought content, which is stored separately in reasoning_content and can be accessed using flatjson.

The following complete code merges the original text DataFrame with the inference result DataFrame and extracts the reasoning_content for output.

import os

from maxframe import new_session

from odps import ODPS

import pandas as pd

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

import numpy as np

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='MaxCompute_Project_Name',

endpoint='https://service.cn-hangzhou.maxcompute.aliyun.com/api',

)

session = new_session(odps_entry=o)

# Do not truncate column text to display the full content.

pd.set_option("display.max_colwidth", None)

# Display all columns to prevent middle columns from being omitted.

pd.set_option("display.max_columns", None)

query_list = [

"Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from 'Shenzhen XX Electronic Technology Co., Ltd.' (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888."

]

df = md.DataFrame({"query": query_list})

llm = ManagedTextLLM(name="DeepSeek-R1-Distill-Qwen-14B")

# DeepSeek models should not be provided with system prompts.

messages = [

{

"role": "user",

"content": """Act as a text risk control assessor. Evaluate the safety of the following online text and provide reasons. You must meet the following requirements:

1. **Violation types to identify** (from highest to lowest priority):

- ID card information

- Account information

- Phone number information

- Transaction amount information

- Name information

- Address information

- Number information

2. **Output format**:

Use the following pure JSON format. Ensure the output does not contain ``` or any other Markdown formatting symbols.

"reason": "Briefly explain the basis for the rating (within 100 words).",

"category": "Main violation type (or 'Safe' if none)."

The following is the text to evaluate:

{query}

""",

},

]

result_df = llm.generate(

df,

prompt_template=messages,

)

merged_df = md.merge(df, result_df, left_index=True, right_index=True)

# Extract the inference result.

merged_df["content"] = merged_df.response.mf.flatjson(

["$.choices[0].message.content"], dtype=np.dtype(np.str_)

)

# Extract the chain of thought.

merged_df["reasoning_content"] = merged_df.response.mf.flatjson(

["$.choices[0].message.reasoning_content"], dtype=np.dtype(np.str_)

)

# Output the result.

print(merged_df[["query", "content", "reasoning_content"]].execute())

The following result is returned:

index | query | content | reasoning_content |

0 | Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from Shenzhen XX Electronic Technology Co., Ltd. (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888. | ```json\n{\n "reason": "The text contains sensitive information, including an ID card number, China Merchants Bank account, Ping An Securities account, phone number, address, and transaction amounts. There are multiple violations.",\n "category": "ID card information"\n}\n``` | Okay, I need to evaluate the safety of this online text. First, I must carefully read the text to find possible violations. The text starts with a customer credit rating investigation report, number RJ22222222-ZZ. This is a number, which falls under the 'Number information' violation type and needs to be recorded. Next, the customer's name is Mr. Wang, which is 'Name information'. Then there is an ID card number: 110000111111111111. This is clearly ID card information, a high-priority violation type. The contact number is 111000000000, which is a phone number and falls under 'Phone number information'. The permanent address is a specific address in Chaoyang District, Beijing, which is 'Address information'. 
In the Asset Overview section, the China Merchants Bank account is 6200 0000 0000 0000 with a balance of 2 million. The Ping An Securities account is A100000000 with a position market value of 1 million. This involves 'Transaction amount information'. In the Recent Large Transactions section, there are two transactions: a transfer of 1.5 million and a receipt of a 4 million payment. These are large amounts, falling under 'Transaction amount information'. The Approval Opinion section mentions a customer credit rating of AAA and a recommended credit limit. The handler's information includes employee ID ZX000000000, contact zhangkaixin@example.com, and extension 88888. The employee ID and contact information are 'Account information'. Overall, the text contains ID card information, account information, phone number information, transaction amount information, name information, and address information. Among these, ID card information has the highest priority, so the main violation type is ID card information. |

In this risk control scenario, DeepSeek-R1-0528-Qwen3-8B classifies the text and exposes the chain of thought behind its decision. That transparency gives reviewers a basis for auditing each decision, which helps meet compliance requirements and build trust. With MaxFrame AI Function, you can apply the same workflow to large volumes of text to identify potential risks quickly.
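Note that the content value in the result above is wrapped in a Markdown json fence even though the prompt asked for pure JSON. A downstream consumer may therefore want a tolerant parser. The sketch below is one way to do that locally with the standard library; `parse_model_json` is a hypothetical helper, and the sample string is hand-written to mirror the shape of the output shown above.

```python
import json
from typing import Any, Dict

# Built programmatically so this example does not contain a literal fence.
FENCE = "`" * 3


def parse_model_json(content: str) -> Dict[str, Any]:
    """Parse model output as JSON, tolerating a surrounding Markdown code fence."""
    text = content.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line, including an optional language tag.
        text = text.split("\n", 1)[1] if "\n" in text else ""
        # Drop the closing fence if present.
        if text.rstrip().endswith(FENCE):
            text = text.rstrip()[: -len(FENCE)]
    return json.loads(text)


wrapped = (
    FENCE
    + 'json\n{\n "reason": "Contains an ID card number.",\n'
    + ' "category": "ID card information"\n}\n'
    + FENCE
)
parsed = parse_model_json(wrapped)
print(parsed["category"])
```

The same function accepts unfenced JSON unchanged, so it can be applied to every row regardless of whether the model followed the formatting instruction.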

Risk control classification

Deepseek-R1-Distill-Qwen series models have deep inference capabilities. They can perform text classification, sentiment analysis, text quality assessment, and other tasks during the inference process. They can also output a detailed chain-of-thought and logical reasoning process. These capabilities provide a significant advantage for text classification tasks, especially in risk control scenarios that involve complex semantics and contextual associations.

In the AI Function, you can use the DeepSeek-R1-0528-Qwen3-8B model to classify text for risk control.

Sample data

Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ

Customer Name: Mr. Wang

ID Card Number: 110000111111111111

Contact: 111000000000

Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing

**Asset Overview**

- China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00)

- Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00)

**Recent Large Transactions**

1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai.

2. 2088-15-35 Received payment of ¥4,000,000.00 from "Shenzhen XX Electronic Technology Co., Ltd." (Bank: Bank of China Shenzhen Branch).

**Approval Opinion**

Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension: 88888.Sample code

import os

from maxframe import new_session

from odps import ODPS

import pandas as pd

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='MaxCompute_Project_Name',

endpoint='https://service.cn-hangzhou.maxcompute.aliyun.com/api',

)

session = new_session(odps_entry=o)

# Do not truncate column text to display the full content.

pd.set_option("display.max_colwidth", None)

# Display all columns to prevent middle columns from being omitted.

pd.set_option("display.max_columns", None)

query_list = [

"Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from 'Shenzhen XX Electronic Technology Co., Ltd.' (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888."

]

df = md.DataFrame({"query": query_list})

llm = ManagedTextLLM(name="DeepSeek-R1-0528-Qwen3-8B")

# DeepSeek models should not be provided with system prompts.

messages = [

{

"role": "user",

"content": """Act as a text risk control assessor. Evaluate the safety of the following online text and provide reasons. You must meet the following requirements:

1. **Violation types to identify** (from highest to lowest priority):

- ID card information

- Account information

- Phone number information

- Transaction amount information

- Name information

- Address information

- Number information

2. **Output format**:

Use the following pure JSON format. Ensure the output does not contain ``` or any other Markdown formatting symbols.

"reason": "Briefly explain the basis for the rating (within 100 words).",

"category": "Main violation type (or 'Safe' if none)."

The following is the text to evaluate:

{query}

""",

},

]

result_df = llm.generate(

df,

prompt_template=messages,

)

print(result_df.execute())

Unlike non-reasoning models, DeepSeek-R1-0528-Qwen3-8B generates an explicit chain of thought before it produces its final answer. Analysis jobs therefore take longer to run, but the results are typically of higher quality. The following result is returned:

| index | response | success |

|---|---|---|

| 0 | {"id": "chatcmpl-1744091383", "object": "chat.completion", "created": 1744091383, "model": "deepseek-r1-distill-qwen-14b", "choices": [{"index": 0, "message": {"role": "assistant", "content": "```json\n{\n \"reason\": \"The text contains sensitive information, including an ID card number, China Merchants Bank account, Ping An Securities account, phone number, address, and transaction amounts. There are multiple violations.\",\n \"category\": \"ID card information\"\n}\n```", "reasoning_content": "Okay, I need to evaluate the safety of this online text. First, I must carefully read the text to find possible violations.\n\nThe text starts with a customer credit rating investigation report, number RJ22222222-ZZ. This is a number, which falls under the 'Number information' violation type and needs to be recorded.\n\nNext, the customer's name is Mr. Wang, which is 'Name information'. Then there is an ID card number: 110000111111111111. This is clearly ID card information, a high-priority violation type.\n\nThe contact number is 111000000000, which is a phone number and falls under 'Phone number information'. The permanent address is a specific address in Chaoyang District, Beijing, which is 'Address information'.\n\nIn the Asset Overview section, the China Merchants Bank account is 6200 0000 0000 0000 with a balance of 2 million. The Ping An Securities account is A100000000 with a position market value of 1 million. This involves 'Transaction amount information'.\n\nIn the Recent Large Transactions section, there are two transactions: a transfer of 1.5 million and a receipt of a 4 million payment. These are large amounts, falling under 'Transaction amount information'.\n\nThe Approval Opinion section mentions a customer credit rating of AAA and a recommended credit limit. The handler's information includes employee ID ZX000000000, contact zhangkaixin@example.com, and extension 88888. The employee ID and contact information are 'Account information'.\n\nOverall, the text contains ID card information, account information, phone number information, transaction amount information, name information, and address information. Among these, ID card information has the highest priority, so the main violation type is ID card information."}, "finish_reason": "stop"}], "usage": {"prompt_tokens": 0, "completion_tokens": 0, "total_tokens": 0}} | True |

A reasoning model's chain of thought gives reviewers a concrete basis for manual review. The chain of thought is stored separately from the answer, in the reasoning_content field of the response, and you can extract it with flatjson.

The following complete code merges the original text DataFrame with the inference result DataFrame and extracts the reasoning_content for output.

import os

from maxframe import new_session

from odps import ODPS

import pandas as pd

import maxframe.dataframe as md

from maxframe.learn.contrib.llm.models.managed import ManagedTextLLM

import numpy as np

o = ODPS(

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_ID'),

os.getenv('ALIBABA_CLOUD_ACCESS_KEY_SECRET'),

project='MaxCompute_Project_Name',

endpoint='https://service.cn-hangzhou.maxcompute.aliyun.com/api',

)

session = new_session(odps_entry=o)

# Do not truncate column text to display the full content.

pd.set_option("display.max_colwidth", None)

# Display all columns to prevent middle columns from being omitted.

pd.set_option("display.max_columns", None)

query_list = [

"Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from 'Shenzhen XX Electronic Technology Co., Ltd.' (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888."

]

df = md.DataFrame({"query": query_list})

llm = ManagedTextLLM(name="DeepSeek-R1-Distill-Qwen-14B")

# DeepSeek models should not be provided with system prompts.

messages = [

{

"role": "user",

"content": """Act as a text risk control assessor. Evaluate the safety of the following online text and provide reasons. You must meet the following requirements:

1. **Violation types to identify** (from highest to lowest priority):

- ID card information

- Account information

- Phone number information

- Transaction amount information

- Name information

- Address information

- Number information

2. **Output format**:

Use the following pure JSON format. Ensure the output does not contain ``` or any other Markdown formatting symbols.

"reason": "Briefly explain the basis for the rating (within 100 words).",

"category": "Main violation type (or 'Safe' if none)."

The following is the text to evaluate:

{query}

""",

},

]

result_df = llm.generate(

df,

prompt_template=messages,

)

merged_df = md.merge(df, result_df, left_index=True, right_index=True)

# Extract the inference result.

merged_df["content"] = merged_df.response.mf.flatjson(

["$.choices[0].message.content"], dtype=np.dtype(np.str_)

)

# Extract the chain of thought.

merged_df["reasoning_content"] = merged_df.response.mf.flatjson(

["$.choices[0].message.reasoning_content"], dtype=np.dtype(np.str_)

)

# Output the result.

print(merged_df[["query", "content", "reasoning_content"]].execute())

The following result is returned:

| index | query | content | reasoning_content |

|---|---|---|---|

| 0 | Customer Credit Rating Investigation Report - Number: RJ22222222-ZZ Customer Name: Mr. Wang ID Card Number: 110000111111111111 Contact: 111000000000 Permanent Address: Room 10000, Building 10000, Chaoyang Community, Chaoyang Street, Chaoyang District, Beijing **Asset Overview** China Merchants Bank Account: 6200 0000 0000 0000 (Balance: ¥2,000,000.00) Ping An Securities Account: A100000000 (Position Market Value: ¥1,000,000.00) **Recent Large Transactions** 1. 2088-15-35 Transfer to Ms. Li (Account: 6200 0000 0000 0000) ¥1,500,000.00, Remarks: Down payment for house purchase - No. 10000, Lane 10000, Pudong New Road, Pudong New District, Shanghai. 2. 2088-15-35 Received payment of ¥4,000,000.00 from Shenzhen XX Electronic Technology Co., Ltd. (Bank: Bank of China Shenzhen Branch). **Approval Opinion** Customer credit rating AAA, recommended for maximum credit limit. Handled by: Ms. Zhang (Employee ID: ZX000000000), Contact: zhangkaixin@example.com / Extension 88888. | ```json\n{\n "reason": "The text contains sensitive information, including an ID card number, China Merchants Bank account, Ping An Securities account, phone number, address, and transaction amounts. There are multiple violations.",\n "category": "ID card information"\n}\n``` | Okay, I need to evaluate the safety of this online text. First, I must carefully read the text to find possible violations. The text starts with a customer credit rating investigation report, number RJ22222222-ZZ. This is a number, which falls under the 'Number information' violation type and needs to be recorded. Next, the customer's name is Mr. Wang, which is 'Name information'. Then there is an ID card number: 110000111111111111. This is clearly ID card information, a high-priority violation type. The contact number is 111000000000, which is a phone number and falls under 'Phone number information'. The permanent address is a specific address in Chaoyang District, Beijing, which is 'Address information'. In the Asset Overview section, the China Merchants Bank account is 6200 0000 0000 0000 with a balance of 2 million. The Ping An Securities account is A100000000 with a position market value of 1 million. This involves 'Transaction amount information'. In the Recent Large Transactions section, there are two transactions: a transfer of 1.5 million and a receipt of a 4 million payment. These are large amounts, falling under 'Transaction amount information'. The Approval Opinion section mentions a customer credit rating of AAA and a recommended credit limit. The handler's information includes employee ID ZX000000000, contact zhangkaixin@example.com, and extension 88888. The employee ID and contact information are 'Account information'. Overall, the text contains ID card information, account information, phone number information, transaction amount information, name information, and address information. Among these, ID card information has the highest priority, so the main violation type is ID card information. |

This risk control scenario shows DeepSeek-R1-0528-Qwen3-8B handling text classification and logical reasoning together. The model returns a classification and also exposes the chain of thought behind it, so reviewers can see how it reached its decision. That transparency helps meet compliance requirements and builds trust in the results. With MaxFrame AI Function, you can apply the same risk control checks to large volumes of text and identify potential threats quickly.
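In the sample output above, the model wraps its JSON answer in a Markdown json code fence even though the prompt asks for plain JSON. The following is a minimal sketch of how the extracted content could be cleaned up before parsing, using only the Python standard library. The helper name parse_verdict and the sample string are hypothetical, and the sketch assumes the content holds a single JSON object, optionally wrapped in one fence.

```python
import json
import re

# Matches an optional Markdown code fence (with or without a language tag)
# wrapped around the whole payload.
_FENCE = re.compile(r"^\s*```[a-zA-Z]*[ \t]*\n(.*?)\n?\s*```\s*$", re.DOTALL)


def parse_verdict(content: str) -> dict:
    """Strip a surrounding Markdown fence, if present, and parse the JSON verdict.

    Hypothetical helper: not part of MaxFrame.
    """
    match = _FENCE.match(content)
    body = match.group(1) if match else content
    return json.loads(body)


# Illustrative content string shaped like the sample output.
sample = (
    '```json\n{\n "reason": "Contains an ID card number.",\n'
    ' "category": "ID card information"\n}\n```'
)

verdict = parse_verdict(sample)
print(verdict["category"])  # prints: ID card information
```

If the content is not valid JSON after the fence is removed, json.loads raises json.JSONDecodeError, which you may want to catch and route to manual review.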

FAQ

How do I parse the model response?

Each output row contains a response field with the full Chat Completions JSON string, and a success boolean. To extract the generated text:

import json

result = result_df.execute()

for _, row in result.iterrows():

    # Skip rows where inference failed.

    if row["success"]:

        payload = json.loads(row["response"])

        text = payload["choices"][0]["message"]["content"]

        print(text)

What should I do when `success` is `False`?

A success=False row means inference failed for that row (for example, due to a prompt rendering error or model timeout). To reprocess failed rows:

result = result_df.execute()

failed_df = result[result["success"] == False]

# Inspect failed rows, fix the prompt or data, then rerun inference on failed_df
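Because prompt rendering errors are one cause of failed rows, a local check of the input data before resubmitting can help. The following is a minimal sketch in plain Python. It assumes the template uses {column} placeholders in the style of str.format, as {query} does in the examples. How MaxFrame renders templates internally is not stated here, and the helper names are hypothetical.

```python
from string import Formatter


def template_fields(template: str) -> set:
    """Return the placeholder names used in a str.format-style template."""
    return {name for _, name, _, _ in Formatter().parse(template) if name}


def find_unrenderable(rows: list, template: str) -> list:
    """Return positions of rows that lack a non-empty value for any placeholder.

    Hypothetical helper: rows is a list of dicts keyed by column name.
    """
    fields = template_fields(template)
    bad = []
    for pos, row in enumerate(rows):
        if any(row.get(field) in (None, "") for field in fields):
            bad.append(pos)
    return bad


template = "The following is the text to evaluate:\n{query}\n"
rows = [
    {"query": "Customer Credit Rating Investigation Report"},
    {"query": None},  # missing value
    {},               # missing column
]

print(template_fields(template))
print(find_unrenderable(rows, template))
```

Rows flagged by such a check can be fixed or dropped before you rerun inference on the failed subset.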

Does the output order match the input order?

Output rows correspond to input rows but may not be in the same order due to parallel processing. Use the original DataFrame index to match inputs to outputs.
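Within MaxFrame, the earlier example pairs inputs and outputs with md.merge(df, result_df, left_index=True, right_index=True). The same idea can be sketched in plain Python for results that you have already fetched locally. The function name align_by_index and the sample data are hypothetical, and the sketch assumes each output row carries the index of its input row.

```python
def align_by_index(inputs: dict, outputs: list) -> list:
    """Pair each input with its output via the shared index, in input order.

    Hypothetical helper: inputs maps index -> query text, and outputs is a
    list of dicts with "index" and "response" keys in arbitrary order.
    """
    by_index = {row["index"]: row for row in outputs}
    aligned = []
    for idx, query in inputs.items():
        out = by_index.get(idx)  # None if no output row came back for this index
        aligned.append((idx, query, out["response"] if out else None))
    return aligned


inputs = {0: "text A", 1: "text B", 2: "text C"}
outputs = [
    {"index": 2, "response": "resp C"},
    {"index": 0, "response": "resp A"},
    {"index": 1, "response": "resp B"},
]

for idx, query, response in align_by_index(inputs, outputs):
    print(idx, query, response)
```

Matching on the index rather than on row position keeps each response attached to the right input even when parallel workers finish out of order.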

Can I stop an inference job after it starts?

MaxFrame inference jobs run as MaxCompute tasks. You can cancel them through the MaxCompute console or by using the MaxCompute job management API.