MaxCompute does not provide Graph development plug-ins. Use Eclipse to develop, test, and deploy MaxCompute Graph programs. The typical workflow is:

- Develop a Graph program in Eclipse and run local debugging to validate basic logic.

- Submit the job to a cluster for integration testing.

Development example

This section uses the SSSP (Single Source Shortest Path) algorithm to walk through the full Eclipse development workflow.

- Create a Java project named `graph_examples`.

- Add the JAR package from the `lib` directory of the MaxCompute client to Java Build Path in your Eclipse project.

- Develop your MaxCompute Graph program. A common starting point is to copy an existing example such as SSSP and modify it. In this example, only the package path is changed to `package com.aliyun.odps.graph.example`.

- Compile and package the code. In Eclipse, right-click the `src` directory, then choose Export > Java > JAR file. Set the output path, for example: `D:\odps\clt\odps-graph-example-sssp.jar`.

- Run SSSP on the MaxCompute client. For details, see (Optional) Submit Graph jobs.

Local debugging

MaxCompute Graph supports local debugging with Eclipse breakpoint debugging.

Prerequisites

Before you begin, ensure that you have:

- Eclipse installed with the `graph_examples` project set up

- The Maven package `odps-graph-local` downloaded

Run local debugging

-

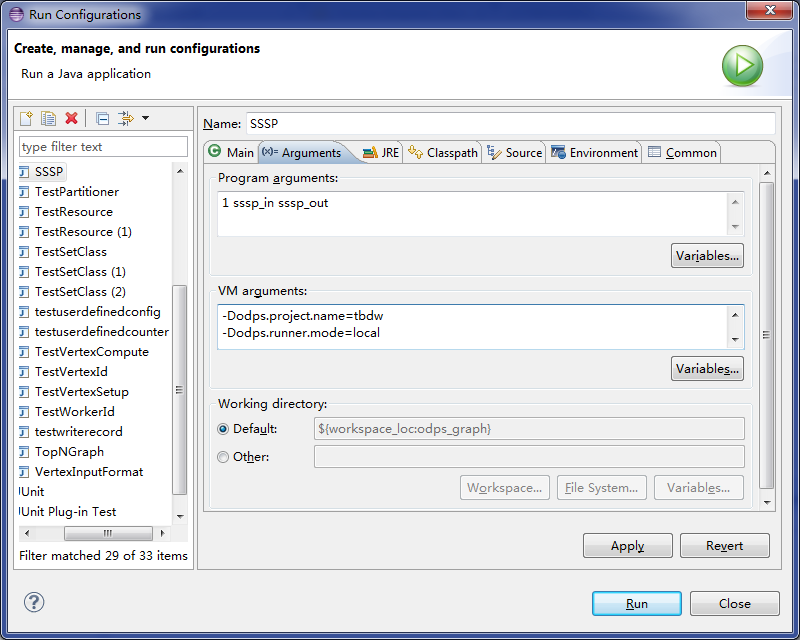

In Eclipse, right-click the main program file that contains the

mainfunction of the Graph job. -

Choose Run As > Run Configurations.

-

On the Arguments tab, set Program arguments to:

1 sssp_in sssp_out -

On the Arguments tab, set VM arguments to:

-Dodps.runner.mode=local -Dodps.project.name=<project.name> -Dodps.end.point=<end.point> -Dodps.access.id=<access.id> -Dodps.access.key=<access.key>

  The following table describes the VM arguments:

  | Parameter | Required | Description |
  |---|---|---|
  | `odps.runner.mode` | Yes | Must be set to `local`. Enables local debugging mode. |
  | `odps.project.name` | Yes | The MaxCompute project to use. |
  | `odps.end.point` | No | The MaxCompute endpoint. If not set, all data is read only from the local warehouse directory, and an exception is thrown if data is missing. If set, the SDK reads from the warehouse first and falls back to the remote MaxCompute server. |
  | `odps.access.id` | Conditional | The AccessKey ID for accessing MaxCompute. Required only when `odps.end.point` is set. |
  | `odps.access.key` | Conditional | The AccessKey secret for accessing MaxCompute. Required only when `odps.end.point` is set. |
  | `odps.cache.resources` | No | Resources to use. Has the same effect as `-resources` in the `jar` command. |
  | `odps.local.warehouse` | No | Local path to the warehouse directory. Default: `./warehouse`. |

  Configure these parameters based on your `conf/odps_config.ini` settings on the MaxCompute client.

- If `odps.end.point` is not set, create the `sssp_in` and `sssp_out` tables in the warehouse directory. Add the following data to `sssp_in`:

  `1,"2:2,3:1,4:4"`
  `2,"1:2,3:2,4:1"`
  `3,"1:1,2:2,5:1"`
  `4,"1:4,2:1,5:1"`
  `5,"3:1,4:1"`

  For details about the warehouse directory, see Local run.

- Click Run to start SSSP locally. The `sssp_in` and `sssp_out` tables must exist in the local warehouse directory before running. For details, see (Optional) Submit Graph jobs.

  The expected output is:

  `Counters: 3`
  `com.aliyun.odps.graph.local.COUNTER`
  `TASK_INPUT_BYTE=211`
  `TASK_INPUT_RECORD=5`
  `TASK_OUTPUT_BYTE=161`
  `TASK_OUTPUT_RECORD=5`
  `graph task finish`

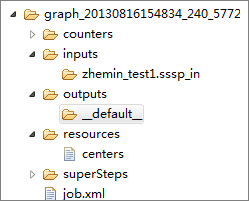
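To sanity-check the contents of `sssp_out` after a local run, the shortest distances for the sample data can be computed independently. The following is a minimal plain-Java sketch that uses Dijkstra's algorithm instead of the Graph SDK's vertex-centric model. The class name `SsspReference` and its methods are hypothetical. The sketch assumes that each record has the form `vertexId,"neighbor:weight,..."` and that the first program argument (`1`) is the source vertex.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.PriorityQueue;
import java.util.TreeMap;

// Hypothetical reference implementation; not part of the MaxCompute Graph SDK.
class SsspReference {

    // Parses records such as 1,"2:2,3:1,4:4" into vertex -> (neighbor -> weight).
    static Map<Long, Map<Long, Long>> parse(String[] records) {
        Map<Long, Map<Long, Long>> graph = new HashMap<>();
        for (String record : records) {
            int comma = record.indexOf(',');
            long vertex = Long.parseLong(record.substring(0, comma).trim());
            String edges = record.substring(comma + 1).trim().replace("\"", "");
            Map<Long, Long> neighbors = new HashMap<>();
            for (String edge : edges.split(",")) {
                if (edge.isEmpty()) {
                    continue; // vertex without outgoing edges
                }
                String[] parts = edge.split(":");
                neighbors.put(Long.parseLong(parts[0].trim()), Long.parseLong(parts[1].trim()));
            }
            graph.put(vertex, neighbors);
        }
        return graph;
    }

    // Dijkstra's algorithm; assumes non-negative edge weights.
    static Map<Long, Long> shortestPaths(Map<Long, Map<Long, Long>> graph, long source) {
        Map<Long, Long> dist = new TreeMap<>();
        // Queue entries are {vertex, tentativeDistance}, ordered by distance.
        PriorityQueue<long[]> queue = new PriorityQueue<>((a, b) -> Long.compare(a[1], b[1]));
        queue.add(new long[] {source, 0L});
        while (!queue.isEmpty()) {
            long[] head = queue.poll();
            long vertex = head[0];
            long distance = head[1];
            if (dist.containsKey(vertex)) {
                continue; // already settled with a shorter or equal distance
            }
            dist.put(vertex, distance);
            Map<Long, Long> neighbors = graph.getOrDefault(vertex, Collections.emptyMap());
            for (Map.Entry<Long, Long> edge : neighbors.entrySet()) {
                if (!dist.containsKey(edge.getKey())) {
                    queue.add(new long[] {edge.getKey(), distance + edge.getValue()});
                }
            }
        }
        return dist;
    }

    public static void main(String[] args) {
        String[] sample = {
            "1,\"2:2,3:1,4:4\"",
            "2,\"1:2,3:2,4:1\"",
            "3,\"1:1,2:2,5:1\"",
            "4,\"1:4,2:1,5:1\"",
            "5,\"3:1,4:1\""
        };
        // Prints {1=0, 2=2, 3=1, 4=3, 5=2}
        System.out.println(shortestPaths(parse(sample), 1L));
    }
}
```

If the job computes shortest paths from vertex 1, the distances written to `sssp_out` should agree with these values.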

Temporary directory structure

Each local debugging run creates a temporary directory inside the Eclipse project directory.

The temporary directory contains:

| Directory or file | Contents |
|---|---|
| `counters` | Counter information generated during the job run |
| `inputs` | Input data. Read from the local warehouse first; if not found and `odps.end.point` is set, read from the server. Reads 10 records by default (configurable up to 10,000 using `-Dodps.mapred.local.record.limit`). |
| `outputs` | Output data. Overwrites the matching table in the local warehouse after the job completes. |
| `resources` | Resources used by the job. Same read order as `inputs`. |
| `job.xml` | Job configuration |
| `superstep` | Persistent messages from each iteration |

To enable detailed logging during local debugging, create a `log4j.properties_odps_graph_cluster_debug` file in the `src` directory.

Cluster debugging

After local debugging, submit the job to a cluster for integration testing.

- Configure the MaxCompute client.

- Update the JAR package: `add jar /path/work.jar -f;`

- Run the `jar` command and check the operational log and command output.

For details about running a Graph job in a cluster, see (Optional) Submit Graph jobs.

Performance optimization

Configuration parameters

The following parameters control Graph job performance:

| Parameter | Unit | Range | Default | Description |
|---|---|---|---|---|
| `setSplitSize(long)` | MB | >0 | 64 | Split size of an input table |
| `setNumWorkers(int)` | N/A | 1–1000 | 1 | Number of workers for the job |
| `setWorkerCPU(int)` | N/A | 50–800 | 200 | CPU resources per worker (100 = 1 core) |
| `setWorkerMemory(int)` | MB | 256–12288 | 4096 | Memory per worker |
| `setMaxIteration(int)` | N/A | any | -1 | Maximum iterations. Values <=0 mean no iteration limit. |
| `setJobPriority(int)` | N/A | 0–9 | 9 | Job priority. Higher value = lower priority. |
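The ranges in the table can be checked on the client before a job is submitted. The sketch below is an illustration only: `GraphTuning` is a hypothetical holder class, not an SDK type. It mirrors the setter names, ranges, and defaults from the table so that out-of-range values fail fast. In a real program, the validated values would then be passed to the job configuration's setters of the same names.

```java
// Hypothetical stand-in that mirrors the parameter table; not an SDK class.
class GraphTuning {
    long splitSizeMb = 64;      // must be > 0
    int numWorkers = 1;         // 1 to 1000
    int workerCpu = 200;        // 50 to 800, where 100 = 1 core
    int workerMemoryMb = 4096;  // 256 to 12288
    int maxIteration = -1;      // values <= 0 mean no iteration limit
    int jobPriority = 9;        // 0 to 9, higher value = lower priority

    private static int checkRange(String name, int value, int min, int max) {
        if (value < min || value > max) {
            throw new IllegalArgumentException(
                name + " must be in [" + min + ", " + max + "], got " + value);
        }
        return value;
    }

    GraphTuning setSplitSize(long mb) {
        if (mb <= 0) {
            throw new IllegalArgumentException("split size must be > 0 MB, got " + mb);
        }
        splitSizeMb = mb;
        return this;
    }

    GraphTuning setNumWorkers(int n) {
        numWorkers = checkRange("numWorkers", n, 1, 1000);
        return this;
    }

    GraphTuning setWorkerCPU(int cpu) {
        workerCpu = checkRange("workerCPU", cpu, 50, 800);
        return this;
    }

    GraphTuning setWorkerMemory(int mb) {
        workerMemoryMb = checkRange("workerMemory", mb, 256, 12288);
        return this;
    }

    GraphTuning setMaxIteration(int n) {
        maxIteration = n; // any value is accepted
        return this;
    }

    GraphTuning setJobPriority(int p) {
        jobPriority = checkRange("jobPriority", p, 0, 9);
        return this;
    }

    public static void main(String[] args) {
        GraphTuning tuning = new GraphTuning().setNumWorkers(16).setWorkerCPU(400).setSplitSize(32);
        System.out.println(tuning.numWorkers + " workers, " + tuning.workerCpu
            + " CPU units, " + tuning.splitSizeMb + " MB splits");
    }
}
```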

Speed up data loading

Symptom: Data loading is slow when the number of workers and the number of splits are mismatched.

Mechanism: The number of splits is calculated as splitNum = inputSize / splitSize. The relationship between workerNum and splitNum determines how load is distributed:

| Condition | Result |
|---|---|
| `splitNum = workerNum` | Each worker loads exactly one split, which is optimal. |
| `splitNum > workerNum` | Each worker loads one or more splits, which is still efficient. |
| `splitNum < workerNum` | Some workers load no splits. This is suboptimal; avoid this case. |

What to do: Keep splitNum >= workerNum to maintain efficient load distribution.

- Increase `workerNum` using `setNumWorkers`.

- Reduce `splitSize` using `setSplitSize` to create more splits.

- Alternatively, insert the following before the `jar` command to achieve the same effect without modifying code: `set odps.graph.split.size=<m>; set odps.graph.worker.num=<n>;`

- If `runtime partitioning` is set to `False`, use `setSplitSize` to control the number of workers, or make sure the first or second condition in the table above is met.

In the iteration phase, adjust workerNum only — split size does not affect iteration performance.
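The relationship between `splitNum` and `workerNum` can be checked with a few lines of arithmetic before a job is submitted. The following sketch is illustrative: the class and method names are hypothetical, and it assumes that the split count is rounded up when the input size is not an exact multiple of the split size (the text above only gives `splitNum = inputSize / splitSize`).

```java
// Hypothetical helper for reasoning about splits; not part of the MaxCompute SDK.
class SplitPlanner {

    // Number of splits for the given input size and split size, both in MB.
    // Rounding up is an assumption made for this sketch.
    static long splitNum(long inputSizeMb, long splitSizeMb) {
        if (inputSizeMb < 0 || splitSizeMb <= 0) {
            throw new IllegalArgumentException("sizes must be positive");
        }
        return (inputSizeMb + splitSizeMb - 1) / splitSizeMb;
    }

    // A split size (MB) that yields at least workerNum splits,
    // provided the input is at least workerNum MB.
    static long splitSizeFor(long inputSizeMb, int workerNum) {
        if (workerNum <= 0) {
            throw new IllegalArgumentException("workerNum must be positive");
        }
        return Math.max(1, inputSizeMb / workerNum);
    }

    // Maps the three rows of the condition table to a short label.
    static String classify(long splitNum, int workerNum) {
        if (splitNum == workerNum) {
            return "optimal";
        }
        return splitNum > workerNum ? "efficient" : "idle workers";
    }

    public static void main(String[] args) {
        long inputMb = 4096;
        int workers = 100;

        long withDefault = splitNum(inputMb, 64);
        System.out.println(withDefault + " splits: " + classify(withDefault, workers));

        long smallerSplit = splitSizeFor(inputMb, workers);
        long adjusted = splitNum(inputMb, smallerSplit);
        System.out.println("split size " + smallerSplit + " MB gives " + adjusted
            + " splits: " + classify(adjusted, workers));
    }
}
```

With a 4096 MB input, the default 64 MB split size gives 64 splits, so 100 workers would leave some workers without data. Reducing the split size to 40 MB gives 103 splits, which satisfies `splitNum >= workerNum`.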

Resolve data skew

Symptom: Some workers process far more splits, edges, or messages than others, extending the overall job runtime. Check the counters in the output to identify overloaded workers.

Mechanism: Skew occurs when certain keys have a disproportionately large number of associated records, edges, or messages, concentrating work on a small number of workers.

What to do:

- Use a combiner to aggregate messages locally before sending them across the network. A combiner reduces both memory usage and network traffic, which shortens job duration.

- Reduce input volume:

  - For decision-making applications where approximate results are acceptable, sample the data and import only the sample into the input table.

  - Use the `TableInfo` class to read specific columns by name instead of reading entire tables or partitions. This cuts the input data volume.

- Improve the business logic to distribute work more evenly across keys.
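The effect of a combiner can be illustrated without the SDK. For SSSP, only the smallest candidate distance per destination vertex matters, so messages bound for the same vertex can be collapsed to their minimum before they leave the worker. The sketch below is a plain-Java illustration under that assumption. `MinCombiner` and its method are hypothetical names and do not reproduce the Graph SDK's combiner API.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of local message aggregation; not the SDK combiner class.
class MinCombiner {

    // Each message is {destinationVertexId, candidateDistance}.
    // Returns one entry per destination holding the smallest candidate distance.
    static Map<Long, Long> combine(List<long[]> outgoing) {
        Map<Long, Long> combined = new LinkedHashMap<>();
        for (long[] message : outgoing) {
            combined.merge(message[0], message[1], Math::min);
        }
        return combined;
    }

    public static void main(String[] args) {
        List<long[]> outgoing = new ArrayList<>();
        outgoing.add(new long[] {2, 5});
        outgoing.add(new long[] {2, 3});
        outgoing.add(new long[] {3, 7});
        outgoing.add(new long[] {2, 4});
        outgoing.add(new long[] {3, 1});

        Map<Long, Long> combined = combine(outgoing);
        // Five messages shrink to two: {2=3, 3=1}
        System.out.println(outgoing.size() + " messages combined into "
            + combined.size() + ": " + combined);
    }
}
```

The same idea applies to any message type whose aggregation is associative and commutative, such as sums or maxima.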

Built-in JAR packages

The following JAR packages are loaded into the JVM before your JAR package when running Graph programs. You do not need to upload them or specify them with -libjars:

- commons-codec-1.3.jar
- commons-io-2.0.1.jar
- commons-lang-2.5.jar
- commons-logging-1.0.4.jar
- commons-logging-api-1.0.4.jar
- guava-14.0.jar
- json.jar
- log4j-1.2.15.jar
- slf4j-api-1.4.3.jar
- slf4j-log4j12-1.4.3.jar
- xmlenc-0.52.jar

Built-in JAR packages are placed before your JAR package in the JVM classpath. This can cause version conflicts. For example, if your program calls a method from `commons-codec-1.5.jar` that does not exist in the built-in `commons-codec-1.3.jar`, the call fails. In that case, use an equivalent method available in the built-in version, or wait for MaxCompute to update to a newer version.
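One way to detect such a conflict early is to check at startup whether the method you depend on exists in the class that the JVM actually loaded, and which location that class came from. The sketch below uses only standard Java reflection. `ClasspathCheck` is a hypothetical helper, and the `commons-codec` class and method named in `main` are examples only; substitute the class and method that your program calls.

```java
import java.security.CodeSource;

// Hypothetical diagnostic helper; uses only standard Java reflection.
class ClasspathCheck {

    // Returns true if the named class is loadable and has a public method
    // with the given name and parameter types.
    static boolean hasMethod(String className, String methodName, Class<?>... paramTypes) {
        try {
            Class.forName(className).getMethod(methodName, paramTypes);
            return true;
        } catch (ClassNotFoundException | NoSuchMethodException e) {
            return false;
        }
    }

    // Returns the location (usually a JAR URL) the class was loaded from,
    // or a placeholder for classes without a code source, such as JDK classes.
    static String loadedFrom(Class<?> clazz) {
        CodeSource source = clazz.getProtectionDomain().getCodeSource();
        return source == null ? "(bootstrap or unknown)" : String.valueOf(source.getLocation());
    }

    public static void main(String[] args) {
        // Example names only: adapt them to the dependency you want to verify.
        boolean available = hasMethod(
            "org.apache.commons.codec.binary.Base64", "encodeBase64String", byte[].class);
        System.out.println("method available: " + available);
        System.out.println("this class loaded from: " + loadedFrom(ClasspathCheck.class));
    }
}
```

If the check returns `false` on the cluster but `true` locally, the built-in version is likely shadowing the version packaged with your program.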