This topic shows how to use the open-source Jieba segmentation library in a DataWorks PyODPS node to split Chinese text stored in a MaxCompute table. It also shows how to extend the default dictionary with custom terms using a closure function.

By the end of this topic, you will know how to:

- Upload a third-party Python package as a MaxCompute archive resource.
- Reference that package in a PyODPS `map()` call.
- Load a MaxCompute file resource inside a user-defined function (UDF) via a closure function.
- Verify segmentation results on the Runtime Log tab and through an ad hoc query.

The sample code in this topic is for reference only. Do not use it directly in a production environment.

Prerequisites

Before you begin, ensure that you have:

- A DataWorks workspace with an associated MaxCompute compute engine. See Create a workspace.
- Basic familiarity with Python and PyODPS 3 nodes. See Develop a PyODPS 3 task.

How PyODPS nodes work with third-party packages

DataWorks PyODPS nodes let you run Python code against MaxCompute using the MaxCompute SDK for Python (PyODPS). Two node versions are available: PyODPS 2 and PyODPS 3. Use PyODPS 3.

To use a third-party Python library in a PyODPS UDF, upload the library's zip file as a MaxCompute archive resource and reference it in the libraries parameter of persist(). MaxCompute distributes the library to each mapper at job initialization, so it is available when the UDF runs.

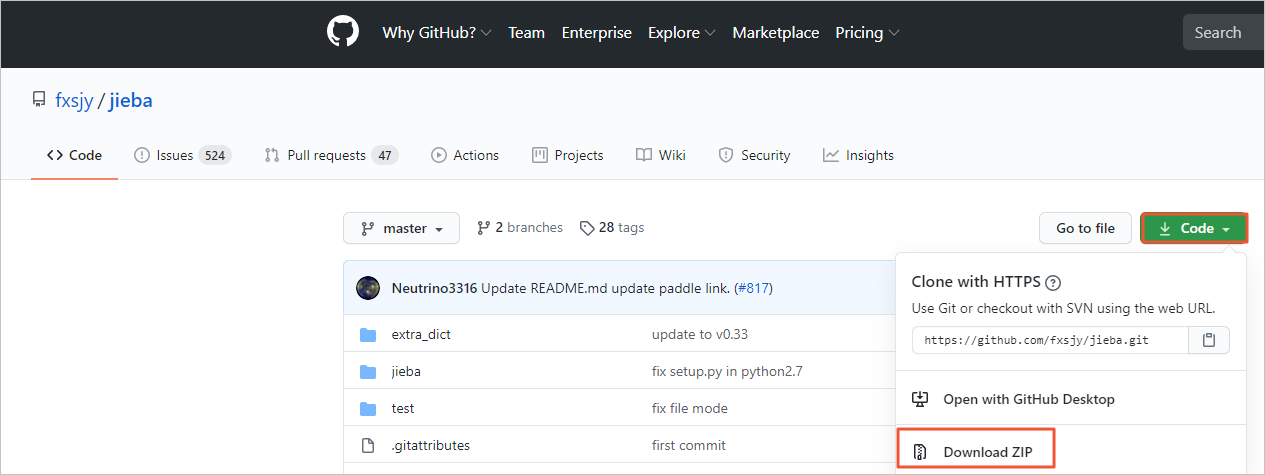
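The mechanism above relies on a Python package shipped as a zip file. As a local analogy only, the following sketch shows Python importing a package directly from a zip archive placed on `sys.path`, using the standard `zipimport` machinery. The package name `demo_lib` and its `cut()` function are hypothetical. A GitHub "Download ZIP" archive typically places files under a top-level `<repo>-<branch>/` folder, so this sketch is not a description of how MaxCompute or PyODPS locate the package inside `jieba-master.zip` on a mapper.

```python
import importlib
import os
import sys
import tempfile
import zipfile

# Build a throwaway zip that holds a hypothetical package "demo_lib" at its root.
workdir = tempfile.mkdtemp()
archive_path = os.path.join(workdir, "demo_lib.zip")
with zipfile.ZipFile(archive_path, "w") as zf:
    zf.writestr("demo_lib/__init__.py", "def cut(text):\n    return list(text)\n")

# Adding the archive itself to sys.path lets Python's built-in zipimport
# machinery find packages stored at the archive root.
sys.path.insert(0, archive_path)
demo_lib = importlib.import_module("demo_lib")

print(demo_lib.cut("abc"))  # ['a', 'b', 'c']
```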

Preparation: Download the Jieba package

Download the Jieba package (jieba-master.zip) from GitHub.

Practice 1: Segment Chinese text with the default Jieba dictionary

Step 1: Create a workflow

Create a workflow in DataWorks. See Create a workflow.

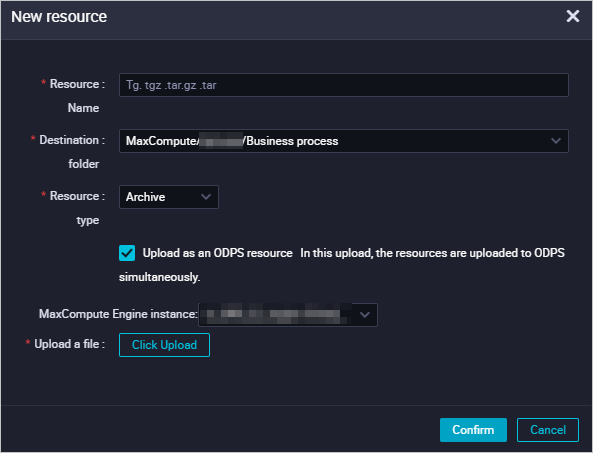

Step 2: Upload the Jieba package as an archive resource

- In DataStudio, right-click the workflow name and choose Create Resource > MaxCompute > Archive.
- In the Create Resource dialog box, configure the following parameters and click Create.

| Parameter | Description |
| --- | --- |
| File | Click Upload and select the `jieba-master.zip` file downloaded from GitHub. |
| Name | The resource name. Set this to `jieba-master.zip`. The name can differ from the uploaded file name, but it must follow DataWorks naming conventions and must match the name that the code lists in the `libraries` parameter. |

- Click the commit icon in the toolbar to commit the resource to the development environment.

Step 3: Create the source and result tables

Create two MaxCompute tables: jieba_test to store the input data, and jieba_result to store the segmentation output.

To create each table, right-click the workflow name and choose Create Table > MaxCompute > Table. In the Create Table dialog box, configure the parameters and click Create. Then run the following DDL statements to define the table schema.

`jieba_test` stores the Chinese text to be segmented:

```sql
CREATE TABLE jieba_test (
    `chinese` string,
    `content` string
);
```

`jieba_result` stores the segmentation output:

```sql
CREATE TABLE jieba_result (
    `chinese` string
);
```

After creating the tables, commit them to the development environment.

Step 4: Import test data

- Download `jieba_test.csv` to your on-premises machine.
- Click the import icon in the Scheduled Workflow pane on the DataStudio page.
- In the Data Import Wizard dialog box, enter `jieba_test` in the table name field, select the table, and click Next.
- Click Browse, upload `jieba_test.csv` from your on-premises machine, and click Next.
- Select By Name and click Import Data.
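Because By Name mapping pairs CSV columns with table columns by name, a quick local check of the CSV header before uploading can catch mismatches early. The sketch below assumes the file's first row holds column names that should match the `jieba_test` columns (`chinese`, `content`). The helper `csv_header_matches` is hypothetical and is not part of DataWorks.

```python
import csv


def csv_header_matches(path, expected_columns):
    """Return True if the CSV's first row names exactly the expected columns.

    Order is ignored because By Name mapping pairs columns by name. The
    comparison is case-insensitive, which is a lenient assumption.
    """
    # "utf-8-sig" drops a leading byte-order mark that some editors write.
    with open(path, newline="", encoding="utf-8-sig") as f:
        header = next(csv.reader(f), [])
    found = sorted(name.strip().lower() for name in header)
    return found == sorted(name.lower() for name in expected_columns)
```

For example, `csv_header_matches("jieba_test.csv", ["chinese", "content"])` returns `True` only when the header names both columns and nothing else.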

Step 5: Create a PyODPS 3 node

- Right-click the workflow name and choose Create Node > MaxCompute > PyODPS 3.
- In the Create Node dialog box, set Name to `word_split` (or another appropriate name) and click Confirm.

Step 6: Run the segmentation code

Paste the following code into the PyODPS 3 node and run it. The code reads the chinese column from jieba_test, segments each value using Jieba, and writes the results to jieba_result.

```python
def test(input_var):
    # Import inside the UDF so that the import runs on the mapper,
    # where the jieba-master.zip archive has been made available.
    import jieba

    # cut_all=False selects Jieba's accurate mode.
    result = jieba.cut(input_var, cut_all=False)
    return "/ ".join(result)


hints = {
    'odps.isolation.session.enable': True,
    # Input size (in MB) assigned to each mapper. A smaller value spreads the
    # input over more mappers and increases parallelism. See Flag parameters.
    'odps.stage.mapper.split.size': 64,
}
libraries = ['jieba-master.zip']  # Distribute the Jieba archive to each mapper.

# `o` is the MaxCompute entry object that DataWorks provides in PyODPS nodes.
src_df = o.get_table('jieba_test').to_df()
result_df = src_df.chinese.map(test).persist('jieba_result', hints=hints, libraries=libraries)

print(result_df.head(10))  # Print the first 10 rows; full results are in jieba_result.
```

`odps.stage.mapper.split.size` can be used to improve execution parallelism. For all available flag parameters, see Flag parameters.

Step 7: View the results

- Runtime log: Check the Runtime Log tab at the bottom of the page for the output of `print(result_df.head(10))`.
- Full table: In the left-side navigation pane of DataStudio, click Ad Hoc Query, create an ad hoc query node, and run:

```sql
SELECT * FROM jieba_result;
```
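Each value in `jieba_result.chinese` is a single string in which `test()` joined the tokens with `"/ "`. If you read the table back into Python, a sketch like the following recovers the token list. The helper `split_tokens` is hypothetical; it assumes that no token itself contains `"/ "` and treats NULL (`None`) or empty values as having no tokens. The sample string is the accurate-mode example from the Jieba README.

```python
def split_tokens(segmented, sep="/ "):
    """Split a "/ "-joined segmentation string back into tokens.

    Hypothetical helper: assumes no token contains the separator itself.
    NULL (None) and empty strings yield an empty list.
    """
    if not segmented:
        return []
    return segmented.split(sep)


print(split_tokens("我/ 来到/ 北京/ 清华大学"))  # ['我', '来到', '北京', '清华大学']
```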

Practice 2: Segment Chinese text with a custom dictionary

The default Jieba dictionary may not recognize domain-specific terms. By loading a custom dictionary, you can ensure that those terms are treated as single tokens rather than split into smaller words.

PyODPS `map()` passes data to the UDF row by row. To load the dictionary only once per mapper, at initialization rather than for every row, use a closure function or a callable class. The outer function loads the dictionary when the mapper starts and returns an inner function that handles each row, so the dictionary is not re-read for every row. For more complex UDFs, see Create and use a MaxCompute UDF.
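The following plain-Python sketch contrasts the two forms named above. `load_dictionary`, `make_handler`, and `Handler` are hypothetical stand-ins, not PyODPS or Jieba APIs. The final loop plays the role of the framework: it performs setup once per handler and then calls the handler for each row, and a counter confirms that the dictionary is loaded once per handler rather than once per row.

```python
# Records every time the stand-in dictionary loader runs.
load_log = []


def load_dictionary(lines):
    """Hypothetical stand-in for jieba.load_userdict: returns a set of terms."""
    load_log.append(len(lines))
    return {line.strip() for line in lines if line.strip()}


def make_handler(resources):
    """Closure form: the outer body runs once, the inner function once per row."""
    terms = load_dictionary(resources[0])

    def handle(value):
        return value in terms

    return handle


class Handler:
    """Callable-class form: __init__ runs once, __call__ once per row."""

    def __init__(self, resources):
        self.terms = load_dictionary(resources[0])

    def __call__(self, value):
        return value in self.terms


resources = [["增量备份", "安全合规"]]  # Hypothetical stand-in for key_words.txt.
rows = ["增量备份", "日志", "安全合规"]  # Hypothetical input rows.

for factory in (make_handler, Handler):
    handler = factory(resources)  # Setup: the dictionary is loaded here.
    print([handler(row) for row in rows])  # [True, False, True]

print(len(load_log))  # 2: one load per handler, not one per row
```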

Step 1: Upload the custom dictionary as a file resource

- Right-click the workflow name and choose Create Resource > MaxCompute > File.
- In the Create Resource dialog box, set Name to `key_words.txt` and click Create.
- On the configuration tab of the `key_words.txt` resource, enter your custom terms, one per line, then save and commit the resource. For example, the following entries add 增量备份 (incremental backup) and 安全合规 (security compliance):

```text
增量备份
安全合规
```
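To check a dictionary file locally before committing it, a sketch like the following extracts the term from each non-blank line. It assumes the Jieba user-dictionary layout, in which each line holds a word optionally followed by a frequency and a part-of-speech tag, separated by spaces. The helper `read_terms` is hypothetical and is not Jieba's own parser.

```python
def read_terms(text):
    """Return the word from each non-blank line of a user-dictionary text.

    Hypothetical helper: any optional frequency or tag after the first
    space-separated field is ignored.
    """
    terms = []
    for line in text.splitlines():
        fields = line.split()
        if fields:
            terms.append(fields[0])
    return terms


print(read_terms("增量备份\n安全合规\n"))  # ['增量备份', '安全合规']
```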

Step 2: Create the result table

Before running the code, create a table named `jieba_result2` with the same schema as `jieba_result`:

```sql
CREATE TABLE jieba_result2 (
    `chinese` string
);
```

Commit the table to the development environment.

Step 3: Run the segmentation code with the custom dictionary

Paste the following code into the same PyODPS 3 node (or a new one) and run it.

```python
def test(resources):
    # Outer function: runs once per mapper and receives the resources
    # passed to map(). The import and dictionary loading happen here.
    import jieba

    fileobj = resources[0]
    jieba.load_userdict(fileobj)  # Load the custom dictionary once at mapper init.

    def h(input_var):  # Inner function: called for each row in the map operation.
        result = jieba.cut(input_var, cut_all=False)
        return "/ ".join(result)

    return h  # Return the inner function as the UDF handler.


hints = {
    'odps.isolation.session.enable': True,
    'odps.stage.mapper.split.size': 64,
}
libraries = ['jieba-master.zip']

src_df = o.get_table('jieba_test').to_df()
file_object = o.get_resource('key_words.txt')  # Reference the MaxCompute file resource.
mapped_df = src_df.chinese.map(test, resources=[file_object])  # Pass the resource to the closure.
result_df = mapped_df.persist('jieba_result2', hints=hints, libraries=libraries)

print(result_df.head(10))
```

`o.get_resource()` fetches the file resource from MaxCompute, and `map()` passes it to the outer `test()` function through the `resources` parameter. The resource is loaded once per mapper, not once per row. See Flag parameters for hint options.
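To make "once per mapper" concrete, the sketch below simulates splitting input rows across mappers, where each mapper calls the outer function a single time and then handles its own rows. It is a simplification: MaxCompute sizes splits by data volume through `odps.stage.mapper.split.size` (in MB), whereas this sketch splits by row count, and `run_mappers` and `make_prefix_handler` are hypothetical names. It also shows the trade-off behind that hint: smaller splits mean more mappers, and therefore more setup calls in total.

```python
def make_prefix_handler(resources):
    """Hypothetical closure: setup reads resources once, then handles rows."""
    prefix = resources[0]
    return lambda value: prefix + value


def run_mappers(rows, rows_per_split, factory, resources):
    """Simulate mappers: each split calls the factory once, then maps its rows."""
    outputs = []
    setups = 0
    for start in range(0, len(rows), rows_per_split):
        handler = factory(resources)  # Once per mapper, like the outer test().
        setups += 1
        outputs.extend(handler(row) for row in rows[start:start + rows_per_split])
    return outputs, setups


rows = [str(n) for n in range(10)]
for size in (10, 4, 1):
    outputs, setups = run_mappers(rows, size, make_prefix_handler, ["row-"])
    print(size, setups, outputs[:2])  # setups: 1, then 3, then 10
```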

Step 4: View the results

- Runtime log: Check the Runtime Log tab for the first 10 rows of output.
- Full table: Run the following ad hoc query:

```sql
SELECT * FROM jieba_result2;
```

Step 5: Compare outputs

Run the following queries side by side to see how the custom dictionary changes the segmentation of domain-specific terms such as 增量备份 and 安全合规.

```sql
SELECT * FROM jieba_result;   -- Default dictionary results
SELECT * FROM jieba_result2;  -- Custom dictionary results
```

Terms listed in `key_words.txt` appear as single tokens in `jieba_result2` rather than being split by the default dictionary.
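Why does adding a dictionary entry change the tokens? The sketch below uses forward maximum matching, a simpler dictionary-based method swapped in purely for illustration. Jieba itself builds a graph of candidate words from its prefix dictionary, picks the highest-probability path by word frequency, and uses an HMM for unknown words, so its real output can differ. The function `fmm_segment` and the tiny word sets are hypothetical.

```python
def fmm_segment(text, dictionary):
    """Forward maximum matching: at each position, take the longest known word.

    Characters not covered by any dictionary word become single-character tokens.
    """
    max_len = max((len(word) for word in dictionary), default=1)
    tokens, i = [], 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in dictionary:
                tokens.append(piece)
                i += size
                break
    return tokens


default_words = {"数据", "增量", "备份", "安全", "合规"}
custom_words = default_words | {"增量备份", "安全合规"}

text = "数据增量备份"
print("/ ".join(fmm_segment(text, default_words)))  # 数据/ 增量/ 备份
print("/ ".join(fmm_segment(text, custom_words)))   # 数据/ 增量备份
```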

What's next

- Use PyODPS in DataWorks: full guide to PyODPS nodes, including resource management, scheduling, and advanced UDF patterns.
- Create and use a MaxCompute UDF: more complex UDFs, including callable class patterns.
- Flag parameters: all hint parameters available for tuning MaxCompute jobs.