When you need to migrate historical data from ApsaraDB RDS to Lindorm, or run a full database migration, Lindorm Tunnel Service (LTS) can handle both full and incremental data in a single task. LTS imports all existing rows first, then switches automatically to incremental synchronization to capture ongoing changes from the source.

Full data synchronization from ApsaraDB RDS via LTS was discontinued on March 10, 2023. LTS instances purchased after that date cannot synchronize full data from ApsaraDB RDS. LTS instances purchased before March 10, 2023 can still use this feature.

How it works

LTS uses two data sources together:

- Full data: Read directly from the ApsaraDB RDS MySQL database using SQL queries. Each query runs on one read thread. Splitting queries across ranges increases throughput and reduces retry scope if a query fails.
- Incremental data: Captured through a Data Transmission Service (DTS) task that tracks changes in the source tables after the full import completes.

LTS always imports full data before switching to incremental synchronization. By default, delete operations from the source are not propagated to the destination. To propagate deletes, set skipDelete: false in the configuration.
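
The range-splitting advice for full-data queries can be sketched as a small helper that produces one query per key range. This is an illustrative sketch, not part of LTS: the function name `build_range_queries` and its parameters are hypothetical, and it assumes the split column is numeric and never NULL (for example, an integer primary key), so that the ranges cover every row exactly once.

```python
def build_range_queries(table, column, boundaries):
    """Return one SELECT per key range (hypothetical helper, not an LTS API).

    N sorted boundaries give N + 1 queries: an open-ended first range,
    half-open middle ranges [low, high), and an open-ended last range.
    """
    if not boundaries:
        # No split points: a single query reads the whole table.
        return [f"select * from {table}"]
    if list(boundaries) != sorted(set(boundaries)):
        raise ValueError("boundaries must be sorted and unique")

    queries = [f"select * from {table} where {column} < {boundaries[0]}"]
    for low, high in zip(boundaries, boundaries[1:]):
        queries.append(
            f"select * from {table} where {column} >= {low} and {column} < {high}"
        )
    queries.append(f"select * from {table} where {column} >= {boundaries[-1]}")
    return queries
```

Calling `build_range_queries("dts.cluster", "id", [1000])` yields a two-query split on `id`. The resulting list is meant to be pasted into the `querySql` array of the task configuration. Half-open ranges are used so that a row sitting exactly on a boundary is read by one query only.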

Prerequisites

Before you begin, confirm the following:

- Your LTS instance was purchased before March 10, 2023
- LTS, the destination ApsaraDB for HBase cluster, and the source ApsaraDB RDS instance are in the same virtual private cloud (VPC)
- You are logged in to the LTS web UI (see Create a synchronization task)

You also need the following data sources configured before creating the migration task:

- LindormTable destination: Add a LindormTable data source
- ApsaraDB RDS source: Add an ApsaraDB RDS data source
- DTS source: Add a DTS data source

Limitations

| Constraint | Requirement |
|---|---|
| Full data source | Must be a MySQL database |
| Incremental data source | Must be a DTS task |
| Destination | LindormTable node with a SQL endpoint or HBase-compatible endpoint |

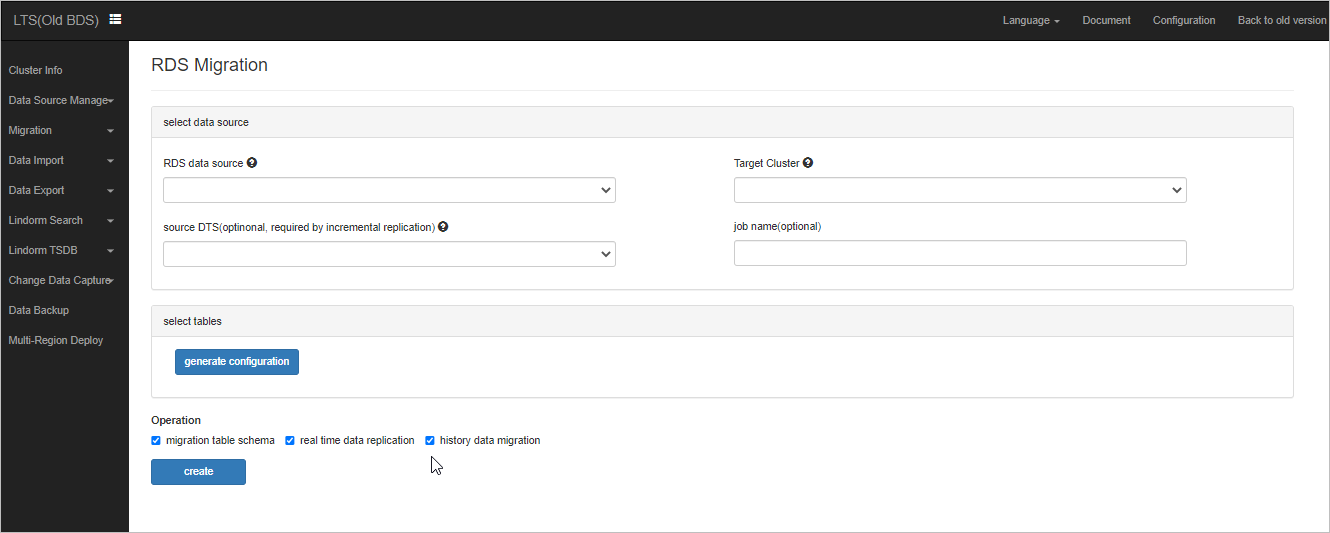

Create an RDS migration task

- In the LTS web UI, choose Data Import > RDS Migration.
- Click create.
- Select the RDS data source, DTS data source, and destination data source.
- Click Edit to review the default configuration. Modify it as needed. For reference, see Sample configurations.
- Select the tables to import, then click generate configuration. Note the following default behaviors:
  - LTS imports full data before incremental data.
  - For a LindormTable node compatible with Cassandra Query Language (CQL), LTS auto-generates destination columns with the same names and data types as the source ApsaraDB RDS columns. You can override column names and mappings in the configuration.
  - LTS auto-generates a column family named f. Each source column maps to a column in the f column family. The row key is the concatenation of the primary key columns from the source table.
  - Rows deleted from the source after import are not deleted from the destination. To propagate deletes, set skipDelete: false in the configuration (see Sample configurations).
- Click Create.
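
The delete-propagation setting mentioned in the steps above can be sketched as a small edit to a generated configuration before you paste it back into the editor. This is only a sketch: `with_delete_propagation` is a hypothetical helper, and the configuration is assumed to be the JSON shape shown in Sample configurations, with `skipDelete` under `writer.config`.

```python
import copy
import json


def with_delete_propagation(config, propagate=True):
    """Return a copy of `config` with writer.config.skipDelete set (hypothetical helper)."""
    updated = copy.deepcopy(config)  # leave the caller's dict untouched
    writer_config = updated.setdefault("writer", {}).setdefault("config", {})
    # skipDelete is the inverse of "propagate deletes to the destination".
    writer_config["skipDelete"] = not propagate
    return updated


# A trimmed stand-in for a generated configuration.
generated = {"writer": {"config": {"skipDelete": True}, "sourceTable": "dts.cluster"}}
edited = with_delete_propagation(generated)
print(json.dumps(edited["writer"]["config"]))
```

Working on a copy keeps the generated default available for comparison if you need to revert.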

Sample configurations

All examples use Jtwig template syntax ({{...}}) for data transformations. For the full syntax reference, see the Jtwig Reference Manual.

The two samples below cover the two supported destination endpoint types. The key difference: the Lindorm SQL endpoint sample uses isPk to identify primary key columns, while the HBase-compatible endpoint sample uses a dedicated rowkey field.

Configuration parameters

| Parameter | Description |
|---|---|
| querySql | SQL queries for full data import. Each query runs on one read thread. Split large tables into range-based queries to increase throughput and reduce retry scope. |
| name | Column name in the destination table. |
| value | Column name in the source table, or a Jtwig expression for computed values. |
| isPk | (Lindorm SQL endpoint) Whether the column is a primary key column. Set to true for primary key columns. |
| rowkey.value | (HBase-compatible endpoint) The row key of the destination table. Accepts column names or Jtwig expressions. |
| type | Data type of the destination column. Optional; defaults to the source column's data type. |
| config.skipDelete | When true, delete operations from the source are not propagated to the destination. Default: true. |
| table.name | Destination table name in namespace:tablename format. |
| table.parameter.compression | Compression algorithm for the destination table. Zstandard (ZSTD) is recommended. |
| table.parameter.split | Split keys for pre-partitioning the destination table. |
| sourceTable | Source table name in database.tablename format. |
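
The two name formats in the table (namespace:tablename for the destination, database.tablename for the source) are easy to mix up. A local pre-check along these lines can catch that before the task is created. It is a sketch only: `check_table_names` is a hypothetical helper, it is not something LTS provides, and it assumes that neither name part itself contains the separator character.

```python
def check_table_names(writer):
    """Return a list of problems found in the writer section; empty means both names look well-formed."""
    problems = []

    def has_two_parts(text, separator):
        # Exactly one separator, with non-empty text on both sides.
        parts = text.split(separator)
        return len(parts) == 2 and all(parts)

    target = writer.get("table", {}).get("name", "")
    if not has_two_parts(target, ":"):
        problems.append(f"table.name should look like namespace:tablename, got {target!r}")

    source = writer.get("sourceTable", "")
    if not has_two_parts(source, "."):
        problems.append(f"sourceTable should look like database.tablename, got {source!r}")

    return problems
```

Pass the `writer` object of a configuration to the function; any returned message names the field to fix.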

Lindorm SQL endpoint

Use this configuration when the destination LindormTable node is configured with the SQL endpoint.

    {
      "reader": {
        "querySql": [
          "select * from dts.cluster where id < 1000",
          "select * from dts.cluster where id >= 1000"
        ]
      },
      "writer": {
        "columns": [
          {
            "name": "f:id",
            "value": "id",
            "isPk": true,
            "type": "BIGINT"
          },
          {
            "name": "cluster_id",
            "value": "cluster_id",
            "isPk": false
          },
          {
            "name": "id_and_cluster",
            "value": "{{concat(id, cluster_id)}}",
            "isPk": true
          }
        ],
        "config": {
          "skipDelete": true
        },
        "table": {
          "name": "dts:cluster",
          "parameter": {
            "compression": "ZSTD"
          }
        },
        "sourceTable": "dts.cluster"
      }
    }

HBase-compatible endpoint

Use this configuration when the destination LindormTable node is configured with the HBase-compatible endpoint. The row key is specified explicitly using the rowkey field instead of isPk.

    {
      "reader": {
        "querySql": [
          "select * from dts.cluster where id < 1000",
          "select * from dts.cluster where id >= 1000"
        ]
      },
      "writer": {
        "columns": [
          {
            "name": "f:id",
            "value": "id",
            "isPk": false
          },
          {
            "name": "f:cluster_id",
            "value": "cluster_id",
            "isPk": false
          },
          {
            "name": "f:id_and_cluster",
            "value": "{{concat(id, cluster_id)}}"
          }
        ],
        "rowkey": {
          "value": "id"
        },
        "config": {
          "skipDelete": true
        },
        "table": {
          "name": "dts:cluster",
          "parameter": {
            "compression": "ZSTD",
            "split": ["1", "5", "9", "b"]
          }
        },
        "sourceTable": "dts.cluster"
      }
    }
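
To get a feel for what the split keys in this sample do, the sketch below maps a row key to the partition it would land in. It rests on assumptions that the source does not confirm: that split keys act as HBase-style region boundaries compared in byte order, and that row keys are stored in their string form. `region_index` is a hypothetical helper, not an LTS or Lindorm API.

```python
import bisect


def region_index(row_key, split_keys):
    """Return the 0-based partition for `row_key` (assumes HBase-style byte-order boundaries).

    N split keys define N + 1 partitions. A key equal to a split key
    belongs to the partition that starts at that split key.
    """
    encoded = [key.encode("utf-8") for key in split_keys]
    if encoded != sorted(encoded):
        raise ValueError("split keys must be sorted in byte order")
    return bisect.bisect_right(encoded, row_key.encode("utf-8"))


splits = ["1", "5", "9", "b"]
for key in ["0999", "1000", "42", "9", "a7", "zz"]:
    print(key, "->", region_index(key, splits))
```

Under these assumptions, keys are compared as strings rather than as numbers, so "1000" and "42" fall into the same partition. If the row key is a sequential numeric ID, it may be worth checking that the chosen split keys spread the actual key values evenly.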