This feature is no longer available for Lindorm Tunnel Service (LTS) instances purchased after June 16, 2023. If your LTS instance was purchased before June 16, 2023, you can continue to use this feature.

LTS archives HBase incremental data to MaxCompute by reading HBase write-ahead logs (WAL). Archived data is merged from key-value (KV) format into partitioned wide tables that you can query directly in MaxCompute.

Supported versions

| HBase source | Notes |
|---|---|
| Self-managed HBase V1.x and HBase V2.x | None |
| E-MapReduce HBase | None |
| ApsaraDB for HBase Standard Edition | None |
| ApsaraDB for HBase Performance-enhanced Edition | Cluster mode only |
| Lindorm | None |

Limits

LTS archives data by reading HBase logs. Data imported through bulk loading bypasses the WAL, so it cannot be exported.

Log data lifecycle

If log data is not consumed after you enable the archiving feature, LTS retains the data for 48 hours by default. After 48 hours, the subscription is automatically canceled and the retained data is automatically deleted.

Log data may fail to be consumed if your LTS cluster is released while a task is running, or if a synchronization task is suspended.

Prerequisites

Before you begin, ensure that you have:

- LTS activated
- An HBase data source added
- A MaxCompute data source added

Archive incremental data

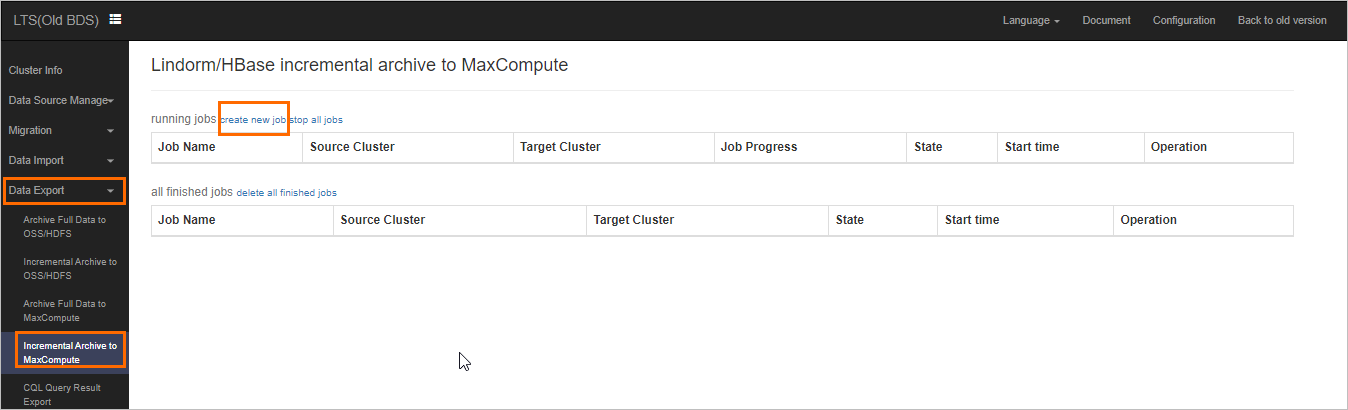

Log on to the LTS web UI. In the left-side navigation pane, choose Data Export > Incremental Archive to MaxCompute.

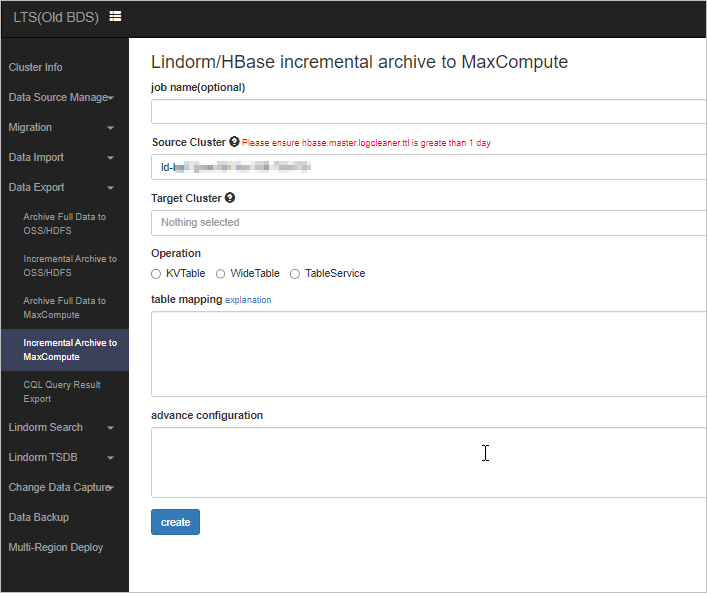

Click create new job. Select a source HBase cluster and a destination MaxCompute resource package, then specify the HBase tables to export.

The example archives data from the `wal-test` HBase table to MaxCompute in real time:

- Columns archived: `cf1:a`, `cf1:b`, `cf1:c`, `cf1:d`
- `mergeInterval`: set to `86400000` (the default), so data is archived once per day
- `mergeStartAt`: set to `20190930000000`, specifying September 30, 2019 at 00:00 as the start time. You can specify a past point in time.

Monitor the archiving progress. The Real-time Synchronization Channel section shows the latency and start offset of the log synchronization task. The Table Merge section shows table merging tasks. After a merge completes, the new partitioned tables are queryable in MaxCompute.

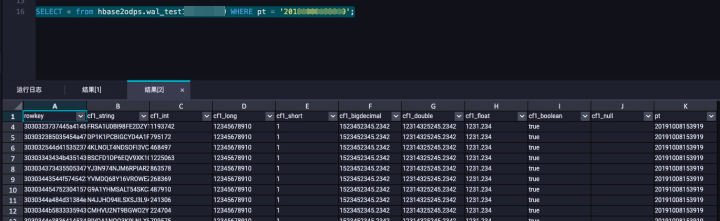

Query data in MaxCompute.
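The way `mergeStartAt` and `mergeInterval` combine in the example job can be sketched in Python. This is a minimal illustration, not a description of LTS internals: it assumes that merge windows are consecutive, fixed-length intervals that begin at `mergeStartAt`, which the source does not state explicitly. The function name `merge_windows` is hypothetical, and the timestamp is parsed as a naive datetime because the time zone is not specified.

```python
from datetime import datetime, timedelta


def merge_windows(merge_start_at: str, merge_interval_ms: int, count: int):
    """Return the first `count` (start, end) merge windows.

    Assumption: windows are back-to-back intervals of mergeInterval
    milliseconds, starting at mergeStartAt (yyyyMMddHHmmss).
    """
    start = datetime.strptime(merge_start_at, "%Y%m%d%H%M%S")
    step = timedelta(milliseconds=merge_interval_ms)
    return [(start + i * step, start + (i + 1) * step) for i in range(count)]


if __name__ == "__main__":
    # Values taken from the example job: daily merges from 2019-09-30 00:00.
    for begin, end in merge_windows("20190930000000", 86400000, 3):
        print(begin.isoformat(), "->", end.isoformat())
```

Under this assumption, a start time in the past yields one window per elapsed interval, which matches the note that a past point in time can be specified.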

Parameters

Each line in the job configuration specifies one table export using the following format:

`<hbaseTable>[/<odpsTable>] <tbConf>`

- `<hbaseTable>`: Source HBase table name.
- `<odpsTable>`: Destination MaxCompute table name (optional). Defaults to the same name as the HBase table. Hyphens (-) in HBase table names are converted to underscores (_) in MaxCompute.
- `<tbConf>`: JSON object containing the archiving configuration for the table.

Examples:

hbaseTable/odpsTable {"cols": ["cf1:a|string", "cf1:b|int", "cf1:c|long", "cf1:d|short", "cf1:e|decimal", "cf1:f|double", "cf1:g|float", "cf1:h|boolean", "cf1:i"], "mergeInterval": 86400000, "mergeStartAt": "20191008100547"}

hbaseTable/odpsTable {"cols": ["cf1:a", "cf1:b", "cf1:c"], "mergeStartAt": "20191008000000"}

hbaseTable {"mergeEnabled": false}

The tbConf object supports the following parameters:

| Parameter | Required | Default | Description | Example |
|---|---|---|---|---|
| cols | No | HexString (per column) | Columns to export and their data types. Specify each column as `<columnFamily>:<qualifier>` or `<columnFamily>:<qualifier>\|<type>`. If no type is specified, the value is exported in HexString format. | `"cols": ["cf1:a\|string", "cf1:b\|int"]` |
| mergeEnabled | No | true | Whether to convert KV tables into wide tables. Set to false to skip the merge step. | `"mergeEnabled": false` |
| mergeStartAt | No | None | Start time for table merging, in yyyyMMddHHmmss format. Specify a past point in time to backfill historical data. | `"mergeStartAt": "20191008000000"` |
| mergeInterval | No | 86400000 | Interval between table merge tasks, in milliseconds. The default value archives data once per day. | `"mergeInterval": 86400000` |
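To check a job configuration before pasting it into the LTS web UI, the line format and defaults described above can be expressed as a small Python sketch. The names `parse_job_line`, `parse_col`, and `TableExport` are hypothetical helpers, not part of LTS. The sketch also makes two assumptions the source does not confirm: a line without a tbConf object is treated as an empty configuration, and the hyphen-to-underscore conversion is applied only when the MaxCompute table name is omitted.

```python
import json
from typing import NamedTuple


class TableExport(NamedTuple):
    hbase_table: str
    odps_table: str
    conf: dict


def parse_col(spec: str) -> tuple:
    """Split '<columnFamily>:<qualifier>[|<type>]' into its three parts."""
    column, _, col_type = spec.partition("|")
    family, sep, qualifier = column.partition(":")
    if not sep or not family:
        raise ValueError(f"expected <columnFamily>:<qualifier>, got {spec!r}")
    # Columns without an explicit type are exported in HexString format.
    return family, qualifier, col_type or "HexString"


def parse_job_line(line: str) -> TableExport:
    """Parse one '<hbaseTable>[/<odpsTable>] <tbConf>' line."""
    names, _, conf_text = line.strip().partition(" ")
    hbase_table, _, odps_table = names.partition("/")
    if not hbase_table:
        raise ValueError("missing HBase table name")
    if not odps_table:
        # Default destination name: same as the source, hyphens replaced.
        odps_table = hbase_table.replace("-", "_")
    # Assumption: a missing tbConf is treated like an empty JSON object.
    conf = json.loads(conf_text) if conf_text.strip() else {}
    if not isinstance(conf, dict):
        raise ValueError("tbConf must be a JSON object")
    # Fill in the documented defaults.
    conf.setdefault("mergeEnabled", True)
    conf.setdefault("mergeInterval", 86400000)
    for spec in conf.get("cols", []):
        parse_col(spec)  # raises ValueError on a malformed column spec
    return TableExport(hbase_table, odps_table, conf)


if __name__ == "__main__":
    export = parse_job_line('wal-test {"cols": ["cf1:a|string", "cf1:b"]}')
    print(export.odps_table)               # wal_test
    print(parse_col("cf1:b"))              # ('cf1', 'b', 'HexString')
```

The check is purely local and does not validate type names, because the source lists types only by example.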