Hologres data lake acceleration, built on Alibaba Cloud Data Lake Formation (DLF) 1.0 and Object Storage Service (OSS), lets you query OSS data lake files directly from Hologres using foreign tables — no data ingestion required. This topic covers how to configure DLF 1.0 access and read OSS data in Hologres. To write Hologres data back to OSS, see Export to a data lake.

Only DLF 1.0 (DLF-Legacy) supports OSS data lake acceleration. The new version of DLF does not support this feature.

Overview

Setting up DLF-based lake access involves six steps:

-

Enable DLF_FDW — activate the foreign data wrapper backend on your instance

-

Create the extension — load the DLF FDW plugin in each target database

-

Create a foreign server — tell Hologres where DLF 1.0 and OSS are located

-

(Optional) Create a user mapping — delegate access to a specific RAM user identity

-

Create foreign tables — map DLF 1.0 metadata tables to Hologres

-

Query data — run standard SQL against OSS lake files

Prerequisites

Before you begin, make sure you have:

-

DLF 1.0 activated. For details, see Quick Start and Supported regions and endpoints.

-

OSS activated with your data ready. For details, see Get started with OSS.

-

OSS authorization configured. The account used to query foreign tables must have OSS access permissions — otherwise queries fail even if the foreign table is created successfully. For details, see Bucket Policy (Java SDK V1).

-

(Optional) OSS-HDFS service enabled, if you plan to use OSS-HDFS as your storage layer. For details, see Enable OSS-HDFS.

Limitations

Read limitations

-

UPDATE,DELETE, andTRUNCATEare not supported on foreign tables. -

Data lake acceleration is not supported for read-only secondary instances.

-

Queries load matching partitions into Hologres memory at runtime. The data volume per query is limited to 200 GB after partition filtering.

-

IMPORT FOREIGN SCHEMAsupports up to 512 partitions per query. Add partition filter conditions to keep each query within this limit.

Write limitations

-

Only

INSERT INTOis supported when exporting Hologres data to OSS.INSERT ON CONFLICT,UPDATE, andDELETEare not supported. -

Writing data back to OSS requires Hologres V1.3 or later, and only ORC, Parquet, CSV, and SequenceFile formats are supported.

Set up the environment

Step 1: Enable DLF_FDW

DLF_FDW is the foreign data wrapper (FDW) that connects Hologres to DLF 1.0 and OSS. Enable it in the Hologres console so the instance can communicate with DLF.

In the Hologres console, go to the Instances or Instance Details page. In the Actions column for the target instance, click Data Lake Acceleration and confirm the operation. The system automatically configures DLF_FDW and restarts the instance in the background. After the restart completes, the feature is available.

After enabling DLF_FDW, the system uses default resources (1 core and 4 GB memory). No additional resources need to be purchased.

Hologres is rolling out self-service DLF_FDW configuration in the console. If the Data Lake Acceleration button is not visible, follow the instructions in Common upgrade preparation errors or contact support via the Hologres DingTalk group. For details, see How to obtain online support?.

Step 2: Create the extension

A Superuser must run the following statement once per database to load the DLF FDW plugin. This enables Hologres to read OSS data through DLF 1.0.

CREATE EXTENSION IF NOT EXISTS dlf_fdw;Step 3: Create a foreign server

The foreign server object tells Hologres where DLF 1.0 and OSS are located, and how to authenticate. A Superuser must create it — non-Superuser accounts result in permission errors.

DLF 1.0 supports multiple catalogs. If you have a single EMR cluster, use the default catalog. If you have multiple EMR clusters, use a custom catalog to control which cluster Hologres connects to.

Choose the storage type that matches your setup:

Native OSS storage (default catalog)

-- View existing servers.

-- meta_warehouse_server and odps_server are built-in system servers and cannot be modified or deleted.

SELECT * FROM pg_foreign_server;

-- Drop an existing server if needed.

DROP SERVER <server_name> CASCADE;

-- Create a server using the DLF 1.0 default catalog and native OSS.

CREATE SERVER IF NOT EXISTS <server_name> FOREIGN DATA WRAPPER dlf_fdw OPTIONS (

dlf_region '<region_id>',

dlf_endpoint 'dlf-share.<region_id>.aliyuncs.com',

oss_endpoint 'oss-<region_id>-internal.aliyuncs.com'

);OSS-HDFS storage

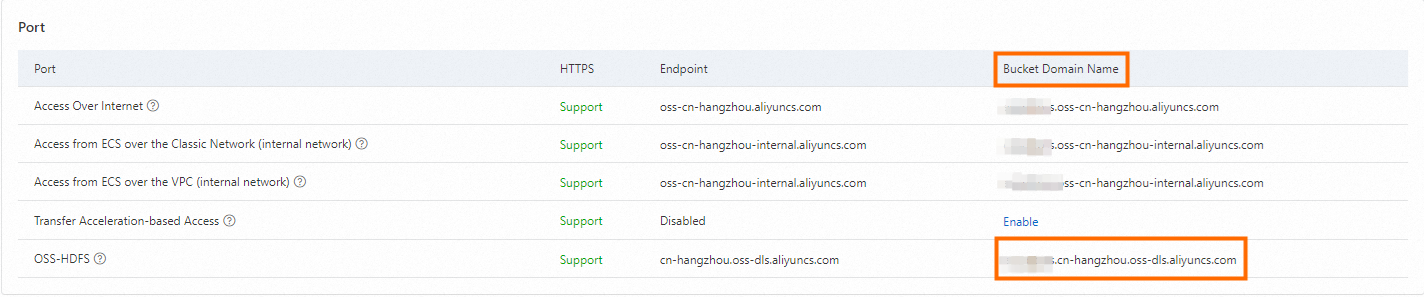

OSS-HDFS supports internal network access only. Find the bucket domain name on the Overview page of your OSS-HDFS-enabled bucket in the OSS console.

After confirming the domain name, create the server:

CREATE EXTENSION IF NOT EXISTS dlf_fdw;

CREATE SERVER IF NOT EXISTS <server_name> FOREIGN DATA WRAPPER dlf_fdw OPTIONS (

dlf_region '<region_id>',

dlf_endpoint 'dlf-share.<region_id>.aliyuncs.com',

oss_endpoint '<bucket_name>.<region_id>.oss-dls.aliyuncs.com'

);Parameters

| Parameter | Description | Example |

|---|---|---|

<server_name> |

Custom name for the foreign server | dlf_server |

dlf_region |

Region where DLF 1.0 is deployed. See the region list below. | cn-hangzhou |

dlf_endpoint |

DLF 1.0 internal endpoint. Pattern: dlf-share.<region_id>.aliyuncs.com |

dlf-share.cn-shanghai.aliyuncs.com |

oss_endpoint |

OSS internal endpoint (native OSS) or OSS-HDFS domain (OSS-HDFS). See examples below. | oss-cn-shanghai-internal.aliyuncs.com |

Supported regions

| Region | dlf_region value |

Native OSS oss_endpoint |

|---|---|---|

| China (Beijing) | cn-beijing |

oss-cn-beijing-internal.aliyuncs.com |

| China (Hangzhou) | cn-hangzhou |

oss-cn-hangzhou-internal.aliyuncs.com |

| China (Shanghai) | cn-shanghai |

oss-cn-shanghai-internal.aliyuncs.com |

| China (Shenzhen) | cn-shenzhen |

oss-cn-shenzhen-internal.aliyuncs.com |

| China (Zhangjiakou) | cn-zhangjiakou |

oss-cn-zhangjiakou-internal.aliyuncs.com |

| Singapore | ap-southeast-1 |

oss-ap-southeast-1-internal.aliyuncs.com |

| Germany (Frankfurt) | eu-central-1 |

oss-eu-central-1-internal.aliyuncs.com |

| US (Virginia) | us-east-1 |

oss-us-east-1-internal.aliyuncs.com |

| Indonesia (Jakarta) | ap-southeast-5 |

oss-ap-southeast-5-internal.aliyuncs.com |

For OSS-HDFS, the oss_endpoint format is <bucket_name>.<region_id>.oss-dls.aliyuncs.com — for example, cn-hangzhou.oss-dls.aliyuncs.com.

Step 4: Create a user mapping (optional)

A user mapping lets the foreign server owner grant a specific identity (such as a RAM user) access to DLF 1.0 and OSS. The mapped account must have query permissions on the external data.

-- Create a user mapping for the current user.

CREATE USER MAPPING FOR current_user SERVER <server_name> OPTIONS (

dlf_access_id '<your_access_key>',

dlf_access_key '<your_access_secret>',

oss_access_id '<your_access_key>',

oss_access_key '<your_access_secret>'

);

-- Create a user mapping for a specific RAM user.

CREATE USER MAPPING FOR "p4_123xxx" SERVER <server_name> OPTIONS (

dlf_access_id '<your_access_key>',

dlf_access_key '<your_access_secret>',

oss_access_id '<your_access_key>',

oss_access_key '<your_access_secret>'

);

-- Drop user mappings.

DROP USER MAPPING FOR current_user SERVER <server_name>;

DROP USER MAPPING FOR "p4_123xxx" SERVER <server_name>;For more information, see the PostgreSQL CREATE USER MAPPING documentation.

Read OSS data

Step 5: Create foreign tables

First create a metadata table in DLF 1.0 and make sure data has been extracted. Then create foreign tables in Hologres using one of two methods:

-

CREATE FOREIGN TABLE — creates a single foreign table with manual field mapping. Partition fields are defined as regular fields (no data is stored in Hologres).

-

IMPORT FOREIGN SCHEMA — creates one or more foreign tables in bulk with automatic field mapping.

If an OSS foreign table has the same name as an existing Hologres internal table,IMPORT FOREIGN SCHEMAskips that table. UseCREATE FOREIGN TABLEwith a different name in that case.

Supported partition key data types: TEXT, VARCHAR, and INT.

Syntax

-- Method 1: CREATE FOREIGN TABLE

CREATE FOREIGN TABLE [ IF NOT EXISTS ] <oss_table_name> (

<column_name> <data_type>

[, ...]

)

SERVER <server_name>

OPTIONS (

schema_name '<dlf_database_name>',

table_name '<dlf_table_name>'

);

-- Method 2: IMPORT FOREIGN SCHEMA

IMPORT FOREIGN SCHEMA <schema_name>

[ { LIMIT TO | EXCEPT } ( <table_name> [, ...] ) ]

FROM SERVER <server_name>

INTO <local_schema>

[ OPTIONS ( option 'value' [, ...] ) ];| Parameter | Description |

|---|---|

schema_name |

Name of the metadatabase created in DLF 1.0 |

table_name |

Name of the metadata table created in DLF 1.0 |

<server_name> |

Name of the foreign server created in Hologres |

local_schema |

Target schema in Hologres |

Examples

Create a single foreign table mapped to the dlf_oss_test table in the DLF 1.0 metadatabase dlfpro:

-- Method 1: CREATE FOREIGN TABLE

CREATE FOREIGN TABLE dlf_oss_test_ext (

id text,

pt text

)

SERVER <server_name>

OPTIONS (

schema_name 'dlfpro',

table_name 'dlf_oss_test'

);

-- Method 2: IMPORT FOREIGN SCHEMA (single table, update if exists)

IMPORT FOREIGN SCHEMA dlfpro LIMIT TO (

dlf_oss_test

)

FROM SERVER <server_name> INTO public OPTIONS (if_table_exist 'update');Import all tables from the dlfpro metadatabase into the public schema:

-- Import the entire database.

IMPORT FOREIGN SCHEMA dlfpro

FROM SERVER <server_name> INTO public OPTIONS (if_table_exist 'update');

-- Import specific tables only.

IMPORT FOREIGN SCHEMA dlfpro (

table1,

table2,

tablen

)

FROM SERVER <server_name> INTO public OPTIONS (if_table_exist 'update');Step 6: Query data

After creating the foreign tables, query them with standard SQL. Hologres loads the relevant partitions into memory at query time.

-- Non-partitioned table

SELECT * FROM dlf_oss_test;

-- Partitioned table (add a partition filter to stay within the 512-partition limit)

SELECT * FROM partition_table WHERE dt = '2013';Troubleshooting

Error: `ERROR: babysitter not ready,req:name:"HiveAccess"`

The DLF_FDW backend configuration has not been enabled. In the Hologres console, go to the Instances page, click Data Lake Acceleration, and enable the backend configuration.

What's next

-

To import OSS data into a Hologres internal table for better query performance, see Import data from a data lake using SQL.

-

To write data from a Hologres internal table back to an OSS data lake, see Export to a data lake.

-

For an end-to-end walkthrough, see Accelerate data lake queries.