Starting from V1.3.26, Hologres can read data from OSS-HDFS and write results back to it. When your extract, transform, and load (ETL) pipelines produce data in OSS-HDFS, you can query that data in place from Hologres and write results back without moving data out of the data lake. Hologres uses Data Lake Formation (DLF) for metadata management and JindoSDK for direct data access and write-back.

Write-back is supported for ORC, Parquet, CSV, and SequenceFile formats only.

How it works

The integration uses the dlf_fdw foreign data wrapper. Hologres queries DLF for table metadata and uses JindoSDK to access the underlying OSS-HDFS storage.

OSS-HDFS (also known as JindoFS) is a cloud-native data lake storage service. Compared with native OSS, OSS-HDFS integrates directly with Hive, Spark, and other Hadoop ecosystem compute engines.

Access modes

Before you configure a foreign server, determine which endpoint type applies to your scenario. To access multiple OSS-HDFS environments at the same time, create a separate foreign server for each environment.

| Scenario | Endpoint type | Notes |
|---|---|---|
| OSS-HDFS (same region) | Private endpoint | OSS-HDFS supports private network access only. Cross-region access is not supported. |
| OSS-HDFS + DLF (cross-region) | Public endpoint for DLF | Public endpoints incur network fees. See Billing overview. |
| Native OSS (same region) | Private endpoint | Recommended for better performance. Create a separate foreign server with the OSS private endpoint. |
| Native OSS (cross-region) | Public endpoint | Create a separate foreign server with the OSS public endpoint. |
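As an illustration of the one-server-per-environment rule, the sketch below defines one foreign server for an OSS-HDFS bucket and a second one for native OSS in the same region. The server names `dlf_hdfs_server` and `dlf_oss_server`, the region `cn-hangzhou`, and the bucket name `examplebucket` are hypothetical. The native OSS private endpoint hostname `oss-cn-hangzhou-internal.aliyuncs.com` is an assumption, so check it against the OSS Regions and endpoints page before you use it.

```sql
-- Sketch only: server names, region, and bucket name are hypothetical placeholders.
CREATE EXTENSION IF NOT EXISTS dlf_fdw;

-- Foreign server for OSS-HDFS (same region, private network access only)
CREATE SERVER IF NOT EXISTS dlf_hdfs_server FOREIGN DATA WRAPPER dlf_fdw OPTIONS (
    dlf_region 'cn-hangzhou',
    dlf_endpoint 'dlf-share.cn-hangzhou.aliyuncs.com',
    oss_endpoint 'examplebucket.cn-hangzhou.oss-dls.aliyuncs.com'
);

-- Separate foreign server for native OSS in the same region
-- (assumed OSS private endpoint format; verify it for your region)
CREATE SERVER IF NOT EXISTS dlf_oss_server FOREIGN DATA WRAPPER dlf_fdw OPTIONS (
    dlf_region 'cn-hangzhou',
    dlf_endpoint 'dlf-share.cn-hangzhou.aliyuncs.com',
    oss_endpoint 'oss-cn-hangzhou-internal.aliyuncs.com'
);
```

Foreign tables then pick an environment through their `SERVER` clause, so tables backed by OSS-HDFS and by native OSS can coexist in one Hologres database.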

Prerequisites

Before you begin, ensure that you have:

- Activated DLF. See Quick start, and see Regions and endpoints for the supported regions.
- Activated OSS-HDFS and loaded your data into it. See Activate the OSS-HDFS service.
- Enabled the data lake acceleration feature for your Hologres instance. See Environment configuration.

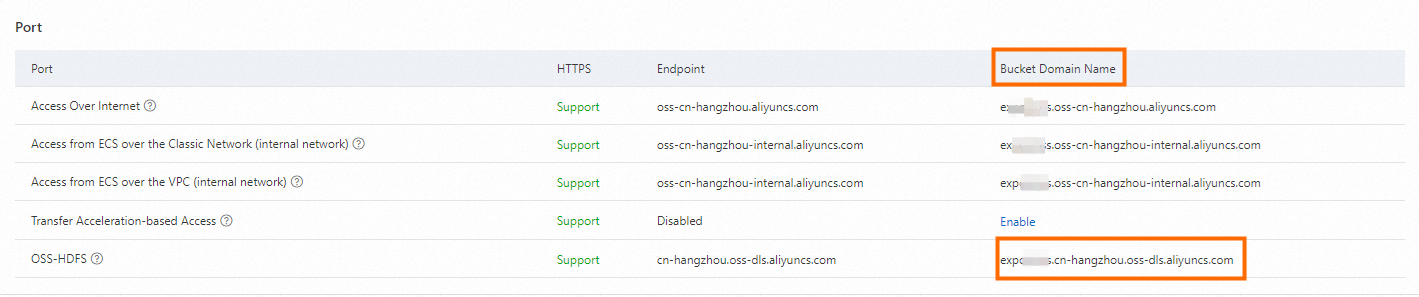

Step 1: Find the OSS-HDFS bucket endpoint

Open the OSS console, go to your bucket's Overview page, and copy the OSS-HDFS service domain name. It has the format `<bucket_name>.cn-<region>.oss-dls.aliyuncs.com`. You use this value as oss_endpoint in the next step.

Step 2: Create a foreign server

Enable the dlf_fdw extension and create a foreign server that connects Hologres to DLF and OSS-HDFS.

```sql
CREATE EXTENSION IF NOT EXISTS dlf_fdw;

CREATE SERVER IF NOT EXISTS <servername> FOREIGN DATA WRAPPER dlf_fdw OPTIONS (
    dlf_region 'cn-<region>',
    dlf_endpoint 'dlf-share.cn-<region>.aliyuncs.com',
    oss_endpoint '<bucket_name>.cn-<region>.oss-dls.aliyuncs.com' -- OSS-HDFS bucket domain name from Step 1
);
```

| Parameter | Description | Example |
|---|---|---|
| servername | A name you assign to this foreign server. | dlf_server |
| dlf_region | The region where DLF is deployed. See Regions and endpoints. | cn-beijing |
| dlf_endpoint | The DLF service endpoint. Use the private endpoint for better performance. For cross-region access, use a public endpoint (network fees apply). | dlf-share.cn-beijing.aliyuncs.com |
| oss_endpoint | The OSS-HDFS endpoint from Step 1. For native OSS, use the OSS private or public endpoint instead. | bucket_nametest.cn-hangzhou.oss-dls.aliyuncs.com |
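To confirm which foreign servers exist and which options each one was created with, you can query the catalog. This sketch assumes that Hologres exposes the standard PostgreSQL catalogs `pg_foreign_server` and `pg_foreign_data_wrapper`. It lists every server that uses the dlf_fdw wrapper, which is useful when you maintain one server per environment.

```sql
-- List all foreign servers built on dlf_fdw together with their options
-- (assumes the standard PostgreSQL catalogs are available in Hologres)
SELECT s.srvname, s.srvoptions
FROM pg_foreign_server AS s
JOIN pg_foreign_data_wrapper AS w ON w.oid = s.srvfdw
WHERE w.fdwname = 'dlf_fdw'
ORDER BY s.srvname;
```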

Step 3: Create foreign tables

Map DLF metadata tables to Hologres foreign tables using one of the following methods.

Create a single table

Use CREATE FOREIGN TABLE to map one table, or IMPORT FOREIGN SCHEMA LIMIT TO for a targeted import.

```sql
-- Option A: Create the foreign table directly
CREATE FOREIGN TABLE dlf_oss_test
(
    id text,
    pt text
)
SERVER dlf_server
OPTIONS
(
    schema_name 'dlfpro',
    table_name 'dlf_oss_test'
);

-- Option B: Import a single table from a DLF metadatabase
IMPORT FOREIGN SCHEMA dlfpro LIMIT TO
(
    dlf_oss_test
)
FROM SERVER dlf_server INTO public OPTIONS (if_table_exist 'update');
```

Import tables in bulk

Map all tables from a DLF metadatabase, or a selected subset, to a Hologres schema in one operation.

```sql
-- Import an entire DLF metadatabase
IMPORT FOREIGN SCHEMA dlfpro
FROM SERVER dlf_server INTO public OPTIONS (if_table_exist 'update');

-- Import selected tables
IMPORT FOREIGN SCHEMA dlfpro
(
    table1,
    table2,
    tablen
)
FROM SERVER dlf_server INTO public OPTIONS (if_table_exist 'update');
```

After the import, the foreign tables appear in the target Hologres schema (public in these examples) and under the corresponding schema folder in HoloWeb.
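To check the result of an import from SQL instead of HoloWeb, you can list the foreign tables bound to the server. This is a sketch that assumes Hologres provides the standard PostgreSQL view `information_schema.foreign_tables`. In PostgreSQL, that view shows only the foreign tables the current user owns or has privileges on.

```sql
-- List foreign tables mapped through dlf_server
-- (information_schema.foreign_tables is standard PostgreSQL; assumed available in Hologres)
SELECT foreign_table_schema, foreign_table_name
FROM information_schema.foreign_tables
WHERE foreign_server_name = 'dlf_server'
ORDER BY foreign_table_schema, foreign_table_name;
```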

Step 4: Query data

Query foreign tables the same way you query native Hologres tables.

```sql
-- Query a non-partitioned table
SELECT * FROM dlf_oss_test;

-- Query a partitioned table
SELECT * FROM <partition_table> WHERE dt = '2013';
```

To write data back, run INSERT INTO against a foreign table whose data is stored in ORC, Parquet, CSV, or SequenceFile format.
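A minimal write-back sketch follows. It assumes that `dlf_oss_test` from Step 3 is stored in one of the supported formats (ORC, Parquet, CSV, or SequenceFile). `holo_source_table` is a hypothetical Hologres internal table with matching `id` and `pt` columns, and the literal values are placeholders.

```sql
-- Sketch: dlf_oss_test is assumed to use a supported write-back format;
-- holo_source_table is a hypothetical Hologres internal table.

-- Write a single row back to OSS-HDFS
INSERT INTO dlf_oss_test (id, pt) VALUES ('1001', '20240101');

-- Write query results from Hologres back to OSS-HDFS
INSERT INTO dlf_oss_test (id, pt)
SELECT id, pt
FROM holo_source_table
WHERE pt = '20240101';

-- Read the rows back through the same foreign table
SELECT * FROM dlf_oss_test WHERE pt = '20240101';
```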

What's next

- For the full procedure, including environment setup, see Accelerate access to OSS data lakes using DLF.
- For OSS-HDFS service details, see What is the OSS-HDFS service?