Serverless Application Center provides customizable pipeline execution. You can configure pipelines and orchestrate task flows to publish code to Function Compute. This topic describes how to manage pipelines in the console, including pipeline configuration, pipeline detail settings, and pipeline execution history.

Background information

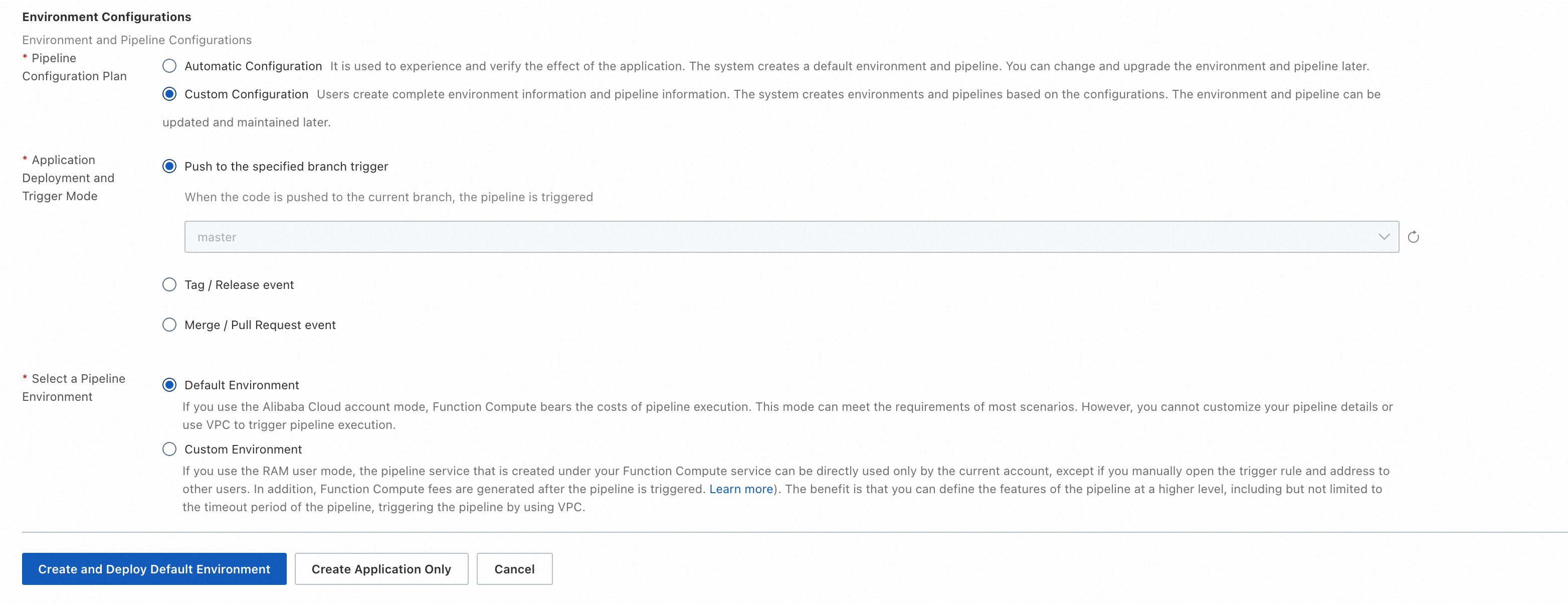

When you create an application, the platform creates a default environment. For this environment, you can specify the Git event that triggers the pipeline and configure the pipeline. You can choose between automatic and custom configuration. If you choose automatic configuration, the platform creates a pipeline using the default values for each configuration item. If you choose custom configuration, you can specify the Git event trigger method for the environment's pipeline and select the pipeline execution environment. Information such as Git and application details is passed to the pipeline as the execution context.

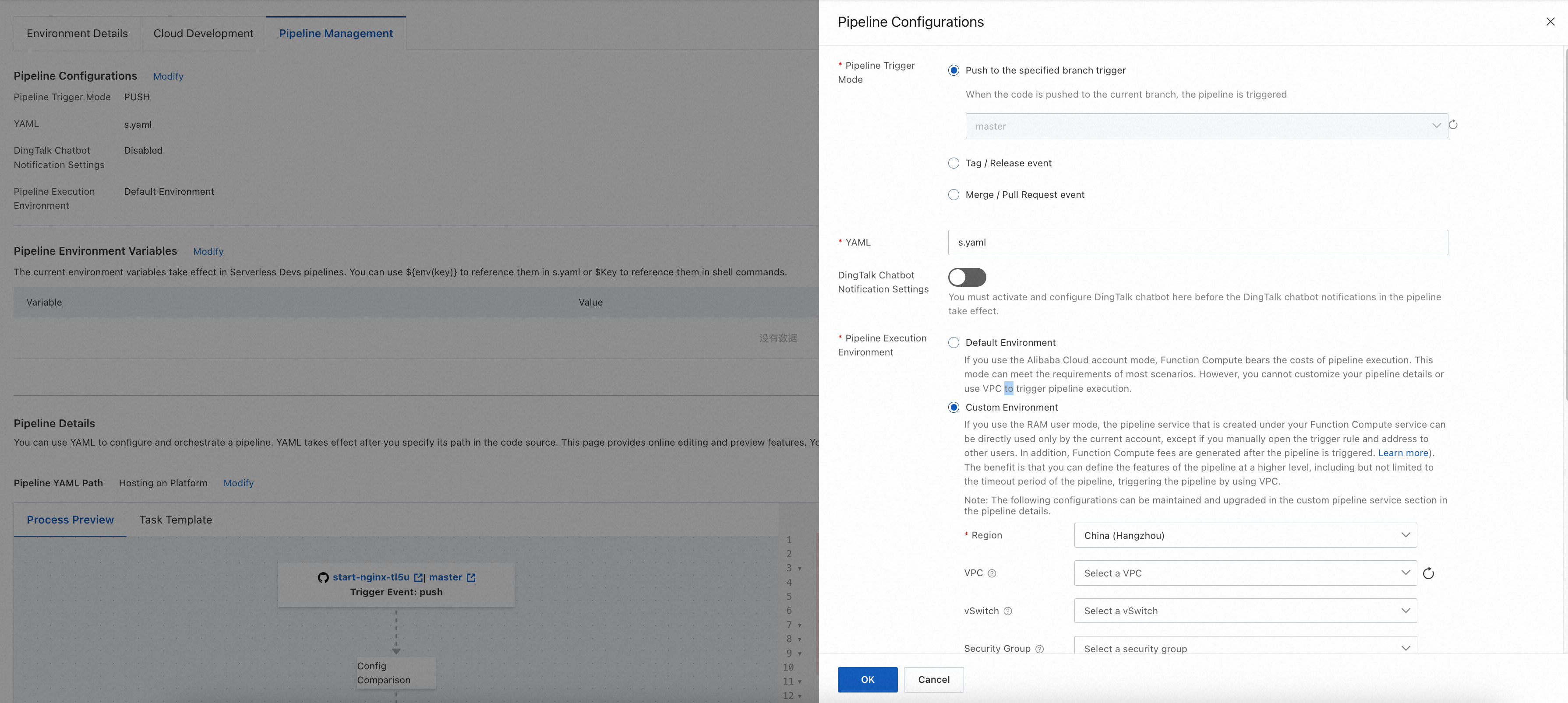

When you edit pipeline configurations, you can also configure DingTalk notifications and the resource description YAML file, in addition to the trigger method and execution environment.

Configure a pipeline when you create an application or environment

When you create an application or environment, you can specify the pipeline's Git trigger method and execution environment.

Edit a pipeline in an environment

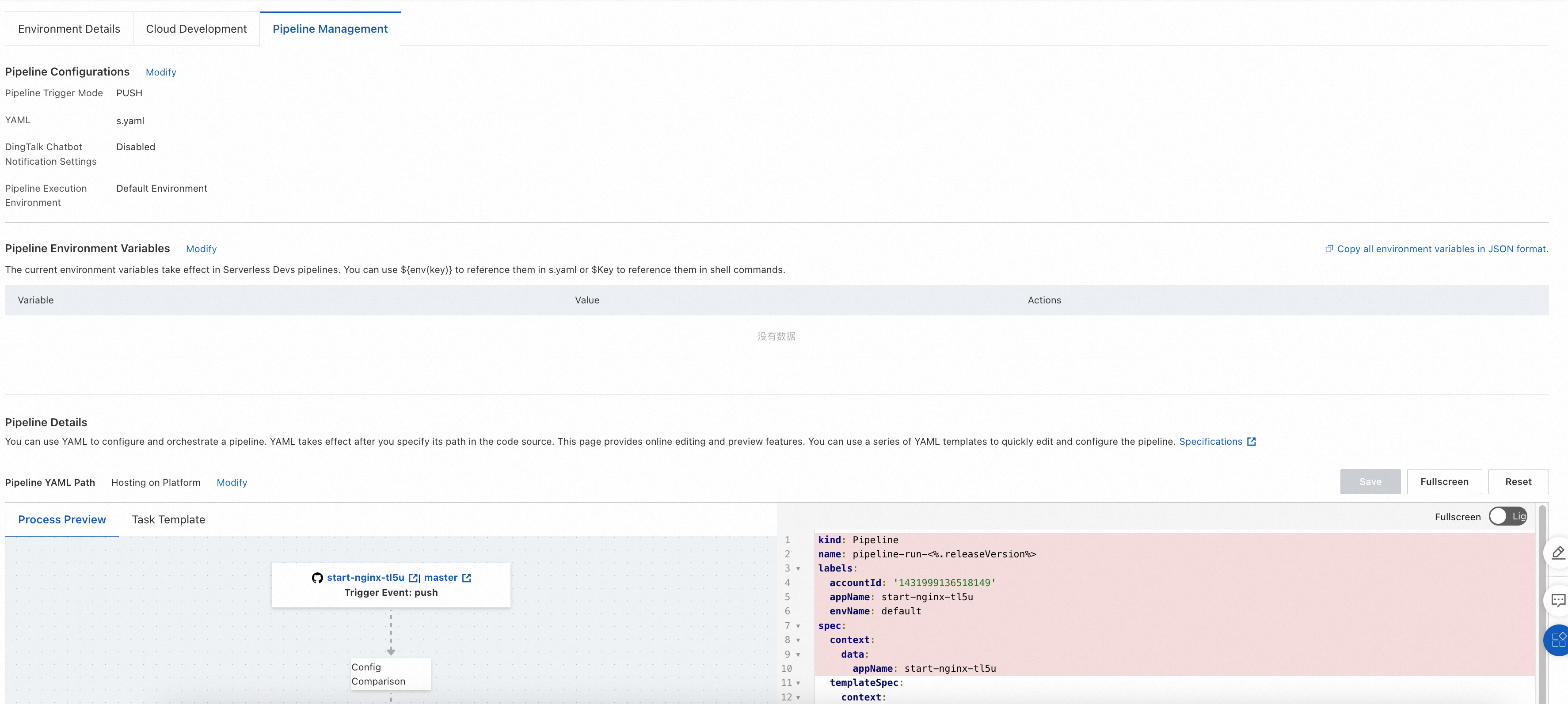

For an existing environment, you can edit the pipeline's Git trigger method, execution environment, DingTalk notifications, and resource description YAML file on the Pipeline management tab.

Pipeline configuration

Pipeline configuration includes four main items: trigger method, execution environment, resource description YAML, and DingTalk robot notification. You can configure the trigger method and execution environment when you create an application or environment. After creation, you can configure all four items by clicking the Edit button.

Pipeline trigger method

Application Center lets you customize the Git events that trigger pipelines. Application Center uses webhooks to receive Git events. When it receives an event that matches the trigger rules, it creates and runs a pipeline based on your configured pipeline YAML file. The supported trigger methods are:

Branch trigger: The environment must be associated with a specified branch. Matches all Push events for the specified branch.

Tag trigger: Matches all Tag creation events for the specified tag expression.

Branch merge trigger: Matches Merge or Pull Request events from a specified source branch to the target branch associated with the environment.
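The three trigger rules above can be sketched as a small matching function. This is only an illustrative model, not the platform's implementation: the `GitEvent` and `TriggerRule` types and their field names are hypothetical, and the tag expression is assumed here to be a shell-style glob, which the source does not specify.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional


@dataclass
class GitEvent:
    """Hypothetical, simplified view of a webhook event."""
    kind: str                            # "push", "tag", or "merge"
    branch: Optional[str] = None         # pushed branch, or merge target branch
    tag: Optional[str] = None            # tag name for tag creation events
    source_branch: Optional[str] = None  # source branch of a merge / pull request


@dataclass
class TriggerRule:
    """Hypothetical trigger configuration for one environment."""
    method: str                          # "branch", "tag", or "merge"
    branch: Optional[str] = None         # branch associated with the environment
    tag_pattern: Optional[str] = None    # tag expression (assumed to be a glob)
    source_branch: Optional[str] = None  # required source branch for merges


def matches(event: GitEvent, rule: TriggerRule) -> bool:
    """Return True if the event should start a pipeline under the rule."""
    if rule.method == "branch":
        # Every push to the environment's branch matches.
        return event.kind == "push" and event.branch == rule.branch
    if rule.method == "tag":
        # Tag creation events match when the tag fits the expression.
        return (
            event.kind == "tag"
            and event.tag is not None
            and rule.tag_pattern is not None
            and fnmatchcase(event.tag, rule.tag_pattern)
        )
    if rule.method == "merge":
        # Merge events match only for the configured source -> target pair.
        return (
            event.kind == "merge"
            and event.source_branch == rule.source_branch
            and event.branch == rule.branch
        )
    return False
```

For example, under these assumptions a push to `main` matches a branch rule for `main`, and a tag named `v1.2.0` matches a tag rule with the pattern `v*`.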

Pipeline execution environment

The Pipeline Execution Environment has two modes: Default Environment and Custom Environment. The Custom Environment is also referred to as the dedicated execution environment.

Default execution environment

In the Default Environment, the platform fully manages the pipeline resources. Alibaba Cloud Function Compute covers the costs of pipeline execution, so you do not incur any fees. Each pipeline task runs in an independent sandboxed container. The platform ensures isolation of your pipeline execution environment. The default execution environment has the following limits:

Instance resource specifications: 4 vCPU, 8 GB memory.

Temporary disk space: 10 GB.

Task execution timeout: 15 minutes.

Region limits: Pipelines for direct template deployment and GitHub code sources run in the Singapore region, which is outside China. Pipelines for Gitee, public GitLab, and Codeup run in the China (Hangzhou) region.

Network limits: Fixed IP addresses and CIDR blocks are not supported. Accessing specified websites using an IP address whitelist is not supported. Accessing resources within your VPC is not supported.
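The limits above can be read as a checklist for deciding whether a build fits the default environment. The following sketch encodes them as constants; the `BuildNeeds` type and the function name are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import List

# Limits of the default execution environment, as listed above.
MAX_VCPU = 4
MAX_MEMORY_GB = 8
MAX_DISK_GB = 10
MAX_TIMEOUT_MINUTES = 15


@dataclass
class BuildNeeds:
    """Hypothetical description of what a pipeline task requires."""
    vcpu: float
    memory_gb: float
    disk_gb: float
    timeout_minutes: float
    needs_fixed_ip: bool = False
    needs_vpc_access: bool = False


def default_environment_blockers(needs: BuildNeeds) -> List[str]:
    """List the reasons a task cannot run in the default environment."""
    blockers = []
    if needs.vcpu > MAX_VCPU:
        blockers.append("needs more than 4 vCPU")
    if needs.memory_gb > MAX_MEMORY_GB:
        blockers.append("needs more than 8 GB memory")
    if needs.disk_gb > MAX_DISK_GB:
        blockers.append("needs more than 10 GB temporary disk")
    if needs.timeout_minutes > MAX_TIMEOUT_MINUTES:
        blockers.append("runs longer than 15 minutes")
    if needs.needs_fixed_ip:
        blockers.append("fixed IP addresses are not supported")
    if needs.needs_vpc_access:
        blockers.append("VPC resources are not reachable")
    return blockers
```

An empty result suggests the default environment is sufficient. Any entry in the list is a reason to consider the custom environment instead.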

Dedicated execution environment

The Custom Environment runs pipeline tasks in your account and provides more customization options. Based on your authorization, Application Center fully manages the tasks in the dedicated execution environment and schedules Function Compute instances in your account to run the pipelines in real time. Like the default execution environment, the dedicated execution environment is fully serverless. You do not need to manage the underlying infrastructure.

The Custom Environment provides the following customization options:

Region and network: You can specify the region and VPC for the execution environment to enable scenarios such as accessing private network code repositories, artifact repositories, image repositories, and private Maven servers. For more information about supported regions, see Regions.

Instance resource specifications: You can specify the CPU and memory specifications for the execution environment. For example, specify a larger instance to speed up builds.

Note: The ratio of vCPUs to memory in GB must be between 1:1 and 1:4.

Persistent storage: You can specify NAS and OSS mount configurations. For example, mount a NAS file system for file caching to accelerate builds.

Logs: You can specify an SLS project and Logstore for the persistence of pipeline execution logs.

Timeout: You can customize the execution timeout for pipeline tasks. The default is 600 seconds, and the maximum is 86,400 seconds.

The dedicated execution environment runs pipeline tasks in your own Function Compute account, which incurs related fees. For more information, see Billing overview.
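The numeric constraints on the custom environment (the vCPU-to-memory ratio and the timeout bounds) can be checked before saving a configuration. The sketch below is a minimal illustration. The function name is hypothetical, and it assumes both ends of the 1:1 to 1:4 ratio range are allowed.

```python
from typing import List

DEFAULT_TIMEOUT_SECONDS = 600   # default stated above
MAX_TIMEOUT_SECONDS = 86_400    # maximum stated above


def validate_custom_environment(
    vcpu: float,
    memory_gb: float,
    timeout_seconds: int = DEFAULT_TIMEOUT_SECONDS,
) -> List[str]:
    """Return a list of problems; an empty list means the values look valid."""
    errors = []
    if vcpu <= 0 or memory_gb <= 0:
        errors.append("vCPU and memory must be positive")
    else:
        # A vCPU:memory ratio between 1:1 and 1:4 means 1 to 4 GB per vCPU.
        gb_per_vcpu = memory_gb / vcpu
        if not 1 <= gb_per_vcpu <= 4:
            errors.append("vCPU-to-memory ratio must be between 1:1 and 1:4")
    if not 0 < timeout_seconds <= MAX_TIMEOUT_SECONDS:
        errors.append("timeout must be between 1 and 86400 seconds")
    return errors
```

For instance, 2 vCPU with 4 GB passes, while 2 vCPU with 16 GB is rejected because it works out to 8 GB per vCPU.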

YAML

Serverless Application Center is deeply integrated with the Serverless Devs developer tool. You can use the Serverless Devs resource description file to declare the resource configuration of your application. The default resource description file is named s.yaml, but you can specify a different file name. After you specify a resource description file, you can use it in the pipeline in two ways:

When you use the `@serverless-cd/s-deploy` deployment plugin, the plugin automatically uses the specified resource description file for deployment. It does this by adding the `-t`/`--template` option to the Serverless Devs command. In the following example, the specified resource description YAML file is `demo.yaml`, so the command executed by the plugin is `s deploy -t demo.yaml`.

    - name: deploy
      context:
        data:
          deployFile: demo.yaml
      steps:
        - plugin: '@serverless-cd/s-setup'
        - plugin: '@serverless-cd/checkout'
        - plugin: '@serverless-cd/s-deploy'
      taskTemplate: serverless-runner-task

When you use a script for execution, you can reference the specific resource description file name with `${{ ctx.data.deployFile }}`. For example, the following sample code runs the `s plan` command with the specified file if a resource description file is provided. Otherwise, it runs the `s plan` command with the default `s.yaml` file.

    - name: pre-check
      context:
        data:
      steps:
        - run: s plan -t ${{ ctx.data.deployFile || s.yaml }}
        - run: echo "s plan finished."
      taskTemplate: serverless-runner-task

DingTalk Chatbot Notification Settings

After you enable this configuration, configure the DingTalk robot's Webhook address, signature key, notification rules, and custom messages. You can centrally manage the tasks that require notifications here, or you can enable notifications for each task separately through the pipeline YAML file. After you complete the overall notification configuration here, you can further refine the notification settings for each task in the Pipeline Details.
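To show what the signature key is for, here is a minimal sketch of how a signed DingTalk robot request URL is typically built. It assumes DingTalk's signing scheme for custom robots: HMAC-SHA256 over the timestamp and secret joined by a newline, then Base64 and URL encoding. Application Center handles notifications itself, so you do not need to run this. The function names and sample values are hypothetical, and the webhook address is assumed to already contain its `access_token` query parameter.

```python
import base64
import hashlib
import hmac
import urllib.parse


def dingtalk_sign(secret: str, timestamp_ms: int) -> str:
    """Compute the URL-encoded signature for a given millisecond timestamp."""
    string_to_sign = f"{timestamp_ms}\n{secret}"
    digest = hmac.new(
        secret.encode("utf-8"),
        string_to_sign.encode("utf-8"),
        hashlib.sha256,
    ).digest()
    # Base64-encode the raw digest, then percent-encode it for use in a URL.
    return urllib.parse.quote_plus(base64.b64encode(digest))


def signed_webhook_url(webhook: str, secret: str, timestamp_ms: int) -> str:
    """Append the timestamp and signature to a webhook address."""
    sign = dingtalk_sign(secret, timestamp_ms)
    return f"{webhook}&timestamp={timestamp_ms}&sign={sign}"
```

Because the signature depends on the timestamp, a leaked request URL cannot be replayed indefinitely, which is why the robot asks for a signature key in addition to the webhook address.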

Pipeline environment variables

In the Pipeline Environment Variables section, click Modify, choose a method to configure the environment, complete the configuration as described below, and then click Deploy.

Edit using the form (default)

Click +Add Variable.

Configure the key-value pair for the environment variable:

Variable: Custom.

Value: Custom.

Edit using JSON format

Click Edit in JSON Format.

In the text box, enter the key-value pair in JSON format as follows.

{ "key": "value" }

The following is an example.

{ "REGION": "MY_REGION", "LOG_PROJECT": "MY_LOG_PROJECT" }

The environment variables take effect in the Serverless Devs pipeline. You can reference them in `s.yaml` using `${env(key)}` or in shell commands using `$key`, where `key` is the variable name. The following is an example.

    vars:
      region: ${env(REGION)}
      service:
        name: demo-service-${env(prefix)}
        internetAccess: true
        logConfig:
          project: ${env(LOG_PROJECT)}
          logstore: fc-console-function-pre
        vpcConfig:
          securityGroupId: ${env(SG_ID)}
          vswitchIds:
            - ${env(VSWITCH_ID)}
          vpcId: ${env(VPC_ID)}

Pipeline details

In the Pipeline Details section, you can configure the pipeline flow and specify detailed settings for tasks and their dependencies within the pipeline. The platform automatically generates a default pipeline flow, which you can edit.

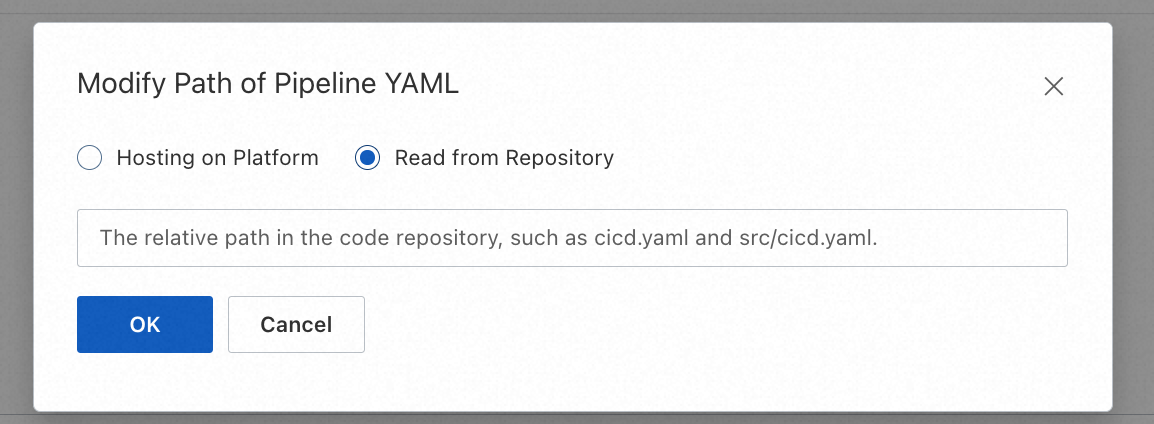

Pipelines are managed using a YAML file. Two configuration methods are available: Hosting on Platform and Read from Repository.

Hosting on Platform does not support predefined YAML variables. For more information, see Use a YAML file to describe a pipeline.

Read from Repository supports predefined variables in YAML files.

Platform-hosted

By default, the pipeline YAML file is hosted by the platform. This means the configuration is centrally managed on the platform and takes effect on the next deployment after an update.

Repository read

The pipeline's YAML description file is stored in a remote Git repository. After you edit and save it in the console, the platform commits the changes to your Git repository in real time. This commit does not trigger a pipeline execution. When an event in your code repository triggers a pipeline, the platform uses the pipeline YAML file from your specified Git repository to create and run the pipeline.

You can select Read from Repository above the Pipeline Details section and enter the name of the YAML file, as shown in the following figure.

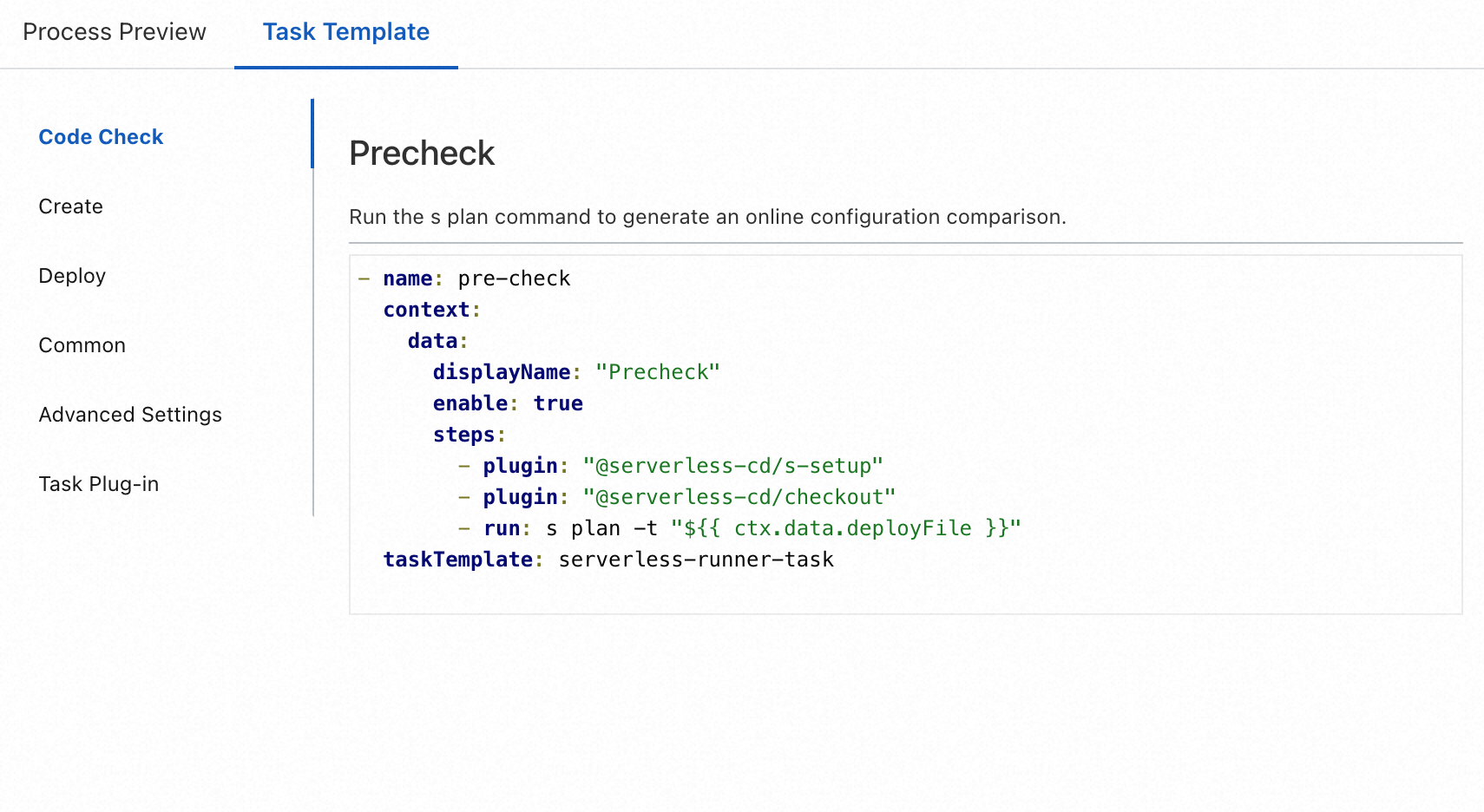

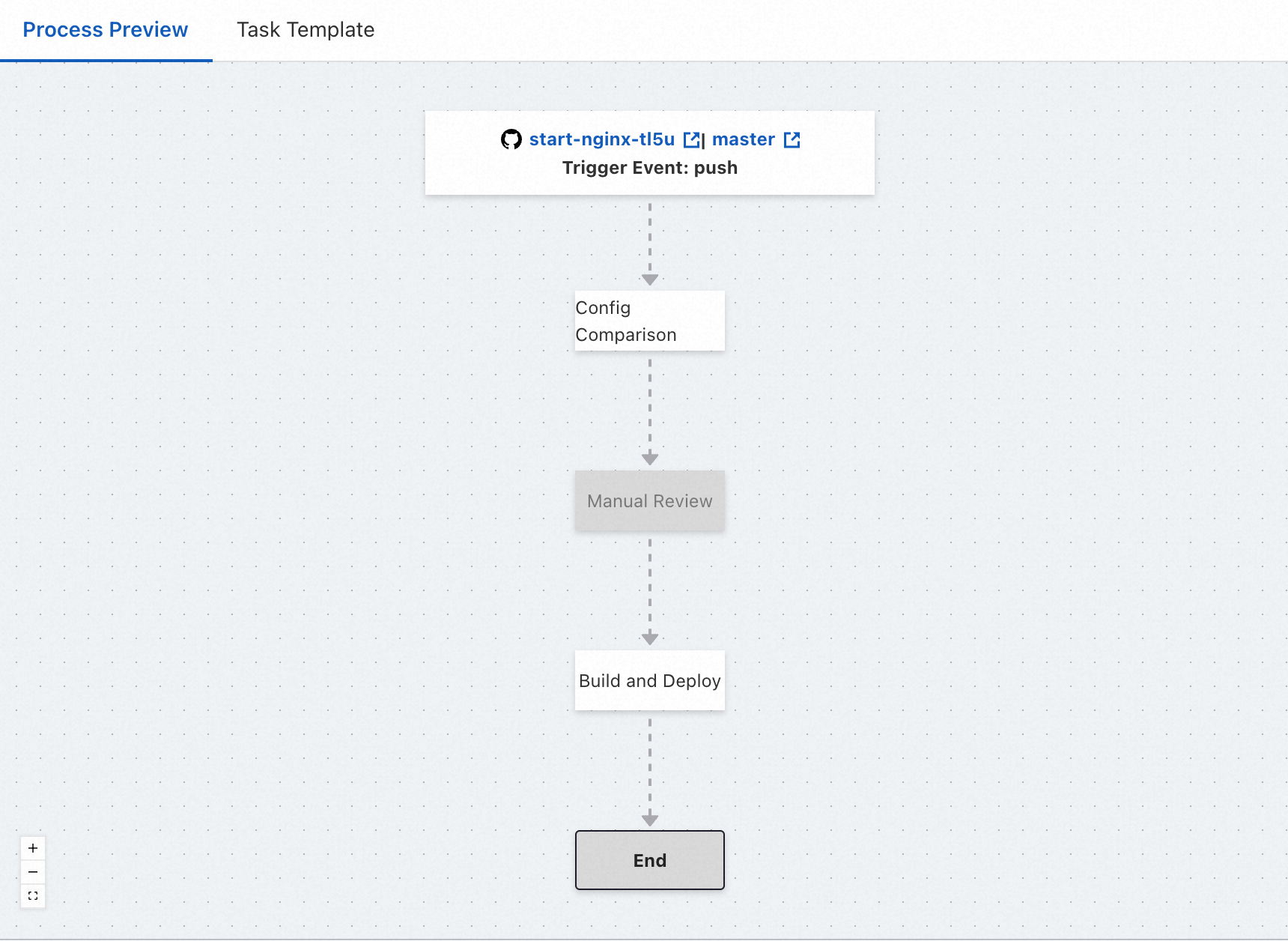

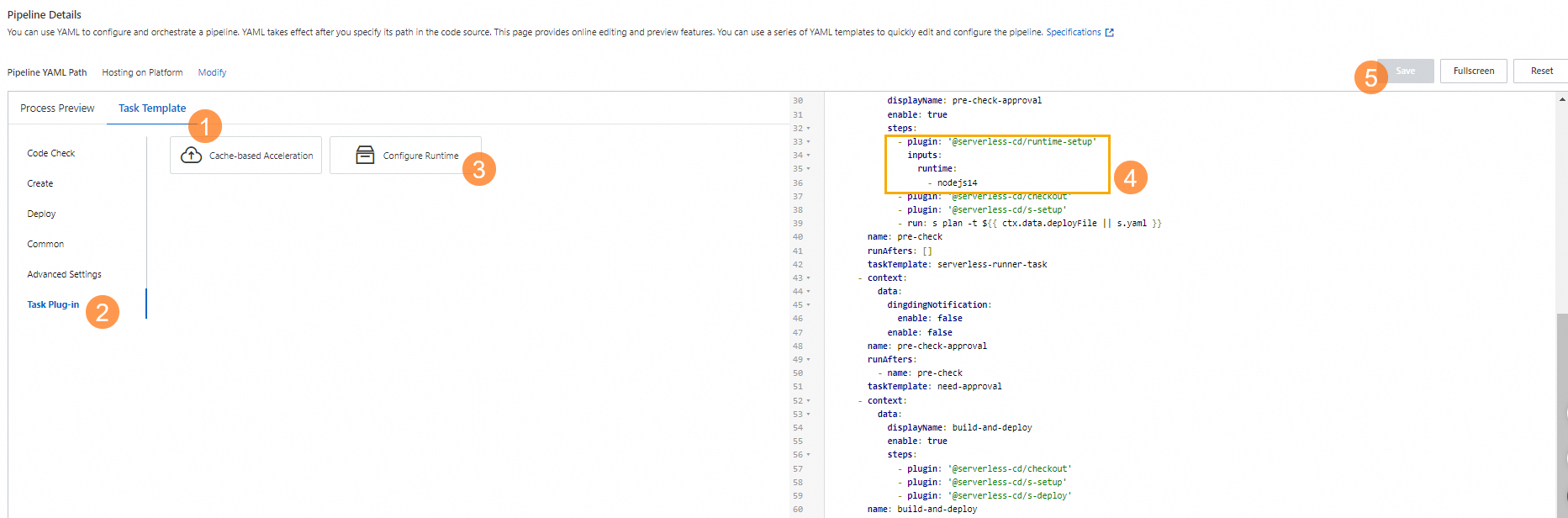

The main part of the Pipeline Details section consists of the supporting tools area on the left and the YAML editing area on the right:

YAML editing area: You can directly edit the pipeline YAML file to modify the pipeline flow. For more information, see Use a YAML file to describe a pipeline.

Supporting tools area: Provides tools to assist with YAML editing, including the following:

Process Preview: Provides a visual preview and simple editing capabilities for the pipeline flow.

Task Template: Provides a series of YAML templates for common tasks.

In the upper-right corner of the section are three buttons: Save, Full screen mode, and Reset:

The Save button saves the changes made to the YAML file on the page and syncs them to the pipeline YAML file.

The Full screen mode button expands the main editing area to full screen.

The Reset button cancels all changes made since the last save and reverts to the initial state.

If you choose to reset, all changes made after the last save will be lost. Use this option with caution and make backups.

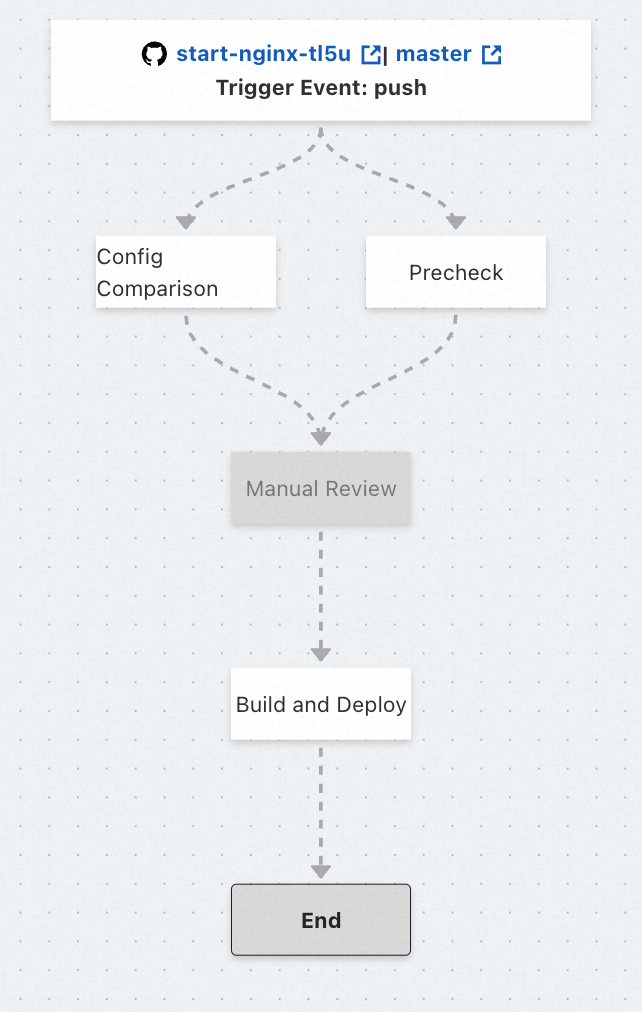

Flow preview

The Process Preview area provides a visual preview of the pipeline flow. It supports simple editing of basic task content and dependencies, and lets you quickly create and add tasks from templates. The flowchart nodes are divided into three main types: the starting code source and trigger method node, the end node, and task nodes. The nodes are connected by lines that represent dependencies. When you move the mouse over a task node, the Create task and Delete task buttons appear, allowing you to easily add or remove tasks.

Code source and trigger method node (start node)

This node displays the current pipeline's code source information and Trigger Mode. It is for display only and cannot be edited. To modify the pipeline trigger method, go to the Pipeline Configurations section.

Important: To modify the code repository, do so on the application details page. For more information, see Manage applications. Modifying the code repository will invalidate all pipelines. Proceed with caution.

End node

Marks the end of the pipeline flow, where all tasks in the pipeline are complete. This node is read-only and has no practical meaning.

Task node

Displays and maintains basic information for a specified task. By default, the task node displays the task name. Clicking the node opens a pop-up box where you can view and edit basic information such as the Task Name, the Pre-task (the tasks it depends on), and the Start Task setting, which controls whether the task is enabled. A task that is not enabled is skipped automatically during execution and appears grayed out.

Dependency

A one-way arrow that reflects the relationship between tasks. If an arrow points from Task A to Task B, it means A is a preceding task for B, and B depends on A. Each task can depend on multiple tasks and be a dependency for multiple tasks.

You can change dependencies by editing the Pre-task of the dependent task. For example, to remove B's dependency on A, click the task node for B and remove A from its preceding tasks.
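The dependency arrows form a directed graph, and a valid execution order is any topological order of it. The sketch below models each task's Pre-task list as a set of predecessors and uses Python's standard `graphlib` module (Python 3.9+). The task names and helper functions are hypothetical, and the platform's actual scheduler may behave differently.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Task name -> set of preceding tasks (the task's Pre-task field).
pre_tasks: Dict[str, Set[str]] = {
    "A": set(),
    "B": {"A"},        # arrow A -> B: B depends on A
    "C": {"A"},
    "D": {"B", "C"},   # a task can depend on several tasks
}


def execution_order(graph: Dict[str, Set[str]]) -> List[str]:
    """Return one order in which every task runs after its preceding tasks."""
    return list(TopologicalSorter(graph).static_order())


def remove_dependency(
    graph: Dict[str, Set[str]], task: str, preceding: str
) -> Dict[str, Set[str]]:
    """Return a copy of the graph in which `task` no longer depends on `preceding`."""
    updated = {name: set(deps) for name, deps in graph.items()}
    updated[task].discard(preceding)
    return updated
```

Calling `remove_dependency(pre_tasks, "B", "A")` mirrors the console action of removing A from B's preceding tasks. If a set of dependencies contained a cycle, `static_order()` would raise `graphlib.CycleError`.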

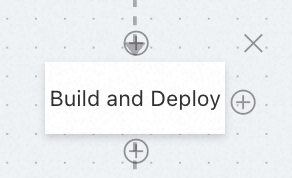

Create task

This button is represented by a '+' icon and appears above, below, and to the right of a task node. Clicking the Create task button above Task A creates a preceding task B, on which A depends. Clicking the Create task button below Task A creates a subsequent task C, which depends on A. Clicking the Create task button to the right of Task A creates a sibling task D, which has the same dependencies as A.
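The effect of the three Create task positions on the dependency graph can be sketched as follows. The graph maps each task to the set of tasks it depends on. The function names are hypothetical, and the sketch assumes that a task created above A starts with no dependencies of its own, which the description above does not state.

```python
from typing import Dict, Set

Graph = Dict[str, Set[str]]  # task name -> names of its preceding tasks


def create_above(graph: Graph, task: str, new_task: str) -> None:
    """Add `new_task` as a preceding task: `task` now depends on it."""
    graph[new_task] = set()  # assumption: the new task has no dependencies
    graph[task].add(new_task)


def create_below(graph: Graph, task: str, new_task: str) -> None:
    """Add `new_task` as a subsequent task that depends on `task`."""
    graph[new_task] = {task}


def create_right(graph: Graph, task: str, new_task: str) -> None:
    """Add `new_task` as a sibling with the same dependencies as `task`."""
    graph[new_task] = set(graph[task])  # copy, so later edits stay independent
```

Starting from a graph where A depends on X, `create_above(graph, "A", "B")` makes A depend on both X and B, `create_below(graph, "A", "C")` makes C depend on A, and `create_right(graph, "A", "D")` gives D the same preceding tasks that A has at that moment.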

Delete task

This button is represented by a '×' icon in the upper-right corner of the task node and is used to delete the selected task. The platform will ask for confirmation to prevent accidental deletion.

Task templates

Task Template provides a series of YAML templates for common pipeline tasks, covering four types of tasks: Code Check, Create, Deploy, and Common. It also provides YAML templates for Advanced Settings within tasks and for Task Plug-in.

You can select the template you need from the template list. Click a template to view its detailed introduction and YAML content, then copy and paste the YAML content into the corresponding position in your pipeline YAML file.

Default pipeline flow

The default pipeline flow includes three tasks that run sequentially: Config Comparison, Manual Review, and Build and Deploy. The Manual Review task is disabled by default and must be enabled manually.

Config Comparison

Determines whether the resource description file involved in the pipeline is consistent with the online configuration, helping to detect unexpected configuration changes in advance.

Manual Review

To ensure the secure and stable release of your application, you can enable a manual review mechanism at this stage. When the pipeline reaches this point, it will be blocked and wait for manual confirmation. The pipeline continues only after the review is approved. Otherwise, the current pipeline is stopped. This task is disabled by default and must be enabled manually.

Build and Deploy

Builds the application and deploys it to the cloud. By default, a full deployment is performed.
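The behavior of the default flow, including the skipped Manual Review task, can be illustrated with a small simulation. This is a sketch only: the `Task` type, the `run_flow` function, and the log messages are hypothetical, and the review decision is modeled as a simple callback.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Task:
    name: str
    enabled: bool = True


DEFAULT_FLOW = [
    Task("Config Comparison"),
    Task("Manual Review", enabled=False),  # disabled by default
    Task("Build and Deploy"),
]


def run_flow(tasks: List[Task], approve: Callable[[], bool] = lambda: True) -> List[str]:
    """Run tasks in order and return a log of what happened."""
    log = []
    for task in tasks:
        if not task.enabled:
            # Disabled tasks are skipped automatically.
            log.append(f"skipped: {task.name}")
            continue
        if task.name == "Manual Review" and not approve():
            # A rejected review stops the current pipeline.
            log.append(f"rejected: {task.name}")
            log.append("pipeline stopped")
            break
        log.append(f"ran: {task.name}")
    return log
```

With the defaults, the log shows Config Comparison running, Manual Review being skipped, and Build and Deploy running. If Manual Review is enabled and the reviewer rejects, the flow stops before Build and Deploy.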

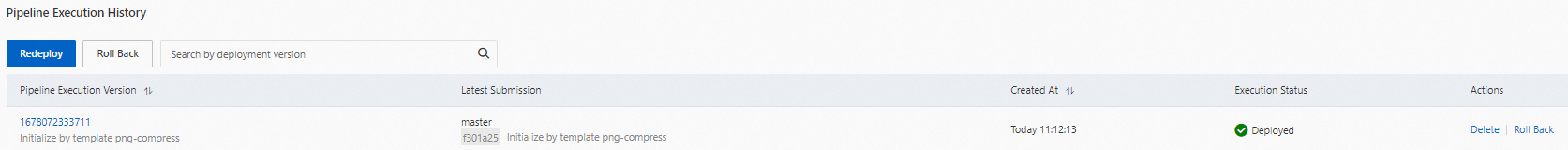

View pipeline execution history

On the details page of the specified environment, select the Pipeline Management tab. In the Pipeline Execution History section below, you can view the historical execution records of the specified pipeline.

You can click a specific pipeline execution version to view its details. This information lets you quickly check the execution logs and status of the pipeline, making it easier to monitor its progress or troubleshoot issues.

Upgrade the pipeline build environment runtime

The runtimes currently supported by the default pipeline build environment are listed below. The built-in package management tools include Maven, PIP, and NPM. Currently, only the Debian 10 operating system is supported for the runtime environment.

Runtime | Supported versions |
Node.js | |
Java | |
Python | |
Golang | |
PHP | |
.NET | |

You can set the pipeline runtime version using the runtime-setup pipeline plugin or by modifying the environment variables in the resource description file.

runtime-setup pipeline plugin (recommended)

In the Function Compute console, find your application. On the Pipeline Management tab, in the Pipeline Details section, select the Set Runtime task plugin template. Use the task template to update the pipeline YAML file on the right. The procedure is: Task Template (① in the figure below) → Task Plug-in (② in the figure below) → Configure Runtime (③ in the figure below) → Update YAML on the right (④ in the figure below) → Save (⑤ in the figure below).

Place the runtime-setup plugin first to ensure it takes effect for all subsequent steps.

For detailed parameters of the runtime-setup plugin, see Use the runtime-setup plugin to initialize the runtime environment.

Resource description file environment variables

You can also use Action hooks in the resource description file to switch the Node.js or Python version. The details are as follows.

Node.js

export PATH=/usr/local/versions/node/v12.22.12/bin:$PATH

export PATH=/usr/local/versions/node/v16.15.0/bin:$PATH

export PATH=/usr/local/versions/node/v14.19.2/bin:$PATH

export PATH=/usr/local/versions/node/v18.14.2/bin:$PATH

The following is an example.

    services:
      upgrade_runtime:
        component: 'fc'
        actions:
          pre-deploy:
            - run: export PATH=/usr/local/versions/node/v18.14.2/bin:$PATH && npm run build
        props: ...

Python

export PATH=/usr/local/envs/py27/bin:$PATH

export PATH=/usr/local/envs/py36/bin:$PATH

export PATH=/usr/local/envs/py37/bin:$PATH

export PATH=/usr/local/envs/py39/bin:$PATH

export PATH=/usr/local/envs/py310/bin:$PATH

The following is an example.

    services:
      upgrade_runtime:
        component: 'fc'
        actions:
          pre-deploy:
            - run: export PATH=/usr/local/envs/py310/bin:$PATH && pip3 install -r requirements.txt -t .
        props: ...
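The `pre-deploy` commands above all follow the same pattern: prepend a version-specific `bin` directory to `PATH`, then run the build command. The sketch below generates such a command from the paths listed in this section. The function name and the short version keys (for example `"3.10"` for `py310`) are hypothetical conveniences, not part of Serverless Devs.

```python
# Directories taken from the export lines listed in this section.
NODE_BIN_DIRS = {
    "12": "/usr/local/versions/node/v12.22.12/bin",
    "14": "/usr/local/versions/node/v14.19.2/bin",
    "16": "/usr/local/versions/node/v16.15.0/bin",
    "18": "/usr/local/versions/node/v18.14.2/bin",
}
PYTHON_BIN_DIRS = {
    "2.7": "/usr/local/envs/py27/bin",
    "3.6": "/usr/local/envs/py36/bin",
    "3.7": "/usr/local/envs/py37/bin",
    "3.9": "/usr/local/envs/py39/bin",
    "3.10": "/usr/local/envs/py310/bin",
}
BIN_DIRS = {"node": NODE_BIN_DIRS, "python": PYTHON_BIN_DIRS}


def pre_deploy_command(runtime: str, version: str, command: str) -> str:
    """Build the shell line for a pre-deploy `run` step."""
    try:
        bin_dir = BIN_DIRS[runtime][version]
    except KeyError:
        raise ValueError(f"no known path for {runtime} {version}") from None
    # Prepending to PATH makes this version win over the image default.
    return f"export PATH={bin_dir}:$PATH && {command}"
```

For example, `pre_deploy_command("node", "18", "npm run build")` produces the same line used in the Node.js example above.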