A Hive catalog connects Realtime Compute for Apache Flink to your Hive metastore or Alibaba Cloud Data Lake Formation (DLF), so you can query and write Hive tables without declaring DDL statements for each table. Tables registered in a Hive catalog are available as source tables, result tables, or dimension tables in both streaming and batch deployments.

Prerequisites

Before you begin, make sure that you have:

If you use a Hive metastore:

A running Hive metastore service. To start it, run hive --service metastore. To verify that it is listening on the default port (9083), run netstat -ln | grep 9083. If you configured a different port in hive-site.xml, replace 9083 with that port.

A whitelist configured for the Hive metastore service, with the CIDR blocks of Realtime Compute for Apache Flink added. For steps, see Configure a whitelist and Add a security group rule.

If you use Alibaba Cloud DLF:

DLF activated in your Alibaba Cloud account.

Limitations

| Limitation | Details |
|---|---|
| Supported metastore types | Self-managed Hive metastores |
| Supported Hive versions | 2.0.0–2.3.9 and 3.1.0–3.1.3 |
| Legacy Hive versions (1.X, 2.1.X, 2.2.X) | Not supported in Apache Flink 1.16 or later; requires Ververica Runtime (VVR) 6.X |
| Non-Hive tables in DLF-backed catalogs | Requires VVR 8.0.6 or later |
| Writing to OSS-HDFS via Hive catalog | Requires VVR 8.0.6 or later |
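The supported version ranges can be checked before any configuration work starts. The following is a minimal Python sketch, not part of the product; the function names are hypothetical, and the ranges are copied from the table above. It does not model the VVR-specific rules for legacy versions.

```python
# Supported Hive metastore versions, copied from the Limitations table
# (both bounds inclusive).
SUPPORTED_RANGES = [
    ((2, 0, 0), (2, 3, 9)),
    ((3, 1, 0), (3, 1, 3)),
]


def parse_version(version: str) -> tuple[int, int, int]:
    """Turn a string such as '2.3.9' into (2, 3, 9).

    Raises ValueError if the string is not of the form MAJOR.MINOR.PATCH.
    """
    major, minor, patch = (int(part) for part in version.strip().split("."))
    return (major, minor, patch)


def is_supported_hive_version(version: str) -> bool:
    """Return True if the version falls inside one of the supported ranges."""
    parsed = parse_version(version)
    return any(low <= parsed <= high for low, high in SUPPORTED_RANGES)
```

Tuples compare element by element, so the range test needs no custom comparison logic.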

Configure Hive metadata

Before creating a Hive catalog, connect your Hadoop cluster to the virtual private cloud (VPC) where Realtime Compute for Apache Flink runs, then upload your configuration files to Object Storage Service (OSS).

Step 1: Connect your Hadoop cluster to the VPC

Use Alibaba Cloud DNS PrivateZone to resolve Hadoop hostnames within the VPC. For setup instructions, see Resolver.

Step 2: Configure the metastore in hive-site.xml

Choose the metastore type that matches your setup.

Hive metastore

Verify that hive.metastore.uris in hive-site.xml points to the internal or public IP address of your Hive host:

<property>
  <name>hive.metastore.uris</name>
  <value>thrift://xx.yy.zz.mm:9083</value>
  <description>Thrift URI for the remote metastore. Used by metastore client to connect to remote metastore.</description>
</property>

If you set hive.metastore.uris to a hostname instead of an IP address, configure Alibaba Cloud DNS PrivateZone to resolve it. Without DNS resolution, Ververica Platform (VVP) returns an UnknownHostException when connecting to the metastore. See Add a DNS record to a private zone.
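One way to see which metastore hosts need a DNS record is to read hive.metastore.uris out of hive-site.xml and flag every entry that is a hostname rather than an IP address. The following Python sketch illustrates the idea using only the standard library. The function name is hypothetical, and it assumes that the value may hold several comma-separated thrift:// URIs.

```python
import ipaddress
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

DEFAULT_METASTORE_PORT = 9083  # default port mentioned in the prerequisites


def metastore_endpoints(hive_site_xml: str) -> list[tuple[str, int, bool]]:
    """Return (host, port, is_ip) for each URI in hive.metastore.uris.

    hive_site_xml is the text content of hive-site.xml.
    """
    endpoints = []
    root = ET.fromstring(hive_site_xml)
    for prop in root.iter("property"):
        if (prop.findtext("name") or "").strip() != "hive.metastore.uris":
            continue
        # Assumption: multiple URIs are separated by commas.
        for uri in (prop.findtext("value") or "").split(","):
            parsed = urlparse(uri.strip())
            if not parsed.hostname:
                continue  # skip empty or malformed entries
            try:
                ipaddress.ip_address(parsed.hostname)
                is_ip = True
            except ValueError:
                is_ip = False  # a hostname: needs a PrivateZone record
            port = parsed.port or DEFAULT_METASTORE_PORT
            endpoints.append((parsed.hostname, port, is_ip))
    return endpoints
```

Entries returned with is_ip set to False are the ones that need to resolve inside the VPC.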

Alibaba Cloud DLF

If hive-site.xml contains a dlf.catalog.akMode entry, delete it before proceeding. Leaving it in place prevents the Hive catalog from accessing DLF.

Add the following properties to hive-site.xml:

<property>
  <name>hive.imetastoreclient.factory.class</name>
  <value>com.aliyun.datalake.metastore.hive2.DlfMetaStoreClientFactory</value>
</property>
<property>
  <name>dlf.catalog.uid</name>
  <value>${YOUR_DLF_CATALOG_UID}</value>
</property>
<property>
  <name>dlf.catalog.endpoint</name>
  <value>${YOUR_DLF_ENDPOINT}</value>
</property>
<property>
  <name>dlf.catalog.region</name>
  <value>${YOUR_DLF_CATALOG_REGION}</value>
</property>
<property>
  <name>dlf.catalog.accessKeyId</name>
  <value>${YOUR_ACCESS_KEY_ID}</value>
</property>
<property>
  <name>dlf.catalog.accessKeySecret</name>
  <value>${YOUR_ACCESS_KEY_SECRET}</value>
</property>

| Parameter | Required | Description |
|---|---|---|
| dlf.catalog.uid | Yes | Your Alibaba Cloud account ID. Find it on the Security Settings page. |
| dlf.catalog.endpoint | Yes | DLF service endpoint. Use the VPC endpoint for your region, for example dlf-vpc.cn-hangzhou.aliyuncs.com. For all endpoints, see Supported regions and endpoints. For cross-VPC access, see How does Realtime Compute for Apache Flink access a service across VPCs? |
| dlf.catalog.region | Yes | Region ID where DLF is activated. Must match the region of dlf.catalog.endpoint. See Supported regions and endpoints. |
| dlf.catalog.accessKeyId | Yes | AccessKey ID of your Alibaba Cloud account. See Obtain an AccessKey pair. |
| dlf.catalog.accessKeySecret | Yes | AccessKey secret of your Alibaba Cloud account. See Obtain an AccessKey pair. |
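The DLF requirements above lend themselves to a quick pre-upload check. The following Python sketch is illustrative only: the function names are hypothetical, and the region test is a heuristic that assumes the region ID appears verbatim in the endpoint hostname, as it does in dlf-vpc.cn-hangzhou.aliyuncs.com.

```python
import xml.etree.ElementTree as ET

# Property names taken from the XML listing and the parameter table above.
REQUIRED_DLF_KEYS = [
    "hive.imetastoreclient.factory.class",
    "dlf.catalog.uid",
    "dlf.catalog.endpoint",
    "dlf.catalog.region",
    "dlf.catalog.accessKeyId",
    "dlf.catalog.accessKeySecret",
]


def read_properties(hive_site_xml: str) -> dict[str, str]:
    """Collect the name/value pairs of all <property> elements."""
    props = {}
    for prop in ET.fromstring(hive_site_xml).iter("property"):
        name = (prop.findtext("name") or "").strip()
        if name:
            props[name] = (prop.findtext("value") or "").strip()
    return props


def check_dlf_config(hive_site_xml: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means none found."""
    props = read_properties(hive_site_xml)
    problems = [f"missing or empty: {key}" for key in REQUIRED_DLF_KEYS if not props.get(key)]
    if "dlf.catalog.akMode" in props:
        problems.append("remove dlf.catalog.akMode before creating the catalog")
    region = props.get("dlf.catalog.region", "")
    endpoint = props.get("dlf.catalog.endpoint", "")
    # Heuristic (assumption): the region ID is a substring of the endpoint.
    if region and endpoint and region not in endpoint:
        problems.append(f"region {region} does not match endpoint {endpoint}")
    return problems
```

An empty result does not prove that the credentials or endpoint are valid; it only confirms that the file has the expected shape.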

Step 3: Configure table storage in hive-site.xml

Hive catalogs support two storage backends for table data: OSS and OSS-HDFS.

OSS

Add the following properties to hive-site.xml:

<property>
  <name>fs.oss.impl.disable.cache</name>
  <value>true</value>
</property>
<property>
  <name>fs.oss.impl</name>
  <value>org.apache.hadoop.fs.aliyun.oss.AliyunOSSFileSystem</value>
</property>
<property>
  <name>hive.metastore.warehouse.dir</name>
  <value>${YOUR_OSS_WAREHOUSE_DIR}</value>
</property>
<property>
  <name>fs.oss.endpoint</name>
  <value>${YOUR_OSS_ENDPOINT}</value>
</property>
<property>
  <name>fs.oss.accessKeyId</name>
  <value>${YOUR_ACCESS_KEY_ID}</value>
</property>
<property>
  <name>fs.oss.accessKeySecret</name>
  <value>${YOUR_ACCESS_KEY_SECRET}</value>
</property>
<property>
  <name>fs.defaultFS</name>
  <value>oss://${YOUR_OSS_BUCKET_DOMAIN}</value>
</property>

| Parameter | Required | Description |
|---|---|---|
| hive.metastore.warehouse.dir | Yes | Directory where table data is stored. |
| fs.oss.endpoint | Yes | OSS endpoint. See Regions and endpoints. |
| fs.oss.accessKeyId | Yes | AccessKey ID. See Obtain an AccessKey pair. |
| fs.oss.accessKeySecret | Yes | AccessKey secret. See Obtain an AccessKey pair. |
| fs.defaultFS | Yes | Default file system for table data. Set to the HDFS endpoint of the target bucket, for example oss://oss-hdfs-bucket.cn-hangzhou.oss-dls.aliyuncs.com/. |
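Property blocks like these are repetitive and easy to mistype by hand. As a rough illustration, the following Python sketch renders a dictionary of settings into property elements with the standard library. The function name is hypothetical, and the sketch produces a fresh configuration element; it does not merge into an existing hive-site.xml. It needs Python 3.9 or later for ET.indent.

```python
import xml.etree.ElementTree as ET


def render_properties(settings: dict[str, str]) -> str:
    """Render name/value pairs as a <configuration> element with one
    <property> child per setting, in insertion order."""
    config = ET.Element("configuration")
    for name, value in settings.items():
        prop = ET.SubElement(config, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    ET.indent(config, space="  ")  # pretty-print
    # ElementTree escapes characters such as & and < in the values.
    return ET.tostring(config, encoding="unicode")


if __name__ == "__main__":
    # Placeholder values only; replace them with your own settings.
    print(render_properties({
        "fs.oss.impl.disable.cache": "true",
        "fs.oss.impl": "org.apache.hadoop.fs.aliyun.oss.AliyunOSSFileSystem",
        "hive.metastore.warehouse.dir": "${YOUR_OSS_WAREHOUSE_DIR}",
        "fs.oss.endpoint": "${YOUR_OSS_ENDPOINT}",
    }))
```

Generating the file this way keeps every opening tag paired with its closing tag, which is the most common failure when these blocks are copied by hand.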

OSS-HDFS

Add the following properties to hive-site.xml:

<property>
  <name>fs.jindo.impl</name>
  <value>com.aliyun.jindodata.jindo.JindoFileSystem</value>
</property>
<property>
  <name>hive.metastore.warehouse.dir</name>
  <value>${YOUR_OSS_WAREHOUSE_DIR}</value>
</property>
<property>
  <name>fs.oss.endpoint</name>
  <value>${YOUR_OSS_ENDPOINT}</value>
</property>
<property>
  <name>fs.oss.accessKeyId</name>
  <value>${YOUR_ACCESS_KEY_ID}</value>
</property>
<property>
  <name>fs.oss.accessKeySecret</name>
  <value>${YOUR_ACCESS_KEY_SECRET}</value>
</property>
<property>
  <name>fs.defaultFS</name>
  <value>oss://${YOUR_OSS_HDFS_BUCKET_DOMAIN}</value>
</property>

| Parameter | Required | Description |
|---|---|---|
| hive.metastore.warehouse.dir | Yes | Directory where table data is stored. |
| fs.oss.endpoint | Yes | OSS endpoint. See Regions and endpoints. |
| fs.oss.accessKeyId | Yes | AccessKey ID. See Obtain an AccessKey pair. |
| fs.oss.accessKeySecret | Yes | AccessKey secret. See Obtain an AccessKey pair. |
| fs.defaultFS | Yes | Default file system for table data. Set to the HDFS endpoint of the target bucket, for example oss://oss-hdfs-bucket.cn-hangzhou.oss-dls.aliyuncs.com/. |

(Optional) If you plan to read Parquet-format Hive tables stored in OSS-HDFS, add the following configuration to Realtime Compute for Apache Flink:

fs.oss.jindo.accessKeyId: ${YOUR_ACCESS_KEY_ID}
fs.oss.jindo.accessKeySecret: ${YOUR_ACCESS_KEY_SECRET}
fs.oss.jindo.endpoint: ${YOUR_JINDO_ENDPOINT}
fs.oss.jindo.buckets: ${YOUR_JINDO_BUCKETS}

For parameter details, see Write data to OSS-HDFS.

If your data is already stored in Realtime Compute for Apache Flink, skip Steps 2–3 and go directly to Create a Hive catalog.

Step 4: Upload configuration files to OSS

Log on to the OSS console and click Buckets in the left-side navigation pane.

Click the name of the bucket used by your Realtime Compute for Apache Flink workspace.

Create the folder ${hms} at the path oss://${bucket}/artifacts/namespaces/${ns}/. For instructions, see Create directories.

Realtime Compute for Apache Flink automatically creates the /artifacts/namespaces/${ns}/ directory when a workspace is provisioned. If the directory does not appear in the OSS console, upload any file on the Artifacts page in the development console to trigger its creation.

| Variable | Description |
|---|---|
| ${bucket} | The bucket used by your Realtime Compute for Apache Flink workspace |
| ${ns} | The name of the workspace for which you are creating the Hive catalog |
| ${hms} | The folder name. Use the same name as the Hive catalog you will create |

Inside oss://${bucket}/artifacts/namespaces/${ns}/${hms}/, create two subdirectories:

hive-conf-dir/: stores hive-site.xml

hadoop-conf-dir/: stores Hadoop configuration files

After creating both directories, go to Files > Projects in the OSS console to verify the structure and copy the OSS URLs.

Upload hive-site.xml to hive-conf-dir/. See Upload objects.

Upload the following files to hadoop-conf-dir/. See Upload objects.

hive-site.xml

core-site.xml

hdfs-site.xml

mapred-site.xml

Any other required files, such as compressed packages used by Hive deployments
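The directory layout described in this step can be derived mechanically from the three variables. The following Python sketch shows the path construction. The function name and the example values are hypothetical, and the trailing slash simply mirrors how the directories are written in this step. Treat the URLs copied from the OSS console as authoritative and use this only as a cross-check.

```python
def catalog_conf_dirs(bucket: str, ns: str, hms: str) -> dict[str, str]:
    """Build the OSS paths of the two configuration directories.

    bucket: bucket used by the workspace
    ns:     workspace name
    hms:    folder name, the same as the Hive catalog name
    """
    for label, value in (("bucket", bucket), ("ns", ns), ("hms", hms)):
        if not value or "/" in value:
            raise ValueError(f"{label} must be a non-empty name without slashes")
    base = f"oss://{bucket}/artifacts/namespaces/{ns}/{hms}"
    return {
        "hive-conf-dir": f"{base}/hive-conf-dir/",
        "hadoop-conf-dir": f"{base}/hadoop-conf-dir/",
    }
```

For example, with a hypothetical bucket my-flink-bucket, workspace my-workspace, and catalog flinkexporthive, the hive-conf-dir path is oss://my-flink-bucket/artifacts/namespaces/my-workspace/flinkexporthive/hive-conf-dir/.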

Create a Hive catalog

After configuring Hive metadata, create a Hive catalog from the console or by running an SQL statement. The console method is recommended.

After creating a Hive catalog, you cannot modify its configuration. To change any parameter, drop the catalog and create it again.

Create a Hive catalog from the console

Log on to the Realtime Compute for Apache Flink console. Find the workspace and click Console in the Actions column.

Click Catalogs.

On the Catalog List page, click Create Catalog. In the dialog box, go to the Built-in Catalog tab, select Hive in the Choose Catalog Type step, and click Next.

In the Configure Catalog step, set the following parameters:

| Parameter | Required | Description |
|---|---|---|
| catalog name | Yes | Name of the Hive catalog |
| hive-version | Yes | Version of the Hive metastore. Supported: 2.0.0–2.3.9, 3.1.0–3.1.3. Map your installed version: 2.0.X or 2.1.X → 2.2.0; 2.2.X → 2.2.0; 2.3.X → 2.3.6; 3.1.X → 3.1.2 |
| default-database | Yes | Name of the default database |
| hive-conf-dir | Yes | OSS path to the directory containing hive-site.xml, created in Step 4. For fully managed storage, upload the file as prompted |
| hadoop-conf-dir | Yes | OSS path to the directory containing Hadoop configuration files, created in Step 4. For fully managed storage, upload the files as prompted |
| hive-kerberos | No | Enable Kerberos authentication and associate a registered Kerberized Hive cluster and a Kerberos principal. See Register a Kerberized Hive cluster |

Click Confirm.

In the Catalogs pane on the left side of the Catalog List page, verify the new catalog appears.

Create a Hive catalog by running an SQL statement

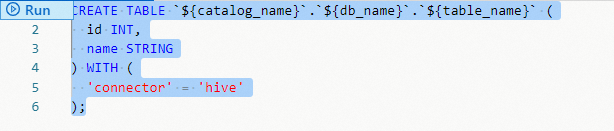

On the Scripts page, run the following statement:

CREATE CATALOG ${HMS Name} WITH (
  'type' = 'hive',
  'default-database' = 'default',
  'hive-version' = '<hive-version>',
  'hive-conf-dir' = '<hive-conf-dir>',
  'hadoop-conf-dir' = '<hadoop-conf-dir>'
);

| Parameter | Required | Description |
|---|---|---|
| ${HMS Name} | Yes | Name of the Hive catalog |
| type | Yes | Connector type. Set to hive |
| default-database | Yes | Name of the default database |
| hive-version | Yes | Hive metastore version. Supported: 2.0.0–2.3.9, 3.1.0–3.1.3. Map your installed version: 2.0.X or 2.1.X → 2.2.0; 2.2.X → 2.2.0; 2.3.X → 2.3.6; 3.1.X → 3.1.2 |
| hive-conf-dir | Yes | OSS path to the directory containing hive-site.xml. See Configure Hive metadata |
| hadoop-conf-dir | Yes | OSS path to the directory containing Hadoop configuration files. See Configure Hive metadata |

Select the CREATE CATALOG statement and click Run.
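The hive-version mapping is the part of this statement that is easiest to get wrong. The following Python sketch encodes the mapping from the parameter description and fills in the statement template. Both function names are hypothetical, and the sketch does no quoting beyond rejecting values that contain a single quote.

```python
# Installed (major, minor) -> value to use for 'hive-version',
# as listed in the parameter description.
HIVE_VERSION_MAP = {
    ("2", "0"): "2.2.0",
    ("2", "1"): "2.2.0",
    ("2", "2"): "2.2.0",
    ("2", "3"): "2.3.6",
    ("3", "1"): "3.1.2",
}


def catalog_hive_version(installed: str) -> str:
    """Map an installed Hive version such as '2.3.9' to the catalog setting."""
    key = tuple(installed.strip().split(".")[:2])
    if key not in HIVE_VERSION_MAP:
        raise ValueError(f"no hive-version mapping for Hive {installed}")
    return HIVE_VERSION_MAP[key]


def create_catalog_sql(name: str, installed_hive_version: str,
                       hive_conf_dir: str, hadoop_conf_dir: str,
                       default_database: str = "default") -> str:
    """Fill in the CREATE CATALOG template shown above."""
    options = {
        "type": "hive",
        "default-database": default_database,
        "hive-version": catalog_hive_version(installed_hive_version),
        "hive-conf-dir": hive_conf_dir,
        "hadoop-conf-dir": hadoop_conf_dir,
    }
    for value in options.values():
        if "'" in value:
            raise ValueError("option values must not contain single quotes")
    body = ",\n".join(f"  '{key}' = '{value}'" for key, value in options.items())
    return f"CREATE CATALOG {name} WITH (\n{body}\n);"
```

The generated text can be pasted on the Scripts page and run as described above.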

After the catalog is created, tables in it are available in drafts as result tables or dimension tables without DDL declarations. Table names use the format ${hive-catalog-name}.${hive-db-name}.${hive-table-name}.
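When table references are assembled by a script, the three-part name can be built with a small helper. This is a sketch with hypothetical function names. It assumes that a backtick inside an identifier is escaped by doubling it, which is the convention I understand Flink SQL to follow; verify this for your engine version before relying on it.

```python
def quote_identifier(identifier: str) -> str:
    """Wrap an identifier in backticks, doubling any embedded backtick
    (assumed escaping convention)."""
    return "`" + identifier.replace("`", "``") + "`"


def qualified_table_name(catalog: str, database: str, table: str) -> str:
    """Build `catalog`.`database`.`table` in the format described above."""
    return ".".join(quote_identifier(part) for part in (catalog, database, table))
```

For the catalog and database used in the examples that follow, qualified_table_name("flinkexporthive", "flinkhive", "flink_hive_test") yields the same backtick-quoted name that appears in the SQL statements.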

Use a Hive catalog

All examples below use a catalog named flinkexporthive and a database named flinkhive.

Create a Hive table

UI method

From the console:

Log on to the Realtime Compute for Apache Flink console, find the workspace, and click Console in the Actions column.

Click Catalogs.

On the Catalog List page, find the catalog and click View in the Actions column.

Find the target database and click View in the Actions column.

Click Create Table.

On the Built-in tab of the Create Table dialog box, select a table type from the Connection Type drop-down list, select a connector type, and click Next.

Enter the table creation statement and configure the parameters:

CREATE TABLE `${catalog_name}`.`${db_name}`.`${table_name}` (
  id INT,
  name STRING
) WITH (
  'connector' = 'hive'
);

Click OK.

SQL command method

By running an SQL statement:

On the Scripts page, enter the CREATE TABLE statement, then select it and click Run.

Example — create flink_hive_test in the flinkhive database under flinkexporthive:

-- Create a table named flink_hive_test in the flinkhive database under the flinkexporthive catalog.
CREATE TABLE `flinkexporthive`.`flinkhive`.`flink_hive_test` (
  id INT,
  name STRING
) WITH (
  'connector' = 'hive'
);

Modify a Hive table

On the Scripts page, run the ALTER TABLE statement:

-- Add a column to the Hive table.
ALTER TABLE `${catalog_name}`.`${db_name}`.`${table_name}`
ADD ${column_name} ${column_type};

-- Drop a column from the Hive table.
ALTER TABLE `${catalog_name}`.`${db_name}`.`${table_name}`
DROP ${column_name};

Example: add and then remove the color column from flink_hive_test:

-- Add the color field to the Hive table.
ALTER TABLE `flinkexporthive`.`flinkhive`.`flink_hive_test`
ADD color STRING;

-- Drop the color field from the Hive table.
ALTER TABLE `flinkexporthive`.`flinkhive`.`flink_hive_test`
DROP color;

Read data from a Hive table

INSERT INTO ${other_sink_table}
SELECT ...
FROM `${catalog_name}`.`${db_name}`.`${table_name}`;

Write data to a Hive table

INSERT INTO `${catalog_name}`.`${db_name}`.`${table_name}`
SELECT ...
FROM ${other_source_table};

Drop a Hive table

UI method

From the console:

Log on to the Realtime Compute for Apache Flink console, find the workspace, and click Console in the Actions column.

Click Catalogs.

In the Catalogs pane, navigate to the target table under the target database and catalog.

On the table details page, click Delete in the Actions column.

In the confirmation dialog, click OK.

SQL command method

By running an SQL statement:

On the Scripts page, run:

-- Drop the Hive table.
DROP TABLE `${catalog_name}`.`${db_name}`.`${table_name}`;

Example:

-- Drop the Hive table.
DROP TABLE `flinkexporthive`.`flinkhive`.`flink_hive_test`;

View a Hive catalog

Log on to the Realtime Compute for Apache Flink console, find the workspace, and click Console in the Actions column.

Click Catalogs.

On the Catalog List page, check the Name and Type columns for the catalog.

To view the databases and tables in the catalog, click View in the Actions column.

Drop a Hive catalog

Dropping a Hive catalog does not affect running deployments. However, it affects unpublished drafts and deployments that need to be suspended and resumed. Drop a catalog only when necessary.

Drop a Hive catalog from the console

Log on to the Realtime Compute for Apache Flink console, find the workspace, and click Console in the Actions column.

Click Catalogs.

On the Catalog List page, find the catalog and click Delete in the Actions column.

In the confirmation dialog, click Delete.

Verify the catalog no longer appears in the Catalogs pane.

Drop a Hive catalog by running an SQL statement

On the Scripts page, run:

DROP CATALOG ${HMS Name};

${HMS Name} is the catalog name shown in the development console of Realtime Compute for Apache Flink.

Right-click the statement and select Run from the shortcut menu.

Verify the catalog no longer appears in the Catalogs pane.