Business Insight analyzes T+1 data (previous day) from your EMR Serverless StarRocks instance across five dimensions: Query Insight, Import Insight, Table Insight, Compaction Insight, and Cache Insight.

Typical diagnostic workflow

When query performance degrades, start with Query Insight to identify slow SQL and P99 trends. If slow queries correlate with low cache hit ratios, check Cache Insight to find tables worth prefetching. If high import frequency is the root cause, use Import Insight to detect small file issues or write imbalances. Table Insight rounds out the picture with data distribution and access pattern metrics.

View Business Insight

Log on to the E-MapReduce console.

In the left navigation pane, choose EMR Serverless > StarRocks.

In the top menu bar, select the target region.

Click the ID of the target instance.

Click the Business Insight tab.

Query Insight

The Query Insight page is divided into four sections: Query Latency, DB Lock, SQL Query Analysis, and Parameterized SQL Analysis. Use the date, SQL type, and database filters to scope each view.

The following SQL types are supported:

DML: Data Manipulation Language (DML) statements for data query and modification, such as SELECT, UPDATE, and DELETE.

DDL: Data Definition Language (DDL) statements for defining or modifying data structures, such as CREATE and ALTER.

Other: All other SQL commands, such as auxiliary commands like SHOW.

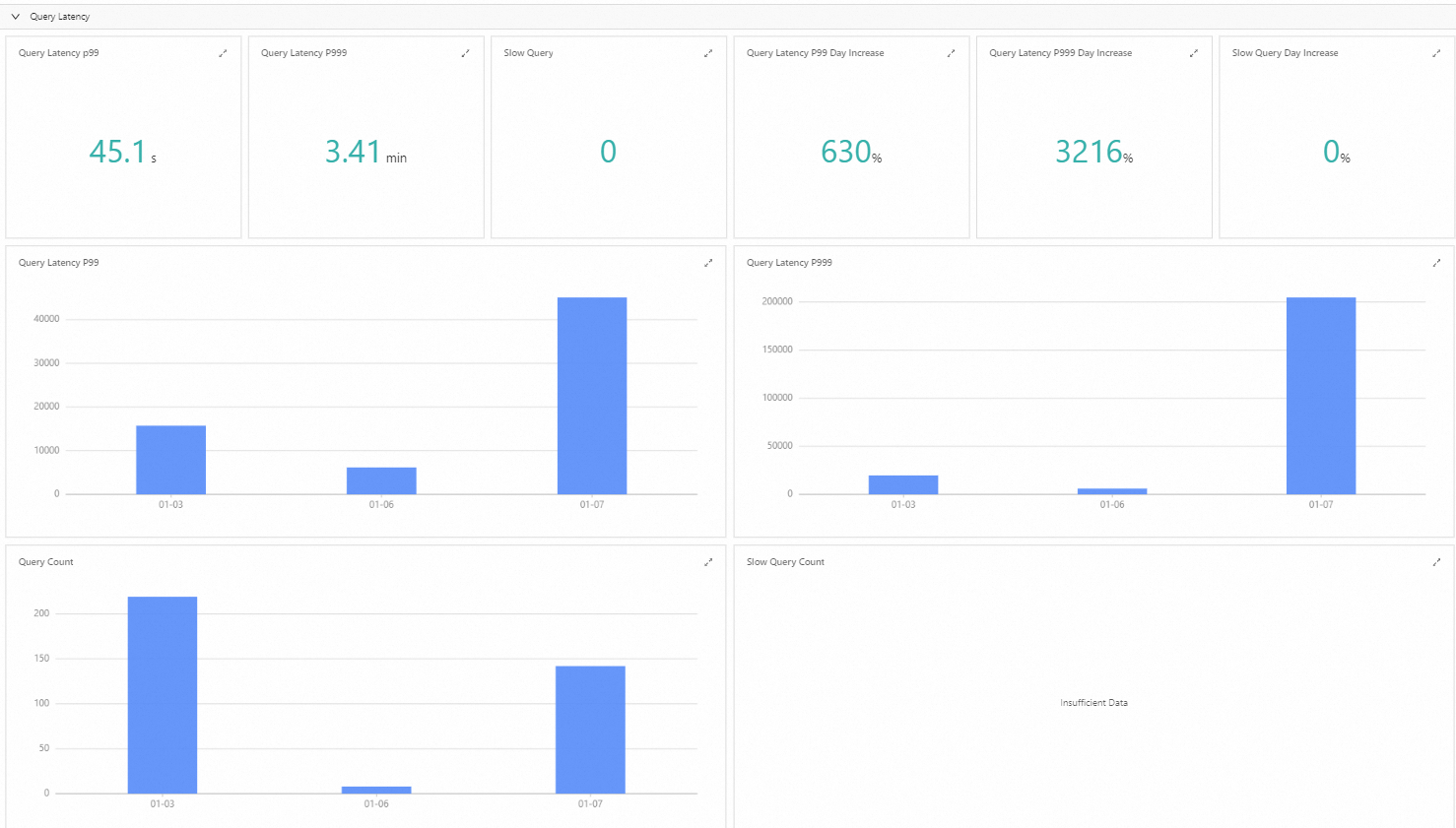

Query latency

Query latency statistics are derived from daily audit data and cover the last seven days, helping you spot performance trends before they affect users.

P99 latency measures the response time within which 99% of requests complete. If P99 latency is elevated, consider adding compute resources or optimizing the queries with the longest execution times.

DB Lock

The DB Lock section monitors lock contention during database access — conditions that arise when multiple transactions compete to read or modify the same data. Prolonged lock waits slow down overall system throughput. Use the lock contention data here to identify which transactions are causing delays and adjust their concurrency accordingly.

SQL query analysis

SQL Query Analysis ranks SQL statements by query time, CPU consumption, and memory consumption. The Slow SQL Top10 view shows the ten statements with the longest execution time on a selected date. To investigate a slow statement further, use the details in Profile Query Analysis. For more information, see Introduction to Query Profile.

The following table describes the main fields.

| Field | Description |

|---|---|

| Query ID | The unique identifier for each SQL execution. A new ID is generated each time a statement runs. |

| User | The StarRocks database user who ran the statement. |

| Query Time | Total execution time. Unit: ms. |

| CPU Execution Time | Sum of CPU time across all cores involved in the execution. Unit: ns. |

| Memory Usage | Memory consumed during execution. Unit: bytes. |

| Scan Bytes | Data volume scanned during execution. Unit: bytes. |

| Scan Rows | Number of rows scanned. |

| Return Rows | Number of rows returned. |

| SQL Text | The text of the executed statement. |

Parameterized SQL analysis

Parameterized SQL groups similar statements into a single template by replacing literal constants with ? placeholders, preserving the syntax structure while stripping comments and normalizing whitespace.

For example, the following two statements map to a single parameterized form:

Original SQL:

SELECT * FROM orders WHERE customer_id=10 AND quantity>20 SELECT * FROM orders WHERE customer_id=20 AND quantity>100Parameterized SQL:

SELECT * FROM orders WHERE customer_id=? AND quantity>?

Parameterized SQL analysis ranks statement groups by execution frequency, total execution time, execution time variance, CPU consumption, memory consumption, and failure frequency. This lets you:

Get an overview of the SQL workload on the StarRocks database.

Prioritize optimization for statements that run frequently, take a long time, or consume large amounts of CPU and memory.

Use the Coefficient of Variation (CV) of Query Time to detect execution time instability. A high CV for a parameterized SQL group — especially combined with high frequency — indicates potential data skew or resource contention. A CV value close to zero means execution time is stable; a high CV means similar statements are taking significantly different amounts of time, which warrants investigation.

Track failures using Execution Failure Count to find statements with recurring errors.

Fields for execution time analysis:

| Field | Description |

|---|---|

| Parameterized SQL ID | The hash value of the parameterized statement. |

| Coefficient of Variation of Query Time | Ratio of the standard deviation to the average execution time. A higher value means greater variability across executions of similar statements. If this value is high for a frequently run group, investigate for data skew or resource contention. |

| Executions | Total number of executions. |

| Parameterized SQL Text | The text of the parameterized statement. |

Fields for failure analysis:

| Field | Description |

|---|---|

| Parameterized SQL ID | The hash value of the parameterized statement. |

| Execution Failure Count | Number of times the statement failed. A high count relative to total executions indicates recurring errors that need investigation. |

| Executions | Total number of executions. |

| Parameterized SQL Text | The text of the parameterized statement. |

Import Insight

Import Insight provides statistics and analysis of import tasks from multiple perspectives.

Import Insight currently supports shared-nothing instances only.

Too many versions

When import frequency is too high, the Compaction Score can exceed 1,000, causing StarRocks to return a "Too many versions" error. Import Insight identifies the affected tables by analyzing "too many versions" log entries. To resolve this, reduce the concurrency and frequency of imports for those tables.

Top analysis

Analysis of potential small files in top hot import tables

For table-level imports, the system evaluates the severity of small file issues using an impact score and surfaces the 20 most affected tables. Small files degrade query performance and reduce compaction efficiency.

The impact score is calculated differently by table type:

Primary key tables:

Total number of files written / Average file size. A small average size with a high file count means a more severe small file problem.Non-primary key tables:

Total number of files written / Average write time per file. A short write time with a high file count means a more severe small file problem.

Analysis of top hot import tables

This view ranks the 20 tables with the most import tasks, showing which tables have the highest import frequency and transaction volume.

Analysis of hot import nodes

This view shows per-node import statistics. Use the Total Write Size metric to check whether write load is distributed evenly across brokers. If write load is concentrated on a small number of nodes, check your data distribution and bucket configuration.

Data table insights

Table Insight surfaces metrics for optimizing table design, including query frequency, SQL patterns, data distribution balance, and tables that have not been accessed in the last 90 days.

| Metric | Description |

|---|---|

| SQL Executions | Total SQL executions involving this table. Frequently accessed tables generally benefit the most from design optimization. |

| Associated Parameterized SQL Count | Number of distinct parameterized SQL groups that access this table. Analyzing these patterns can reveal opportunities to create materialized views that accelerate common query types. |

| Coefficient of Variation of Tablet Data Size | Standard deviation of tablet data sizes divided by their average within the same partition. A high value suggests data skew — uneven distribution that can slow down queries on this table. If this value is high, review your partition key and bucket count to improve data distribution. |

Cache Insight

Cache Insight shows cache performance statistics for shared-data instances, analyzed across three dimensions: table, parameterized SQL, and individual SQL statement.

Cache Insight is not currently available for Storage-Compute Integrated Edition instances.

How it works

A higher cache hit ratio means faster queries, because data is served from local disk rather than remote storage. Cache Insight derives its metrics from Query Profile data. Each table scan in a Query Profile corresponds to a CONNECTOR_SCAN operator, which exposes the following cache-related metrics:

| Metric | Description | Internal table metric | External table metric |

|---|---|---|---|

| Cache hit volume | Bytes read from the local cache | CompressedBytesReadLocalDisk | dataCacheReadBytes |

| Cache miss volume | Bytes read from remote storage | CompressedBytesReadRemote | FSIOBytesRead |

| Local IO count | IO operations from the local cache | IOCountLocalDisk | dataCacheReadCounter |

| Remote IO count | IO operations from remote storage | IOCountRemote | FSIOCounter |

| Local IO time | Time spent reading from the local cache | IOTimeLocalDisk | dataCacheReadTimer |

| Remote IO time | Time spent reading from remote storage | IOTimeRemote | FSIOTime |

From these six raw metrics, three derived ratios are calculated:

Cache hit ratio = Cache hit volume / (Cache hit volume + Cache miss volume)Local IO time percentage = Local IO time / (Local IO time + Remote IO time)Local IO count percentage = Local IO count / (Local IO count + Remote IO count)

Each day's Query Profile records are processed to extract these metrics for all CONNECTOR_SCAN operators, then grouped by the following dimensions:

| Dimension | Use case |

|---|---|

| Table | Find frequently accessed tables with low cache hit ratios and high cache miss volumes. Prefetching the cache for these tables can improve query performance. |

| Parameterized SQL | Analyze cache efficiency for SQL groups with many CONNECTOR_SCAN operators or long average query times. Prefetching or optimizing these statements has the broadest impact. |

| Individual SQL | Investigate specific statements with long execution times, low cache hit ratios, or high cache miss counts. |

Table dimension

This section ranks tables by access count, cache hit ratio, cache hit volume, and cache miss volume.

A table that appears in multiple top-20 lists — high access frequency, low cache hit ratio, and high average cache miss volume — is the best candidate for cache prefetching. For example, a table with 6,411 accesses but a cache hit ratio of only 24.2% would benefit significantly from prefetching.

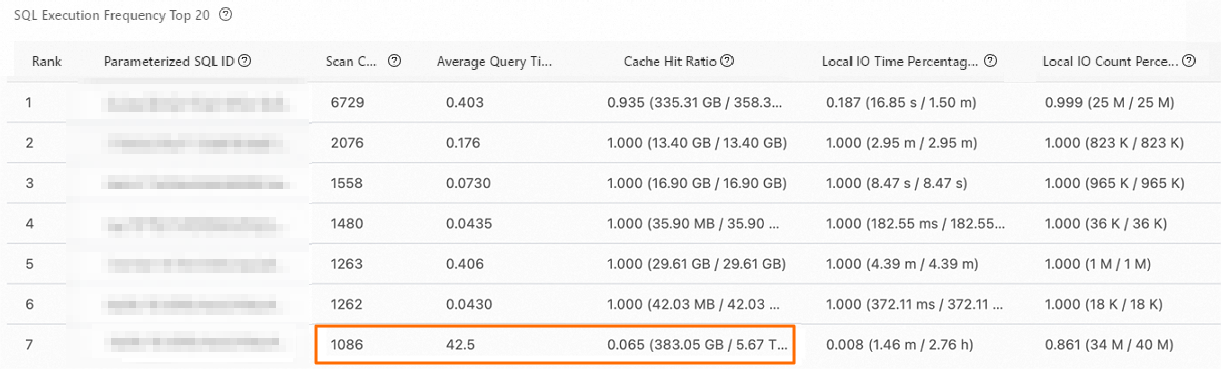

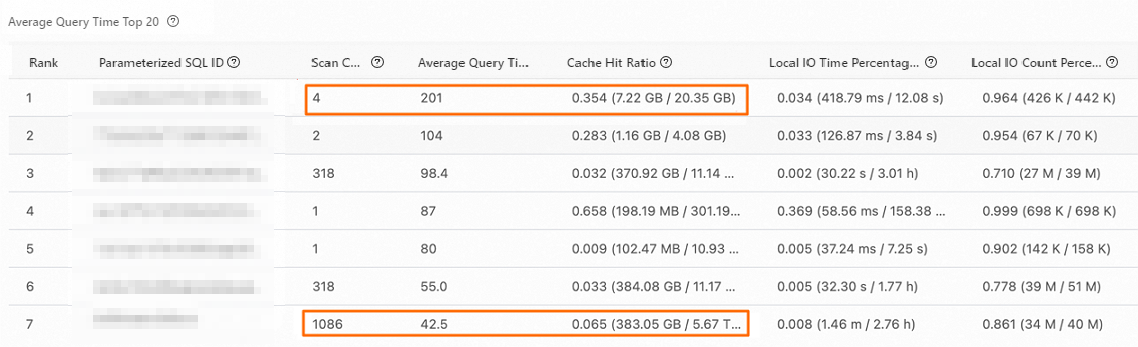

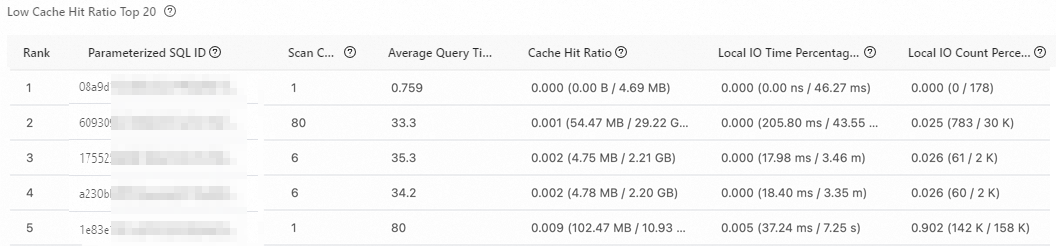

Parameterized SQL dimension

This section ranks parameterized SQL groups by scan count, cache hit ratio, cache hit volume, and cache miss volume.

Use the two views together to prioritize optimization:

A group ranked seventh by scan count (1,086 scans) with an average query time of 42.5 seconds and a cache hit ratio of 6.5% is a high-priority candidate — it's frequent, slow, and cache-cold.

A group ranked first by average query time (201 seconds) but with only 4 scans is a cold query. Prioritize optimization based on business impact.

Groups with low cache hit ratios and high cache miss volumes: weigh against scan count and average query time before deciding to prefetch.

The following table describes the main fields.

| Field | Description |

|---|---|

| Parameterized SQL ID | The hash value of the parameterized statement. |

| Scan count | Total CONNECTOR_SCAN operators across all SQL executions mapped to this parameterized statement. |

| Cache hit ratio | Total cache hit volume / (Total cache hit volume + Total cache miss volume). |

| Local IO time percentage | Total local IO time / (Total local IO time + Total remote IO time). |

| Local IO count percentage | Total local IO count / (Total local IO count + Total remote IO count). |

| Average cache hit volume | Total cache hit volume / Executions. |

| Average cache miss volume | Total cache miss volume / Executions. |

| Parameterized SQL statement | The text of the parameterized statement. |

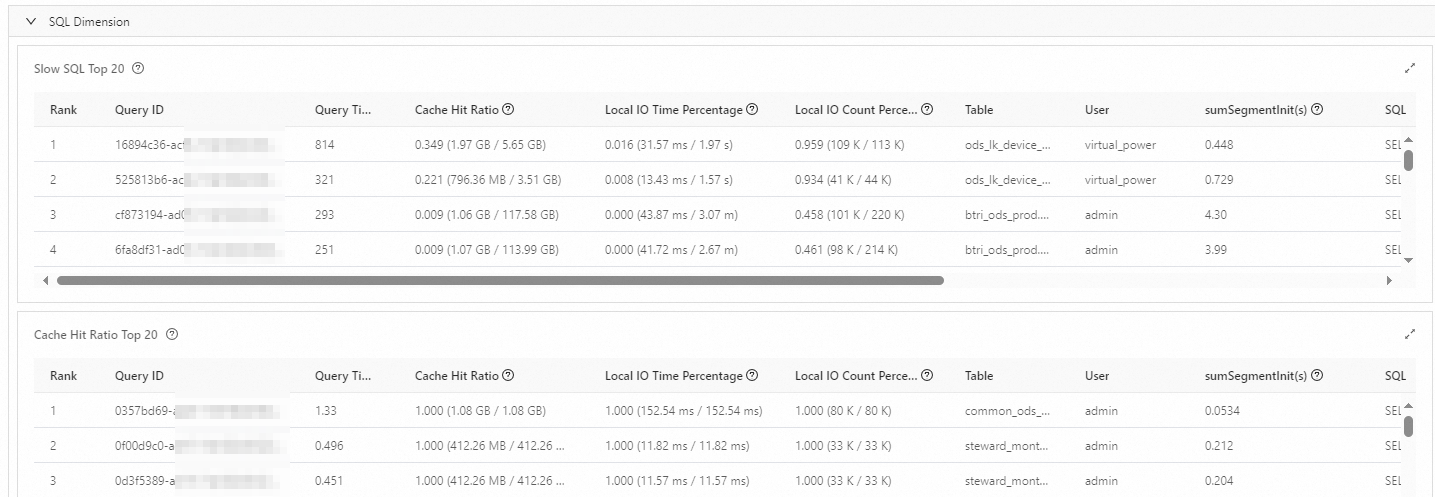

SQL dimension

This section ranks individual SQL statements by query time, cache hit ratio, cache hit volume, and cache miss volume. Use it to investigate specific statements with long execution times or poor cache efficiency.

The following table describes the main fields.

| Field | Description |

|---|---|

| Query ID | The unique identifier for each SQL execution. |

| Query time | Total execution time. Unit: seconds. |

| Cache hit ratio | Total cache hit volume / (Total cache hit volume + Total cache miss volume). |

| Local IO time percentage | Total local IO time / (Total local IO time + Total remote IO time). |

| Local IO count percentage | Total local IO count / (Total local IO count + Total remote IO count). |

| Data table | All tables, views, and materialized views queried by the statement. |

| Username | The StarRocks database user who ran the statement. |

| sumSegmentInit | Sum of segment initialization time for all CONNECTOR_SCAN operators in the query. |

| SQL statement | The text of the executed statement. |