Kyuubi Gateway provides Java Database Connectivity (JDBC) and ODBC interfaces to connect EMR Serverless Spark with SQL query and business intelligence (BI) tools such as Tableau and Power BI. It supports multi-tenancy and resource isolation for enterprise workloads.

Create a Kyuubi Gateway

Prerequisites

Before you begin, make sure you have:

An EMR Serverless Spark workspace

A queue configured in the workspace

(Optional) A virtual private cloud (VPC) network connection, if the gateway needs to reach data sources or external services in another VPC. See Network connectivity between EMR Serverless Spark and other VPCs.

Steps

Go to the Gateway page.

Log on to the EMR console.

In the left navigation pane, choose EMR Serverless > Spark.

Click the name of the workspace you want to manage.

In the left navigation pane of the workspace page, click O&M Center > Gateway.

On the Kyuubi Gateway page, click Create Kyuubi Gateway.

Configure the following parameters, then click Create

A large number of Spark configuration items reduces instantaneous concurrency for Spark job submission.

Parameter Description Name The gateway name. Use only lowercase letters, digits, and hyphens (-). The name must start and end with a letter or digit. Kyuubi Gateway Resource The compute resource allocated to the gateway. Default: 2 CPU, 8 GB. See the table below for specs and recommended maximum concurrent connections.Kyuubi Version The Kyuubi version for the gateway. If you use DLF (formerly DLF 2.5) as the Data Catalog, set this to 1.9.2-0.0.1 or later. Engine Version The Spark engine version. For details, see Engine versions. Associated Queue The queue where the gateway is deployed. Spark jobs submitted through the gateway run under the gateway creator's identity. Authentication Method Token-based authentication only. After creating the gateway, generate a token for it. See Manage tokens. Service High Availability When enabled, deploys three or more Kyuubi servers for high availability. Configure Number Of Kyuubi Servers and Zookeeper cluster address (comma-separated endpoints, for example, zk1:2181,zk2:2181,zk3:2181). Make sure the network is reachable.Network Connection The VPC network connection for accessing data sources or external services. See Network connectivity between EMR Serverless Spark and other VPCs. Endpoint(Public) Disabled by default. When enabled, the gateway is accessible via a public endpoint. Otherwise, access is through an internal endpoint. Kyuubi Configuration Kyuubi configuration key-value pairs, space-separated (for example, kyuubi.engine.pool.size 1). See the supported configurations below.Spark Configuration Spark configuration key-value pairs, space-separated (for example, spark.sql.catalog.paimon.metastore dlf). All parameters are supported exceptspark.kubernetes.*.Resource specs and recommended concurrent connections

Spec Recommended max concurrent connections 1 CPU, 4 GB10 2 CPU, 8 GB(default)20 4 CPU, 16 GB30 8 CPU, 32 GB45 16 CPU, 64 GB85 32 CPU, 128 GB135 Supported Kyuubi configurations

kyuubi.engine.pool.size kyuubi.engine.pool.size.threshold kyuubi.engine.share.level kyuubi.engine.single.spark.session kyuubi.session.engine.idle.timeout kyuubi.session.engine.initialize.timeout kyuubi.engine.security.token.max.lifetime kyuubi.session.engine.check.interval kyuubi.session.idle.timeout kyuubi.session.engine.request.timeout kyuubi.session.engine.login.timeout kyuubi.backend.engine.exec.pool.shutdown.timeout kyuubi.backend.server.exec.pool.shutdown.timeout kyuubi.backend.server.exec.pool.keepalive.time kyuubi.frontend.thrift.login.timeout kyuubi.operation.status.polling.timeout kyuubi.engine.pool.selectPolicy kyuubi.authentication kyuubi.kinit.principal kyuubi.kinit.keytab kyuubi.authentication.ldap.* kyuubi.hadoop.proxyuser.hive.hosts kyuubi.hadoop.proxyuser.hive.groups kyuubi.hadoop.proxyuser.kyuubi.hosts kyuubi.hadoop.proxyuser.kyuubi.groups kyuubi.ha.*On the Kyuubi Gateway page, find the gateway and click Start in the Actions column.

Manage tokens

Tokens are required for authentication when connecting to a Kyuubi Gateway.

On the Kyuubi Gateway page, find the target gateway and click Tokens in the Actions column.

Click Create Token.

Configure the following parameters, then click OK.

Parameter Description Name The token name. Expired At The token expiration time in days (minimum: 1). Enabled by default; expires after 365 days. Assigned To The Resource Access Management (RAM) user or RAM role to which the token is assigned. Required when using DLF (formerly DLF 2.5) as the default on the Catalog tab. Select from the drop-down list of users and roles added in Access control. The assigned identity is used to access DLF when submitting Spark jobs through the gateway. Make sure the RAM user or RAM role has DLF access permissions. See Add an authorization. Copy the token immediately after creation.

ImportantThe token value is only shown once and cannot be retrieved later. If the token expires or is lost, create a new token or reset the existing one.

Connect to a Kyuubi Gateway

Gather the following values before connecting. All connection methods use the same placeholders.

| Placeholder | Where to find it |

|---|---|

<endpoint> | Overview tab of the gateway |

<port> | 443 for public endpoints; 80 for internal same-region endpoints |

<token> | Token Management page — copy immediately after creation |

<tokenname> | Token Management page |

<UserName/RoleName> | Access control — the RAM user or RAM role added to the gateway |

If your gateway uses DLF (formerly DLF 2.5) as the default Data Catalog, you must include the user=<UserName/RoleName> parameter in connection strings. Each method below notes where to add it.Connect using Beeline

Before connecting, make sure your Beeline version is compatible with the Kyuubi server version. If Beeline is not installed, see Getting Started - Apache Kyuubi.

The JDBC URL format is:

jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>If you use DLF, add user=<UserName/RoleName> after transportMode=http;:

beeline -u "jdbc:hive2://<endpoint>:<port>/;transportMode=http;user=<UserName/RoleName>;httpPath=cliservice/token/<token>"For other catalogs:

beeline -u "jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>"To set session parameters, append them after #:

beeline -u "jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>;#spark.sql.shuffle.partitions=100;spark.executor.instances=2;"Connect using Java

Add the following dependencies to your

pom.xmlfile. Replace version numbers as needed.<dependencies> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-common</artifactId> <version>3.0.0</version> </dependency> <dependency> <groupId>org.apache.hive</groupId> <artifactId>hive-jdbc</artifactId> <version>2.3.9</version> </dependency> </dependencies>Connect to the gateway with the following code. The JDBC URL format is:

jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>If you use DLF, append;user=<UserName/RoleName>to the URL.import org.apache.hive.jdbc.HiveStatement; import java.sql.Connection; import java.sql.DriverManager; import java.sql.ResultSet; import java.sql.ResultSetMetaData; public class Main { public static void main(String[] args) throws Exception { // For DLF: append ;user=<UserName/RoleName> to the URL String url = "jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>"; Class.forName("org.apache.hive.jdbc.HiveDriver"); Connection conn = DriverManager.getConnection(url); HiveStatement stmt = (HiveStatement) conn.createStatement(); String sql = "select * from students;"; System.out.println("Running " + sql); ResultSet res = stmt.executeQuery(sql); ResultSetMetaData md = res.getMetaData(); String[] columns = new String[md.getColumnCount()]; for (int i = 0; i < columns.length; i++) { columns[i] = md.getColumnName(i + 1); } while (res.next()) { System.out.print("Row " + res.getRow() + "=["); for (int i = 0; i < columns.length; i++) { if (i != 0) { System.out.print(", "); } System.out.print(columns[i] + "='" + res.getObject(i + 1) + "'"); } System.out.println(")]"); } conn.close(); } }

Connect using Python

Install the required packages.

pip3 install pyhive thriftConnect to the gateway. Store sensitive values in environment variables rather than hardcoding them in your script. Set the following environment variables before running:

KYUUBI_ENDPOINT— the gateway endpointKYUUBI_PORT— the port numberKYUUBI_TOKEN— the token valueKYUUBI_USERNAME— the RAM user or role name (required when using DLF; for other catalogs, set this to the token name)

import os from pyhive import hive if __name__ == '__main__': cursor = hive.connect( os.environ['KYUUBI_ENDPOINT'], port=os.environ['KYUUBI_PORT'], scheme='http', username=os.environ['KYUUBI_USERNAME'], password=os.environ['KYUUBI_TOKEN'] ).cursor() cursor.execute('show databases') print(cursor.fetchall()) cursor.close()When using DLF, set

KYUUBI_USERNAMEto the RAM user or role name (<UserName/RoleName>). For other catalogs, setKYUUBI_USERNAMEto the token name (<tokenname>).

Connect using the REST API

Kyuubi Gateway supports open-source-compatible Representational State Transfer (REST) APIs over HTTP. The following API paths are supported:

/api/v1/sessions/*/api/v1/operations/*/api/v1/batches/*

Example 1: Run an SQL query

All four steps use token-based authentication in the URL path (/token/<token>). If you use DLF, add -u '<UserName/RoleName>:<password>' to the curl command in each step, where <password> is a placeholder and can be any value.

Create a session and specify Spark configurations. Replace

<dev_queue>with the actual queue name. If you use DLF:curl -X 'POST' \ 'http://<endpoint>:<port>/api/v1/sessions/token/<token>' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -u '<UserName/RoleName>:<password>' \ -d '{ "configs": { "set:hivevar:spark.emr.serverless.kyuubi.engine.queue": "<dev_queue>" } }'If you use another catalog:

curl -X 'POST' \ 'http://<endpoint>:<port>/api/v1/sessions/token/<token>' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -d '{ "configs": { "set:hivevar:spark.emr.serverless.kyuubi.engine.queue": "<dev_queue>" } }'The response returns a session handle (

identifier) that identifies this session:{"identifier":"619e6ded-xxxx-xxxx-xxxx-c2a43f6fac46","kyuubiInstance":"0.0.0.0:10099"}Use the

identifiervalue as<sessionHandle>in subsequent requests.Submit a SQL statement. If you use DLF:

curl -X 'POST' \ 'http://<endpoint>:<port>/api/v1/sessions/<sessionHandle>/operations/statement/token/<token>' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -u '<UserName/RoleName>:<password>' \ -d '{ "statement": "select * from test;", "runAsync": true, "queryTimeout": 0, "confOverlay": { "additionalProp1": "string", "additionalProp2": "string" } }'If you use another catalog:

curl -X 'POST' \ 'http://<endpoint>:<port>/api/v1/sessions/<sessionHandle>/operations/statement/token/<token>' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -d '{ "statement": "select * from test;", "runAsync": true, "queryTimeout": 0, "confOverlay": { "additionalProp1": "string", "additionalProp2": "string" } }'The response returns an operation handle:

{"identifier":"a743e8ff-xxxx-xxxx-xxxx-a66fec66cfa4"}Use the

identifiervalue as<operationHandle>in subsequent requests.Check the statement status. If you use DLF:

curl --location -X 'GET' \ 'http://<endpoint>:<port>/api/v1/operations/<operationHandle>/event/token/<token>' \ -H 'accept: application/json' \ -u '<UserName/RoleName>:<password>'If you use another catalog:

curl --location -X 'GET' \ 'http://<endpoint>:<port>/api/v1/operations/<operationHandle>/event/token/<token>' \ -H 'accept: application/json'Retrieve the results. If you use DLF:

curl --location -X 'GET' \ 'http://<endpoint>:<port>/api/v1/operations/<operationHandle>/rowset/token/<token>/?maxrows=100&fetchorientation=FETCH_NEXT' \ -H 'accept: application/json' \ -u '<UserName/RoleName>:<password>'If you use another catalog:

curl --location -X 'GET' \ 'http://<endpoint>:<port>/api/v1/operations/<operationHandle>/rowset/token/<token>/?maxrows=100&fetchorientation=FETCH_NEXT' \ -H 'accept: application/json'

Example 2: Submit a batch job

Use the batches API to submit a Spark batch job. The gateway starts a Spark application and runs the specified task.

Download the test JAR package: spark-examples_2.12-3.3.1.jar. This is the SparkPi example that comes with Spark and calculates the value of Pi (π).

If you use DLF:

curl --location \

--request POST 'http://<endpoint>:<port>/api/v1/batches/token/<token>' \

--user '<UserName/RoleName>:<password>' \

--form 'batchRequest="{

\"batchType\": \"SPARK\",

\"className\": \"org.apache.spark.examples.SparkPi\",

\"name\": \"kyuubi-spark-pi\",

\"resource\": \"oss://bucket/path/to/spark-examples_2.12-3.3.1.jar\"

}";type=application/json'If you use another catalog:

curl --location \

--request POST 'http://<endpoint>:<port>/api/v1/batches/token/<token>' \

--form 'batchRequest="{

\"batchType\": \"SPARK\",

\"className\": \"org.apache.spark.examples.SparkPi\",

\"name\": \"kyuubi-spark-pi\",

\"resource\": \"oss://bucket/path/to/spark-examples_2.12-3.3.1.jar\"

}";type=application/json'Configure a high-availability Kyuubi Gateway

A high-availability (HA) Kyuubi Gateway uses ZooKeeper for coordination and requires a custom JDBC driver for HA-aware connections.

Prerequisites

A ZooKeeper cluster accessible from your client (for example, Alibaba Cloud MSE or the ZooKeeper component on EMR on ECS)

Network connectivity between your client and the ZooKeeper cluster's VPC. See Network connectivity between EMR Serverless Spark and other VPCs.

Enable high availability

When creating or editing a Kyuubi Gateway, enable Service High Availability, set the number of Kyuubi servers and the ZooKeeper cluster address, and select a network connection for Network Connection.

Connect to the HA gateway

After enabling HA, the gateway coordinates through ZooKeeper. Use the same placeholders described in Connect to a Kyuubi Gateway.

Connect using Beeline

The HA Beeline connection requires a custom JDBC Driver JAR.

Download kyuubi-hive-jdbc-1.9.2.jar.

Replace the default JDBC Driver JAR file.

Back up the existing driver:

mv /your_path/apache-kyuubi-1.9.2-bin/beeline-jars /bak_pathNoteOn EMR on ECS, the default Kyuubi path is

/opt/apps/KYUUBI/kyuubi-1.9.2-1.0.0/beeline-jars. To find the installation path, runenv | grep KYUUBI_HOME.Copy the downloaded driver to the Beeline directory:

cp /download/serverless-spark-kyuubi-hive-jdbc-1.9.2.jar /your_path/apache-kyuubi-1.9.2-bin/beeline-jars

Connect using Beeline:

/your_path/apache-kyuubi-1.9.2-bin/bin/beeline -u 'jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>'

Connect using Java

The HA Java connection uses a shaded JDBC driver from Serverless Spark instead of the standard Hive JDBC driver.

Download serverless-spark-kyuubi-hive-jdbc-shaded-1.9.2.jar.

Install the driver to your local Maven repository:

mvn install:install-file \ -Dfile=/download/serverless-spark-kyuubi-hive-jdbc-shaded-1.9.2.jar \ -DgroupId=org.apache.kyuubi \ -DartifactId=kyuubi-hive-jdbc-shaded \ -Dversion=1.9.2-ss \ -Dpackaging=jarAdd the following dependencies to your

pom.xmlfile:<dependencies> <dependency> <groupId>org.apache.hadoop</groupId> <artifactId>hadoop-common</artifactId> <version>3.0.0</version> </dependency> <dependency> <groupId>org.apache.kyuubi</groupId> <artifactId>kyuubi-hive-jdbc-shaded</artifactId> <version>1.9.2-ss</version> </dependency> </dependencies>Connect with the following code. Note that the driver class and import differ from the standard (non-HA) Java example.

import org.apache.kyuubi.jdbc.hive.KyuubiStatement; import java.sql.Connection; import java.sql.DriverManager; import java.sql.ResultSet; import java.sql.ResultSetMetaData; public class Main { public static void main(String[] args) throws Exception { String url = "jdbc:hive2://<endpoint>:<port>/;transportMode=http;httpPath=cliservice/token/<token>"; Class.forName("org.apache.kyuubi.jdbc.KyuubiHiveDriver"); Connection conn = DriverManager.getConnection(url); KyuubiStatement stmt = (KyuubiStatement) conn.createStatement(); String sql = "select * from test;"; ResultSet res = stmt.executeQuery(sql); ResultSetMetaData md = res.getMetaData(); String[] columns = new String[md.getColumnCount()]; for (int i = 0; i < columns.length; i++) { columns[i] = md.getColumnName(i + 1); } while (res.next()) { System.out.print("Row " + res.getRow() + "=["); for (int i = 0; i < columns.length; i++) { if (i != 0) { System.out.print(", "); } System.out.print(columns[i] + "='" + res.getObject(i + 1) + "'"); } System.out.println(")]"); } conn.close(); } }

View Spark jobs submitted through Kyuubi

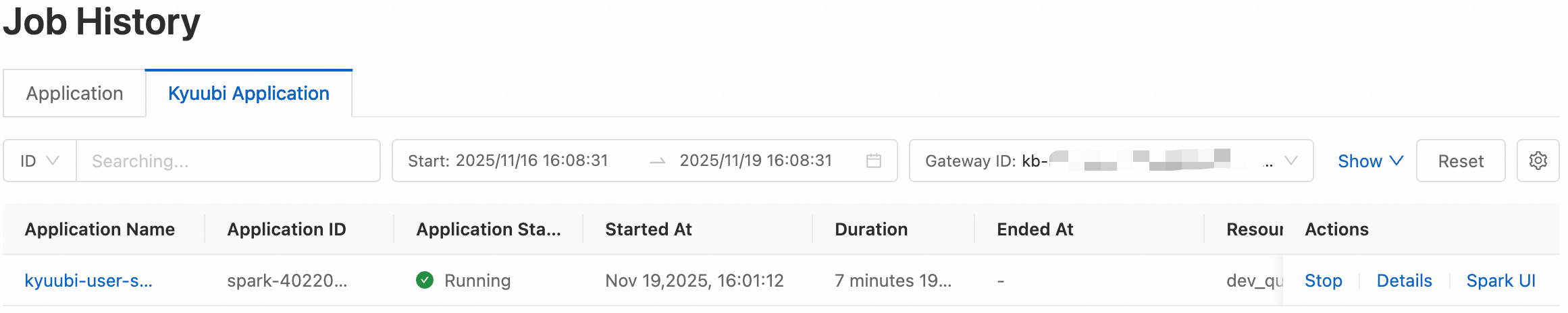

Monitor Spark jobs submitted through a Kyuubi Gateway on the Kyuubi Application tab of the Job History page. The tab shows Application ID, Application Name, Application Status, and Start At for each job.

The Application ID (in spark-xxxx format) is generated by the Spark engine and matches the ID returned by the Kyuubi client, uniquely identifying each task instance.

To view jobs for a specific gateway:

On the Kyuubi Gateway page, click the target gateway name.

In the upper-right corner, click Applications. The page lists all Spark jobs submitted through this gateway.