EMR Serverless Spark lets you register external large language model (LLM) services and call them directly from Spark SQL using the ai_query() function, without writing custom code. Once a model service is registered, you can run batch AI workloads such as sentiment analysis, content generation, smart tag extraction, and vector embedding as part of your existing data pipeline.

Supported model service providers: Alibaba Cloud Model Studio, Platform for AI - Elastic Algorithm Service (PAI-EAS), and self-hosted models.

How it works

Deploy an LLM in PAI-EAS and get its VPC endpoint and Token.

Register the endpoint in EMR Serverless Spark as an external model service.

Call the model from Spark SQL using ai_query('<prompt>', '<service_name>').

ai_query() sends each prompt to the service registered under <service_name>. Your SQL refers only to that name, so switching the underlying model or provider requires updating the registration, not your SQL code.
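Because ai_query() is an ordinary SQL function, a batch workload can apply it to every row of a table in a single query. The following is a minimal sketch of batch sentiment analysis. It assumes a hypothetical table product_reviews with columns review_id and review_text. It also assumes that ai_query() accepts a column expression as the prompt, which batch use implies but this guide does not show explicitly. my_qwen_service is the Model Service Name registered later in this guide, and the prompt wording is only illustrative.

```sql
-- Hypothetical input table: product_reviews(review_id, review_text).
-- 'my_qwen_service' is the Model Service Name registered in EMR Serverless Spark.
SELECT
  review_id,
  ai_query(
    -- Build one prompt per row by prepending instructions to the review text.
    concat(
      'Classify the sentiment of the following review as positive, negative, or neutral. ',
      'Output only one word. Review: ',
      review_text
    ),
    'my_qwen_service'
  ) AS sentiment
FROM product_reviews;
```

The query names only the registered service. If my_qwen_service is later pointed at a different model or provider, this SQL stays the same.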

Prerequisites

Before you begin, make sure you have:

An EMR Serverless Spark workspace

Access to the PAI console with permissions to deploy inference services

An active PAI-EAS service. If you don't have one, create it by following the steps below.

Deploy a model on PAI-EAS

This walkthrough uses Qwen3-0.6B deployed on PAI-EAS as the example model. Skip to Get the endpoint credentials if your service is already running.

Public models have preconfigured deployment templates and can be deployed without uploading model files. Custom models require mounting the model files using Object Storage Service (OSS) or a similar storage service.

Log in to the PAI console. Select a region at the top of the page, choose a workspace, and click Elastic Algorithm Service (EAS).

On the Inference Service tab, click Deploy Service. In the Scenario-based Model Deployment section, click Deploy LLM.

On the Deploy LLM page, configure the following parameters:

Model Configuration: Select Public Model, then search for and select Qwen3-0.6B.

Inference Engine: Select vLLM or SGLang. Both are compatible with the OpenAI API standard. This walkthrough uses vLLM. For guidance on choosing an engine, see Select a suitable inference engine.

Deployment Template: Select Standalone. The system fills in the recommended instance type and image automatically.

Click Deploy. Deployment takes about 5 minutes. When the service status changes to Running, the deployment is complete.

Note: If deployment fails, see Service deployment and status abnormalities to troubleshoot.

Get the endpoint credentials

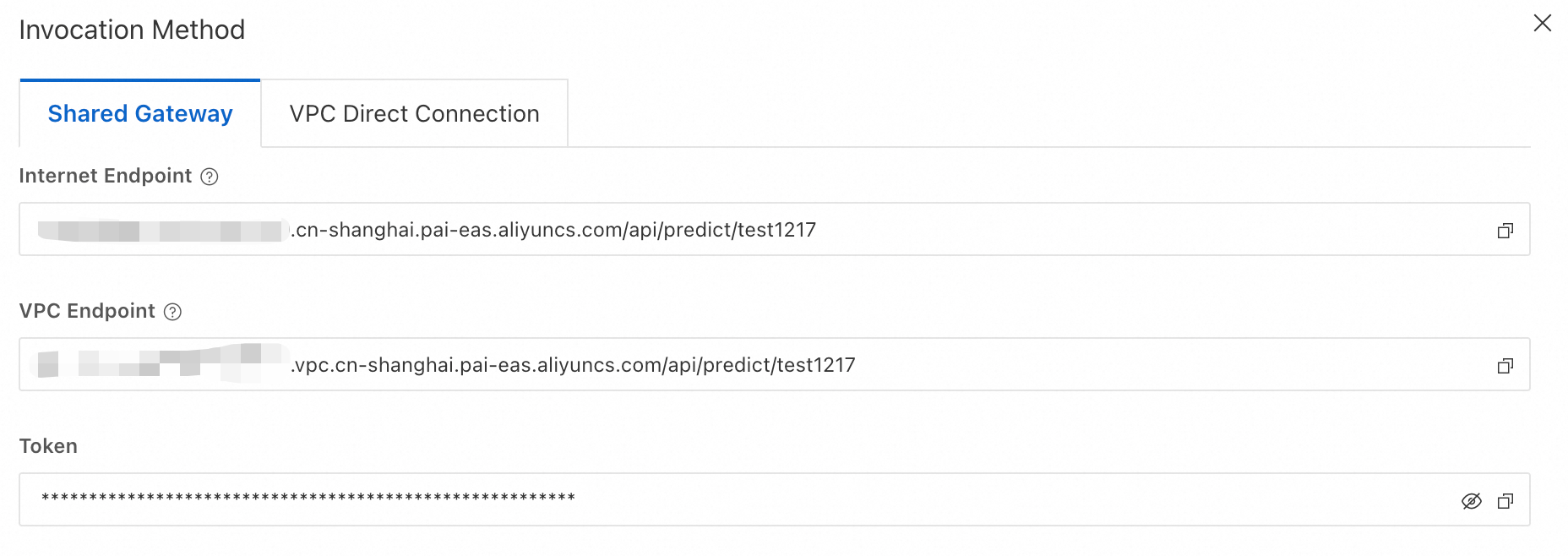

After the service is running, retrieve the VPC endpoint and Token. You'll use both to register the service in EMR Serverless Spark.

On the Inference Service tab, click your service name to open the Overview page. In the Basic Information section, click View Endpoint Information.

In the Endpoint Information panel, copy the VPC endpoint and Token.

Register the model service

Register the PAI-EAS service in EMR Serverless Spark. This makes the service available to ai_query() in Spark SQL.

Go to the model service page.

Log in to the E-MapReduce console.

In the left navigation pane, choose EMR Serverless > Spark.

Click the name of your workspace.

In the left navigation pane, click AI Center > Model Service.

On the Model Service tab, click Create External Model Service and fill in the following fields:

| Field | Example value | Description |
| --- | --- | --- |
| Model Service Name | my_qwen_service | Used as the endpointName argument in ai_query(). Must be unique within the workspace and cannot be changed after creation. |
| Endpoint | http://12*****39.vpc.cn-hangzhou.pai-eas.aliyuncs.com/api/predict/<ServiceName>/v1 | Paste the VPC endpoint from the previous step and append /v1 to the end. |
| Model Name | Qwen3-0.6B | The name of the model served by the endpoint, used when calling the service. |
| Model Type | Chat | Select Chat for text generation or Embedding for vector embedding. |
| API KEY | nMzI******************Zg== | Paste the Token from the previous step. |
| Description | Qwen3-0.6B on PAI-EAS | (Optional) A short description for identification. |

Click Create.
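Your SQL refers to a service only through its Model Service Name. Model Type determines whether that service is used for text generation (Chat) or vector embedding (Embedding). The following sketch assumes that, besides my_qwen_service (Chat), you have registered a second service under the hypothetical name my_embedding_service with Model Type set to Embedding. This guide does not describe the result format of embedding calls, so inspect the embedding column before you use it downstream. Run the query in a session with the AI feature enabled, as described in the next section.

```sql
-- Assumed registrations (my_embedding_service is hypothetical):
--   my_qwen_service       Model Type = Chat
--   my_embedding_service  Model Type = Embedding
SELECT
  -- Text generation through the Chat service.
  ai_query('Write a one-sentence product tagline for a smart thermostat.',
           'my_qwen_service') AS tagline,
  -- Vector embedding through the Embedding service; result format not documented here.
  ai_query('smart thermostat', 'my_embedding_service') AS embedding;
```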

Call the model with Spark SQL

After registration, use ai_query() in a Spark SQL job to call the model.

Note: ai_query() cannot currently be used in gateway-type tasks (Apache Livy, Apache Kyuubi).

Syntax:

```sql
ai_query(
  '<prompt>',       -- The prompt text sent to the model
  '<service_name>'  -- The Model Service Name set during registration
)
```

Create a Spark SQL job and enable the AI feature

On the Development tab, click the icon to create a job.

In the dialog box, enter a Name, select SparkSQL as the type, and click OK.

In the upper-right corner, select Create SQL Session from the drop-down list. Configure the session with the following settings:

| Setting | Value |
| --- | --- |
| Engine Version | esr-4.6.0 or later (esr-4.x), esr-3.5.0 or later (esr-3.x), or esr-2.9.0 or later (esr-2.x) |
| Advanced Configuration | Add spark.emr.serverless.ai.function.enable true to enable the AI feature. |
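After the session starts, you can check the configuration and the connection to the service before you run a larger query. In standard Spark SQL, SET followed by only a key returns that key's current value. The prompt in the second statement is an arbitrary example, and the exact reply depends on the model. my_qwen_service is the Model Service Name from the registration step.

```sql
-- Show the current value of the AI feature flag in this session.
-- It should report true if the Advanced Configuration was applied.
SET spark.emr.serverless.ai.function.enable;

-- Minimal smoke test: one short prompt to the registered service.
SELECT ai_query('Reply with the single word OK.', 'my_qwen_service') AS reply;
```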

Write the SQL query

The following example uses ai_query() to mask personally identifiable information in a text string:

```sql
-- 'my_qwen_service' is the Model Service Name set during registration.
SELECT ai_query(
  'Please mask the information in the following text according to these rules:
1) Replace all Chinese names with "".
2) Keep the first 5 digits of phone numbers and replace the rest with "*".
3) Replace complete addresses with "*****".
4) Keep all other text unchanged.
5) Output only the masked text, without explanations.
Original text: My name is Zhang San, my phone number is 12345678900, navigate to Smart Home, Longgang District, Shenzhen City',
  'my_qwen_service'
);
```

Review the result

After the query completes, the following result is returned:

```
My name is , my phone number is 12345*****, navigate to *****
```

Next steps

For custom model deployment on PAI-EAS, see Quick start for Elastic Algorithm Service (EAS).

For a full LLM deployment guide on PAI-EAS, see Deploy large language models (LLMs).