Deploy the Qwen3-0.6B model with the vLLM framework as an online inference service on EAS, and then call the service.

For production LLM deployment, we recommend using scenario-based LLM deployment or one-click deployment from Model Gallery. These methods are more convenient and faster.

Prerequisites

Activate PAI and create a workspace using your Alibaba Cloud main account. Log on to the PAI console, select a region in the top-left corner, and complete the one-click authorization and product activation.

Billing

This example uses public resources to create a model service. The billing method is pay-as-you-go. For more information about billing rules, see EAS billing.

Prepare resources

To deploy a model service, prepare model files and code files, such as web interfaces. If the official platform image does not meet your deployment requirements, you also need to build your own image.

Prepare model files

To obtain the Qwen3-0.6B model file for this example, run the following Python code. The file downloads from ModelScope to the default path ~/.cache/modelscope/hub.

# Download the model
from modelscope import snapshot_download

model_dir = snapshot_download('Qwen/Qwen3-0.6B')

Prepare code files

The vLLM framework makes it easy to build an OpenAI API-compatible service. Therefore, no separate code file is needed.

If you have complex business logic or specific API requirements, prepare your own code files. For example, you can use Flask to create a simple API.
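A minimal sketch of what such a Flask code file could look like. The route names /health and /api/echo and the "text" field are hypothetical and chosen only for illustration. A real service would call your model or business logic instead of echoing the input.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


@app.route("/health", methods=["GET"])
def health():
    # Lightweight liveness check (hypothetical route name).
    return jsonify({"status": "ok"})


@app.route("/api/echo", methods=["POST"])
def echo():
    # Parse the JSON body; silent=True returns None instead of raising on bad input.
    payload = request.get_json(silent=True)
    if not isinstance(payload, dict) or "text" not in payload:
        return jsonify({"error": "expected a JSON object with a 'text' field"}), 400
    text = str(payload["text"])
    # Placeholder logic: replace with model inference or business rules.
    return jsonify({"text": text, "length": len(text)})


# To serve locally, uncomment the next line (port 8000 is an assumption):
# app.run(host="0.0.0.0", port=8000)
```

Validating the request body before use keeps malformed requests from surfacing as server errors.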

Upload files to OSS

Use ossutil to upload model and code files to OSS. Mount OSS to the service to read the model file.
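The upload can be sketched as follows, assuming the model was downloaded with snapshot_download and that the OSS layout should match the path used later in this example (oss://examplebucket/models/Qwen/Qwen3-0___6B). The helper name build_upload_command and the local path are hypothetical. The sketch only builds and prints an ossutil cp -r command. It does not run it.

```python
import shlex
from pathlib import PurePosixPath


def build_upload_command(model_dir: str, oss_prefix: str) -> list[str]:
    """Build an `ossutil cp -r` command that mirrors <org>/<model> under oss_prefix."""
    local = PurePosixPath(model_dir)
    # Keep the last two path components, for example "Qwen/Qwen3-0___6B".
    relative = "/".join(local.parts[-2:])
    destination = oss_prefix.rstrip("/") + "/" + relative + "/"
    return ["ossutil", "cp", "-r", str(local) + "/", destination]


# Hypothetical local path; in practice pass the model_dir returned by snapshot_download.
cmd = build_upload_command(
    "/root/.cache/modelscope/hub/Qwen/Qwen3-0___6B",
    "oss://examplebucket/models/",
)
print(shlex.join(cmd))
```

Note that the on-disk directory name in this example is Qwen3-0___6B, not Qwen3-0.6B, so the OSS path and the later startup command must use the same spelling.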

For alternative storage methods, see Storage configurations.

You can also package all required files into an image for deployment. However, we do not recommend this method because:

- Model updates or iterations require rebuilding and re-uploading the image, which increases maintenance costs.
- Large model files significantly increase image size. This leads to longer image pull times and affects service startup efficiency.

Prepare images

The Qwen3-0.6B model requires vllm>=0.8.5 to create an OpenAI-compatible API endpoint. The official EAS image vllm:0.11.2-mows0.5.1 meets this requirement. Therefore, this example uses the official image.
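To check an image tag against the vllm>=0.8.5 requirement, compare versions numerically rather than as strings. The sketch below assumes the tag has the shape vllm:<version>-<suffix>, which is inferred only from the single tag named in this example. The helper names are hypothetical.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn '0.11.2' into (0, 11, 2) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def image_vllm_version(image_tag: str) -> tuple[int, ...]:
    """Extract the vLLM version from a tag shaped like 'vllm:<version>-<suffix>'."""
    _, _, tag = image_tag.partition(":")
    version, _, _ = tag.partition("-")
    return parse_version(version)


MINIMUM = parse_version("0.8.5")
found = image_vllm_version("vllm:0.11.2-mows0.5.1")

# Tuple comparison: (0, 11, 2) >= (0, 8, 5)
print(found >= MINIMUM)
# Plain string comparison orders "0.11.2" before "0.8.5", which is misleading.
print("0.11.2" >= "0.8.5")
```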

If no official image meets your requirements, create a custom image. If you develop and train models in DSW, create a DSW instance image to ensure consistency between development and deployment environments.

Deploy the service

- Log on to the PAI console. Select a region on the top of the page. Then, select the desired workspace and click Elastic Algorithm Service (EAS).
- Click Deploy Service. In the Custom Model Deployment section, click Custom Deployment.
- Configure the deployment parameters. Configure key parameters as follows and keep default values for other parameters.
  - Deployment Method: Select Image-based Deployment.
  - Image Configuration: In the Alibaba Cloud Image list, select vllm:0.11.2-mows0.5.1.
  - Mount storage: This example stores the model file in OSS at path oss://examplebucket/models/Qwen/Qwen3-0___6B. Select OSS and configure as follows.
    - Uri: OSS path where the model is located. Set to oss://examplebucket/models/.
    - Mount Path: Destination path in the service instance where the file is mounted, such as /mnt/data/.
  - Command: The official image has a default startup command. Modify as needed. For this example, change to vllm serve /mnt/data/Qwen/Qwen3-0___6B.
  - Resource Type: Select Public Resources. For Resource Specification, select ecs.gn7i-c16g1.4xlarge. To use other resource types, see Resource configurations.
- Click Deploy. Service deployment takes about 5 minutes. When Service Status changes to Running, the service is successfully deployed.
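The path in the startup command follows from the mount settings: everything under the Uri appears under the Mount Path inside the service instance. A small sketch of that mapping, using the values from this example (the helper name path_in_container is hypothetical):

```python
def path_in_container(object_uri: str, mount_uri: str, mount_path: str) -> str:
    """Map an OSS object URI to the path it has inside the service instance."""
    base = mount_uri.rstrip("/") + "/"
    if not object_uri.startswith(base):
        raise ValueError(f"{object_uri} is not under the mounted Uri {mount_uri}")
    # The part of the URI below the mounted prefix is kept unchanged.
    relative = object_uri[len(base):]
    return mount_path.rstrip("/") + "/" + relative


model_path = path_in_container(
    "oss://examplebucket/models/Qwen/Qwen3-0___6B",
    "oss://examplebucket/models/",
    "/mnt/data/",
)
print(f"vllm serve {model_path}")
```

If you change the Uri or the Mount Path, update the path in the command accordingly.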

Test the service

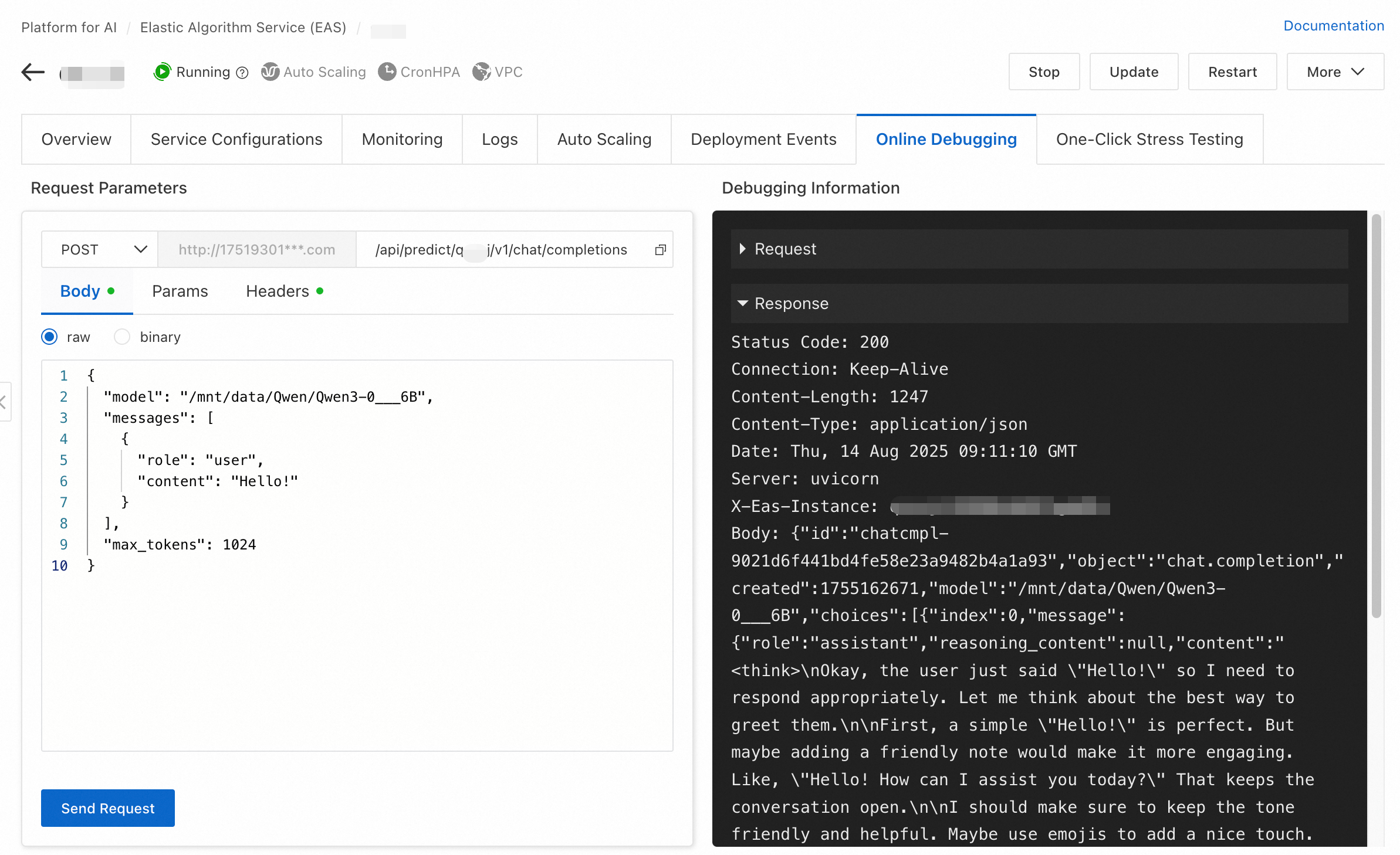

After the service is deployed, use the online debugging feature to test whether the service runs correctly. Configure the request method, request path, and request body based on your model service.

The online debugging method for the service deployed in this example:

-

On the Inference Service tab, click the destination service to go to the service overview page. Switch to the Online Debugging tab.

-

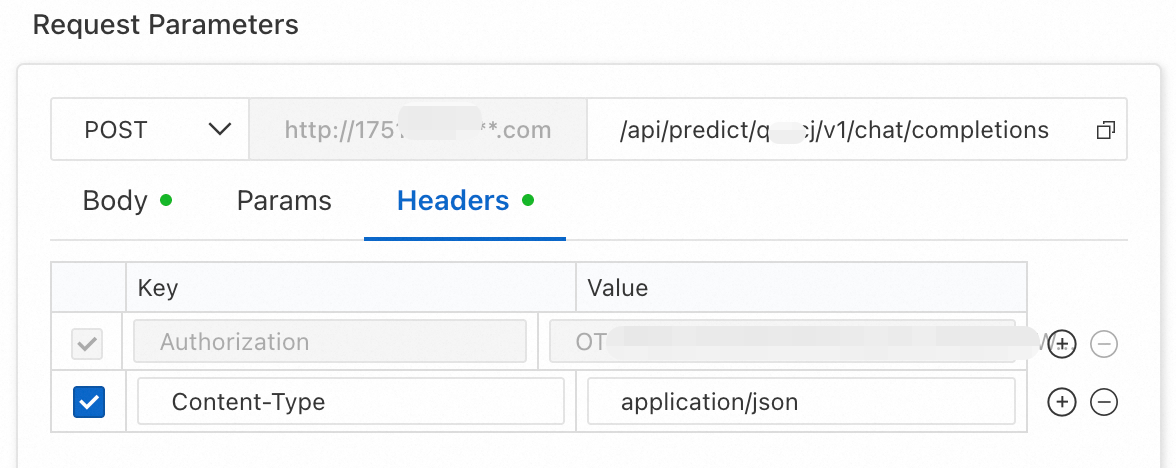

In the Request Parameter Online Tuning section of the debugging page, set request parameters and click Send Request. Request parameters:

-

Chat interface: Append

/v1/chat/completionsto the existing URL. -

Headers: Add a request header. Set key to

Content-Typeand value toapplication/json.

-

Body:

{ "model": "/mnt/data/Qwen/Qwen3-0___6B", "messages": [ { "role": "user", "content": "Hello!" } ], "max_tokens": 1024 }

-

-

The response is shown in the following figure:
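An OpenAI-compatible chat endpoint returns the reply as JSON, with the assistant text under choices[0].message.content. The sketch below parses a hand-written sample in that shape. The field values are illustrative and are not output captured from this service.

```python
import json

# Hand-written sample in the OpenAI chat-completion shape; values are illustrative.
sample_response = """
{
  "object": "chat.completion",
  "model": "/mnt/data/Qwen/Qwen3-0___6B",
  "choices": [
    {
      "index": 0,
      "message": {"role": "assistant", "content": "Hello! How can I help you today?"},
      "finish_reason": "stop"
    }
  ],
  "usage": {"prompt_tokens": 10, "completion_tokens": 9, "total_tokens": 19}
}
"""


def extract_reply(body: str) -> tuple[str, str]:
    """Return (assistant text, finish_reason) from a chat-completion JSON body."""
    choice = json.loads(body)["choices"][0]
    return choice["message"]["content"], choice["finish_reason"]


reply, finish_reason = extract_reply(sample_response)
print(reply)
# A finish_reason of "length" would mean the reply was cut off at max_tokens.
print(finish_reason)
```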

Call the service

Obtain endpoint and token

This deployment uses the shared gateway by default. After deployment completes, obtain the endpoint and token required for invocation from the service overview information.

- On the Inference Service tab, click the name of the target service to go to the Overview page.
- In the Basic Information section, click View Endpoint Information.
- In the Invocation Method panel, copy the endpoint and token:
  - Choose the Internet endpoint or VPC endpoint as needed.
  - The following examples use <EAS_ENDPOINT> as the endpoint and <EAS_TOKEN> as the token.
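To keep the token out of source files, one option is to read both values from environment variables and build the request from them. This is a sketch under assumptions: the variable names EAS_ENDPOINT and EAS_TOKEN, the helper name build_chat_request, and the http://localhost:8000 fallback are all hypothetical. The sketch uses only the Python standard library and prepares the request without sending it.

```python
import json
import os
import urllib.request


def build_chat_request(endpoint: str, token: str, prompt: str) -> urllib.request.Request:
    """Prepare (but do not send) a POST to the chat completions route."""
    url = endpoint.rstrip("/") + "/v1/chat/completions"
    payload = {
        "model": "/mnt/data/Qwen/Qwen3-0___6B",
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 1024,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": token},
        method="POST",
    )


# EAS_ENDPOINT and EAS_TOKEN are hypothetical environment variable names.
# The fallback values are placeholders so that the sketch runs without them.
request = build_chat_request(
    os.environ.get("EAS_ENDPOINT", "http://localhost:8000"),
    os.environ.get("EAS_TOKEN", "<EAS_TOKEN>"),
    "Hello!",
)
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request, timeout=60)
```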

Call with curl or Python

The following code provides examples:

curl:

curl http://16********.cn-hangzhou.pai-eas.aliyuncs.com/api/predict/****/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: *********5ZTM1ZDczg5OT**********" \
  -X POST \
  -d '{
    "model": "/mnt/data/Qwen/Qwen3-0___6B",
    "messages": [
      {
        "role": "user",
        "content": "Hello!"
      }
    ],
    "max_tokens": 1024
  }'

Python:

import requests

# Replace with the actual endpoint.
url = 'http://16********.cn-hangzhou.pai-eas.aliyuncs.com/api/predict/***/v1/chat/completions'

# For the header, set Authorization value to the actual token.
headers = {
    "Content-Type": "application/json",
    "Authorization": "*********5ZTM1ZDczg5OT**********",
}

# Construct the service request based on the data format required by the model.
data = {
    "model": "/mnt/data/Qwen/Qwen3-0___6B",
    "messages": [
        {
            "role": "user",
            "content": "Hello!"
        }
    ],
    "max_tokens": 1024
}

# Send the request.
resp = requests.post(url, json=data, headers=headers)
print(resp)
print(resp.content)

Stop or delete the service

This example uses public resources to create the EAS service, which is billed pay-as-you-go. When no longer needed, stop or delete the service to avoid further charges.

References

- To improve LLM service efficiency, see Deploy an LLM Intelligent Router.
- For more information about EAS features, see EAS overview.