When a disk on a Kafka broker fills up, the Kafka log directory on that disk goes offline. Partition replicas in the offline directory can no longer be read or written, the affected partitions lose availability, and leader replicas migrate to other brokers, which increases the load on those brokers. Resolve full-disk issues as quickly as possible to restore cluster health.

This topic uses E-MapReduce (EMR) Kafka 2.4.1 as the example version.

How it works

Kafka writes all message data to log directories on disk. Each broker may have multiple disks, each hosting one or more log directories. When a disk reaches capacity:

- The log directory on that disk goes offline.
- Partition replicas in the offline directory stop accepting reads and writes.
- Kafka migrates leader replicas to other brokers, increasing the load on those brokers.
- Cluster availability and fault tolerance degrade until the disk issue is resolved.
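
To see which log directories a broker uses, you can read the log.dirs broker property from the broker configuration file. The following is a minimal sketch, not an EMR-provided tool: the function name kafka_log_dirs is hypothetical, and because this topic does not state where server.properties is located on an EMR node, the file path is passed as an argument.

```shell
# Print each directory listed in the log.dirs property, one per line.
# Usage: kafka_log_dirs /path/to/server.properties   (path is an assumption)
kafka_log_dirs() {
  # log.dirs is a comma-separated list of directories.
  sed -n 's/^[[:space:]]*log\.dirs[[:space:]]*=[[:space:]]*//p' "$1" |
    tr ',' '\n' |
    sed '/^$/d'
}
```

Piping the result to `xargs df -h` then shows how full the disk behind each log directory is.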

Monitor disk usage

Set up a CloudMonitor alert on the OfflineLogDirectoryCount metric for your EMR Kafka cluster. When this count exceeds 0, at least one log directory is already offline. Act immediately using one of the recovery strategies below.
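
Because OfflineLogDirectoryCount only rises after a directory has gone offline, a local usage check on the broker can serve as an earlier warning. The following is a sketch under assumptions: the function name over_threshold and the 85 percent threshold in the usage note are illustrative and do not come from EMR or CloudMonitor.

```shell
# Read `df -P` output on stdin and print "<mount point> <used%>" for every
# filesystem whose usage is at or above the given threshold.
# Usage: df -P DIR... | over_threshold THRESHOLD_PERCENT
over_threshold() {
  awk -v limit="$1" 'NR > 1 {
    used = $5; sub(/%$/, "", used)   # column 5 is Capacity, for example 87%
    if (used + 0 >= limit + 0) print $6, used "%"
  }'
}
```

For example, `df -P /mnt/disk1/kafka/log /mnt/disk4/kafka/log | over_threshold 85` prints only the mount points that are at least 85 percent full. Mount points that contain spaces are not handled.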

Choose a recovery strategy

| Strategy | When to use | Risk | Effort |
|---|---|---|---|
| Resize a disk | A disk attached to a broker has insufficient capacity | Low | Low |
| Migrate partitions within a broker | Disk usage is imbalanced across disks on the same broker | Medium (may cause I/O hotspotting) | High |
| Clear logs | Outdated business log data can be deleted, or a data surge occurred due to a special event | Medium (data is permanently deleted) | Medium |

Resize a disk

Resizing the full disk directly adds capacity without moving data. This is the lowest-risk option and the fastest way to restore service when a broker disk is full.

In the EMR console, resize the disk attached to the affected broker. For detailed steps, see Expand a disk.

Migrate partitions within a broker

When a disk is full, its log directory goes offline and kafka-reassign-partitions.sh cannot move partitions, because the tool requires the directory to be accessible. Instead, move the partition data files directly on the Elastic Compute Service (ECS) instance that runs the broker, then update the Kafka metadata files to reflect the new location.

This strategy only moves partitions between disks on the same broker. It does not move data across brokers.

Usage notes

- Partition migration can cause I/O hotspotting on disks, which may affect cluster performance. Evaluate the size and duration of each migration before you proceed.
- Test this procedure on a non-production cluster running the same Kafka version before applying it to production.
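
To evaluate the size of a migration, it helps to know how much space each partition directory occupies. The following is a minimal sketch: the function name largest_partitions is hypothetical, and it assumes that each partition is a direct subdirectory of the log directory, as in the /mnt/disk4/kafka/log/test-topic-0 example used in this topic.

```shell
# Print the N largest partition directories under a Kafka log directory,
# largest first, as "<size in KiB><TAB><path>".
# Usage: largest_partitions LOG_DIR [N]   (N defaults to 10)
largest_partitions() {
  local log_dir="$1" n="${2:-10}"
  # du -sk reports the total size of each partition directory in kilobytes.
  find "$log_dir" -mindepth 1 -maxdepth 1 -type d -exec du -sk {} + |
    sort -rn |
    head -n "$n"
}
```

For example, `largest_partitions /mnt/disk4/kafka/log 5` lists the five partitions that would free the most space if moved to another disk.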

Procedure

The following steps use a test topic to illustrate the migration. Replace the example paths, topic names, and broker IDs with your actual values.

- Create a test topic.

  - Log on to the master node of the Kafka cluster over SSH. For details, see Log on to a cluster.

  - Create a test topic named test-topic with replicas on Broker 0 and Broker 1:

    kafka-topics.sh --bootstrap-server core-1-1:9092 --topic test-topic --replica-assignment 0:1 --create

  - Verify the topic. Both brokers should appear in the in-sync replicas (ISR) list:

    kafka-topics.sh --bootstrap-server core-1-1:9092 --topic test-topic --describe

    Expected output (Broker 0 is the leader and both brokers are in the ISR list):

    Topic: test-topic PartitionCount: 1 ReplicationFactor: 2 Configs: Topic: test-topic Partition: 0 Leader: 0 Replicas: 0,1 Isr: 0,1

-

Simulate data writing (optional — skip if you are working on an existing full disk):

kafka-producer-perf-test.sh --topic test-topic --record-size 1000 --num-records 600000000 --print-metrics --throughput 10240 --producer-props linger.ms=0 bootstrap.servers=core-1-1:9092 -

Simulate a full disk by restricting log directory permissions on Broker 0. On the master node, switch to the

emr-useraccount and log on to the core node:su emr-user ssh core-1-1Get root permissions:

sudo su - rootFind which disk hosts the

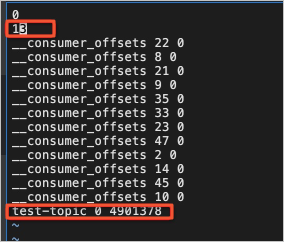

test-topic-0partition:sudo find / -name test-topic-0If the output is

/mnt/disk4/kafka/log/test-topic-0, the partition is on/mnt/disk4. Set the log directory permissions to000to simulate an offline state:sudo chmod 000 /mnt/disk4/kafka/logVerify that Broker 0 is removed from the ISR list:

kafka-topics.sh --bootstrap-server core-1-1:9092 --topic test-topic --describeExpected output — Broker 0 is no longer in the ISR list:

Topic: test-topic PartitionCount: 1 ReplicationFactor: 2 Configs: Topic: test-topic Partition: 0 Leader: 1 Replicas: 0,1 Isr: 1 -

Stop Broker 0. In the EMR console, stop the Kafka service on Broker 0.

- Move the partition data to a different disk on Broker 0:

  mv /mnt/disk4/kafka/log/test-topic-0 /mnt/disk1/kafka/log/

- Update the following Kafka metadata files in both the source directory (/mnt/disk4/kafka/log) and the destination directory (/mnt/disk1/kafka/log):

  - replication-offset-checkpoint: Move the test-topic-related entries from the source file to the destination file, and update the entry count in both files.

  - recovery-point-offset-checkpoint: Move the test-topic-related entries from the source file to the destination file, and update the entry count in both files.

- Restore log directory permissions on Broker 0:

  sudo chmod 755 /mnt/disk4/kafka/log

- Start Broker 0. In the EMR console, start the Kafka service on Broker 0.

-

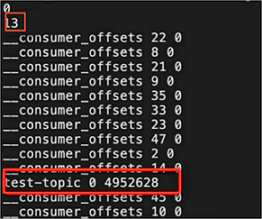

Verify the cluster is healthy:

kafka-topics.sh --bootstrap-server core-1-1:9092 --topic test-topic --describeBoth brokers should reappear in the ISR list, confirming that the partition migration succeeded.
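
The metadata update for replication-offset-checkpoint and recovery-point-offset-checkpoint can be sketched as a script instead of a manual edit. The sketch assumes the usual plain-text checkpoint layout: a version line, a line holding the number of entries, then one "topic partition offset" line per entry. The function names move_checkpoint_entries and write_checkpoint are hypothetical. Run something like this only while the broker is stopped, and check the result against the .bak copies it leaves behind.

```shell
# Move every "<topic> <partition> <offset>" entry for one topic from a source
# checkpoint file to a destination checkpoint file and rewrite both counts.
# Usage: move_checkpoint_entries TOPIC SRC_FILE DST_FILE
move_checkpoint_entries() {
  local topic="$1" src="$2" dst="$3"
  local moved kept existing
  # Keep backups so the change can be reverted by hand.
  cp -p "$src" "$src.bak" || return 1
  cp -p "$dst" "$dst.bak" || return 1

  # Lines 1 and 2 are the version and the entry count; entries start on line 3.
  moved="$(awk -v t="$topic" 'NR > 2 && NF == 3 && $1 == t' "$src.bak")"
  kept="$(awk -v t="$topic" 'NR > 2 && NF == 3 && $1 != t' "$src.bak")"
  existing="$(awk 'NR > 2 && NF == 3' "$dst.bak")"

  write_checkpoint "$src" "$(head -n 1 "$src.bak")" "$kept"
  write_checkpoint "$dst" "$(head -n 1 "$dst.bak")" "$(printf '%s\n%s' "$existing" "$moved")"
}

# Write a checkpoint file: version line, entry count, then the entries.
# Redirecting into the existing file keeps its owner and permissions.
write_checkpoint() {
  local file="$1" version="$2" entries="$3"
  entries="$(printf '%s\n' "$entries" | awk 'NF == 3')"  # drop blank lines
  {
    echo "$version"
    if [ -n "$entries" ]; then
      printf '%s\n' "$entries" | wc -l | tr -d ' '
      printf '%s\n' "$entries"
    else
      echo 0
    fi
  } > "$file"
}
```

For the example in this procedure, the function would be called once per file name, for instance `move_checkpoint_entries test-topic /mnt/disk4/kafka/log/replication-offset-checkpoint /mnt/disk1/kafka/log/replication-offset-checkpoint`, and again for recovery-point-offset-checkpoint. The topic name is matched exactly, so entries for a topic such as test-topic-2 are left in place.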

Clear logs

Delete business log data from the full disk to free up space. Data is deleted chronologically, starting from the oldest log segments.

Deleting log data is irreversible. Do not delete data from internal Kafka topics, including __consumer_offsets and _schema. Do not delete topics whose names start with an underscore (_).

This strategy is suitable for recovering from data surges caused by exceptional events. If you do not adjust the retention period after clearing logs, the disk will fill up again.

Procedure

- Log on to the machine where the full disk resides.

- Identify the full disk and delete unnecessary business log data:

  - Do not delete the Kafka cluster data directories themselves.

  - Find topics that occupy large amounts of space or that are no longer needed.

  - For the identified topics, delete log segments starting from the oldest data. Delete the corresponding index and timeindex files along with each log segment.

  - Do not delete data from internal topics such as __consumer_offsets and _schema.

- Restart the broker where the full disk resides to bring the log directory back online.
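
Selecting the oldest segments together with their index and timeindex files can be sketched as a dry-run listing that you review before deleting anything. The function name oldest_segments is hypothetical. The sketch assumes that segment files are named by a zero-padded 20-digit base offset, so that sorting by name is sorting by age, and it always skips the newest segment because the broker may still be writing to it.

```shell
# List the N oldest closed log segments of one partition directory, each with
# its .index and .timeindex companions. Nothing is deleted.
# Usage: oldest_segments PARTITION_DIR N
oldest_segments() {
  local dir="$1" n="$2"
  # Sort by name (oldest first), drop the newest segment, keep the first N.
  ls "$dir" | grep -E '^[0-9]{20}\.log$' | sort | sed '$d' | head -n "$n" |
    while read -r log; do
      base="${log%.log}"
      for ext in log index timeindex; do
        if [ -e "$dir/$base.$ext" ]; then
          echo "$dir/$base.$ext"
        fi
      done
    done
}
```

For example, `oldest_segments /mnt/disk4/kafka/log/test-topic-0 3` prints up to nine file paths. After checking that none of them belongs to an internal topic, you can delete exactly those files. Other per-segment files, if any exist in the directory, are not covered by this sketch.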