Auto scaling adds and removes task nodes automatically based on rules you define, keeping compute resources aligned with your actual workload. This topic explains how to configure auto scaling for an EMR Hadoop cluster in the EMR console.

Prerequisites

Before you begin, ensure that you have:

- An EMR Hadoop cluster. For more information, see Create a cluster.

Usage notes

- Hardware specifications for scaling nodes can only be modified when auto scaling is disabled. To change specifications, disable auto scaling, make your changes, then re-enable it.
- The system automatically searches for instance types that match the vCPU and memory specifications you enter, and lists the results in the Instance Type section. Select at least one instance type from the list. The cluster scales using the selected types.
- You can select up to three instance types. Selecting more than one reduces the risk of scaling failures caused by insufficient ECS resources.
- The minimum data disk size is 40 GiB, regardless of disk type (ultra disk or standard SSD).
- Load-based scaling relies on CloudMonitor. When you save load-based scaling rules, CloudMonitor automatically creates the corresponding alert rules. Do not modify, delete, or disable these alert rules, as doing so can disrupt auto scaling activities.

Choose a scaling trigger mode

Before configuring rules, decide which trigger mode fits your workload:

| If your workload... | Use... |
|---|---|
| Follows a predictable schedule, for example, batch jobs that run nightly or end-of-day reporting | Time-based scaling |
| Fluctuates unpredictably throughout the day based on actual cluster load | Load-based scaling |

If you disable auto scaling, all rules are cleared. Re-enabling auto scaling requires you to reconfigure the rules. If you switch between trigger modes, rules from the previous mode become invalid, but any nodes that were already added are retained.

Configure auto scaling

Step 1: Open the Auto Scaling tab

- Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
- In the top navigation bar, select the region where your cluster resides and select a resource group.
- On the EMR on ECS page, click the name of your cluster in the Cluster ID/Name column.
- Click the Auto Scaling tab.

Step 2: Create an auto scaling group

- On the Configure Scaling tab, click Create Auto Scaling Group.

  Auto scaling groups can only be configured and managed on the Auto Scaling tab.
- In the Add Auto Scaling Group dialog box, enter a name in the Node Group Name field and click OK.

Step 3: Open the scaling rule configuration

On the Configure Scaling tab, find the auto scaling group you just created and click Configure Rule in the Actions column.

Step 4: Configure basic settings

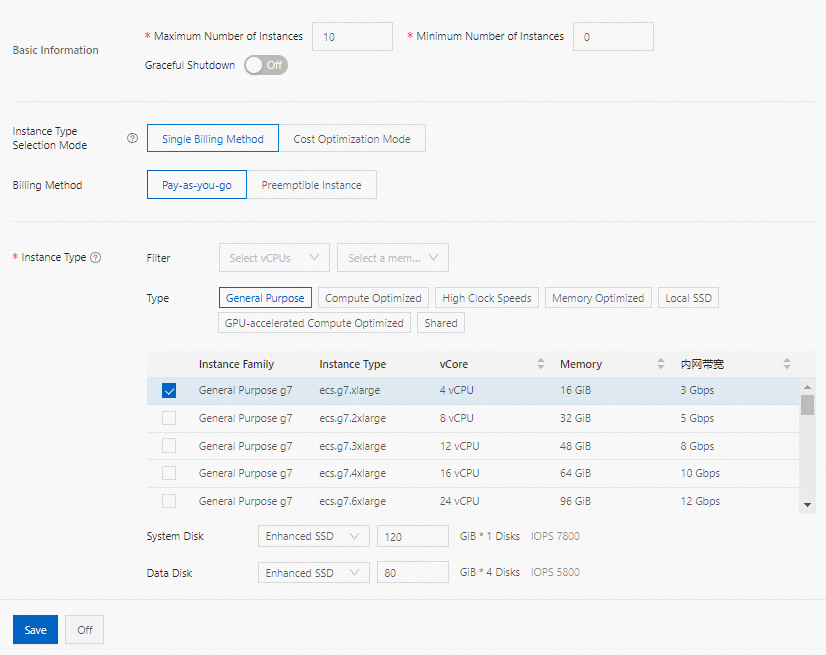

In the Basic Information section, configure the following parameters:

| Parameter | Description |
|---|---|
| Maximum number of instances | The maximum number of task nodes in the auto scaling group. If a scaling rule is triggered but the group has already reached this limit, no additional nodes are added. Maximum value: 1,000. |
| Minimum number of instances | The minimum number of task nodes in the auto scaling group. If a scale-out rule would add fewer nodes than this minimum, the system scales to the minimum on the first trigger. For example, if the minimum is 3 and a rule adds 1 node daily at 00:00, the system adds 3 nodes on the first day to satisfy the minimum, then follows the rule for subsequent triggers. |
| Graceful shutdown | The timeout period before a task node running a YARN job is decommissioned. If the job runs longer than this timeout, or if no YARN jobs are running on the node, the system decommissions it. Maximum value: 3,600 seconds. See the Usage notes for graceful shutdown section below before enabling this setting. |
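The way the minimum and maximum limits bound a rule's adjustment can be sketched in a few lines of Python. This is only an illustration of the behavior described in the table, not the EMR implementation. The function name `next_node_count` and its parameters are hypothetical, and the sketch assumes that the result is simply clamped to the configured range, including on scale-in.

```python
def next_node_count(current: int, delta: int, minimum: int, maximum: int) -> int:
    """Return the task-node count after a rule applies its adjustment.

    delta is positive for scale-out and negative for scale-in.
    Assumption: the new count is clamped to [minimum, maximum].
    """
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    return max(minimum, min(maximum, current + delta))


# Example from the table: minimum of 3, and a rule that adds 1 node per day.
count = 0
history = []
for _day in range(3):
    count = next_node_count(count, delta=1, minimum=3, maximum=1000)
    history.append(count)
print(history)  # [3, 4, 5]
```

On the first trigger the group jumps to the minimum of 3. Later triggers follow the rule and add one node each.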

Usage notes for graceful shutdown

Before enabling graceful shutdown:

- On the YARN service page, set the `yarn.resourcemanager.nodes.exclude-path` parameter to `/etc/ecm/hadoop-conf/yarn-exclude.xml`.
- After changing the Timeout Period, restart YARN ResourceManager during off-peak hours for the change to take effect.

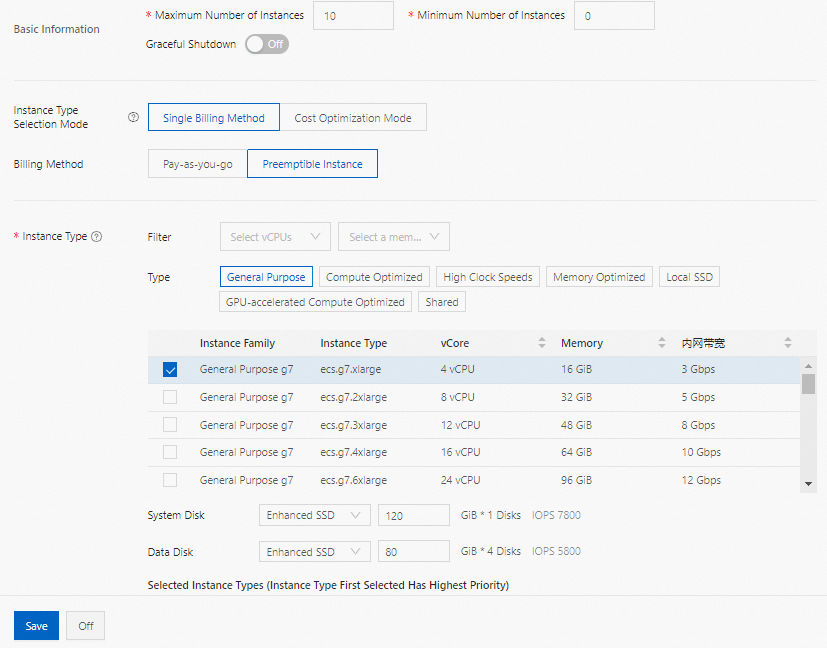
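The graceful shutdown decision described in the parameter table can be sketched as a single predicate. The helper `can_decommission` is hypothetical and only illustrates the rule. It assumes the node is decommissioned as soon as it is idle or the timeout has been reached, whichever comes first.

```python
MAX_TIMEOUT_S = 3600  # maximum value stated in the parameter table


def can_decommission(running_yarn_jobs: int, waited_s: float, timeout_s: int) -> bool:
    """Decide whether a task node selected for scale-in can be decommissioned.

    Assumption: reaching the timeout exactly counts as exceeding it.
    """
    if not 0 <= timeout_s <= MAX_TIMEOUT_S:
        raise ValueError(f"timeout_s must be between 0 and {MAX_TIMEOUT_S}")
    return running_yarn_jobs == 0 or waited_s >= timeout_s


print(can_decommission(running_yarn_jobs=2, waited_s=120, timeout_s=600))  # False
print(can_decommission(running_yarn_jobs=0, waited_s=120, timeout_s=600))  # True
print(can_decommission(running_yarn_jobs=2, waited_s=600, timeout_s=600))  # True
```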

Step 5: Configure instance type and billing method

In the middle section of the Configure Auto Scaling panel, configure Instance Type Selection Mode, Billing Method, and Instance Type.

Single billing method

The system matches instance types to the vCPU and memory specifications you enter and lists them in the Instance Type section. The order in which you select instance types sets their priority. Choose one of the following billing methods:

- Pay-as-you-go: The hourly price shown below each disk specification is the sum of the EMR service price and ECS instance price.
- Preemptible instance: Uses the same priority order as pay-as-you-go. The pay-as-you-go price is shown for reference, and you can set a maximum hourly price. An instance type appears in the list only if its price is at or below your limit. For more information, see What are preemptible instances?

  Important: Preemptible instances can be released when a bid fails or resources become unavailable. Do not use preemptible instances if your jobs have strict SLA requirements.

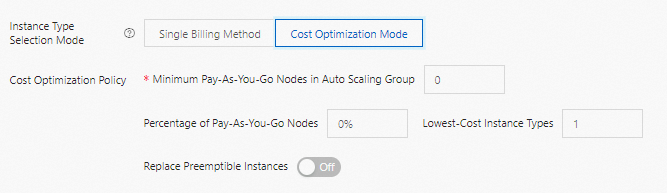
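The effect of the maximum hourly price on the instance type list can be pictured as a filter that preserves selection order. The following is a minimal sketch. The type names and prices are placeholders rather than real ECS instance types or prices, and it assumes the comparison is made against each type's preemptible hourly price.

```python
from typing import NamedTuple


class InstanceType(NamedTuple):
    name: str                 # placeholder name, not a real ECS instance type
    preemptible_price: float  # illustrative hourly price


def eligible_types(selected: list[InstanceType], max_hourly_price: float) -> list[InstanceType]:
    """Keep types priced at or below the limit, preserving selection order (priority)."""
    return [t for t in selected if t.preemptible_price <= max_hourly_price]


selected = [
    InstanceType("type-a", 0.42),
    InstanceType("type-b", 0.18),
    InstanceType("type-c", 0.25),
]
print([t.name for t in eligible_types(selected, max_hourly_price=0.25)])
# ['type-b', 'type-c']
```

Because the list comprehension keeps the input order, the remaining types retain the priority implied by the order in which they were selected.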

Cost Optimization Mode

Cost Optimization Mode lets you define a mixed fleet of pay-as-you-go and preemptible instances to balance cost and stability.

| Parameter | Description |
|---|---|
| Minimum pay-as-you-go nodes in auto scaling group | The minimum number of pay-as-you-go instances the group must maintain. When the current count falls below this value, the system creates pay-as-you-go instances first. |
| Percentage of pay-as-you-go nodes | After the minimum is met, this percentage determines the proportion of new pay-as-you-go instances relative to the total group size. |
| Lowest-cost instance types | The number of cheapest instance types to consider when creating preemptible instances. The system distributes preemptible instances evenly across these types. Maximum value: 3. |
| Replace preemptible instances | When enabled, the system automatically replaces a preemptible instance with a pay-as-you-go instance approximately 5 minutes before the preemptible instance is reclaimed. |

Common Cost Optimization Mode configurations:

If you leave Minimum pay-as-you-go nodes, Percentage of pay-as-you-go nodes, and Lowest-cost instance types blank, the group operates as a common cost optimization scaling group. Configuring these parameters creates a mixed-instance cost optimization scaling group. Both types are fully compatible.

To replicate common cost optimization scaling group behavior using mixed-instance settings:

- Pay-as-you-go instances only: Set Minimum to `0`, Percentage to `100%`, Lowest-cost instance types to `1`.
- Prefer preemptible instances: Set Minimum to `0`, Percentage to `0%`, Lowest-cost instance types to `1`.
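To make the interaction of the three Cost Optimization Mode parameters concrete, the sketch below plans a single scale-out. It is an approximation under stated assumptions, not the service's actual algorithm. The function `plan_scale_out` and the type names are hypothetical. It applies the percentage to the nodes added beyond the pay-as-you-go minimum, rounds the pay-as-you-go share up, and gives any remainder to the cheapest types first.

```python
import math


def plan_scale_out(to_add: int, current_payg: int, min_payg: int, payg_percent: int,
                   preemptible_prices: dict[str, float], lowest_cost_types: int):
    """Return (pay-as-you-go count, {instance type: preemptible count})."""
    if not 0 <= payg_percent <= 100:
        raise ValueError("payg_percent must be between 0 and 100")
    if not 1 <= lowest_cost_types <= 3:
        raise ValueError("lowest_cost_types must be between 1 and 3")
    if not preemptible_prices:
        raise ValueError("at least one instance type is required")

    # 1. Satisfy the pay-as-you-go minimum first.
    base_fill = min(to_add, max(0, min_payg - current_payg))
    remaining = to_add - base_fill

    # 2. Split the rest by percentage (assumption: round pay-as-you-go up).
    payg_extra = math.ceil(remaining * payg_percent / 100)
    preemptible = remaining - payg_extra

    # 3. Spread preemptible nodes evenly over the N cheapest types.
    cheapest = sorted(preemptible_prices, key=preemptible_prices.get)[:lowest_cost_types]
    base, extra = divmod(preemptible, len(cheapest))
    per_type = {name: base + (1 if i < extra else 0) for i, name in enumerate(cheapest)}
    per_type = {name: n for name, n in per_type.items() if n > 0}
    return base_fill + payg_extra, per_type


prices = {"type-a": 0.30, "type-b": 0.10, "type-c": 0.20}  # illustrative prices
print(plan_scale_out(10, 0, 2, 50, prices, 2))  # (6, {'type-b': 2, 'type-c': 2})
print(plan_scale_out(5, 0, 0, 100, prices, 1))  # (5, {})
print(plan_scale_out(5, 0, 0, 0, prices, 1))    # (0, {'type-b': 5})
```

The last two calls correspond to the two presets listed above: pay-as-you-go instances only, and prefer preemptible instances.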

Step 6: Configure the trigger mode and rules

Time-based scaling

Time-based scaling adds or removes a fixed number of task nodes at scheduled times — daily, weekly, or monthly. Use it when your workload follows a predictable pattern.

Auto scaling rules are split into scale-out rules and scale-in rules. Configure them separately. The following table describes the parameters for a scale-out rule (scale-in rules use the same parameters):

| Parameter | Description |
|---|---|
| Rule name | A unique name for the rule within the cluster. |
| Execution rule | Execute repeatedly: triggers at the specified time on a recurring schedule (daily, weekly, or monthly). Execute only once: triggers once at the specified time. |
| Execution time | The time at which the rule runs. |
| Rule expiration time | The date and time after which the rule stops running. |
| Retry time range | The window during which the system retries a failed scaling operation. The system retries every 30 seconds within this window until the operation succeeds. Valid values: 0–21,600 seconds. For example, if another scaling operation is still running or in cooldown when this rule triggers, the system keeps retrying every 30 seconds until the window closes or conditions are met. |
| Number of adjusted instances | The number of task nodes to add (or remove) each time the rule triggers. |
| Cooldown time (s) | The interval between two scale-out activities. Scale-out activities are forbidden during the cooldown. |

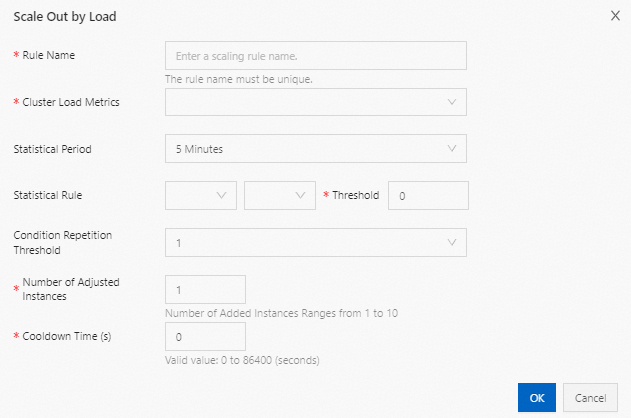
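The Retry time range behavior can be sketched as a loop that retries at a fixed 30-second interval until the operation succeeds or the window closes. The function `run_with_retry` is hypothetical. The `sleep` parameter exists only so the example can run without actually waiting, and the handling of the exact window boundary is an assumption.

```python
import time
from typing import Callable

RETRY_INTERVAL_S = 30    # from the table: the system retries every 30 seconds
MAX_RETRY_WINDOW_S = 21_600


def run_with_retry(attempt: Callable[[], bool], retry_window_s: int,
                   sleep: Callable[[float], None] = time.sleep) -> bool:
    """Call attempt() until it returns True or the retry window is exhausted."""
    if not 0 <= retry_window_s <= MAX_RETRY_WINDOW_S:
        raise ValueError("retry_window_s must be between 0 and 21600")
    elapsed = 0
    while True:
        if attempt():
            return True
        # Stop if the next retry would fall outside the window.
        if elapsed + RETRY_INTERVAL_S > retry_window_s:
            return False
        sleep(RETRY_INTERVAL_S)
        elapsed += RETRY_INTERVAL_S


# Simulated operation: blocked twice (for example by a cooldown), then succeeds.
outcomes = iter([False, False, True])
waits = []
ok = run_with_retry(lambda: next(outcomes), retry_window_s=120, sleep=waits.append)
print(ok, waits)  # True [30, 30]
```

With a window of 0 the operation is attempted once and never retried.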

Load-based scaling

Load-based scaling adds or removes task nodes based on YARN cluster metrics. Use it when workload fluctuations are hard to predict in advance.

| Parameter | Description |
|---|---|
| Rule name | A unique name for the rule within the cluster. |
| Cluster load metrics | The YARN metric that drives this rule. Metrics are sourced from YARN. For the full list, see the official Hadoop documentation and the YARN metric reference table at the end of this topic. |
| Statistical period | The time window over which the system evaluates the selected metric using the configured aggregation (average, maximum, or minimum). |
| Statistical rule | The aggregation dimension and threshold condition that must be met for the rule to count toward the repetition threshold. |
| Condition repetition threshold | The number of consecutive statistical periods during which the threshold must be met before scaling is triggered. |
| Number of adjusted instances | The number of task nodes to add (or remove) each time the rule triggers. |
| Cooldown time (s) | The minimum interval between two consecutive scaling operations. During cooldown, scaling is not triggered even if the threshold conditions are met again. After cooldown ends, the rule triggers as soon as conditions are met. |
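The parameters in this table combine into one decision: aggregate the metric over each statistical period, compare it with the threshold, require the condition to hold for the configured number of consecutive periods, and respect the cooldown. The sketch below illustrates that logic only. The function `should_trigger` and its argument names are hypothetical, and the real evaluation is performed by the service through CloudMonitor.

```python
import operator
from statistics import mean

AGGREGATIONS = {"average": mean, "maximum": max, "minimum": min}
COMPARATORS = {">": operator.gt, ">=": operator.ge, "<": operator.lt, "<=": operator.le}


def should_trigger(periods: list[list[float]], aggregation: str, comparator: str,
                   threshold: float, repetitions: int,
                   seconds_since_last_scaling: float, cooldown_s: float) -> bool:
    """periods holds the metric samples of each statistical period, oldest first."""
    if repetitions < 1:
        raise ValueError("repetitions must be at least 1")
    if seconds_since_last_scaling < cooldown_s:
        return False  # still cooling down
    if len(periods) < repetitions:
        return False  # not enough history yet
    aggregate = AGGREGATIONS[aggregation]
    compare = COMPARATORS[comparator]
    # The condition must hold in each of the most recent consecutive periods.
    return all(compare(aggregate(samples), threshold) for samples in periods[-repetitions:])


# Illustrative YARN.PendingVCores samples for three statistical periods.
pending_vcores = [[0, 2], [8, 12], [10, 14]]
print(should_trigger(pending_vcores, "average", ">=", 8, 2, 600, 300))  # True
print(should_trigger(pending_vcores, "average", ">=", 8, 3, 600, 300))  # False
```

The second call fails because the oldest period averages 1, which breaks the run of three consecutive periods.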

Step 7: Save and enable auto scaling

Click Save to save your configuration.

Saving the configuration does not enable auto scaling. To activate the rules, see Enable or disable auto scaling (Hadoop clusters).

YARN metric reference

The following YARN metrics are available for load-based scaling rules. Scale-out typically triggers when a resource demand metric (Pending, AllocatedContainers) rises above a threshold; scale-in triggers when it falls below the threshold.

| EMR auto scaling metric | Service | Description |
|---|---|---|
| YARN.AvailableVCores | YARN | Available vCPUs |
| YARN.PendingVCores | YARN | vCPUs requested but not yet allocated |
| YARN.AllocatedVCores | YARN | Allocated vCPUs |
| YARN.ReservedVCores | YARN | Reserved vCPUs |
| YARN.AvailableMemory | YARN | Available memory (MB) |
| YARN.PendingMemory | YARN | Memory requested but not yet allocated (MB) |
| YARN.AllocatedMemory | YARN | Allocated memory (MB) |
| YARN.ReservedMemory | YARN | Reserved memory (MB) |
| YARN.AppsRunning | YARN | Running applications |
| YARN.AppsPending | YARN | Pending applications |
| YARN.AppsKilled | YARN | Terminated applications |
| YARN.AppsFailed | YARN | Failed applications |
| YARN.AppsCompleted | YARN | Completed applications |
| YARN.AppsSubmitted | YARN | Submitted applications |
| YARN.AllocatedContainers | YARN | Allocated YARN containers |
| YARN.PendingContainers | YARN | Containers requested but not yet allocated |
| YARN.ReservedContainers | YARN | Reserved YARN containers |
| YARN.MemoryAvailablePrecentage | YARN | Available memory as a percentage of total memory: AvailableMemory / Total Memory |
| YARN.ContainerPendingRatio | YARN | Ratio of pending to allocated containers: PendingContainers / AllocatedContainers |
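The two derived metrics at the end of the table follow directly from the formulas in the Description column. The sketch below shows the arithmetic with hypothetical helper names. It assumes the percentage is expressed on a 0 to 100 scale and that the ratio is undefined when no containers are allocated. The source does not specify either point.

```python
from typing import Optional


def memory_available_percentage(available_mb: float, total_mb: float) -> float:
    """AvailableMemory / Total Memory, scaled to 0-100 (scale is an assumption)."""
    if total_mb <= 0:
        raise ValueError("total_mb must be positive")
    return available_mb / total_mb * 100


def container_pending_ratio(pending: int, allocated: int) -> Optional[float]:
    """PendingContainers / AllocatedContainers; None when nothing is allocated."""
    if allocated == 0:
        return None
    return pending / allocated


print(memory_available_percentage(4096, 16384))  # 25.0
print(container_pending_ratio(6, 4))             # 1.5
```

A low available-memory percentage or a high pending ratio both indicate demand outpacing capacity, which is the situation scale-out rules are meant to catch.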