The training and inference process of a large language model (LLM) involves high energy consumption and long response times. These challenges limit the deployment of LLMs in resource-constrained environments. To address these challenges, PAI provides a model distillation feature. This feature transfers knowledge from an LLM to a smaller model, which significantly reduces the model size and compute resource requirements while retaining most of its performance. This enables deployment in a wider range of real-world application scenarios. This topic describes the complete development workflow of a data augmentation and model distillation solution for LLMs, using the Qwen2 LLM as an example.

Workflow

The development workflow of the solution is as follows:

Prepare a training dataset based on the data format requirements and data preparation strategies.

(Optional) Augment instructions using an instruction augmentation model

In PAI-Model Gallery, you can use the pre-built instruction augmentation model Qwen2-1.5B-Instruct-Exp or Qwen2-7B-Instruct-Exp to automatically generate more instructions based on the semantics of your prepared dataset. Instruction augmentation helps improve the generalization of distillation training for LLMs.

(Optional) Optimize instructions using an instruction optimization model

In PAI-Model Gallery, you can use the pre-built instruction optimization model Qwen2-1.5B-Instruct-Refine or Qwen2-7B-Instruct-Refine to optimize and refine the instructions (and augmented instructions) in your prepared dataset. Instruction optimization helps improve the language generation capabilities of LLMs.

Deploy a teacher LLM to generate corresponding responses

In PAI-Model Gallery, you can use the pre-built teacher LLM Qwen2-72B-Instruct to generate responses to the instructions in the training dataset. This process distills the knowledge from the teacher LLM.

Distill and train a smaller student model

In PAI-Model Gallery, you can use the generated instruction-response dataset to distill and train a smaller student model for use in real-world application scenarios.
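The workflow above can be read as a small data pipeline. The following is a minimal sketch of that data flow, not the PAI implementation: `build_distillation_dataset` and its `augment`, `refine`, and `teacher` callables are hypothetical names that stand in for calls to the deployed augmentation, optimization, and teacher model services. For determinism the sketch cycles through the seed instructions in order, whereas the batch script later in this topic samples them at random.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]


def build_distillation_dataset(
    seeds: List[str],
    augment: Callable[[str], str],
    refine: Callable[[str], str],
    teacher: Callable[[str], str],
    target_size: int,
) -> List[Record]:
    """Expand seed instructions, refine them, then label them with the teacher."""
    if not seeds:
        raise ValueError("at least one seed instruction is required")
    instructions = list(seeds)
    # Optional step: derive new instructions from the seeds until the
    # dataset reaches the requested size (round-robin over the seeds).
    i = 0
    while len(instructions) < target_size:
        instructions.append(augment(seeds[i % len(seeds)]))
        i += 1
    # Optional step: rewrite each instruction into a more detailed one.
    refined = [refine(text) for text in instructions]
    # The teacher's response becomes the training target for the student.
    return [{"instruction": text, "output": teacher(text)} for text in refined]
```

The returned list has the same instruction-response shape that the student model is trained on in the last step.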

Prerequisites

Before you begin, complete the following preparations:

Deep Learning Containers (DLC) and Elastic Algorithm Service (EAS) of PAI are activated on a pay-as-you-go basis, and a default workspace is created. For more information, see Activate PAI and create a default workspace.

An Object Storage Service (OSS) bucket is created to store training data and the model file obtained from model training. For information about how to create a bucket, see Quick Start.

Prepare instruction data

For information about how to prepare instruction data, see Data preparation strategies and Data format requirements.

Data preparation strategies

To improve the effectiveness and stability of model distillation, prepare your data based on the following strategies:

Prepare at least several hundred data entries. A larger dataset helps improve the model's effectiveness.

The distribution of the seed dataset should be as broad and balanced as possible. For example, task scenarios should be diverse, and the lengths of data inputs and outputs should cover both short and long scenarios. If the data includes more than one language, such as Chinese and English, ensure that the language distribution is relatively balanced.

Remove abnormal data. Even a small amount of abnormal data can have a significant impact on the fine-tuning results. Use a rule-based method to clean the data and filter out abnormal entries from the dataset.
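As an illustration of rule-based cleaning, the following sketch filters a list of records that each carry an `instruction` field. The function name `clean_instructions` and the length thresholds are assumptions chosen for the example, not values recommended by PAI; adjust the rules to your own data.

```python
from typing import Dict, List


def clean_instructions(
    records: List[Dict[str, str]],
    min_chars: int = 5,      # assumed lower bound, tune for your data
    max_chars: int = 2000,   # assumed upper bound, tune for your data
) -> List[Dict[str, str]]:
    """Drop malformed, too short, too long, and duplicate instructions."""
    seen = set()
    cleaned = []
    for record in records:
        text = record.get("instruction")
        if not isinstance(text, str):
            continue  # missing or non-string instruction field
        text = " ".join(text.split())  # collapse runs of whitespace
        if not (min_chars <= len(text) <= max_chars):
            continue  # empty, trivially short, or abnormally long entry
        key = text.casefold()
        if key in seen:
            continue  # case-insensitive duplicate
        seen.add(key)
        cleaned.append({"instruction": text})
    return cleaned
```

Run the filter once on the seed dataset, and again after augmentation if generated instructions are appended to it.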

Data format requirements

The training dataset must be a JSON file that contains an `instruction` field for the input instruction. The following code shows an example of instruction data:

[
  {
    "instruction": "What were the main measures taken by governments to stabilize financial markets during the 2008 financial crisis?"
  },
  {
    "instruction": "In the context of increasing climate change, what important actions have governments taken to promote sustainable development?"
  },
  {
    "instruction": "What were the main measures taken by governments to support economic recovery during the bursting of the tech bubble in 2001?"
  }
]

(Optional) Augment instructions using an instruction augmentation model

Instruction augmentation is a common prompt engineering technique for LLMs that automatically expands a user-provided instruction dataset.

For example, if you provide the following input:

How to make fish-fragrant shredded pork?
How to prepare for the GRE exam?
What should I do if a friend misunderstands me?

The model output is as follows:

Teach me how to make mapo tofu?
Provide a detailed guide on how to prepare for the Test of English as a Foreign Language (TOEFL) exam?
How will you adjust your mindset if you encounter setbacks in your work?

Because instruction diversity directly affects the generalization capability of an LLM, instruction augmentation can effectively improve the performance of the final student model. Based on the Qwen2 base model, PAI provides two self-developed instruction augmentation models: Qwen2-1.5B-Instruct-Exp and Qwen2-7B-Instruct-Exp. You can deploy a model service with a single click in PAI-Model Gallery. Follow these steps:

Deploy a model service

Follow these steps to deploy an instruction augmentation model as an EAS online service:

Go to the Model Gallery page.

Log on to the PAI console.

In the upper-left corner, select a region.

In the navigation pane on the left, click Workspaces. Click the name of the target workspace.

In the navigation pane on the left, go to Model Gallery.

On the Model Gallery page, search the model list on the right for Qwen2-1.5B-Instruct-Exp or Qwen2-7B-Instruct-Exp, and click Deploy on the corresponding card.

In the Deploy configuration panel, the Model Service Information and Resource Deployment Information are configured by default. You can modify the parameters as needed. After you finish configuring the parameters, click the Deploy button.

In the Billing Notification dialog box, click OK.

You are automatically redirected to the Deployment Tasks page. A Status of Running indicates a successful deployment.

Call the model service

After the model service is deployed, you can use an API for model inference. For more information, see Deploy a large language model. The following example shows how to send a model service request from a client:

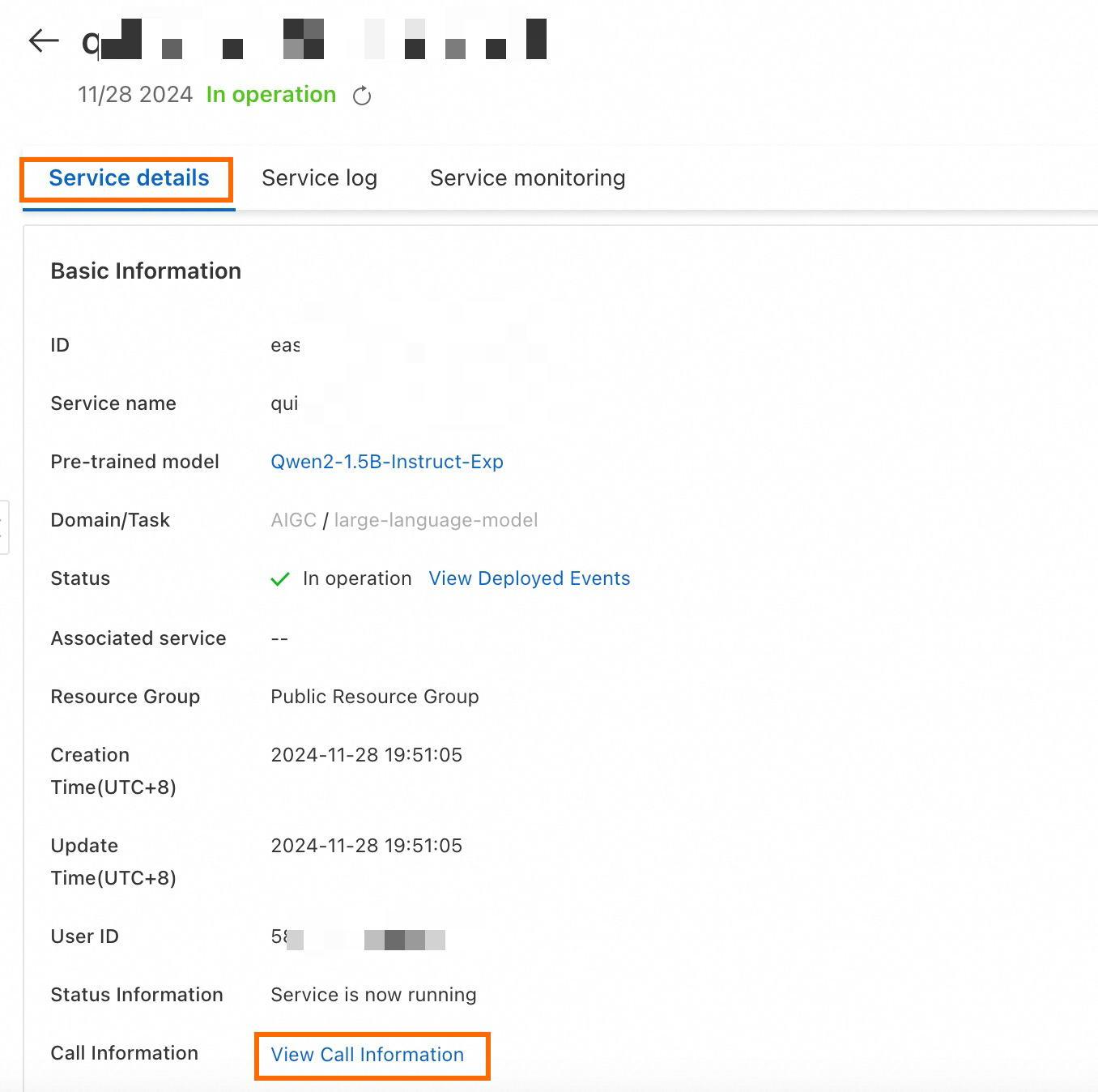

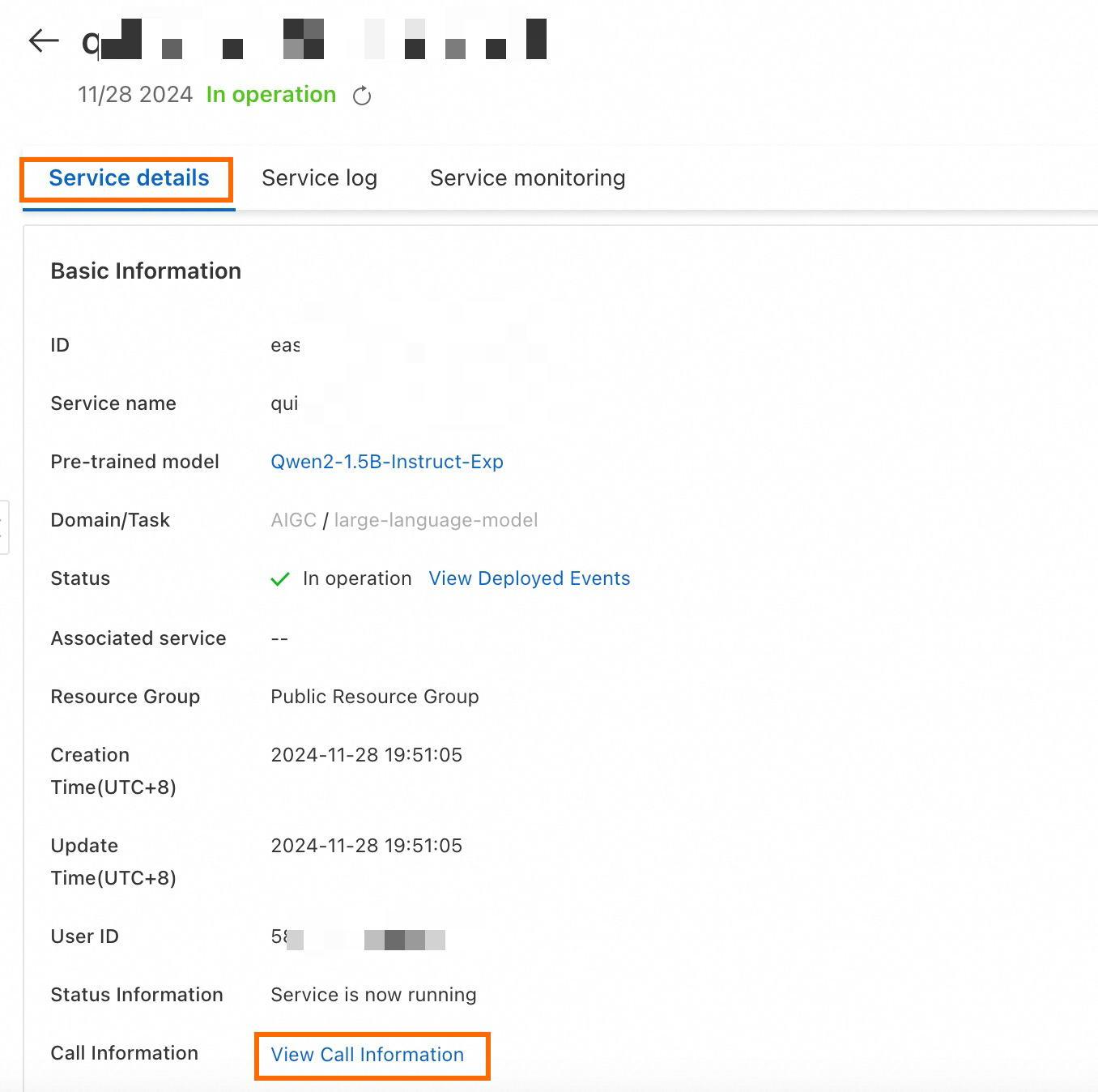

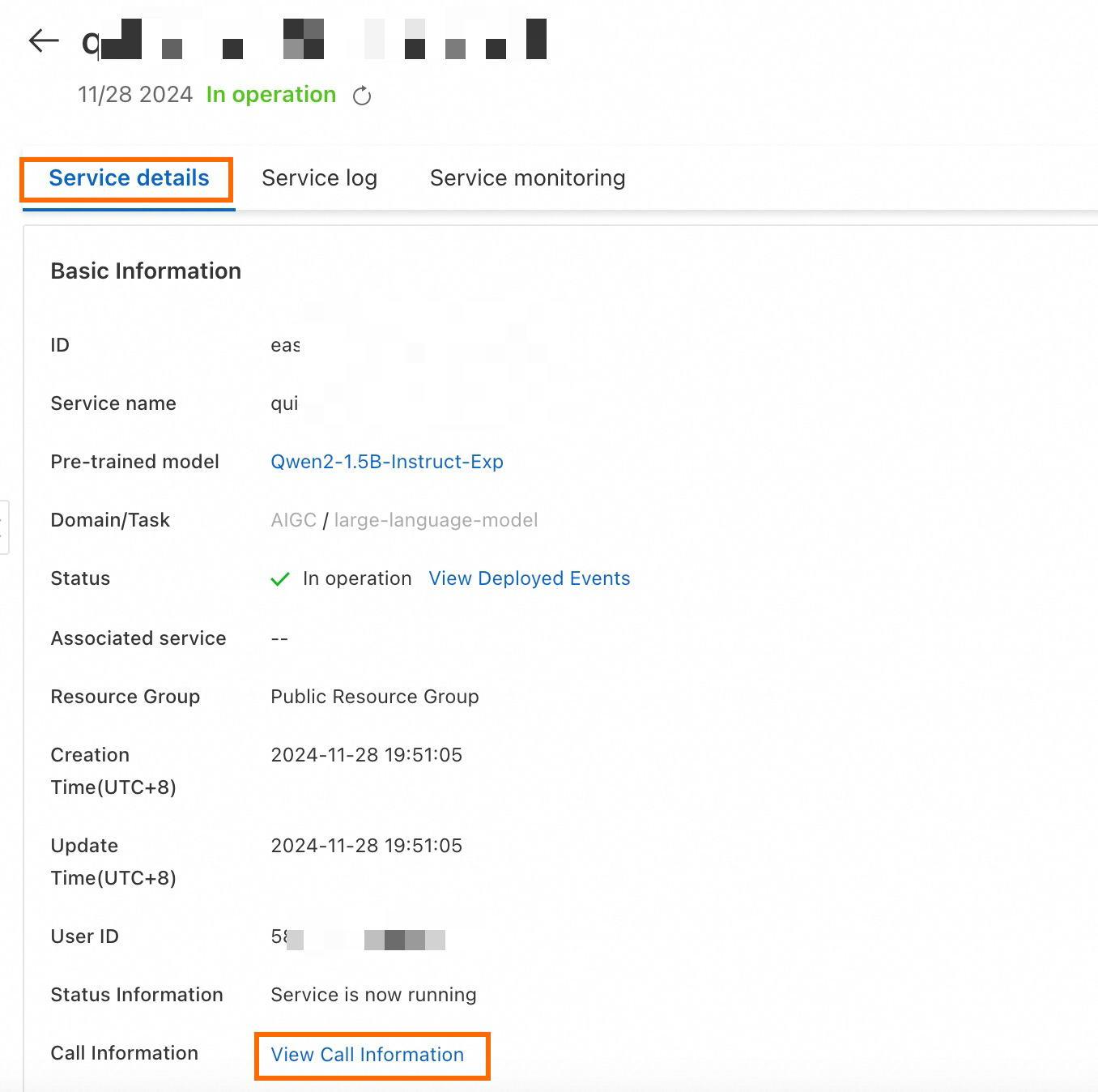

Obtain the service endpoint and token.

On the Service Details page, click View Endpoint Information in the Basic Information area.

In the Endpoint Information dialog box, copy the service endpoint and token.

In a terminal, create and run the following Python script to call the service.

import argparse
import json
from typing import List

import requests


def post_http_request(prompt: str,
                      system_prompt: str,
                      host: str,
                      authorization: str,
                      max_new_tokens: int,
                      temperature: float,
                      top_k: int,
                      top_p: float) -> requests.Response:
    # The EAS token is passed in the Authorization header.
    headers = {
        "User-Agent": "Test Client",
        "Authorization": f"{authorization}"
    }
    pload = {
        "prompt": prompt,
        "system_prompt": system_prompt,
        "top_k": top_k,
        "top_p": top_p,
        "temperature": temperature,
        "max_new_tokens": max_new_tokens,
        "do_sample": True,
        "eos_token_id": 151645
    }
    response = requests.post(host, headers=headers, json=pload)
    return response


def get_response(response: requests.Response) -> List[str]:
    # The generated text is returned in the "response" field of the JSON body.
    data = json.loads(response.content)
    output = data["response"]
    return output


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--top-k", type=int, default=50)
    parser.add_argument("--top-p", type=float, default=0.95)
    parser.add_argument("--max-new-tokens", type=int, default=2048)
    parser.add_argument("--temperature", type=float, default=1)
    parser.add_argument("--prompt", type=str, default="Sing me a song.")

    args = parser.parse_args()

    prompt = args.prompt
    top_k = args.top_k
    top_p = args.top_p
    temperature = args.temperature
    max_new_tokens = args.max_new_tokens

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    print(f" --- input: {prompt}\n", flush=True)
    system_prompt = "I want you to act as an instruction creator. Your goal is to draw inspiration from the [given instruction] to create a brand new instruction."
    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    print(f" --- output: {output}\n", flush=True)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.
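The scripts in this topic hardcode the `host` and `authorization` placeholders. As one possible alternative, the following sketch reads both values from environment variables so that the token does not end up in source control. The variable names `EAS_HOST` and `EAS_TOKEN` and the helper names are assumptions made for this example; they are not defined by EAS.

```python
import os
from typing import Dict, Tuple


def load_eas_credentials() -> Tuple[str, str]:
    """Read the endpoint and token from the (assumed) EAS_HOST / EAS_TOKEN variables."""
    host = os.environ.get("EAS_HOST", "")
    token = os.environ.get("EAS_TOKEN", "")
    if not host or not token:
        raise RuntimeError("Set EAS_HOST and EAS_TOKEN before calling the service")
    return host, token


def build_headers(token: str) -> Dict[str, str]:
    """Build the same headers that post_http_request sends."""
    return {"User-Agent": "Test Client", "Authorization": token}
```

With this in place, `host, authorization = load_eas_credentials()` can replace the two hardcoded assignments in the script.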

Augment instructions in a batch

You can use the EAS online service to make batch calls and augment instructions. The following code shows how to read a custom JSON dataset and call the model interface in a batch for instruction augmentation. In a terminal, create and run the following Python script to call the model service in a batch.

import json
import random
from typing import List

import requests
from tqdm import tqdm

# post_http_request and get_response are the functions defined in the
# script in "Call the model service". Copy them into this file first.

input_file_path = "input.json"  # The name of the input file.

with open(input_file_path) as fp:
    data = json.load(fp)

total_size = 10  # The expected total number of data entries after expansion.

pbar = tqdm(total=total_size)

while len(data) < total_size:
    # Pick one existing instruction at random as the seed for a new one.
    prompt = random.sample(data, 1)[0]["instruction"]
    system_prompt = "I want you to act as an instruction creator. Your goal is to draw inspiration from the [given instruction] to create a brand new instruction."
    top_k = 50
    top_p = 0.95
    temperature = 1
    max_new_tokens = 2048

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    temp = {
        "instruction": output
    }
    data.append(temp)
    pbar.update(1)

pbar.close()

output_file_path = "output.json"  # The name of the output file.

with open(output_file_path, 'w') as f:
    json.dump(data, f, ensure_ascii=False)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.

input_file_path: The local path to your dataset file.

The definitions of the post_http_request and get_response functions are the same as their definitions in the Python script in Call the model service.

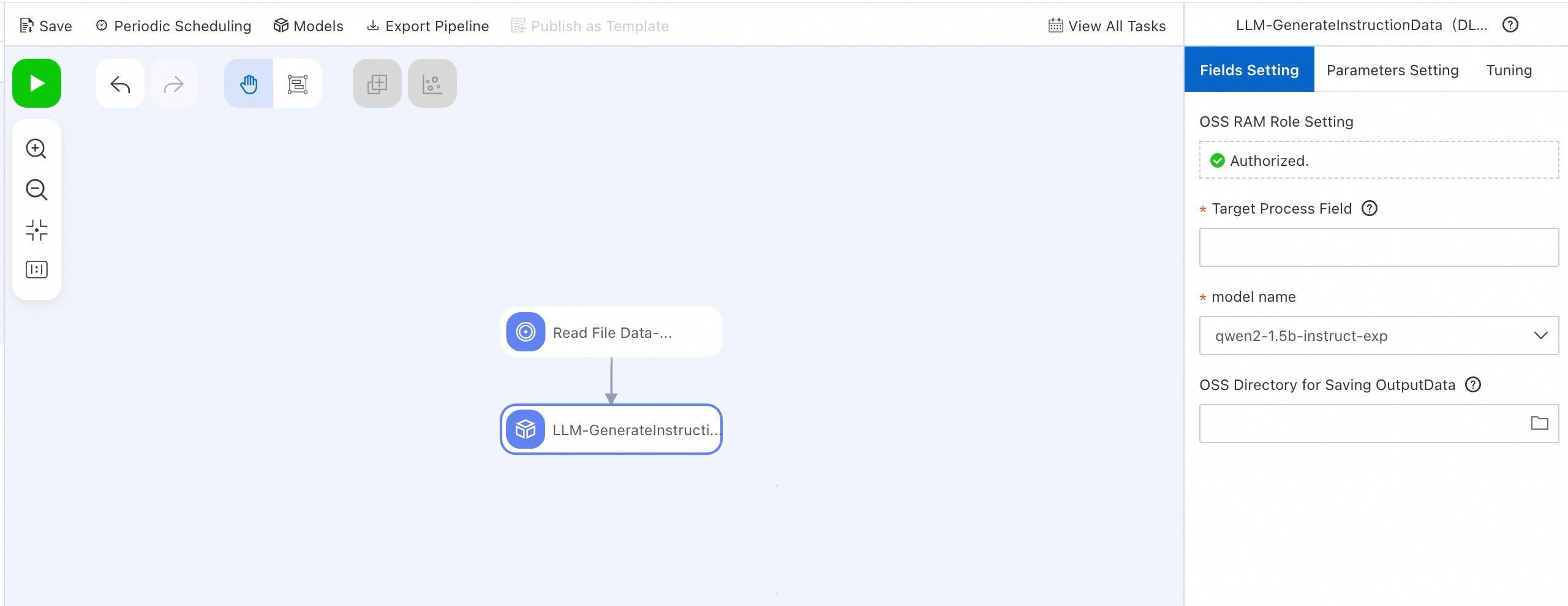

Alternatively, you can use the LLM-Instruction Augmentation (DLC) component in PAI-Designer to perform this function without writing code. For more information, see Custom pipelines.
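A batch run sends many requests in sequence, so a single transient network error can abort the whole loop. The following is a minimal sketch of a retry wrapper with exponential backoff. `call_with_retry` is a hypothetical helper, not part of the PAI scripts, and it retries on any exception, which is an assumption you may want to narrow to network errors.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def call_with_retry(
    call: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(); on failure wait base_delay * 2**attempt seconds and try again."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception as error:  # broad on purpose in this sketch
            last_error = error
            if attempt < max_attempts - 1:
                sleep(base_delay * (2 ** attempt))
    raise RuntimeError(f"call failed after {max_attempts} attempts") from last_error
```

Inside the batch loop, the request could then be wrapped as `output = call_with_retry(lambda: get_response(post_http_request(prompt, system_prompt, host, authorization, max_new_tokens, temperature, top_k, top_p)))`.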

(Optional) Optimize instructions using an instruction optimization model

Instruction optimization is another common prompt engineering technique for LLMs. It is used to automatically optimize a user-provided instruction dataset to generate more detailed instructions, which enables the LLM to provide more detailed responses.

For example, if you provide the following input to the instruction optimization model:

How to make fish-fragrant shredded pork?
How to prepare for the GRE exam?
What should I do if a friend misunderstands me?

The model output is as follows:

Provide a detailed recipe of Chinese Sichuan-style fish-fragrant shredded pork. The recipe contains a list of specific ingredients, such as vegetables, pork, and spices, and detailed cooking instructions. If possible, recommend side dishes and other main courses that pair well with this dish.

Provide a detailed guide, including registration for the GRE test, required materials, test preparation strategies, and recommended review materials. If possible, recommend some effective practice questions and mock exams to help me prepare for the exam.

Provide a detailed guide to teach me how to be calm and rational, and communicate effectively to solve the problem when I am misunderstood by my friends. Provide some practical suggestions, such as how to express my thoughts and feelings, and how to avoid aggravating misunderstandings, and provide specific dialogue scenarios and situations so that I can better understand and practice.

Because the level of detail in an instruction directly affects the LLM's output, instruction optimization can effectively improve the performance of the final student model. Based on the Qwen2 base model, PAI provides two instruction optimization models: Qwen2-1.5B-Instruct-Refine and Qwen2-7B-Instruct-Refine. You can deploy a model service with a single click in PAI-Model Gallery. Follow these steps:

Deploy a model service

Go to the Model Gallery page.

Log on to the PAI console.

In the upper-left corner, select a region.

In the navigation pane on the left, click Workspaces. Click the name of the target workspace.

In the navigation pane on the left, go to Model Gallery.

On the Model Gallery page, search for Qwen2-1.5B-Instruct-Refine or Qwen2-7B-Instruct-Refine, and then click Deploy on the corresponding model card.

In the Deploy configuration panel, the Model Service Information and Resource Deployment Information are configured by default. You can modify the parameters as needed. After you finish configuring the parameters, click the Deploy button.

In the Billing Notification dialog box, click OK.

You are automatically redirected to the Deployment Tasks page. A Status of Running indicates a successful deployment.

Call the model service

After the model service is deployed, you can use an API for model inference. For more information, see Deploy a large language model. The following example shows how to send a model service request from a client:

Obtain the service endpoint and token.

On the Service Details page, click View Endpoint Information in the Basic Information area.

In the Endpoint Information dialog box, copy the service endpoint and token.

In a terminal, create and run the following Python script to call the service.

import argparse
import json
from typing import List

import requests


def post_http_request(prompt: str,
                      system_prompt: str,
                      host: str,
                      authorization: str,
                      max_new_tokens: int,
                      temperature: float,
                      top_k: int,
                      top_p: float) -> requests.Response:
    # The EAS token is passed in the Authorization header.
    headers = {
        "User-Agent": "Test Client",
        "Authorization": f"{authorization}"
    }
    pload = {
        "prompt": prompt,
        "system_prompt": system_prompt,
        "top_k": top_k,
        "top_p": top_p,
        "temperature": temperature,
        "max_new_tokens": max_new_tokens,
        "do_sample": True,
        "eos_token_id": 151645
    }
    response = requests.post(host, headers=headers, json=pload)
    return response


def get_response(response: requests.Response) -> List[str]:
    # The generated text is returned in the "response" field of the JSON body.
    data = json.loads(response.content)
    output = data["response"]
    return output


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--top-k", type=int, default=2)
    parser.add_argument("--top-p", type=float, default=0.95)
    parser.add_argument("--max-new-tokens", type=int, default=256)
    parser.add_argument("--temperature", type=float, default=0.5)
    parser.add_argument("--prompt", type=str, default="Sing me a song.")

    args = parser.parse_args()

    prompt = args.prompt
    top_k = args.top_k
    top_p = args.top_p
    temperature = args.temperature
    max_new_tokens = args.max_new_tokens

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    print(f" --- input: {prompt}\n", flush=True)
    system_prompt = "Optimize this instruction to make it more detailed and specific."
    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    print(f" --- output: {output}\n", flush=True)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.

Optimize instructions in a batch

You can use the preceding EAS online service to make calls and optimize instructions in batches. The following code example shows how to read a custom JSON dataset and call the model interface in batches for quality optimization. In a terminal, create and run the following Python file to call the model service in batches.

import json
from typing import List

import requests
from tqdm import tqdm

# post_http_request and get_response are the functions defined in the
# script in "Call the model service". Copy them into this file first.

input_file_path = "input.json"  # The name of the input file.

with open(input_file_path) as fp:
    data = json.load(fp)

pbar = tqdm(total=len(data))
new_data = []

for d in data:
    # Each instruction is sent to the optimization model on its own.
    prompt = d["instruction"]
    system_prompt = "Optimize the following instruction."
    top_k = 50
    top_p = 0.95
    temperature = 1
    max_new_tokens = 2048

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    temp = {
        "instruction": output
    }
    new_data.append(temp)
    pbar.update(1)

pbar.close()

output_file_path = "output.json"  # The name of the output file.

with open(output_file_path, 'w') as f:
    json.dump(new_data, f, ensure_ascii=False)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.

input_file_path: The local path to your dataset file.

The definitions of the post_http_request and get_response functions are the same as those in the Python script in Call the model service.

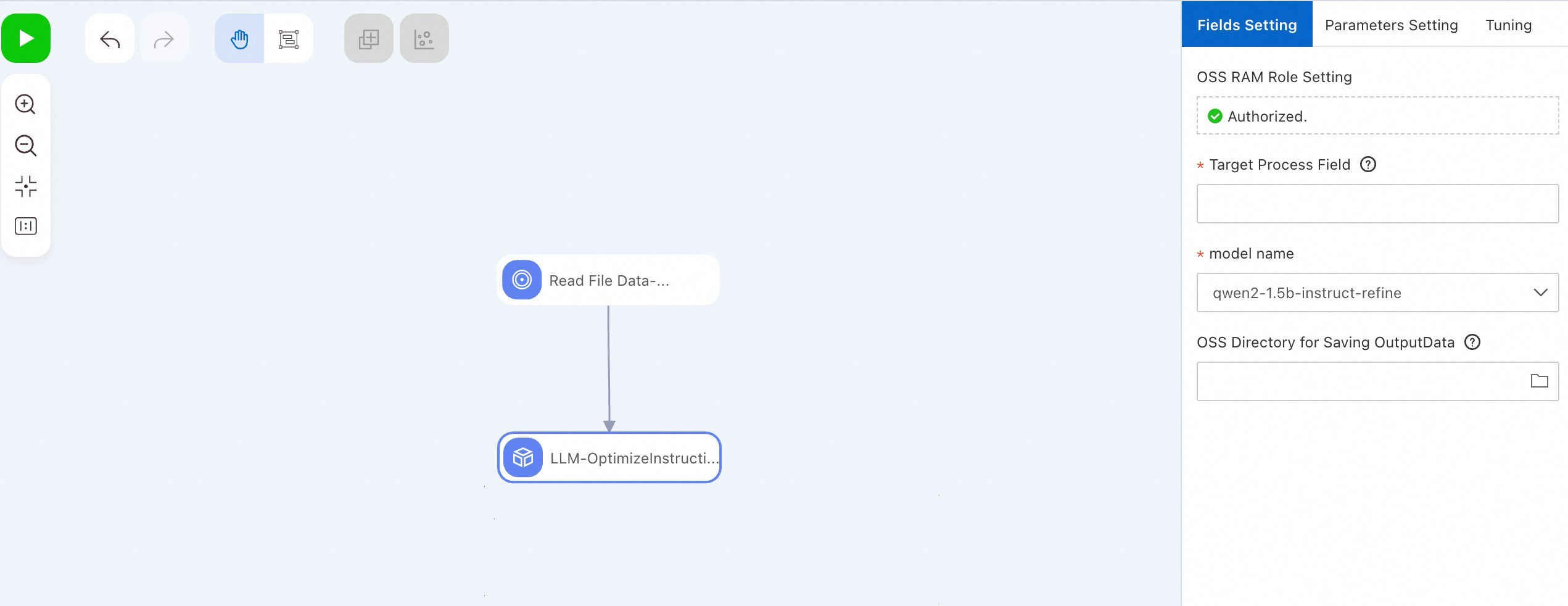

You can also use the LLM-Instruction Optimization (DLC) component in PAI-Designer to perform the same function without writing code. For more information, see Custom pipelines.
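The batch script sends one request at a time. If the deployed service has capacity for parallel requests, which depends on the resources you selected and is an assumption here, the calls could be issued from a small thread pool. The following sketch uses a hypothetical `optimize_all` helper whose `optimize` callable stands in for a function that sends one instruction to the service and returns the optimized text.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def optimize_all(
    records: List[Dict[str, str]],
    optimize: Callable[[str], str],
    max_workers: int = 4,  # assumed value, size it to your service capacity
) -> List[Dict[str, str]]:
    """Optimize every instruction concurrently and keep the input order."""
    prompts = [record["instruction"] for record in records]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in input order, even when the
        # underlying calls finish out of order.
        outputs = list(pool.map(optimize, prompts))
    return [{"instruction": output} for output in outputs]
```

Because the output order matches the input order, the result can be written to the output file in the same way as `new_data` in the sequential script.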

Deploy a teacher LLM to generate corresponding responses

Deploy a model service

After optimizing the instructions in the dataset, follow these steps to deploy a teacher LLM to generate the corresponding responses.

Go to the Model Gallery page.

Log on to the PAI console.

In the upper-left corner, select a region.

In the navigation pane on the left, click Workspaces. Click the name of the target workspace.

In the navigation pane on the left, go to Model Gallery.

On the Model Gallery page, search for Qwen2-72B-Instruct in the model list, and click Deploy in the corresponding card.

In the Deploy configuration panel, the Model Service Information and Resource Deployment Information are configured by default. You can modify the parameters as needed. After you finish configuring the parameters, click the Deploy button.

In the Billing Notification dialog box, click OK.

You are automatically redirected to the Deployment Tasks page. A Status of Running indicates a successful deployment.

Call the model service

After the model service is deployed, you can use an API for model inference. For more information, see Deploy a large language model. The following example shows how to send a model service request from a client:

Obtain the service endpoint and token.

On the Service Details page, click View Endpoint Information in the Basic Information area.

In the Endpoint Information dialog box, copy the service endpoint and token.

In a terminal, create and run a Python file with the following code to call the service.

import argparse
import json
from typing import List

import requests


def post_http_request(prompt: str,
                      system_prompt: str,
                      host: str,
                      authorization: str,
                      max_new_tokens: int,
                      temperature: float,
                      top_k: int,
                      top_p: float) -> requests.Response:
    # The EAS token is passed in the Authorization header.
    headers = {
        "User-Agent": "Test Client",
        "Authorization": f"{authorization}"
    }
    pload = {
        "prompt": prompt,
        "system_prompt": system_prompt,
        "top_k": top_k,
        "top_p": top_p,
        "temperature": temperature,
        "max_new_tokens": max_new_tokens,
        "do_sample": True,
    }
    response = requests.post(host, headers=headers, json=pload)
    return response


def get_response(response: requests.Response) -> List[str]:
    # The generated text is returned in the "response" field of the JSON body.
    data = json.loads(response.content)
    output = data["response"]
    return output


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--top-k", type=int, default=50)
    parser.add_argument("--top-p", type=float, default=0.95)
    parser.add_argument("--max-new-tokens", type=int, default=2048)
    parser.add_argument("--temperature", type=float, default=0.5)
    parser.add_argument("--prompt", type=str)
    parser.add_argument("--system_prompt", type=str)

    args = parser.parse_args()

    prompt = args.prompt
    system_prompt = args.system_prompt
    top_k = args.top_k
    top_p = args.top_p
    temperature = args.temperature
    max_new_tokens = args.max_new_tokens

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    print(f" --- input: {prompt}\n", flush=True)
    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    print(f" --- output: {output}\n", flush=True)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.

Batch-label instructions using the teacher model

The following code shows how to read a custom JSON dataset and call the teacher model in a batch to label instructions. In a terminal, create and run the following Python file to call the model service.

import json
from typing import List

import requests
from tqdm import tqdm

# post_http_request and get_response are the functions defined in the
# script in "Call the model service". Copy them into this file first.

input_file_path = "input.json"  # The name of the input file.

with open(input_file_path) as fp:
    data = json.load(fp)

pbar = tqdm(total=len(data))
new_data = []

for d in data:
    system_prompt = "You are a helpful assistant."
    prompt = d["instruction"]
    print(prompt)
    top_k = 50
    top_p = 0.95
    temperature = 0.5
    max_new_tokens = 2048

    host = "EAS HOST"
    authorization = "EAS TOKEN"

    response = post_http_request(
        prompt, system_prompt,
        host, authorization,
        max_new_tokens, temperature, top_k, top_p)
    output = get_response(response)
    # Keep the instruction together with the teacher's response.
    temp = {
        "instruction": prompt,
        "output": output
    }
    new_data.append(temp)
    pbar.update(1)

pbar.close()

output_file_path = "output.json"  # The name of the output file.

with open(output_file_path, 'w') as f:
    json.dump(new_data, f, ensure_ascii=False)

Where:

host: The service endpoint that you obtained.

authorization: The service token that you obtained.

input_file_path: The local path to your dataset file.

The definitions of the post_http_request and get_response functions are the same as those in the script in Call the model service.
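Before the labeled file is uploaded for training, it can help to check that every record carries both an instruction and a non-empty teacher response. The following is a minimal sketch of such a check. `split_valid_records` is a hypothetical helper, and treating whitespace-only responses as invalid is an assumption made for this example.

```python
from typing import Dict, List, Tuple


def split_valid_records(
    records: List[Dict[str, str]],
) -> Tuple[List[Dict[str, str]], List[int]]:
    """Return the usable instruction-output pairs and the indexes of rejected records."""
    valid: List[Dict[str, str]] = []
    rejected: List[int] = []
    for index, record in enumerate(records):
        instruction = record.get("instruction")
        output = record.get("output")
        if (isinstance(instruction, str) and instruction.strip()
                and isinstance(output, str) and output.strip()):
            valid.append({"instruction": instruction, "output": output})
        else:
            rejected.append(index)  # missing field or empty text
    return valid, rejected
```

The rejected indexes point back into the labeled file, so the affected instructions can be sent to the teacher model again instead of being silently dropped.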

Distill and train a smaller student model

Train the model

After you obtain the responses from the teacher model, you can train the student model in PAI-Model Gallery without writing code. This greatly simplifies the model development process. This solution uses the Qwen2-7B-Instruct model as an example to demonstrate how to train a model in PAI-Model Gallery with prepared training data. The procedure is as follows:

Go to the Model Gallery page.

Log on to the PAI console.

In the upper-left corner, select a region.

In the navigation pane on the left, click Workspaces. Click the name of the target workspace.

In the navigation pane on the left, go to Model Gallery.

On the Model Gallery page, search for and click the Qwen2-7B-Instruct model card to open the model details page.

On the model details page, click Fine-tune in the upper-right corner.

In the Fine-tune panel, configure the following key parameters. Use the default values for other parameters.

| Category | Parameter | Description | Default value |
| --- | --- | --- | --- |
| Dataset Configuration | Training dataset | Select OSS file or directory from the drop-down list, and then select the OSS storage path where the dataset file is located: (1) Click the icon and select the created OSS bucket. (2) Click Upload File and follow the instructions in the console to upload the dataset file obtained from the previous steps to the OSS directory. (3) Click OK. | None |
| Training Output Configuration | model | Click the icon and select an existing OSS directory. | None |
| Training Output Configuration | tensorboard | Click the icon and select an existing OSS directory. | None |
| Compute Resource Configuration | Task resource | Select the resource specification. The system automatically recommends a suitable specification. | None |
| Hyperparameter Configuration | learning_rate | The learning rate for model training. The data type is float. | 5e-5 |
| Hyperparameter Configuration | num_train_epochs | The number of training epochs. The data type is integer. | 1 |
| Hyperparameter Configuration | per_device_train_batch_size | The amount of data on each GPU card in a single training iteration. The data type is integer. | 1 |
| Hyperparameter Configuration | seq_length | The length of the text sequence. The data type is integer. | 128 |
| Hyperparameter Configuration | lora_dim | The LoRA dimension. The data type is integer. When lora_dim > 0, lightweight LoRA/QLoRA training is used. | 32 |
| Hyperparameter Configuration | lora_alpha | The LoRA weight. The data type is integer. This parameter takes effect when lora_dim > 0 for lightweight LoRA/QLoRA training. | 32 |
| Hyperparameter Configuration | load_in_4bit | Specifies whether to load the model in 4-bit quantization. The data type is boolean. Valid values: true, false. When lora_dim > 0, load_in_4bit is true, and load_in_8bit is false, 4-bit QLoRA lightweight training is used. | true |
| Hyperparameter Configuration | load_in_8bit | Specifies whether to load the model in 8-bit quantization. The data type is boolean. Valid values: true, false. When lora_dim > 0, load_in_4bit is false, and load_in_8bit is true, 8-bit QLoRA lightweight training is used. | false |
| Hyperparameter Configuration | gradient_accumulation_steps | The number of gradient accumulation steps. The data type is integer. | 8 |
| Hyperparameter Configuration | apply_chat_template | Specifies whether the algorithm combines the training data with the default chat template to optimize the model output. The data type is boolean. Valid values: true, false. | true |
| Hyperparameter Configuration | system_prompt | The system prompt used for model training. The data type is string. | You are a helpful assistant |

For the Qwen2 series models, the chat template applied by apply_chat_template has the following format:

Question: <|im_end|>\n<|im_start|>user\n + instruction + <|im_end|>\n

Answer: <|im_start|>assistant\n + output + <|im_end|>\n
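To make the chat template concrete, the following sketch assembles one training sample from an instruction-output pair using the Question and Answer parts given for the Qwen2 series. The leading `<|im_start|>system\n` segment is an assumption based on the usual Qwen2 chat layout (the question part begins with `<|im_end|>`, which suggests that a system segment precedes it), and `render_training_text` is a hypothetical helper, not the training algorithm's own code.

```python
def render_training_text(
    instruction: str,
    output: str,
    system_prompt: str = "You are a helpful assistant",
) -> str:
    """Concatenate the system, question, and answer parts of one sample."""
    # Assumed prefix: not part of the documented Question/Answer format.
    system = "<|im_start|>system\n" + system_prompt
    # Question part as documented for the Qwen2 series.
    question = "<|im_end|>\n<|im_start|>user\n" + instruction + "<|im_end|>\n"
    # Answer part as documented for the Qwen2 series.
    answer = "<|im_start|>assistant\n" + output + "<|im_end|>\n"
    return system + question + answer
```

Rendering a few samples this way and comparing their length against seq_length gives a rough idea of how much text one training sequence has to hold.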

After you configure the parameters, click Train.

In the Billing Notification dialog box, click OK.

You are automatically redirected to the training task page.
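The way lora_dim, load_in_4bit, and load_in_8bit combine can be summarized in a few lines of code. The following sketch encodes the two combinations stated in the parameter descriptions. The remaining branches (plain LoRA when neither flag is set, full-parameter training when lora_dim is 0, and rejecting both flags at once) are assumptions, as are the function names and the `num_gpus` parameter. The second function shows the usual arithmetic for the number of samples that contribute to one optimizer step.

```python
def training_mode(lora_dim: int, load_in_4bit: bool, load_in_8bit: bool) -> str:
    """Name the training mode implied by the three hyperparameters."""
    if lora_dim <= 0:
        return "full-parameter"  # assumption: not stated in the parameter table
    if load_in_4bit and not load_in_8bit:
        return "4-bit QLoRA"     # stated in the load_in_4bit description
    if load_in_8bit and not load_in_4bit:
        return "8-bit QLoRA"     # stated in the load_in_8bit description
    if not load_in_4bit and not load_in_8bit:
        return "LoRA"            # assumption: lora_dim > 0 without quantization
    raise ValueError("load_in_4bit and load_in_8bit should not both be true")


def effective_batch_size(
    per_device_train_batch_size: int,
    gradient_accumulation_steps: int,
    num_gpus: int = 1,  # hypothetical: depends on the selected resource specification
) -> int:
    """Samples that contribute to a single optimizer step."""
    return per_device_train_batch_size * gradient_accumulation_steps * num_gpus
```

With the default values, `training_mode(32, True, False)` gives 4-bit QLoRA, and `effective_batch_size(1, 8)` gives 8 samples per optimizer step on a single GPU.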

Deploy a model service

After the model is trained, you can follow these steps to deploy it as an EAS online service.

On the right side of the training task page, click Deploy.

In the deployment configuration panel, the Model Service Information and Resource Deployment Information are configured by default. You can modify these configurations as needed. After you configure the parameters, click the Deploy button.

In the Billing Notification dialog box, click OK.

The system automatically navigates to the Deployment Tasks page. When the Status changes to Running, the service is successfully deployed.

Call the model service

After you deploy a model service, you can use an API to perform model inference. For more information, see Deploy a large language model.

Related documents

For more information about EAS, see EAS overview.

Using the PAI Model Gallery feature, you can easily deploy and fine-tune models from the Llama-3, Qwen1.5, and Stable Diffusion V1.5 series for various scenarios. For more information, see Model Gallery use cases.