Apsara File Storage NAS (NAS) is a distributed file system that provides shared access across multiple pods. Use NAS persistent volumes when your applications need persistent, high-performance shared storage — for example, when multiple pods must read and write the same data simultaneously.

Three provisioning approaches are available:

| Approach | When to use |
|---|---|
| Static provisioning | You already have a NAS file system and want to reference it directly via a PVC annotation. |
| Dynamic provisioning | You do not have a NAS file system. A StorageClass defines the configuration and the system creates the file system automatically when a PVC is created. |
| Inline NFS volume | You want to mount a NAS file system directly in the pod spec, without a PVC or PV. Simpler to configure, without the overhead of managing PVCs and PVs. |

For most use cases, static or dynamic provisioning is recommended because they decouple storage configuration from application deployment.

Prerequisites

Before you begin, make sure that:

- The managed-csiprovisioner component is installed in your ACS cluster. To check, go to your cluster management page in the ACS console, then choose Operations > Add-ons and look for managed-csiprovisioner on the Storage tab.

Limitations

| Limitation | Details |
|---|---|
| Protocol | Only NFSv3 is supported. SMB protocol mounting is not supported. |
| Network scope | A NAS file system can only be mounted to pods in the same VPC. Cross-VPC mounting is not supported. Cross-zone mounting within the same VPC is supported. |

Usage notes

- Shared writes require application-level synchronization. NAS provides shared storage: a single NAS volume can be mounted to multiple pods simultaneously. If multiple pods write to the same data at the same time, your application must handle data synchronization.

- Do not set `securityContext.fsGroup`. The root directory (`/`) of a NAS file system does not support permission, owner, or group changes. Setting `fsGroup` in the pod spec causes the mount to fail.

- Do not delete a mount target while it is in use. Deleting an active mount target may cause the node's operating system to stop responding.
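Because `fsGroup` in the pod spec causes the mount to fail, it can help to scan a manifest before applying it. The following is a minimal sketch, assuming the manifest is a local YAML file. The function name `check_no_fsgroup` is hypothetical, and the check is a plain text search rather than a YAML parse.

```shell
# check_no_fsgroup FILE (hypothetical helper)
# Returns 0 if FILE contains no fsGroup key.
# Returns 1 and prints the matching lines otherwise.
# Plain-text search only: it also flags fsGroup inside comments.
check_no_fsgroup() {
  if grep -n 'fsGroup:' "$1"; then
    echo "fsGroup found in $1: remove it before mounting a NAS volume" >&2
    return 1
  fi
  return 0
}
```

For example, `check_no_fsgroup nas-test.yaml && kubectl create -f nas-test.yaml` applies the manifest only when the check passes.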

Use an existing NAS file system as a persistent volume

Use static provisioning when you already have a NAS file system. The system creates a static persistent volume (PV) based on the mount target address in the PVC annotation and binds the PVC to it automatically.

Step 1: Get NAS file system information

- Get the VPC ID and vSwitch ID used by your ACS pods. Run the following command to read the acs-profile ConfigMap:

  ```shell
  kubectl get cm -n kube-system acs-profile -o yaml
  ```

  Find the `vpcId` and `vSwitchIds` fields in the output. Alternatively, get these values from the ACS console:

  - Log on to the ACS console.
  - On the Clusters page, click the cluster name.
  - In the left-side navigation pane, choose Configurations > ConfigMaps.
  - Set the namespace to kube-system, find acs-profile, and click Edit YAML.
  - Copy the `vpcId` and `vSwitchIds` values.

- Verify that your NAS file system is compatible and get its mount target address.

  - Log on to the NAS console and click File System List.
  - Locate your NAS file system and confirm the following:
    - The NAS file system is in the same region as the ACS cluster. NAS file systems cannot be mounted across regions or VPCs.
    - The NAS file system uses the NFS protocol. SMB is not supported.
    - For best performance, the NAS file system is in the same zone as your pods. Within the same VPC, cross-zone mounting is supported.
  - Get the mount target address:
    - Click the file system ID to open the file system details page.
    - In the left-side navigation pane, click Mount Targets.
    - Confirm that the mount target meets the following requirements, then copy its address:
      - The mount target is in the same VPC as your pods. If the VPCs differ, the mount fails.
      - For best performance, the vSwitch of the mount target matches the vSwitch used by your pods.
      - The mount target status is Available.

      > Note: General-purpose NAS file systems have a mount target created automatically. For Extreme NAS file systems, you must create mount targets manually. If no suitable mount target exists, see Manage mount targets.
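To avoid scanning the full ConfigMap by eye, the command from this step can be filtered down to the two fields of interest. This is a minimal sketch: the function name `show_acs_network_ids` is hypothetical, and it only assumes that `vpcId` and `vSwitchIds` appear as text somewhere in the YAML output, not any particular layout of the ConfigMap.

```shell
# show_acs_network_ids (hypothetical helper)
# Prints only the lines of the acs-profile ConfigMap that mention
# vpcId or vSwitchIds. No assumption is made about their nesting.
show_acs_network_ids() {
  kubectl get cm -n kube-system acs-profile -o yaml | grep -E 'vpcId|vSwitchIds'
}
```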

Step 2: Create a PVC

kubectl

- Save the following content as `nas-pvc.yaml`. Replace `*******-mw***.cn-shanghai.nas.aliyuncs.com` with your actual mount target address.

  ```yaml
  kind: PersistentVolumeClaim
  apiVersion: v1
  metadata:
    name: nas-pvc
    annotations:
      csi.alibabacloud.com/mountpoint: *******-mw***.cn-shanghai.nas.aliyuncs.com
      csi.alibabacloud.com/mount-options: nolock,tcp,noresvport
  spec:
    accessModes:
      - ReadWriteMany
    resources:
      requests:
        storage: 20Gi
    storageClassName: alibaba-cloud-nas
  ```

  Important: When you apply this PVC, the system first creates a static PV based on the NAS configuration in the `annotations`, then creates the PVC and binds it to that PV.

  Key parameters:

  | Parameter | Description |
  |---|---|
  | `csi.alibabacloud.com/mountpoint` | The NAS directory to mount. Enter a mount target address (e.g., `**-**.region.nas.aliyuncs.com`) to mount the root directory (`/`). Append a subdirectory path (e.g., `**-**.region.nas.aliyuncs.com:/dir`) to mount a specific subdirectory. The subdirectory is created automatically if it does not exist. |
  | `csi.alibabacloud.com/mount-options` | Mount options. Use `nolock,tcp,noresvport`. |
  | `accessModes` | Access mode. `ReadWriteMany` allows multiple pods to read and write simultaneously. |
  | `storage` | Storage capacity allocated to the pod. |

-

Create the PVC:

kubectl create -f nas-pvc.yaml -

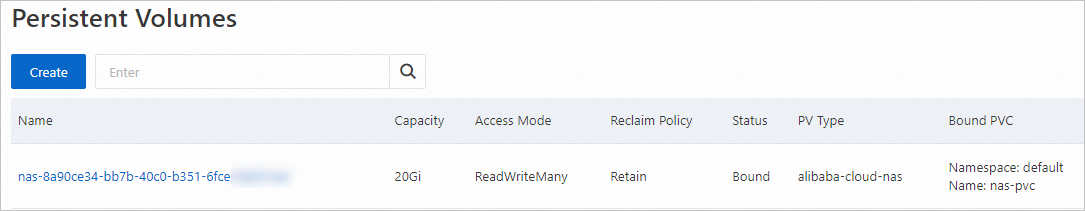

Verify the PV was created:

kubectl get pvExpected output:

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE nas-ea7a0b6a-bec2-4e56-b767-47222d3a**** 20Gi RWX Retain Bound default/nas-pvc alibaba-cloud-nas 1m58s -

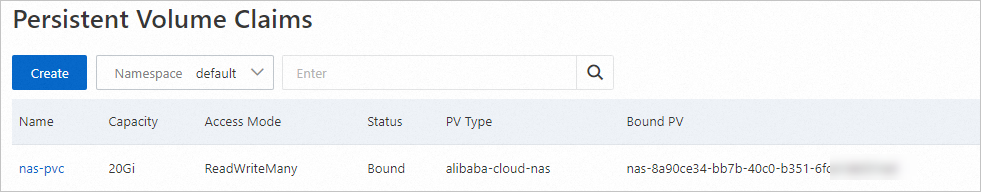

Verify the PVC is bound to the PV:

kubectl get pvcExpected output:

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE nas-pvc Bound nas-ea7a0b6a-bec2-4e56-b767-47222d3a**** 20Gi RWX alibaba-cloud-nas <unset> 2m14s
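In a script, the `Bound` status can be checked without reading the table by eye. The sketch below reads `.status.phase` with kubectl's `-o jsonpath` output and polls until the PVC is bound. The function names, the `default` namespace fallback, and the retry count are illustrative assumptions, not part of the product.

```shell
# pvc_phase NAME [NAMESPACE] (hypothetical helper)
# Prints the PVC's status.phase, for example Pending or Bound.
pvc_phase() {
  kubectl get pvc "$1" -n "${2:-default}" -o jsonpath='{.status.phase}'
}

# wait_pvc_bound NAME [NAMESPACE] [TRIES] (hypothetical helper)
# Polls once per second until the PVC is Bound.
# Returns 1 if it is still not Bound after TRIES attempts (default 30).
wait_pvc_bound() {
  tries="${3:-30}"
  while [ "$tries" -gt 0 ]; do
    [ "$(pvc_phase "$1" "${2:-default}")" = "Bound" ] && return 0
    tries=$((tries - 1))
    sleep 1
  done
  return 1
}
```

For example, `wait_pvc_bound nas-pvc` returns 0 once the PVC from this step reports `Bound`.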

Console

- Log on to the ACS console.

- On the Clusters page, click the cluster name.

- In the left-side navigation pane, choose Volumes > Persistent Volume Claims.

- Click Create.

- Configure the following parameters, then click Create. After the PVC is created, it appears on the Persistent Volume Claims page with a status of Bound, linked to an automatically created PV.

  | Parameter | Description | Example |
  |---|---|---|
  | PVC Type | Select NAS. | NAS |
  | Name | A name for the PVC. | nas-pvc |
  | Allocation Mode | Select Use Mount Target Domain Name. | Create with Mount Target Domain Name |
  | Volume Plug-in | CSI is selected by default. | CSI |
  | Capacity | Storage capacity allocated to the pod. | 20 GiB |
  | Access Mode | `ReadWriteMany` or `ReadWriteOnce`. | ReadWriteMany |
  | Mount Target Domain Name | The NAS directory to mount. Enter a mount target address to mount the root directory (`/`), or append a subdirectory path (e.g., `**-**.region.nas.aliyuncs.com:/dir`) to mount a subdirectory. The subdirectory is created automatically if it does not exist. | `350514*****-mw***.cn-shanghai.nas.aliyuncs.com` |
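The mount target value takes two forms: the bare address for the root directory, or `address:/dir` for a subdirectory. A small sketch of that rule follows. The function name `nas_mountpoint` is hypothetical, and any address passed to it is a placeholder you supply.

```shell
# nas_mountpoint ADDRESS [SUBDIR] (hypothetical helper)
# Prints ADDRESS alone for the root directory,
# or ADDRESS:/SUBDIR when a subdirectory is given.
nas_mountpoint() {
  addr="$1"
  subdir="${2:-/}"
  case "$subdir" in
    /)  printf '%s\n' "$addr" ;;                 # root directory
    /*) printf '%s:%s\n' "$addr" "$subdir" ;;    # already starts with /
    *)  printf '%s:/%s\n' "$addr" "$subdir" ;;   # add the leading /
  esac
}
```

The printed string is the value to use for the `csi.alibabacloud.com/mountpoint` annotation or the Mount Target Domain Name field.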

Step 3: Create a deployment and mount the NAS volume

kubectl

- Save the following content as `nas-test.yaml`. This deployment creates two pods, both mounting the `nas-pvc` PVC to `/data`.

  ```yaml
  apiVersion: apps/v1
  kind: Deployment
  metadata:
    name: nas-test
    labels:
      app: nginx
  spec:
    replicas: 2
    selector:
      matchLabels:
        app: nginx
    template:
      metadata:
        labels:
          app: nginx
      spec:
        containers:
          - name: nginx
            image: registry.cn-hangzhou.aliyuncs.com/acs-sample/nginx:latest
            ports:
              - containerPort: 80
            volumeMounts:
              - name: pvc-nas
                mountPath: /data
        volumes:
          - name: pvc-nas
            persistentVolumeClaim:
              claimName: nas-pvc
  ```

- Create the deployment:

  ```shell
  kubectl create -f nas-test.yaml
  ```

- Verify both pods are running:

  ```shell
  kubectl get pod | grep nas-test
  ```

  Expected output:

  ```
  nas-test-****-***a   1/1     Running   0          40s
  nas-test-****-***b   1/1     Running   0          40s
  ```

- Verify the NAS file system is mounted:

  ```shell
  kubectl exec nas-test-****-***a -- df -h /data
  ```

  Expected output:

  ```
  Filesystem                                         Size  Used Avail Use% Mounted on
  350514*****-mw***.cn-shanghai.nas.aliyuncs.com:/   10P     0   10P   0% /data
  ```
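The mount check above can also be scripted by reading the Filesystem column of the `df` output. In this sketch the function names are hypothetical, and `df -P` is used instead of `df -h` so that each file system stays on one line for parsing. It treats any source containing `.nas.aliyuncs.com:` as a NAS mount, which is an assumption based on the address format shown in this document.

```shell
# mounted_source POD [PATH] (hypothetical helper)
# Prints the Filesystem column that df reports for PATH inside POD.
mounted_source() {
  kubectl exec "$1" -- df -P "${2:-/data}" | awk 'NR == 2 { print $1 }'
}

# is_nas_mount POD [PATH] (hypothetical helper)
# Succeeds when the mount source looks like a NAS mount target address.
is_nas_mount() {
  case "$(mounted_source "$1" "${2:-/data}")" in
    *.nas.aliyuncs.com:*) return 0 ;;
    *) return 1 ;;
  esac
}
```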

Console

- In the left-side navigation pane of the cluster management page, choose Workloads > Deployments.

- Click Create from Image.

- Configure the following key parameters, then click Create. For other parameters, keep the defaults. For more information, see Create a stateless application using a deployment.

  | Configuration page | Parameter | Description | Example |
  |---|---|---|---|
  | Basic Information | Name | A name for the deployment. | nas-test |
  | Basic Information | Replicas | Number of pod replicas. | 2 |
  | Container | Image Name | Container image address. | `registry.cn-hangzhou.aliyuncs.com/acs-sample/nginx:latest` |
  | Container | Required Resources | vCPU and memory resources. | 0.25 vCPU, 0.5 GiB |
  | Volume | - | Click Add PVC. Set Mount Source to the PVC you created and Container Path to the mount path. | Mount Source: nas-pvc; Container Path: /data |

- Verify the deployment:

  - On the Deployments page, click the deployment name.
  - On the Pods tab, confirm that all pods show a status of Running.

Create a new NAS file system as a persistent volume

Use dynamic provisioning when you do not have an existing NAS file system. A StorageClass defines the file system configuration, and the system creates it automatically when a persistent volume claim (PVC) is created.

NAS file system types, storage classes, and protocol support vary by region and zone. Before configuring the StorageClass, check the supported regions and zones for your chosen NAS type:

- Specifications, performance, billing, and supported regions: General-purpose NAS file systems and Extreme NAS file systems

- Mount connectivity limits and protocol types: Limits

Step 1: Create a StorageClass

- Save the following content as `nas-sc.yaml`, replacing the placeholder values with those for your ACS cluster. To get the VPC ID and vSwitch ID, run:

  ```shell
  kubectl get cm -n kube-system acs-profile -o yaml
  ```

  ```yaml
  apiVersion: storage.k8s.io/v1
  kind: StorageClass
  metadata:
    name: alicloud-nas-fs
  mountOptions:
    - nolock,tcp,noresvport
    - vers=3
  parameters:
    volumeAs: filesystem
    fileSystemType: standard
    storageType: Performance
    regionId: cn-shanghai
    zoneId: cn-shanghai-e
    vpcId: "vpc-2ze2fxn6popm8c2mzm****"
    vSwitchId: "vsw-2zwdg25a2b4y5juy****"
    accessGroupName: DEFAULT_VPC_GROUP_NAME
    deleteVolume: "false"
  provisioner: nasplugin.csi.alibabacloud.com
  reclaimPolicy: Retain
  ```

  Key parameters:

  | Parameter | Required | Description |
  |---|---|---|
  | `volumeAs` | Required | Must be `filesystem`. Each NAS volume corresponds to one NAS file system. |
  | `fileSystemType` | Required | `standard` (default) for General-purpose NAS, or `extreme` for Extreme NAS. |
  | `storageType` | Required | Storage class within the file system type. General-purpose NAS: `Performance` (default, compute-optimized) or `Capacity` (storage-optimized). Extreme NAS: `standard` (default, medium) or `advanced`. |
  | `regionId` | Required | Region of the NAS file system. Must match the region of your ACS cluster. |
  | `zoneId` | Required | Zone of the NAS file system. Select the zone based on the vSwitch used by your pods. Cross-zone mounting within the same VPC is supported, but using the same zone gives better performance. |
  | `vpcId`, `vSwitchId` | Required | VPC and vSwitch IDs for the NAS mount target. Must match the VPC and vSwitch used by your pods. |
  | `accessGroupName` | Optional | Permission group for the mount target. Defaults to `DEFAULT_VPC_GROUP_NAME`. |
  | `provisioner` | Required | Must be `nasplugin.csi.alibabacloud.com`. |
  | `reclaimPolicy` | Required | Only `Retain` is supported. When the PV is deleted, the NAS file system and mount target are retained. |

- Create the StorageClass:

  ```shell
  kubectl create -f nas-sc.yaml
  ```

- Verify the StorageClass was created:

  ```shell
  kubectl get sc
  ```

  Expected output:

  ```
  NAME              PROVISIONER                      RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
  alicloud-nas-fs   nasplugin.csi.alibabacloud.com   Retain          Immediate           false                  13m
  ......
  ```
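The valid `storageType` values depend on `fileSystemType`, which is easy to get wrong when editing the StorageClass by hand. The sketch below encodes only the combinations listed in the parameter table of this step. The function name `valid_nas_storage_type` is hypothetical.

```shell
# valid_nas_storage_type FILE_SYSTEM_TYPE STORAGE_TYPE (hypothetical helper)
# Succeeds only for the combinations listed in the parameter table:
#   standard (General-purpose NAS): Performance, Capacity
#   extreme  (Extreme NAS):         standard, advanced
valid_nas_storage_type() {
  case "$1:$2" in
    standard:Performance|standard:Capacity) return 0 ;;
    extreme:standard|extreme:advanced) return 0 ;;
    *) return 1 ;;
  esac
}
```

For example, `valid_nas_storage_type extreme Performance` fails, because `Performance` applies only to General-purpose NAS.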

Step 2: Create a PVC

- Save the following content as `nas-pvc-fs.yaml`.

  ```yaml
  kind: PersistentVolumeClaim
  apiVersion: v1
  metadata:
    name: nas-pvc-fs
  spec:
    accessModes:
      - ReadWriteMany
    storageClassName: alicloud-nas-fs
    resources:
      requests:
        storage: 20Gi
  ```

  Key fields:

  | Parameter | Description |
  |---|---|
  | `accessModes` | Access mode. |
  | `storageClassName` | Must match the name of the StorageClass you created. |
  | `storage` | Storage capacity for the NAS volume. For Extreme NAS file systems, the minimum value is 100 GiB. Setting a smaller value prevents the PV from being created. |

- Create the PVC:

  ```shell
  kubectl create -f nas-pvc-fs.yaml
  ```

- Verify the PVC is bound:

  ```shell
  kubectl get pvc
  ```

  Expected output:

  ```
  NAME         STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS      VOLUMEATTRIBUTESCLASS   AGE
  nas-pvc-fs   Bound    nas-04a730ba-010d-4fb1-9043-476d8c38****   20Gi       RWX            alicloud-nas-fs   <unset>                 14s
  ```

  The PVC is bound to an automatically provisioned PV. The corresponding NAS file system is visible in the NAS console.
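Because a request below 100 GiB prevents the PV from being created for Extreme NAS, the requested size can be checked before the PVC is applied. This is a minimal sketch: the function name `storage_request_ok` is hypothetical, and it only understands whole-number sizes written with the `Gi` suffix, as in the example manifest.

```shell
# storage_request_ok FILE_SYSTEM_TYPE SIZE (hypothetical helper)
# SIZE must be a whole number of GiB written like 20Gi.
# Returns 0 if the request is acceptable, 1 if it is below the
# 100 GiB minimum for Extreme NAS, 2 if SIZE cannot be parsed.
storage_request_ok() {
  case "$2" in
    *Gi) gib="${2%Gi}" ;;
    *) echo "unsupported size format: $2" >&2; return 2 ;;
  esac
  case "$gib" in
    ''|*[!0-9]*) echo "unsupported size format: $2" >&2; return 2 ;;
  esac
  if [ "$1" = "extreme" ] && [ "$gib" -lt 100 ]; then
    return 1
  fi
  return 0
}
```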

Step 3: Create a deployment and mount the NAS volume

- Save the following content as `nas-test-fs.yaml`. This deployment creates two pods, both mounting the `nas-pvc-fs` PVC to `/data`.

  ```yaml
  apiVersion: apps/v1
  kind: Deployment
  metadata:
    name: nas-test
    labels:
      app: nginx
  spec:
    replicas: 2
    selector:
      matchLabels:
        app: nginx
    template:
      metadata:
        labels:
          app: nginx
      spec:
        containers:
          - name: nginx
            image: registry.cn-hangzhou.aliyuncs.com/acs-sample/nginx:latest
            ports:
              - containerPort: 80
            volumeMounts:
              - name: pvc-nas
                mountPath: /data
        volumes:
          - name: pvc-nas
            persistentVolumeClaim:
              claimName: nas-pvc-fs
  ```

- Create the deployment:

  ```shell
  kubectl create -f nas-test-fs.yaml
  ```

- Verify both pods are running:

  ```shell
  kubectl get pod | grep nas-test
  ```

  Expected output:

  ```
  nas-test-****-***a   1/1     Running   0          40s
  nas-test-****-***b   1/1     Running   0          40s
  ```

- Verify the NAS file system is mounted:

  ```shell
  kubectl exec nas-test-****-***a -- df -h /data
  ```

  Expected output:

  ```
  Filesystem                                         Size  Used Avail Use% Mounted on
  350514*****-mw***.cn-shanghai.nas.aliyuncs.com:/   10P     0   10P   0% /data
  ```

Mount an existing NAS file system using an NFS volume

Use an inline NFS volume when you want to mount a NAS file system directly in the pod spec, without creating a PVC or PV. This approach involves fewer Kubernetes objects to create and manage.

Step 1: Get the NAS mount target address

Follow the steps in Step 1: Get NAS file system information to get the mount target address.

Step 2: Create a deployment

- Save the following content as `nas-test-nfs.yaml`, replacing the `server` value with your actual mount target address. This deployment creates two pods, both mounting the NAS file system to `/data` using an inline NFS volume.

  ```yaml
  apiVersion: apps/v1
  kind: Deployment
  metadata:
    name: nas-test
    labels:
      app: nginx
  spec:
    replicas: 2
    selector:
      matchLabels:
        app: nginx
    template:
      metadata:
        labels:
          app: nginx
      spec:
        containers:
          - name: nginx
            image: registry.cn-hangzhou.aliyuncs.com/acs-sample/nginx:latest
            ports:
              - containerPort: 80
            volumeMounts:
              - name: nfs-nas
                mountPath: /data
        volumes:
          - name: nfs-nas
            nfs:
              # Mount target address. Example: 7bexxxxxx-xxxx.ap-southeast-1.nas.aliyuncs.com
              server: file-system-id.region.nas.aliyuncs.com
              # The path of the directory in the NAS file system. The directory must be
              # an existing directory or the root directory. The root directory for a
              # General-purpose NAS file system is "/". The root directory for an
              # Extreme NAS file system is "/share".
              path: /
  ```

- Create the deployment:

  ```shell
  kubectl create -f nas-test-nfs.yaml
  ```

- Verify both pods are running:

  ```shell
  kubectl get pod | grep nas-test
  ```

  Expected output:

  ```
  nas-test-****-***a   1/1     Running   0          40s
  nas-test-****-***b   1/1     Running   0          40s
  ```

- Verify the NAS file system is mounted:

  ```shell
  kubectl exec nas-test-****-***a -- df -h /data
  ```

  Expected output:

  ```
  Filesystem                                         Size  Used Avail Use% Mounted on
  350514*****-mw***.cn-shanghai.nas.aliyuncs.com:/   10P     0   10P   0% /data
  ```
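The `path` value for the inline NFS volume depends on the NAS type: `/` for General-purpose NAS and `/share` for Extreme NAS. A small sketch of that lookup follows. The function name `nas_root_path` is hypothetical, and the `standard` and `extreme` labels are borrowed from the `fileSystemType` values used for dynamic provisioning.

```shell
# nas_root_path NAS_TYPE (hypothetical helper)
# Prints the root directory to use as nfs.path:
#   standard (General-purpose NAS) -> /
#   extreme  (Extreme NAS)         -> /share
nas_root_path() {
  case "$1" in
    standard) printf '/\n' ;;
    extreme)  printf '/share\n' ;;
    *) echo "unknown NAS type: $1" >&2; return 1 ;;
  esac
}
```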

Verify shared storage and data persistence

The deployments in the examples above create two pods that share the same NAS file system. Use these steps to confirm that shared storage and data persistence both work correctly.

Verify shared storage

- Get the pod names:

  ```shell
  kubectl get pod | grep nas-test
  ```

  Sample output:

  ```
  nas-test-****-***a   1/1     Running   0          40s
  nas-test-****-***b   1/1     Running   0          40s
  ```

- Create a file from one pod:

  ```shell
  kubectl exec nas-test-****-***a -- touch /data/test.txt
  ```

- Read the file from the other pod:

  ```shell
  kubectl exec nas-test-****-***b -- ls /data
  ```

  Expected output:

  ```
  test.txt
  ```

  `test.txt` is visible from both pods, confirming that shared storage works.
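The write-then-read check can be wrapped in one function so that pod names do not need to be copied by hand. This is a sketch under stated assumptions: the function name `check_shared_write` is hypothetical, pods are selected with the `app=nginx` label from the example deployments, and the marker file it creates is left on the NAS file system afterwards.

```shell
# check_shared_write [LABEL] [PATH] (hypothetical helper)
# Creates a marker file from the first matching pod and checks that
# the second matching pod can see it. Returns 2 if fewer than two
# pods match, 1 if the write or the read check fails.
check_shared_write() {
  label="${1:-app=nginx}"
  path="${2:-/data}"
  marker="shared-check-$$.txt"
  pods=$(kubectl get pod -l "$label" -o name)
  writer=$(printf '%s\n' "$pods" | sed -n '1p')
  reader=$(printf '%s\n' "$pods" | sed -n '2p')
  if [ -z "$writer" ] || [ -z "$reader" ]; then
    echo "need at least two pods matching $label" >&2
    return 2
  fi
  kubectl exec "$writer" -- touch "$path/$marker" || return 1
  # -x matches the whole line, -F treats the marker as a fixed string.
  kubectl exec "$reader" -- ls "$path" | grep -qxF "$marker"
}
```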

Verify data persistence

- Restart the deployment to create new pods:

  ```shell
  kubectl rollout restart deploy nas-test
  ```

- Wait for the new pods to be ready:

  ```shell
  kubectl get pod | grep nas-test
  ```

  Sample output:

  ```
  nas-test-****-***c   1/1     Running   0          67s
  nas-test-****-***d   1/1     Running   0          49s
  ```

- Read the file from a new pod:

  ```shell
  kubectl exec nas-test-****-***c -- ls /data
  ```

  Expected output:

  ```
  test.txt
  ```

  `test.txt` is still present after the pods were replaced, confirming that the NAS file system persists data across pod restarts.
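The restart and the follow-up check can be chained, using `kubectl rollout status` to wait for the rollout instead of polling the pod list by hand. The sketch below makes several assumptions: the function name `restart_and_check` is hypothetical, the 120-second timeout is arbitrary, and pods are selected with the `app=nginx` label from the example deployments. The first pod returned may in rare cases be an old pod that is still terminating.

```shell
# restart_and_check DEPLOYMENT FILE [LABEL] (hypothetical helper)
# Restarts the deployment, waits for the rollout to finish, then
# lists FILE from the first pod that matches LABEL.
# Fails if the rollout fails or FILE is not present in that pod.
restart_and_check() {
  deploy="$1"
  file="$2"
  label="${3:-app=nginx}"
  kubectl rollout restart deploy "$deploy" || return 1
  kubectl rollout status deploy "$deploy" --timeout=120s || return 1
  pod=$(kubectl get pod -l "$label" -o name | sed -n '1p')
  kubectl exec "$pod" -- ls "$file"
}
```

For example, `restart_and_check nas-test /data/test.txt` prints the file path when the file created earlier is still present.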