Migrate Hive Metastore metadata from an E-MapReduce (EMR) cluster to Data Lake Formation (DLF), then reconfigure your compute engines to use DLF as the unified metadata store. Once migrated, you can write data from multiple sources to a data lake and manage all metadata from a single location.

DLF metadata integration requires EMR V3.33 or later (V3.x), EMR V4.6 or later (V4.x), or EMR V5.1 or later (V5.x). For earlier EMR versions, join the DingTalk group 33719678.
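The version requirement above can be expressed as a small check. This is a minimal sketch, not an official tool: the function name `supports_dlf` and the accepted version string formats (`V3.33.0`, `EMR-4.6.0`, `5.1`) are assumptions made for illustration, and major versions other than 3, 4, and 5 are treated as unsupported only because this document does not list them.

```python
# Minimum minor version for each EMR major line, as stated in the requirement above.
MIN_MINOR_BY_MAJOR = {3: 33, 4: 6, 5: 1}


def supports_dlf(emr_version: str) -> bool:
    """Return True if the EMR version meets the documented DLF minimum.

    Accepts strings such as 'V3.33.0', 'EMR-4.6.0', or '5.1' (assumed formats).
    """
    cleaned = emr_version.strip().upper()
    # Strip the optional 'EMR-' and 'V' prefixes, in that order.
    for prefix in ("EMR-", "V"):
        if cleaned.startswith(prefix):
            cleaned = cleaned[len(prefix):]
    parts = cleaned.split(".")
    major, minor = int(parts[0]), int(parts[1])
    minimum = MIN_MINOR_BY_MAJOR.get(major)
    if minimum is None:
        # Major lines not listed in the requirement are treated as unsupported.
        return False
    return minor >= minimum
```

For example, `supports_dlf("V3.32.1")` is false, so a cluster on that version falls into the "earlier EMR versions" case.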

Choose your migration path

Use this table to find the right procedure for your situation.

| Starting point | Goal | Procedure |
|---|---|---|
| Big data cluster (non-EMR) | Migrate metadata to an EMR cluster that already uses DLF | Migrate metadata |
| EMR cluster using built-in MySQL or self-managed ApsaraDB RDS | Move metadata to a different EMR cluster that stores metadata in DLF | Migrate metadata |
| EMR cluster using built-in MySQL or self-managed ApsaraDB RDS | Switch the existing cluster's metadata storage to DLF | Use DLF for unified metadata storage |

Migrate metadata

DLF lets you migrate metadata from a Hive Metastore to a data lake through a visual interface, without manually scripting data transfers.

Prerequisites

Before you begin, ensure that you have:

An EMR cluster (V3.33+, V4.6+, or V5.1+) with metadata stored in a self-managed ApsaraDB RDS database or a built-in MySQL database

A Hive database in the EMR cluster. For instructions, see Use Hive to perform basic operations. This example uses a database named testdb2.

Remote access permissions configured on the source database (see Configure remote access permissions below)

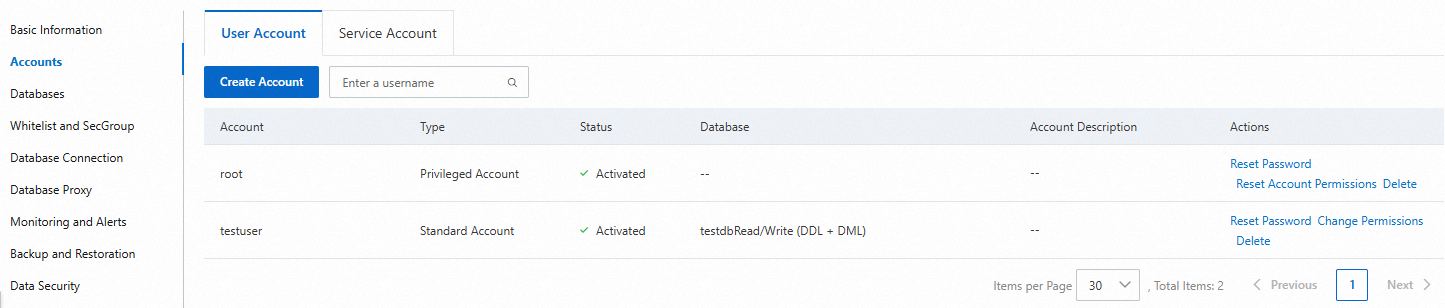

Configure remote access permissions

Log on to the ApsaraDB RDS or MySQL database and run the following statements. This example grants permissions to the root user on the testdb database. Replace xxxx with the actual password.

CREATE USER 'root'@'%' IDENTIFIED BY 'xxxx';

GRANT ALL PRIVILEGES ON testdb.* TO 'root'@'%' WITH GRANT OPTION;

FLUSH PRIVILEGES;

For ApsaraDB RDS, you can also view and update access permissions directly in the console. For instructions, see Modify account permissions.
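If you prepare the permission statements for several accounts or databases, a small template can reduce quoting mistakes. The following is a minimal sketch under stated assumptions: the helper names `quote_mysql_string` and `remote_access_statements` are hypothetical, the escaping assumes MySQL's default SQL mode, and the code only builds the statement text; it does not connect to any database.

```python
from typing import List


def quote_mysql_string(value: str) -> str:
    """Quote a value as a MySQL string literal, escaping backslashes and single quotes."""
    escaped = value.replace("\\", "\\\\").replace("'", "''")
    return f"'{escaped}'"


def remote_access_statements(user: str, database: str, password: str,
                             host: str = "%") -> List[str]:
    """Build the CREATE USER / GRANT / FLUSH statements used in this example."""
    account = f"{quote_mysql_string(user)}@{quote_mysql_string(host)}"
    # Backticks quote the database identifier; an embedded backtick is doubled.
    safe_db = database.replace("`", "``")
    return [
        f"CREATE USER {account} IDENTIFIED BY {quote_mysql_string(password)};",
        f"GRANT ALL PRIVILEGES ON `{safe_db}`.* TO {account} WITH GRANT OPTION;",
        "FLUSH PRIVILEGES;",
    ]


# Same values as the example above; replace xxxx with the actual password.
for statement in remote_access_statements("root", "testdb", "xxxx"):
    print(statement)
```

Read the password from a secure source at run time rather than storing it in the script.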

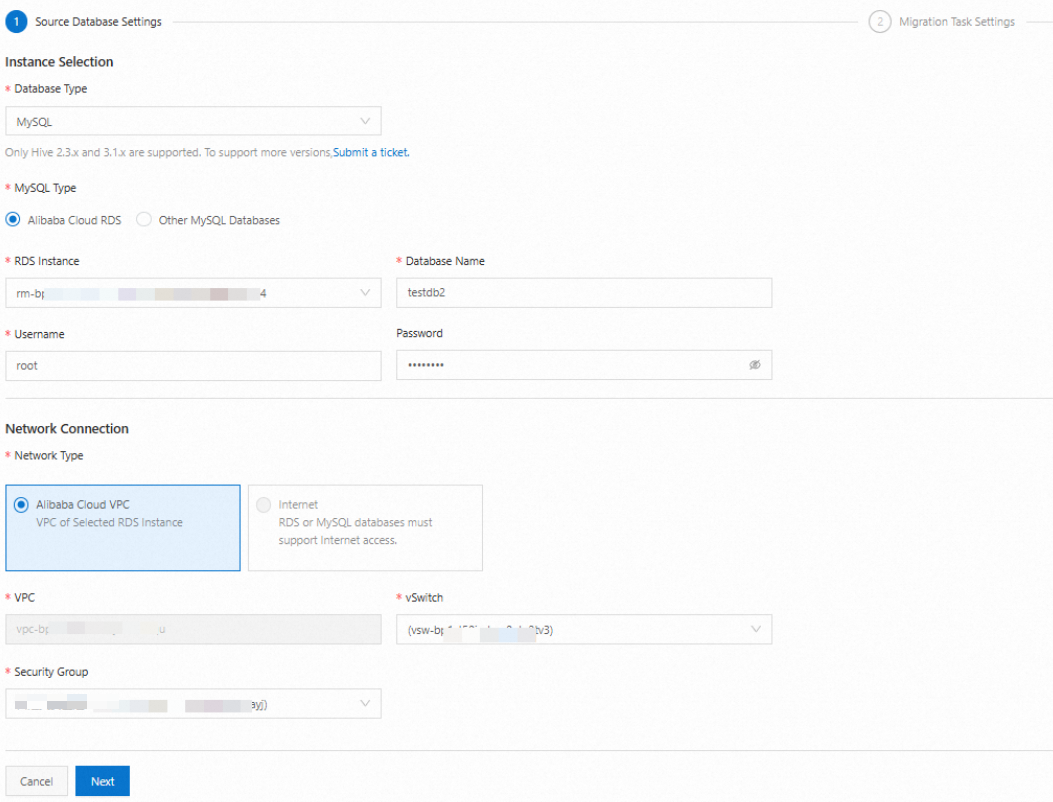

Create a migration task

Log on to the DLF console.

Select the region where your EMR cluster resides.

In the left-side navigation pane, choose Metadata > Migrate Metadata.

On the Migration Task tab, click Create Cloud Migration Task.

Configure the source connection parameters and click Next. For parameter details, see Create a metadata migration task.

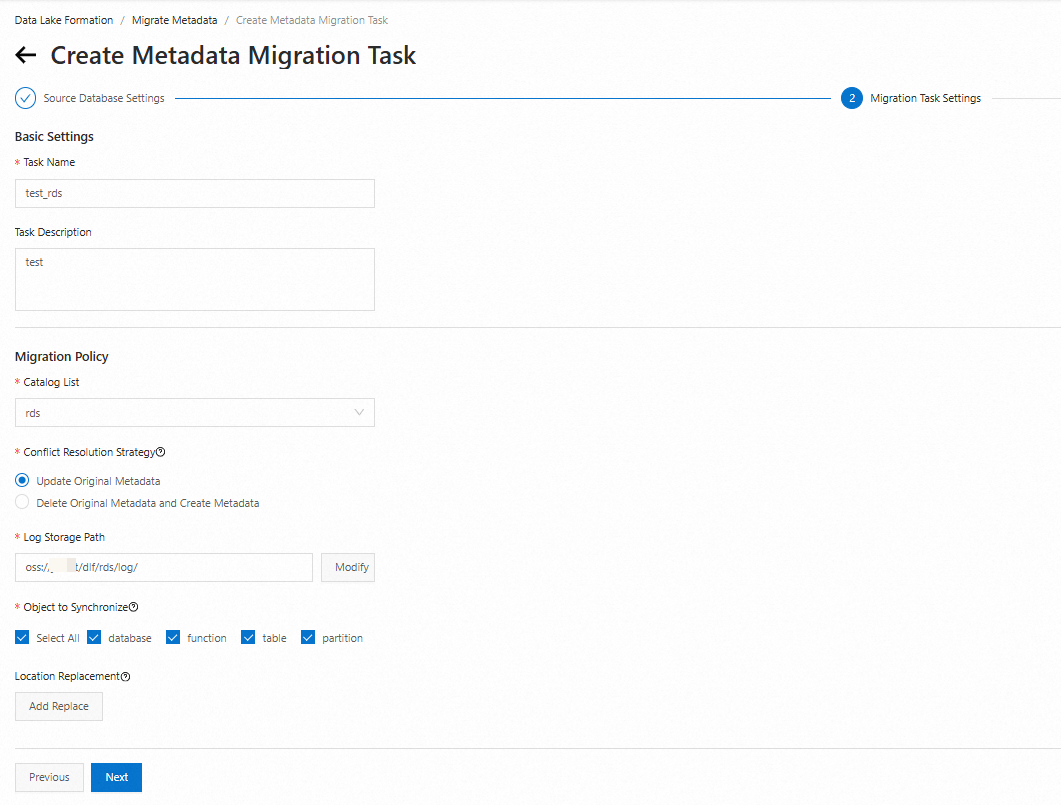

Configure the migration task details and click Next. This example names the task test_rds.

Review the task configuration and click OK.

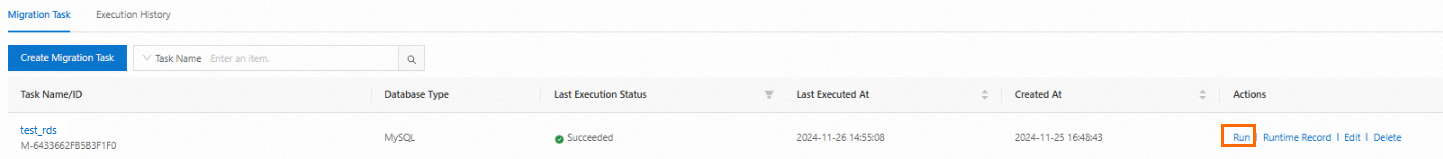

Run the migration task

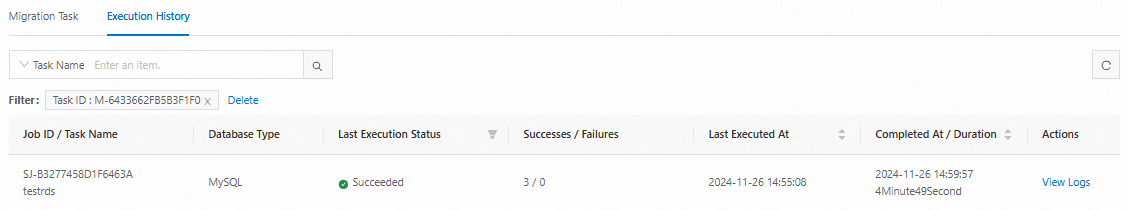

On the Migration Task tab, find the task named test_rds and click Run in the Actions column. When the task completes successfully, its status changes to Succeeded.

View the running record and logs

On the Migration Task tab, click Running Record in the Actions column to view the execution history.

On the Execution History tab, click View Logs in the Actions column to view the log details.

Verify metadata migration

In the left-side navigation pane, choose Metadata > Metadata.

Click the Database tab, select your catalog from the Catalog List drop-down list, enter your database name in the Database Name field, and press Enter. If the database appears in the results, the metadata was migrated successfully.

Use DLF for unified metadata storage

To switch an EMR cluster from MySQL-based metadata storage to DLF, update the configuration for each compute engine to point to the DLF metadata service.
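The Hive and Presto configurations later in this section share three settings: the DLF endpoint, dlf.catalog.akMode, and dlf.catalog.proxyMode. The sketch below builds those shared values for a region and flags stray whitespace, which is easy to introduce when pasting. The function names are hypothetical and chosen for illustration; the keys and values come from the configuration items listed in this section.

```python
from typing import Dict, List


def dlf_endpoint(region_id: str) -> str:
    """Build the DLF VPC endpoint for a region ID such as 'cn-hangzhou'."""
    return f"dlf-vpc.{region_id}.aliyuncs.com"


def common_dlf_settings(region_id: str) -> Dict[str, str]:
    """Return the settings shared by the Hive and Presto configurations."""
    return {
        "dlf.catalog.endpoint": dlf_endpoint(region_id),
        "dlf.catalog.akMode": "EMR_AUTO",
        "dlf.catalog.proxyMode": "DLF_ONLY",
    }


def find_whitespace_problems(settings: Dict[str, str]) -> List[str]:
    """Return the keys whose name or value contains any whitespace character."""
    return [
        key for key, value in settings.items()
        if any(ch.isspace() for ch in key + value)
    ]


settings = common_dlf_settings("cn-hangzhou")
for key, value in settings.items():
    print(f"{key}={value}")
```

Running `find_whitespace_problems` on values copied from another document before you save them in the console is one way to catch a trailing space in the endpoint or factory class name.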

To store metadata in a specific catalog rather than the default catalog, set the dlf.catalog.id configuration item to your catalog ID.

Hive

In hive-site.xml, add or update the following configuration items, then save and apply the changes. For instructions on modifying configuration items, see Modify configuration items.

<!-- DLF metadata service endpoint. Replace {regionId} with your cluster's region ID, such as cn-hangzhou. -->

dlf.catalog.endpoint=dlf-vpc.{regionId}.aliyuncs.com

<!-- After pasting, verify that no extra spaces were introduced. -->

hive.imetastoreclient.factory.class=com.aliyun.datalake.metastore.hive2.DlfMetaStoreClientFactory

dlf.catalog.akMode=EMR_AUTO

dlf.catalog.proxyMode=DLF_ONLY

<!-- Required for Hive 3 -->

hive.notification.event.poll.interval=0s

<!-- Required for EMR versions earlier than V3.33 or V4.6.0 -->

dlf.catalog.sts.isNewMode=false

Presto

In hive.properties, add the following configuration items, then save and apply the changes. For instructions, see Add configuration items.

hive.metastore=dlf

<!-- DLF metadata service endpoint. Replace {regionId} with your cluster's region ID, such as cn-hangzhou. -->

dlf.catalog.endpoint=dlf-vpc.{regionId}.aliyuncs.com

dlf.catalog.akMode=EMR_AUTO

dlf.catalog.proxyMode=DLF_ONLY

<!-- Set this to the value of hive.metastore.warehouse.dir in hive-site.xml. -->

dlf.catalog.default-warehouse-dir=

<!-- Required for EMR versions earlier than V3.33 or V4.6.0 -->

dlf.catalog.sts.isNewMode=false

Spark

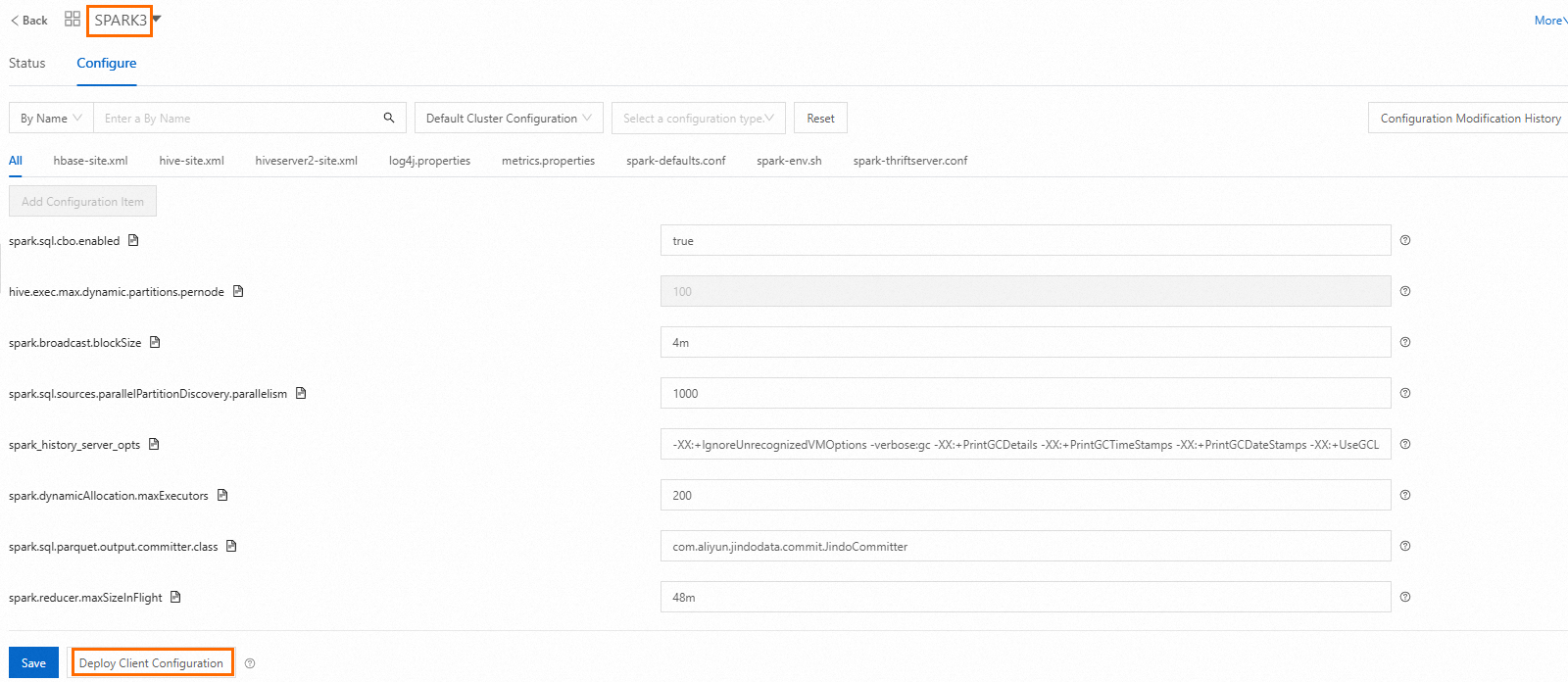

On the Configure tab of the Spark service page, click Deploy Client Configuration and follow the prompts. Then restart Spark.

Impala

On the Configure tab of the Impala service page, click Deploy Client Configuration and follow the prompts. Then restart Impala.

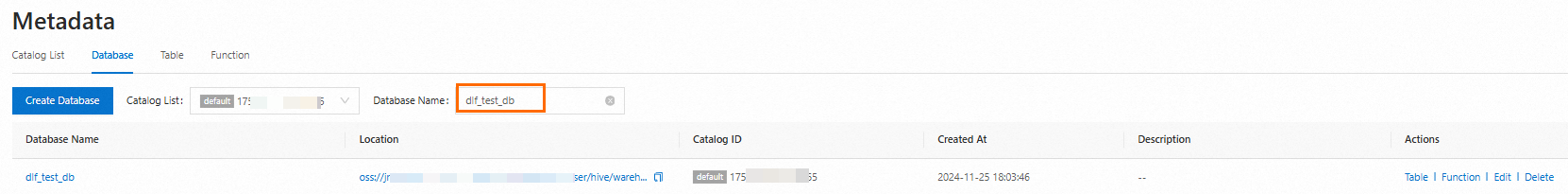

Verify the metadata storage change

The following steps use Hive. The same verification applies to other engines.

Log on to the cluster over SSH. For instructions, see Log on to a cluster.

Open the Hive CLI:

hive

Create a test database:

CREATE DATABASE IF NOT EXISTS dlf_test_db;

If the output contains OK, the database was created successfully.

Log on to the DLF console.

In the left-side navigation pane, choose Metadata > Metadata.

Click the Database tab, select your catalog from the Catalog List drop-down list, enter dlf_test_db in the Database Name field, and press Enter. If dlf_test_db appears in the results, Hive is now storing its metadata in DLF. If it does not appear, the change did not take effect.
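As a complementary check from the cluster side, you can list databases from the Hive CLI and look for the expected name, for example to confirm that a database migrated into DLF is visible to Hive after the switch. This is a rough sketch, assuming the `hive` command is on the PATH of the node you are logged on to; the helper names are hypothetical, and the parsing assumes that the CLI prints each database name on its own line.

```python
from typing import List


def hive_show_databases_command(pattern: str) -> List[str]:
    """Build a Hive CLI invocation that lists databases matching a pattern."""
    return ["hive", "-e", f"SHOW DATABASES LIKE '{pattern}';"]


def database_listed(cli_output: str, database: str) -> bool:
    """Return True if any output line is exactly the database name (case-insensitive)."""
    names = {line.strip().lower() for line in cli_output.splitlines()}
    return database.lower() in names


# Usage on a cluster node (not executed here):
#   import subprocess
#   cmd = hive_show_databases_command("dlf_test_db")
#   result = subprocess.run(cmd, capture_output=True, text=True, check=True)
#   print(database_listed(result.stdout, "dlf_test_db"))
```

This does not replace the console check above; it only confirms what the Hive client itself reports.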

FAQ

What happens if I run a migration task more than once?

Running the same migration task multiple times produces the same result. Each run is based on the current metadata in the source ApsaraDB RDS or MySQL database and brings the metadata in DLF into line with it, so the two are eventually consistent.

To handle conflicts between source and target catalog metadata, configure the Conflict Resolution Strategy parameter when creating the task. For details, see Create a metadata migration task.