E-MapReduce (EMR) lets you view, modify, and add configuration items for services such as Hadoop Distributed File System (HDFS), YARN, and Spark directly in the EMR console.

Prerequisites

Before you begin, ensure that you have:

An EMR cluster. For more information, see Create a cluster.

How it works

EMR manages configuration items at three levels: cluster, node group, and node. When the same configuration item is set at multiple levels, the value at the highest-priority level takes effect. Priority order: node > node group > cluster.

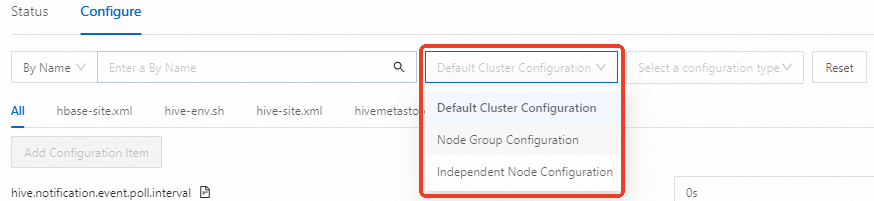
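The priority rule above can be pictured as a lookup that checks the most specific scope first. The following is a minimal sketch only: the function name, the dictionary layout, and the sample values are hypothetical and are not part of any EMR API.

```python
from typing import Mapping, Optional, Tuple

def effective_value(
    key: str,
    cluster: Mapping[str, str],
    node_group: Optional[Mapping[str, str]] = None,
    node: Optional[Mapping[str, str]] = None,
) -> Optional[Tuple[str, str]]:
    """Return (scope, value) for the highest-priority scope that sets `key`.

    Priority order follows the document: node > node group > cluster.
    Returns None if no scope sets the key.
    """
    scopes = (("node", node), ("node group", node_group), ("cluster", cluster))
    for scope_name, settings in scopes:
        if settings is not None and key in settings:
            return scope_name, settings[key]
    return None

# Hypothetical values for illustration only.
cluster_conf = {"yarn.nodemanager.resource.memory-mb": "4096"}
group_conf = {"yarn.nodemanager.resource.memory-mb": "8192"}
node_conf = {}  # nothing overridden on this node

result = effective_value(
    "yarn.nodemanager.resource.memory-mb", cluster_conf, group_conf, node_conf
)
print(result)
```

In this sketch the node sets nothing, so the node group value wins over the cluster default.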

By default, the Configure tab shows cluster-level configuration items. To view or modify node group or node level items, switch the scope using the Default Cluster Configuration drop-down list:

Node Group Configuration — applies the setting to all nodes in a node group

Independent Node Configuration — applies the setting to a specific node

Node group and node level items can only be viewed when Default Cluster Configuration is selected. To modify them, switch to the appropriate scope first.

View configuration items

Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.

In the top navigation bar, select a region and a resource group.

Find the cluster and click Services in the Actions column.

On the Services tab, find the service and click Configure.

In the search box, enter the name of the configuration item.

If a configuration item has been modified at the node group or node level, or its value differs from the cluster-level default, the Configure tab also displays the node group or node level settings when Default Cluster Configuration is selected.

To view a configuration item at the node group or node level, select Node Group Configuration or Independent Node Configuration from the Default Cluster Configuration drop-down list, then select the specific node group or node.

Modify configuration items

Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.

In the top navigation bar, select a region and a resource group.

Find the cluster and click Services in the Actions column.

On the Services tab, find the service and click Configure.

(Optional) To modify a node group or node level item, select Node Group Configuration or Independent Node Configuration from the Default Cluster Configuration drop-down list.

In the search box, enter the name of the configuration item and click the search icon.

Change the value of the configuration item.

Tip: For example, to increase NodeManager memory, set yarn.nodemanager.resource.memory-mb to a higher value such as 8192.

Click Save. In the Save dialog box, set Execution Reason and click Save.

The Save and Deliver Configuration switch is turned on by default. When enabled, configurations are sent to the client immediately after saving, and you can activate them using prompt mode. Turn off this switch to activate configurations manually using manual mode instead.

Activate the configurations. See Activate configurations.

Add configuration items

Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.

In the top navigation bar, select a region and a resource group.

Find the cluster and click Services in the Actions column.

On the Services tab, find the service and click Configure.

Click the tab where you want to add configuration items, then click Add Configuration Item.

Fill in the configuration details. You can add multiple items at the same time.

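<table> <thead> <tr> <td><p><b>Field</b></p></td> <td><p><b>Description</b></p></td> </tr> </thead> <tbody> <tr> <td><p>Key</p></td> <td><p>The name of the configuration item</p></td> </tr> <tr> <td><p>Value</p></td> <td><p>The value of the configuration item</p></td> </tr> <tr> <td><p>Description</p></td> <td><p>A description of the configuration item</p></td> </tr> <tr> <td><p>Actions</p></td> <td><p>Remove the configuration item</p></td> </tr> </tbody> </table>

Click OK. In the dialog box, set Execution Reason and click Save.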

Activate the configurations. See Activate configurations.

Activate configurations

After saving, activate the configurations based on their type and your preferred mode.

Prompt mode

Prompt mode is available only for EMR V5.12.1, EMR V3.46.1, and later minor versions.
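As a rough illustration of the version requirement above, the check can be written as a comparison of version tuples. This sketch assumes that "later minor versions" means any later release within the same major line (3.x or 5.x) and that other major lines do not support prompt mode. The function names and accepted version string formats are hypothetical.

```python
import re
from typing import Tuple

# Minimum versions per major line, taken from the note above.
MIN_PROMPT_MODE = {5: (5, 12, 1), 3: (3, 46, 1)}

def parse_emr_version(text: str) -> Tuple[int, ...]:
    """Extract (major, minor, patch) from strings such as 'EMR V5.17.1'."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())

def supports_prompt_mode(version: str) -> bool:
    """Return True if the version meets the minimum for its major line."""
    parsed = parse_emr_version(version)
    minimum = MIN_PROMPT_MODE.get(parsed[0])
    # Assumption: major lines other than 3 and 5 are treated as unsupported.
    return minimum is not None and parsed >= minimum

print(supports_prompt_mode("EMR V5.17.1"))
print(supports_prompt_mode("EMR V3.45.0"))
```

Comparing tuples rather than strings avoids ordering mistakes such as treating 5.9.0 as later than 5.12.1.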

Client-side configurations

After saving, click the (To Be Delivered) prompt.

In the Configurations to Be Delivered message, click Deliver.

For the YARN service, if the modified items include queue-related configuration items, click the (Not Effective Yet) prompt or click Deploy on the Edit Resource Queue tab of the YARN service page to activate the changes.

Server-side configurations

After saving, click the (Not Effective Yet) prompt.

In the Configurations to Take Effect dialog box, activate each component based on its type:

Configurations That Require Custom Operations: Click the corresponding action in the Actions column for each component.

Configurations That Require Restart: Click Restart in the Actions column for a component, or select multiple components and click Batch Restart. In the dialog box, set Execution Reason and click OK.

Manual mode

Client-side configurations

Click Deploy Client Configuration next to Save at the bottom of the page.

In the dialog box, set Execution Reason and click OK.

In the Confirm message, click OK.

Server-side configurations

Choose More > Restart in the upper-right corner of the Configure tab.

In the dialog box, set Execution Reason and click OK.

In the Confirm message, click OK.
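The four activation paths above (prompt or manual mode, client-side or server-side configurations) can be summarized as a small lookup table. This is only a sketch for orientation: the step text is condensed from this section, while the dictionary layout and function names are hypothetical and do not correspond to an EMR API.

```python
from typing import Dict, List, Tuple

# Steps condensed from the "Activate configurations" section.
ACTIVATION_STEPS: Dict[Tuple[str, str], List[str]] = {
    ("prompt", "client"): [
        "Click the (To Be Delivered) prompt.",
        "In the Configurations to Be Delivered message, click Deliver.",
    ],
    ("prompt", "server"): [
        "Click the (Not Effective Yet) prompt.",
        "In the Configurations to Take Effect dialog box, run the custom "
        "operation or restart for each component.",
    ],
    ("manual", "client"): [
        "Click Deploy Client Configuration next to Save.",
        "Set Execution Reason and click OK.",
        "In the Confirm message, click OK.",
    ],
    ("manual", "server"): [
        "Choose More > Restart on the Configure tab.",
        "Set Execution Reason and click OK.",
        "In the Confirm message, click OK.",
    ],
}

def mode_for_switch(save_and_deliver_on: bool) -> str:
    """Map the Save and Deliver Configuration switch to an activation mode."""
    return "prompt" if save_and_deliver_on else "manual"

def activation_steps(mode: str, config_type: str) -> List[str]:
    """Return a copy of the steps for the given mode and configuration type."""
    try:
        return list(ACTIVATION_STEPS[(mode, config_type)])
    except KeyError:
        raise ValueError(f"unknown combination: {mode!r}, {config_type!r}") from None

# Example: the switch was turned off before saving a server-side change.
for step in activation_steps(mode_for_switch(False), "server"):
    print(step)
```

The sketch leaves out the YARN queue-related case, which has its own Deploy action on the Edit Resource Queue tab.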

Modifiable configuration items

The following table lists the configuration items that can be modified at the node and node group levels in EMR V5.17.1 clusters.

Kerberos-related configuration items are available only if Kerberos authentication is enabled.

<table> <thead> <tr> <td><p><b>Service name</b></p></td> <td><p><b>File</b></p></td> <td><p><b>Configuration item</b></p></td> </tr> </thead> <colgroup></colgroup> <colgroup></colgroup> <colgroup></colgroup> <tbody> <tr> <td><p>Hadoop-Common</p></td> <td><p>core-site.xml</p></td> <td><p>fs.oss.tmp.data.dirs</p><p>hadoop.tmp.dir</p></td> </tr> <tr> <td><p>HDFS</p></td> <td><p>hdfs-env.sh</p></td> <td><p>hadoop_datanode_heapsize</p><p>hadoop_secondarynamenode_opts</p><p>hadoop_namenode_heapsize</p></td> </tr> <tr> <td><p>hdfs-site.xml</p></td> <td><p>dfs.datanode.data.dir</p><p>dfs.datanode.failed.volumes.tolerated</p><p>dfs.datanode.du.reserved</p><p>dfs.datanode.balance.max.concurrent.moves</p></td> </tr> <tr> <td><p>OSS-HDFS</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Hive</p></td> <td><p>hive-env.sh</p></td> <td><p>hive_metastore_heapsize</p><p>hive_server2_heapsize</p></td> </tr> <tr> <td><p>Spark2</p></td> <td><p>hiveserver2-site.xml</p></td> <td><p>hive.server2.authentication.kerberos.principal</p></td> </tr> <tr> <td><p>spark-env.sh</p></td> <td><p>spark_history_daemon_memory</p><p>spark_thrift_daemon_memory</p></td> </tr> <tr> <td><p>spark-thriftserver.conf</p></td> <td><p>spark.yarn.historyServer.address</p><p>spark.hadoop.hive.server2.thrift.bind.host</p><p>spark.yarn.principal</p></td> </tr> <tr> <td><p>spark-defaults.conf</p></td> <td><p>spark.yarn.historyServer.address</p><p>spark.history.kerberos.principal</p></td> </tr> <tr> <td><p>Spark3</p></td> <td><p>hiveserver2-site.xml</p></td> <td><p>hive.server2.authentication.kerberos.principal</p></td> </tr> <tr> <td><p>spark-env.sh</p></td> <td><p>spark_history_daemon_memory</p><p>spark_thrift_daemon_memory</p></td> </tr> <tr> <td><p>spark-thriftserver.conf</p></td> <td><p>spark.yarn.historyServer.address</p><p>spark.hadoop.hive.server2.thrift.bind.host</p><p>spark.kerberos.principal</p></td> </tr> <tr> <td><p>spark-defaults.conf</p></td> 
<td><p>spark.yarn.historyServer.address</p><p>spark.history.kerberos.principal</p></td> </tr> <tr> <td><p>Tez</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Trino</p></td> <td><p>iceberg.properties</p></td> <td><p>hive.hdfs.trino.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>delta.properties</p></td> <td><p>hive.hdfs.trino.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>config.properties</p></td> <td><p>coordinator</p><p>node-scheduler.include-coordinator</p><p>query.max-memory</p><p>query.max-total-memory</p><p>query.max-memory-per-node</p><p>http-server.authentication.type</p><p>http-server.authentication.krb5.user-mapping.pattern</p><p>http-server.authentication.krb5.service-name</p><p>http-server.authentication.krb5.keytab</p><p>http.authentication.krb5.config</p><p>http-server.https.enabled</p><p>http-server.https.port</p><p>http-server.https.keystore.key</p><p>http-server.https.keystore.path</p><p>event-listener.config-files</p> <div> <div> <i></i> </div> <div> <strong>Note </strong> <p>event-listener.config-files specifies the path where the configuration file of an event listener is stored. This configuration item is available only if you turn on EmrEventListener. 
</p> </div> </div></td> </tr> <tr> <td><p>jvm.config</p></td> <td><p>jvm parameter</p></td> </tr> <tr> <td><p>hudi.properties</p></td> <td><p>hive.hdfs.trino.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>password-authenticator.properties</p></td> <td><p>ldap.url</p><p>ldap.user-bind-pattern</p></td> </tr> <tr> <td><p>hive.properties</p></td> <td><p>hive.hdfs.trino.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>DeltaLake</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Hudi</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Iceberg</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>JindoData</p></td> <td><p>storage.yaml</p></td> <td><p>jindofsx.storage.cache-mode</p><p>storage.watermark.high.ratio</p><p>storage.watermark.low.ratio</p><p>storage.handler.threads</p> <div> <div> <i></i> </div> <div> <strong>Note </strong> <ul> <li><p>JindoData applies to clusters of EMR V5.14.0 or a later minor version and clusters of EMR V3.48.0 or a later minor version. </p></li> <li><p>JindoData is unavailable for clusters of EMR V5.15.0 or a later minor version and clusters of EMR V3.49.0 or a later minor version. You can use <a href="https://www.alibabacloud.com/help/en/document_detail/2579701.html">JindoCache</a> for data caching and <a href="https://www.alibabacloud.com/help/en/document_detail/455181.html">DLF-Auth</a> for authentication. 
</p></li> </ul> </div> </div></td> </tr> <tr> <td><p>Flume</p></td> <td><p>flume-conf.properties</p></td> <td><p>agent_name</p><p>flume-conf.properties</p></td> </tr> <tr> <td><p>Kyuubi</p></td> <td><p>kyuubi-env.sh</p></td> <td><p>kyuubi_java_opts</p></td> </tr> <tr> <td><p>YARN</p></td> <td><p>yarn-site.xml</p></td> <td><p>yarn.nodemanager.resource.memory-mb</p><p>yarn.nodemanager.local-dirs</p><p>yarn.nodemanager.log-dirs</p><p>yarn.nodemanager.resource.cpu-vcores</p><p>yarn.nodemanager.address</p><p>yarn.nodemanager.node-labels.provider.configured-node-partition</p></td> </tr> <tr> <td><p>yarn-env.sh</p></td> <td><p>YARN_RESOURCEMANAGER_HEAPSIZE</p><p>YARN_TIMELINESERVER_HEAPSIZE</p><p>YARN_PROXYSERVER_HEAPSIZE</p><p>YARN_NODEMANAGER_HEAPSIZE</p><p>YARN_RESOURCEMANAGER_HEAPSIZE_MIN</p><p>YARN_TIMELINESERVER_HEAPSIZE_MIN</p><p>YARN_PROXYSERVER_HEAPSIZE_MIN</p><p>YARN_NODEMANAGER_HEAPSIZE_MIN</p></td> </tr> <tr> <td><p>mapred-env.sh</p></td> <td><p>HADOOP_JOB_HISTORYSERVER_HEAPSIZE</p></td> </tr> <tr> <td><p>mapred-site.xml</p></td> <td><p>mapreduce.cluster.local.dir</p></td> </tr> <tr> <td><p>Impala</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>OpenLDAP</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Ranger</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Ranger-Plugin</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>DLF-Auth</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Presto</p></td> <td><p>iceberg.properties</p></td> <td><p>hive.hdfs.presto.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>delta.properties</p></td> <td><p>hive.hdfs.presto.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>hive.properties</p></td> <td><p>hive.hdfs.presto.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>config.properties</p></td> 
<td><p>coordinator</p><p>node-scheduler.include-coordinator</p><p>query.max-memory-per-node</p><p>query.max-total-memory-per-node</p><p>http-server.authentication.type</p><p>http.authentication.krb5.principal-hostname</p><p>http.server.authentication.krb5.service-name</p><p>http.server.authentication.krb5.keytab</p><p>http.authentication.krb5.config</p><p>http-server.https.enabled</p><p>http-server.https.port</p><p>http-server.https.keystore.key</p><p>http-server.https.keystore.path</p></td> </tr> <tr> <td><p>jvm.config</p></td> <td><p>jvm parameter</p></td> </tr> <tr> <td><p>hudi.properties</p></td> <td><p>hive.hdfs.presto.principal</p><p>hive.metastore.client.principal</p></td> </tr> <tr> <td><p>password-authenticator.properties</p></td> <td><p>ldap.url</p><p>ldap.user-bind-pattern</p></td> </tr> <tr> <td><p>Starrocks2</p></td> <td><p>fe.conf</p></td> <td><p>JAVA_OPTS</p><p>meta_dir</p></td> </tr> <tr> <td><p>be.conf</p></td> <td><p>storage_root_path</p><p>JAVA_OPTS</p></td> </tr> <tr> <td><p>Starrocks3</p></td> <td><p>fe.conf</p></td> <td><p>JAVA_OPTS</p><p>meta_dir</p></td> </tr> <tr> <td><p>be.conf</p></td> <td><p>storage_root_path</p><p>JAVA_OPTS</p></td> </tr> <tr> <td><p>Doris</p></td> <td><p>fe.conf</p></td> <td><p>JAVA_OPTS</p><p>JAVA_OPTS_FOR_JDK_9</p><p>meta_dir</p></td> </tr> <tr> <td><p>be.conf</p></td> <td><p>storage_root_path</p></td> </tr> <tr> <td><p>ClickHouse</p></td> <td><p>server-config</p></td> <td><p>interserver_http_host</p></td> </tr> <tr> <td><p>server-metrika</p></td> <td><p>macros.shard</p><p>macros.replica</p></td> </tr> <tr> <td><p>ZooKeeper</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Sqoop</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Knox</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Celeborn</p></td> <td><p>celeborn-env.sh</p></td> <td><p>CELEBORN_WORKER_MEMORY</p><p>CELEBORN_WORKER_OFFHEAP_MEMORY</p><p>CELEBORN_MASTER_MEMORY</p></td> </tr> <tr> 
<td><p>celeborn-defaults.conf</p></td> <td><p>celeborn.worker.storage.dirs</p><p>celeborn.worker.flusher.threads</p></td> </tr> <tr> <td><p>Flink</p></td> <td><p>flink-conf.yaml</p></td> <td><p>security.kerberos.login.principal</p><p>security.kerberos.login.keytab</p></td> </tr> <tr> <td><p>HBase</p></td> <td><p>hbase-env.sh</p></td> <td><p>hbase_master_opts</p><p>hbase_thrift_opts</p><p>hbase_rest_opts</p><p>hbase_regionserver_opts</p></td> </tr> <tr> <td><p>hbase-site.xml</p></td> <td><p>hbase.regionserver.handler.count</p><p>hbase.regionserver.global.memstore.size</p><p>hbase.regionserver.global.memstore.lowerLimit</p><p>hbase.regionserver.thread.compaction.throttle</p><p>hbase.regionserver.thread.compaction.large</p><p>hbase.regionserver.thread.compaction.small</p></td> </tr> <tr> <td><p>HBASE-HDFS</p></td> <td><p>hdfs-env.sh</p></td> <td><p>hadoop_secondarynamenode_opts</p><p>hadoop_namenode_heapsize</p><p>hadoop_datanode_heapsize</p></td> </tr> <tr> <td><p>hdfs-site.xml</p></td> <td><p>dfs.datanode.data.dir</p><p>dfs.datanode.failed.volumes.tolerated</p><p>dfs.datanode.du.reserved</p><p>dfs.datanode.balance.max.concurrent.moves</p></td> </tr> <tr> <td><p>JindoCache</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Kafka</p></td> <td><p>server.properties</p></td> <td><p>broker.id</p><p>num.network.threads</p><p>num.io.threads</p><p>kafka.heap.opts</p><p>log.dirs</p><p>kafka.public-access.ip</p><p>listeners</p><p>advertised.listeners</p> <div> <div> <i></i> </div> <div> <strong>Note </strong> <p><code>kafka.public-access.ip</code> specifies the public IP address of a Kafka broker. You can use this configuration item to configure listeners that have public IP addresses. 
</p> </div> </div></td> </tr> <tr> <td><p>kafka-internal-config</p></td> <td><p>broker_id</p></td> </tr> <tr> <td><p>user_params</p></td> <td><p>is_local_disk_instance</p></td> </tr> <tr> <td><p>Kudu</p></td> <td><p>master.gflags</p></td> <td><p>fs_data_dirs</p><p>fs_wal_dir</p><p>fs_metadata_dir</p><p>log_dir</p></td> </tr> <tr> <td><p>tserver.gflags</p></td> <td><p>fs_data_dirs</p><p>fs_wal_dir</p><p>fs_metadata_dir</p><p>log_dir</p></td> </tr> <tr> <td><p>Paimon</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> <tr> <td><p>Phoenix</p></td> <td><p>None</p></td> <td><p>None</p></td> </tr> </tbody> </table>