Data Map tracks the full lifecycle of your data — where it originates, how it moves through pipelines, and what depends on it. Use data lineages to debug broken pipelines, assess the impact of schema changes before applying them, and satisfy compliance audits that require traceable data flows.

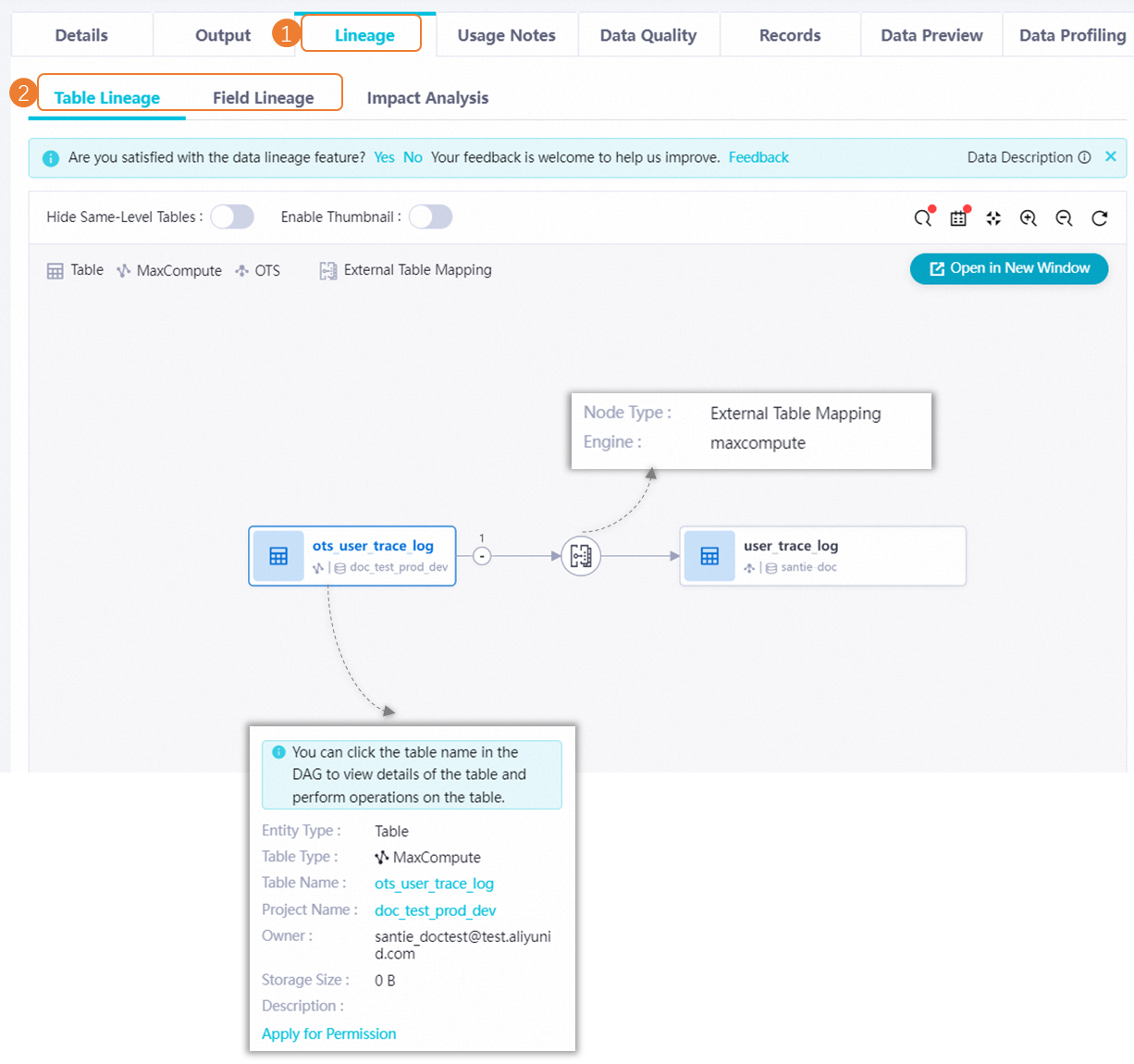

From a table's or DataService Studio API's details page, the Lineage tab gives you both table-level and field-level lineage graphs, along with tools to act on what you find.

Data Map builds lineages from scheduling jobs and data forwarding metadata. Lineages from temporary queries and other manual operations are not included. Offline data lineage is updated on a T+1 basis: lineage produced on a given day becomes visible the following day.

Table lineages

Access the lineage view

Find a table in Data Map and open its details page.

Click the Lineage tab.

From the Lineage tab, you can:

View table-level and field-level lineage graphs

Run impact analysis to see which downstream tables are affected by a change

Retrieve and download the list of descendant tables as a local file

Send change notifications by email
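Impact analysis amounts to walking the lineage graph downstream from the table you plan to change. The following Python sketch illustrates the idea; it is not Data Map's implementation. It assumes table-level lineage is available as (upstream, downstream) pairs, and the table names and the `descendants` helper are hypothetical.

```python
from collections import deque


def descendants(edges, start):
    """Breadth-first walk over (upstream, downstream) lineage edges.

    Returns (table, depth) pairs for every table reachable from `start`,
    where depth is the number of hops from `start`.
    """
    children = {}
    for upstream, downstream in edges:
        children.setdefault(upstream, []).append(downstream)

    seen = {start}
    result = []
    queue = deque([(start, 0)])
    while queue:
        table, depth = queue.popleft()
        for child in children.get(table, []):
            if child not in seen:  # a table may be reachable by several paths
                seen.add(child)
                result.append((child, depth + 1))
                queue.append((child, depth + 1))
    return result


# Hypothetical lineage edges, for illustration only.
EDGES = [
    ("ods_orders", "dwd_orders"),
    ("dwd_orders", "dws_orders_daily"),
    ("dwd_orders", "ads_order_report"),
    ("dws_orders_daily", "ads_order_report"),
]

if __name__ == "__main__":
    for table, depth in descendants(EDGES, "ods_orders"):
        print(depth, table)
```

Tracking the hop count alongside each table makes it easy to separate direct dependents from those further downstream when deciding whom to notify.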

Limitations by data source

Not all data sources support lineage collection out of the box. Some require additional configuration; others have SQL-level restrictions.

E-MapReduce

To collect metadata for a DataLake or custom cluster, configure EMR-HOOK in the cluster first. Without EMR-HOOK, Data Map cannot display lineages for that cluster. See Configure EMR-HOOK for Hive.

Lineage is not available for Spark clusters created on the EMR on ACK page.

Lineage is available for EMR Serverless Spark clusters.

Lineage is not available for tasks developed using an EMR Presto node.

AnalyticDB for MySQL

Submit a ticket to enable data lineage for your AnalyticDB for MySQL instance before using the feature.

AnalyticDB for MySQL supports real-time lineage when the metadata source is AnalyticDB for Spark; in that case, data is collected automatically. To enable real-time lineage, set the following Spark parameter:

spark.sql.queryExecutionListeners = com.aliyun.dataworks.meta.lineage.LineageListener

SQL statements that do not generate lineage

Data Map cannot parse lineage from SQL that uses JOIN, UNION, the wildcard *, or subqueries. For example:

-- Lineage not supported: contains a wildcard (*)

INSERT INTO test SELECT * FROM test1, test2 WHERE test1.id = test2.id

-- Lineage not supported: contains a subquery

SELECT column1, column2 FROM table1 WHERE column3 IN (SELECT column4 FROM table2 WHERE column5 = 'value')

SQL statements that generate lineage
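Before scheduling a statement, it can help to screen it for the constructs that block lineage parsing in AnalyticDB for MySQL: JOIN, UNION, the wildcard *, and subqueries. The following is a minimal Python sketch of such a pre-check. It is a regex heuristic, not a SQL parser and not part of Data Map; the `lineage_blockers` name is hypothetical, and the check is deliberately conservative (any `*`, including `COUNT(*)` or multiplication, is flagged).

```python
import re


def lineage_blockers(sql: str) -> list[str]:
    """Return the constructs in `sql` that are listed as unsupported for lineage."""
    # Blank out string literals and line comments so that keywords
    # appearing inside them are not counted (heuristic, not a full lexer).
    text = re.sub(r"'(?:[^']|'')*'", "''", sql)
    text = re.sub(r"--[^\n]*", " ", text)

    blockers = []
    if re.search(r"\bJOIN\b", text, re.IGNORECASE):
        blockers.append("JOIN")
    if re.search(r"\bUNION\b", text, re.IGNORECASE):
        blockers.append("UNION")
    if "*" in text:
        blockers.append("wildcard")
    # A SELECT directly after an opening parenthesis is treated as a subquery.
    if re.search(r"\(\s*SELECT\b", text, re.IGNORECASE):
        blockers.append("subquery")
    return blockers


if __name__ == "__main__":
    print(lineage_blockers("INSERT INTO test SELECT id, name FROM test1 WHERE name = 'test';"))
```

An empty list means none of the listed constructs were detected; it does not guarantee that lineage will be generated.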

Lineage is captured for INSERT INTO, INSERT OVERWRITE, and CREATE TABLE AS SELECT when specific columns (not *) are listed:

-- Create a table from specific columns

CREATE TABLE test AS SELECT id, name FROM test1;

-- Insert specific columns with a filter

INSERT INTO test SELECT id, name FROM test1 WHERE name = 'test';

-- Overwrite using specific columns

INSERT OVERWRITE INTO db_name.test SELECT id, name FROM test1;

CDH

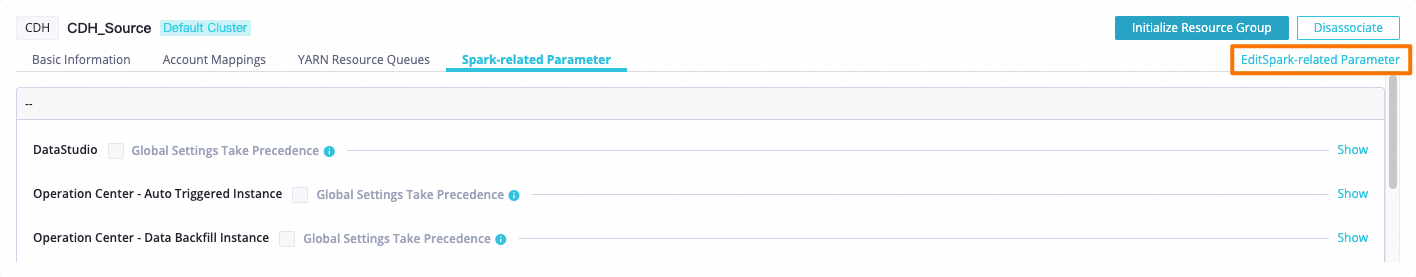

To display table lineage for CDH Spark SQL and CDH Spark nodes, add a Spark parameter to the relevant data transformation module in Management Center.

Log on to the DataWorks console. In the top navigation bar, select the region. In the left-side navigation pane, choose More > Management Center. Select your workspace from the drop-down list and click Go to Management Center.

In the left navigation pane, click Cluster Management and locate the target CDH cluster.

Click Edit Spark-related Parameter.

Add the following Spark parameter for the data transformation module. For example, to capture lineage for CDH Spark SQL and CDH Spark nodes under Operation Center > Auto Triggered Instances, add:

| Field | Value |
|---|---|
| Spark Property Name | spark.sql.queryExecutionListeners |
| Spark Property Value | com.aliyun.dataworks.meta.lineage.LineageListener |

Click OK.
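In Spark, `spark.sql.queryExecutionListeners` takes a comma-separated list of class names, so a cluster that already sets this property for another listener needs the lineage listener appended rather than substituted. The following Python sketch shows one way to compute the merged value before entering it in the console. The `with_lineage_listener` helper and the `com.example.AuditListener` class are hypothetical, and the sketch assumes the lineage listener class is already available on the cluster's classpath.

```python
LISTENER_KEY = "spark.sql.queryExecutionListeners"
LINEAGE_LISTENER = "com.aliyun.dataworks.meta.lineage.LineageListener"


def with_lineage_listener(conf: dict[str, str]) -> dict[str, str]:
    """Return a copy of `conf` whose listener list includes the lineage listener."""
    # Split the existing comma-separated value, dropping empty entries.
    existing = [c.strip() for c in conf.get(LISTENER_KEY, "").split(",") if c.strip()]
    if LINEAGE_LISTENER not in existing:
        existing.append(LINEAGE_LISTENER)
    merged = dict(conf)  # leave the caller's dict untouched
    merged[LISTENER_KEY] = ",".join(existing)
    return merged


if __name__ == "__main__":
    # Hypothetical existing configuration with another listener already set.
    current = {LISTENER_KEY: "com.example.AuditListener"}
    print(with_lineage_listener(current)[LISTENER_KEY])
```

The helper is idempotent: applying it to a configuration that already contains the lineage listener returns the same value.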

Lindorm

Lineage collection is only supported in instance mode. Connection string mode is not supported.

To display table lineage for Lindorm Spark and Lindorm Spark SQL nodes, add a Spark parameter in Management Center.

Log on to the DataWorks console. In the top navigation bar, select the region. In the left-side navigation pane, choose More > Management Center. Select your workspace and click Go to Management Center.

In the left navigation pane, click Computing Resource and locate your Lindorm computing resource.

Click Edit Spark-related Parameter.

Add the following Spark parameter for the data transformation module. For example, to capture lineage for Lindorm Spark and Lindorm Spark SQL nodes under Operation Center > Auto Triggered Instances, add:

| Field | Value |
|---|---|
| Spark Property Name | spark.sql.queryExecutionListeners |
| Spark Property Value | com.aliyun.dataworks.meta.lineage.LineageListener |

Click OK.

Lineage support by data source

The table below shows which lineage types each data source supports, broken down by Data Integration (sync jobs) and Data Studio (SQL-based transformation jobs).

Legend: Y = supported, N = not supported

| Data source | Data Integration (table-level) | Data Integration (field-level) | Data Studio (table-level) | Data Studio (field-level) |
|---|---|---|---|---|
| AnalyticDB for MySQL | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE N | Same as table-level |
| AnalyticDB for PostgreSQL | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE N | Same as table-level |
| ClickHouse | N | N | N | N |
| CDH/CDP | N | N | Hive, Impala, Spark, Spark SQL: INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE N | Same as table-level |
| E-MapReduce | Batch sync Y (OSS, Hive) / Real-time sync N | Batch sync Y (OSS, Hive) / Real-time sync N | Hive, Spark (spark-submit), Spark SQL (Hudi format supported), Shell (Hive SQL via beeline): INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE N | Same as table-level |
| Hologres | Batch sync Y / Real-time sync Y (from MySQL, Kafka, or Log Service) | Batch sync Y / Real-time sync N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE Y | Same as table-level |
| Kafka | Batch sync Y / Real-time sync Y (to MaxCompute or Hologres) | N | N | N |
| Lindorm | N | N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE TABLE Y, CREATE TABLE LIKE Y | Same as table-level |
| MaxCompute | Batch sync Y / Real-time sync Y (from MySQL, Kafka, PolarDB for MySQL, or Log Service) | Batch sync Y / Real-time sync N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE Y | Same as table-level |
| MySQL | Batch sync Y / Real-time sync Y (to MaxCompute or Hologres) | Batch sync Y / Real-time sync N | N | N |
| Oracle | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |
| OceanBase | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |
| OSS | Batch sync Y / Real-time sync N | N | N | N |
| PolarDB for MySQL | Batch sync Y / Real-time sync Y (to MaxCompute) | Batch sync Y / Real-time sync N | N | N |
| PolarDB for PostgreSQL | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |
| PostgreSQL | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |
| StarRocks | N | N | INSERT INTO/OVERWRITE Y, CREATE AS SELECT Y, CREATE EXTERNAL TABLE N | Same as table-level |
| SQL Server | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |
| Tablestore (OTS) | Batch sync Y / Real-time sync N | Batch sync Y / Real-time sync N | N | N |

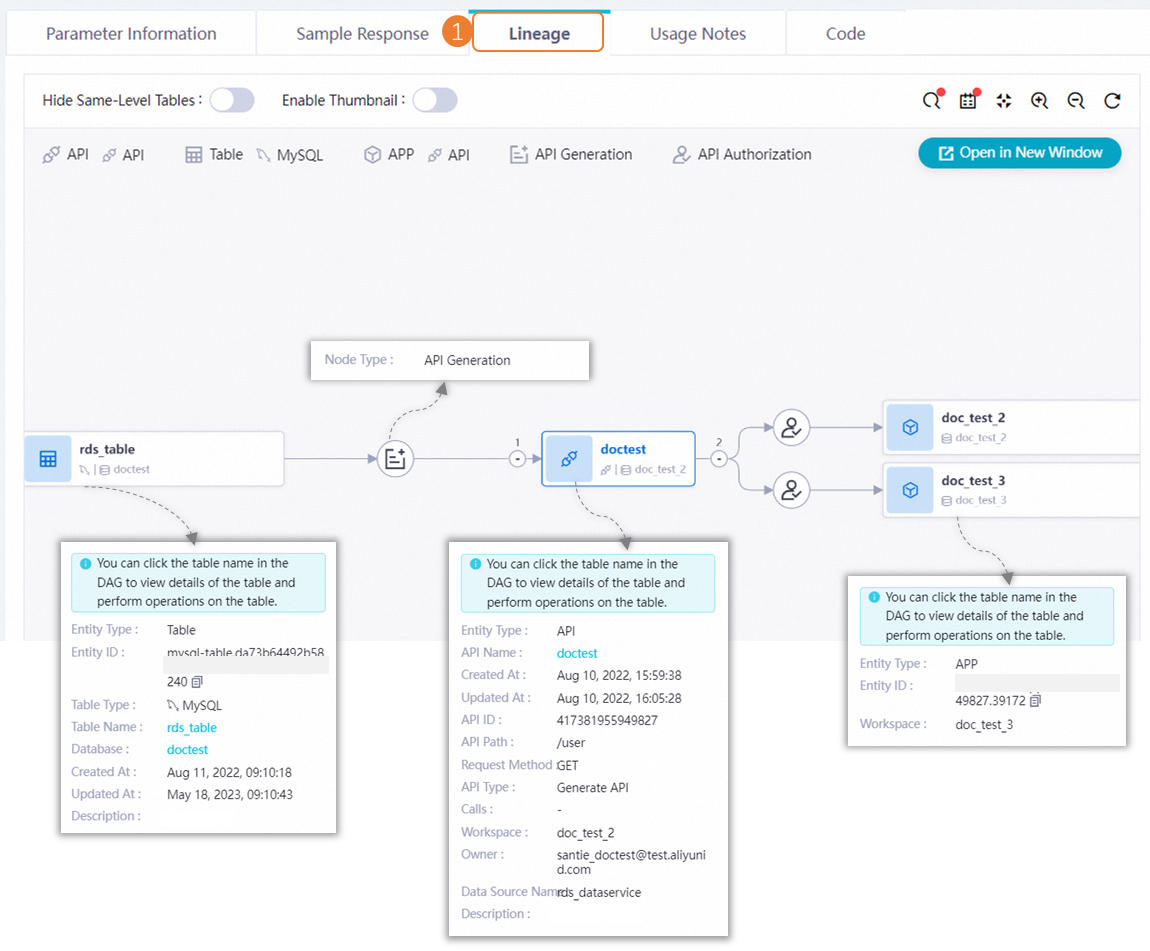

DataService Studio API lineages

Find a DataService Studio API in Data Map and open its details page. Click the Lineage tab to view the API's lineage.