RestAPI Reader reads data from RESTful APIs and writes it to a destination store through Data Integration. Configure an HTTP request URL and RestAPI Reader fetches the response, converts the JSON data to supported types, and passes it to a writer.

This topic shows four common patterns: time-range queries, paginated results, POST requests, and loop traversal with a for-each node.

For parameter reference, see RestAPI (HTTP). For RestAPI Writer configuration, see RestAPI (HTTP) and Writer script parameters.

Capabilities

| Dimension | Details |
|---|---|
| Supported response format | JSON only |
| Supported data types | INT, BOOLEAN, DATE, DOUBLE, FLOAT, LONG, STRING |
| Request methods | GET, POST over HTTP |
| Authentication | None, Basic Auth, Token Auth, Alibaba Cloud API signature |

Authentication options

Use the following guidance to identify which authentication method your API requires:

- None: Your API is publicly accessible without credentials.
- Basic Auth: Your API documentation mentions keywords such as "Basic Auth", "Basic HTTP", or "Authorization: Basic". During sync, the username and password are passed in the RESTful API URL for authentication.
- Token Auth: Your API documentation mentions keywords such as "bearer token", "token authentication", or "Authorization: Bearer". Pass a fixed token in the request header, for example: {"Authorization":"Bearer TokenXXXXXX"}. For APIs with custom encryption, provide the encrypted value as the AuthToken.
- Alibaba Cloud API signature: Your API uses Alibaba Cloud's signature authentication scheme.
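To help tell Basic Auth and Token Auth apart in API documentation, the following sketch builds the Authorization header for the standard HTTP Basic and Bearer schemes. The function names and sample credentials are hypothetical, and the code only illustrates the header formats; it does not describe how Data Integration builds its requests internally.

```python
import base64


def basic_auth_header(username: str, password: str) -> dict:
    """Build a standard HTTP Basic header: base64 of "username:password"."""
    credentials = f"{username}:{password}".encode("utf-8")
    encoded = base64.b64encode(credentials).decode("ascii")
    return {"Authorization": "Basic " + encoded}


def bearer_auth_header(token: str) -> dict:
    """Build a Bearer header that carries a fixed token."""
    return {"Authorization": f"Bearer {token}"}


# Hypothetical credentials, for illustration only.
print(basic_auth_header("user", "pass"))
print(bearer_auth_header("TokenXXXXXX"))
```

If your API documentation shows a header in either of these shapes, the corresponding authentication option is likely the one to select.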

RestAPI data sources require an exclusive resource group for Data Integration. Select and test network connectivity to the resource group before configuring a task.

Prerequisites

Before you begin, ensure that you have:

- A DataWorks workspace with DataStudio access
- An exclusive resource group for Data Integration, with network connectivity to your RESTful API endpoint tested and confirmed
- A MaxCompute data source configured in your workspace (for the examples in this topic)

Example 1: Read data within a time range

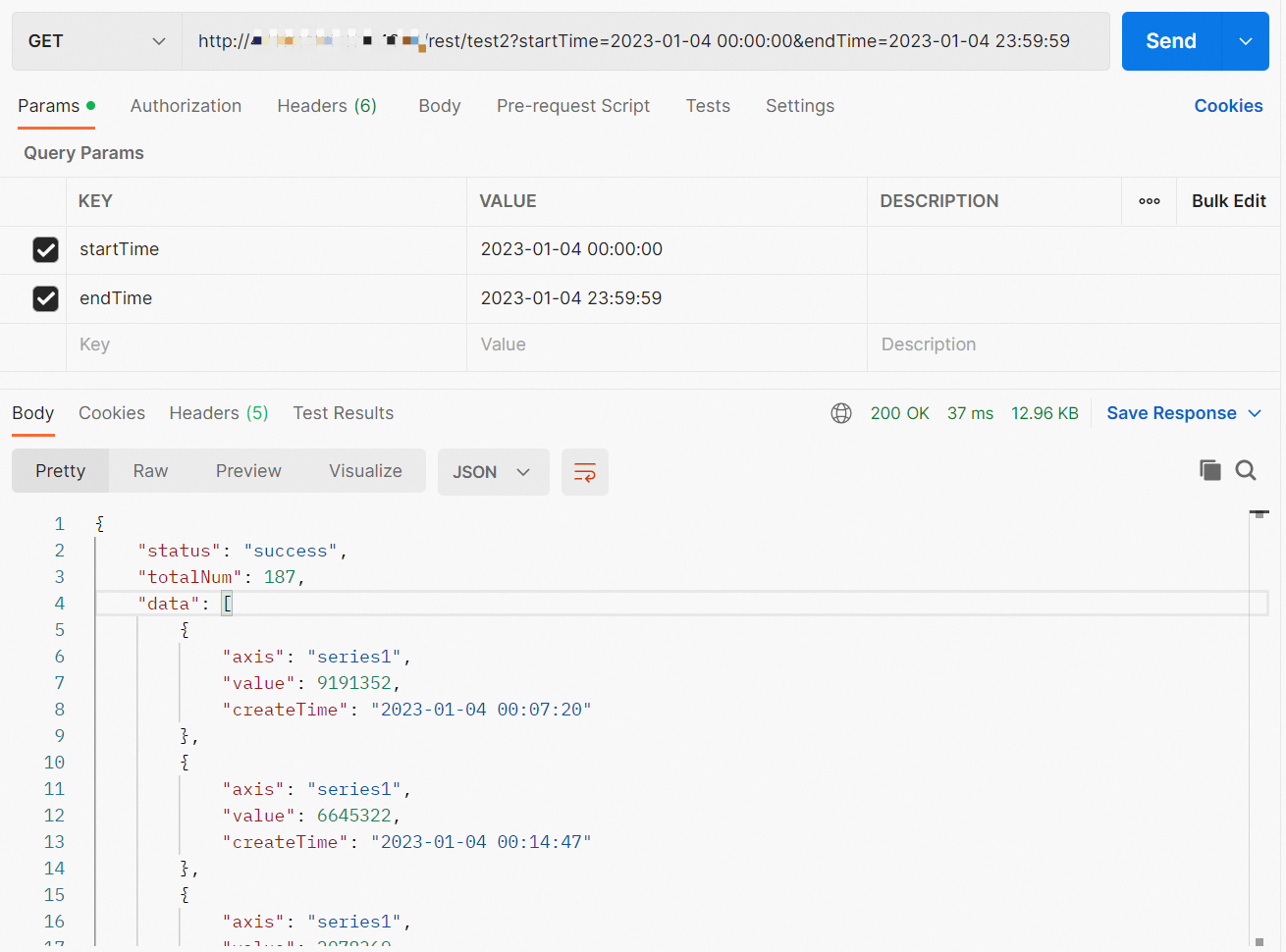

This example synchronizes time-range query results from a GET-based RESTful API to a MaxCompute partitioned table. The API accepts startTime and endTime parameters and returns matching records.

The API used here is for reference only. Adjust configurations to match your own API.

Sample API

Request:

    http://TestAPIAddress:Port/rest/test2?startTime=<StartTime>&endTime=<EndTime>

Response:

    {
        "status": "success",
        "totalNum": 187,
        "data": [
            {"axis": "series1", "value": 9191352, "createTime": "2023-01-04 00:07:20"},
            {"axis": "series1", "value": 6645322, "createTime": "2023-01-04 00:14:47"},
            {"axis": "series1", "value": 2078369, "createTime": "2023-01-04 00:22:13"},
            {"axis": "series1", "value": 7325410, "createTime": "2023-01-04 00:29:30"},
            {"axis": "series1", "value": 7448456, "createTime": "2023-01-04 00:37:04"},
            {"axis": "series1", "value": 5808077, "createTime": "2023-01-04 00:44:30"},
            {"axis": "series1", "value": 5625821, "createTime": "2023-01-04 00:52:06"}
        ]
    }

The queried records are in data. The returned fields are axis, value, and createTime.
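The relationship between the response, the JSON path that stores the data, and the field names can be sketched in a few lines of Python. This is only an illustration of the idea under simplifying assumptions: extract_rows is a hypothetical helper, the response is shortened to two records, and the actual conversion performed by RestAPI Reader may differ.

```python
import json

# Shortened copy of the sample response above.
SAMPLE_RESPONSE = """
{
  "status": "success",
  "totalNum": 187,
  "data": [
    {"axis": "series1", "value": 9191352, "createTime": "2023-01-04 00:07:20"},
    {"axis": "series1", "value": 6645322, "createTime": "2023-01-04 00:14:47"}
  ]
}
"""


def extract_rows(response_text, data_path, fields):
    """Return one tuple per record found under data_path, in field order."""
    records = json.loads(response_text)[data_path]
    # Key lookup is case-sensitive: "createtime" does not match "createTime".
    return [tuple(record.get(name) for name in fields) for record in records]


rows = extract_rows(SAMPLE_RESPONSE, "data", ["axis", "value", "createTime"])
for row in rows:
    print(row)
```

In this sketch a misspelled or wrongly cased field name yields None for every record, which is why the field names in the mapping need to match the API response exactly.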

Step 1: Create a MaxCompute partitioned table

    CREATE TABLE IF NOT EXISTS ods_xiaobo_rest2
    (
        `axis` STRING,
        `value` BIGINT,
        `createTime` STRING
    )
    PARTITIONED BY
    (
        ds STRING
    )
    LIFECYCLE 3650;

If you work in a workspace in standard mode, deploy the table to the production environment to make it visible in Data Map.

With the write mode set to overwriting, the sync task overwrites partition data on each run, making reruns safe and preventing duplicate records.
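The reasoning behind that statement can be illustrated with a small in-memory model. The sketch below is not MaxCompute code: the dictionary stands in for a partitioned table, and write_partition is a hypothetical helper that contrasts overwriting a partition with appending to it when the same run is executed twice.

```python
def write_partition(table, partition, rows, mode):
    """Write rows into an in-memory table keyed by partition value."""
    if mode == "overwrite":
        # Replace whatever the partition already contains.
        table[partition] = list(rows)
    elif mode == "append":
        # Keep existing rows and add the new ones after them.
        table.setdefault(partition, []).extend(rows)
    else:
        raise ValueError(f"unknown mode: {mode}")
    return table


batch = [("series1", 9191352, "2023-01-04 00:07:20")]

overwritten, appended = {}, {}
for _ in range(2):  # simulate the same task instance running twice
    write_partition(overwritten, "20230104", batch, "overwrite")
    write_partition(appended, "20230104", batch, "append")

print(len(overwritten["20230104"]), len(appended["20230104"]))
```

After two runs the overwritten partition still holds one row, while the appended partition holds the same row twice.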

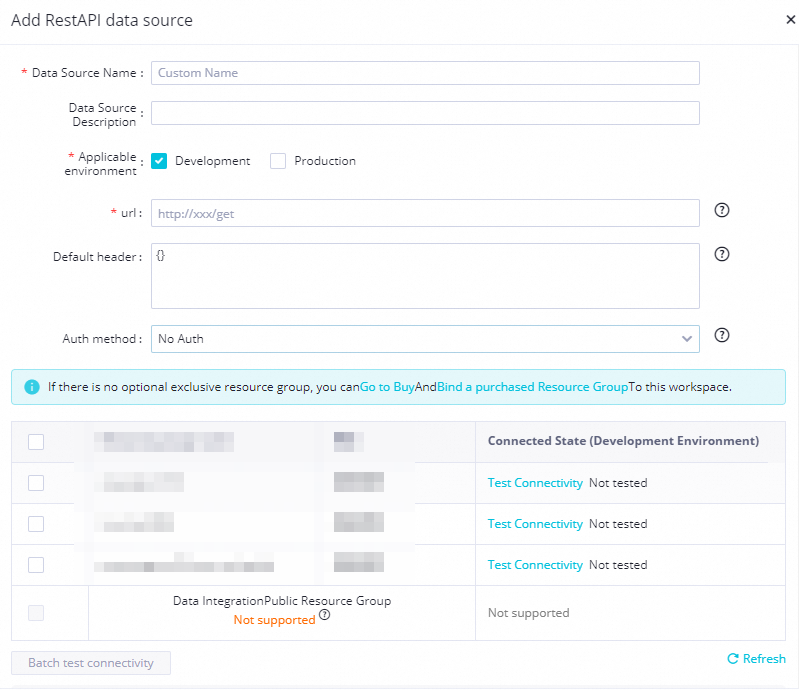

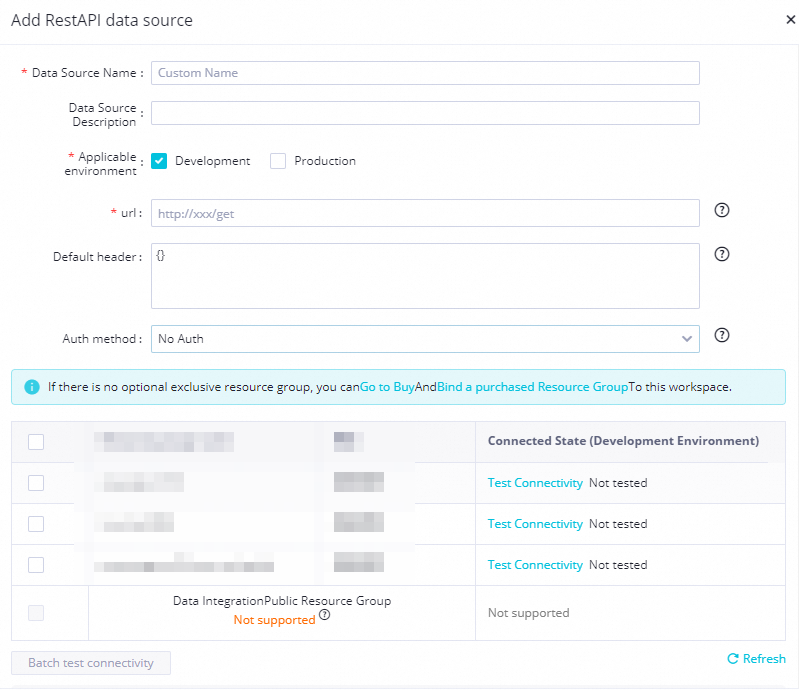

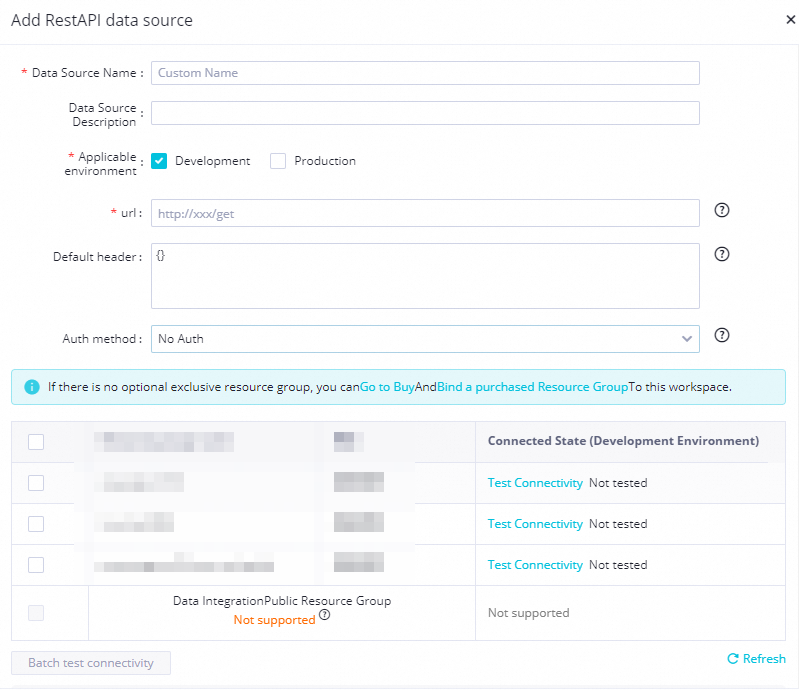

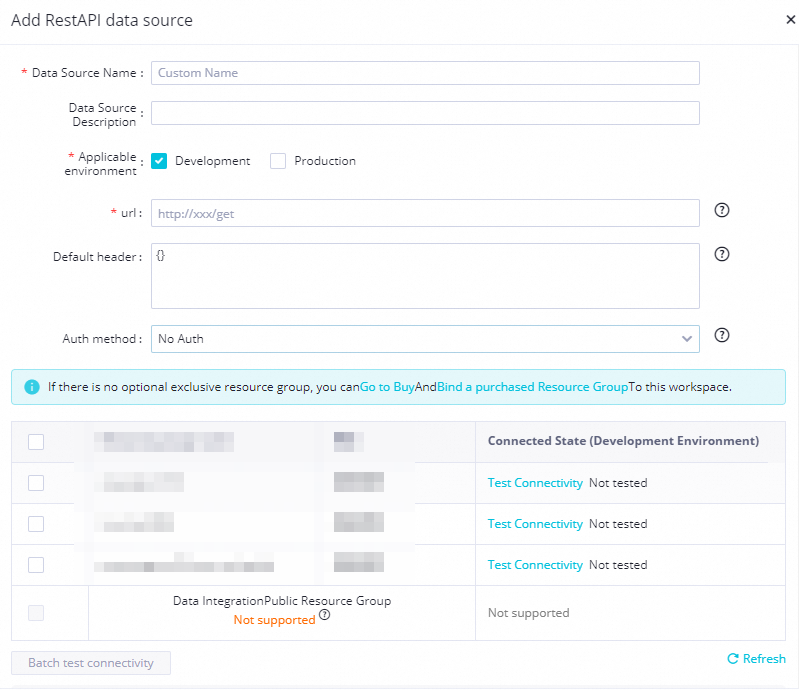

Step 2: Add a RestAPI data source

Add a RestAPI data source to your workspace. For details, see Add a RestAPI data source.

Key fields:

- url: The RESTful API endpoint URL.
- Auth method: The authentication method supported by your data source and its required parameters.

Step 3: Configure the batch synchronization task

On the DataStudio page, create a batch synchronization task using the codeless UI. For details, see Use the codeless UI.

Source settings:

| Setting | Value |
|---|---|
| Data source | The RestAPI data source added above |
| Request Method | GET |
| Data Structure | Array Data |
| json path to store data | data |

Request parameters: Combine request parameters with scheduling parameters to sync data daily.

    startTime=${extract_day} ${start_time}&endTime=${extract_day} ${end_time}

Add these scheduling parameters in the task's scheduling properties:

    extract_day=${yyyy-mm-dd}
    start_time=00:00:00
    end_time=23:59:59

When the task runs on 2023-01-05, extract_day resolves to 2023-01-04, producing:

    startTime=2023-01-04 00:00:00&endTime=2023-01-04 23:59:59

Destination settings:

| Setting | Value |
|---|---|
| Data source | The MaxCompute data source |
| Table | ods_xiaobo_rest2 |
| Partition information | ${bizdate} (add the scheduling parameter bizdate=$bizdate) |

When the task runs on 2023-01-05, data is written to partition 20230104.
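The date arithmetic in this example can be checked with a short Python sketch. It assumes, as the example states, that a run on a given day works on the previous day's data; resolve_parameters is a hypothetical helper and is not part of DataWorks.

```python
from datetime import date, timedelta


def resolve_parameters(run_date: date) -> dict:
    """Resolve the example's request parameters and partition for a run date."""
    # Assumption taken from the example: the data date is the run date minus one day.
    data_date = run_date - timedelta(days=1)
    extract_day = data_date.strftime("%Y-%m-%d")
    return {
        "request_parameters": (
            f"startTime={extract_day} 00:00:00&endTime={extract_day} 23:59:59"
        ),
        "partition": data_date.strftime("%Y%m%d"),
    }


resolved = resolve_parameters(date(2023, 1, 5))
print(resolved["request_parameters"])
print(resolved["partition"])
```

For a run on 2023-01-05 the sketch produces the same query string and partition value as described above, which is a quick way to sanity-check a parameter expression before backfilling.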

Field mappings: Specify the fields returned by the API (axis, value, createTime). Field names are case-sensitive. Click Map Fields with Same Name to auto-map, or drag lines to map manually.

Step 4: Test the task

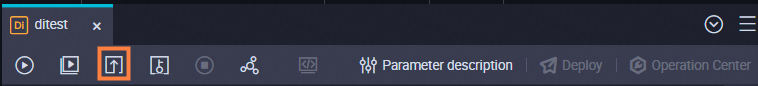

Click Run with Parameters in the toolbar. Specify values for the scheduling parameters in the dialog.

After the run, check the logs at the bottom of the configuration tab to confirm the parameter values are correct.

Step 5: Verify the data

Create an ad hoc query node in DataStudio to check the result:

    SELECT * FROM ods_xiaobo_rest2 WHERE ds='20230104' ORDER BY createtime;

20230104 is the partition written in the test run above.

Step 6: Deploy and backfill

Deploy the task to the production environment. For standard mode workspaces, see Publishing process for tasks in a standard mode workspace.

Find the deployed task on the Cycle Task page in Operation Center and backfill historical data as needed. See Backfill data for an auto triggered task and view data backfill instances generated for the task.

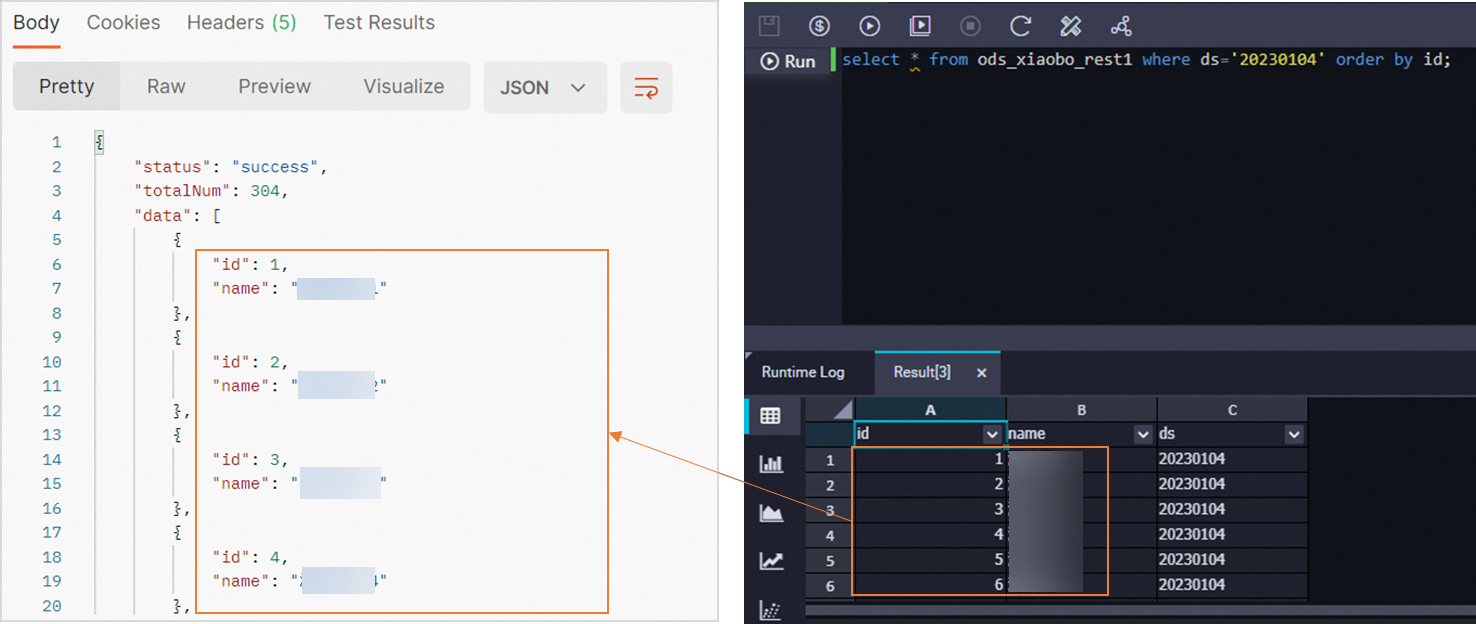

Example 2: Read paginated results

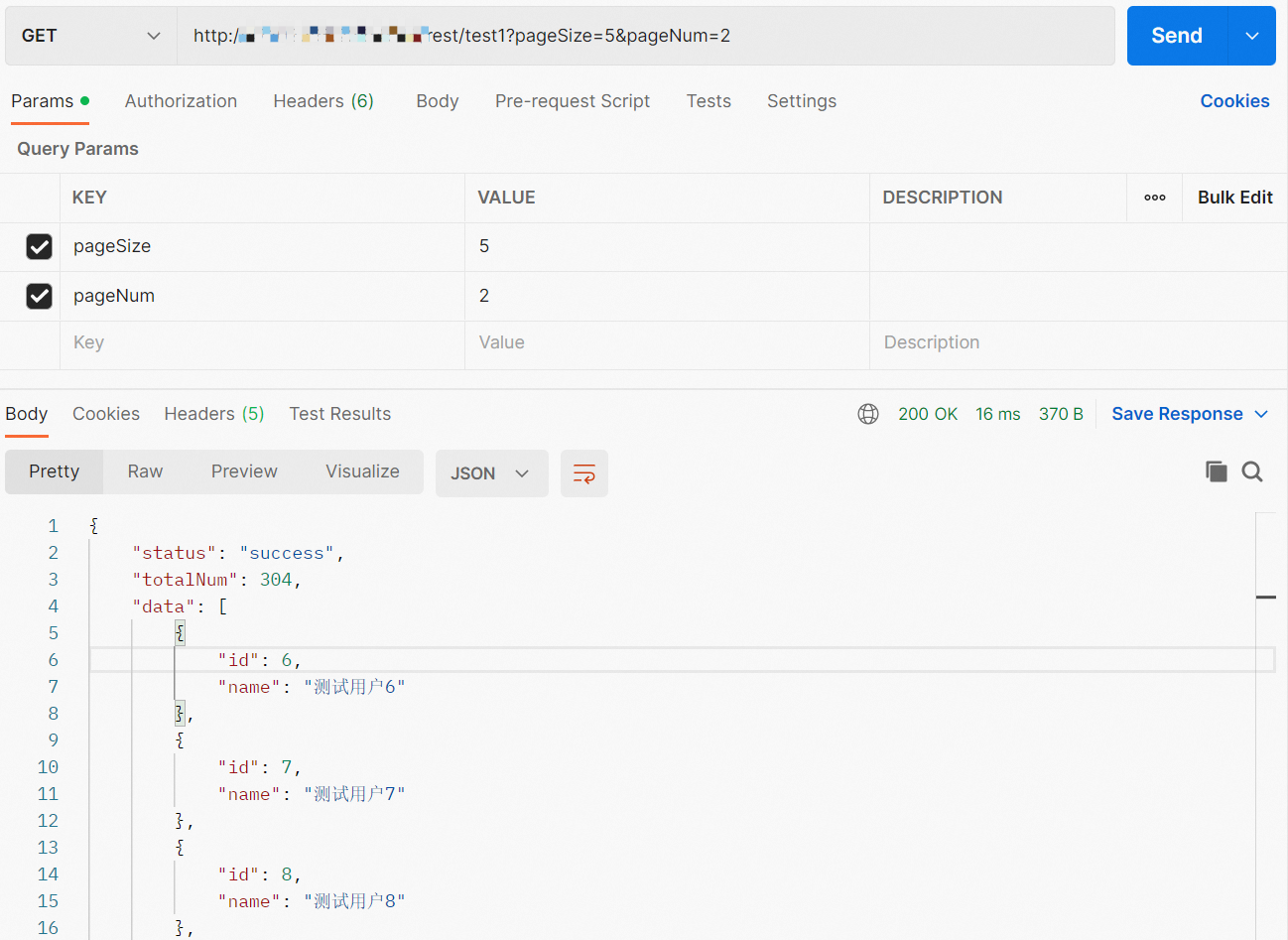

This example synchronizes paged data from a GET-based RESTful API to MaxCompute. The API uses pageSize and pageNum to control pagination.

The API used here is for reference only. Adjust configurations to match your own API.

Sample API

Request:

    http://TestAPIAddress:Port/rest/test1?pageSize=5&pageNum=1

pageSize controls how many records to return per page. pageNum specifies the page number.

Response:

    {
        "status": "success",
        "totalNum": 304,
        "data": [
            {"id": 6, "name": "User 6"},
            {"id": 7, "name": "User 7"},
            {"id": 8, "name": "User 8"},
            {"id": 9, "name": "User 9"},
            {"id": 10, "name": "User 10"}
        ]
    }

The queried records are in data. The returned fields are id and name.

Step 1: Create a MaxCompute partitioned table

    CREATE TABLE IF NOT EXISTS ods_xiaobo_rest1
    (
        `id` BIGINT,
        `name` STRING
    )
    PARTITIONED BY
    (
        ds STRING
    )
    LIFECYCLE 3650;

If you work in a workspace in standard mode, deploy the table to the production environment to view it in Data Map.

Step 2: Add a RestAPI data source

Add a RestAPI data source to your workspace. For details, see Add a RestAPI data source.

Step 3: Configure the batch synchronization task

On the DataStudio page, create a batch synchronization task using the codeless UI. For details, see Use the codeless UI.

Source settings:

| Setting | Value |
|---|---|
| Data source | The RestAPI data source added above |
| Request Method | GET |
| Data Structure | Array Data |
| json path to store data | data |
| Request parameters | pageSize=50 |
| Number of requests | Multiple requests |

For Multiple requests, configure the pagination traversal:

| Parameter | Value | Description |
|---|---|---|
| Multiple requests parameter | pageNum | The page number parameter in the API |
| StartIndex | 1 | First page number |
| Step | 1 | Increment per request |
| EndIndex | 100 | Last page to fetch |

Setting pageSize=50 tells the API to return 50 records per page. Keep this value reasonable — an excessively large pageSize increases load on the API server and the sync task.

With this configuration, Data Integration sends requests from pageNum=1 to pageNum=100 in steps of 1, collecting all returned records.
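The traversal can be pictured as a simple loop over page numbers. The sketch below is a hypothetical model of it, not the Data Integration implementation: page_requests generates the query string for each request, treating EndIndex as inclusive (as the description above suggests), and pages_needed estimates how many pages a given totalNum requires.

```python
import math


def page_requests(page_size, start_index, end_index, step=1, page_param="pageNum"):
    """Yield one query string per page, from start_index to end_index inclusive."""
    for page in range(start_index, end_index + 1, step):
        yield f"pageSize={page_size}&{page_param}={page}"


def pages_needed(total_records, page_size):
    """Smallest number of pages that covers total_records."""
    return math.ceil(total_records / page_size)


requests_to_send = list(page_requests(page_size=50, start_index=1, end_index=100))
print(len(requests_to_send), requests_to_send[0], requests_to_send[-1])
print(pages_needed(304, 50))
```

With the sample totalNum of 304 and pageSize=50, seven pages would cover every record, so an EndIndex of 100 leaves generous headroom. How the API responds to page numbers past the last page depends on the API, so check that behavior before choosing a large EndIndex.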

Destination settings:

| Setting | Value |
|---|---|
| Data source | The MaxCompute data source |
| Table | ods_xiaobo_rest1 |
| Partition information | ${bizdate} (add the scheduling parameter bizdate=$bizdate) |

When the task runs on 2023-01-05, data is written to partition 20230104.

Field mappings: Specify id and name. Field names are case-sensitive. Click Map Fields with Same Name to auto-map.

Step 4: Test the task

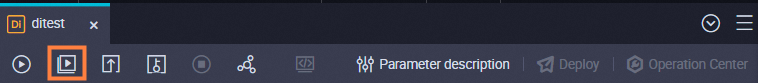

Click Run with Parameters and specify the scheduling parameter values.

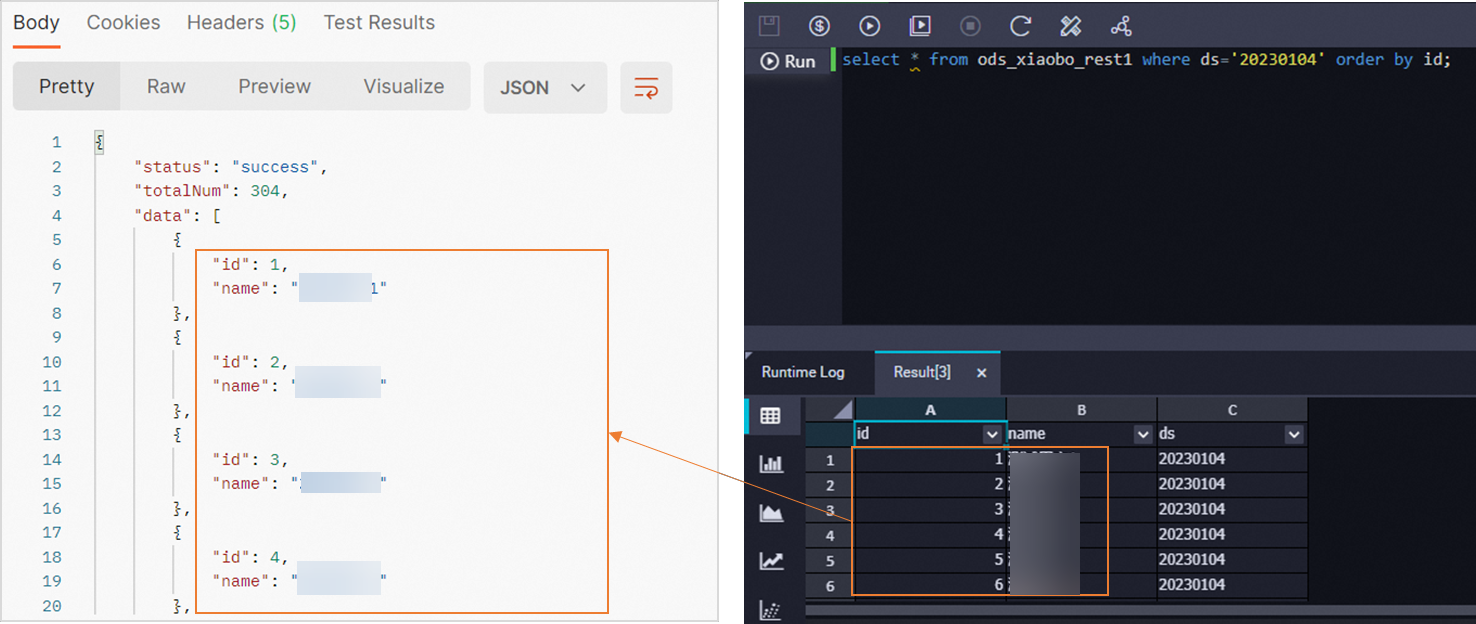

Step 5: Verify the data

SELECT * FROM ods_xiaobo_rest1 WHERE ds='20230104' ORDER BY id;

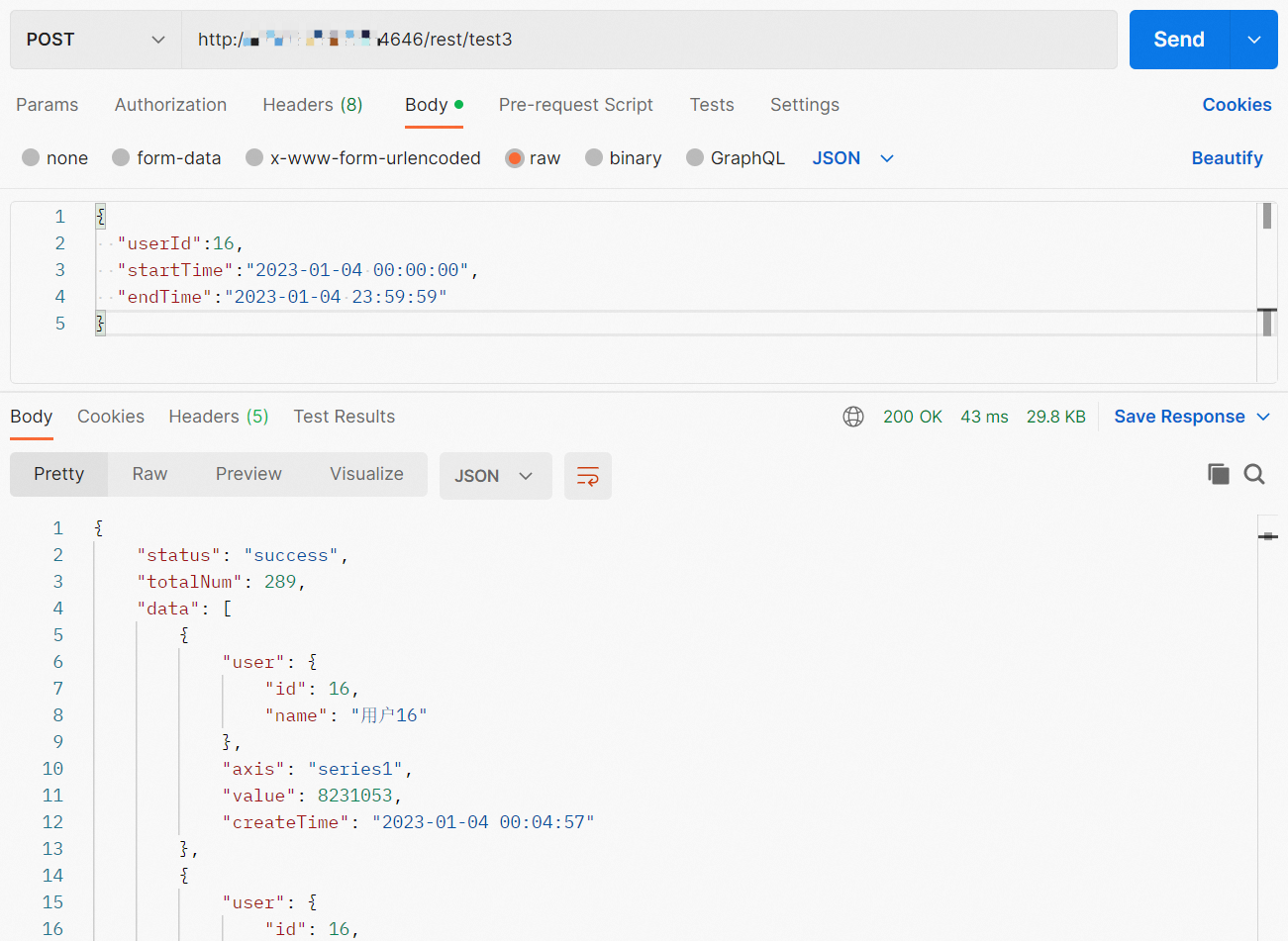

Example 3: Read data from a POST-based API

This example synchronizes data from a POST-based RESTful API. The request body is JSON and includes a user ID and time range. The API returns nested records.

The API used here is for reference only. Adjust configurations to match your own API.

Sample API

Request:

    http://TestAPIAddress:Port/rest/test3

Request body:

    {
        "userId": 16,
        "startTime": "2023-01-04 00:00:00",
        "endTime": "2023-01-04 23:59:59"
    }

Response:

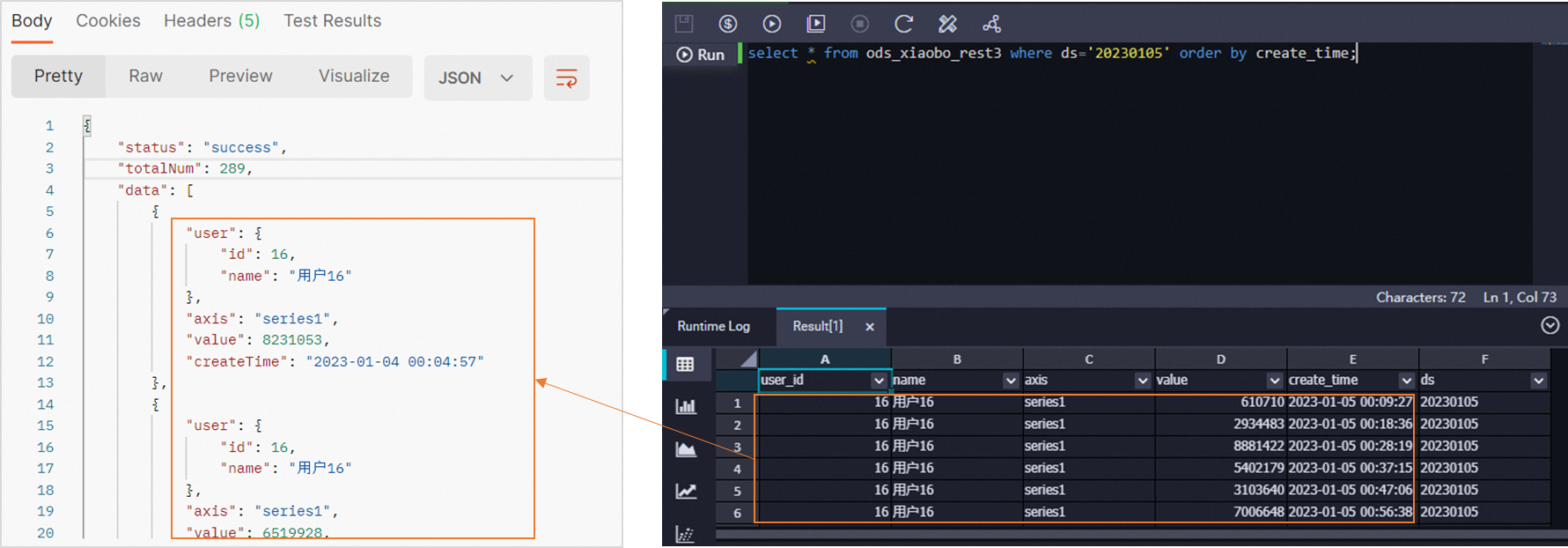

    {
        "status": "success",
        "totalNum": 289,
        "data": [
            {
                "user": {"id": 16, "name": "User 16"},
                "axis": "series1",
                "value": 8231053,
                "createTime": "2023-01-04 00:04:57"
            },
            {
                "user": {"id": 16, "name": "User 16"},
                "axis": "series1",
                "value": 6519928,
                "createTime": "2023-01-04 00:09:51"
            },
            {
                "user": {"id": 16, "name": "User 16"},
                "axis": "series1",
                "value": 2915920,
                "createTime": "2023-01-04 00:14:36"
            },
            {
                "user": {"id": 16, "name": "User 16"},
                "axis": "series1",
                "value": 7971851,
                "createTime": "2023-01-04 00:19:51"
            },
            {
                "user": {"id": 16, "name": "User 16"},
                "axis": "series1",
                "value": 6598996,
                "createTime": "2023-01-04 00:24:30"
            }
        ]
    }

The returned fields are user.id, user.name, axis, value, and createTime. user.id and user.name are nested fields, so use a period (.) to reference them in field mappings.
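Dot notation can be read as "follow each key in turn". The sketch below shows one way such a path could be resolved against a record from the sample response. get_by_path is a hypothetical helper written for illustration, and returning None for a missing field is an assumption of this sketch rather than documented reader behavior.

```python
def get_by_path(record, path):
    """Follow a dot-separated path through nested dictionaries."""
    value = record
    for key in path.split("."):
        if not isinstance(value, dict) or key not in value:
            return None  # missing field: this sketch returns None
        value = value[key]
    return value


record = {
    "user": {"id": 16, "name": "User 16"},
    "axis": "series1",
    "value": 8231053,
    "createTime": "2023-01-04 00:04:57",
}
source_fields = ["user.id", "user.name", "axis", "value", "createTime"]
row = [get_by_path(record, field) for field in source_fields]
print(row)
```

Each entry in source_fields corresponds to one source field in the mapping, so the nested user object is flattened into two separate columns.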

Step 1: Create a MaxCompute partitioned table

    CREATE TABLE IF NOT EXISTS ods_xiaobo_rest3
    (
        `user_id` BIGINT,
        `name` STRING,
        `axis` STRING,
        `value` BIGINT,
        `create_time` STRING
    )
    PARTITIONED BY
    (
        ds STRING
    )
    LIFECYCLE 3650;

If you work in a workspace in standard mode, deploy the table to the production environment to view it in Data Map.

Step 2: Add a RestAPI data source

Add a RestAPI data source to your workspace. For details, see Add a RestAPI data source.

Step 3: Configure the batch synchronization task

On the DataStudio page, create a batch synchronization task using the codeless UI. For details, see Use the codeless UI.

Source settings:

| Setting | Value |
|---|---|
| Data source | The RestAPI data source added above |
| Request Method | POST |
| Data Structure | Array Data |
| json path to store data | data |
| Header | {"Content-Type":"application/json"} |

Request parameters: Use a JSON body with scheduling parameters for daily sync.

    {
        "userId": 16,
        "startTime": "${extract_day} 00:00:00",
        "endTime": "${extract_day} 23:59:59"
    }

Add this scheduling parameter in the task's scheduling properties:

    extract_day=${yyyy-mm-dd}

Destination settings:

| Setting | Value |
|---|---|
| Data source | The MaxCompute data source |
| Table | ods_xiaobo_rest3 |
| Partition information | ${bizdate} (add the scheduling parameter bizdate=$bizdate) |

When the task runs on 2023-01-05, data is written to partition 20230104.
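To see what the POST body looks like once the scheduling parameter is filled in, the following sketch substitutes ${extract_day} into the body template and parses the result as JSON. The render function is a hypothetical stand-in for the scheduler's parameter substitution, and the value 2023-01-04 is the data date from the example.

```python
import json
import re

BODY_TEMPLATE = """
{
  "userId": 16,
  "startTime": "${extract_day} 00:00:00",
  "endTime": "${extract_day} 23:59:59"
}
"""


def render(template, params):
    """Replace each ${name} placeholder with its value from params."""
    def substitute(match):
        return params[match.group(1)]  # raises KeyError if a parameter is undefined
    return re.sub(r"\$\{(\w+)\}", substitute, template)


body = json.loads(render(BODY_TEMPLATE, {"extract_day": "2023-01-04"}))
print(body)
```

Parsing the rendered text with json.loads is a cheap check that the body is still valid JSON after substitution, for example that the placeholders sit inside quoted strings.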

Field mappings: Specify the fields returned by the API. For nested fields, use dot notation — for example, enter user.id to reference the id field inside user. Field names are case-sensitive. Click Map Fields with Same Name to auto-map, or drag lines to map manually.

Step 4: Test the task

Click Run with Parameters and specify the scheduling parameter values.

Step 5: Verify the data

    SELECT * FROM ods_xiaobo_rest3 WHERE ds='20230105' ORDER BY create_time;

20230105 is the partition written in the test run above.

Example 4: Traverse request parameters in a loop

This example fetches weather data for multiple city-province combinations by looping through a parameter table. An assignment node reads the city list from MaxCompute, a for-each node iterates over it, and a batch synchronization task runs once per combination.

The API used here is for reference only. Adjust configurations to match your own API.

Sample API

Request:

    http://TestAPIAddress:Port/rest/test5?date=2023-01-04&province=zhejiang&city=hangzhou

Response:

    {
        "province": "P1",
        "city": "hz",
        "date": "2023-01-04",
        "minTemperature": "-14",
        "maxTemperature": "-7",
        "unit": "℃",
        "weather": "cool"
    }

Step 1: Create the parameter table and destination table

The parameter table stores the province-city combinations to iterate over. The destination table stores the sync results, partitioned by province, city, and date.

Create a parameter table

    -- Parameter table: stores the loop values
    CREATE TABLE IF NOT EXISTS `citys`
    (
        `province` STRING,
        `city` STRING
    );

    INSERT INTO citys
    SELECT 'shanghai', 'shanghai'
    UNION ALL SELECT 'zhejiang', 'hangzhou'
    UNION ALL SELECT 'sichuan', 'chengdu';

Create a MaxCompute partitioned table

    -- Destination table: partitioned by province, city, and date
    CREATE TABLE IF NOT EXISTS ods_xiaobo_rest5
    (
        `minTemperature` STRING,
        `maxTemperature` STRING,
        `unit` STRING,
        `weather` STRING
    )
    PARTITIONED BY
    (
        `province` STRING,
        `city` STRING,
        `ds` STRING
    )
    LIFECYCLE 3650;

In standard mode, deploy both tables to the production environment to view them in Data Map.

With the write mode set to overwriting, each run overwrites partition data, making reruns safe and preventing duplicate records.

Step 2: Add a RestAPI data source

Add a RestAPI data source to your workspace. For details, see Add a RestAPI data source.

Step 3: Create an assignment node

On the DataStudio page, create an assignment node named setval_citys. For details, see Assignment node.

Configure the node as follows:

| Setting | Value |
|---|---|
| Programming language | ODPS SQL |
| Code | SELECT province, city FROM citys; |
| Rerun property | Allow Regardless of Running Status |

After configuration, deploy the assignment node.

Step 4: Create a for-each node

On the DataStudio page, create a for-each node. For details, see the For-each node documentation.

| Parameter | Value |
|---|---|
| Rerun property | Allow Regardless of Running Status |
| Ancestor node | setval_citys |
| Input parameter | Select the sources of the input parameters |

Step 5: Configure the batch synchronization task inside the for-each node

Create a batch synchronization task using the codeless UI. For details, see Use the codeless UI.

The for-each node passes each row from citys as output parameters: dag.foreach.current[0] is province and dag.foreach.current[1] is city. Use these to build the API request and destination partition for each iteration.

Scheduling parameters:

    bizdate=$[yyyymmdd-1]
    bizdate_year=$[yyyy-1]
    bizdate_month=$[mm-1]
    bizdate_day=$[dd-1]

Request parameters (builds the API query string from scheduling and for-each parameters):

    date=${bizdate_year}-${bizdate_month}-${bizdate_day}&province=${dag.foreach.current[0]}&city=${dag.foreach.current[1]}

Destination partition configuration:

| Parameter | Value | Description |
|---|---|---|
| province | ${dag.foreach.current[0]} | Province from the current for-each iteration |
| city | ${dag.foreach.current[1]} | City from the current for-each iteration |
| ds | ${bizdate} | Scheduling parameter for the date partition |

Field mappings: Specify the fields returned by the API (minTemperature, maxTemperature, unit, weather). Field names are case-sensitive. Click Map Fields with Same Name to auto-map, or drag lines to map manually.

After configuration, deploy the for-each node.
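The interplay between the assignment node, the for-each node, and the synchronization task can be modeled as a plain loop. The sketch below is a hypothetical simulation: CITY_ROWS stands for the rows returned by the assignment node, plan_iterations is an illustrative helper, and the data date is passed in directly instead of being derived from scheduling parameter expressions.

```python
from datetime import date

# Rows returned by the assignment node query: SELECT province, city FROM citys;
CITY_ROWS = [
    ("shanghai", "shanghai"),
    ("zhejiang", "hangzhou"),
    ("sichuan", "chengdu"),
]


def plan_iterations(rows, data_date):
    """Return the request parameters and partition for each loop iteration."""
    plans = []
    for current in rows:  # current[0] is province, current[1] is city
        plans.append({
            "request_parameters": (
                f"date={data_date:%Y-%m-%d}"
                f"&province={current[0]}&city={current[1]}"
            ),
            "partition": (
                f"province={current[0]},city={current[1]},ds={data_date:%Y%m%d}"
            ),
        })
    return plans


plans = plan_iterations(CITY_ROWS, date(2023, 12, 15))
for plan in plans:
    print(plan["request_parameters"], "->", plan["partition"])
```

Each row in the parameter table leads to one request and one destination partition, so adding a province-city pair to citys extends the loop without changing the synchronization task.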

Step 6: Test the assignment node and for-each node

- On the Cycle Task page in Operation Center, find the assignment node and trigger a data backfill. For details, see Perform O&M on data backfill instances.
- Select the data timestamp range (start and end time) and the nodes to backfill.
- After the backfill instances are generated, check the run logs of the assignment node to confirm that scheduling parameters are rendered correctly. When parameters are correct, data is written to partitions such as province=shanghai,city=shanghai,ds=20231215.

Step 7: Verify the data

    SELECT weather,
           mintemperature,
           maxtemperature,
           unit,
           province,
           city,
           ds
    FROM ods_xiaobo_rest5
    WHERE ds != 1
    ORDER BY ds, province, city;

ods_xiaobo_rest5 is the destination table created in Step 1.