The Tablestore data source gives DataWorks a bidirectional channel to read data from and write data to Tablestore, a NoSQL storage service built on the Alibaba Cloud Apsara Distributed File System.

Supported capabilities

The Tablestore Reader and Writer plugins support two table types and two read/write modes. Use the following table to confirm whether your scenario is supported before configuring a task.

| Table type | Row mode | Column mode |
|---|---|---|
| Wide table | Read / Write | Read / Write |
| Time series table | Read / Write | Not supported |

Set newVersion to true to enable all modes above. The legacy version (newVersion: false) supports only row mode for wide tables.

Key concepts

Row mode and column mode

Row mode maps each record to one row in the Tablestore table. The data format is {primary key values, attribute column values}.

Column mode uses Tablestore's three-level multi-version model: Row > Column > Version. Each column can have multiple versions identified by a timestamp. In column mode, each record is a four-tuple: {primary key value, column name, timestamp, column value}.

The following example shows how column mode transforms data. For a table with two primary key columns (gid, uid), each of the nine input records carries the primary key values, followed by the column name, timestamp, and column value:

// Input: 9 records in four-tuple format

1, pk1, row1, 1677699863871, value_0_0

1, pk1, row2, 1677699863871, value_0_1

1, pk1, row3, 1677699863871, value_0_2

2, pk2, row1, 1677699863871, value_1_0

2, pk2, row2, 1677699863871, value_1_1

2, pk2, row3, 1677699863871, value_1_2

3, pk3, row1, 1677699863871, value_2_0

3, pk3, row2, 1677699863871, value_2_1

3, pk3, row3, 1677699863871, value_2_2

After writing to a wide table in column mode, the result is:

// Output: 3 rows in the wide table

gid uid row1 row2 row3

1 pk1 value_0_0 value_0_1 value_0_2

2 pk2 value_1_0 value_1_1 value_1_2

3 pk3 value_2_0 value_2_1 value_2_2

Wide tables and time series tables

Wide tables are standard Tablestore tables with primary key columns and attribute columns. Both row mode and column mode are supported.

Time series tables store timestamped data organized by measurement name, data source, and tags. Time series tables support row mode only — column mode is not compatible.

Plugin versions

The newVersion parameter controls which version of the Reader and Writer plugins DataWorks uses.

| newVersion value | Supported modes | Notes |
|---|---|---|
| false (default) | Row mode, wide tables only | Legacy version |
| true (recommended) | Row mode and column mode for wide tables; row mode for time series tables; Writer also supports auto-increment primary key columns | Uses fewer system resources; compatible with existing configurations |

Limits

- Each synchronization task supports only one table. Synchronizing multiple tables in a single task is not supported.

- Source column order must match the order of primary key columns followed by attribute columns in the destination Tablestore table. A mismatch causes a column mapping error.

- Tablestore Reader splits the data range into N concurrent tasks, where N is the concurrency level configured for the synchronization job.

- Tablestore does not support date types. Use the LONG type to store UNIX timestamps at the application layer.

- Configure INTEGER columns as INT in the code editor. DataWorks converts INT to INTEGER internally. Configuring the type directly as INTEGER causes a task failure.
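As a rough illustration of the last two limits, the column list below declares an integer column that holds a UNIX timestamp. It uses INT, not INTEGER, as the code editor value. The column names event_time and event_name are hypothetical, and the fragment assumes the Writer's row-mode column format described later in this topic.

```json
{
  "column": [
    {"name": "event_time", "type": "INT"},
    {"name": "event_name", "type": "STRING"}
  ]
}
```

Converting the stored timestamp back to a date stays the responsibility of the application that reads the table.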

Supported field types

Tablestore Reader and Writer support all Tablestore data types.

| Type category | Tablestore data type | Code editor value |
|---|---|---|
| Integer | INTEGER | INT |
| Floating-point | DOUBLE | DOUBLE |
| String | STRING | STRING |
| Boolean | BOOLEAN | BOOLEAN |
| Binary | BINARY | BINARY |

Add a Tablestore data source

Before configuring a synchronization task, add Tablestore as a data source in DataWorks. See Data source management for instructions. Parameter descriptions are available in the DataWorks console when you add the data source.

Configure a synchronization task

For the entry point and general procedure for configuring a synchronization task, see Configure a task in the code editor.

The following sections provide script examples and parameter references for the Tablestore Reader and Writer.

Appendix I: Reader script demo and parameter description

All Tablestore Reader scripts use the stepType: ots plugin. Set newVersion to true and configure mode and isTimeseriesTable to select the read mode.

Reader script examples

Row mode — wide table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"newVersion": "true",

"mode": "normal",

"isTimeseriesTable": "false",

"table": "<table-name>",

"column": [

{"name": "column1"},

{"name": "column2"},

{"name": "column3"},

{"name": "column4"},

{"name": "column5"}

],

"range": {

"begin": [

{"type": "STRING", "value": "beginValue"},

{"type": "INT", "value": "0"},

{"type": "INF_MIN"},

{"type": "INF_MIN"}

],

"end": [

{"type": "STRING", "value": "endValue"},

{"type": "INT", "value": "100"},

{"type": "INF_MAX"},

{"type": "INF_MAX"}

],

"split": [

{"type": "STRING", "value": "splitPoint1"},

{"type": "STRING", "value": "splitPoint2"},

{"type": "STRING", "value": "splitPoint3"}

]

}

},

"name": "Reader",

"category": "reader"

},

{

"stepType": "stream",

"parameter": {},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Row mode — time series table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"table": "<table-name>",

"mode": "normal",

"newVersion": "true",

"isTimeseriesTable": "true",

"measurementName": "measurement_1",

"column": [

{"name": "_m_name"},

{"name": "tagA", "is_timeseries_tag": "true"},

{"name": "double_0", "type": "DOUBLE"},

{"name": "string_0", "type": "STRING"},

{"name": "long_0", "type": "INT"},

{"name": "binary_0", "type": "BINARY"},

{"name": "bool_0", "type": "BOOL"},

{"type": "STRING", "value": "testString"}

]

},

"name": "Reader",

"category": "reader"

},

{

"stepType": "stream",

"parameter": {},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Column mode — wide table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"table": "<table-name>",

"newVersion": "true",

"mode": "multiversion",

"column": [

{"name": "mobile"},

{"name": "name"},

{"name": "age"},

{"name": "salary"},

{"name": "marry"}

],

"range": {

"begin": [{"type": "INF_MIN"}, {"type": "INF_MAX"}],

"end": [{"type": "INF_MAX"}, {"type": "INF_MIN"}],

"split": []

}

},

"name": "Reader",

"category": "reader"

},

{

"stepType": "stream",

"parameter": {},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Reader general parameters

| Parameter | Description | Required | Default |
|---|---|---|---|
| endpoint | The endpoint of the Tablestore instance. See Endpoints. | Yes | None |
| accessId | The AccessKey ID for Tablestore. | Yes | None |
| accessKey | The AccessKey secret for Tablestore. | Yes | None |
| instanceName | The name of the Tablestore instance. An instance is the basic unit for resource management in Tablestore. Access control and resource metering are performed at the instance level. | Yes | None |
| table | The name of the table to read from. Only one table per task is supported. | Yes | None |
| newVersion | The plugin version. Set to true to use the new Reader, which supports row mode, column mode, wide tables, and time series tables, and uses fewer system resources. The new plugin is compatible with existing configurations: existing tasks continue to work after adding newVersion: true. | No | false |
| mode | The read mode. normal reads in row mode with format {primary key values, attribute column values}. multiVersion reads in column mode with format {primary key, attribute column name, timestamp, value}. Takes effect only when newVersion: true. | No | normal |
| isTimeseriesTable | Set to true if the table is a time series table. Takes effect only when newVersion: true and mode: normal. Time series tables cannot be read in column mode. | No | false |

Row mode parameters — wide table

| Parameter | Description | Required | Default |
|---|---|---|---|
| column | The columns to read. Use a JSON array of column name objects. Supports normal columns ({"name":"col1"}), constant columns ({"type":"STRING","value":"DataX"}), and INF_MIN/INF_MAX constants. If you use INF_MIN or INF_MAX, do not specify the value property, or an error occurs. Functions and custom expressions are not supported. | Yes | None |
| begin / end | The primary key range to read. Use {"type":"INF_MIN"} and {"type":"INF_MAX"} for open-ended ranges. When fewer values are configured than the number of primary key columns, the leftmost primary key matching principle applies: unconfigured columns default to [INF_MIN, INF_MAX). The last primary key column uses a left-closed, right-open interval; all other columns use a closed interval. | No | [INF_MIN, INF_MAX) |
| split | Custom split points for data sharding. Use this when hot spots cause uneven data distribution. The task splits data at the specified values and reads each segment concurrently. Set more split points than the task concurrency level for best performance. If not configured, the Reader automatically splits data by finding the min and max of the partition key and dividing evenly (integers by integer division, strings by Unicode code of the first character). | No | Auto split |

`begin`/`end` examples

To read rows where Hundreds is in [3, 5] and Tens is in [4, 6] from a table with three primary key columns [Hundreds, Tens, Ones]:

"range": {

"begin": [

{"type": "INT", "value": "3"},

{"type": "INT", "value": "4"}

],

"end": [

{"type": "INT", "value": "5"},

{"type": "INT", "value": "6"}

]

}

To further narrow the range so that Ones is in [5, 7):

"range": {

"begin": [

{"type": "INT", "value": "3"},

{"type": "INT", "value": "4"},

{"type": "INT", "value": "5"}

],

"end": [

{"type": "INT", "value": "5"},

{"type": "INT", "value": "6"},

{"type": "INT", "value": "7"}

]

}

Row mode parameters — time series table

| Parameter | Description | Required | Default |
|---|---|---|---|
| column | The columns to read. Each element is either a constant column (requires type and value) or a normal column (requires name). Predefined column names: _m_name (measurement name, String), _data_source (data source, String), _tags (tags, String), _time (timestamp, Long). Set is_timeseries_tag: true to read an individual tag value as a separate column. | Yes | None |
| measurementName | The measurement name to filter by. If not configured, data is read from the entire table. | No | None |
| timeRange | The time range to read, as [begin, end). begin must be less than end. The timestamp unit is microseconds. begin defaults to 0; end defaults to Long.MAX_VALUE (9223372036854775807). | No | All versions |
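The following is a minimal sketch of a time series Reader parameter block that restricts the read to one measurement and one day. It assumes that timeRange is written as an object with begin and end keys, a shape inferred from the [begin, end) description above and not confirmed by this topic, so verify it before use. The two values are microsecond timestamps for 2023-03-01 00:00:00 UTC and 2023-03-02 00:00:00 UTC.

```json
{
  "datasource": "<data-source-name>",
  "table": "<table-name>",
  "newVersion": "true",
  "mode": "normal",
  "isTimeseriesTable": "true",
  "measurementName": "measurement_1",
  "timeRange": {
    "begin": 1677628800000000,
    "end": 1677715200000000
  },
  "column": [
    {"name": "_m_name"},
    {"name": "_time"},
    {"name": "double_0", "type": "DOUBLE"}
  ]
}
```

Because the interval is left-closed and right-open, a record stamped exactly at the end value is excluded.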

Column mode parameters — wide table

| Parameter | Description | Required | Default |
|---|---|---|---|
| column | The attribute columns to export. Primary key columns and constant columns are not supported. The exported four-tuple includes the complete primary key automatically. Each column can be listed only once. | Yes | All columns |
| range | The primary key range to read, as [begin, end). If begin is less than end, data is read in ascending order. If begin is greater than end, data is read in descending order. begin and end cannot be equal. Supported types: string, int, binary (Base64-encoded), INF_MIN, INF_MAX. The split sub-parameter accepts partition key values (first primary key column only) to enable concurrent reading. | No | All data |
| timeRange | The time range of versions to read, as [begin, end). The timestamp unit is microseconds. | No | All versions |
| maxVersion | The maximum number of versions to read per column. Value range: 1 to INT32_MAX. | No | All versions |
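As an illustration, the column-mode Reader parameter block below limits the export to two attribute columns and at most two versions per column. Writing maxVersion as a JSON number is an assumption, since this topic does not show its value format. The range parameter is omitted, so the default (all data) applies.

```json
{
  "datasource": "<data-source-name>",
  "table": "<table-name>",
  "newVersion": "true",
  "mode": "multiVersion",
  "column": [
    {"name": "name"},
    {"name": "age"}
  ],
  "maxVersion": 2
}
```

Each exported record is still a four-tuple, so a row with two versions of name yields two records for that column.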

Appendix II: Writer script demo and parameter description

All Tablestore Writer scripts use the stepType: ots plugin. Set newVersion to true and configure mode and isTimeseriesTable to select the write mode.

Writer script examples

Row mode — wide table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "stream",

"parameter": {},

"name": "Reader",

"category": "reader"

},

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"table": "<table-name>",

"newVersion": "true",

"mode": "normal",

"isTimeseriesTable": "false",

"primaryKey": [

{"name": "gid", "type": "INT"},

{"name": "uid", "type": "STRING"}

],

"column": [

{"name": "col1", "type": "INT"},

{"name": "col2", "type": "DOUBLE"},

{"name": "col3", "type": "STRING"},

{"name": "col4", "type": "STRING"},

{"name": "col5", "type": "BOOL"}

],

"writeMode": "PutRow"

},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Row mode — time series table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "stream",

"parameter": {},

"name": "Reader",

"category": "reader"

},

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"table": "testTimeseriesTableName01",

"mode": "normal",

"newVersion": "true",

"isTimeseriesTable": "true",

"timeunit": "microseconds",

"column": [

{"name": "_m_name"},

{"name": "_data_source"},

{"name": "_tags"},

{"name": "_time"},

{"name": "string_1", "type": "string"},

{"name": "tag3", "is_timeseries_tag": "true"}

]

},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Column mode — wide table

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "stream",

"parameter": {},

"name": "Reader",

"category": "reader"

},

{

"stepType": "ots",

"parameter": {

"datasource": "<data-source-name>",

"table": "<table-name>",

"newVersion": "true",

"mode": "multiVersion",

"primaryKey": ["gid", "uid"]

},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {"record": "0"},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [{"from": "Reader", "to": "Writer"}]

}

}

Writer general parameters

| Parameter | Description | Required | Default |
|---|---|---|---|
| datasource | The data source name as configured in DataWorks. The value must match the name of the added data source exactly. | Yes | None |
| endPoint | The endpoint of the Tablestore instance. See Endpoints. | Yes | None |
| accessId | The AccessKey ID for Tablestore. | Yes | None |
| accessKey | The AccessKey secret for Tablestore. | Yes | None |
| instanceName | The name of the Tablestore instance. An instance is the basic unit for resource management in Tablestore. Access control and resource metering are performed at the instance level. | Yes | None |
| table | The name of the table to write to. Only one table per task is supported. | Yes | None |
| newVersion | The plugin version. Set to true to use the new Writer, which supports row mode, column mode, wide tables, time series tables, and auto-increment primary key columns, and uses fewer system resources. The new plugin is compatible with existing configurations. | Yes | false |
| mode | The write mode. normal writes in row mode. multiVersion writes in column mode. Takes effect only when newVersion: true. | No | normal |
| isTimeseriesTable | Set to true if the table is a time series table. Takes effect only when newVersion: true and mode: normal. Column mode is not compatible with time series tables. | No | false |

Row mode parameters — wide table

| Parameter | Description | Required | Default |
|---|---|---|---|
| primaryKey | The primary key columns of the destination table. Specify each column as {"name":"<col>","type":"<type>"}. Tablestore primary keys support only STRING and INT types. | Yes | None |
| column | The attribute columns to write. Specify each column as {"name":"<col>","type":"<type>"}. Supported types: STRING, INT, DOUBLE, BOOL, BINARY. Constants, functions, and custom expressions are not supported. | Yes | None |
| writeMode | The write behavior when a row with matching primary keys already exists in the destination table. PutRow overwrites the entire existing row with the new data. UpdateRow adds, modifies, or deletes the values of specified columns in the existing row based on the request content. If the row does not exist, both modes create a new row. | Yes | None |
| enableAutoIncrement | Set to true if the destination table has an auto-increment primary key column. The Writer automatically detects and handles the auto-increment column, so do not include it in primaryKey. Set to false (or omit) if the table has no auto-increment column. Writing to a table with an auto-increment column without this flag causes an error. | No | false |
| requestTotalSizeLimitation | The maximum size of a single row, in bytes. | No | 1 MB |
| attributeColumnSizeLimitation | The maximum size of a single attribute column value, in bytes. | No | 2 MB |
| primaryKeyColumnSizeLimitation | The maximum size of a single primary key column value, in bytes. | No | 1 KB |
| attributeColumnMaxCount | The maximum number of attribute columns per row. | No | 1,024 |

Row mode parameters — time series table

| Parameter | Description | Required | Default |
|---|---|---|---|
| column | The fields to write. Each element maps to one field in the time series record. Predefined column names: _m_name (measurement name, String), _data_source (data source, String), _tags (tags, String, format: ["key1=value1","key2=value2"]), _time (timestamp, Long). Set is_timeseries_tag: true to write an individual column as a tag key-value pair. The _m_name and _time fields are required. | Yes | None |
| timeunit | The unit of the _time timestamp field. Supported values: NANOSECONDS, MICROSECONDS, MILLISECONDS, SECONDS, MINUTES. | No | MICROSECONDS |

Column mode parameters — wide table

| Parameter | Description | Required | Default |
|---|---|---|---|
| primaryKey | The primary key column names of the destination table, as a JSON array of strings. The input record format must be {pk0, pk1, ...}, {columnName}, {timestamp}, {value}: primary key values come first, followed by the column name, timestamp, and value. | Yes | None |
| columnNamePrefixFilter | A prefix to strip from column names before writing. Use this when importing from HBase, where the column family prefix (e.g., cf:) is part of the column name but is not supported by Tablestore. If a column name does not start with the configured prefix, the record is sent to the dirty data queue. | No | None |
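A minimal sketch of a column-mode Writer parameter block that strips an HBase column family prefix is shown below. The prefix cf: and the primary key names gid and uid are taken from the examples in this topic. Your table and source data will differ.

```json
{
  "datasource": "<data-source-name>",
  "table": "<table-name>",
  "newVersion": "true",
  "mode": "multiVersion",
  "primaryKey": ["gid", "uid"],
  "columnNamePrefixFilter": "cf:"
}
```

With this configuration, an input record whose column name is cf:name would be written to the column name, while a record whose column name lacks the cf: prefix would go to the dirty data queue.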

FAQ

How do I write to a table that has an auto-increment primary key column?

Add "newVersion": "true" and "enableAutoIncrement": "true" to the Writer configuration. Do not include the auto-increment column name in primaryKey. The total number of entries in primaryKey plus column must equal the number of columns in the upstream Reader output.
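The sketch below shows one way the Writer parameter block might look. It assumes a destination table whose primary key consists of gid, uid, and a third auto-increment column that is deliberately left out of primaryKey. The column names and the choice of PutRow are illustrative.

```json
{
  "datasource": "<data-source-name>",
  "table": "<table-name>",
  "newVersion": "true",
  "mode": "normal",
  "enableAutoIncrement": "true",
  "primaryKey": [
    {"name": "gid", "type": "INT"},
    {"name": "uid", "type": "STRING"}
  ],
  "column": [
    {"name": "col1", "type": "STRING"}
  ],
  "writeMode": "PutRow"
}
```

Here primaryKey has two entries and column has one, so the upstream Reader must output exactly three columns.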

What is the difference between `_tags` and `is_timeseries_tag` in time series configurations?

They control whether tags are handled as a batch or individually.

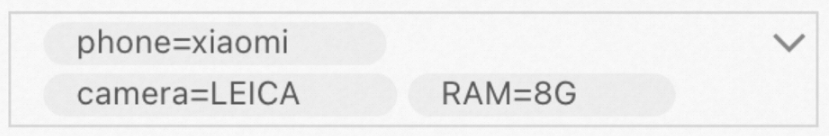

_tags reads or writes all tags as a single column. The format is ["phone=xiaomi","camera=LEICA","RAM=8G"]. Use this when you want to preserve the full tag set as one value.

is_timeseries_tag: true reads or writes a single tag by key name as a separate column. For example, configuring {"name": "phone", "is_timeseries_tag": "true"} and {"name": "camera", "is_timeseries_tag": "true"} produces two columns with values xiaomi and LEICA respectively.

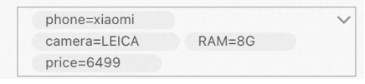

You can mix both approaches in the same configuration. For example, if the upstream data has one column containing ["phone=xiaomi","camera=LEICA","RAM=8G"] and another column with a numeric value 6499 that you want to add as a tag price:

"column": [

{"name": "_tags"},

{"name": "price", "is_timeseries_tag": "true"}

]

This imports the first column as a tag batch and adds price=6499 as an additional individual tag.