Salesforce provides customer relationship management (CRM) software focused on contact management, product catalog management, order management, opportunity management, and sales management. DataWorks provides Salesforce Reader to batch-synchronize data from Salesforce into your data warehouse or data lake. Salesforce Reader supports four sync modes — standard object sync, Bulk API 1.0, Bulk API 2.0, and SOQL query — each suited to different data volumes and column types.

Prerequisites

Before you begin, make sure you have:

- A resource group whose virtual private cloud (VPC) network has outbound connectivity to your Salesforce domain. Without this connectivity, the data source connection fails.

Data type mappings

The following table shows how Salesforce data types map to DataWorks types in the code editor.

| Salesforce type | DataWorks type |
|---|---|
| address | STRING |
| anyType | STRING |
| base64 | BYTES |
| boolean | BOOL |
| combobox | STRING |
| complexvalue | STRING |
| currency | DOUBLE |
| date | DATE |
| datetime | DATE |
| double | DOUBLE |
| email | STRING |
| encryptedstring | STRING |
| id | STRING |
| int | LONG |
| json | STRING |
| long | LONG |
| multipicklist | STRING |
| percent | DOUBLE |
| phone | STRING |
| picklist | STRING |
| reference | STRING |
| string | STRING |
| textarea | STRING |
| time | DATE |
| url | STRING |
| geolocation | STRING |

The currency, double, and percent types all map to DOUBLE. If your data has high-precision decimal values, verify that DOUBLE precision meets your requirements before running a production sync.
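One way to check whether DOUBLE is precise enough is to round-trip a few representative values through a 64-bit float before the first production run. The following is a minimal sketch, not part of DataWorks; the helper name `survives_double` is hypothetical, and it assumes the destination stores DOUBLE as a standard IEEE 754 64-bit float.

```python
from decimal import Decimal


def survives_double(value: str) -> bool:
    """Return True if a decimal string round-trips through a 64-bit float.

    Assumption: DOUBLE behaves like a Python float (IEEE 754 binary64).
    """
    exact = Decimal(value)
    # repr() gives the shortest string that identifies the float, so comparing
    # it with the original shows whether any digits were lost.
    return Decimal(repr(float(exact))) == exact


if __name__ == "__main__":
    for sample in ("12345.67", "1234567890123456.789"):
        print(sample, "ok" if survives_double(sample) else "loses precision")
```

Values with more than roughly 15 to 17 significant digits are the ones most likely to fail this check.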

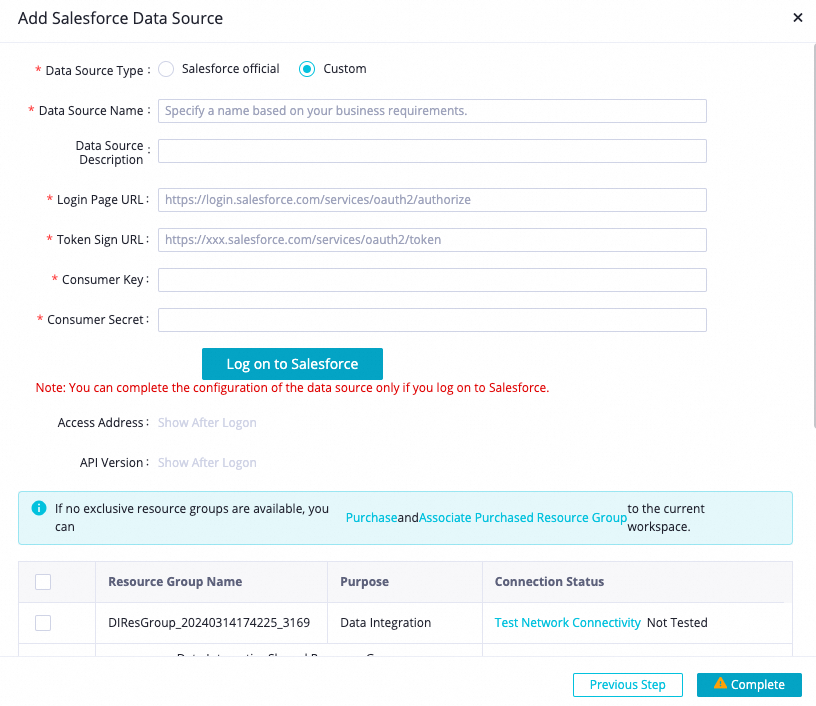

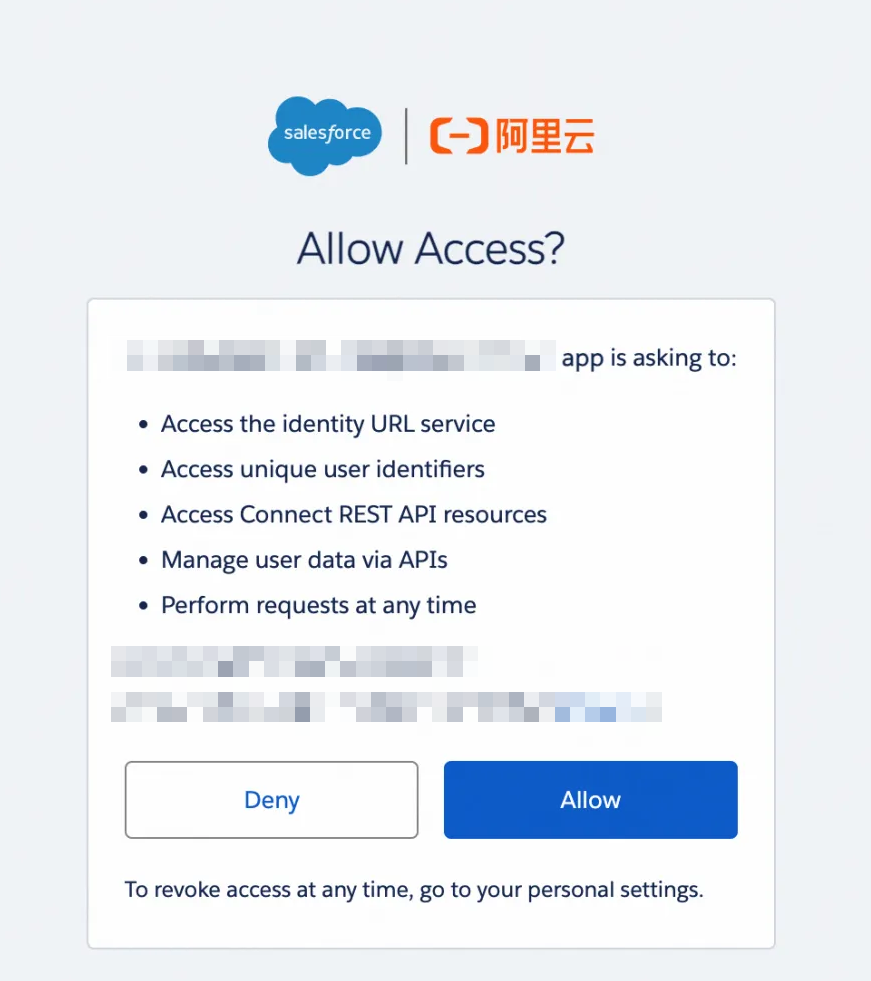

Add a data source

Configure a batch synchronization task

After adding the data source, configure a batch synchronization task to read data from Salesforce.

- For the UI-based procedure, see Configure a task in the codeless UI.
- For the code editor procedure and all supported parameters, see Configure a task in the code editor and the Reader parameters section below.

Choose a sync mode

Salesforce Reader supports four sync modes via the serviceType parameter. Use the following table to select the right mode.

| Mode | serviceType value | When to use |
|---|---|---|
| Standard object sync | sobject (default) | General-purpose sync; supports data sharding via splitPk for parallel reads; required for objects with compound columns (e.g., address, geolocation) |
| SOQL query | query | When you need to query data by executing a SOQL statement with custom filtering conditions |
| Bulk API 1.0 | bulk1 | Large-volume syncs; may outperform bulk2 for some objects |
| Bulk API 2.0 | bulk2 | Large-volume syncs; does not support distributed tasks |

- bulk1 and bulk2 do not support columns of compound data types such as address and geolocation. If those columns exist in your object, use sobject instead, or set blockCompoundColumn to false to read them as NULL.
- bulk2 does not support distributed tasks.
- Test both bulk1 and bulk2 against your specific Salesforce objects to determine which performs better.
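The selection rules above can be summarized as a small decision helper. This is only an illustrative sketch: the function `candidate_service_types` and its boolean inputs are hypothetical and not part of DataWorks, and the result is a shortlist to benchmark rather than a definitive recommendation.

```python
from typing import List


def candidate_service_types(
    custom_soql: bool,
    has_compound_columns: bool,
    large_volume: bool,
    distributed_task: bool,
) -> List[str]:
    """Return the serviceType values worth trying for a sync task."""
    if custom_soql:
        # A full SOQL statement is only accepted in query mode.
        return ["query"]
    if has_compound_columns or not large_volume:
        # sobject is the general-purpose default and reads compound columns.
        # (Bulk modes could still be used with blockCompoundColumn=false,
        # at the cost of reading those columns as NULL.)
        return ["sobject"]
    if distributed_task:
        # bulk2 does not support distributed tasks.
        return ["bulk1"]
    # Both bulk modes are possible; test each against the real object.
    return ["bulk1", "bulk2"]


if __name__ == "__main__":
    print(candidate_service_types(False, False, True, False))
```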

Incremental sync

When serviceType is sobject, bulk1, or bulk2, set beginDateTime and endDateTime to sync only records modified within a time window. DataWorks filters records using the following timestamp fields, in priority order:

- SystemModstamp
- LastModifiedDate
- CreatedDate

The time range is a left-closed, right-open interval (beginDateTime is included, endDateTime is excluded).

Use beginDateTime and endDateTime together with DataWorks scheduling parameters to automate incremental data reads.
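To illustrate the window semantics, the sketch below computes a one-day window in the 14-digit format used by beginDateTime and endDateTime, and shows the left-closed, right-open check. It runs outside DataWorks and does not use scheduling parameters. The helper names are hypothetical, and reading "priority order" as "the first of the three fields that exists on the object" is an assumption.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional, Tuple

FMT = "%Y%m%d%H%M%S"  # e.g. 20230817184200
PRIORITY = ("SystemModstamp", "LastModifiedDate", "CreatedDate")


def daily_window(day: datetime) -> Tuple[str, str]:
    """Return (beginDateTime, endDateTime) covering the calendar day of `day`."""
    start = day.replace(hour=0, minute=0, second=0, microsecond=0)
    return start.strftime(FMT), (start + timedelta(days=1)).strftime(FMT)


def in_window(modified: datetime, begin: str, end: str) -> bool:
    """Left-closed, right-open: begin is included, end is excluded."""
    return datetime.strptime(begin, FMT) <= modified < datetime.strptime(end, FMT)


def pick_timestamp_field(fields: Iterable[str]) -> Optional[str]:
    """Assumed behavior: use the highest-priority field the object has."""
    available = set(fields)
    return next((name for name in PRIORITY if name in available), None)


if __name__ == "__main__":
    begin, end = daily_window(datetime(2023, 8, 17, 18, 42))
    print(begin, end)
    print(pick_timestamp_field(["Id", "CreatedDate", "LastModifiedDate"]))
```

Because the end of one window equals the start of the next, consecutive daily runs neither skip nor duplicate a record modified exactly at midnight.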

Appendix: Code and parameters

Code examples

All four examples use the same job structure. The serviceType parameter in the Reader parameter block determines the sync mode.
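Because only the Reader parameter block changes between modes, the shared skeleton can be generated programmatically. The following sketch is not a DataWorks API: `build_job` is a hypothetical helper that assembles the same structure as the examples in this section, with a stream Writer as a placeholder and the datasource name left empty.

```python
import json


def build_job(service_type: str, reader_params: dict) -> dict:
    """Assemble a job definition with the shared structure of the examples."""
    reader = {"datasource": "", "serviceType": service_type}
    reader.update(reader_params)  # mode-specific parameters
    return {
        "type": "job",
        "version": "2.0",
        "steps": [
            {"stepType": "salesforce", "parameter": reader,
             "name": "Reader", "category": "reader"},
            {"stepType": "stream", "parameter": {},
             "name": "Writer", "category": "writer"},
        ],
        "setting": {"errorLimit": {"record": "0"}, "speed": {"concurrent": 1}},
        "order": {"hops": [{"from": "Reader", "to": "Writer"}]},
    }


if __name__ == "__main__":
    job = build_job("bulk1", {
        "table": "Account",
        "blockCompoundColumn": True,
        "column": [{"type": "STRING", "name": "Id"}],
    })
    print(json.dumps(job, indent=2))
```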

Example 1: Standard object sync (sobject)

```json
{
  "type": "job",
  "version": "2.0",
  "steps": [
    {
      "stepType": "salesforce",
      "parameter": {
        "datasource": "",
        "serviceType": "sobject",
        "table": "Account",
        "beginDateTime": "20230817184200",
        "endDateTime": "20231017184200",
        "where": "",
        "column": [
          { "type": "STRING", "name": "Id" },
          { "type": "STRING", "name": "Name" },
          { "type": "BOOL", "name": "IsDeleted" },
          { "type": "DATE", "name": "CreatedDate" }
        ]
      },
      "name": "Reader",
      "category": "reader"
    },
    {
      "stepType": "stream",
      "parameter": {},
      "name": "Writer",
      "category": "writer"
    }
  ],
  "setting": {
    "errorLimit": { "record": "0" },
    "speed": { "throttle": true, "concurrent": 1, "mbps": "12" }
  },
  "order": { "hops": [{ "from": "Reader", "to": "Writer" }] }
}
```

Example 2: Bulk API 1.0

bulk1 adds blockCompoundColumn and bulkQueryJobTimeoutSeconds. It does not use the speed.throttle or mbps settings.

```json
{
  "type": "job",
  "version": "2.0",
  "steps": [
    {
      "stepType": "salesforce",
      "parameter": {
        "datasource": "",
        "serviceType": "bulk1",
        "table": "Account",
        "beginDateTime": "20230817184200",
        "endDateTime": "20231017184200",
        "where": "",
        "blockCompoundColumn": true,
        "bulkQueryJobTimeoutSeconds": 86400,
        "column": [
          { "type": "STRING", "name": "Id" },
          { "type": "STRING", "name": "Name" },
          { "type": "BOOL", "name": "IsDeleted" },
          { "type": "DATE", "name": "CreatedDate" }
        ]
      },
      "name": "Reader",
      "category": "reader"
    },
    {
      "stepType": "stream",
      "parameter": { "print": true },
      "name": "Writer",
      "category": "writer"
    }
  ],
  "setting": {
    "errorLimit": { "record": "0" },
    "speed": { "concurrent": 1 }
  },
  "order": { "hops": [{ "from": "Reader", "to": "Writer" }] }
}
```

Example 3: Bulk API 2.0

bulk2 uses the same extra parameters as bulk1. Note that bulk2 does not support distributed tasks.

```json
{
  "type": "job",
  "version": "2.0",
  "steps": [
    {
      "stepType": "salesforce",
      "parameter": {
        "datasource": "",
        "serviceType": "bulk2",
        "table": "Account",
        "beginDateTime": "20230817184200",
        "endDateTime": "20231017184200",
        "where": "",
        "blockCompoundColumn": true,
        "bulkQueryJobTimeoutSeconds": 86400,
        "column": [
          { "type": "STRING", "name": "Id" },
          { "type": "STRING", "name": "Name" },
          { "type": "BOOL", "name": "IsDeleted" },
          { "type": "DATE", "name": "CreatedDate" }
        ]
      },
      "name": "Reader",
      "category": "reader"
    },
    {
      "stepType": "stream",
      "parameter": {},
      "name": "Writer",
      "category": "writer"
    }
  ],
  "setting": {
    "errorLimit": { "record": "0" },
    "speed": { "throttle": true, "concurrent": 1, "mbps": "12" }
  },
  "order": { "hops": [{ "from": "Reader", "to": "Writer" }] }
}
```

Example 4: SOQL query

When serviceType is query, use the query field to provide a full SOQL statement. DataWorks ignores table, column, beginDateTime, endDateTime, where, and splitPk.

```json
{
  "type": "job",
  "version": "2.0",
  "steps": [
    {
      "stepType": "salesforce",
      "parameter": {
        "datasource": "",
        "serviceType": "query",
        "query": "select Id, Name, IsDeleted, CreatedDate from Account where Name!='Aliyun'",
        "column": [
          { "type": "STRING", "name": "Id" },
          { "type": "STRING", "name": "Name" },
          { "type": "BOOL", "name": "IsDeleted" },
          { "type": "DATE", "name": "CreatedDate" }
        ]
      },
      "name": "Reader",
      "category": "reader"
    },
    {
      "stepType": "stream",
      "parameter": {},
      "name": "Writer",
      "category": "writer"
    }
  ],
  "setting": {
    "errorLimit": { "record": "0" },
    "speed": { "throttle": true, "concurrent": 1, "mbps": "12" }
  },
  "order": { "hops": [{ "from": "Reader", "to": "Writer" }] }
}
```

Reader parameters

| Parameter | Required | Default | Description |
|---|---|---|---|
| datasource | Yes | None | Name of the Salesforce data source as added in DataWorks. |
| serviceType | No | sobject | Sync mode. Valid values: sobject, query, bulk1, bulk2. See Choose a sync mode. |
| table | Yes (sobject, bulk1, bulk2) | None | Salesforce object name, such as Account, Case, or Group. Objects are equivalent to tables. |
| beginDateTime | No | None | Start of the sync time window in yyyymmddhhmmss format (for example, 20230817184200). Applies to sobject, bulk1, and bulk2. The interval is left-closed and right-open. |
| endDateTime | No | None | End of the sync time window. Same format as beginDateTime. |
| splitPk | No | None | Field used for data sharding, enabling parallel reads. Applies to sobject. Supported field types: datetime, int, long. Other field types cause an error. |
| blockCompoundColumn | No | true | Controls behavior when compound-type columns (e.g., address, geolocation) are present. Applies to bulk1 and bulk2. true: the task fails if compound columns exist; false: compound columns are read as NULL. |
| bulkQueryJobTimeoutSeconds | No | 86400 | Timeout for Salesforce's batch data preparation phase, in seconds. Applies to bulk1 and bulk2. If Salesforce exceeds this duration preparing data, the task fails. |
| batchSize | No | 300000 | Number of records to download per batch. Applies to bulk1 and bulk2. Set slightly above Salesforce's automatic shard size for optimal throughput. Data is streamed, so a larger value does not increase memory usage. Advanced parameter; code editor only. |
| where | No | None | WHERE clause for filtering data. Applies to sobject, bulk1, and bulk2. If blank, all records are returned. Do not add a LIMIT clause such as limit 10, because Salesforce does not support LIMIT in a standalone WHERE clause. |
| query | No | None | Full SOQL statement. Applies to query mode only. When set, DataWorks ignores table, column, beginDateTime, endDateTime, where, and splitPk. Example: select Id, Name, IsDeleted from Account where Name!='Aliyun'. Advanced parameter; code editor only. |
| queryAll | No | false | When true, includes deleted records in the result. Applies to sobject and query. Use the IsDeleted field to identify deleted records. |
| column | Yes | None | JSON array of columns to sync. Each entry specifies name and type. Supports constants enclosed in single quotation marks (e.g., '123' for an integer constant, 'abc' for a string constant). Cannot be empty. |
| connectTimeoutSeconds | No | 30 | HTTP request timeout, in seconds. Advanced parameter; code editor only. |
| socketTimeoutSeconds | No | 600 | HTTP response timeout, in seconds. The task fails if the interval between two packets exceeds this value. Advanced parameter; code editor only. |
| retryIntervalSeconds | No | 60 | Interval between retries, in seconds. Advanced parameter; code editor only. |
| retryTimes | No | 3 | Number of retry attempts. Advanced parameter; code editor only. |
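Since several parameters apply only to certain modes, it can help to lint a Reader parameter block before submitting it. The sketch below encodes the "Applies to" notes from the table above. The helper `inapplicable_params` is hypothetical, and whether DataWorks rejects or silently ignores such parameters is not stated here, so treat the output as a warning list only.

```python
from typing import Dict, List, Set

# Modes each parameter applies to, transcribed from the parameter table.
APPLIES_TO: Dict[str, Set[str]] = {
    "table": {"sobject", "bulk1", "bulk2"},
    "beginDateTime": {"sobject", "bulk1", "bulk2"},
    "endDateTime": {"sobject", "bulk1", "bulk2"},
    "where": {"sobject", "bulk1", "bulk2"},
    "splitPk": {"sobject"},
    "blockCompoundColumn": {"bulk1", "bulk2"},
    "bulkQueryJobTimeoutSeconds": {"bulk1", "bulk2"},
    "batchSize": {"bulk1", "bulk2"},
    "query": {"query"},
    "queryAll": {"sobject", "query"},
}


def inapplicable_params(reader: dict) -> List[str]:
    """Return parameters present in `reader` that do not apply to its mode."""
    mode = reader.get("serviceType", "sobject")  # sobject is the default
    return sorted(
        name for name in reader
        if name in APPLIES_TO and mode not in APPLIES_TO[name]
    )


if __name__ == "__main__":
    print(inapplicable_params({
        "serviceType": "query",
        "query": "select Id from Account",
        "splitPk": "CreatedDate",
    }))
```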

Column constants example:

```json
[
  { "name": "Id", "type": "STRING" },
  { "name": "Name", "type": "STRING" },
  { "name": "'123'", "type": "LONG" },
  { "name": "'abc'", "type": "STRING" }
]
```

Id and Name are column names. '123' and 'abc' are constants (an integer and a string, respectively) enclosed in single quotation marks.
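The quoting rule can be checked mechanically when reviewing a column list. The following sketch separates object fields from constants using only the single-quote convention described above. `split_columns` is a hypothetical helper, and how DataWorks itself parses the entries is not specified here.

```python
from typing import List, Tuple


def split_columns(columns: List[dict]) -> Tuple[List[str], List[str]]:
    """Split column entries into (field names, constant values)."""
    fields, constants = [], []
    for col in columns:
        name = col["name"]
        if len(name) >= 2 and name.startswith("'") and name.endswith("'"):
            constants.append(name[1:-1])  # strip the enclosing quotes
        else:
            fields.append(name)
    return fields, constants


if __name__ == "__main__":
    cols = [
        {"name": "Id", "type": "STRING"},
        {"name": "'123'", "type": "LONG"},
    ]
    print(split_columns(cols))
```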