DataWorks provides a real-time data development and governance feature. This feature integrates a data governance plugin with Language Server Protocol (LSP) technology. It triggers smart checks when you save your code and provides targeted suggestions for fixes. Developers can apply fixes with a single click to resolve issues quickly. This feature uses a preset governance rule library and a semantic analysis engine to proactively identify syntax errors, data specification issues, and potential bugs in your code. This process creates a closed-loop management system for code quality.

Precautions

Changes to the governance plugin for data development node checks, to its governance items, and to the LSP settings affect only the current account.

Diagnose governance item issues

The DataWorks data governance plugin includes a rich, built-in rule library that works out of the box with no configuration required. When you save an SQL query or a script, the plugin automatically scans the code for issues and suggests fixes. This process improves development efficiency. If you do not need this feature, you can disable it at any time.

Scope

Applicable regions: China (Hangzhou), China (Shanghai), China (Beijing), China (Zhangjiakou), China (Ulanqab), China (Shenzhen), China (Chengdu), China (Hong Kong), Singapore, Malaysia (Kuala Lumpur), Indonesia (Jakarta), Germany (Frankfurt), US (Silicon Valley), and US (Virginia).

Applicable nodes: To view the node types that apply to each governance item, see View solutions for check item events.

Connect to the governance plugin

On the Settings page in DataStudio, the DataStudio Governance Check Module Enablement option is selected by default. This setting allows the system to proactively detect governable issues in your code during editing. To manage this user-level configuration item, follow these steps.

Go to the DataWorks Workspaces page. In the top navigation bar, switch to the destination region, find the target workspace, and click in the Actions column to go to the DataStudio page.

In the lower-left corner of the navigation pane, click and go to the User tab on the Settings page.

On the User tab, find . You can enable or disable the data governance plugin for data development as required.

Enable the data governance plugin (default setting): To use the data governance plugin to detect and fix issues in your code, ensure that the DataStudio Governance Check Module Enablement checkbox is selected. You can then configure governance items to perform targeted governance on data development tasks.

Disable the data governance plugin: If you do not want to use the data governance plugin to check for code issues, clear the DataStudio Governance Check Module Enablement checkbox. After you disable the plugin, this feature becomes unavailable for data development node tasks.

Enable governance check items

When the governance plugin is enabled, all governance items that are integrated with data development are enabled by default. You can enable or disable a specific governance item by following these steps.

In the navigation pane on the left of the DataStudio page, click the icon to open the Data ASSET Governance folder.

In the Rule Library, you can enable or disable governance items based on their descriptions in the List of governance items. All items are enabled by default.

If you do not want to use a specific governance rule, find the corresponding check item in the Rule Library and click the icon next to it to disable it.

Important: After you disable a governance item, checks for that item are no longer triggered when you edit and save data development tasks in the workspace for the current account.

Discover issues

After you enable the governance plugin and the governance check items, DataWorks Copilot automatically scans your code each time you save a node whose type supports governance checks, and flags potential issues. You can decide whether to accept the suggestions based on your needs.

When you edit and save the node content, the system detects whether the content has changed. If the content has changed, the system automatically clears the previous issue detection results. After you save the node, a new issue discovery process is triggered.
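The save-time behavior described above can be pictured with a minimal Python sketch. It is an illustration only, not the DataWorks implementation: `NodeCheckState`, `on_save`, and the `run_checks` callback are hypothetical names, and using a content hash to detect changes (and keeping earlier results when nothing changed) is an assumption.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class NodeCheckState:
    """Hypothetical per-node state; not a DataWorks API."""
    last_hash: str = ""
    issues: list = field(default_factory=list)


def on_save(state, content, run_checks):
    """Clear stale results and re-run the checks when the saved content changed."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    if digest == state.last_hash:
        # Assumption: unchanged content keeps the earlier results.
        return state.issues
    state.issues = []          # content changed: drop the previous results
    state.last_hash = digest
    state.issues = list(run_checks(content))  # new issue discovery pass
    return state.issues
```

Here `run_checks` stands for whatever produces the issue list; any callable that takes the node content and returns an iterable of issues fits the sketch.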

View the number of issues.

While you edit your code, the system scans for issues in real time. When you save the node, the number of governable issues in the current node appears in the lower-left corner of the page.

View issue details.

Click the icon in the lower-left corner of the page. In the Problems area that appears, you can view the issues that require governance in the node.

Fix issues

For each issue listed in the Problems panel, an explanation of the detection criteria is provided. You can click the hyperlink next to the issue to view detailed explanations and background information. Suggestions are also provided to help you resolve these issues quickly. Follow these steps:

Click the Data Asset Governance link next to the relevant issue to go to the Data Asset Governance page on the right.

On the Related Issues tab, you can view the issue description from the AI model, the governance analysis report, and suggestions for how to handle the issue.

To learn more about the governance details, switch to the Rule Description tab. On this tab, you can view the check items, rule logic, and handling guides that are associated with the issue.

Click the relevant link under Suggested Actions and follow the on-screen instructions to fix the issue.

The operation method varies based on the governance item. The interface provides specific instructions.

Diagnose LSP syntax issues

This section describes how to use the Language Server Protocol (LSP) feature to scan for code issues in real time while you edit SQL or scripts. It also explains how to use the Copilot feature to fix these issues quickly.

Enable LSP

On the Settings page in DataStudio, you can use the SyntaxErrorEnable configuration item to control whether syntax diagnosis is enabled during code editing. To manage this user-level configuration item, follow these steps.

Go to the DataWorks Workspaces page. In the top navigation bar, switch to the destination region, find the target workspace, and click in the Actions column to go to the DataStudio page.

In the lower-left corner of the navigation pane, click and go to the User tab on the Settings page.

On the User tab, click to configure syntax diagnosis.

SyntaxErrorEnable: Enables or disables syntax diagnosis. You can set this configuration item to `true` or `false` to enable or disable the syntax diagnosis feature during data development.

SyntaxErrorSeverity: Sets the severity level for syntax error diagnosis. You can set this configuration item to different levels to control the alert level for syntax diagnosis exceptions during data development.
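As a rough illustration of how two such settings could shape what an LSP client receives, the following Python sketch converts raw syntax findings into LSP `Diagnostic` objects. The numeric severity codes (1 to 4) and the `range`/`severity`/`source`/`message` fields come from the Language Server Protocol specification. Everything else is an assumption: the `build_diagnostics` function, the shape of `findings`, and the idea that `SyntaxErrorSeverity` accepts the names `error`, `warning`, `information`, and `hint` are hypothetical and not confirmed for DataWorks.

```python
# Severity codes defined by the Language Server Protocol specification.
LSP_SEVERITY = {"error": 1, "warning": 2, "information": 3, "hint": 4}


def build_diagnostics(settings, findings):
    """Turn raw syntax findings into LSP Diagnostic dictionaries.

    `settings` mimics the two user-level items described above; the accepted
    severity names are an assumption made for this sketch.
    """
    if not settings["SyntaxErrorEnable"]:
        return []  # syntax diagnosis is switched off
    severity = LSP_SEVERITY[settings["SyntaxErrorSeverity"]]
    return [
        {
            # LSP positions are zero-based line/character offsets.
            "range": {
                "start": {"line": f["line"], "character": f["start"]},
                "end": {"line": f["line"], "character": f["end"]},
            },
            "severity": severity,
            "source": "syntax",
            "message": f["message"],
        }
        for f in findings
    ]
```

With `SyntaxErrorEnable` set to `False` the function returns an empty list, which corresponds to no syntax entries being reported for the node.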

Discover issues

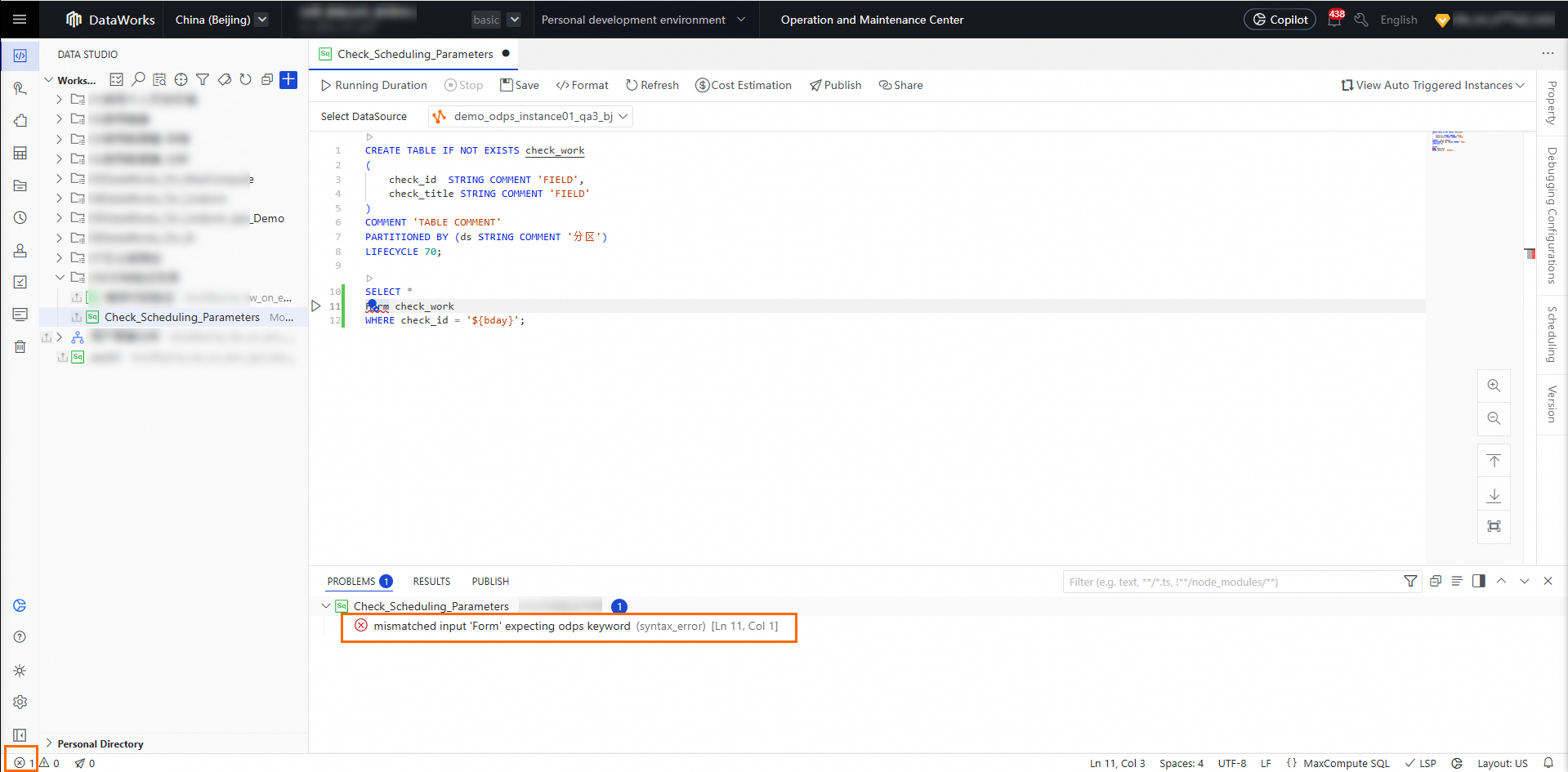

While you edit node code, click the icon in the lower-left corner of the page. In the Problems area that appears, you can view the code issues detected in the node. Click an issue to jump to the problematic code snippet in the target node.

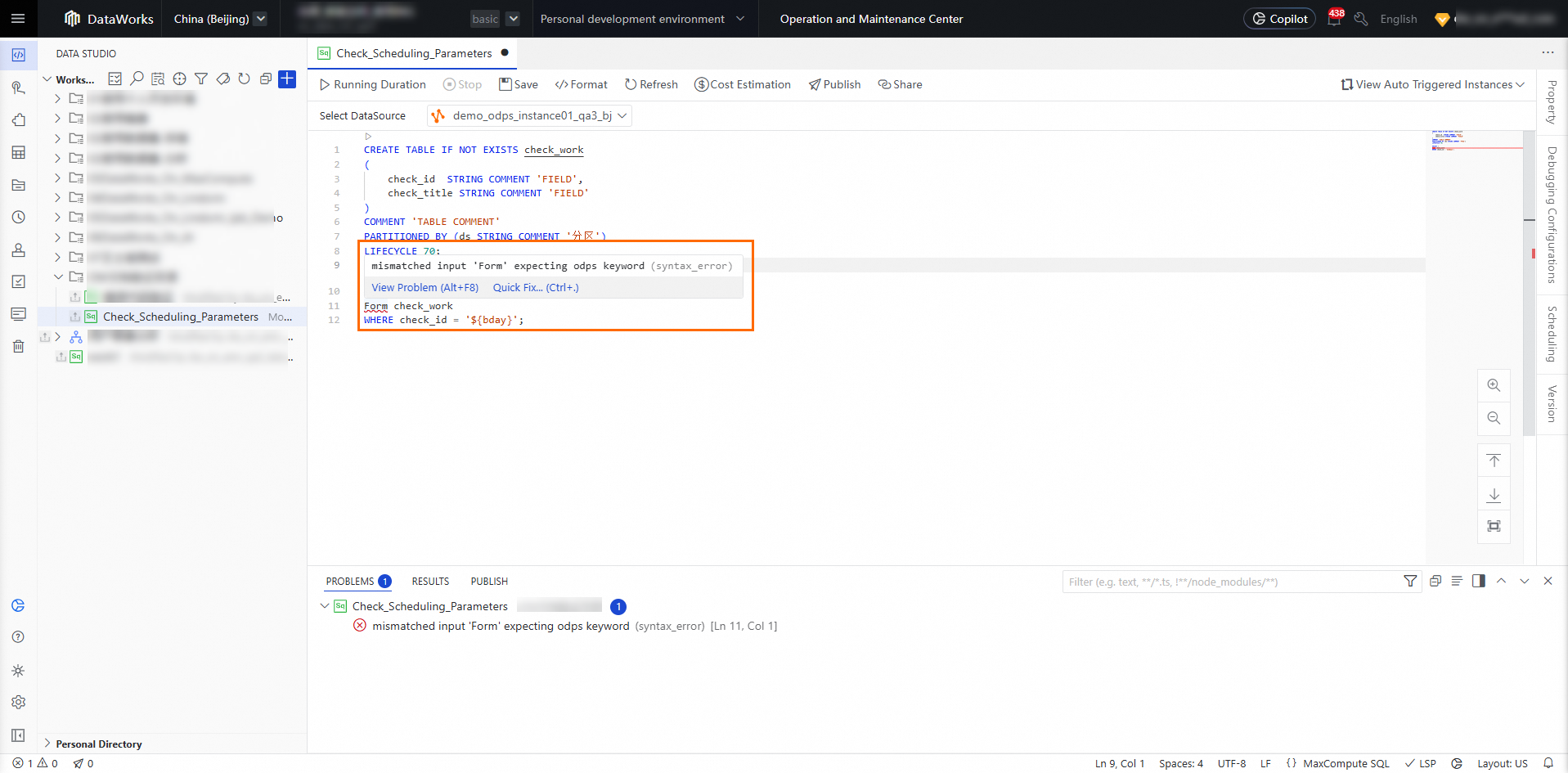

Fix issues

After you locate the problematic code snippet in the target node, you can hover your mouse over the incorrect code snippet to display a quick fix entry point. From this entry point, you can use DataWorks Copilot and choose from different models to correct the code.

Appendix: List of governance items

| Rule name | Scenario |
| --- | --- |
| Constants in JOIN ON Conditions | In data transformation, if you use a constant in the |
| Missing Schedule Parameter Checker | Scheduling parameters are dynamic parameters that are used in code during task scheduling. These parameters are automatically replaced with specific values based on the data timestamp, scheduled time, and preset value format of the task. Missing scheduling parameters can cause data transformation errors or task execution failures. |
| Prevent Non-partitioned Tables in Scheduled Jobs | Using a non-partitioned table in an auto triggered task means that a non-partitioned table is used as a temporary table to store intermediate data within the same scheduling task, and data is read from this table in the task. When concurrent data backfills are performed for multiple data timestamps, data inconsistency and data loss can occur, because there is no guarantee that the intermediate table stores data for the correct data timestamp. |
| Specify the Partition to Query | When you query a MaxCompute partitioned table without specifying a partition, a full table scan (brute-force scan) is triggered. This scan results in extremely high computing overhead. |
| Prevent INSERT INTO with Rerun Enabled | If the SQL code contains only logic to write data to a target table using |
| Require Matching Data Types of JOIN Columns | During MaxCompute SQL data development, `JOIN` operations are frequently used. When writing the `ON` condition, developers often overlook whether the field types are consistent. This can cause transformation errors and affect data quality. |
| Prevent Scheduled Production Jobs from Writing to Development Tables | If an auto triggered task writes data to a table in a development project within a standard workspace, the data protection level is reduced and security risks are created. |
| Prevent Overriding System Functions | If a submitted function has the same name as a built-in function, the submitted function overwrites the system's built-in function. When other project members use the function, this can lead to an inconsistent understanding of the built-in function, which affects usage and maintenance. |
| Disallow MAX_PT Function | For an auto triggered task that is submitted for scheduled execution, if the code contains the `MAX_PT` function, the function always returns the largest partition of the table (in alphabetical order) in scenarios such as data backfill. This may produce unexpected behavior. For a detailed description of the value retrieval behavior of the `MAX_PT` function, see Other functions. |
| Prevent Table Creation in SQL | Creating a table in an SQL script that is submitted for scheduled execution has two potential problems. First, the table is owned by the scheduling tenant account or the workspace owner (the Alibaba Cloud account). This unclear ownership creates additional management costs. Second, because there is no manual review, there is a high risk that data could be mistakenly purged. Therefore, this practice is prohibited. |
| INSERT into External Project Tables | When a task that is running in a MaxCompute project writes data to a table in a different MaxCompute project, the system considers this a high-risk security operation. This operation poses risks of unauthorized data access and data leakage. We recommend that you use project isolation: a task in Project A should not write to a table in Project B. This applies to both development and production project environments. |
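To make two of the rules listed above more concrete, the following Python sketch flags `MAX_PT` calls and `CREATE TABLE` statements with simple regular expressions. It is a toy illustration under stated assumptions: the `RULES` patterns, the `scan` function, and the `disabled` parameter are hypothetical, the sample table names are invented, and the plugin itself is described as using a semantic analysis engine rather than text matching.

```python
import re

# Hypothetical, simplified patterns; the real rule logic is not documented here.
RULES = {
    "Disallow MAX_PT Function": re.compile(r"\bMAX_PT\s*\(", re.IGNORECASE),
    "Prevent Table Creation in SQL": re.compile(r"\bCREATE\s+TABLE\b", re.IGNORECASE),
}


def scan(sql, disabled=frozenset()):
    """Return (line_number, rule_name) pairs for each enabled rule that matches."""
    hits = []
    for lineno, line in enumerate(sql.splitlines(), start=1):
        # Naive comment handling: drop everything after "--" on the line.
        code = line.split("--", 1)[0]
        for name, pattern in RULES.items():
            if name not in disabled and pattern.search(code):
                hits.append((lineno, name))
    return hits


# Invented sample script that trips both rules.
SAMPLE = """CREATE TABLE IF NOT EXISTS tmp_orders (id BIGINT);
INSERT OVERWRITE TABLE tmp_orders
SELECT id FROM orders WHERE ds = MAX_PT('orders');  -- latest partition
"""

print(scan(SAMPLE))
```

Passing a rule name in `disabled` mirrors turning off a single governance item in the Rule Library: the remaining rules still run. A line-by-line text match misses statements split across lines and can misread `--` inside string literals, which is one reason a semantic approach is preferable in practice.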